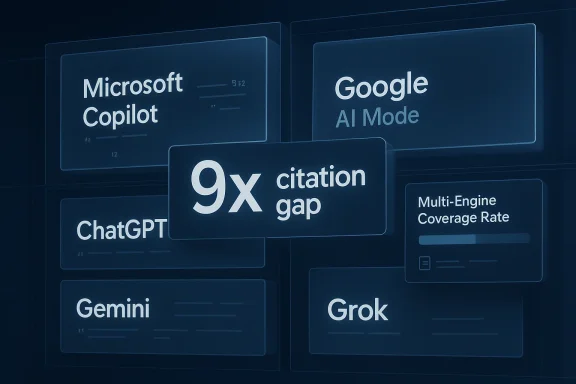

The multi-engine AI visibility gap is becoming a real marketing fault line in 2026. The most interesting part is not that brands are being mentioned less often, but that they are being mentioned unevenly across the AI systems people now use to ask commercial questions. A recent Chronicle-Journal syndication framed the problem in sharp terms: brand citation rates can vary by roughly 9x between major AI search engines, with Microsoft Copilot reportedly leading and Google AI Mode lagging far behind. That claim fits a broader pattern already visible in recent WindowsForum coverage, where AI search visibility, generative engine optimization, and citation telemetry are emerging as a new measurement layer for digital discovery.

We should also expect more explicit telemetry. As answer engines mature, they will probably expose more visibility data, either through native dashboards or third-party tools. That will help standardize reporting, but it will not eliminate the underlying challenge: different engines will still reward different signals. The smart brands will therefore track both the metrics and the mechanisms.

Source: The Chronicle-Journal User

Overview

For more than a decade, search marketing revolved around a simple operating model: optimize for one dominant engine, and you covered most of the market. That model was never perfect, but it was stable enough for brands to build budgets, teams, and reporting around Google rankings and click-through rates. The rise of AI-generated answers has broken that simplicity. Instead of one gatekeeper, marketers now face a distributed answer layer made up of multiple assistants and search products, each with its own retrieval logic, citation behavior, and content preferences.

The Chronicle-Journal piece argues that the real risk is not merely lower search traffic, but fragmentation of brand discovery across different AI surfaces. That is a stronger claim than the familiar “SEO is changing” narrative. It says the answer to the same commercial prompt can be materially different depending on whether the user asks ChatGPT, Copilot, Gemini, Perplexity, Grok, Google AI Overviews, or AI Mode. In practice, that means visibility is no longer one metric but many, and the brand may be strong in one channel while effectively absent in another.

That theme is consistent with recent forum coverage on generative engine optimization. WindowsForum has already highlighted that AI assistants are becoming “first-stop interfaces” for discovery, and that brands need to shape not just rankings but the actual sentences an engine delivers back to users. A separate thread on GEO and AEO made the same point more bluntly: the internet’s front door is moving from the classic results page to conversational systems, and brands must think in terms of answer quality, citation quality, and provenance rather than raw traffic alone.

There is also a measuring problem. Traditional analytics tools track impressions, sessions, rankings, and conversions. Those remain useful, but they do not tell a marketer whether an AI engine actually names the brand in a response to a purchase-intent query. That gap explains why the new language around multi-engine coverage is gaining traction. It gives marketers a way to discuss discovery in a world where one answer engine may cite a brand often, another rarely, and a third not at all.

Why the old SEO playbook no longer scales

The old SEO playbook assumed a common optimization target. Even when search results varied, the ranking system was still broadly centralized, and brands could reason about one dominant algorithmic hierarchy. AI discovery is different because the interfaces, underlying models, and source-selection policies are no longer synchronized. That means the same content asset can perform very differently across engines.

The practical consequence is that brands need to think in terms of portfolio visibility. One engine may be biased toward structured references and dense citations, while another may lean toward conversational phrasing, product pages, or third-party authority. The win condition is no longer “rank on page one.” It is “become a consistently cited entity across the engines that matter to your customers.”

The article’s core market signal

The strongest signal in the Chronicle-Journal piece is not the exact 9x figure by itself, but the idea that citation disparity is now a strategic variable. Even if individual measurements vary by prompt set or methodology, the directional takeaway is hard to ignore. The market is fragmenting fast enough that brand visibility can no longer be managed as a single channel problem.

That is why the “multi-engine AI visibility gap” is a useful phrase. It names the operational reality that marketing teams are already bumping into: a brand can score well in one system, fail in another, and still believe it is “doing fine” because old metrics have not caught up.

What the Research and Industry Reporting Suggest

The Chronicle-Journal article leans on a mix of industry prediction, academic framing, and case-study style reporting. That is important because the story is still early, and the evidence base is not yet as mature as classic SEO research. Still, the pieces line up. Gartner’s early prediction that traditional search volume could drop meaningfully by 2026 has now become part of the broader conversation about AI-assisted discovery, and academic work on generative engine optimization has given marketers a vocabulary for analyzing citation behavior rather than just keyword rank.

The Carnegie Mellon/KDD 2024 GEO framework matters because it reframed visibility as a model-sensitive problem. It suggested that the same content characteristics do not transfer cleanly across generative contexts. That is a significant departure from classic SEO wisdom, where certain fundamentals (authority, relevance, structure) tended to travel well across search engines. In AI search, the engine itself may be the variable, not just the query.

There is also a broader market context around zero-click behavior. If more user questions are answered directly on the results page or inside a conversational interface, the value of a citation increases because the citation itself becomes the destination. In that world, being mentioned by the engine is not a side effect of discovery; it is discovery. That makes the citation gap more than an academic curiosity.

What makes the multi-engine problem different

The multi-engine problem is not just diversity of interfaces. It is diversity of retrieval philosophies. Some engines prioritize fresh web context; others summarize from a mixture of search results, internal grounding, and model memory. Some are more likely to include citations visibly, while others answer more opaquely. That means the same brand can be surfaced through different mechanisms and with different levels of confidence.

The result is a discovery environment that behaves more like media buying than classic SEO. You are no longer just optimizing one webpage or one domain. You are optimizing for presence in a distributed attention system where each platform has distinct rules of evidence.

The measurement challenge

There is no universal dashboard yet for AI citations across all major engines, and that is part of the problem. Brands that only inspect one platform can mistake partial coverage for broad presence. Multi-engine monitoring is emerging because executives need a better proxy for the new discovery layer, and old metrics simply do not capture answer-level visibility.

In that sense, the article’s “coverage rate” concept is useful even if the industry is still standardizing the methodology. It pushes teams to ask a better question: not “how high do we rank?” but “in how many answer systems do we appear at all?”

- The old ranking model is too narrow for answer engines.

- Citation behavior now varies by platform architecture.

- A brand can be visible in one engine and absent in another.

- Multi-engine monitoring is becoming a strategic requirement.

- The measurement stack is still immature, which creates blind spots.

The Copilot vs. AI Mode Contrast

The article’s most provocative claim is that Microsoft Copilot reportedly cited brands at around nine times the rate of Google AI Mode in the sample it tracked. If that pattern holds across broader datasets, it would be a major sign that engine design, retrieval source mix, and answer formatting are shaping brand discovery as much as brand strength itself. In other words, visibility is not just about being good; it is about being compatible.

That is an important distinction for marketers and publishers. A strong brand can still fail to appear if the engine prefers certain source types or answer structures. Conversely, a smaller brand with well-structured, citation-rich material can punch above its weight in a more citation-friendly system.

This is exactly why multi-engine optimization cannot be reduced to one universal tactic. A playbook that boosts citations in Copilot may not be the same playbook that works in AI Mode. The Chronicle-Journal article treats that divergence as structural, not incidental, and that framing is believable given how quickly AI search features have evolved.
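
To make the "roughly nine times" comparison concrete, here is a minimal Python sketch of how a per-engine citation rate and the spread between engines might be computed. The engine labels and counts are invented for illustration; they are not the article's data, and the article does not publish its exact methodology. The function names are hypothetical.

```python
def citation_rate(cited_responses, total_responses):
    """Fraction of sampled responses in which the brand was cited."""
    if total_responses <= 0:
        raise ValueError("total_responses must be positive")
    return cited_responses / total_responses


def rate_ratio(rates):
    """Ratio of the highest to the lowest per-engine citation rate.

    Returns None when the lowest rate is zero, because the ratio is
    undefined when a brand never appears in one engine.
    """
    highest = max(rates.values())
    lowest = min(rates.values())
    if lowest == 0:
        return None
    return highest / lowest


# Made-up counts, chosen only to illustrate a ninefold spread.
rates = {
    "engine_a": citation_rate(45, 100),
    "engine_b": citation_rate(5, 100),
}
print(round(rate_ratio(rates), 1))
```

A ratio like this depends heavily on the prompt set and sample size, so it is best read as a directional signal rather than a fixed property of either engine.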

Why Copilot may reward citation-rich content

Copilot has often been discussed as more explicit about sourcing and citations than some competing AI search experiences. That makes it attractive to brands that can supply clean, well-sourced content. If the engine is more willing to quote or reference structured claims, then authoritative third-party mentions and clearly sourced facts can have outsized impact.

That does not mean Copilot is “easier” in a universal sense. It means the engine’s current retrieval and presentation style may favor the kinds of content brands can deliberately engineer. That is a different, and more actionable, proposition.

Why AI Mode may behave differently

Google AI Mode sits inside a different product and ecosystem logic than Copilot. It inherits Google’s long-standing emphasis on search result quality, but it also operates inside a fast-evolving answer interface where source selection and answer synthesis can be more opaque. If the system is more conservative about citations or more selective about commercial references, a brand may struggle to appear even when it has strong web authority.

That difference matters because marketers often assume all AI answer surfaces are converging toward a single behavior. They are not. The engines may share a broad purpose, but they do not yet share a common citation standard.

Practical takeaway for marketers

The right response is not to declare one engine “better” and ignore the rest. It is to map where your customers actually ask questions, then compare visibility across those surfaces. A brand with excellent performance in Copilot but weak performance in AI Mode has not solved the problem; it has revealed one segment of it.

- Copilot may reward structured, citation-dense content.

- AI Mode may apply different source-selection thresholds.

- Citation visibility is engine-specific, not universal.

- One strong engine does not imply healthy multi-engine coverage.

- Brand teams need engine-level diagnostics, not aggregate optimism.

The EdTech Case Study and Why It Matters

The Chronicle-Journal article’s EdTech example is valuable because it shows how invisible a brand can be even when it is already successful in traditional search. The company reportedly ranked on the first page of Google for core terms but appeared in zero AI engine responses for its target prompts at the start of the exercise. That is the kind of result that forces a strategy reset. Traditional SEO success did not translate into answer-engine visibility.

The turnaround described in the article is equally instructive. The brand improved third-party citations, restructured its knowledge-base content around explicit statistical claims, and distributed expert commentary in ways that AI systems were more likely to ingest. Within 90 days, it reportedly achieved citation presence across 12 prompts spanning five of the eight major engines. Whether or not every detail is replicable, the arc is realistic: better sourcing, cleaner structure, and wider distribution can improve AI visibility.

What stands out is the unevenness of the gains. Perplexity and Copilot responded faster than AI Overviews, while other engines required more time or different source patterns. That tells us that optimization is not just about content quality; it is about timing, indexing, and the relationship between content format and engine behavior. A single launch does not fix a multi-engine gap.

Why this case study is credible

The case is believable because it matches what many marketers already see in adjacent areas. Structured content often performs better in systems that value explicit evidence. Third-party validation frequently matters more than brand-owned messaging in AI summaries. And engines that surface visible citations are naturally more sensitive to the shape and provenance of source material.

That does not mean every company can copy the result quickly. It means the mechanics are understandable enough to act on, which is why the case study is more useful than a generic “AI is changing search” warning.

The hidden value of the example

The deeper lesson is that brands need to audit their own assumptions. The company in the example may have believed it was discoverable because it was already strong in Google. Once it looked across engines, the story changed. That is a classic visibility trap, and it is likely common.

In other words, the multi-engine gap is not just a brand problem. It is a measurement problem, a governance problem, and a budgeting problem all at once.

The New Metric: Multi-Engine Coverage

Traditional digital reporting has trained leaders to think in terms of impressions, clicks, and rankings. Those metrics still matter, but they do not describe answer-era visibility well enough. If a buyer asks an AI engine a question and the brand is not mentioned, the click never happens. That means citation coverage is becoming the more meaningful early indicator.

The idea of multi-engine coverage rate is powerful because it is easy to explain to executives. It asks what percentage of monitored engines cite the brand at least once across a target prompt set. That gives teams a practical way to compare performance over time, across competitors, and across channels. It also creates a bridge between communications, SEO, PR, and content operations.
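
The coverage-rate definition can be expressed in a few lines of Python. This is a minimal sketch that assumes observations are recorded as `(engine, prompt, cited)` tuples; the function name, engine labels, prompts, and results below are hypothetical and are not taken from the article.

```python
def coverage_rate(observations, engines):
    """Share of monitored engines that cite the brand at least once.

    observations: iterable of (engine, prompt, cited) tuples, where `cited`
        is True when the brand was named in that engine's answer.
    engines: the full set of monitored engines (the denominator).
    """
    monitored = set(engines)
    if not monitored:
        raise ValueError("engines must not be empty")
    cited_engines = {engine for engine, _prompt, cited in observations if cited}
    # Count only engines that belong to the monitored set.
    covered = cited_engines & monitored
    return len(covered) / len(monitored)


# Hypothetical engine labels and results, for illustration only.
engines = ["engine_a", "engine_b", "engine_c", "engine_d"]
observations = [
    ("engine_a", "best lms for schools", True),
    ("engine_a", "top tutoring platforms", True),
    ("engine_b", "best lms for schools", False),
    ("engine_c", "best lms for schools", True),
    ("engine_d", "top tutoring platforms", False),
]
rate = coverage_rate(observations, engines)
print(f"{rate:.0%}")  # 2 of 4 monitored engines cite the brand -> 50%
```

Note that repeated citations inside one engine do not raise the number. Under the same definition, the EdTech result of five engines out of eight would work out to 62.5 percent.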

The article’s mention of GenOptima’s own cross-engine coverage improvements is especially telling. Even an agency specializing in this space reportedly needed deliberate multi-engine strategy to expand coverage quickly. That should caution any brand that assumes AI visibility will improve passively as long as it keeps publishing content. Passive publishing is not optimization.

Why coverage is better than vanity metrics

Coverage reflects actual discoverability across systems that can answer commercial questions. Rankings, by contrast, are often too tied to the old blue-link model. A company can be “ranking well” and still be absent in AI answers. That is the new asymmetry.

Coverage also helps teams distinguish between breadth and depth. A brand may appear repeatedly in one engine yet be invisible elsewhere. The coverage metric exposes that imbalance immediately.

A simple operating model for teams

A sensible program would begin with a fixed list of prompts that map to revenue, reputation, and support outcomes. Then the brand should test those prompts across the major engines it cares about, record where it appears, and classify the type of citation or mention it receives. Over time, the team can tie changes in content, PR, and technical structure to shifts in visibility.

- Define the highest-value commercial prompts.

- Test them across each target AI engine.

- Record brand presence, citation style, and source type.

- Adjust content structure and third-party authority signals.

- Re-test on a regular cadence and track deltas.
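
The record-and-re-test steps above can be sketched as a small data model in Python. This is an illustration under stated assumptions: the `Observation` fields, the label values, and the `deltas` helper are hypothetical, and how each engine is actually queried is deliberately left out because it differs per product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    """One prompt tested against one engine (hypothetical schema)."""
    engine: str
    prompt: str
    brand_present: bool
    citation_style: str  # assumed labels, e.g. "linked", "named", "none"
    source_type: str     # assumed labels, e.g. "owned", "third_party", "none"


def presence_map(run):
    """Map (engine, prompt) -> brand_present for one test run."""
    return {(o.engine, o.prompt): o.brand_present for o in run}


def deltas(previous_run, current_run):
    """Return the (engine, prompt) pairs gained and lost between two runs."""
    before = presence_map(previous_run)
    after = presence_map(current_run)
    gained = sorted(k for k, present in after.items()
                    if present and not before.get(k, False))
    lost = sorted(k for k, present in before.items()
                  if present and not after.get(k, False))
    return gained, lost


# Two made-up runs of the same prompt set.
previous = [
    Observation("engine_a", "best lms for schools", False, "none", "none"),
    Observation("engine_b", "best lms for schools", True, "linked", "owned"),
]
current = [
    Observation("engine_a", "best lms for schools", True, "named", "third_party"),
    Observation("engine_b", "best lms for schools", False, "none", "none"),
]
gained, lost = deltas(previous, current)
print("gained:", gained)
print("lost:", lost)
```

Keeping the prompt list fixed between runs is what makes the deltas meaningful; if the prompts change, gains and losses can no longer be attributed to content or PR changes.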

What executives should ask for

Leadership teams should not settle for a single “AI visibility score” if it hides engine-level variation. They should ask for prompt coverage by engine, citation frequency, source diversity, and competitive share of answer. Those metrics are more operationally honest.

- Coverage by engine.

- Prompt-level visibility.

- Citation frequency and depth.

- Source quality and provenance.

- Competitive share of answer.

- Time-to-change after content updates.
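
Two of the metrics listed above, prompt coverage by engine and competitive share of answer, could be computed as in the following Python sketch. The definitions are assumptions made for illustration: "share of answer" is taken here to mean the brand's portion of all brand citations observed in an engine, which is one plausible reading rather than an industry standard. The brand names and results are invented.

```python
from collections import Counter, defaultdict


def prompt_coverage_by_engine(results, brand):
    """engine -> fraction of tested prompts in which `brand` was cited.

    results: list of (engine, prompt, brands_cited) tuples, where
        brands_cited is a list of brand names found in the answer.
    """
    totals = Counter()
    hits = Counter()
    for engine, _prompt, brands_cited in results:
        totals[engine] += 1
        if brand in brands_cited:
            hits[engine] += 1
    return {engine: hits[engine] / totals[engine] for engine in totals}


def share_of_answer(results, brand):
    """engine -> brand's share of all brand citations seen in that engine."""
    mentions = defaultdict(Counter)
    for engine, _prompt, brands_cited in results:
        mentions[engine].update(brands_cited)
    shares = {}
    for engine, counts in mentions.items():
        total = sum(counts.values())
        shares[engine] = counts[brand] / total if total else 0.0
    return shares


# Invented results for two engines and two prompts.
results = [
    ("engine_a", "p1", ["Acme", "Rival"]),
    ("engine_a", "p2", ["Acme"]),
    ("engine_b", "p1", ["Rival"]),
    ("engine_b", "p2", []),
]
print(prompt_coverage_by_engine(results, "Acme"))
print(share_of_answer(results, "Acme"))
```

Reporting these per engine, rather than averaging them into one score, keeps the engine-level variation visible.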

How Content Strategy Has to Change

The biggest strategic mistake brands can make is assuming that AI search visibility is just SEO with a new label. It is not. The content systems feeding AI engines are often more sensitive to structure, context, and authority signaling than classic page-level rankings were. That means content strategy has to become more modular and evidence-rich.

The Chronicle-Journal article repeatedly emphasizes authoritative third-party citations, structured knowledge-base content, and expert commentary. Those are not arbitrary suggestions. They map to how generative systems infer trust. If an engine can clearly trace a claim to a credible source, the brand’s chances improve. If the content is vague, purely promotional, or thinly supported, the odds fall.

This is where marketing and communications functions need tighter alignment. Owned content alone is often insufficient. Earned media, third-party references, and expert bylines increasingly matter because they shape how answer engines perceive authority. That is a major shift in power from pure brand publishing to ecosystem reputation.

What kinds of content are likely to help

Content that performs well in answer engines is often cleaner, more structured, and more evidence-oriented than legacy marketing copy. That means explicit claims, properly attributed data, and a clear topic hierarchy. It also means avoiding generic fluff that adds little semantic value.

A brand should ask whether each important page helps an engine answer a question quickly and confidently. If not, the page may still be useful for humans but weak for AI retrieval.

The role of third-party validation

Third-party citations matter because answer engines often lean on distributed evidence. A product page saying “we are the best” is weak compared with an independent article, analyst mention, or expert quote that says the same thing with context. The more corroboration, the better.

That does not mean brands should chase every mention indiscriminately. It means they should deliberately cultivate credible external references in the outlets and formats that answer engines are likely to ingest.

Internal content is not enough

An internal knowledge base can support visibility, but only if it is written with machine legibility in mind. Dense structure, clear definitions, and sourced statistics make a difference. If the content reads like a brochure, it will likely underperform.

- Prioritize evidence-rich pages.

- Use explicit claims with attribution.

- Build a strong third-party citation profile.

- Separate sales language from reference content.

- Write for extraction, not just for persuasion.

Enterprise vs. Consumer Impact

For enterprises, the multi-engine gap is a governance and procurement issue. If a company’s brand, products, or support resources are cited inconsistently across AI engines, then its customer journey is already fragmented. That affects sales enablement, reputation management, and support deflection. Enterprise teams will need to treat answer visibility the way they once treated search rankings and brand sentiment: as a monitored business surface.

For consumers, the effect is subtler but potentially more disruptive. People increasingly rely on AI answers for product comparisons, quick recommendations, and “best for me” queries. If an engine omits a brand, that omission can quietly reshape demand. The consumer may never know the brand existed, and the brand will never know it lost the impression.

This is why the visibility gap is not merely a marketing fad. It touches discovery, trust, and market power. The brands that show up in more answer engines will have more opportunities to be considered. The ones that do not will have to fight for attention after the fact, which is always harder.

Enterprise priorities

Enterprises should be more formal about monitoring because they can usually connect visibility to pipeline and support outcomes. They also have more at stake in regulated or reputation-sensitive categories. A brand mischaracterized by one engine can create operational risk.

That makes governance essential. Teams need a repeatable process for testing prompts, auditing citations, and correcting outdated information.

Consumer discovery priorities

Consumer brands care about recall, preference, and comparison moments. The user may ask which product is cheapest, safest, fastest, or most reputable. Those are precisely the moments where AI answers can compress the funnel.

If the engine cites a competitor more often, the competitive damage can be immediate, even without a click.

The role of trust

Trust is the common thread. Enterprises need trustworthy citations because decisions are expensive. Consumers need trustworthy citations because they want confidence under uncertainty. AI systems that cite well will likely win more long-term loyalty than those that answer fast but vaguely.

- Enterprises need governance and monitoring.

- Consumers are influenced by answer-level omission.

- Trust determines whether a citation converts into consideration.

- Multi-engine gaps can distort both demand and reputation.

- Answer visibility is now a brand equity issue.

Competitive Implications

The competitive implications of a ninefold citation gap are substantial because the gap is not merely a performance spread; it is a distributional advantage. If one engine cites a brand far more often than another, that brand gains an asymmetric share of attention in the channels users actually consult. Over time, that can influence market share, partner selection, and public perception.

This also changes how rivals should think about benchmarking. Comparing your brand only to direct competitors may no longer be enough. You may need to compare your engine-by-engine visibility to category leaders, adjacent brands, and authoritative publishers that shape the answer layer. In a multi-engine world, your true competitor may be whoever the engine prefers to cite.

There is also a compounding effect. Brands that are already visible across multiple engines are more likely to be cited again because they accumulate more external mentions, more content reuse, and more semantic reinforcement. That makes early coverage gains especially valuable. Visibility begets visibility.

Why the leaders may pull away

Brands with strong PR engines, high-quality knowledge content, and disciplined information architecture will probably widen the gap faster than competitors. They are better positioned to feed different engine types with the right source material. They also have the resources to test and iterate.

That means the market may split into two groups: brands that manage multi-engine visibility as a strategic system, and brands that remain trapped in old SEO reporting.

Why the laggards may not realize they are behind

The danger is that lagging brands may still see “good enough” results in one or two systems and assume they are safe. If revenue is not yet obviously declining, the problem can stay hidden. But by the time it becomes visible in pipeline data, the discovery gap may already be entrenched.

That lag is what makes the issue strategically urgent.

Competitive lessons

- Visibility is becoming a source of compounding advantage.

- Engine-by-engine benchmarking is now necessary.

- PR and SEO are converging into one answer-layer discipline.

- Early movers may lock in more citations over time.

- Hidden weakness in one engine can distort confidence.
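
Engine-by-engine benchmarking can be reduced to two simple checks: how wide the spread is between a brand's best and worst engine, and on which engines the brand trails a chosen benchmark such as a category leader. The following is a rough sketch, not an established methodology. The function names, the `tolerance` threshold, and all of the rates are hypothetical values chosen for illustration.

```python
def gap_ratio(rates):
    """Ratio of the highest to the lowest per-engine citation rate."""
    highest = max(rates.values())
    lowest = min(rates.values())
    if lowest == 0:
        # A brand absent from one engine has an unbounded gap.
        return float("inf") if highest > 0 else 1.0
    return highest / lowest


def lagging_engines(own, benchmark, tolerance=0.5):
    """Engines where `own` falls below `tolerance` times the benchmark rate."""
    return sorted(
        engine
        for engine, benchmark_rate in benchmark.items()
        if own.get(engine, 0.0) < tolerance * benchmark_rate
    )


# Made-up citation rates for one brand and for a category leader.
own_rates = {"engine_a": 0.45, "engine_b": 0.05, "engine_c": 0.20}
leader_rates = {"engine_a": 0.50, "engine_b": 0.40, "engine_c": 0.30}

print(f"best-to-worst gap: {gap_ratio(own_rates):.1f}x")
print("engines lagging the leader:", lagging_engines(own_rates, leader_rates))
```

In this invented example the brand looks healthy on `engine_a` and acceptable on `engine_c`, which is exactly the situation in which a weak engine goes unnoticed unless each one is benchmarked separately.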

Strengths and Opportunities

The upside of the multi-engine AI visibility shift is that it gives brands a clearer map of where discovery actually happens. That creates a chance to outmaneuver slower competitors, especially in categories where information quality and authority are already differentiators. The companies that invest early can turn a chaotic market into a measurable advantage.

- Multi-engine monitoring reveals hidden gaps quickly.

- Structured content can improve answer-engine performance.

- Third-party citations can compound credibility.

- Earned media becomes more valuable in an answer-first world.

- Brands can outperform larger rivals through better information architecture.

- Prompt-level testing creates more actionable feedback loops.

- Early adopters may lock in durable visibility advantages.

Risks and Concerns

The downside is just as significant. Brands can overfit to one engine, chase misleading metrics, or assume a few citation wins mean the problem is solved. The market is moving quickly enough that any single-engine strategy can become obsolete before the next reporting cycle.

- Single-engine monitoring creates false confidence.

- Citation gaps may be hidden by legacy SEO success.

- Over-optimization for one platform may not transfer elsewhere.

- Poorly sourced content can be ignored or diluted.

- Measurement standards are still immature and inconsistent.

- Vendor claims may outpace independently verified evidence.

- Fragmentation makes budgeting and accountability harder.

A caution on the evidence

Some of the most dramatic figures in this space, including the 9x citation disparity and the EdTech turnaround, should be treated as directional rather than universal. They are useful because they highlight a likely structural shift, not because they settle every methodological question. That distinction matters.

The organizational risk

The larger risk is internal. Marketing, SEO, PR, and product teams may each own part of the visibility puzzle, but no one may own the whole. Without a clear owner, brands can drift into fragmented action and weak accountability.

Looking Ahead

The next phase of this story will likely be less about whether AI search matters and more about which systems matter most for which industries. Brands will need to decide where to invest, how to measure, and which engines deserve priority. The market is likely to fragment further before it consolidates, which means the winners will be the teams that can tolerate ambiguity while still acting decisively.

We should also expect more explicit telemetry. As answer engines mature, they will probably expose more visibility data, either through native dashboards or third-party tools. That will help standardize reporting, but it will not eliminate the underlying challenge: different engines will still reward different signals. The smart brands will therefore track both the metrics and the mechanisms.

What to watch next

- New citation telemetry from major AI platforms.

- More independent studies on engine-by-engine visibility.

- Tools that standardize multi-engine coverage reporting.

- Evidence of correlation between citations and conversions.

- Shifts in how Google, Microsoft, OpenAI, and others expose sourcing.

- Industry adoption of GEO and AEO as formal disciplines.

- Whether enterprise buyers begin demanding answer-layer audits.

Source: The Chronicle-Journal User