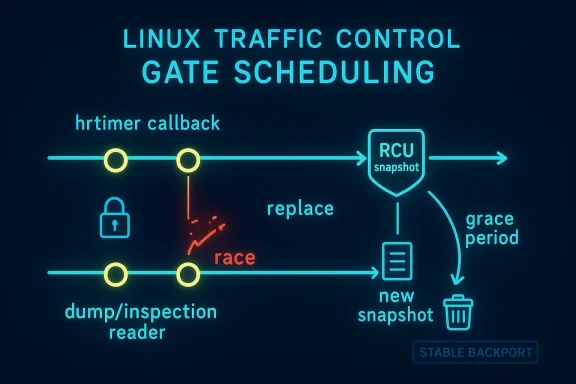

The Linux kernel’s act_gate traffic-control action is getting a focused security fix. Maintainers identified a schedule-lifetime race that can appear when the gate is replaced while either the hrtimer callback or the dump path is still traversing the schedule list. The upstream patch converts the action parameters into an RCU-protected snapshot, swaps updates under the existing lock, and frees the old snapshot with `call_rcu()`. That is exactly the kind of surgical synchronization change that tends to matter far more in production than its small diff size suggests. The patch was posted as “net/sched: act_gate: snapshot parameters with RCU on replace” and explicitly marked `Cc: stable@vger.kernel.org`, underscoring that it is meant to reach maintained kernels rather than remain an upstream-only cleanup (lkml.org).

Overview

At a glance, this CVE is not about a flashy remote exploit chain or a classic memory corruption primitive. It is about concurrent state management in a kernel subsystem that makes timing-sensitive decisions. Those are often the bugs that survive longest, because they hide in rare interleavings rather than obvious bad inputs. The fix says a lot about the kernel’s maturity: instead of bolting on a heavy-handed rewrite, maintainers chose to preserve the existing model while making the data it reads and writes safe across concurrent paths. That is the kind of low-drama, high-value hardening that often prevents outages before they become incidents.

The vulnerability sits in Linux’s traffic-control machinery, where gate control is used to open and close transmission windows according to a schedule. In practical terms, the code must stay coherent while timers fire, dumps are generated, and control-plane replacements update the schedule. The patch notes that the gate action can be replaced while the hrtimer callback or dump path is walking the schedule list. That is exactly the sort of overlap that turns a benign configuration update into a race condition if the underlying data is not safely versioned or protected (lkml.org).

The new code is also a textbook example of how kernel teams think about lifecycle ownership. Rather than storing the parameters inline, the patch moves them behind an RCU pointer, adds a free path for old snapshots, and changes helper accessors so readers grab the protected copy under lock or within the appropriate RCU context. In other words, the fix is not merely “don’t crash here”; it is “make the object lifetime match how the object is actually used,” which is a much more durable design principle.

This vulnerability has another important operational angle: the patch is being treated as a stable candidate. The mailing-list history shows multiple revision cycles and a stable CC. That indicates the issue is serious enough to be backported and broad enough to merit ecosystem attention beyond the immediate upstream tree. In kernel security, that is often the difference between a bug that remains an academic concern and one that lands in vendor update streams within days or weeks.

Why this matters

The most important thing to understand is that race conditions are not “just bugs” in kernel code. They are often reliability faults first and security issues second, but in a privileged execution environment the line between the two can vanish quickly. A corrupted schedule, stale pointer, or half-updated parameter set can lead to arbitrary behavior, kernel warnings, denial of service, or worse, depending on the exact path and the surrounding hardening.

- The flaw is tied to concurrent replacement, not ordinary packet processing.

- The risk surface is concentrated in timing-sensitive paths like the hrtimer callback and dump logic.

- The fix uses RCU snapshots to align object lifetime with reader behavior.

- The patch being marked for stable backporting signals practical operator impact.

- The technical change is small, but the operational significance is large.

Background

Linux traffic control has long been a home for some of the kernel’s most subtle correctness problems, because it blends networking, scheduling, and state machines under concurrent load. Queuing disciplines, action modules, and timer-driven state transitions all need to cooperate without stepping on each other. When they fail, the result is often not a spectacular crash on day one. It is a brittle edge case that shows up under load, during reconfiguration, or in environments with lots of automation.

The act_gate action is one of those pieces. It controls whether traffic should be admitted according to a gate schedule, which means its internal parameters are not static metadata; they are live control data. The reported flaw stems from the fact that the action can be replaced while another thread of execution is still consuming the old schedule. That makes the object lifetime itself part of the security boundary, and lifetime bugs are notoriously hard to reason about if the data is embedded directly in the action structure.

The patch history tells an interesting story about kernel development culture. The series was already circulating publicly by early February 2026 and went through several revisions before reaching the version cited in the stable discussion. The commit message stayed consistent: the fix is to snapshot the parameters under RCU, preserve the old schedule when a replacement omits an entry list, and free the previous snapshot safely after a grace period. That evolution suggests the maintainers were not just chasing a crash. They were refining the exact lifetime semantics so the replacement path behaves predictably even when no new schedule entries are supplied (lkml.org).

The affected code path is especially sensitive because it interacts with both timers and configuration updates. The hrtimer callback can observe state while the action is active, while the dump path may iterate the schedule for introspection or user-space reporting. If a replacement updates the schedule in place, readers can see a structure that is only partially updated or, in the worst case, already freed. That is the classic shape of a use-after-free window, even if the exact symptom ends up being a crash rather than exploitable memory reuse.

Kernel concurrency in plain English

RCU, or Read-Copy-Update, is the kernel’s way of letting readers proceed quickly while writers swap in a new copy and retire the old one later. It is a natural fit for structures that are read often and modified infrequently, especially when the reads must not block. In this case, the act_gate patch moves the parameters into an RCU-managed object so readers can safely observe a coherent snapshot while writers prepare the next version.

- Readers need a stable view of schedule parameters.

- Writers need to replace those parameters without interrupting active readers.

- Old state must remain valid until the grace period ends.

- The patch aligns the code with that model instead of relying on in-place mutation.

- The result is better correctness and a cleaner mental model for future maintainers.
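The publish/read half of that model can be sketched in user-space C11. This is not kernel code, and the names are hypothetical rather than the real act_gate fields:

- `atomic_exchange_explicit` with acquire-release ordering stands in for `rcu_assign_pointer()`.
- `atomic_load_explicit` with acquire ordering stands in for `rcu_dereference()`. That is a conservative stand-in, since the kernel relies on weaker dependency ordering.
- Reclamation is deliberately left out, because that is the part an RCU grace period handles.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdlib.h>

/* Hypothetical, simplified parameter snapshot; not the real act_gate layout. */
struct gate_params {
    long base_time_ns;
    long cycle_time_ns;
    int num_entries;
};

/* Shared pointer that readers follow; models an __rcu pointer. */
static _Atomic(struct gate_params *) current_params;

/* Allocate and fully initialize a snapshot before anyone can see it. */
static struct gate_params *make_params(long base, long cycle, int n)
{
    struct gate_params *p = malloc(sizeof(*p));
    if (!p)
        return NULL;
    p->base_time_ns = base;
    p->cycle_time_ns = cycle;
    p->num_entries = n;
    return p;
}

/* Writer: publish a complete snapshot and get back the previous one.
 * Release semantics play the role of rcu_assign_pointer(): a reader
 * that sees `fresh` also sees the fields written before this call.
 * The caller must not free the returned pointer until readers are done. */
static struct gate_params *publish_params(struct gate_params *fresh)
{
    assert(fresh != NULL);
    return atomic_exchange_explicit(&current_params, fresh,
                                    memory_order_acq_rel);
}

/* Reader: load the pointer once (rcu_dereference() analog) and use only
 * that snapshot; fields are never re-read through the shared pointer. */
static long read_cycle_time(void)
{
    const struct gate_params *p =
        atomic_load_explicit(&current_params, memory_order_acquire);
    return p ? p->cycle_time_ns : -1;
}
```

The writer never edits a published snapshot. It builds a new one and swaps the pointer, so a reader always sees either the whole old version or the whole new one.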

How the bug surfaced

The upstream patch description is unusually direct: the gate action can be replaced while the hrtimer callback or dump path is walking the schedule list. The vulnerability is therefore fundamentally a coordination failure between paths that were not synchronized tightly enough to tolerate in-place mutation. Rather than assuming the replacement path would be “rare enough,” the fix makes the data structure itself resistant to that assumption (lkml.org).

- A timer can race with a control-plane update.

- A dump can race with a schedule replacement.

- A single inline parameter block is fragile under both conditions.

- RCU converts that fragility into a manageable lifecycle.

- The stable tag suggests this was considered production-relevant.
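The difference between in-place mutation and snapshot replacement can be shown without threads by scripting the interleaving by hand. The sketch below is a hypothetical, single-threaded model with illustrative names:

- A “reader” walks a small schedule and takes its snapshot pointer at the start.
- A “replace” is injected halfway through the walk.
- With in-place mutation, the reader ends up mixing old and new entries.
- With snapshot replacement, the reader finishes on the version it started with.

In the real kernel the worse outcome is reading memory that has already been freed, which this model does not attempt to reproduce.

```c
#include <assert.h>

#define N_ENTRIES 4

/* Hypothetical schedule: each entry records which version wrote it,
 * so a reader that mixes versions is easy to detect. */
struct schedule {
    int version[N_ENTRIES];
};

static struct schedule slot_a, slot_b;
static struct schedule *live = &slot_a;

static void fill(struct schedule *s, int version)
{
    for (int i = 0; i < N_ENTRIES; i++)
        s->version[i] = version;
}

/* Buggy pattern: rewrite the object readers may be walking. */
static void replace_in_place(void)
{
    fill(live, 2);
}

/* Fixed pattern: build the new version elsewhere, then swap the pointer.
 * slot_a stays untouched (in the kernel, kept alive by a grace period). */
static void replace_by_snapshot(void)
{
    fill(&slot_b, 2);
    live = &slot_b;
}

/* Reader takes its snapshot pointer once, then walks it. `replace` runs
 * halfway through to model the writer winning the race.
 * Returns 1 if every entry seen came from one version, 0 otherwise. */
static int reader_walk(void (*replace)(void))
{
    const struct schedule *snap = live;
    int first = snap->version[0];
    for (int i = 0; i < N_ENTRIES; i++) {
        if (i == N_ENTRIES / 2)
            replace();
        if (snap->version[i] != first)
            return 0;
    }
    return 1;
}

/* Restore the starting state: version 1 live in slot_a. */
static void reset(void)
{
    fill(&slot_a, 1);
    fill(&slot_b, 0);
    live = &slot_a;
}
```

Calling `reset()` and then `reader_walk(replace_in_place)` reports a mixed view. The same walk with `replace_by_snapshot` stays consistent while still leaving the new version live for later readers.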

The Vulnerability Mechanics

The core of the issue is the mismatch between how the action is read and how it was originally stored. Before the fix, the gate parameters lived inline in the `tcf_gate` structure. That is convenient, but it means a replacement path can rewrite the same memory that a timer callback or dump routine expects to remain coherent for the duration of its read.

The patch solves this by introducing a `struct tcf_gate_params __rcu *param` pointer and adding an `rcu_head` to the parameter object itself. That shifts the problem from “mutable inline state” to “versioned snapshot with deferred reclamation.” It also means accessor helpers must be updated so they dereference the snapshot in a lock-aware way rather than reading fields directly from the action struct (lkml.org).

The important nuance is that this is not just an optimization or a stylistic cleanup. The old approach assumed the current parameter block was always valid to read directly, but concurrent replacement breaks that assumption. Once the replacement path can run while another path is still walking the schedule list, the code must either serialize those accesses tightly or stop mutating the object in place. The patch chooses the latter, which is usually the more robust option in kernel code that must stay fast for readers.
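The before/after layout described above can be sketched as follows. This is a user-space approximation, not the upstream header:

- The field names are hypothetical.
- `struct rcu_head_stub` stands in for the kernel’s `struct rcu_head`.
- `_Atomic` stands in for the `__rcu` annotation. In the kernel, `__rcu` is a sparse checker annotation rather than a compiler-enforced qualifier.

The point is the ownership shape: the parameters move out of the action and gain their own reclamation hook.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stddef.h>
#include <stdlib.h>

/* Stand-in for the kernel's struct rcu_head (callback linkage). */
struct rcu_head_stub {
    struct rcu_head_stub *next;
    void (*func)(struct rcu_head_stub *head);
};

/* Hypothetical parameter block with its own reclamation hook. */
struct gate_params {
    long base_time_ns;
    long cycle_time_ns;
    int num_entries;
    struct rcu_head_stub rcu;   /* lets the block be freed after a grace period */
};

/* Before: parameters embedded in the action, so a replace rewrites
 * the very memory a concurrent reader may be walking. */
struct gate_action_before {
    int lock_placeholder;       /* stands in for the action's spinlock */
    struct gate_params param;   /* inline, mutated in place */
};

/* After: the action points at a snapshot; a replace installs a new
 * block and retires the old one instead of editing it. */
struct gate_action_after {
    int lock_placeholder;
    _Atomic(struct gate_params *) param;   /* models `__rcu *param` */
};

/* Recover the enclosing gate_params from its embedded rcu member,
 * the container_of pattern an RCU callback typically uses. */
static struct gate_params *params_from_rcu(struct rcu_head_stub *head)
{
    return (struct gate_params *)((char *)head -
                                  offsetof(struct gate_params, rcu));
}

/* Callback that would run once the grace period has ended. */
static void free_params_cb(struct rcu_head_stub *head)
{
    assert(head != NULL);
    free(params_from_rcu(head));
}
```

Embedding the `rcu_head` in the parameter block means reclamation needs no extra allocation. The callback recovers the block from the embedded member.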

What changed technically

The patch updates several helper functions to work against a locked RCU pointer rather than direct inline fields. It also refactors timer setup and list-copy behavior so the replacement path can build a new snapshot, install it safely, and keep the old one alive until RCU says it is safe to free. That is a more complex implementation, but the complexity is deliberate: it buys correctness in a path where races can be devastating.

- `param` becomes an RCU pointer.

- The parameter block gets its own `rcu_head`.

- Accessors use protected dereference helpers.

- Replacements can preserve the existing schedule if no new entries are supplied.

- Old snapshots are retired with `call_rcu()` instead of being freed immediately.
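The replace sequence in those bullets can be sketched in user space:

1. Allocate and fill a new snapshot.
2. Swap it in while holding the writer-side lock.
3. Queue the old snapshot for deferred freeing rather than calling `free()` immediately.

A pthread mutex stands in for the action’s lock, and a simple pending list stands in for `call_rcu()`. `drain_after_grace_period()` is a hypothetical hook; a real RCU implementation would only run it after all pre-existing readers have finished. None of these names come from the patch.

```c
#include <assert.h>
#include <pthread.h>
#include <stdatomic.h>
#include <stdlib.h>

/* Hypothetical snapshot with linkage for the deferred-free list. */
struct gate_params {
    long cycle_time_ns;
    struct gate_params *next_retired;
};

static pthread_mutex_t gate_lock = PTHREAD_MUTEX_INITIALIZER;
static _Atomic(struct gate_params *) live_params;
static struct gate_params *retired;     /* protected by gate_lock */

/* call_rcu() stand-in: remember the block instead of freeing it now. */
static void defer_free(struct gate_params *old)
{
    assert(old != NULL);
    old->next_retired = retired;
    retired = old;
}

/* Replace path: build first, then swap under the lock, then retire. */
static int replace_params(long cycle_time_ns)
{
    struct gate_params *fresh = calloc(1, sizeof(*fresh));
    if (!fresh)
        return -1;
    fresh->cycle_time_ns = cycle_time_ns;   /* complete before publish */

    pthread_mutex_lock(&gate_lock);
    struct gate_params *old =
        atomic_exchange_explicit(&live_params, fresh, memory_order_acq_rel);
    if (old)
        defer_free(old);                    /* readers may still hold `old` */
    pthread_mutex_unlock(&gate_lock);
    return 0;
}

/* Free retired blocks; only valid once no reader can still see them.
 * Returns the number of blocks released. */
static int drain_after_grace_period(void)
{
    int freed = 0;
    pthread_mutex_lock(&gate_lock);
    while (retired) {
        struct gate_params *p = retired;
        retired = p->next_retired;
        free(p);
        freed++;
    }
    pthread_mutex_unlock(&gate_lock);
    return freed;
}
```

After three replacements, one snapshot is live and two are waiting on the pending list. Nothing is freed until the drain step runs.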

Why “replace” is the dangerous verb

In many kernel subsystems, replace operations are the most dangerous code paths, because they must reconcile old state, new state, and active users at the same time. In act_gate, the replacement path touches both policy and timing. It does not merely swap a configuration bit; it can alter the action’s live behavior. If the code does not capture a clean snapshot first, the running callback can end up with a moving target.

That is why the patch preserves the schedule when the replacement omits the entry list. The intent is to avoid unintentionally resetting or erasing effective behavior just because the caller is updating other fields. This detail is easy to miss, but it is a strong sign the fix is aimed at semantic continuity, not only memory safety (lkml.org).
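The preserve-on-omit rule can be sketched as a copy step in the replace path. If the caller supplies no new entry list, the new snapshot gets its own copy of the old entries. It does not get an empty list, and it does not share a pointer into the old snapshot, which will be freed after the grace period.

This is a hypothetical model: it uses an array instead of the kernel’s linked list, and the function names are illustrative. The source describes the list-copy change but not its exact mechanics, so a deep copy is an assumption here.

```c
#include <assert.h>
#include <stdlib.h>
#include <string.h>

/* Hypothetical schedule entry. */
struct gate_entry {
    int gate_state;       /* open (1) or closed (0) */
    long interval_ns;
};

/* Hypothetical snapshot that owns its entry array. */
struct gate_params {
    long cycle_time_ns;
    size_t num_entries;
    struct gate_entry *entries;
};

/* Build a new snapshot. `new_entries == NULL` means the caller omitted
 * the entry list: copy the old schedule so the replacement keeps it,
 * without sharing memory the old snapshot will later free. */
static struct gate_params *build_snapshot(const struct gate_params *old,
                                          long cycle_time_ns,
                                          const struct gate_entry *new_entries,
                                          size_t n_new)
{
    const struct gate_entry *src =
        new_entries ? new_entries : (old ? old->entries : NULL);
    size_t n = new_entries ? n_new : (old ? old->num_entries : 0);

    struct gate_params *p = calloc(1, sizeof(*p));
    if (!p)
        return NULL;
    p->cycle_time_ns = cycle_time_ns;
    if (n > 0) {
        assert(src != NULL);
        p->entries = malloc(n * sizeof(*p->entries));
        if (!p->entries) {
            free(p);
            return NULL;
        }
        memcpy(p->entries, src, n * sizeof(*p->entries));
    }
    p->num_entries = n;
    return p;
}

/* Release a snapshot and the entries it owns. */
static void free_snapshot(struct gate_params *p)
{
    if (p) {
        free(p->entries);
        free(p);
    }
}
```

Because each snapshot owns its entries, freeing the old snapshot later cannot pull the schedule out from under the new one.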

Why RCU Is the Right Tool

RCU is often the right answer when a kernel structure has many readers and relatively few writers, but the real value here is more nuanced. It is not simply about performance. It is about letting the code present a coherent “version” of state to callbacks that may be running in parallel, without forcing the entire subsystem into heavy locking that would hurt the fast path.

In the act_gate case, the readers are the timer callback and dump logic. They need to see a self-consistent snapshot of gate settings, interval lists, and timing metadata. With RCU, the code can create a new parameter object, swap the pointer, and let old readers finish naturally. That model scales much better than trying to make in-place mutation safe under all possible interleavings.

There is also a maintainability benefit. When data is split into a snapshot object with an explicit lifetime, future developers are less likely to accidentally add a new reader that assumes the state is eternal. The code changes in the header show that the helper accessors were intentionally rewritten to access the current snapshot in a controlled way, which is the sort of defensive API design that prevents the next race before it starts.
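The accessor idea can be sketched like this: callers never touch parameter fields through the action directly, but go through small helpers that first fetch the current snapshot. In the kernel, the two sides would look roughly like this:

- The writer-side variant would use a lock-checked dereference such as `rcu_dereference_protected()` with a lockdep condition.
- Readers would use `rcu_dereference()` inside a read-side critical section.

The user-space version below only models that split. The helper names and the `lock_held` flag are hypothetical and are not taken from the patch.

```c
#include <assert.h>
#include <pthread.h>
#include <stdatomic.h>

/* Hypothetical snapshot fields. */
struct gate_params {
    int priority;
    long cycle_time_ns;
};

struct gate_action {
    pthread_mutex_t lock;                  /* writer-side lock */
    int lock_held;                         /* crude stand-in for lockdep */
    _Atomic(struct gate_params *) param;   /* models `__rcu *param` */
};

/* Writer-side accessor: only legal while holding the lock, in the
 * spirit of rcu_dereference_protected(p, lockdep_is_held(&lock)). */
static struct gate_params *params_locked(struct gate_action *a)
{
    assert(a->lock_held);
    return atomic_load_explicit(&a->param, memory_order_relaxed);
}

/* Writer-side swap: caller holds the lock; returns the old snapshot,
 * which must be retired after a grace period, not freed here. */
static struct gate_params *gate_swap_locked(struct gate_action *a,
                                            struct gate_params *fresh)
{
    struct gate_params *old = params_locked(a);
    atomic_store_explicit(&a->param, fresh, memory_order_release);
    return old;
}

/* Reader-side accessor: one acquire load, like rcu_dereference(). */
static const struct gate_params *params_read(struct gate_action *a)
{
    return atomic_load_explicit(&a->param, memory_order_acquire);
}

/* Field helpers go through a snapshot, never through the action. */
static int gate_prio(struct gate_action *a)
{
    return params_read(a)->priority;
}

static long gate_cycle_time(struct gate_action *a)
{
    return params_read(a)->cycle_time_ns;
}
```

Each field helper fetches its own snapshot. A reader that needs several fields to agree should call `params_read()` once and read every field from that single pointer.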

The tradeoffs

RCU is not free. It adds conceptual complexity, and the code has to be careful about where and how it dereferences the pointer. Writers also need to manage deferred freeing, which means more plumbing and slightly more memory overhead while old snapshots wait out their grace periods. Still, for this class of bug, those costs are usually worth paying.

- Readers gain stable, coherent snapshots.

- Writers avoid unsafe in-place mutation.

- The subsystem keeps fast read-side behavior.

- Deferred reclamation reduces use-after-free risk.

- The code becomes easier to reason about under concurrency.
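The memory cost of deferred reclamation can be made concrete with a toy grace-period tracker. Retired snapshots stay allocated while any reader is inside a read section, and they are released once readers have drained.

This is a deliberately crude, hypothetical model. A single global reader counter is roughly in the spirit of a quiescent-state check, but it is not how the kernel’s RCU tracks grace periods, and it would starve reclamation under constant reader traffic. Every name in it is illustrative.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdlib.h>

/* Hypothetical retired snapshot. */
struct snapshot {
    long cycle_time_ns;
    struct snapshot *next;      /* retired-list linkage */
};

static atomic_int active_readers;
static struct snapshot *retired_list;   /* single writer assumed */
static int retired_count;

static void reader_enter(void) { atomic_fetch_add(&active_readers, 1); }
static void reader_exit(void)  { atomic_fetch_sub(&active_readers, 1); }

/* call_rcu() stand-in: the old snapshot keeps its memory for now. */
static void retire(struct snapshot *old)
{
    old->next = retired_list;
    retired_list = old;
    retired_count++;
}

/* Free retired snapshots only when no reader is in a read section.
 * Returns how many snapshots are still pending afterwards. */
static int try_reclaim(void)
{
    if (atomic_load(&active_readers) != 0)
        return retired_count;           /* grace period not over yet */
    while (retired_list) {
        struct snapshot *s = retired_list;
        retired_list = s->next;
        free(s);
        retired_count--;
    }
    assert(retired_count == 0);
    return retired_count;
}
```

While one reader is active, both retired snapshots remain allocated; that is the overhead described above. Once the reader exits, a single reclaim pass releases them.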

Why not just lock harder?

The intuitive answer might be “use a bigger lock,” but that is rarely elegant in hot-path kernel code. A coarser lock would make every read path wait for every update, even though most reads do not modify anything. The RCU model lets the common path remain lightweight while still ensuring the data does not disappear under active readers.

That matters in networking, where the kernel already spends a lot of effort keeping common operations fast and predictable. A scheduling action that slowed every dump or timer observation through over-serialization would carry its own performance and scalability costs. The chosen fix avoids that trap.
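The “don’t lock harder” argument can be sketched with pthreads:

- Reader threads take no lock at all.
- A single writer takes a lock that serializes it only against other writers.
- Each reader checks that the snapshot it loaded is internally consistent.

Freeing is postponed until all threads have been joined, which is a crude but safe substitute for an RCU grace period in a short demo. The thread counts and the `check == version * 7` invariant are arbitrary illustrative choices.

```c
#include <assert.h>
#include <pthread.h>
#include <stdatomic.h>
#include <stdlib.h>

#define N_READERS 4
#define N_UPDATES 1000
#define N_READS   100000

struct snapshot {
    long version;
    long check;                      /* invariant: check == version * 7 */
    struct snapshot *retired_next;   /* deferred-free linkage */
};

static _Atomic(struct snapshot *) live;
static pthread_mutex_t writer_lock = PTHREAD_MUTEX_INITIALIZER;
static struct snapshot *retired;     /* protected by writer_lock */
static atomic_long torn_reads;

static struct snapshot *make(long v)
{
    struct snapshot *s = malloc(sizeof(*s));
    assert(s != NULL);
    s->version = v;
    s->check = v * 7;
    s->retired_next = NULL;
    return s;
}

/* Readers never take writer_lock: one acquire load, then plain reads. */
static void *reader(void *arg)
{
    (void)arg;
    for (int i = 0; i < N_READS; i++) {
        const struct snapshot *s =
            atomic_load_explicit(&live, memory_order_acquire);
        if (s->check != s->version * 7)
            atomic_fetch_add(&torn_reads, 1);
    }
    return NULL;
}

/* The lock only serializes writers; readers are never blocked by it. */
static void *writer(void *arg)
{
    (void)arg;
    for (long v = 1; v <= N_UPDATES; v++) {
        struct snapshot *fresh = make(v);
        pthread_mutex_lock(&writer_lock);
        struct snapshot *old =
            atomic_exchange_explicit(&live, fresh, memory_order_acq_rel);
        old->retired_next = retired;   /* readers may still hold `old` */
        retired = old;
        pthread_mutex_unlock(&writer_lock);
    }
    return NULL;
}

/* Run readers and the writer concurrently; return torn reads observed.
 * Retired snapshots are freed only after every thread is joined. */
static long run_demo(void)
{
    pthread_t r[N_READERS], w;
    atomic_store(&live, make(0));
    atomic_store(&torn_reads, 0);
    for (int i = 0; i < N_READERS; i++)
        if (pthread_create(&r[i], NULL, reader, NULL) != 0)
            abort();
    if (pthread_create(&w, NULL, writer, NULL) != 0)
        abort();
    for (int i = 0; i < N_READERS; i++)
        pthread_join(r[i], NULL);
    pthread_join(w, NULL);

    while (retired) {
        struct snapshot *s = retired;
        retired = s->retired_next;
        free(s);
    }
    free(atomic_load(&live));
    return atomic_load(&torn_reads);
}
```

`run_demo()` should report zero torn reads. Snapshots are never modified after publication, so a reader can only ever see one complete version, without waiting on the writer’s lock.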

Patch History and Upstream Context

The patch did not appear out of nowhere. The public trail shows a v5 series in early February 2026, followed by additional revisions and stable-review discussion later in the month. That revision churn is normal for kernel fixes of this kind: reviewers often ask for cleaner naming, simpler control flow, or more precise timer handling before a patch is accepted. The end result is usually better for it, because the final backportable version is clearer about what changed and why.

The message itself is also revealing. It identifies the exact race window, references the original gate-control introduction commit, and says the bug is fixed by taking an RCU snapshot on replace. Maintainers write messages like this when they want backporters and stable trees to understand the intent immediately. Clear intent matters because small kernel fixes often need to survive being adapted across many codebases and release lines.

The patch also shows the Linux networking community’s preference for incremental hardening. Rather than redesign the gate action from scratch, the change respects the existing subsystem boundaries and makes the minimum necessary corrections. That conservative approach is why Linux can sustain a huge amount of networking functionality without constant regressions; the kernel tends to evolve the synchronization model before it tears up the feature itself.

Stable backport implications

Because the patch is marked for stable, operators should expect downstream vendor kernels to absorb it according to their normal servicing cadence. That matters for enterprise environments, where traffic-control features may be present in appliances, container hosts, edge systems, or specialized networking nodes even if they are not obvious in day-to-day administration. A kernel bug in this area can quietly affect infrastructure you do not think of as “doing scheduling.”

- The series went through multiple public revisions.

- The issue was flagged for stable backporting.

- Review comments focused on mechanics and clarity, not whether the race was real.

- The final fix emphasizes preserving behavior during replace operations.

- The upstream process strongly suggests the issue is production-significant.

Enterprise Impact

For enterprises, the real concern is not whether act_gate is a mainstream feature on every host. It is whether any managed Linux fleet runs kernels with this subsystem enabled and uses traffic-control features in production or lab environments. Networking and scheduling bugs are especially unwelcome in environments that depend on deterministic behavior, because the failure mode can range from intermittent anomalies to outright service interruption.

This matters most for infrastructure teams running custom shaping, deterministic networking, or automation that reconfigures queueing disciplines dynamically. A race in a control-plane replacement path is exactly the kind of bug that can surface under orchestration, maintenance windows, or rollback procedures. Those are the moments when a “rare” bug becomes a pager event.

Enterprises should also note that bugs like this can be difficult to reproduce from a security operations perspective. If a crash does not happen deterministically, logs may only show a transient kernel warning or an unexplained reset of timing behavior. That means the absence of a visible incident is not proof the bug is irrelevant; it may simply mean the triggering interleaving has not happened yet.

Operational priorities

The best response is the boring one. Inventory kernel versions, identify whether the relevant traffic-control code is present in deployed builds, and move vendor updates through the normal emergency-change process if your estate uses these features. For many organizations, the practical risk is less about external exploitation and more about the availability and trustworthiness of packet scheduling.

- Inventory affected kernels and vendor backports.

- Check systems using traffic shaping or deterministic scheduling.

- Prioritize hosts that dynamically replace qdisc/action state.

- Validate rollback and maintenance workflows for repeatability.

- Treat unexplained kernel warnings as potential early indicators.
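Part of that inventory step can be automated. The sketch below checks kernel build-configuration text for the act_gate option, and it rests on two assumptions:

- The Kconfig symbol is `CONFIG_NET_ACT_GATE`.
- The running kernel’s config is available as a file such as `/boot/config-<release>`. That is a common convention rather than a guarantee; some systems expose `/proc/config.gz` instead, and vendor kernels vary.

Presence of the option says nothing about whether a particular build already carries the fix. That still has to come from vendor advisories or changelogs.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

enum gate_cfg { GATE_ABSENT, GATE_BUILTIN, GATE_MODULE };

/* Scan kernel config text for the act_gate option. Assumes the symbol
 * is CONFIG_NET_ACT_GATE: "=y" means built in, "=m" means a loadable
 * module; "# ... is not set" or no line at all counts as absent. */
static enum gate_cfg scan_config_text(const char *text)
{
    static const char key[] = "CONFIG_NET_ACT_GATE=";
    assert(text != NULL);
    const char *line = text;
    while (line && *line) {
        if (strncmp(line, key, sizeof(key) - 1) == 0) {
            char v = line[sizeof(key) - 1];
            if (v == 'y')
                return GATE_BUILTIN;
            if (v == 'm')
                return GATE_MODULE;
            return GATE_ABSENT;
        }
        line = strchr(line, '\n');
        if (line)
            line++;
    }
    return GATE_ABSENT;
}

/* Scan a config file line by line. The path (for example
 * /boot/config-<release>) is a distribution convention, not a given.
 * Returns 0 and sets *out on success, -1 if the file cannot be opened. */
static int scan_config_file(const char *path, enum gate_cfg *out)
{
    char buf[512];
    FILE *f = fopen(path, "r");
    if (!f)
        return -1;
    *out = GATE_ABSENT;
    while (fgets(buf, sizeof(buf), f)) {
        enum gate_cfg c = scan_config_text(buf);
        if (c != GATE_ABSENT) {
            *out = c;
            break;
        }
    }
    fclose(f);
    return 0;
}
```

A fleet agent could call `scan_config_file()` with the config path for the running release and flag `GATE_BUILTIN` or `GATE_MODULE` hosts for closer patch review.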

Consumer and Edge Considerations

For consumer desktops, this is probably not a headline bug in the way a browser RCE would be. Most users do not deliberately configure gate actions or manipulate queueing disciplines by hand. But modern consumer and prosumer systems increasingly run VPNs, container stacks, developer tooling, and local labs that may pull in advanced network features indirectly.

Edge devices are a different story. Appliances, routers, embedded Linux systems, and industrial endpoints often use custom kernels or vendor configurations where traffic control is part of the product’s networking behavior. In those environments, even a narrow race condition can translate into packet loss, hangs, or reboot loops if configuration churn or watchdog-driven recovery logic triggers the bug.

The distinction is important because it affects how you think about exposure. A bug can be low-risk for a general-purpose laptop and still serious for a carrier appliance or a managed edge node. That is why kernel advisories often look deceptively narrow while still warranting broad attention from infrastructure operators.

Consumer-facing risk signals

Users should not panic, but they should recognize the patterns that usually separate theoretical kernel bugs from practical headaches. A patch should move up the queue if any of these apply:

- the device uses advanced networking features;
- it is frequently reconfigured;
- its vendor has a history of shipping kernel-based control features.

Otherwise, the fix can usually ride the normal update channel without dramatic disruption.

- Advanced networking features can bring hidden kernel exposure.

- Edge appliances often have less tolerance for scheduler instability.

- Reconfiguration-heavy environments are more likely to hit race windows.

- Consumers may only see the bug indirectly as a reboot or stall.

- Normal update discipline is usually sufficient for individual PCs.

Strengths and Opportunities

The good news is that this patch lands in a subsystem where the fix is conceptually strong, operationally modest, and likely to backport cleanly. It improves correctness without changing the broader feature set, and it sets up the code for safer future maintenance. The use of RCU here is also a positive signal of the kernel community’s ability to convert concurrency hazards into well-structured lifetime management.

- RCU-based snapshotting is a robust lifetime model.

- The fix preserves existing effective state when replace omits entries.

- The patch is small enough to be backportable.

- Reader paths remain fast, which is good for networking performance.

- The design reduces the chance of future race regressions.

- It strengthens both stability and security posture.

- The public revision trail shows active, healthy upstream scrutiny.

Risks and Concerns

The concern list is more practical than dramatic. First, kernel race conditions can be hard to observe before they bite, especially when they depend on a narrow timing window. Second, vendors may vary in how quickly they backport and disclose the fix, which can make fleet-wide exposure harder to assess than a simple version-number check would suggest.

- The bug may be hard to reproduce in testing.

- Fleet inventories may miss hidden kernel feature usage.

- Vendor backports can differ in timing and implementation.

- Edge and appliance kernels may lag behind upstream fixes.

- Operators may misread the issue as just a stability bug.

- Replacement-path races can be triggered by automation, not just humans.

- A lack of visible crashes does not mean the path is safe.

What to Watch Next

The next phase is about backports, vendor advisories, and whether downstream distributions keep the semantic details of the fix intact. That last part matters more than it sounds. When a patch is translated from upstream into a vendor kernel, the important question is not only whether the code compiles, but whether the lifetime rules and replacement semantics survive the journey unchanged. In this kind of bug, a poorly adapted backport can reintroduce the exact race it was meant to close.

It will also be worth watching whether other queueing or action modules receive similar scrutiny. Once maintainers tighten one lifetime edge in a networking subsystem, adjacent code often gets reviewed with fresh eyes. That is one of the hidden benefits of security work: a single fix can trigger a broader audit of assumptions that have gone unquestioned for years.

Finally, operators should expect the usual split between upstream certainty and downstream patch visibility. The public upstream message is clear, but the inventory story in each environment will depend on exactly which kernel trees, vendor builds, and appliance images are in use. That is always the tedious part of kernel security: the code-level truth is easier to establish than the estate-level truth.

- Watch for stable backports in major distribution kernels.

- Check vendor advisories for explicit mention of act_gate or traffic-control fixes.

- Verify whether your appliances or edge systems expose relevant networking features.

- Monitor whether adjacent net/sched components receive related hardening.

- Validate that replacement paths in automation do not trigger unintended behavior.

Source: MSRC Security Update Guide - Microsoft Security Response Center