The mainstream AI assistants sweeping into everyday workflows are now being built and operated by companies whose core businesses are, in many cases, advertising and attention monetization, and that structural fact reshapes every privacy, legal, and architectural decision engineers and IT leaders must make today. The SitePoint trend piece that flagged this shift is right to sound the alarm: when Google Gemini, Meta AI, Amazon’s Alexa+/Bedrock stack, and Microsoft Copilot become the dominant conversational interfaces, the incentives that built advertising monopolies follow the assistants, with real consequences for how user prompts, developer API calls, and corporate secrets are handled in practice.


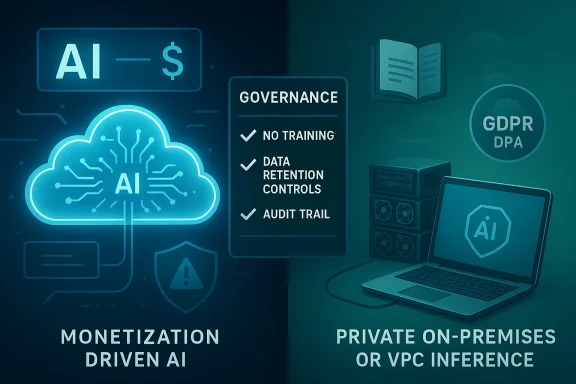


Source: SitePoint Trend Watch: The Rise of 'Ad-Company' AI Assistants

Why “ad-company” assistants matter now


The big cloud-and-consumer-platform players did not stumble into conversation-based AI by accident; they layered generative models on top of business models that already monetize attention and user intent. In absolute dollars and as a share of total revenue, advertising remains the financial engine for Alphabet and Meta, while Amazon’s ad business is already a large and rapidly growing part of its revenue. These revenue concentrations shape product decisions, from default telemetry settings to which product surfaces get prioritized for monetization. Alphabet’s filings and quarterly reports show advertising contributing the majority of its revenue (roughly three quarters of total revenue in recent filings), and Meta’s financials make the company structurally ad-driven.

That incentive alignment matters because the conversational format gives advertisers something richer than search queries: direct, explicit signals of intent, context, and preferences. Where search historically relied on clickstreams and keywords to infer interest, a multi-turn chat captures goals, constraints, and sentiment in the user’s own words, a far better feed for personalization and conversion optimization. It is the sort of dataset advertising systems would pay a premium for, and the companies that run the assistants are well positioned to use it to improve ad targeting and ad formats. The product-level choice to do so is not hypothetical: Google and other players have begun testing ad placements inside generative-result surfaces.

The SitePoint analysis lays out three core claims that guide this article:

- The most capable assistants are operated by companies with advertising incentives, and those incentives will shape how personal data is used.

- Current product and API terms do not reliably exclude ad personalization or downstream use of prompts for model improvement; in many cases, how that data is used falls outside developer control.

- A practical, privacy-forward counter-movement — local and open-weight models — is mature enough for many developer use cases and should be part of any risk calculus.

The Money: Follow the incentives (verified)

- Meta: Public filings and investor reporting confirm that advertising is the dominant revenue source for Meta’s Family of Apps; advertising drove the overwhelming majority of Meta’s revenue in recent years. This dependency is the factual basis for assuming ad-driven incentives will affect product design.

- Alphabet: Alphabet’s reports and SEC filings show advertising continues to make up roughly three-quarters of total revenue, with Search and YouTube the largest contributors. That level of concentration means product choices that increase ad inventory or ad relevance have outsized corporate incentives.

- Amazon: Amazon’s advertising business is now a very large line item, having recently crossed $50B in annual revenue, and it is among the fastest-growing parts of Amazon’s overall business. Its incentives to fold conversational shopping and assistant interactions into commerce monetization are strong.

How data actually flows (and where the risks are)

Multiple channels for prompt data

Major assistant stacks generally split conversational inputs into several possible downstream flows:

- Live inference for the immediate response (stateless processing).

- Logging and telemetry (for debugging, abuse detection, quality measurement).

- Retained history for personalization and “memory” features.

- Training pipelines for model improvement or fine-tuning (in whole or aggregated form).

- Integration with advertising stacks (to improve targeting, measure ad performance, or supply audience segments).
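Those five flows can be turned into a simple vendor inventory: record which flows each contract explicitly excludes, and treat everything it is silent on as possible. The Python sketch below is a hypothetical bookkeeping aid, not any provider's schema; `Flow`, `VendorProfile`, `exposed_flows`, and the example vendor name are illustrative assumptions.

```python
from dataclasses import dataclass, field
from enum import Enum


class Flow(Enum):
    """The five downstream flows a prompt can enter (per the list above)."""
    INFERENCE = "live inference"
    LOGGING = "logging and telemetry"
    MEMORY = "retained history / memory"
    TRAINING = "training and fine-tuning"
    ADVERTISING = "advertising integration"


@dataclass
class VendorProfile:
    """Hypothetical record of what a vendor's contract explicitly excludes."""
    name: str
    excluded_by_contract: set[Flow] = field(default_factory=set)


def exposed_flows(vendor: VendorProfile) -> list[Flow]:
    """Return every flow not explicitly excluded, in declaration order.

    Anything the contract is silent on is treated as possible, which is the
    conservative reading when terms are ambiguous.
    """
    return [flow for flow in Flow if flow not in vendor.excluded_by_contract]


if __name__ == "__main__":
    # Hypothetical enterprise tier: no training, no ad use, no memory.
    vendor = VendorProfile("example-enterprise-tier",
                           {Flow.TRAINING, Flow.ADVERTISING, Flow.MEMORY})
    for flow in exposed_flows(vendor):
        print(f"{vendor.name}: prompts may enter {flow.value}")
```

Treating contractual silence as exposure is deliberately conservative; it matches the observation that default tiers often permit retention for abuse detection and product improvement.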

Hosted APIs: your payload leaves your hands

Every hosted LLM API call includes a payload (prompt, system instructions, attachments) and metadata (client IP, token counts, timestamps). Unless the commercial terms explicitly prohibit training and require deletion, those elements are potential inputs to model-improvement or ad-personalization pipelines. While some cloud vendors (particularly on enterprise tiers) now offer explicit “no-training” or “no-retention” contractual options, the default consumer and developer tiers frequently permit logs to be retained for abuse monitoring and product improvement, and the line between "improvement" and "personalization" is blurry in real operations. Independent trackers that scan vendor terms show wide variance in how training rights are described: some vendors reserve explicit rights to use content to train or refine models, others do not. TOS-tracking services and vendor documentation confirm that the asymmetry is real and shifting.

Product surfaces and hidden multiplication of risk

Assistant integrations create unexpected pathways: a developer debugging proprietary authentication flows inside a workplace Copilot embedded in Teams may assume the content stays inside Office 365, but the underlying agent connectors or instrumented telemetry can route query text into model telemetry channels and, depending on contract terms, into improvement pipelines. Similarly, consumer assistants embedded in messaging apps (e.g., Meta’s assistant across Instagram and WhatsApp) may be covered by different policies than the host messaging service, blurring the boundaries of where opt-outs apply. Reporting and legal reviews show that these cross-surface differences are not edge cases; they are the rule.

Verification: public policies and real-world tests

- Google’s Gemini developer terms and privacy hub call out how Gemini-related activity and connected-app data can be used to “provide and improve Gemini” unless users change privacy controls — a practical admission that conversational inputs can feed improvement and product features. This is spelled out in developer-facing Additional Terms for the Gemini API.

- Meta has publicly discussed (and in some jurisdictions resumed) training models on publicly posted user content; the company’s moves and the attendant regulatory response have been widely reported. Those decisions are foundational: one of the largest suppliers of open-weight models (Meta’s Llama family) is also the company most dependent on ad revenue. That juxtaposition is strategic and consequential.

- Google has already piloted and rolled out ads inside AI Overviews / AI Mode in search — a concrete productization of ads in generative-AI outputs rather than only on traditional search result pages. If AI Overviews can host sponsored content today, generative chat responses are an obvious next surface.

- OpenAI recently began testing advertising in ChatGPT’s Free and lower-cost tiers as a funding mechanism, illustrating that even companies that initially avoided ad-based models are experimenting with them as they scale. OpenAI’s own announcement says the company intends to separate ads visually from answers and promises that advertisers will not see personal chat logs, but the move still shows that ad insertion into conversational surfaces is now mainstream.

The open-source / local inference counter-movement (what works today)

Running inference locally (or in tightly controlled enterprise enclaves) is no longer a fantasy for many developer use cases. Practical, deployable models and tooling include:

- Meta’s Llama 3.1 family (8B, 70B, 405B): the smallest member, Llama 3.1 8B, is explicitly targeted at local or private deployments and has been integrated into multiple local inference toolchains.

- Mistral’s 7B family — high-quality open-weight 7B-class models that run efficiently on consumer and datacenter hardware.

- Google’s Gemma 2 family (including Gemma 2 9B) — lighter, distilled models positioned for efficient local and edge inference. Gemma 2 9B is available for local inference and is packaged for common inference stacks.

- Compact vendor families such as Microsoft’s Phi-3 (e.g., Phi-3-mini at 3.8B parameters and Phi-3-small at 7B), which are explicitly offered for low-latency and on-device inference in some configurations.

- llama.cpp, vLLM, Mistral.rs, and higher-level distributions (Ollama, LM Studio, Jan.ai) have lowered the friction of spinning up a local assistant that never sends prompts to a third-party server. Community projects document how to download quantized models and run them with modest RAM and GPU requirements. For many developer tasks (code generation, summarization, internal data analysis), the capability gap between local 7–14B models and frontier 70B+ models is narrowing fast.
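To illustrate how little plumbing a local setup needs, here is a minimal Python sketch that sends a prompt to an Ollama server over its local REST API using only the standard library. It assumes Ollama is running on its default port (11434) and that a model tagged `llama3.1:8b` has already been pulled; the loopback guard and the helper names (`build_local_request`, `generate_locally`) are hypothetical additions, not part of Ollama.

```python
import json
import urllib.request
from urllib.parse import urlparse

# Assumption: a local Ollama server on its default port, with the
# "llama3.1:8b" model already pulled.
OLLAMA_URL = "http://localhost:11434/api/generate"
LOOPBACK_HOSTS = {"localhost", "127.0.0.1", "::1"}


def build_local_request(prompt: str, model: str = "llama3.1:8b",
                        url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming generate request, refusing non-loopback hosts."""
    host = urlparse(url).hostname
    if host not in LOOPBACK_HOSTS:
        # Guard against config drift pointing the client at a remote server.
        raise ValueError(f"refusing to send prompt to non-local host: {host}")
    body = json.dumps({"model": model, "prompt": prompt, "stream": False})
    return urllib.request.Request(
        url,
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate_locally(prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to the local server and return the generated text."""
    request = build_local_request(prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        # With "stream": False, Ollama returns one JSON object whose
        # "response" field holds the full completion.
        return json.loads(response.read())["response"]
```

With the server running, `generate_locally("Summarize this internal incident report: ...")` returns the model's text without the prompt leaving the machine. Rejecting non-loopback hosts is a cheap safeguard against a configuration change silently pointing the client at a remote endpoint.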

A practical decision matrix for engineers and IT leaders

When evaluating whether to use a hosted assistant or a local/self-hosted model, consider this prioritized checklist:

- Data sensitivity and regulation

- If prompts may contain regulated data (HIPAA PHI, PCI, GDPR personal data, or data covered by contract), default to models and infrastructure that guarantee no third‑party training or retention without explicit contracting. GDPR Article 28’s controller/processor rules mean you may be contractually required to limit where personal data flows.

- Business model and brand trust

- If trust and confidentiality are part of the value proposition (e.g., a B2B product promising privacy), prefer on-prem or dedicated VPC-hosted inference with contractual no-training clauses.

- Capability vs privacy trade-offs

- For high-volume, non-sensitive tasks where the frontier model yields real efficiency gains, a hosted API with enterprise-tier contractual protections and a DPA may be acceptable. For sensitive or legally consequential tasks, use private models or strong contractual controls.

- Operational cost and resilience

- Self-hosted models require investment in GPU/instance capacity, quantization expertise, and lifecycle management for model updates and safety patches. But for many teams those costs are now comparable to the long-term risk of a compliance incident.

- Contractual hygiene

- Always review the provider’s API terms and additional terms. Avoid default consumer tiers in production. Negotiate explicit "no use for training" clauses and audit rights where regulation requires them. Use vendor DPAs and Standard Contractual Clauses (SCCs) where necessary. TOS trackers make the presence or absence of training rights easy to check, which makes it a good initial filter when short-listing providers.
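One way to make this checklist enforceable is to encode it as a routing function that every new use case passes through. The sketch below is a hypothetical policy, assuming four sensitivity tiers (the same public/internal/confidential/regulated split used in the final recommendations) and three deployment targets; the names and thresholds are illustrative, not a compliance standard.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    """Hypothetical data tiers, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    REGULATED = 3


def choose_deployment(sensitivity: Sensitivity,
                      has_no_training_contract: bool,
                      trust_is_differentiator: bool) -> str:
    """Return "self-hosted", "contracted-api", or "hosted-api".

    has_no_training_contract should be True only when a signed DPA and an
    explicit no-training clause are in place for the provider in question.
    """
    if sensitivity >= Sensitivity.CONFIDENTIAL or trust_is_differentiator:
        # Sensitive data or a privacy promise: no hosted API without a contract.
        return "contracted-api" if has_no_training_contract else "self-hosted"
    # Non-sensitive work: a hosted API is acceptable (still avoid consumer tiers).
    return "hosted-api"


if __name__ == "__main__":
    for tier in Sensitivity:
        print(tier.name, choose_deployment(tier, False, False))
```

Keeping the policy in one small function makes it reviewable and testable; when a contract or regulation changes, the change lands in one place rather than in scattered conditionals across services.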

Concrete technical mitigations and an implementation checklist

- Data classification first

- Implement a mandatory prompt-classification gate in your application: tag or block inputs containing PHI, PII, credentials, secrets, or competitive intel. Treat those categories as non-transmittable to third-party APIs by default.

- Use dedicated enterprise contracts / DPAs

- Use contracted enterprise offerings that explicitly state inputs will not be used to train public models and that data-retention windows meet your compliance obligations. Review the provider’s API Additional Terms, and require Data Processing Agreements aligned with GDPR Article 28 where applicable.

- Prefer private endpoints and VPC egress rules

- Where possible, use private network connectors (VPC endpoints, PrivateLink) and enforce data egress policies so that only approved services can receive prompts.

- Local-first for regulated workloads

- For PHI, regulated financial data, or proprietary algorithms, run inference locally or in a customer-controlled cloud project (for example, in your own cloud account, using managed services that commit to not using customer inputs for training). AWS Bedrock’s enterprise model terms and partner documentation can be used as evidence of a no-training default for enterprise customers, but insist on contractual commitments.

- DLP, pre-send redaction and watermarking

- Use automated DLP to redact or token‑map sensitive fields before sending prompts. Where full redaction is impractical, apply pseudonymization and link‑tables that remain on your side.

- Logging, audit trails, and incident playbooks

- Maintain immutable audit trails for every prompt and output, including which policy branch allowed or blocked a transmission. Test incident response with tabletop exercises that assume prompt exfiltration.

- Test and monitor vendor behavior continuously

- Periodically re-check vendor policies and terms. Providers change legal language and product defaults; contract negotiation must include change-management clauses and notification requirements.
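The classification gate and the pre-send pseudonymization step can be combined into one small middleware function. This Python sketch assumes two illustrative block rules (an AWS-style access key ID and a PEM private-key header) and pseudonymizes only e-mail addresses; the rule set, token format, and function names are hypothetical, and a production DLP engine would need far broader detection.

```python
import re

# Illustrative block rules only; real DLP needs much broader coverage.
BLOCK_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
}
EMAIL = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")


class BlockedPrompt(Exception):
    """Raised when a prompt contains a category that must never leave."""


def pseudonymize(prompt: str, link_table: dict[str, str]) -> str:
    """Replace e-mail addresses with tokens; the mapping stays on your side."""
    reverse = {original: token for token, original in link_table.items()}

    def swap(match: re.Match) -> str:
        value = match.group(0)
        if value not in reverse:
            # Sequential tokens keep repeated mentions consistent.
            token = f"<EMAIL_{len(link_table) + 1}>"
            link_table[token] = value
            reverse[value] = token
        return reverse[value]

    return EMAIL.sub(swap, prompt)


def gate(prompt: str, link_table: dict[str, str]) -> str:
    """Block forbidden categories, then pseudonymize what may be sent."""
    hits = [name for name, rx in BLOCK_PATTERNS.items() if rx.search(prompt)]
    if hits:
        raise BlockedPrompt("prompt blocked: " + ", ".join(hits))
    return pseudonymize(prompt, link_table)


def restore(text: str, link_table: dict[str, str]) -> str:
    """Map tokens in a model response back to original values, locally."""
    for token, original in link_table.items():
        text = text.replace(token, original)
    return text
```

The link table never leaves your side: only tokens such as `<EMAIL_1>` reach the provider, and `restore` maps them back in the response. Credentials are blocked outright rather than pseudonymized, in line with treating secrets as non-transmittable by default.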

Strengths of the ad-company assistant model (what’s good)

- Rapid capability and scale: These platforms can host the largest models and integrate multimodal features, agents, and long-context memory at global scale.

- Product polish and developer velocity: SDKs, connectors, and prebuilt integrations accelerate time-to-market for many applications.

- Rich personalization (when legitimately desired): If used responsibly and with consent, conversational signals can power genuinely helpful, contextual personalization.

Primary risks and unresolved problems (what to watch out for)

- Ambiguous data-use language: Default terms and small-print additional terms can create downstream exposure; auditability is often lacking.

- Ads and monetization creep: Ad placements inside answers and overviews are already being tested and rolled out; the notion of a “neutral” assistant is eroding as monetization surfaces multiply. This creates subtle incentives to surface vendor-friendly content or sponsored results at points of high intent.

- Compliance slippage: Controller obligations under regulations like GDPR do not disappear because an assistant is convenient; third-party retention and training rights can convert a controller’s compliance risk into liability.

- Invisible third-party links: Enterprise connectors and browser extensions have already been shown to capture and exfiltrate chat text in real deployments; this attack surface should be treated as operationally real, not theoretical.

What to watch next (regulatory and product signals)

- Ads inside conversational assistants will expand: Google has tested ads in AI Overviews and AI Mode; OpenAI has begun ad tests in ChatGPT; the pattern is clear. Watch whether major assistants begin blending shopping/affiliate placements, performance-max-style ads, or sponsored suggestions into core answers.

- Contractual change windows: Major providers continuously update their API Additional Terms and privacy policies. Organizations should monitor legal changes and have dedicated change-control triggers in vendor management workflows.

- Regulatory clarifications: Expect regulators (data protection authorities, competition authorities) to ask whether conversational signals used for ad targeting require additional safeguards; GDPR supervisory authorities, and bodies implementing the EU AI Act, may issue guidance that firms should build into vendor DPAs and procurement policy. For GDPR specifically, Article 28 obligations and processor-controller definitions are central to vendor selection. (gdprinfo.eu/gdpr-article-28-explained-processor-obligations-contracts-and-5-practical-examples)
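Change-control triggers can start very simply: fingerprint the vendor terms you last reviewed, flag any difference, and check whether the change arrived inside your contractual notice window. The Python sketch below assumes you fetch and store the terms text yourself; `TermsSnapshot`, `check_terms`, and the 30-day default (the low end of the 30–90 day window suggested in the recommendations) are hypothetical.

```python
import hashlib
from dataclasses import dataclass
from datetime import date


@dataclass
class TermsSnapshot:
    """Hypothetical record of the vendor terms you last reviewed."""
    vendor: str
    sha256: str
    reviewed_on: date


def fingerprint(terms_text: str) -> str:
    """Hash whitespace-normalized text so pure reflows do not raise alerts."""
    normalized = " ".join(terms_text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def check_terms(snapshot: TermsSnapshot, current_text: str,
                announced_on: date, effective_on: date,
                required_notice_days: int = 30) -> list[str]:
    """Return change-control findings; an empty list means no change."""
    if fingerprint(current_text) == snapshot.sha256:
        return []
    findings = [f"{snapshot.vendor}: terms changed since {snapshot.reviewed_on}"]
    notice_days = (effective_on - announced_on).days
    if notice_days < required_notice_days:
        findings.append(f"{snapshot.vendor}: only {notice_days} days' notice, "
                        f"contract requires {required_notice_days}; escalate")
    return findings
```

Normalizing whitespace before hashing keeps reflowed pages from generating noise, but any wording change, however small, still triggers a review, which is the intended bias for data-use terms.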

Final recommendations — an operational playbook

- Immediately classify all AI-related use cases by sensitivity (public, internal, confidential, regulated). Treat regulated as “no public cloud APIs without contract.”

- For any production use that touches confidential or regulated data, require a vendor data processing addendum that explicitly disclaims training on your inputs, includes audit rights, and mandates deletion/return on termination.

- Where trust is a differentiator for your product, invest in local or VPC-hosted inference workflows: run 7–14B open-weight models for primary inference and use hosted state-of-the-art models only as controlled fallbacks for non-sensitive work.

- Implement input-side DLP and pseudonymization as standard middleware before any external call. Log everything immutably for forensic audits.

- Add vendor-policy monitoring to your procurement lifecycle and require 30–90 days’ advance notice of any terms change that affects data‑use, with the right to exit without penalty.

- Train engineers and product managers on the business implications (not only the technical ones): a customer trust incident caused by improvident AI prompts will cost more than the engineering lift needed to run models privately.
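For the "log everything immutably" recommendation, a hash chain is a minimal way to make tampering detectable: each record carries the hash of the previous one, so altering, reordering, or deleting an earlier entry breaks verification. This Python sketch is in-memory and hypothetical (class and field names are illustrative); it records a SHA-256 of each prompt rather than the prompt itself, on the assumption that any raw text lives in a separately access-controlled store. Real immutability, including protection against truncating the tail of the log, still requires write-once storage or an external anchor.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only, hash-chained record of prompt-gate decisions (in memory)."""

    GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(record: dict) -> str:
        # Hash a canonical JSON encoding of everything except the hash itself.
        body = {k: v for k, v in record.items() if k != "hash"}
        canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def append(self, prompt: str, policy_branch: str, destination: str) -> dict:
        """Record one decision, chained to the previous entry."""
        record = {
            "ts": datetime.now(timezone.utc).isoformat(),
            # Store a digest, not the prompt, so the log is not a new leak.
            "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
            "policy_branch": policy_branch,  # which rule allowed or blocked it
            "destination": destination,
            "prev": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        record["hash"] = self._digest(record)
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """False if any entry was altered, reordered, or removed mid-chain."""
        prev = self.GENESIS
        for record in self.entries:
            if record["prev"] != prev or record["hash"] != self._digest(record):
                return False
            prev = record["hash"]
        return True
```

In practice each call to the pre-send gate would append one record, including blocked attempts, so incident responders can reconstruct which policy branch let a given prompt through.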

The arrival of powerful, conversational assistants from companies that also sell the ads and audience segments those assistants will inevitably inform represents one of the largest product-policy junctions of the decade. The choice facing technical teams is concrete and architectural, not merely ethical: design for trust and compliance now, or accept the legal and reputational costs later. For many developer and enterprise use cases, the incremental operational cost of local-first inference, contractual rigor, and a disciplined prompt governance program is modest compared with the downside of an exposed data pipeline feeding a monetization engine you do not control. The technical community’s decisions in the next 12–24 months will determine whether conversational AI becomes an ecosystem built around user agency — or whether it becomes yet another attention surface built primarily to serve advertisers.

Key references and verification anchors used in this analysis include vendor policy documents, recent company filings and announcements, regulatory summaries, and independent reporting noting ad rollouts and vendor training policies. The revenue concentration figures and major policy changes cited here are corroborated by public filings and contemporary reporting; readers and practitioners should re-check the vendor terms and regulatory guidance for the exact contractual language that will apply to specific deployments before making irrevocable design decisions.
