Microsoft’s Azure has quietly moved from infrastructure runner-up to the fulcrum of Microsoft’s AI and cloud strategy — and that shift matters for enterprises, CIOs, and investors because Azure is now where Microsoft converts AI demand into recurring, high‑margin revenue.

Source: AD HOC NEWS Why Microsoft Azure Is Quietly Becoming the Most Important Cloud Bet on Wall Street

Background / Overview

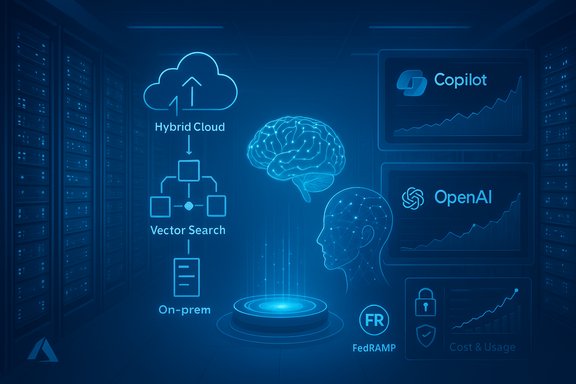

Azure began life as Microsoft’s response to the public‑cloud wave, built to host Windows‑centric workloads and to extend familiar Microsoft tools into the cloud. Over the last several years, Microsoft has deepened that integration: Azure now hosts not only VMs and databases, but also production‑grade AI services, the Azure OpenAI Service, enterprise copilots, and a hybrid management fabric that keeps regulated customers comfortable running sensitive workloads outside public cloud datacenters. The result is a platform that solves both the traditional “lift and shift” cloud economics problem and the newer barrier to AI adoption: infrastructure and integration complexity. Microsoft’s own reporting shows the impact: Azure and related cloud services now account for tens of billions in annual revenue and continue to grow at high double‑digit rates, a material factor behind Microsoft’s valuation and free‑cash‑flow profile. Management has explicitly tied capex and datacenter investments to supporting AI workloads, even while pointing to efficiency gains in Azure.

What problem does Azure actually solve?

1. Legacy IT bloat and predictable economics

Many large firms still run mission‑critical apps in on‑premises data centers. Those environments are capital‑intensive, slow to scale, and often fragmented across regions and business units. Azure provides elastic compute and storage, managed platform services (databases, identity, security), and enterprise SLAs, all on a pay‑as‑you‑go model. For enterprises that have standardized on Microsoft Office, Windows, and Active Directory, Azure offers a natural, lower‑friction migration path.

2. The AI gap: talent, tooling, and trustworthy models

Deploying large language models and advanced analytics at scale isn’t just about GPUs; it’s also about data governance, retrieval pipelines, model hosting, monitoring, and cost control. Azure’s suite of AI services, including Azure OpenAI Service, Azure AI Search (vector search and RAG support), and prebuilt Copilot experiences, addresses this stack by packaging complex plumbing as consumable services. That reduces time to production for AI use cases like fraud detection, supply‑chain forecasting, and conversational interfaces.

Why Azure’s position is sticky: the competitive moat

Azure’s stickiness is not accidental; it emerges from three interlocking advantages that raise switching costs and deepen customer relationships.

- Deep enterprise footprint. Microsoft owns the client and collaboration layers (Windows, Microsoft 365, Teams, and Dynamics), which creates natural integration points and identity surfaces (Azure AD, now Microsoft Entra ID). Enterprises that bind identity, data, and productivity tools into the cloud make Azure the default backend for those experiences.

- Hybrid and regulated‑industry capabilities. Azure Arc and Azure Government provide management planes, compliance controls, and FedRAMP authorizations that appeal to banks, regulated industries, and governments. This hybrid strength means customers can keep sensitive systems in‑place while still leveraging cloud AI and orchestration.

- AI‑first product synergies. Microsoft’s partnership and commercial arrangements with OpenAI — including exclusive Azure hosting rights for OpenAI APIs and deep integration in Copilot products — funnel AI workloads toward Azure. When enterprise copilots and developer tools are built on Microsoft platforms, the infrastructure naturally follows.

Market context: scale, share, and growth

Azure is competing in a market dominated by hyperscalers. Industry trackers show the cloud infrastructure market continuing to expand, with the big three (AWS, Microsoft Azure, Google Cloud) holding a dominant combined share. Recent independent market analyses indicate Azure capturing a substantial portion of growth as AI workloads scale, with some reports showing Azure growth rates materially above the long‑term average for cloud infrastructure.

Microsoft’s earnings disclosures corroborate that Azure is a major growth engine: recent fiscal reporting disclosed that Azure and other cloud services surpassed $75 billion in a fiscal year and that the Intelligent Cloud segment continues to grow at strong double‑digit percentages, with Microsoft explicitly attributing a material portion of growth to AI‑related workloads and capacity constraints. Management has been transparent that gross margins in cloud can be pressured in the short term by AI infrastructure scale‑up even as long‑run efficiency improves.

The product story: what’s new and why it matters

Azure OpenAI Service and the OpenAI partnership

Microsoft’s commercial relationship with OpenAI gives Azure unique positioning: OpenAI models and some commercial APIs are available through Azure OpenAI Service, enabling enterprises to use leading foundation models within Azure’s compliance and data governance framework. The partnership includes revenue sharing and access arrangements that make Azure a primary go‑to for customers wanting OpenAI capabilities behind enterprise controls. This is a major differentiator for customers who want top‑tier models plus enterprise security.

Azure AI Search, vectors, and Retrieval‑Augmented Generation (RAG)

Azure AI Search has evolved to support large vector indexes, hybrid search (text + vector), and optimizations for RAG patterns. These capabilities let organizations keep knowledge bases in Azure and perform low‑latency semantic lookups that feed generative models, a practical requirement for many enterprise copilots and knowledge retrieval systems. Microsoft has explicitly focused on scaling vector capacity and lowering inference costs for customers building production RAG pipelines.

Copilot and vertical integrations

Microsoft has pushed Copilot experiences across Microsoft 365, Dynamics, GitHub, and line‑of‑business apps. Those copilots, when they reach enterprise customers, generate additional Azure consumption: data extraction, contextual search, and model inference. Microsoft has also prioritized regulatory approvals (FedRAMP High for Azure OpenAI Service in Azure Government) to enable government and defense customers to use these capabilities.

Financial implications: why Wall Street is watching Azure

Azure’s economics convert usage into recurring revenue. Unlike one‑time software license sales, cloud consumption scales with customer activity, often producing high‑visibility, recurring cash flows. That makes Azure a “compounder” in the eyes of many investors: as enterprises move more workloads and attach AI features to core applications, Microsoft captures incremental revenue that compounds over time.

A few concrete financial dynamics matter:

- Revenue leverage. As customers add AI features and scale RAG or fine‑tuning workloads, incremental revenue can outpace incremental operating costs, improving gross margin dollars — though margin percentages can wobble while Microsoft scales AI infrastructure.

- Capex timing and capacity constraints. Microsoft has signaled elevated datacenter capex to support AI workloads and has at times been capacity constrained. That means growth can be lumpy based on when new capacity comes online, and near‑term margins can be affected as AI hardware and power/operational costs ramp. Investors value the long‑run payoff but must accept short‑term capital intensity.

- Optionality in monetization. Beyond raw infrastructure, Microsoft can monetize AI via bundled subscriptions (Copilot seats), vertical solutions, and developer platforms — increasing revenue per customer. This optionality is attractive to investors who prefer platforms that can expand monetization in multiple ways.
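The distinction in the first bullet, gross margin dollars rising while gross margin percentage slips, can be checked with simple arithmetic. The sketch below uses invented figures purely for illustration; the `Period` class and every number in it are assumptions, not values from Microsoft's filings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Period:
    """Revenue and cost of revenue for one period (hypothetical units)."""
    revenue: float
    cost_of_revenue: float

    @property
    def gross_margin_dollars(self) -> float:
        return self.revenue - self.cost_of_revenue

    @property
    def gross_margin_pct(self) -> float:
        return 100.0 * self.gross_margin_dollars / self.revenue


# Illustrative numbers only; they are NOT taken from Microsoft's reporting.
before = Period(revenue=100.0, cost_of_revenue=30.0)
# During an AI build-out, revenue grows 30% while costs grow 50%.
after = Period(revenue=130.0, cost_of_revenue=45.0)

for label, period in (("before", before), ("after", after)):
    print(f"{label}: margin dollars={period.gross_margin_dollars:.1f}, "
          f"margin pct={period.gross_margin_pct:.1f}%")
```

In this made-up case margin dollars rise from 70 to 85 while the margin percentage falls from 70% to roughly 65%, which is the pattern the bullets describe: more absolute profit alongside a lower percentage while infrastructure costs ramp.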

Wall Street sentiment and the valuation question

Investor sentiment broadly reflects a “cautiously bullish” posture: analysts recognize Azure as the core secular growth narrative, but valuations are priced for execution. Many research desks emphasize Azure as a durable cash‑flow engine that benefits regardless of which AI apps ultimately win the market, a “picks and shovels” play on AI adoption. At the same time, some analysts caution that trading at premium multiples leaves little room for execution missteps or a prolonged cloud optimization cycle among customers. Independent analyst notes and media coverage echo both points: Azure is the engine, but the market narrative is not risk‑free.

Important caveat: Any specific short‑term price targets, simulated market snapshots, or hypothetical 5‑day/52‑week calculations included in third‑party summaries should be treated as illustrative unless verified against live market data. Market prices and analyst models change frequently; investors must check current quotes and firm research before acting. (This article does not provide investment advice.)

Risks and the downside scenarios

Azure’s strength is also the source of its primary risks. These are the scenarios that could pressure Microsoft’s stock or Azure’s growth trajectory.

- Regulatory and antitrust scrutiny. Bundling cloud services with desktop and productivity suites invites regulatory attention. Remedies or restrictions that limit Microsoft’s ability to preferentially integrate Azure with Microsoft 365 or to obtain preferential model access could dent future growth. Regulators in the US, EU, and other jurisdictions have signaled interest in market concentration around AI and cloud.

- Cloud optimization cycles. Enterprises periodically optimize cloud spend, re‑architect to reduce costs, or shift workloads to other clouds for mix reasons. A prolonged optimization or macro slowdown could slow Azure consumption even if long‑term cloud adoption remains intact.

- Infrastructure cost dynamics. AI workloads are GPU‑heavy and power‑sensitive. If hardware or energy costs spike, or if Microsoft misjudges capacity timing, gross margins could be pressured more than expected. Microsoft has disclosed that gross margin percentage was impacted by scaling AI infrastructure even as gross margin dollars rose.

- Competitive moves. AWS, Google Cloud, and other providers are also stacking AI capabilities, signing large enterprise deals, and offering differentiated models and tooling. Market share pressures and competitive pricing could compress Microsoft’s growth corridor in some enterprise verticals. Independent market trackers show intense competition among the hyperscalers for the expanding AI workload pie.

- Partnership concentration risk. Microsoft’s OpenAI relationship is a strength, but it also concentrates strategic dependency. Public reporting and industry leaks indicate Microsoft is building in‑house model capabilities and chip clusters as a hedge, a prudent diversification that nonetheless signals shifting execution complexity. Any change in commercial terms with OpenAI could have revenue implications. Flag: some details about internal plans and leaked internal comments are difficult to fully verify and should be treated cautiously.

What this means for different stakeholders

For CIOs and IT leaders

Azure provides a pragmatic path to deploy enterprise AI without building the entire stack internally. Key evaluation points should be:

- Security, compliance, and FedRAMP/Government authorizations where applicable.

- Total cost of ownership for inference and vector storage, not just training.

- Integration with identity (Azure AD, now Microsoft Entra ID), data platforms, and existing Microsoft 365 investments.

- Hybrid management needs via Azure Arc for regulated workloads.
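The total-cost-of-ownership point above lends itself to a back-of-the-envelope model. The following is a minimal sketch, assuming token-metered inference pricing and a flat per-GB storage rate; the function names, request volumes, and all prices are hypothetical placeholders rather than Azure list prices, and the storage figure covers raw float32 vectors only (no index overhead or replicas).

```python
def monthly_inference_cost(requests: int, input_tokens: int, output_tokens: int,
                           price_in_per_1k: float, price_out_per_1k: float) -> float:
    """Token-metered cost: requests x (input charge + output charge)."""
    per_request = ((input_tokens / 1000) * price_in_per_1k
                   + (output_tokens / 1000) * price_out_per_1k)
    return requests * per_request


def raw_vector_storage_gb(num_vectors: int, dimensions: int,
                          bytes_per_value: int = 4) -> float:
    """Raw vector footprint in GB (10^9 bytes); ignores index overhead."""
    return num_vectors * dimensions * bytes_per_value / 1e9


# All prices below are hypothetical placeholders, NOT Azure list prices.
inference = monthly_inference_cost(
    requests=1_000_000,
    input_tokens=2_000,   # RAG prompts carry retrieved context, inflating input
    output_tokens=500,
    price_in_per_1k=0.001,
    price_out_per_1k=0.002,
)
storage_gb = raw_vector_storage_gb(num_vectors=10_000_000, dimensions=1_536)
storage = storage_gb * 0.10  # hypothetical price per GB-month

print(f"inference/month: {inference:,.2f}")
print(f"vector storage/month: {storage:,.2f} ({storage_gb:.2f} GB raw)")
```

Under these assumed inputs, recurring inference spend far exceeds raw vector storage, which is why the list singles out inference rather than training or storage alone. Real ratios depend entirely on actual pricing, traffic, and index configuration.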

For enterprise developers and data teams

Azure’s tooling around RAG, vector stores, and managed model hosting lowers friction for productionizing generative AI. Engineers should focus on:

- Building robust retrieval pipelines (indexing, vectorization).

- Instrumenting inference cost monitoring and autoscaling.

- Designing for data minimization and privacy constraints where necessary.
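To make the retrieval-pipeline item concrete, here is a minimal, dependency-free sketch of indexing, vectorization, and hybrid (keyword + vector) ranking. It does not call any Azure SDK. The character-trigram "embedding" is a toy stand-in for a real embedding model, the three documents are invented, and reciprocal rank fusion is used here as one common way to merge ranked lists; all function names are illustrative assumptions.

```python
import math
from collections import Counter


def trigram_vector(text: str) -> Counter:
    """Toy 'embedding': character-trigram counts (stand-in for a real model)."""
    s = text.lower()
    return Counter(s[i:i + 3] for i in range(len(s) - 2))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[gram] for gram, count in a.items())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def keyword_ranking(query: str, docs: dict) -> list:
    """Rank by number of shared whole words; documents with no match are dropped."""
    q = set(query.lower().split())
    scores = {d: len(q & set(text.lower().split())) for d, text in docs.items()}
    hits = [d for d in scores if scores[d] > 0]
    return sorted(hits, key=lambda d: (-scores[d], d))


def vector_ranking(query: str, index: dict) -> list:
    """Rank every indexed document by cosine similarity to the query vector."""
    qv = trigram_vector(query)
    return sorted(index, key=lambda d: (-cosine(qv, index[d]), d))


def reciprocal_rank_fusion(rankings: list, k: int = 60) -> list:
    """Merge ranked lists: each list contributes 1 / (k + rank) per document."""
    fused = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] = fused.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(fused.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical knowledge base, invented for this example.
DOCS = {
    "d1": "fraud detection rules for card payments",
    "d2": "supply chain forecasting with seasonal demand",
    "d3": "conversational interface design for support agents",
}
# Indexing step: vectorize each document once, ahead of query time.
INDEX = {doc_id: trigram_vector(text) for doc_id, text in DOCS.items()}


def hybrid_search(query: str, top_n: int = 3) -> list:
    rankings = [keyword_ranking(query, DOCS), vector_ranking(query, INDEX)]
    return reciprocal_rank_fusion(rankings)[:top_n]


results = hybrid_search("forecast supply demand")
for doc_id, score in results:
    print(doc_id, round(score, 4), DOCS[doc_id])
```

The keyword side misses "forecast" versus "forecasting" because it matches whole words, while the trigram side picks up the overlap; fusing the two rankings is the basic idea behind hybrid retrieval. In production the toy vectorizer would be replaced by a managed embedding model and the in-memory dictionary by a search index.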

For investors

Azure’s growth supports Microsoft’s long‑term cash generation thesis. Investment decisions hinge on:

- Time horizon: Azure’s compounding benefits accrue over multiple years; short‑term traders must watch capex rhythms and regulatory headlines.

- Valuation discipline: Microsoft often trades at a premium for quality; be mindful of execution risk and limited margin for error priced into multiples.

- Diversification: Consider position sizing relative to other tech exposures given concentration in AI/cloud narratives.

Red flags and unverifiable claims to watch

- Beware of precise short‑term price targets or simulated trading snapshots that are not immediately verifiable against live market data. Those are useful for illustration but can mislead if treated as current facts.

- Internal leak reports about in‑house chip clusters and model roadmaps often surface before formal corporate announcements; treat them as informative but tentative until management confirms specifics.

- Any claim that “Azure is growing X% because of Y” should be cross‑checked against quarterly reports and commentary from Microsoft’s investor relations for context on capacity timing and recurring vs. one‑time contract recognition.

Strategic takeaways — how to think about Azure in 3‑5 years

- Azure is not simply an IaaS offering anymore; it is a full‑stack AI platform that binds together identity, productivity, data, and inference. That integration converts one‑time migrations into ongoing consumption.

- The AI era magnifies cloud winners because models need low‑latency access to enterprise data, governance controls, and scale — all things hyperscalers are best positioned to provide. Azure’s integration with Microsoft’s productivity stack gives it a differentiated path for corporate adoption.

- From a valuation perspective, Azure justifies a premium only if Microsoft continues to execute on capacity expansion, cost efficiencies, and the enterprise monetization of Copilot and vertical AI solutions. Execution risk and regulation are the principal “watch items.”

Practical recommendations (enterprise and investor lenses)

- For enterprises starting AI pilots: prototype with Azure’s managed RAG and Copilot building blocks to accelerate time to value, but design contracts and SLAs that allow control over costs and data residency.

- For IT procurement: negotiate cloud migration contracts that include capacity commitments, price protections, and exit clauses in case of rapid optimization cycles.

- For investors: treat Microsoft as a long‑term core holding if the goal is durable exposure to enterprise AI and cloud, but rebalance if valuation cushions become thin or if regulatory developments materially change the bundling dynamics.

Conclusion

Azure’s metamorphosis into Microsoft’s AI and cloud backbone is both strategic and financial: the platform pairs Microsoft’s entrenched enterprise software footprint with modern AI infrastructure and managed services, producing recurring consumption revenue and optionality for higher monetization per customer. That combination is why Wall Street is increasingly viewing Azure as the central bet underneath Microsoft’s valuation.

The upside is compelling: integrated AI experiences that reduce time to market, a broad compliance and hybrid story that wins regulated workloads, and a partnership model that brings leading foundation models into enterprise contexts. The risks are concrete too: elevated capex cycles, regulatory scrutiny over bundling, competitive pressure from other hyperscalers, and the operational challenges of scaling GPU‑heavy AI infrastructure.

For enterprises, Azure is a pragmatic platform to operationalize AI quickly and securely. For investors, Microsoft’s Azure story supports a long‑term compounder thesis, but the premium multiple means patience and careful monitoring of execution and regulatory developments are necessary.
