Microsoft Azure is not universally “down” today. However, last week’s high‑impact Azure Front Door disruption, which began on October 29, produced a broad, multi‑hour outage across Microsoft 365, Xbox services, the Azure Portal and thousands of customer sites. The technical aftershocks and industry debate from that incident are still shaping how enterprises answer the simple question “Is Azure down?” on November 3, 2025.

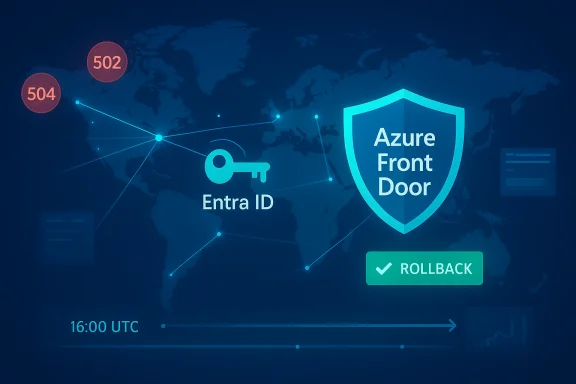

Source: DesignTAXI Community Is Microsoft Azure down? [November 3, 2025]

Background / Overview

In plain terms, the visible outage that alarmed users and administrators around the world was rooted in a control‑plane configuration change to Azure Front Door (AFD), Microsoft’s global Layer‑7 edge and application delivery fabric. The change caused inconsistent routing, DNS/TLS anomalies and capacity loss at a subset of AFD Points‑of‑Presence (PoPs), which in turn interfered with token issuance and portal rendering for services that depend on Microsoft Entra ID (formerly Azure AD). Microsoft’s immediate corrective action was to block further AFD configuration changes, roll back to a previously validated “last known good” configuration, fail the Azure Portal away from Front Door where possible, and recover edge nodes while rebalancing traffic. Those mitigation actions restored most services over several hours. This article explains what happened, why it looked like everything was down, how Microsoft mitigated the problem, the real impact for customers and admins, and the practical resilience steps organizations should adopt. Where public claims are imprecise or still unverified, those points are flagged for caution.

Why a single edge change can look like a total outage

The role of Azure Front Door and Entra ID

Azure Front Door is not merely a CDN; it performs global TLS termination, host and header mappings, Web Application Firewall enforcement, global HTTP(S) routing, and origin failover. Many Microsoft first‑party services, including Microsoft 365 web apps, the Azure Portal and parts of Xbox/Minecraft identity flows, use AFD as the public ingress path. Separately, Microsoft Entra ID is the authentication and token issuance control plane used across Microsoft 365, Xbox services and many Azure management surfaces.

When a global ingress fabric like AFD suffers a configuration regression, client requests can fail at the edge before reaching origin systems, and token issuance or sign‑in flows can be interrupted. From the end‑user perspective this appears identical to a back‑end crash: portals won’t render, sign‑ins fail, and 502/504 gateway errors appear. That architectural coupling (edge routing plus centralized identity) is the core reason a single misapplied change produced a broad, cross‑product outage.
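To make the coupling concrete, here is a minimal Python sketch that computes the “blast radius” of a failed component by walking a dependency graph in reverse. The graph is a hypothetical simplification: component names such as `edge_fabric` and `identity_tokens` are invented for illustration and do not describe Microsoft’s actual topology. It only shows how one edge fault can reach services that never call the edge directly, while the origin itself stays healthy.

```python
from collections import deque

# Hypothetical dependency graph: each service lists the components it needs.
# Illustrative only; this is not Microsoft's real architecture.
DEPENDS_ON: dict[str, list[str]] = {
    "edge_fabric": [],
    "identity_tokens": ["edge_fabric"],
    "management_portal": ["edge_fabric", "identity_tokens"],
    "web_productivity_app": ["edge_fabric", "identity_tokens"],
    "game_sign_in": ["identity_tokens"],  # never calls the edge directly
    "third_party_site": ["edge_fabric", "origin_backend"],
    "origin_backend": [],
}


def impacted(failed: set[str], graph: dict[str, list[str]]) -> set[str]:
    """Return the failed components plus everything that transitively needs them."""
    # Reverse the edges: component -> services that depend on it.
    dependents: dict[str, list[str]] = {node: [] for node in graph}
    for service, deps in graph.items():
        for dep in deps:
            dependents.setdefault(dep, []).append(service)

    # Breadth-first walk outward from the failed components.
    seen = set(failed)
    queue = deque(failed)
    while queue:
        node = queue.popleft()
        for service in dependents.get(node, []):
            if service not in seen:
                seen.add(service)
                queue.append(service)
    return seen


if __name__ == "__main__":
    # One edge fault reaches every service, yet the origin itself is untouched.
    print(sorted(impacted({"edge_fabric"}, DEPENDS_ON)))
```

Note that `game_sign_in` appears in the result only because of its identity dependency, which mirrors how sign‑in failures spread to services that do not sit behind the edge themselves.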

Why user reports spiked so fast

Public outage aggregators and social feeds registered very rapid spikes in user complaints after the AFD regression began. That’s typical: when a control‑plane failure hits a global fabric, it affects many endpoints at once and triggers high volumes of symptom reports from disparate user populations (gamers, enterprise admins, retail customers). These crowd signals are useful to show scope but are not substitutes for provider telemetry. Reported peak counts vary significantly by tracker and methodology; treat them as directional rather than exact.

What happened: concise, verified timeline

Detection and public surfacing

- At approximately 16:00 UTC on October 29, 2025, Microsoft’s telemetry and third‑party monitors began registering elevated packet loss, DNS anomalies and HTTP gateway errors for AFD‑fronted endpoints. External observability vendors also detected edge timeouts and error spikes.

- Public outage trackers and social platforms quickly showed big increases in reports for Azure, Microsoft 365 and related services; gaming authentication flows (Xbox/Minecraft) and the Azure Portal were especially visible victims.

Mitigation actions Microsoft took

- Blocked further AFD configuration changes to stop the faulty state from spreading.

- Deployed a rollback to the most recent validated configuration (a “last known good” state).

- Failed the Azure Portal away from AFD where possible so administrators could regain management access.

- Recovered and restarted orchestration units and edge nodes, then rebalanced traffic to healthy PoPs.

- Continued rolling, node‑by‑node restoration to avoid re‑triggering the failure and to manage capacity.
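The containment sequence above (freeze changes, roll back to the last known good configuration, then restore capacity gradually) can be modeled in a few lines. The following Python sketch is a hypothetical illustration: `ConfigStore`, `freeze_and_rollback` and `recovery_batches` are invented names, and real control planes add validation, replication and auditing that are omitted here.

```python
from dataclasses import dataclass, field


class ChangeFrozenError(RuntimeError):
    """Raised when a configuration push is attempted during a freeze."""


@dataclass
class ConfigStore:
    """Hypothetical store tracking the active and last-known-good config."""
    active: dict
    last_known_good: dict
    frozen: bool = False
    history: list = field(default_factory=list)

    def push(self, candidate: dict) -> None:
        """Apply a new configuration unless changes are frozen."""
        if self.frozen:
            raise ChangeFrozenError("configuration changes are blocked")
        self.history.append(self.active)
        self.active = dict(candidate)

    def mark_good(self) -> None:
        """Promote the active configuration after it passes validation."""
        self.last_known_good = dict(self.active)

    def freeze_and_rollback(self) -> dict:
        """Block further changes, then restore the last-known-good config."""
        self.frozen = True
        self.history.append(self.active)
        self.active = dict(self.last_known_good)
        return self.active


def recovery_batches(nodes: list[str], batch_size: int) -> list[list[str]]:
    """Split nodes into small batches so capacity returns gradually."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    return [nodes[i:i + batch_size] for i in range(0, len(nodes), batch_size)]


if __name__ == "__main__":
    store = ConfigStore(active={"route": "v1"}, last_known_good={"route": "v1"})
    store.push({"route": "v2"})         # the faulty change goes out
    print(store.freeze_and_rollback())  # back to {'route': 'v1'}; pushes now raise
    for batch in recovery_batches(["pop-a", "pop-b", "pop-c"], 2):
        print("restoring", batch)       # restore a batch, check health, continue
```

Making the freeze a hard error rather than a warning is the design choice that matters in this sketch: it keeps a concurrent pipeline from re‑applying the bad state while the rollback converges.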

Duration and residual effects

The main disruption window was measured in hours rather than days, but a long tail of intermittent failures is typical after a global edge rollback. DNS caching, client caches and ISP propagation can leave some users seeing errors well after the underlying control‑plane state is corrected. That tail effect means “restored for most customers” is a different operational milestone from “completely converged for every tenant.”

Who and what were impacted

First‑party Microsoft services that reported visible disruption

- Microsoft 365 web applications (Outlook on the web, Office for the web)

- Microsoft 365 admin center and Azure Portal (blank or partially rendered blades)

- Teams web sessions and meeting entry points

- Xbox Live authentication, Game Pass storefronts, and Minecraft authentication/Realms

- Copilot integrations that rely on portal or identity flows

Downstream customer sites and industries affected

Many third‑party websites and mobile apps that use AFD for edge routing showed 502/504 gateway errors or timeouts. High‑visibility impacts were reported at airlines, retailers and banking portals where AFD fronted public endpoints and check‑in or payment flows were interrupted. Airlines reported check‑in and boarding pass issues in some regions. These downstream customer impacts magnified public attention beyond Microsoft’s first‑party services.

Scale: how severe was it?

Public trackers reported spikes in user incidents numbering in the tens of thousands at peak; different trackers and outlets produced different peak numbers. Independent internet‑observability vendors confirmed global edge timeouts and node failures consistent with a control‑plane misconfiguration. Because public outage counts are aggregated from user submissions and monitoring probes with different sampling regimes, they should be used to gauge scope rather than to calculate precise numbers for contractual claims.

Technical anatomy: how a control‑plane change cascaded

What a “configuration change” to AFD can touch

A single misapplied configuration affecting AFD can alter:

- DNS mappings and host header routing,

- TLS certificate mappings and SNI behavior,

- Health‑check and origin routing rules,

- WAF policy attachments and rewrites.
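One way to make that breadth visible before a change ships is to diff the candidate configuration against the last known good one and report which areas it touches. The Python sketch below assumes a simple nested‑dictionary layout with hypothetical top‑level sections (`dns`, `tls`, `health_probes`, `waf`) that echo the list above; it is not the AFD configuration schema.

```python
def changed_paths(old: dict, new: dict, prefix: str = "") -> list[str]:
    """Return dotted paths whose values differ between two nested dicts."""
    paths: list[str] = []
    for key in sorted(set(old) | set(new)):
        path = f"{prefix}{key}"
        a, b = old.get(key), new.get(key)
        if isinstance(a, dict) and isinstance(b, dict):
            # Recurse into sections present on both sides.
            paths.extend(changed_paths(a, b, path + "."))
        elif a != b:
            # Added, removed, or modified leaf value.
            paths.append(path)
    return paths


def touched_sections(old: dict, new: dict) -> set[str]:
    """Top-level sections a change affects; more sections means wider impact."""
    return {p.split(".", 1)[0] for p in changed_paths(old, new)}


if __name__ == "__main__":
    # Hypothetical configurations for illustration only.
    last_known_good = {
        "dns": {"www": "edge-a"},
        "tls": {"www": "cert-1"},
        "health_probes": {"path": "/health"},
        "waf": {"policy": "p1"},
    }
    candidate = {
        "dns": {"www": "edge-b"},
        "tls": {"www": "cert-2"},
        "health_probes": {"path": "/health"},
        "waf": {"policy": "p1"},
    }
    print(changed_paths(last_known_good, candidate))             # ['dns.www', 'tls.www']
    print(sorted(touched_sections(last_known_good, candidate)))  # ['dns', 'tls']
```

A deployment gate could, for example, require extra review when one change touches more than one section; that threshold would be a local policy choice rather than anything the incident reports prescribe.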

Why automated safeguards can still be bypassed in practice

Large control planes rely on automation, canaries and gating rules. But complex operational workflows and cleanup operations sometimes require human intervention, and a misapplied manual step or a latent automation bug can propagate bad state quickly. The pattern of the October 29 event (a configuration change that reached parts of the fleet and then propagated widely) mirrors prior AFD incidents and shows how fragile global rollouts are unless they are gated very conservatively. Public technical accounts caution that the precise causal mechanics should be verified against Microsoft’s formal post‑incident review.

How Microsoft communicated: strengths and weaknesses

What Microsoft did well

- Microsoft acknowledged the incident publicly on its status channels and provided progressive mitigation updates, which reduced speculation and gave admins actionable interim guidance.

- The containment playbook was appropriate: freeze rollouts, rollback to validated state, fail critical management portals away from the affected fabric, and recover nodes gradually. These are standard, conservative choices that prioritize avoiding oscillation or re‑triggering the failure.

What raised concerns

- Customers complained that status surfaces and some regional signals initially lagged the actual user experience, creating a disconnect between what operators saw and what end users experienced.

- Multiple commentators and enterprise customers have asked for a detailed post‑incident review (PIR) with commit hashes, exact configuration diffs and a clear timeline. Microsoft has historically answered such requests, but until its PIR is published, any fine‑grained technical assertions should be treated as provisional.

Immediate action plan for administrators (what to do right now)

If your organization was affected, prioritize the following steps in this order:

- Record the incident window for your tenant, including timestamps (UTC), error codes, HTTP response patterns and affected geographies.

- Export and preserve diagnostics: network traces, application logs, conditional access failures, and Entra ID/STS logs.

- Open a Microsoft support case referencing your tenant ID and include the preserved logs. Request a Post Incident Review (PIR) and a timeline from your Microsoft account team.

- Assess whether public endpoints require multi‑path fronting (secondary CDNs, DNS failovers) or shorter DNS TTLs for faster recovery.

- Test non‑portal management flows now — service principals, CLI scripts, managed runbooks — and ensure at least one programmatic path can operate during portal outages.

- Ensure service principals and automation have least‑privilege tokens for emergency tasks.

- Confirm DNS TTLs are not so long that rollbacks leave clients on stale mappings.

- Implement synthetic monitoring that checks both edge and origin paths separately.
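For the first step (recording the incident window), a small script over your own request logs can produce the UTC start and end of the failure period, the error‑code mix and the affected regions. The Python sketch below assumes a hypothetical record format of `(ISO‑8601 UTC timestamp, HTTP status, region)`; the sample records are invented, and real log schemas will need their own parsing.

```python
from collections import Counter
from datetime import datetime

# Invented sample records: (ISO-8601 UTC timestamp, HTTP status, region).
SAMPLE_LOGS = [
    ("2025-01-01T15:58:00+00:00", 200, "westeurope"),
    ("2025-01-01T16:05:00+00:00", 502, "westeurope"),
    ("2025-01-01T16:40:00+00:00", 504, "eastus"),
    ("2025-01-01T17:10:00+00:00", 502, "eastus"),
    ("2025-01-01T17:30:00+00:00", 200, "eastus"),
]


def incident_summary(records: list[tuple[str, int, str]]) -> dict:
    """Summarize 5xx responses: UTC window, count per status code, regions."""
    failures = [
        (datetime.fromisoformat(ts), status, region)
        for ts, status, region in records
        if 500 <= status <= 599
    ]
    if not failures:
        return {"start": None, "end": None, "codes": {}, "regions": []}
    times = [t for t, _, _ in failures]
    return {
        "start": min(times).isoformat(),
        "end": max(times).isoformat(),
        "codes": dict(Counter(status for _, status, _ in failures)),
        "regions": sorted({region for _, _, region in failures}),
    }


if __name__ == "__main__":
    print(incident_summary(SAMPLE_LOGS))
```

Keep the raw logs alongside the summary: the summary is a convenience for the support case, while the raw records are the evidence.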

Longer‑term lessons and procurement implications

Architectural lessons

- Avoid single‑path exposure for critical public endpoints. Where downtime costs are high, design multi‑path ingress so origin services can be reached if one edge fabric misbehaves.

- Decentralize highly critical identity paths where possible. Consider regional token caches or validated fallback identity flows under tightly controlled guardrails.

- Strengthen canarying and gating. Changes that touch global control planes should require incremental propagation with verifiable rollback gates and automated safety interlocks.
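The gating idea in the last point can be sketched as a staged rollout that widens exposure only while a health gate passes and rolls back automatically when it fails. In this Python sketch the stage fractions, the 1% error‑rate gate and the `apply`/`error_rate`/`rollback` callbacks are hypothetical; a production system would also wait for metrics to settle between stages.

```python
from typing import Callable

# Hypothetical stage sizes: fraction of the fleet that runs the change.
STAGES = (0.01, 0.05, 0.25, 1.0)


def staged_rollout(
    fleet: list[str],
    apply: Callable[[str], None],
    error_rate: Callable[[list[str]], float],
    rollback: Callable[[list[str]], None],
    max_error_rate: float = 0.01,
) -> bool:
    """Widen a change stage by stage; stop and roll back if a gate fails."""
    deployed: list[str] = []
    for fraction in STAGES:
        target = max(1, int(len(fleet) * fraction))
        for node in fleet[len(deployed):target]:
            apply(node)
            deployed.append(node)
        # Gate: judge only the nodes already running the new configuration.
        if error_rate(deployed) > max_error_rate:
            rollback(deployed)
            return False
    return True


if __name__ == "__main__":
    fleet = [f"pop-{i}" for i in range(100)]
    reverted: list[str] = []
    # Simulate a bad change: every node that receives it reports 20% errors.
    ok = staged_rollout(fleet, lambda node: None, lambda nodes: 0.2, reverted.extend)
    print(ok, reverted)  # False ['pop-0']: caught at the 1% canary stage
```

The important property is the cap on exposure: a change that breaks everything it touches is caught while it affects one node in a hundred, instead of propagating fleet‑wide.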

Contractual and regulatory considerations

- Enterprises should re‑examine SLA language to understand what evidence is needed for claims and to demand greater transparency around change control and post‑incident reporting.

- Regulators and industry bodies may intensify scrutiny of hyperscaler concentration risk when public‑facing critical infrastructure (airlines, government services) experiences service disruption as a result of a provider control‑plane regression.

What to watch for next (verification and accountability)

- Microsoft’s formal Post Incident Review (PIR): the definitive source for root cause specifics, exact configuration diffs, and procedural changes.

- Provider commitments: look for changes to deployment gating, stronger non‑bypassable validations, and improved canarying discipline for global control‑plane updates.

- Customer tooling: Microsoft may publish recommended architecture patterns and tooling to reduce dependence on a single AFD boundary for mission‑critical endpoints.

Until Microsoft publishes its PIR, some technical attributions circulating in community posts remain provisional; they should be treated as well‑supported reconstructions rather than definitive facts.

Practical guidance for Windows and Azure administrators

Short checklist (operational resilience)

- Document the event and conserve logs (tenant ID, diagnostic packages).

- Verify ability to manage resources via automation (PowerShell, CLI) without relying on the Azure Portal.

- Establish a secondary ingress option (Traffic Manager + alternate CDN) for customer‑facing services.

- Reduce DNS TTLs for services that require rapid failover and test DNS rollover procedures with your ISPs.

- Exercise tabletop drills that simulate identity and edge fabric failures.
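Two items in this checklist, a secondary ingress path and shorter DNS TTLs, interact: DNS‑based failover only helps once resolvers stop serving the cached answer. The Python sketch below picks the healthy ingress with the best priority (similar in spirit to priority‑based DNS routing such as Traffic Manager’s Priority method, but not its implementation) and estimates a rough worst‑case cutover time. Endpoint names, probe settings and TTLs are hypothetical, and the estimate ignores resolvers or clients that do not honor TTLs.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Ingress:
    """A hypothetical public entry point for the same origin."""
    name: str
    priority: int  # lower number = preferred
    healthy: bool


def pick_ingress(options: list[Ingress]) -> Optional[Ingress]:
    """Return the healthy ingress with the best (lowest) priority, if any."""
    healthy = [o for o in options if o.healthy]
    return min(healthy, key=lambda o: o.priority) if healthy else None


def worst_case_cutover_s(probe_interval_s: int, failures_to_trip: int,
                         dns_ttl_s: int) -> int:
    """Rough upper bound on seconds before TTL-honoring clients switch paths.

    Time to declare the primary unhealthy, plus one full TTL for a resolver
    that cached the old answer just before the DNS record changed.
    """
    return probe_interval_s * failures_to_trip + dns_ttl_s


if __name__ == "__main__":
    paths = [
        Ingress("primary-edge", 1, healthy=False),
        Ingress("secondary-cdn", 2, healthy=True),
        Ingress("direct-origin", 3, healthy=True),
    ]
    print(pick_ingress(paths).name)           # secondary-cdn
    print(worst_case_cutover_s(30, 3, 300))   # 390 seconds with a 5-minute TTL
    print(worst_case_cutover_s(30, 3, 3600))  # 3690 seconds with a 1-hour TTL
```

In this model the TTL term dominates: dropping it from an hour to five minutes shrinks the worst case far more than faster probing does. The same bound also explains the long tail after a provider‑side rollback, since cached answers persist until they expire.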

Recommended monitoring and testing

- Deploy synthetic checks for:

  - Edge health (AFD‑fronted endpoint success/failure),

  - Origin reachability bypassing the edge (direct-to-origin checks),

  - Entra ID token issuance and refresh behavior under simulated edge failures.

- Log and alert on unusual spikes in 5xx responses, TLS failures, or token error codes; those often precede visible portal outages.
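Checking edge and origin paths separately pays off when interpreting failures. This Python sketch maps a pair of synthetic probe results to the most likely failing layer and flags a 5xx spike against a baseline. Probe results are passed in as plain values so the logic stays testable; the 5× factor, 1% floor and 100‑request minimum are hypothetical defaults, not recommendations.

```python
def diagnose(edge_ok: bool, origin_ok: bool) -> str:
    """Map paired probe results to the most likely failing layer."""
    if edge_ok and origin_ok:
        return "healthy"
    if origin_ok:
        # Origin answers directly but the edge-fronted path fails.
        return "edge/ingress problem: origin reachable directly"
    if edge_ok:
        # Edge succeeds but the direct probe fails: check allow-lists or caching.
        return "direct-origin path problem: edge may be serving cached content"
    return "origin or shared dependency (for example identity or DNS) problem"


def is_5xx_spike(total: int, errors_5xx: int, baseline_ratio: float,
                 factor: float = 5.0, floor: float = 0.01,
                 min_requests: int = 100) -> bool:
    """Alert when the 5xx ratio exceeds max(factor x baseline, floor)."""
    if total < min_requests:
        return False  # too little traffic to judge reliably
    return errors_5xx / total > max(baseline_ratio * factor, floor)


if __name__ == "__main__":
    print(diagnose(edge_ok=False, origin_ok=True))
    print(is_5xx_spike(total=1000, errors_5xx=200, baseline_ratio=0.002))  # True
```

The floor keeps a near‑zero baseline from turning every stray 502 into a page, while the minimum request count avoids alerting on a handful of probes.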

Risks and criticisms worth heeding

- Concentration risk: centralizing identity and global ingress provides efficiency but increases systemic risk; organizations must balance simplicity against resilience.

- Communication lag: misaligned or delayed status updates exacerbate operational confusion. Providers and customers should agree on improved signal semantics in multi‑tenant incidents.

- Residual legal exposure: accurate tenant telemetry is critical for any SLA claim; public outage counts are not a substitute for provider audit data.
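Because SLA claims rest on measured downtime, it helps to compute uptime from your own telemetry in the form the SLA uses. Many Azure SLAs express monthly uptime as (maximum available minutes − downtime) ÷ maximum available minutes; treat that as an assumption here and check the specific service’s SLA text for what counts as downtime. The Python sketch also merges overlapping outage intervals so that parallel probes do not double‑count the same minutes; the interval values are hypothetical.

```python
def merged_downtime(intervals: list[tuple[float, float]]) -> float:
    """Total minutes covered by (start, end) intervals, overlaps counted once."""
    total = 0.0
    cur_start = cur_end = None
    for start, end in sorted(intervals):
        if cur_end is None or start > cur_end:
            # Gap before this interval: close out the current run.
            if cur_end is not None:
                total += cur_end - cur_start
            cur_start, cur_end = start, end
        else:
            # Overlapping or touching: extend the current run.
            cur_end = max(cur_end, end)
    if cur_end is not None:
        total += cur_end - cur_start
    return total


def monthly_uptime_pct(days_in_month: int, downtime_minutes: float) -> float:
    """Uptime % = (max available minutes - downtime) / max available minutes."""
    max_minutes = days_in_month * 24 * 60
    if not 0 <= downtime_minutes <= max_minutes:
        raise ValueError("downtime must be between 0 and the month's minutes")
    return 100.0 * (max_minutes - downtime_minutes) / max_minutes


if __name__ == "__main__":
    # Hypothetical outage windows, in minutes from the start of the month.
    windows = [(0, 60), (30, 120), (200, 260)]
    down = merged_downtime(windows)  # 180.0, not the naive sum of 210
    print(down, round(monthly_uptime_pct(30, down), 3))
```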

Final assessment and conclusion

Answering the question that motivated the DesignTAXI community thread on November 3: Microsoft Azure is not globally down today. The major disruption began on October 29, and Microsoft’s mitigation (freeze, rollback, node recovery and traffic rebalancing) restored service for most customers within hours. However, the incident exposed a structural fragility in the modern cloud model: when a widely used global edge fabric and a centralized identity plane are tightly coupled, a single control‑plane regression can cascade into broad, multi‑industry disruption. Independent observability vendors and reputable news outlets corroborate Microsoft’s public narrative that an inadvertent Azure Front Door configuration change was the proximate trigger and that a rollback was the corrective action.

For administrators and procurement teams the takeaways are urgent and practical: document and preserve tenant evidence, demand post‑incident transparency, test non‑portal management paths, and design multi‑path public ingress for high‑criticality endpoints. For cloud providers the required work is operational: stronger deployment gating, verifiable canary isolation and clearer post‑incident reporting are necessary to rebuild and sustain customer trust.

This episode cannot be reduced to a one‑line assignment of blame; it is an operational case study. The immediate symptoms have subsided, but the industry discussion it sparked about concentration risk, change control and post‑incident transparency will persist, and organizations that ignore these lessons risk being caught unprepared the next time a global edge fabric hiccups.

Appendix: Quick references for admins (what to check now)

- Preserve tenant logs and open a Support case with Microsoft including tenant ID.

- Verify programmatic management paths (Azure CLI, PowerShell) and service principal access.

- Check DNS TTLs for public endpoints; consider lowering temporarily for rapid failover testing.

- Audit dependent services for single‑provider ingress reliance and plan secondary paths where downtime risk is not acceptable.
