Azure IaaS is making a familiar but increasingly urgent argument: resilience can no longer be treated as a premium add-on or an after-hours disaster-recovery drill. In Microsoft’s latest framing, the platform’s value is not just raw compute, storage, and networking, but the way those layers are designed to help critical applications survive hardware faults, maintenance windows, zonal disruptions, and regional incidents with less business impact. That message lands at a moment when enterprise IT leaders are being asked to do more than simply “move to the cloud”; they are being asked to make continuity an operating assumption.

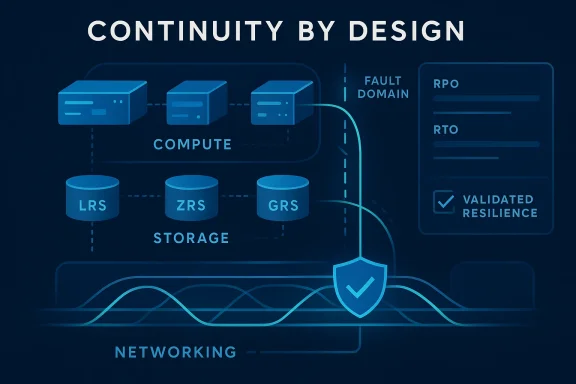

Source: Microsoft Azure, “Azure IaaS: Keep critical applications running with built-in resiliency at scale” | Microsoft Azure Blog

Overview

Microsoft’s Azure IaaS pitch is straightforward on the surface, but the implications are broader than a typical product marketing refresh. Azure says its infrastructure stack is built to support enterprise-proven resiliency across compute, storage, and networking, with the goal of keeping workloads available through disruption rather than merely helping them recover afterward. The company is also tying that story to a resource center model, a sign that resiliency has become not just a product capability but a guided operating discipline. (azure.microsoft.com)

The timing matters because cloud resilience has shifted from theoretical architecture talk to board-level operational risk. A wave of outages over the past year has reminded enterprises that even highly abstracted cloud systems still depend on concrete failure domains, routing paths, and replication choices. Microsoft’s own recent reliability guidance says resiliency must be intentional, measurable, and continuously validated, not assumed through default redundancy alone. (azure.microsoft.com)

What makes this announcement more interesting is that it is not about one product or one feature. It is about how compute isolation, data durability, traffic steering, and recovery automation must work together if an application is truly mission critical. That is a more mature message than the old cloud-era promise that simply “hosting it elsewhere” solves continuity. It reflects a market in which customers increasingly understand that architecture decisions still decide outage outcomes. (azure.microsoft.com)

At the center of the story is the shared-responsibility reality that Microsoft has been emphasizing more aggressively. Azure can provide the platform foundation, but customers still have to place workloads well, configure redundancy correctly, and validate failover behavior in their own environments. In practical terms, that means the difference between a resilient architecture and a fragile one is often not the cloud service itself, but how the service is combined, tested, and operated. (azure.microsoft.com)

Why resiliency has become a design principle

For years, many organizations treated resiliency as a separate discipline from application design. They built the application first, then layered on backups, failover, and disaster recovery later, often as a compliance exercise or a policy box to check. Microsoft’s current messaging pushes hard against that habit by insisting that resilience belongs in the design phase, where tradeoffs around failure domains, data replication, and routing behavior can be made intentionally rather than retrofitted under pressure. (azure.microsoft.com)

This change in thinking matters because the modern cloud estate is not one application with one database and one front end. It is usually a web of microservices, APIs, identity dependencies, data stores, load balancers, and external integrations. A weakness in any one layer can become a customer-visible outage, and the more distributed the system becomes, the more valuable it is to define resiliency as a property of the whole workload rather than a checklist for isolated resources. (azure.microsoft.com)

From failure recovery to continuity engineering

The practical shift is from asking, “How fast can we recover?” to asking, “How much disruption can we absorb before users feel it?” That is a significant difference. The first question is about recovery time, while the second is about continuity under stress, and those are not interchangeable in enterprise operations. (azure.microsoft.com)

Microsoft’s reliability guidance also makes room for a subtle but important point: not all disruptive conditions are faults in the classic sense. Demand spikes can threaten service availability even when infrastructure is healthy, which means autoscaling and elastic distribution are part of resiliency, not just performance tuning. In other words, resilience increasingly includes the ability to adapt to load changes without overreacting or collapsing under pressure. (azure.microsoft.com)

- Resilience is now architectural, not cosmetic.

- Validation matters as much as configuration.

- Workload behavior is the real metric, not checkbox redundancy.

- Continuity is the true business objective, not just uptime statistics.

- Recoverability must be measurable against the application, not the VM.
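
The point about adapting to load without overreacting can be made concrete with a small sketch. This is not Azure's autoscale engine; the function name `desired_instances`, the CPU thresholds, and the instance limits are all assumptions chosen for illustration. The gap between the scale-out and scale-in thresholds acts as a dead band, so moderate load changes leave the fleet alone.

```python
def desired_instances(
    current: int,
    cpu_percent: float,
    scale_out_at: float = 70.0,  # hypothetical threshold
    scale_in_at: float = 30.0,   # hypothetical threshold
    minimum: int = 2,            # hypothetical floor
    maximum: int = 10,           # hypothetical ceiling
) -> int:
    """Return the next instance count for a simple threshold-based rule."""
    if cpu_percent > scale_out_at:
        target = current + 1      # add capacity under sustained pressure
    elif cpu_percent < scale_in_at:
        target = current - 1      # release capacity when load is low
    else:
        target = current          # inside the dead band: do nothing
    # Clamp so the rule can neither collapse the fleet nor grow without limit.
    return max(minimum, min(maximum, target))


for load in (85.0, 50.0, 10.0):
    print(load, desired_instances(3, load))
```

A real policy would also consider cooldown periods and metrics other than CPU; the sketch only shows why the band between thresholds matters.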

Application-level thinking wins

Azure’s latest guidance repeatedly pushes teams to assess resiliency at the application level, because customers experience outages as broken workflows, not as missing disks or downed hosts. That is an important framing change. It forces architects to ask whether the complete user journey can survive a zone loss, a traffic surge, or a dependency outage, rather than assuming that any single healthy component guarantees service. (azure.microsoft.com)

This also explains why Microsoft is now packaging guidance into a resource center and not just scattered product pages. Enterprise customers want more than product documentation; they want decision trees, architecture patterns, and remediation guidance. The resource-center approach acknowledges that resilience is partly an education problem, especially for teams managing legacy workloads that were never originally designed for cloud-native failure isolation. (azure.microsoft.com)

Compute resilience: placement, isolation, and automatic recovery

Compute is usually the first layer people think about when they hear “high availability,” but Azure’s model is more nuanced than simply running multiple virtual machines. The platform is built around the idea that placement matters: if all the instances of a workload are too tightly clustered, a localized problem can have a larger blast radius than expected. That is why Azure keeps pointing customers toward availability zones, fault-domain-aware distribution, and scale sets for workloads that must stay online. (azure.microsoft.com)

Availability zones are especially central to this story. Microsoft’s documentation says each zone has independent power, cooling, and networking, which means a zone outage does not automatically take the whole region down. For architects, the lesson is obvious but still easy to overlook: zone-aware design is no longer exotic best practice; it is becoming the baseline for mission-critical systems.

Virtual Machine Scale Sets and zone spread

Azure Virtual Machine Scale Sets are one of the clearest examples of how the platform turns resiliency into an operational pattern. They make it easier to deploy and manage fleets of instances while spreading them across zones and fault domains, which reduces the chance that one infrastructure event eliminates the whole service. That matters most for front-end and stateless tiers, where replacement is easier than repair and where the service can keep functioning as long as enough healthy instances remain. (learn.microsoft.com)

The value of scale sets is not only redundancy but automation. Manual failover is often where resiliency plans break down, because the organization assumes that people will act quickly and consistently under stress. Automated placement and replacement remove some of that human variance, which is precisely why cloud platforms are such an appealing home for continuity-sensitive workloads. (azure.microsoft.com)

- Availability zones isolate workloads within a region.

- Scale sets make distributed compute operationally manageable.

- Stateless tiers benefit most from instance replacement.

- Fault domains reduce correlated failure risk.

- Automation lowers the odds of recovery drift.
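
The zone-spread idea in this section can be illustrated with a small, platform-agnostic sketch. It does not reproduce how scale sets actually place instances; the function names `spread_across_zones` and `surviving_instances` and the zone labels are assumptions made for illustration. It assigns instances round-robin across zones and counts how many remain if one zone is lost.

```python
from collections import Counter


def spread_across_zones(instance_count: int, zones: list[str]) -> list[str]:
    """Assign each instance to a zone round-robin (illustrative only)."""
    if not zones:
        raise ValueError("at least one zone is required")
    return [zones[i % len(zones)] for i in range(instance_count)]


def surviving_instances(placement: list[str], failed_zone: str) -> int:
    """Count instances that are not located in the failed zone."""
    return sum(1 for zone in placement if zone != failed_zone)


# Hypothetical fleet: six instances over three zones.
placement = spread_across_zones(6, ["zone-1", "zone-2", "zone-3"])
print(Counter(placement))
print(surviving_instances(placement, "zone-2"))
```

With an even spread, losing one of three zones removes a third of the fleet, so the remaining instances must be sized to carry the full load if the service is to stay healthy.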

Why compute alone is not enough

Still, compute resiliency cannot save a workload if the data layer or routing layer becomes the bottleneck. That is why Microsoft’s recent guidance explicitly prioritizes networking first, then storage, then compute when making zone-resiliency decisions. It is a useful reminder that the failure point most visible to users is often not the one admins first think about. (learn.microsoft.com)

The same point applies to maintenance events. A well-designed compute layer can survive hardware faults and localized disruptions, but only if the rest of the stack is prepared to continue serving traffic and preserve state. In practice, that means compute resilience is necessary, but it is never sufficient on its own. (azure.microsoft.com)

Storage resilience: durability, replication, and recovery objectives

If compute is about keeping services running, storage is about making sure the service can come back with its data intact. Microsoft’s framing here is especially important for stateful applications, where failure recovery is not a binary decision between “up” and “down” but a question of how much data loss is acceptable and how much time the business can tolerate before normal operations resume. That is where RPO and RTO stop being abstract acronyms and start becoming architecture drivers.

Azure Storage offers several redundancy models, and each one addresses a different failure scope. LRS keeps multiple copies within a single datacenter, ZRS synchronously replicates across availability zones, and GRS or RA-GRS extend protection into a secondary region. The strategic point is not simply that more copies are better, but that the right redundancy model should match the workload’s business criticality, locality requirements, and recovery expectations. (azure.microsoft.com)
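
One way to reason about matching a redundancy model to a failure scope is a simple lookup, sketched below. The table and the function `options_for` are hypothetical helpers, not part of any Azure SDK. The sketch deliberately simplifies by treating a wider scope as covering narrower ones, which glosses over failover steps and the replication lag of asynchronous geo-replication.

```python
# Failure scopes ordered from narrowest to widest (labels are assumptions).
SCOPES = ["hardware", "zone", "region"]

# Widest failure scope each option is described as addressing in the text above.
WIDEST_SCOPE_COVERED = {
    "LRS": "hardware",   # multiple copies within a single datacenter
    "ZRS": "zone",       # synchronous copies across availability zones
    "GRS": "region",     # copy in a secondary region
    "RA-GRS": "region",  # as GRS, with read access to the secondary
}


def options_for(required_scope: str) -> list[str]:
    """List redundancy options whose coverage reaches the required scope."""
    needed = SCOPES.index(required_scope)
    return [
        name
        for name, scope in WIDEST_SCOPE_COVERED.items()
        if SCOPES.index(scope) >= needed
    ]


print(options_for("zone"))
```

A lookup like this only narrows the candidates; cost, data locality, and acceptable data loss still decide the final choice.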

Storage choice shapes recovery speed

Microsoft’s zone-resiliency guidance makes storage one of the first priorities after networking because operational data stores tend to serve many workloads at once. That means a single storage system can become a systemic dependency. If the store is not resilient, every application relying on it inherits the weakness, no matter how well the compute tier is designed. (learn.microsoft.com)

For managed disks and VM-based workloads, Azure also points to snapshots, Azure Backup, and Azure Site Recovery as part of the broader recovery toolkit. These tools are not merely archival features; they are operational levers that define how quickly an environment can be restored and how much work is required to rebuild a system after an incident. In a real outage, that distinction is everything. (azure.microsoft.com)

- LRS is narrowest in scope but simplest in design.

- ZRS helps protect against zonal failure.

- GRS supports broader geographic continuity.

- Backups reduce the cost of human error and corruption.

- Site Recovery helps move workloads to a healthy region.

Stateful workloads demand harder choices

Stateful systems are where cloud resiliency becomes expensive, in both money and complexity. A stateless web tier can be replaced relatively quickly, but a transaction platform, file system, or database must preserve consistency, integrity, and sequencing. That is why Microsoft stresses that storage is central to the conversation about continuity, not a backend detail to be decided after the application is already live. (azure.microsoft.com)

The implication for enterprises is that the right storage posture is often a business decision, not just an IT one. If the organization cannot tolerate long outages or material data loss, it has to choose more aggressive replication and recovery controls. If the workload is less critical, it can accept simpler storage models and lower cost. Resiliency, in other words, is not free; it is a strategic allocation of risk. (azure.microsoft.com)
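
Because RPO and RTO are business targets, it can help to write them down and compare them against what a recovery drill actually achieved. The sketch below is illustrative only: the class names, the function `objective_gaps`, and every number are hypothetical, and nothing here calls an Azure service.

```python
from dataclasses import dataclass


@dataclass
class RecoveryObjectives:
    rpo_minutes: float  # maximum tolerable data loss, expressed as time
    rto_minutes: float  # maximum tolerable time to restore service


@dataclass
class DrillResult:
    data_loss_minutes: float  # data loss observed in a recovery drill
    restore_minutes: float    # time taken to restore service in the drill


def objective_gaps(target: RecoveryObjectives, observed: DrillResult) -> list[str]:
    """Return the names of the objectives the drill failed to meet."""
    gaps = []
    if observed.data_loss_minutes > target.rpo_minutes:
        gaps.append("RPO")
    if observed.restore_minutes > target.rto_minutes:
        gaps.append("RTO")
    return gaps


# Hypothetical targets and drill measurements for one workload.
target = RecoveryObjectives(rpo_minutes=15, rto_minutes=60)
drill = DrillResult(data_loss_minutes=5, restore_minutes=90)
print(objective_gaps(target, drill))
```

An empty result means the drill met both targets; a non-empty one points to where stronger replication or faster recovery tooling would need to be funded.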

Networking resilience: the hidden layer that keeps workloads reachable

Networking is the invisible layer of availability, and that invisibility is part of why it is so often underappreciated. A workload can be perfectly healthy from a compute and storage perspective and still be effectively unavailable if users cannot reach it. Microsoft’s own guidance now places networking first in zone-resiliency planning, which is a quiet admission that traffic routing is often the difference between a minor interruption and a customer-visible outage. (learn.microsoft.com)

Azure provides multiple routing and distribution options, each suited to a different scope. Azure Load Balancer distributes traffic across healthy instances, Application Gateway adds Layer 7 awareness for web workloads, Traffic Manager routes using DNS across endpoints, and Azure Front Door handles global traffic steering and failover. Together, these services are the connective tissue that turns isolated healthy resources into a reachable application. (azure.microsoft.com)

Traffic flow is resilience in motion

The practical benefit of resilient networking is that traffic can keep flowing when a specific instance, zone, or endpoint fails. Rather than stopping, the application can reroute around the problem and continue serving users from healthy paths. That may sound simple, but in operational terms it is one of the highest-value forms of resilience because it directly affects whether customers notice the issue at all. (azure.microsoft.com)

Microsoft’s guidance also makes it clear that networking resilience is not just a regional issue. For workloads that span multiple regions, traffic management services become the control plane for continuity, deciding where requests go and how failover behaves. That makes networking one of the most policy-sensitive parts of the stack, because small misconfigurations can have broad user-facing consequences. (azure.microsoft.com)

- Load balancing prevents hot spots from becoming outages.

- Layer 7 routing helps web apps make smarter failover decisions.

- DNS-based steering is useful for endpoint-level redundancy.

- Global traffic routing is essential for cross-region designs.

- Network design often decides whether failures are visible to users.
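
The rerouting behavior described in this section can be sketched as priority-based endpoint selection. This is a generic illustration of the pattern, not the implementation of Traffic Manager or Front Door; the `Endpoint` class, the function `pick_endpoint`, and the endpoint names are assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Endpoint:
    name: str
    priority: int   # lower number means preferred
    healthy: bool   # result of the most recent health probe


def pick_endpoint(endpoints: list[Endpoint]) -> Optional[str]:
    """Return the preferred healthy endpoint, or None if none is healthy."""
    candidates = [e for e in endpoints if e.healthy]
    if not candidates:
        return None
    return min(candidates, key=lambda e: e.priority).name


# Hypothetical two-region deployment with the primary marked unhealthy.
endpoints = [
    Endpoint("primary-region", priority=1, healthy=False),
    Endpoint("secondary-region", priority=2, healthy=True),
]
print(pick_endpoint(endpoints))
```

The sketch also shows why probe accuracy is policy-sensitive: a probe that wrongly reports the primary as unhealthy shifts all traffic just as a real failure would.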

Critical infrastructure needs network-first thinking

Microsoft’s prioritization order (networking first, then storage, then compute) may surprise teams that traditionally start with VMs and databases. But it makes sense in a world where so many applications depend on shared network components such as gateways, firewalls, and public IPs. If the traffic path is broken, the rest of the workload might as well be offline. (learn.microsoft.com)

This is especially relevant for hybrid deployments, where network dependencies can extend into on-premises systems and third-party services. In those environments, cloud resiliency is only one part of the answer, and path diversity matters as much as machine diversity. The most robust architectures treat network connectivity as a first-class survivability problem. (learn.microsoft.com)

Migration as a resiliency opportunity

Microsoft is also using the Azure IaaS message to reposition migration as more than a move from one platform to another. The company wants organizations to see migration as the best moment to remove inherited single points of failure, modernize recovery posture, and standardize deployment patterns. That is a compelling argument because migration is often the one time enterprises are willing to revisit architecture from first principles. (azure.microsoft.com)

The reason this matters is simple: cloud migrations frequently fail to deliver full resilience gains when they merely recreate the old infrastructure in a new place. If a workload is lifted and shifted without redesign, then old assumptions about clustered hosts, manual failover, or region-bound storage often come along for the ride. Azure’s current messaging strongly suggests that the cloud is most valuable when it is used as a chance to break those patterns. (azure.microsoft.com)

Infrastructure as code strengthens recovery

The article’s example of Carne Group is revealing because it ties resiliency not just to Azure Site Recovery but to Terraform-based landing zones and duplicate-region readiness. That is the point where resilience becomes repeatable rather than artisanal. Infrastructure as code reduces configuration drift and gives teams a way to rebuild environments with fewer surprises when they need to recover under pressure.

This also helps explain why deployment pipelines and GitHub-based workflows are increasingly part of resiliency planning. If the same templates that create a production environment can also recreate a recovery environment, the organization is much less likely to depend on tribal knowledge during an incident. Automation is not just convenience here; it is continuity insurance. (azure.microsoft.com)

- Terraform and similar IaC tools reduce drift.

- Landing zones standardize recovery-ready environments.

- Site Recovery helps operationalize regional failover.

- CI/CD turns resiliency into a repeatable pattern.

- Templates make rebuilds less dependent on heroics.
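
Configuration drift, mentioned above, is simply a difference between what a template declares and what is actually deployed. The sketch below shows that comparison in its most basic form. It is not how Terraform or any other IaC tool is implemented; the function `find_drift` and the setting names are hypothetical.

```python
def find_drift(desired: dict, actual: dict) -> dict:
    """Map each drifted setting to its (desired, actual) pair of values."""
    keys = desired.keys() | actual.keys()
    return {
        key: (desired.get(key), actual.get(key))
        for key in sorted(keys)
        if desired.get(key) != actual.get(key)
    }


# Hypothetical settings declared in a template versus observed in production.
desired = {"zones": [1, 2, 3], "storage_redundancy": "ZRS", "backup_enabled": True}
actual = {"zones": [1], "storage_redundancy": "ZRS", "backup_enabled": True}
print(find_drift(desired, actual))
```

Here the drifted `zones` value would mean the environment has quietly lost its zone spread, which is exactly the kind of gap that otherwise surfaces only during an incident.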

Migration tools are part of the resilience story

Microsoft is also pointing to migration helpers such as Azure Migrate, Azure Storage Mover, and Azure Data Box as part of the broader path to resilient infrastructure. These tools do not create resilience on their own, but they shape how smoothly organizations can transition into architectures that do. That matters because the smoother the move, the more likely teams are to preserve business continuity while they improve it. (azure.microsoft.com)

The broader implication is that migration is no longer just a technical transfer. In the Azure framing, it is an opportunity to redesign operational recovery, not simply relocate servers. That is a much stronger pitch for enterprises trying to justify the cloud investment beyond capacity and cost. (azure.microsoft.com)

Shared responsibility in practice

Microsoft’s wording around shared responsibility is especially important because it removes one of the most common cloud misconceptions: that the platform vendor is responsible for resilience end-to-end. Azure can provide zone support, redundancy options, and recovery services, but the customer must choose the architecture, set the policy, and validate the result. In practice, this means the platform is only as resilient as the way it is used. (azure.microsoft.com)

This is not a drawback so much as the nature of enterprise infrastructure. Different workloads have different tolerances for loss, and the cloud needs to accommodate that variability rather than forcing a single answer. That flexibility is one of Azure IaaS’s strengths, but it also means customers need stronger internal governance, better application inventories, and more disciplined testing. (learn.microsoft.com)

Enterprise and consumer impact are not the same

For enterprises, the focus is on business continuity, data integrity, compliance, and predictable recovery. The cost of failure is measured in transactions lost, productivity interrupted, and regulatory exposure created. Resilient Azure IaaS design helps here by offering a foundation that can be aligned to strict recovery objectives, but the business still has to define those objectives. (azure.microsoft.com)

For consumers, the impact is more immediate and emotional. They rarely care whether an outage came from a zone failure or a routing error; they care that the app stopped working. That is why the platform’s emphasis on minimizing blast radius and preserving user reachability matters so much. The best resiliency is the kind users never notice. (azure.microsoft.com)

Validation is the missing discipline

Microsoft’s most mature point here may be that configuration is not enough. Teams need drills, fault simulations, observability, and ongoing assessment to make sure the architecture behaves the way they think it will. That is the real test of resiliency, because documented redundancy can still fail if an organization has never actually exercised failover under realistic conditions. (azure.microsoft.com)

The company’s newer resiliency assessment approach reflects that reality by grouping resources into application service groups and tracking posture over time. That model is useful because it turns resilience from a vague ideal into something operationally measurable. If you cannot test it, you do not really own it. (azure.microsoft.com)
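
To show what measuring posture per application might look like, the sketch below groups an inventory by application and reports the share of resources marked zone-resilient. It is a toy model, not Microsoft's assessment tooling; the function `posture_by_group`, the field names, and the sample inventory are all assumptions.

```python
from collections import defaultdict


def posture_by_group(resources: list[dict]) -> dict[str, float]:
    """Return, per application group, the fraction of zone-resilient resources."""
    totals: dict[str, int] = defaultdict(int)
    resilient: dict[str, int] = defaultdict(int)
    for resource in resources:
        group = resource["group"]
        totals[group] += 1
        if resource["zone_resilient"]:
            resilient[group] += 1
    return {group: resilient[group] / totals[group] for group in totals}


# Hypothetical inventory: two applications, two resources each.
inventory = [
    {"group": "checkout", "name": "web-vmss", "zone_resilient": True},
    {"group": "checkout", "name": "orders-storage", "zone_resilient": False},
    {"group": "reporting", "name": "report-vm", "zone_resilient": True},
    {"group": "reporting", "name": "report-db", "zone_resilient": True},
]
print(posture_by_group(inventory))
```

Recording this figure after each change or drill gives a trend line, which is closer to tracking posture over time than a one-off design review.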

Strengths and Opportunities

Microsoft’s Azure IaaS resiliency message has several clear advantages. It is broad enough to matter across workloads, specific enough to be operationally useful, and aligned with the way modern enterprises actually manage infrastructure risk.

- Strong platform breadth: compute, storage, and networking are covered as an integrated stack. (azure.microsoft.com)

- Zone-aware design guidance: the documentation clearly prioritizes availability zones and zonal recovery patterns.

- Operational realism: Microsoft emphasizes application-level resiliency instead of isolated resource health. (azure.microsoft.com)

- Clear recovery tooling: Azure Backup, Site Recovery, snapshots, and redundant storage form a coherent recovery story. (learn.microsoft.com)

- Automation-friendly architecture: infrastructure as code and deployment pipelines fit naturally into the model. (azure.microsoft.com)

- Enterprise alignment: the guidance maps well to recovery objectives, compliance needs, and continuity planning.

- Educational value: the resource-center format can help customers standardize resiliency knowledge across teams. (azure.microsoft.com)

Risks and Concerns

The same model also carries real risks. The biggest one is that organizations may confuse Microsoft’s platform capabilities with guaranteed resilience, when the actual result still depends on architecture, configuration, and validation.

- False confidence: customers may assume built-in features equal end-to-end resilience. (azure.microsoft.com)

- Design complexity: multi-zone and multi-region patterns can become harder to operate than teams expect. (learn.microsoft.com)

- Cost escalation: stronger redundancy and replication usually increase storage and operational costs. (learn.microsoft.com)

- Testing gaps: untested failover plans often fail in real incidents. (azure.microsoft.com)

- Configuration drift: over time, environments can diverge from the resilient design. (azure.microsoft.com)

- Shared-dependency risk: networking and storage layers can become hidden single points of failure. (learn.microsoft.com)

- Migration complacency: lift-and-shift approaches can preserve old weaknesses in a new cloud home. (azure.microsoft.com)

Looking Ahead

The most likely next phase of Azure IaaS resiliency will be less about announcing new abstract concepts and more about making validation easier, faster, and more routine. Microsoft has already signaled that resiliency assessment and posture tracking are becoming part of the platform conversation, and that suggests a future where organizations can monitor not just uptime, but resilience quality itself. That is a meaningful step forward because resilience only becomes actionable when it is measurable. (azure.microsoft.com)

It is also likely that Microsoft will keep pushing customers toward the idea that resiliency and security are increasingly intertwined. In cloud operations, the same architectural discipline that limits blast radius during an outage often limits blast radius during an attack or misconfiguration. The more Azure can present resilience as part of a broader operational posture, the more persuasive the platform becomes to regulated enterprises and mission-critical operators. (azure.microsoft.com)

- More guided resiliency tooling for assessment and validation.

- Expanded zone-aware defaults across key Azure services.

- Greater emphasis on application-level posture dashboards.

- Tighter integration between recovery planning and IaC workflows.

- Continued pressure on customers to test failover, not just design it.
