Microsoft Azure’s statement that it has validated and readied its datacenters for NVIDIA’s new Vera Rubin NVL72 rack-scale AI system marks a major inflection point. Hyperscalers are no longer preparing for incremental GPU upgrades; they are rearchitecting entire racks, networks, and operations to host co-designed, rack-first AI supercomputers that fuse CPUs, GPUs, DPUs, and ultra-high‑bandwidth fabrics.

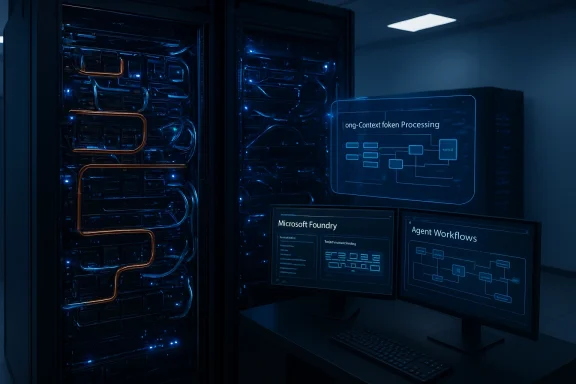

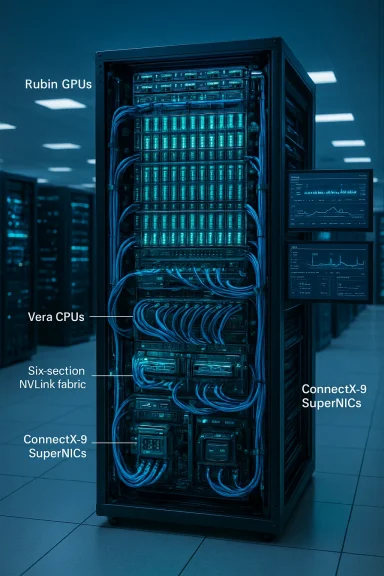


Background / Overview

NVIDIA unveiled the Vera Rubin platform at CES and GTC briefings as a rack-scale architecture intended to make reasoning-class AI models (very large context windows and agentic systems) practical in production datacenters. The core NVL72 building block combines 72 Rubin GPUs, 36 Vera CPUs, ConnectX‑9 SuperNICs, and BlueField‑4 DPUs into a single, coherent system that shares memory and connectivity over a sixth‑generation NVLink fabric. NVIDIA positions this not as a single accelerator but as a purpose-built AI supercomputer per rack.

Microsoft’s Azure team published an engineering-focused post saying Azure datacenters, including newly designed “AI superfactory” sites, have been prepared to host Rubin NVL72 racks at scale and that Azure’s rack architecture already supports the NVLink‑6 bandwidth and topology Rubin requires. Third‑party reporting and vendor briefings suggest Microsoft has gone beyond engineering readiness to early production deployments, with Azure describing large-scale GB300 NVL72 cluster configurations for inference customers. GB300 NVL72 is the Blackwell Ultra generation that precedes Rubin, so those clusters demonstrate rack-scale operating experience rather than Rubin hardware in production. These claims have received broad press coverage and partner confirmations, though some specifics (exact cluster sizes and customer lists) vary by outlet.

What the Vera Rubin NVL72 actually is

A rack, not a GPU

The defining idea behind NVL72 is scale by design. Rather than offering a single, denser GPU, NVIDIA designed a rack‑scale system in which many compute elements (Rubin GPUs and Vera CPUs) are co‑engineered and connected with an extremely high‑bandwidth fabric so the rack behaves like a single accelerator.

Key architecture points repeated across vendor materials and press coverage:

- 72 Rubin GPUs plus 36 Vera CPUs per rack.

- Sixth‑generation NVLink fabric intended to deliver on the order of hundreds of terabytes per second of scale‑up bandwidth (NVIDIA guidance and vendor posts reference ~260 TB/s at rack scale).

- Integrated ConnectX‑9 SuperNICs and BlueField‑4 DPUs to offload networking, telemetry, and confidential‑computing services at wire speed.

- New “context memory” and storage constructs designed to surface large, fast working sets to models that need vast token‑level context.
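As a rough sanity check on the figures in the list above, the rack-level numbers can be broken down per device. This is a minimal sketch that assumes the quoted ~260 TB/s is shared evenly across all 72 GPUs, which is a simplification; the function names are illustrative and not part of any NVIDIA tooling.

```python
# Figures quoted in the vendor guidance above; treat them as approximate.
GPUS_PER_RACK = 72
CPUS_PER_RACK = 36
RACK_NVLINK_TBPS = 260.0  # approximate aggregate scale-up bandwidth in TB/s


def per_gpu_bandwidth_tbps(rack_tbps: float, gpus: int) -> float:
    """Divide aggregate fabric bandwidth evenly across GPUs (a simplification)."""
    return rack_tbps / gpus


def gpus_per_cpu(gpus: int, cpus: int) -> float:
    """Ratio of GPUs to CPUs in the rack."""
    return gpus / cpus


if __name__ == "__main__":
    share = per_gpu_bandwidth_tbps(RACK_NVLINK_TBPS, GPUS_PER_RACK)
    ratio = gpus_per_cpu(GPUS_PER_RACK, CPUS_PER_RACK)
    print(f"Per-GPU share of fabric bandwidth: {share:.2f} TB/s")
    print(f"GPUs per CPU: {ratio:.0f}")
```

Under that even-split assumption each GPU gets roughly 3.6 TB/s of scale-up bandwidth, and every Vera CPU is paired with two Rubin GPUs.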

Rubin GPU and Vera CPU: co‑design matters

NVIDIA’s Rubin GPU family and the Vera CPU are designed to work together, not just sit on the same PCIe bus. Rubin pushes memory capacity (next‑generation HBM and larger die‑stacks reported in vendor coverage), while Vera CPUs offer NVLink‑coherent links so CPU and GPU can share address space and memory semantics much more tightly than traditional server architectures allow. This is the architectural pivot that makes the “rack as single accelerator” promise technically viable.

Microsoft Azure: what “validated” really means

Engineering validation vs. commercial availability

When Microsoft says Azure datacenters are “engineered to support” Rubin NVL72, that carries two separate meanings:

- Infrastructure validation: rack power distribution, liquid cooling headroom, NVLink topology, and network backplane design have been updated and stress-tested to meet NVL72 requirements. Microsoft’s Azure blog explains that Fairwater sites and other large‑scale deployments were architected with Rubin’s bandwidth and topology in mind.

- Operational validation: hardware arrival, firmware testing, scheduling integration, and workload profiling to ensure Rubin racks can be provisioned, monitored, and maintained in production. Third‑party reporting suggests Microsoft has taken steps into operational deployment, with press coverage describing large GB300 NVL72 (Blackwell Ultra generation) clusters used for demanding inference workloads. Those accounts appear to come from vendor briefings and internal Microsoft disclosures and are being repeated by multiple trade outlets.

Is Azure the “first” to validate?

Multiple cloud and service providers have announced Rubin NVL72 support plans (Nebius, other NVIDIA Cloud Partners, and early hyperscaler experimentation). Microsoft’s publicly documented engineering work and press coverage make it a leading, visible validator for large‑scale Rubin deployments. Independent verification of “first” status is tricky because vendors stagger announcements, and many early validations are carried out under NDA with NVIDIA. Practically speaking, Microsoft appears to be among the earliest hyperscalers to publish explicit Rubin readiness engineering documentation and to describe production‑scale trials. Treat “first” as leading public validation rather than an uncontested, singular industry debut.

Technical implications for performance and software

Bandwidth, memory, and model scale

Rubin/NVL72’s most important engineering bet is that memory capacity and low‑latency bandwidth are the gating factors for next‑generation reasoning models, not raw FLOPS alone. By pooling GPU HBM, CPU LPDDR, and high‑bandwidth NVLink interconnects into a unified fabric, NVL72 aims to present much larger working sets to models without the expensive data movement of traditional host–device transfers. NVIDIA and Microsoft say this enables much larger context windows, faster streaming of long token sequences, and improved inference throughput for agentic workloads.

Vendor materials claim the rack provides terabytes of fast memory accessible at fabric speeds, and news reporting ties NVL72 to moves like HBM4 adoption and memory‑centric design. These claims come from NVIDIA, partner briefings, and reporting; independent benchmark data, especially on real LLM workloads at scale, is still limited in public. Until comparative, repeatable benchmarks are published by neutral parties, treat raw capacity and bandwidth numbers as directional but credible engineering indicators.
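To see why memory capacity, rather than FLOPS, can gate context length, it helps to estimate how the key/value cache grows with the context window. The sketch below uses the standard transformer KV-cache sizing formula; the model shape (80 layers, 8 KV heads, head dimension 128, 16-bit values) is a hypothetical example and does not describe any specific model or Rubin benchmark.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_value: int = 2,
                   batch: int = 1) -> int:
    """Estimate KV-cache size for a decoder-only transformer.

    The leading factor of 2 accounts for one key tensor and one value
    tensor stored per layer.
    """
    return (2 * layers * kv_heads * head_dim
            * context_tokens * bytes_per_value * batch)


# Hypothetical model shape, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 80, 8, 128

if __name__ == "__main__":
    for tokens in (8_192, 131_072, 1_048_576):
        gib = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, tokens) / 2**30
        print(f"{tokens:>9} tokens -> {gib:,.1f} GiB of KV cache per sequence")
```

For this assumed shape the cache grows linearly from about 2.5 GiB at 8K tokens to about 320 GiB at roughly one million tokens per sequence, which exceeds the memory of any single accelerator and is the kind of working set a pooled, fabric-attached memory design is meant to hold.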

Software and stack changes

Deploying a rack that behaves like a single accelerator requires substantial changes above the hardware layer:

- Hypervisor and scheduler changes to map tenant workloads onto coherent multi‑die fabrics.

- New device drivers and firmware for NVLink‑6, ConnectX‑9, and BlueField‑4 DPUs.

- Changes to frameworks (PyTorch, TensorFlow, runtime shims) to exploit remote memory semantics and fabric‑coherent allocations.

- Observability, telemetry, and automated repair systems to handle rack‑scale failure modes.

Operational realities: power, cooling, and reliability

The non‑trivial cost of rack‑scale

NVL72 racks are denser, draw more power, and demand more sophisticated cooling than commodity GPU servers. Azure’s reworking of Fairwater and other “AI superfactory” datacenters points to capital investments in power distribution, liquid cooling plumbing, and remote maintenance that many on‑prem customers cannot easily replicate. Expect:

- High upfront capital outlay for hyperscalers and large cloud partners.

- Operational changes: water‑loop service contracts, specialized technicians, and new failure modes tied to tightly coupled fabrics.

- More open questions about spare parts, firmware rollouts, and out‑of‑band management for whole‑rack failover.

Reliability & “zero downtime” ambitions

NVIDIA and partners have highlighted new RAS (reliability, availability, serviceability) features and “zero downtime” maintenance concepts for Vera Rubin racks. These include granular health telemetry, swap‑out strategies for defective blades, and DPU‑centric orchestrations to isolate faults without taking an entire rack offline. Those are promising, but real‑world reliability at scale will only be proven through months of production operation and transparent incident reporting. Until then, claims about continuous operation deserve cautious optimism.

Security and confidential computing

NVL72 brings hardware offloads (DPUs and SuperNICs) into the picture, enabling on‑rack confidential computing primitives. NVIDIA has emphasized an evolution of its confidential computing stack for Rubin that can provide hardware‑anchored attestation, encrypted context memory, and platform isolation. This is attractive for regulated workloads and multi‑tenant inference where data residency and model confidentiality matter.

However, confidential computing at rack scale introduces complexity:

- Attestation chains must cover firmware, DPU, CPU, and GPU microcode.

- Multi‑tenant scheduling needs to enforce memory separation across fabric‑coherent pools.

- Supply‑chain and firmware integrity become larger attack surfaces when entire racks share a single coherent memory plane.
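The first point in the list above, that an attestation chain has to cover every layer, can be illustrated with a small check that fails closed when any component is missing or mismatched. This is only a sketch under loud assumptions: the component names, the `measure` helper, and the dictionary format are hypothetical, and real attestation relies on signed quotes rooted in hardware keys rather than a plain hash comparison.

```python
import hashlib
import hmac

# Hypothetical component names mirroring the layers listed above.
REQUIRED_COMPONENTS = ("firmware", "dpu", "cpu", "gpu_microcode")


def measure(blob: bytes) -> str:
    """Return a SHA-256 measurement of a component image."""
    return hashlib.sha256(blob).hexdigest()


def verify_chain(reported: dict[str, str], expected: dict[str, str]) -> list[str]:
    """Return the components that fail verification; an empty list means pass.

    A component fails if it is missing from either side or if its reported
    measurement does not match the expected (allow-listed) value.
    """
    failures = []
    for name in REQUIRED_COMPONENTS:
        got = reported.get(name)
        want = expected.get(name)
        if got is None or want is None or not hmac.compare_digest(got, want):
            failures.append(name)
    return failures


if __name__ == "__main__":
    golden = {name: measure(name.encode()) for name in REQUIRED_COMPONENTS}
    tampered = dict(golden, dpu=measure(b"unexpected image"))
    print("clean rack failures:", verify_chain(golden, golden))
    print("tampered rack failures:", verify_chain(tampered, golden))
```

The design choice worth noting is that a missing measurement counts as a failure, so a rack cannot pass simply by omitting a layer from its report.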

Ecosystem: who’s on board and why it matters

Hyperscalers, AI clouds, and partners

Announced Rubin partners range from hyperscalers that can invest at datacenter scale to specialized AI clouds and system integrators that will offer Rubin instances in select regions. Nebius (a cloud partner) has publicly stated plans to offer NVL72 in US and Europe in H2 2026, and Microsoft’s Azure documentation and vendor briefings position it as a major early platform. NVIDIA has also aligned major OEMs and networking vendors to ship Rubin‑qualified systems.

This two‑track rollout matters: hyperscalers will push massive scale, integration, and managed services, while boutique providers focus on early access, flexible pricing, and bespoke performance tuning. Customers will choose based on needs: raw scale and embedded productization at hyperscalers vs. agility and early availability at specialized providers.

Models and customers

NVIDIA framed Rubin as optimized for “reasoning” and agentic workloads: models that need very long contexts, dynamic memory, and low‑latency orchestration. That aligns Rubin with next‑generation LLMs, multimodal systems, and large mixture‑of‑experts setups. Early adopters will likely be high‑value verticals (AI platform companies, research labs, and large enterprises building in‑house reasoning systems) rather than SMBs. Press coverage connects Rubin support to major model providers and to cloud customers who need inference at massive scale.

Business and strategic implications

For NVIDIA

Rubin represents a strategic move from selling GPUs to selling AI platforms, a verticalization that captures more of the value chain (chips, DPUs, interconnects). That gives NVIDIA more leverage across datacenter design, but also exposes the company to the operational expectations of hyperscalers and cloud partners. If Rubin succeeds, NVIDIA cements itself as the systems vendor for large reasoning workloads; if adoption stalls because of cost or software friction, competitors emphasizing openness or price/performance could gain share.

For Microsoft and other hyperscalers

Hyperscalers that validate Rubin early stand to offer a new class of differentiated AI services: larger context, faster inference, and integrated confidential compute that could win enterprise contracts. But they also shoulder significant capex and opex burdens. Microsoft’s Azure blog and strategic positioning show a bet on integrated infrastructure as a moat and on customer willingness to pay for new, differentiated capabilities.

For enterprises and service providers

Enterprises should view NVL72 as strategic infrastructure rather than a drop‑in performance boost. Adoption paths:

- Early trials via specialized cloud partners for pilot projects.

- Production adoption at hyperscalers for mission‑critical, scale‑dependent workloads.

- On‑prem or co‑located deployments only if organizations can match datacenter power, cooling, and operational expertise.

Risks, unknowns, and what to watch next

- Benchmark transparency: Public, standardized benchmarks running real LLM workloads at scale are scarce. Expect vendors to release white papers and their own benchmark results, but independent third‑party validations will be crucial.

- Power and TCO: Dense racks are expensive to run. Organizations should demand total cost of ownership analyses that include cooling, maintenance, and amortized hardware replacement. Early TCO claims from vendors are directionally useful but need customer‑level case studies.

- Software portability: Not all models or training pipelines will reap NVL72 benefits out of the box. Developers must adapt memory management, streaming strategies, and sharding approaches to exploit the fabric.

- Supply chain and availability: Early announcements from multiple clouds and partners suggest constrained supply initially; expect staged rollouts and regionally limited availability through 2026.

- Vendor lock‑in and ecosystem concentration: The tight coupling of CPU, GPU, DPU, and NVLink implies a reliance on a particular stack. Customers should plan for multi‑cloud strategies or insist on open interconnect standards where possible.
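For the power and TCO item in the list above, a simple annual cost model shows which inputs a vendor analysis should expose. This is a minimal sketch: the field names and every number in the example are hypothetical placeholders, not Rubin pricing or power data, and cooling overhead is folded into a single PUE (power usage effectiveness) multiplier.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 8760


@dataclass
class RackCostInputs:
    hardware_cost: float         # purchase price of the rack
    amortization_years: float    # period over which hardware is written off
    avg_power_kw: float          # average IT power draw of the rack
    pue: float                   # facility overhead multiplier (cooling, distribution)
    energy_price_per_kwh: float  # electricity price
    annual_maintenance: float    # service contracts, spares, technician time


def annual_tco(c: RackCostInputs) -> float:
    """Annual cost = amortized hardware + energy (including PUE) + maintenance."""
    hardware = c.hardware_cost / c.amortization_years
    energy = c.avg_power_kw * c.pue * HOURS_PER_YEAR * c.energy_price_per_kwh
    return hardware + energy + c.annual_maintenance


if __name__ == "__main__":
    # Placeholder inputs for illustration only.
    example = RackCostInputs(
        hardware_cost=1_000_000, amortization_years=4,
        avg_power_kw=100, pue=1.2,
        energy_price_per_kwh=0.10, annual_maintenance=50_000,
    )
    print(f"Estimated annual TCO: {annual_tco(example):,.0f}")
```

Dividing the result by measured tokens served per year would give a workload-based cost figure, which is usually more informative than a per-FLOPS price.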

Practical guidance for WindowsForum readers

- If you’re an enterprise architect: Map your critical AI workloads to the specific advantages Rubin offers (long contexts, streaming inference, stateful agents). Budget for pilot costs and infrastructure readiness reviews. Demand auditable security and compliance documentation for any confidential‑computing claims.

- If you’re a solutions or platform engineer: Start experimenting with memory‑centric runtime models and test migrations in small steps. Track framework updates (PyTorch, Triton, ONNX Runtime) for Rubin/NVLink primitives and instrument workloads to measure whether pooled memory improves latency or throughput for your models.

- If you’re a procurement or finance leader: Don’t buy capacity by FLOPS alone. Ask vendors for workload‑based pricing examples and realistic TCO models that include energy and maintenance costs. Explore specialized cloud partners if you need early access.
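The advice above to instrument workloads can start with a small harness that records latency percentiles and token throughput for any inference callable. This is a sketch under assumptions: `run_inference` stands in for whatever client call your stack exposes, and it is assumed to return a sequence of output tokens; nothing here is specific to Rubin or to any framework API.

```python
import math
import statistics
import time
from typing import Callable, Sequence


def benchmark(run_inference: Callable[[str], Sequence],
              prompts: Sequence[str]) -> dict[str, float]:
    """Measure p50/p95 latency and tokens per second over a list of prompts."""
    if not prompts:
        raise ValueError("prompts must not be empty")
    latencies = []
    total_tokens = 0
    start = time.perf_counter()
    for prompt in prompts:
        t0 = time.perf_counter()
        output = run_inference(prompt)
        latencies.append(time.perf_counter() - t0)
        total_tokens += len(output)
    wall = time.perf_counter() - start
    latencies.sort()
    # Nearest-rank 95th percentile.
    p95_index = max(0, math.ceil(0.95 * len(latencies)) - 1)
    return {
        "p50_s": statistics.median(latencies),
        "p95_s": latencies[p95_index],
        "tokens_per_s": total_tokens / wall if wall > 0 else float("inf"),
    }


if __name__ == "__main__":
    # Stand-in for a real model call: splits the prompt into "tokens".
    def fake_model(prompt: str) -> list[str]:
        return prompt.split()

    print(benchmark(fake_model, ["long context prompt one", "prompt two"]))
```

Running the same prompt set against a current instance type and a pooled-memory instance gives a like-for-like comparison for your own models instead of relying on vendor headline numbers.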

Conclusion

NVIDIA’s Vera Rubin NVL72 is less a single new chip and more a manifesto for how the next era of AI infrastructure will be assembled: rack‑scale, memory‑centric, and co‑designed across CPU, GPU, DPU, and fabric. Microsoft Azure’s public engineering validation and apparent early deployments make Azure one of the most visible first movers in taking that architecture from concept to production.

That shift promises material performance and capability gains for workloads that need vast, fast context and tight CPU–GPU coherence, but it also raises practical, operational, and strategic questions about cost, software portability, supply, and vendor concentration. For enterprises and developers, the sensible path is staged: evaluate the specific advantages Rubin offers to your models, pilot carefully with early cloud partners, and insist on transparent benchmarks and security controls before committing large‑scale workloads.

The Vera Rubin NVL72 era may be arriving quickly, but it will unfold in layers: engineering validation, early trials, staged availability through partners, and finally mainstream adoption — each step bringing new technical proofs and new business trade‑offs to decide.

Source: econotimes.com https://econotimes.com/Microsoft-Az...aling-a-New-Era-in-AI-Infrastructure-1736280/