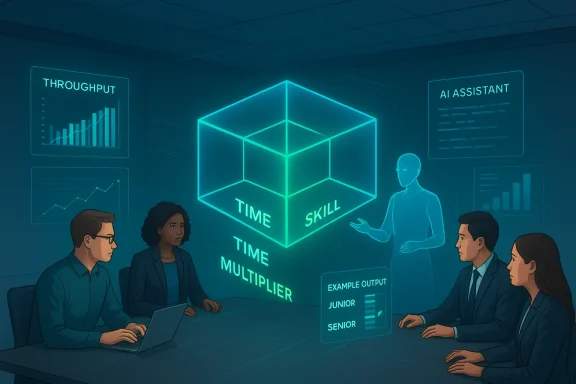

Thomson Reuters’ new “Capability leap” framework reframes the enterprise AI ROI debate. Instead of treating value as a single axis of time saved, it measures outcomes across three orthogonal dimensions: Time Saved (re‑anchored), Time Multiplier (volume/throughput), and Skill Multiplier (capability expansion). It argues that a large portion of AI’s enterprise value is produced when people do new, higher‑skill work they could not do before. ([thomsonreuters.com](https://www.thomsonreuters.com/en-us/posts/innovation/the-capability-leap-a-framework-for-measuring-the-enterprise-value-of-ai-tools/))

Source: Thomson Reuters The Capability leap: A framework for measuring the enterprise value of AI tools - Thomson Reuters Institute

Background / Overview

Enterprises have relied on simple, familiar KPIs to justify software spend: minutes saved per task, seat adoption, and task throughput. Those measures are tidy and auditable, but they systematically undercount the value of new capabilities: work that previously required specialized roles or simply didn’t happen at all. Thomson Reuters’ assessment grew from that tension: pilots with Anthropic’s Claude, Microsoft Copilot, and OpenAI’s ChatGPT surfaced repeated instances where the type of work changed more than the speed of existing work. The resulting measurement framework is deliberately pragmatic and self‑reported, designed to capture not only efficiency gains but also increases in throughput and jumps in individual capability that reshape workflows.

Why conventional ROI falls short

Traditional ROI approaches focus on efficiency. They work well when AI replaces or accelerates existing steps: taking a two‑hour manual draft and trimming it to 30 minutes is straightforward to quantify. But consider three common scenarios where that logic breaks down:

- A non‑technical business analyst produces predictive models and interactive visualizations that previously required a data scientist; there’s no clear “time saved” because the analyst gained the capability rather than becoming faster at an existing task.

- A junior analyst produces strategic recommendations matching senior quality; the senior’s time was not saved—the output’s quality jumped.

- A program manager builds automated dashboards that used to require engineering time; the organization didn’t save the program manager’s hours, it avoided costly engineering gatekeeping.

The three‑dimension framework — what it measures and why it matters

Thomson Reuters organizes measurement into three dimensions designed to be simple to collect and reliable to interpret.

Dimension 1 — Time Saved (with better anchoring)

Rather than asking users for an open‑ended estimate (which produces noisy, biased numbers), the framework asks people to pick magnitude buckets:

- Minutes → seconds

- Hours → minutes

- Days → hours

- Weeks → days

Dimension 2 — Time Multiplier (volume/throughput)

This captures whether users can handle more volume after adopting the tool. Instead of asking “how many more tasks,” which produces brittle counts, the survey asks for multiplicative buckets:

- Slightly more (1–2×)

- Moderately more (2×+)

- Significantly more (10×+)
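The bucketed answers for these first two dimensions can be stored as ordinal categories rather than free‑form numbers. The following is a minimal Python sketch, not part of the Thomson Reuters framework itself: the class names, the ASCII spellings of the labels, and the choice to use the lower edge of each multiplier bucket as a conservative estimate are all illustrative assumptions.

```python
from enum import Enum


class TimeSaved(Enum):
    """Anchored magnitude buckets for Dimension 1 (labels from the article)."""
    MINUTES_TO_SECONDS = "Minutes -> seconds"
    HOURS_TO_MINUTES = "Hours -> minutes"
    DAYS_TO_HOURS = "Days -> hours"
    WEEKS_TO_DAYS = "Weeks -> days"


class TimeMultiplier(Enum):
    """Multiplicative buckets for Dimension 2 (labels from the article)."""
    SLIGHTLY_MORE = "1-2x"
    MODERATELY_MORE = "2x+"
    SIGNIFICANTLY_MORE = "10x+"


# Assumption: take the lower edge of each bucket as a conservative estimate.
# The article does not prescribe how to turn a bucket into a number.
LOWER_BOUND = {
    TimeMultiplier.SLIGHTLY_MORE: 1.0,
    TimeMultiplier.MODERATELY_MORE: 2.0,
    TimeMultiplier.SIGNIFICANTLY_MORE: 10.0,
}


def conservative_multiplier(bucket: TimeMultiplier) -> float:
    """Return the lower-edge multiplier for a reported bucket."""
    return LOWER_BOUND[bucket]
```

Keeping the raw bucket alongside any derived number means the conversion rule can be tightened later without re‑surveying users.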

Dimension 3 — Skill Multiplier (capability expansion)

The most novel dimension measures whether users are performing work at a higher expertise level than before. Questions probe whether the tool enabled outputs that would otherwise have required specialists (data scientists, senior analysts, technical writers). That lets leaders quantify how much specialist‑level output their generalist workforce is now producing, or how many previously blocked initiatives are proceeding because the skill gating was removed.

What the framework captures that other metrics miss

- New work happening: projects, analyses, and artifacts that would not exist without AI.

- Reduced specialist bottlenecks: a small specialist pool no longer gates many requests.

- Organizational agility: faster experimentation because teams can prototype specialist outputs internally.

- Richer adoption signals: qualitative shifts in responsibility, not just seat counts.

Cross‑checking the wider evidence base

Thomson Reuters’ framework aligns with and complements other measurement and exposure approaches from industry and academia.

- Forrester’s commissioned TEI analyses of large vendor platforms (for example, Microsoft Foundry) emphasize developer productivity and platform reuse as primary ROI drivers, similar to the Capability leap’s observation that capability unlocked (especially in technical roles) represents outsized value. Forrester’s composite Foundry model emphasized developer productivity as a major contributor to a multi‑hundred percent ROI over three years. That echoes the Thomson Reuters focus on capability and specialist work as high‑value outputs.

- Large public trials of Microsoft 365 Copilot (government and health‑sector pilots) have reported meaningful per‑user time savings in the tens of minutes per day, concentrated where the assistant is embedded into everyday workflows (meetings, emails, document drafting). Those findings validate the Time Saved dimension but also highlight adoption variance by role and task type, which is exactly the empirical nuance the Capability leap framework aims to capture. Public‑sector experiments demonstrate that where a tool is embedded matters as much as how much time it saves.

- Research programs such as MIT’s Project Iceberg and vendor efforts (Anthropic’s observed exposure approach, Microsoft’s Copilot telemetry) converge on a central theme: use‑case specificity matters. Some task clusters show heavy immediate applicability (writers, data‑centric roles, developers), while others require more onboarding and governance. These methods map to the Time Multiplier and Skill Multiplier signals: exposure + observed usage point to where capability expansion is likely to occur.

Strengths of the Capability leap framework

- Practical and low‑friction: the survey buckets and anchored questions are straightforward to roll into pilot evaluation templates and procurement playbooks.

- Human‑centered measurement: by relying on user self‑reporting with structured anchors, the framework captures signals that telemetry alone would miss, such as perceived expertise gains and newly undertaken tasks.

- Decision‑focused: the three‑axis output directly informs procurement (which teams benefit most), skilling (who needs training to move to higher‑value tasks safely), and architecture (which workflows deserve integration investment).

- Actionable outputs: translating Skill Multiplier responses into proxy specialist‑hour equivalents lets finance teams model avoided wait times or outsourcing costs—useful for procurement and build vs. buy decisions.

Key risks, blind spots, and caveats

- Measurement bias: Self‑reported data is powerful but vulnerable to optimism bias, social desirability, and selection bias (early adopters report higher capability gains). Thomson Reuters’ internal percentages—such as the 60% capability‑expansion share—are valuable signals but should be validated with representative sampling and telemetry where possible.

- Double counting and attribution: Combining dimensional signals into a single monetary ROI risks double counting (e.g., counting the same outcome as both time saved and additional throughput). Clear rules are needed for aggregation.

- Safety and quality: Capability expansion does not guarantee domain‑level correctness. Specialist output by non‑specialists must be paired with verification gates, provenance, and human‑in‑the‑loop checks to avoid downstream risk.

- Temporal displacement of value: Time saved may be reinvested into higher‑value work rather than producing immediate cost savings; capability gains may show up as strategic options rather than direct revenue.

- Vendor comparisons: Tools that produce large Skill Multiplier signals may do so because they incorporate domain templates or provide better grounding—differences that matter for procurement but are easy to miss if you only look at efficiency metrics.
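One way to make the double‑counting caveat concrete is to write the aggregation rule down as code. The sketch below uses a hypothetical rule that is not from the Thomson Reuters framework: each outcome is credited once, using the largest single‑dimension value claimed for it, instead of summing across dimensions. The tuple layout, outcome IDs, and dollar figures are illustrative assumptions.

```python
from collections import defaultdict


def aggregate_without_double_counting(claims):
    """Sum claimed value while crediting each outcome only once.

    claims: iterable of (outcome_id, dimension, dollar_value) tuples.
    Hypothetical rule: keep the largest single-dimension value per outcome.
    Assumes dollar values are non-negative.
    """
    best = defaultdict(float)
    for outcome_id, _dimension, value in claims:
        best[outcome_id] = max(best[outcome_id], value)
    return sum(best.values())


claims = [
    ("report-17", "time_saved", 120.0),
    ("report-17", "time_multiplier", 200.0),  # same outcome, credited once
    ("model-03", "skill_multiplier", 300.0),
]
total = aggregate_without_double_counting(claims)  # 500.0, not the naive 620.0
```

Other rules are possible (for example, a fixed precedence order between dimensions); the point is that the rule is explicit and auditable.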

Operationalizing the framework at scale — a practical playbook

To move from one‑off surveys to a continuously informative system, organizations should:

- Instrument pilots with the three‑dimension survey as part of every launch checklist. Ask users the anchored Time Saved bucket, a Time Multiplier bucket, and a Skill Multiplier question about which specialist role(s) the output approximates.

- Combine self‑reporting with behavioral telemetry:

  - Capture usage frequency, prompt templates used, and whether outputs are copied into formal documents or shared externally.

  - Correlate “Skill Multiplier” responses with outcome quality metrics (peer review scores, revision rates, stakeholder approvals).

- Define conservative conversion rules:

  - Translate a reported “senior analyst” level output into a conservative fraction of a senior analyst hour (e.g., count as 0.3–0.6 of a senior hour until validated).

  - Avoid treating self‑reports as fully substitutable without quality validation.

- Run short A/B pilots for high‑value workflows:

  - Cohort A: work proceeds with human baseline.

  - Cohort B: same work assisted by the AI tool and measured by the three dimensions plus outcome quality.

  - Track downstream KPIs (decision cycle time, rework rate, revenue impact).

- Build a capability register:

  - Catalog which teams report volume, skill, and time gains for which workflows.

  - Use the register to prioritize where to expand seats, governance, and training.

- Insist on governance and verification:

  - Implement human verification thresholds for outputs flagged as specialist‑level.

  - Log provenance and the model/agent used for auditability.

- Feed procurement with dynamic signals:

  - Instead of only quarterly reviews, stream Skill Multiplier and Time Multiplier trends to procurement dashboards so license allocation can follow value in near real time.
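The capability register described in the playbook can be sketched as a simple aggregation over survey responses. This is a minimal illustration under stated assumptions: the dictionary keys (`team`, `workflow`, `time_gain`, `volume_gain`, `skill_gain`) and the ranking rule (share of responses reporting a gain) are hypothetical, not part of the framework.

```python
from collections import Counter, defaultdict

DIMENSIONS = ("time_gain", "volume_gain", "skill_gain")


def build_register(responses):
    """Count, per (team, workflow), how many responses report each gain."""
    register = defaultdict(Counter)
    for r in responses:
        key = (r["team"], r["workflow"])
        register[key]["responses"] += 1
        for dim in DIMENSIONS:
            if r.get(dim):
                register[key][dim] += 1
    return register


def prioritize(register, dim="skill_gain"):
    """Rank (team, workflow) pairs by the share of responses reporting `dim`."""
    return sorted(
        register,
        key=lambda k: register[k][dim] / register[k]["responses"],
        reverse=True,
    )


responses = [
    {"team": "finance", "workflow": "forecasting", "skill_gain": True, "time_gain": True},
    {"team": "finance", "workflow": "forecasting", "skill_gain": True},
    {"team": "legal", "workflow": "contract review", "skill_gain": True, "volume_gain": True},
    {"team": "legal", "workflow": "contract review", "time_gain": True},
]
register = build_register(responses)
ranking = prioritize(register)  # finance/forecasting ranks first on skill gains
```

In practice the ranking would feed decisions about seats, governance, and training only after the self‑reports are checked against quality metrics.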

Sample survey templates and data translation rules

- Time Saved (choose one): Minutes→seconds; Hours→minutes; Days→hours; Weeks→days.

- Time Multiplier (choose one): Slightly more (1–2×); Moderately more (2×+); Significantly more (10×+).

- Skill Multiplier: “Did this output match the work of a role you don’t occupy?” If yes, select roles (Data Scientist, Senior Analyst, Technical Writer, Designer, Other) and rate confidence (Low/Medium/High).

- When a user reports “Hours→minutes” and a 2× Time Multiplier for a casework workflow, record:

  - Efficiency gain: a conservative 50% bucket midpoint, applied to the pre‑pilot task time.

  - Throughput gain: scale headcount equivalence by the reported multiplier.

- When a Skill Multiplier indicates “Data Scientist (Medium confidence),” assign a provisional avoided specialist wait time of 0.5 hours per occurrence until validated by peer review.
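The translation rules above can be sketched as a small function. This is an illustrative Python sketch, not a definitive implementation: the record layout (`SurveyResponse`), the function name `translate`, and the sample inputs (2 pre‑pilot hours, headcount of 4) are hypothetical. Only the 50% efficiency figure for “Hours→minutes” and the 0.5 hours for Medium confidence come from the text; other buckets and confidence levels are left unset rather than guessed.

```python
from dataclasses import dataclass
from typing import Optional

# Figures stated in the rules above; anything not stated is omitted on purpose.
EFFICIENCY_FRACTION = {"Hours->minutes": 0.5}
PROVISIONAL_SPECIALIST_HOURS = {"Medium": 0.5}


@dataclass
class SurveyResponse:  # hypothetical record layout
    workflow: str
    time_saved_bucket: str
    time_multiplier: float
    skill_role: Optional[str] = None
    skill_confidence: Optional[str] = None  # "Low" / "Medium" / "High"


def translate(resp: SurveyResponse, pre_pilot_hours: float, headcount: float) -> dict:
    """Turn one survey response into provisional, conservative quantities."""
    fraction = EFFICIENCY_FRACTION.get(resp.time_saved_bucket)
    record = {
        "workflow": resp.workflow,
        # None means the text gives no conversion figure for this bucket.
        "hours_saved_per_task": None if fraction is None else fraction * pre_pilot_hours,
        "headcount_equivalent": headcount * resp.time_multiplier,
        "provisional_specialist_hours": None,
    }
    if resp.skill_role is not None:
        record["provisional_specialist_hours"] = PROVISIONAL_SPECIALIST_HOURS.get(
            resp.skill_confidence
        )
    return record


resp = SurveyResponse("casework", "Hours->minutes", 2.0, "Data Scientist", "Medium")
record = translate(resp, pre_pilot_hours=2.0, headcount=4)
```

Returning `None` for unspecified buckets keeps the gap visible in reports instead of silently filling it with an invented figure.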

Governance, training, and the human factor

Capability expansion without governance is a liability. Thomson Reuters and independent pilots both emphasize that AI adoption must be coupled with:

- Role‑specific training that teaches verification, prompt design, and domain guardrails.

- Data loss prevention and tenant controls when models access proprietary information.

- Human‑in‑the‑loop thresholds for specialist‑grade outputs—clear rules about when a domain expert must validate an AI‑generated deliverable.

- Representative pilots to avoid early‑adopter bias: require pilots to reflect workforce diversity and skill mixes so the Capability leap signals generalize across the organization.

Procurement implications — how to use the framework when buying AI

- Request pilot measurement templates from vendors that include Time Multiplier and Skill Multiplier questions; demand transparency in how outcomes were measured.

- Negotiate proof‑of‑value milestones tied to capability expansion (e.g., deliver X validated specialist‑level artifacts in the pilot window).

- Insist on billing flexibility and portability—capability gains are ecosystem‑dependent, and vendors should provide clear export paths for knowledge artifacts and agent configurations. Independent TEI analyses remind procurement to treat vendor‑commissioned ROI numbers as scenarios, not guarantees.

Translating Skill Multiplier into dollars: a conservative approach

When CFOs ask “How do I value a junior producing senior‑level output?” use a conservative, audit‑friendly method:

- Identify the specialist role and its fully‑burdened hourly rate (R).

- For each validated occurrence where a junior produces acceptable senior work, credit a conservative fraction (f) of R—e.g., f = 0.25–0.5 until the quality is proven at scale.

- Multiply f × R × occurrences per period to estimate avoided specialist cost or opportunity cost (fewer escalations, faster time to insight).
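The three steps above reduce to the product f × R × occurrences, evaluated at both ends of the conservative range for f. A minimal Python sketch follows; the function name and the sample rate of 150 per hour and 40 occurrences are hypothetical inputs chosen only to show the arithmetic.

```python
def avoided_specialist_cost(rate, occurrences, f_low=0.25, f_high=0.5):
    """Return (low, high) estimates of avoided specialist cost per period.

    rate: fully-burdened hourly rate R of the specialist role.
    occurrences: validated occurrences in the period.
    f_low, f_high: conservative credit fractions (0.25 to 0.5 in the text).
    """
    if not 0 <= f_low <= f_high <= 1:
        raise ValueError("expected 0 <= f_low <= f_high <= 1")
    return (f_low * rate * occurrences, f_high * rate * occurrences)


# Hypothetical inputs: R = 150 per hour, 40 validated occurrences in the period.
low, high = avoided_specialist_cost(rate=150.0, occurrences=40)
# low == 1500.0, high == 3000.0
```

Reporting the range rather than a single number keeps the estimate honest about how much of a specialist hour is really being credited.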

Where the framework should be expanded and further validated

- Longitudinal studies — track cohorts over 6–12 months to measure whether Skill Multiplier gains persist or regress as tasks become normalized.

- Quality audits — pair self‑reports with blind reviews to quantify false positives in claimed capability expansion.

- Sectoral baselines — build industry‑specific anchors (healthcare, finance, legal) because a “senior analyst” in one sector has a different risk and review profile than in another.

- Telemetry fusion — automate signals where possible: detect when an AI‑generated output is copied into shared artifacts, then surface a follow‑up survey to capture Skill Multiplier and confidence.
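The quality‑audit idea can be illustrated with a small calculation: among outputs that users claimed were specialist‑level, what share failed a blind review? The sketch below is an assumption‑laden illustration; the tuple layout and the sample audit data are hypothetical.

```python
def false_positive_rate(audits):
    """Share of claimed specialist-level outputs that failed blind review.

    audits: iterable of (claimed_specialist_level, passed_blind_review) booleans.
    Returns None when no output was claimed as specialist-level.
    """
    claimed = [passed for claimed_level, passed in audits if claimed_level]
    if not claimed:
        return None
    failures = sum(1 for passed in claimed if not passed)
    return failures / len(claimed)


# Hypothetical audit sample: four claims of specialist-level output, two failed.
audits = [(True, True), (True, False), (True, True), (False, False), (True, False)]
rate = false_positive_rate(audits)  # 0.5
```

A rate like this could be used to discount self‑reported Skill Multiplier responses before they are converted into monetary estimates.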

Conclusion

Thomson Reuters’ Capability leap framework is a pragmatic and much‑needed advance in how enterprises evaluate AI. By treating value as multidimensional (efficiency, scale, and capability), it realigns evaluation with how real work changes in the presence of AI. The framework is not a silver bullet: self‑reporting needs conservative translation rules, governance must accompany capability expansion, and finance teams must avoid double counting. But its core insight, that a substantial portion of AI’s enterprise value lies in enabling work that would otherwise be impossible or delayed, is consistent with independent patterns in vendor TEI studies and government pilots. Organizations that adopt a disciplined, auditable version of this framework, one that blends anchored self‑reporting, telemetry, and quality audits, will be far better equipped to decide which tools to scale, where to invest in skilling, and when to build governance controls that let a capability leap safely become a lasting advantage.