ChatGPT has evolved from a novelty chatbot into a sprawling AI platform, and the 2026 version is defined by a simple but consequential shift: it is no longer just answering questions, it is increasingly doing work. The latest guide on TECHi frames that transformation around GPT-5, a wide pricing ladder, deep research tools, voice, memory, connectors, and a growing app ecosystem that makes ChatGPT feel more like an operating layer for digital tasks than a single product. Recent forum material also points to OpenAI testing a cheaper ChatGPT Go tier and interface refinements such as pinned chats and customizable themes, underscoring how aggressively the company is trying to widen adoption and reduce friction.


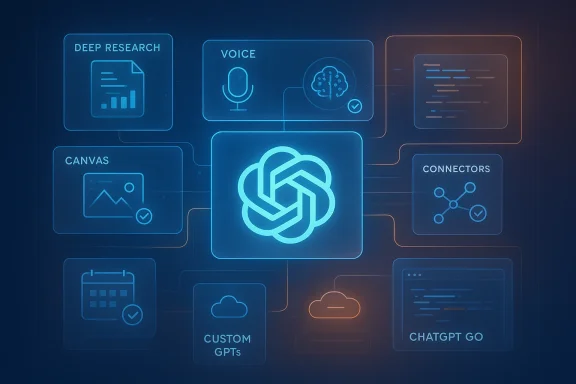


The biggest question is whether OpenAI can keep the product simple while making it more capable. That balance is difficult. Every new feature adds power, but also complexity, permission prompts, and potential failure points. If OpenAI gets the balance right, ChatGPT becomes the default AI layer for work and life. If it gets it wrong, users may fragment across more specialized tools.

What to watch next:

In that sense, ChatGPT in 2026 is no longer just a product to review. It is a moving target, a business model, a software ecosystem, and a preview of how people may interact with computing for years to come.

Source: techi.com ChatGPT: The Complete Guide to Features, Pricing, Tips & More [2026]

Overview


The most important thing to understand about ChatGPT in 2026 is that it sits at the intersection of consumer software, enterprise automation, and model-platform strategy. The TECHi guide portrays it as a product that spans free users, paid power users, teams, large enterprises, developers, and educators, with capabilities that now include web search, image generation, research agents, coding agents, memory, and app integrations. That breadth matters because it changes the competitive conversation: the question is no longer simply “which chatbot is best,” but “which AI workspace can replace the most manual effort.”

ChatGPT’s rise is inseparable from the broader generative AI boom that began in late 2022 and accelerated through 2023 and 2024. The article emphasizes that the original GPT-3.5-powered launch became a cultural event, then a product category, and eventually a platform race. By 2026, the guide says ChatGPT has more than 800 million weekly users, a scale that helps explain why every major competitor is now building not just models, but ecosystems.

That ecosystem framing is central. The guide’s structure suggests OpenAI is trying to make ChatGPT useful in three different ways at once: as a conversational assistant for casual users, as a productivity engine for professionals, and as an integration hub for businesses and developers. Those ambitions are visible in the emphasis on Deep Research, Custom GPTs, connectors, Canvas, and app partnerships that push ChatGPT beyond a plain chat window.

There is also a clear commercial logic underneath the feature expansion. The more tasks ChatGPT can complete, the more defensible the subscription tiers become. The uploaded file search material reinforces this direction, showing OpenAI exploring a lower-cost ChatGPT Go tier, while other recent threads highlight broader interface changes and more autonomous workflow features in the same ecosystem. In practical terms, OpenAI appears to be building a ladder that can capture users at every spend level, then retain them with convenience and capability.

Why this matters now

What makes ChatGPT especially significant in 2026 is not merely model quality, but productization. Many AI systems can generate text, summarize documents, or write code snippets. Far fewer can combine those abilities with account memories, multimodal interaction, external tools, and a consumer interface that ordinary users can adopt quickly.

That makes ChatGPT a bellwether for the AI market. Its pricing, its feature rollouts, and even its missteps influence how rivals like Gemini, Claude, Copilot, Perplexity, and DeepSeek position themselves. The uploaded forum materials repeatedly frame OpenAI’s moves as part of a platform war, not a feature update cycle.

Background

ChatGPT launched in late 2022 as a research preview and quickly turned into a mainstream habit. The guide traces that leap from GPT-3.5 to GPT-4 and onward to GPT-5, with each step adding more reliability, more modalities, and more usefulness. In the early phase, the novelty was conversational fluency. By 2024 and 2025, the novelty had shifted to utility: image generation, voice, long-context analysis, and agent-style behavior.

The historical pattern matters because it shows why users now expect more from ChatGPT than a chatbot. The original experience was impressive because it felt human-like. The current experience is impressive because it can assist across writing, software, research, shopping, planning, and workflow automation. That is a much harder promise to sustain, but also a much larger market opportunity.

OpenAI’s strategy has been to keep bundling capabilities into the core ChatGPT surface rather than forcing users to stitch together separate tools. The guide’s inventory of features suggests a deliberate move toward a single front door for AI work. That includes web search with citations, file uploads, custom assistants, voice, memory, collaborative editing, and integrated apps.

The uploaded forum files add an important 2026 wrinkle: price segmentation is becoming more aggressive. A thread discussing ChatGPT Go describes OpenAI testing a lower-cost plan to expand access, while other threads discuss a broader pattern across the AI industry in which vendors are remaking subscription structures, packaging more value into premium tiers, and experimenting with new monetization models. That suggests the company is trying to balance growth, compute costs, and user retention at the same time.

From chatbot to workspace

This transition is the core story. ChatGPT is not replacing email, docs, spreadsheets, or browsers outright, but it is increasingly embedding itself into those workflows. The result is a softer form of replacement: fewer app switches, fewer copy-paste steps, and less manual research.

That’s why features like connectors and Canvas matter so much. They are not flashy on their own, but they create the feeling that ChatGPT is becoming a workspace rather than an app.

The market context

The competitive backdrop is intensifying. The uploaded file set contains multiple references to model-routing, long context windows, and enterprise AI platform consolidation, which reflects how the market is maturing from “best demo” to “best platform.” Competitors are improving fast, but ChatGPT’s advantage remains breadth, not just benchmark performance.

Pricing Strategy

OpenAI’s pricing structure is one of the clearest signals of how it sees the market. The guide lays out Free, Plus, Pro, Team, Enterprise, and Edu options, each aimed at a distinct audience. That is a classic SaaS playbook, but ChatGPT’s version is unusually dynamic because the capabilities themselves are changing so quickly.

The Free tier is effectively an acquisition funnel. It provides enough functionality for casual users and first-timers to experience the product, but not enough to satisfy demanding daily use. That’s important because it lowers friction while preserving clear upgrade paths.

Plus remains the broad middle ground for individual professionals. At $20 per month, it is positioned as the value plan for heavy users who want stronger models, image generation, voice, and custom GPT creation without enterprise complexity. In practice, Plus is the plan that makes ChatGPT feel like a serious productivity tool rather than an experiment.

Pro is where OpenAI signals that the highest-value capabilities have a premium price. The guide describes it as the tier for near-unlimited access, extended reasoning, and Deep Research. The existence of a $200 plan is a strategic statement: some users will pay dramatically more for time savings, higher limits, and deeper analysis.

Why the pricing ladder is strategic

The ladder works because it maps to use intensity. Casual users subsidize growth, professionals subsidize feature expansion, teams subsidize governance, and enterprises subsidize security and scale. That is not unique to OpenAI, but it is especially visible here because the product itself spans so many use cases.

A forum thread about a possible lower-cost ChatGPT Go plan reinforces the idea that OpenAI wants an even more granular price architecture, perhaps to serve users who sit between free and Plus. If that pricing layer arrives, it could help convert price-sensitive users without undermining the premium brand of Pro or Enterprise.

Consumer versus enterprise economics

Consumer pricing is about adoption and habit formation. Enterprise pricing is about governance, privacy, and seat expansion. The guide’s distinction between consumer plans and Team/Enterprise data handling is especially important because it shows how OpenAI is trying to reassure businesses that ChatGPT can be deployed safely.

For companies, the question is not just “what does it cost?” but “what risk does it remove?” That is where enterprise value often lies.

- Free is best for exploration and light use.

- Plus is the most balanced individual plan.

- Pro is for users who can justify the cost through Deep Research and heavy throughput.

- Team suits small organizations that need collaboration and data controls.

- Enterprise is about security, compliance, and administration.

- Edu extends the model into academic institutions.
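One way to make the Plus-versus-Pro decision concrete is a break-even calculation. The sketch below uses the $20 and $200 monthly prices quoted in the guide; the function name and the idea of valuing saved time at an hourly rate are illustrative assumptions, not anything the guide prescribes.

```python
PLUS_MONTHLY_USD = 20    # Plus price as stated in the guide
PRO_MONTHLY_USD = 200    # Pro price as stated in the guide


def breakeven_hours(hourly_value_usd: float) -> float:
    """Hours Pro must save per month, beyond Plus, to cover the price gap.

    hourly_value_usd is a hypothetical figure: what one hour of the
    user's time is worth to them or their employer.
    """
    if hourly_value_usd <= 0:
        raise ValueError("hourly_value_usd must be positive")
    return (PRO_MONTHLY_USD - PLUS_MONTHLY_USD) / hourly_value_usd


if __name__ == "__main__":
    # Assumed example rate of $60/hour: the $180 gap equals 3 saved hours.
    print(breakeven_hours(60))  # 3.0
```

Under these assumptions, a user whose time is valued at $60 per hour would need Pro to save about three more hours a month than Plus does before the upgrade pays for itself.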

The pricing challenge

The challenge is that AI usage is still elastic. Some months a user needs a little help; other months they need constant support. That makes subscription pricing feel both attractive and imperfect. If OpenAI prices too high, users churn to alternatives. If it prices too low, it risks underserving heavy users and compressing margins.

Feature Expansion

The most striking part of the guide is the sheer number of features now attached to ChatGPT. It is not a single capability stack anymore; it is a portfolio. Search, image generation, Deep Research, agents, voice, memory, connectors, meeting notes, shopping, Canvas, and app integrations all live under one brand.

That bundling changes user expectations. People no longer ask only whether ChatGPT can write better prose. They ask whether it can summarize meetings, shop for products, inspect code, reason over documents, or connect to third-party services. That makes the product more powerful, but also more complex to explain.

One of the guide’s strongest themes is integration density. The more systems ChatGPT can touch, the more “sticky” it becomes in daily workflows. This is especially visible in the section on connectors and app integrations, which frame ChatGPT as a central interface for Google Drive, Slack, GitHub, Spotify, Canva, Expedia, and more.

The uploaded forum results support this direction, particularly in the thread discussing Google Workspace connectors and the move to let ChatGPT pull in Gmail, Calendar, and Contacts. That is a serious escalation: the assistant is not just reading the web, but reading your work life, with obvious productivity upside and equally obvious privacy implications.

Search and research as core utilities

ChatGPT Search is perhaps the clearest bridge between old search and new AI. Instead of returning links, it synthesizes a response and supports the answer with citations. That’s valuable because it reduces the friction of hopping between pages, but it also means users must trust the assistant’s selection and interpretation of sources.

Deep Research goes further by turning the assistant into a multi-step research workflow. In the guide, it can analyze dozens of sites and produce structured reports, which makes it especially relevant for analysts, journalists, marketers, and competitive intelligence teams. In the forum corpus, similar ideas appear in coverage of other AI products, which suggests the whole sector is racing toward citation-backed research assistants.

Multimodality and creative work

Image generation, voice mode, and vision turn ChatGPT into more than a text engine. Users can speak, upload images, and create visuals without switching tools. That lowers the barrier to entry for nontechnical users and expands the product’s addressable audience.

The guide’s mention of massive image-generation volume shows how quickly multimodal use can go viral. Once a feature becomes fun as well as useful, adoption accelerates.

Collaboration and memory

Memory, Canvas, and Custom GPTs are among the most consequential “quiet” features. They reduce repetition, preserve context, and allow tailored workflows. That matters because real work is rarely a one-off prompt; it is an iterative process with evolving context.

- Memory improves continuity.

- Canvas improves collaboration.

- Custom GPTs improve specialization.

- Connectors improve relevance.

- App integrations improve reach.

Autonomous agents

The guide’s treatment of agents and Codex signals the most important long-term change: ChatGPT is moving toward action, not just advice. That raises the ceiling on productivity but also raises the stakes for correctness, permissions, and auditability. The forum results on agentic AI in enterprise workflows underscore how quickly organizations are trying to operationalize this idea.

Tips for Getting Better Results

The guide’s “power tips” section is more than a prompt-cheat sheet. It reflects a broader truth about ChatGPT: users get dramatically better outcomes when they stop treating it like a search box and start treating it like a collaborator. The best prompting often includes role, goal, constraints, audience, and format.

That means the quality gap is not only about model strength. It is also about user skill. A well-structured prompt can make an older model feel surprisingly capable, while a vague prompt can make a newer model feel disappointingly generic.

This is why practices like chaining prompts, asking for self-critique, and forcing structured output matter. They reduce ambiguity and help the model stay on task. They also make it easier to verify results, which is crucial when the model is being used for research, writing, or business decisions.
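To illustrate why structured output makes results easier to verify, here is a minimal sketch that checks a JSON reply before anything downstream uses it. The key names in `REQUIRED_KEYS` are a hypothetical schema chosen for this example, and the reply is assumed to arrive as plain text; nothing here calls a real API.

```python
import json

# Hypothetical schema: the fields we asked the model to return.
REQUIRED_KEYS = {"summary", "sources", "confidence"}


def parse_structured_reply(reply_text: str) -> dict:
    """Parse a JSON reply and reject it if it is malformed or incomplete."""
    try:
        data = json.loads(reply_text)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")
    missing = REQUIRED_KEYS - set(data)
    if missing:
        raise ValueError(f"reply is missing keys: {sorted(missing)}")
    return data
```

A reply that fails this check can be sent back with a request to correct the format, which is one simple form of prompt chaining.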

The guide is especially useful in showing that ChatGPT is strongest when users understand its boundaries. It is excellent at generating options, but less reliable when the task requires a single, perfectly factual answer without verification.

Practical prompting habits

A few simple habits can materially improve results:

- State the role you want ChatGPT to play.

- Define the output format before asking for content.

- Add constraints on length, tone, and audience.

- Give examples of the style you want.

- Ask for a second pass that critiques the first draft.
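The habits above can be sketched as a small prompt builder. This is only an illustration: `PromptSpec`, `build_prompt`, and `critique_request` are hypothetical names, the field order is an arbitrary choice, and the output is plain text that a user could paste into any chat interface.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the prompt ingredients listed above."""
    role: str
    goal: str
    output_format: str
    constraints: list[str] = field(default_factory=list)  # length, tone, audience
    examples: list[str] = field(default_factory=list)     # style samples


def build_prompt(spec: PromptSpec) -> str:
    """Assemble the pieces into one plain-text prompt, in a fixed order."""
    lines = [
        f"Role: {spec.role}",
        f"Goal: {spec.goal}",
        f"Output format: {spec.output_format}",
    ]
    if spec.constraints:
        lines.append("Constraints:")
        lines.extend(f"- {c}" for c in spec.constraints)
    if spec.examples:
        lines.append("Style examples:")
        lines.extend(f"- {e}" for e in spec.examples)
    return "\n".join(lines)


def critique_request(draft: str) -> str:
    """Build the follow-up message that asks for a critical second pass."""
    return (
        "Critique the draft below for accuracy, tone, and structure, "
        "then rewrite it with those fixes applied.\n\n" + draft
    )


if __name__ == "__main__":
    spec = PromptSpec(
        role="senior technical editor",
        goal="summarize the attached release notes",
        output_format="five bullet points",
        constraints=["under 120 words", "neutral tone", "audience: IT managers"],
    )
    print(build_prompt(spec))
```

Keeping the ingredients in a structure like this makes it easy to reuse the same role and format across a recurring task while changing only the goal.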

Tip categories that matter most

The guide divides tips into prompting, productivity, creative work, and advanced API use, and that structure is helpful because it mirrors how users actually adopt the tool. Casual users usually begin with writing and summarization. Power users move into files, data, and workflow automation. Developers eventually push into APIs, function calling, and retrieval systems.

- Prompt with specificity.

- Iterate instead of restarting.

- Use file uploads for documents and spreadsheets.

- Apply Memory for recurring projects.

- Build Custom GPTs for repetitive tasks.

- Use the API for real integrations.

The biggest user mistake

The most common mistake is assuming the first answer is the final answer. ChatGPT performs best in a dialogue, not a one-shot test. That is a subtle but important shift in mindset, and it is one of the reasons experienced users feel they are getting a completely different product from beginners.

Business Use Cases

The guide is persuasive when it moves from abstract features to actual business applications. ChatGPT can support customer service, marketing, sales, HR, legal work, healthcare administration, education, finance, and retail. That breadth is one reason enterprise adoption has moved so quickly.

The strongest business cases are not about replacing employees. They are about compressing the time between first draft and final output. In customer support, that might mean faster response times. In marketing, it means quicker content production. In legal work, it means more efficient document review before human oversight.

The forum material also shows how enterprise AI is shifting toward governed, integrated systems rather than isolated chat experiences. This aligns with the ChatGPT Team and Enterprise pitch in the guide: if organizations want to use AI at scale, they need permissions, privacy guarantees, admin controls, and consistent behavior across users.

Customer support and service

Customer support is one of the most obvious fit cases. ChatGPT can triage common questions, draft replies, and route issues based on confidence or policy. That makes it valuable in environments where speed matters and the long tail of repetitive requests is expensive.

The real benefit is not elimination of support staff. It is the reduction of low-value repetitive work so humans can focus on exceptions and emotionally sensitive cases.
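Routing "based on confidence or policy" could look something like the sketch below. The threshold, the topic list, and the assumption that each drafted reply arrives with a confidence score between 0 and 1 are all hypothetical; the guide does not describe a specific mechanism.

```python
from dataclasses import dataclass

# Hypothetical values; a real deployment would tune these.
CONFIDENCE_THRESHOLD = 0.8
SENSITIVE_TOPICS = {"billing dispute", "bereavement", "legal complaint"}


@dataclass
class DraftReply:
    topic: str
    text: str
    confidence: float  # assumed score in [0, 1], supplied by the caller


def route(draft: DraftReply) -> str:
    """Return 'auto_send' or 'human_review' for a drafted reply."""
    if draft.topic in SENSITIVE_TOPICS:
        return "human_review"   # policy rule wins over any confidence score
    if draft.confidence < CONFIDENCE_THRESHOLD:
        return "human_review"   # uncertain drafts go to a person
    return "auto_send"
```

Checking policy before confidence reflects the point made above: emotionally sensitive cases go to humans even when the draft looks strong.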

Marketing and content operations

Marketing teams use ChatGPT for ideation, copy variation, SEO support, and competitive research. The guide’s emphasis on Deep Research and Canva integration makes this especially potent because teams can move from insight to asset more quickly.

The risk, of course, is sameness. If too many teams rely on the same underlying model without strong editorial oversight, content can become formulaic.

Developer workflows

For developers, ChatGPT is increasingly relevant as a coding partner, explanation engine, and debugging helper. The guide’s API discussion shows that OpenAI is trying to make this role more formal through Codex, function calling, structured outputs, and longer context windows.

That matters because software teams are not only asking for code generation. They want code understanding, repository-level context, and integration with their existing systems.

- Faster first drafts of code.

- Better explanations of legacy systems.

- Assistance with refactoring.

- Support for automated testing workflows.

- API orchestration across tools and services.

Operations and knowledge management

The most underrated use case is internal knowledge work. Meeting notes, document summaries, and connector-based retrieval can save teams huge amounts of time. ChatGPT becomes especially valuable when it can pull from existing business systems instead of relying on users to re-upload files manually.

Competition and Market Position

ChatGPT’s leadership position is still strong, but the market is no longer forgiving. The guide’s comparison table places it against Gemini, Claude, Microsoft Copilot, and Perplexity, each of which has a compelling story. That diversity is healthy for the market, but it also means OpenAI cannot afford stagnation.

ChatGPT’s moat, according to the guide, is its ecosystem. That is a reasonable claim. No rival currently combines search, voice, image generation, custom assistants, enterprise tooling, and a broad app layer in quite the same way.

Still, the competition is real. Google is pushing long context and deep ecosystem integration. Anthropic is leaning into writing quality and careful analysis. Microsoft is turning Copilot into the productivity face of Office. Perplexity is staking out the research-first niche. DeepSeek and others are pressuring price and efficiency.

The uploaded forum materials back this framing, especially threads discussing Gemini Enterprise, Copilot’s GPT-5 rollout, and cross-platform AI competition. The common thread is that every major player is trying to own the interface layer where work happens.

What gives ChatGPT an edge

Its advantage is not singular superiority on every benchmark. It is product breadth. Users can start with a simple chat, then graduate to search, files, voice, images, research, agents, and integrations without leaving the app.

That kind of continuity matters because most people do not want to manage five AI tools. They want one assistant that gets them through the day.

Where competitors can win

Competitors can still win on focused strengths. Gemini can win on context length and Google integration. Claude can win on polished writing and analysis. Copilot can win on Microsoft ecosystem gravity. Perplexity can win on source-first research. The market is therefore less about a single champion than about preferred workflow fit.

The platform war

What’s emerging is a platform war for AI-native productivity. The winner will not simply have the best model. It will have the best combination of model, interface, permissions, integrations, and trust. That is why the ChatGPT guide reads less like a product review and more like an operating manual for a new digital layer.

Strengths and Opportunities

ChatGPT’s biggest strength is that it now solves many different problems inside one consistent interface, which lowers learning costs and makes adoption easier across both consumers and businesses. The guide’s feature list and pricing ladder show OpenAI has built a product that can scale from casual experimentation to enterprise deployment without forcing users into a completely different environment.

- Breadth of capability across writing, coding, research, voice, images, and workflow automation.

- Strong consumer funnel through the Free tier and low-friction onboarding.

- Clear monetization ladder from Plus to Pro to Enterprise.

- Enterprise readiness through Team and Enterprise controls.

- Integrations ecosystem that increases stickiness and switching costs.

- Research and agent features that create high-value professional use cases.

- Brand leadership that makes ChatGPT the default AI reference point for many users.

Why the upside is still large

The opportunity is not just to add more users. It is to deepen usage per user. If ChatGPT becomes the default place where people research, draft, meet, code, and buy, the value captured per account rises dramatically.

That creates a powerful flywheel: more users attract more integrations, which create more use cases, which attract more users. That is platform economics in action.

Risks and Concerns

The same features that make ChatGPT powerful also create new risks. The guide is appropriately direct about hallucinations, privacy, data handling, and the fact that the product is not a substitute for professional expertise. Those are not side issues; they are central to whether the platform can be trusted at scale.

The uploaded forum material adds cautionary context around agentic behavior, connector access, and enterprise governance. As ChatGPT touches more systems, the risk surface expands. An assistant that can browse, act, and connect is more useful, but also more dangerous if permissions, validation, or oversight are weak.

- Hallucinations and factual errors remain possible, even when responses sound authoritative.

- Privacy exposure is a serious concern on consumer plans.

- Overreliance may encourage users to skip verification.

- Agentic actions can create unintended side effects if permissions are too broad.

- Data governance complexity increases as connectors expand.

- Pricing pressure could push OpenAI toward more aggressive monetization.

- Regulatory scrutiny may intensify as AI becomes more embedded in daily work.

The trust problem

Trust is the hardest product problem in AI. Users need the system to be helpful, but also honest about uncertainty. If ChatGPT becomes too agreeable, too confident, or too eager to act, it risks being less useful in the long run.

The enterprise security problem

The more ChatGPT plugs into inboxes, calendars, drives, and repos, the more it resembles a privileged user. That means enterprises need serious controls, not just convenience. The Team and Enterprise distinction is therefore not cosmetic; it is foundational.

The human factor

There is also a softer risk: dependency. If people outsource too much thinking to AI, they may weaken their own judgment over time. The best use of ChatGPT is augmentation, not abdication.

Looking Ahead

The future of ChatGPT looks less like a single product roadmap and more like a battle over the interface of work. The guide’s references to ads, Atlas, deeper app integrations, and future model generations suggest OpenAI wants ChatGPT to become more ubiquitous, more personalized, and more economically durable. The forum results around a possible cheaper tier and wider workspace integration reinforce that the company is still tuning product-market fit in real time.

The biggest question is whether OpenAI can keep the product simple while making it more capable. That balance is difficult. Every new feature adds power, but also complexity, permission prompts, and potential failure points. If OpenAI gets the balance right, ChatGPT becomes the default AI layer for work and life. If it gets it wrong, users may fragment across more specialized tools.

What to watch next:

- Pricing experiments such as lower-cost tiers or revised plan limits.

- More agentic features that can take actions, not just generate text.

- Broader connector support across calendars, drives, messaging, and business apps.

- Enterprise governance upgrades for auditability and compliance.

- Competitive model releases from Google, Anthropic, Microsoft, and others.

- Ad and monetization changes that could affect user trust.

- Interface refinements that make ChatGPT feel more like a workspace and less like a chat app.

In that sense, ChatGPT in 2026 is no longer just a product to review. It is a moving target, a business model, a software ecosystem, and a preview of how people may interact with computing for years to come.

Source: techi.com ChatGPT: The Complete Guide to Features, Pricing, Tips & More [2026]