Cisco’s push to bring AI and human expertise directly into the product experience is no longer theoretical — it’s running in customers’ environments and, by the company’s account, changing how support works at scale. In a recent AI Agent & Copilot Podcast interview, Cisco principal engineer Nik Kale laid out the architecture and outcomes of an in‑product system called AI Support Fabric, describing a stack that fuses proactive guidance, multi‑agent AI assistance, and a robust human‑escalation pipeline — all underpinned by a unified data foundation. The result, Cisco claims, is faster resolutions, less noise for customers, and knowledge that scales across channels.

Source: Cloud Wars AI Agent and Copilot Podcast: Cisco Engineering Leader on AI's Impact in Product Support

Background / Overview

Customers of complex networking and security products routinely face steep diagnostic journeys: they jump between portals, documentation sites, troubleshooting guides, and support tickets to get a complete picture. This fragmented experience drives time‑to‑resolution up and increases friction for both customers and engineering teams. Cisco’s AI Support Fabric attempts to address that by embedding support where the user already is (inside the product) and by turning institutional support experience into reusable, actionable guidance delivered just in time.

That goal maps to two industry trends IT leaders should already recognize: (1) the rise of Digital Adoption Platforms (DAPs) and in‑product guidance to reduce support volume, and (2) the use of AI and ML to compress time spent on root‑cause analysis and remediation. Vendors such as WalkMe and Chameleon popularized the DAP approach: surface targeted guidance inside the application UI, reducing the need for separate knowledge‑base lookups. Cisco’s approach stitches the DAP concept to AI agents and an enterprise knowledge backbone so the guidance is not only contextual but also predictive and evidence‑driven.

What the AI Support Fabric claims to deliver

- In‑product guidance and remediation: contextual help and remediation content surfaced where customers encounter friction, reducing clicks and context switches.

- AI‑assisted troubleshooting: a multi‑agent assistant coordinates diagnostics and recommended actions at machine speed.

- Human escalation with context: when issues require human judgment, the system packages diagnostics, logs, and the AI’s reasoning for rapid handoff.

- A unified knowledge and data foundation: an ingestion and classification pipeline that converts years of customer interactions into reusable content, tagged by outcome (defect, doc gap, configuration issue).

- Measured business impact: Cisco reports weekly engagement at scale (more than 200,000 unique users across 15,000 unique customers) and a 15–20% reduction in mean time to resolution (MTTR).

Architecture and the unified data foundation

Tron: single source of truth (as described)

At the heart of the system Kale describes is a unified ingestion layer called Tron. Tron’s role, per the podcast, is to act as a canonical data plane: ingest telemetry and support interactions at scale, categorize interactions into outcomes (defect, documentation gap, configuration issue), and make those outcomes queryable for downstream agent workflows and content generation.

Independent artifacts in Cisco’s product documentation and adapter references show internal services and API endpoints that use the name “tron” in telemetry and adapter contexts, indicating this isn’t merely a podcast soundbite but plausibly an internal platform component used in Cisco cross‑product stacks. That said, Tron, as an enterprise single source of truth for support telemetry, is principally documented in the podcast; Cisco’s public product literature references analogous data‑foundation functions in other products but does not publish full architecture details for Tron specifically. Treat the podcast description as the most direct public account to date.
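To make the outcome taxonomy concrete, here is a minimal Python sketch of the categorize-then-query pattern the podcast attributes to Tron. Everything in it is hypothetical: Cisco has not published Tron's schema or classifier, so the `Outcome` labels simply mirror the three categories named in the podcast, `OutcomeIndex` is an invented in-memory stand-in for a queryable store, and a keyword rule list stands in for whatever classification model Cisco actually uses.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    # The three outcome categories named in the podcast, plus a fallback.
    DEFECT = "defect"
    DOC_GAP = "documentation_gap"
    CONFIG_ISSUE = "configuration_issue"
    UNCLASSIFIED = "unclassified"


@dataclass(frozen=True)
class Interaction:
    case_id: str
    product: str
    resolution_note: str


# Hypothetical keyword rules standing in for a trained classifier.
# Rules are checked in order; the first matching rule wins.
RULES = [
    (Outcome.DEFECT, ("bug", "crash", "patch")),
    (Outcome.DOC_GAP, ("docs unclear", "undocumented", "missing step")),
    (Outcome.CONFIG_ISSUE, ("misconfigured", "wrong setting", "config")),
]


def classify(interaction: Interaction) -> Outcome:
    note = interaction.resolution_note.lower()
    for outcome, keywords in RULES:
        if any(keyword in note for keyword in keywords):
            return outcome
    return Outcome.UNCLASSIFIED


class OutcomeIndex:
    """Hypothetical in-memory stand-in for a queryable outcome store."""

    def __init__(self) -> None:
        self._by_key = defaultdict(list)  # (product, Outcome) -> [case_id, ...]

    def ingest(self, interaction: Interaction) -> Outcome:
        outcome = classify(interaction)
        self._by_key[(interaction.product, outcome)].append(interaction.case_id)
        return outcome

    def query(self, product: str, outcome: Outcome) -> list:
        # .get avoids creating empty entries for keys that were never ingested.
        return list(self._by_key.get((product, outcome), []))


if __name__ == "__main__":
    index = OutcomeIndex()
    index.ingest(Interaction("C-1", "firewall", "Crash fixed by patch 2.1"))
    index.ingest(Interaction("C-2", "firewall", "Customer had misconfigured NAT"))
    print(index.query("firewall", Outcome.DEFECT))
```

Downstream agents or content authors would query by product and outcome; for example, a cluster of documentation-gap cases for one product is a signal to write or fix a knowledge article.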

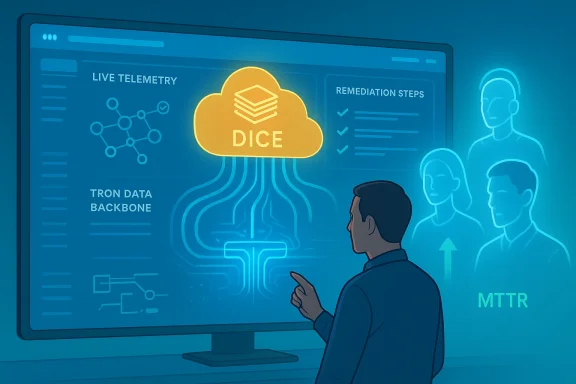

DICE: Digital Intellectual Capital Ecosystem

The podcast also introduces an operational knowledge layer called DICE (Digital Intellectual Capital Ecosystem). DICE is described as the distillation of institutional support knowledge: the reusable content, runbooks, and remediation playbooks derived from years of support operations. The stated design principle is “build once, deliver everywhere”: content authored or validated once in DICE can be pushed to in‑product guidance, chat assistants, human case notes, or documentation portals.

This pattern is a pragmatic response to a chronic problem: organizational knowledge often lives in multiple silos (tickets, internal wikis, KB articles, and patch notes) and loses effectiveness because it’s inconsistent or stale across channels. DICE, if realized, solves a real operational pain point by centralizing canonical answers and ensuring they are discoverable both by people and by automated agents.
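The “build once, deliver everywhere” principle can be sketched as a single canonical, versioned artifact rendered into channel-specific forms. This is an illustration under assumptions, not DICE's design: the `KnowledgeArtifact` fields, the channel names, and the 180-day review window are all hypothetical, and the staleness guard is an added assumption reflecting the content-governance concern rather than anything Cisco has described.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class KnowledgeArtifact:
    artifact_id: str
    version: int
    title: str
    steps: tuple
    last_reviewed: date


# Hypothetical governance policy: stale content must not propagate to any channel.
MAX_REVIEW_AGE = timedelta(days=180)


def render(artifact: KnowledgeArtifact, channel: str, today: date) -> str:
    """Render one canonical, versioned artifact for a specific delivery channel."""
    if today - artifact.last_reviewed > MAX_REVIEW_AGE:
        raise ValueError(f"{artifact.artifact_id} v{artifact.version} is overdue for review")
    tag = f"[{artifact.artifact_id} v{artifact.version}]"
    if channel == "in_product":
        # Checklist suited to an in-app guidance panel.
        return "\n".join([artifact.title, *(f"[ ] {step}" for step in artifact.steps)])
    if channel == "chat":
        numbered = " ".join(f"{i}. {step}" for i, step in enumerate(artifact.steps, start=1))
        return f"{artifact.title}: {numbered} {tag}"
    if channel == "case_note":
        # Case notes record which artifact version was applied, for traceability.
        return f"Applied {tag} {artifact.title}"
    raise ValueError(f"unknown channel: {channel}")
```

Because every channel renders from the same record, fixing a step or bumping the version updates all channels at once, and the review check blocks outdated content everywhere rather than in just one place.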

The three layers of support: how they work together

1) Proactive guidance

The first layer surfaces contextual guidance at the moment of friction. This is where the Digital Adoption Platform integration matters: instead of forcing the customer to search, the product surfaces the right remediation steps, checklists, or configuration validation. This reduces noise by narrowing content to what is relevant to that product instance and to the customer’s telemetry.

Benefits:

- Reduces initial support escalations.

- Drives self‑service completion for routine work.

- Improves customer perception of product helpfulness.

Risks:

- Overly aggressive guidance can interrupt workflows.

- Poorly targeted content could erode trust.
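Narrowing guidance to a specific product instance and its telemetry is essentially a matching problem. The sketch below assumes each guidance item declares the product versions it applies to and a telemetry condition that triggers it; the `GuidanceItem` structure, metric names, version strings, and thresholds are all hypothetical. Capping how many items are shown at once is one simple way to address the risk of overly aggressive guidance noted above.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Mapping

Telemetry = Mapping[str, float]


@dataclass(frozen=True)
class GuidanceItem:
    guidance_id: str
    versions: frozenset                     # product versions this item applies to
    trigger: Callable[[Telemetry], bool]    # telemetry condition that makes it relevant
    priority: int                           # lower number = more urgent


def select_guidance(items: Iterable[GuidanceItem], version: str,
                    telemetry: Telemetry, limit: int = 2) -> list:
    """Return IDs of applicable guidance, most urgent first, capped at `limit`."""
    matches = [item for item in items
               if version in item.versions and item.trigger(telemetry)]
    # Sort by priority, then ID, so the selection is stable for identical inputs.
    matches.sort(key=lambda item: (item.priority, item.guidance_id))
    return [item.guidance_id for item in matches[:limit]]


# Hypothetical catalog entries for illustration only.
CATALOG = [
    GuidanceItem("G-cpu", frozenset({"7.2", "7.4"}), lambda t: t.get("cpu_pct", 0) > 90, 1),
    GuidanceItem("G-cert", frozenset({"7.4"}), lambda t: t.get("cert_days_left", 999) < 14, 0),
    GuidanceItem("G-disk", frozenset({"7.2", "7.4"}), lambda t: t.get("disk_pct", 0) > 85, 2),
]
```

With high CPU, an expiring certificate, and a full disk on version 7.4, this returns only the two most urgent items, while an instance with healthy telemetry gets nothing at all.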

2) AI assistant: multi‑agent coordination

The middle layer is an AI assistant described as a multi‑agent system. Instead of a single chat model, this assistant coordinates multiple specialized agents (for telemetry analysis, log parsing, configuration validation, and remediation sequencing) to act like a rapid‑response task force.

Benefits:

- Parallelizes diagnostic steps.

- Speeds root‑cause narrowing by executing tool integrations.

- Composes a recommendation set instead of a single hypothesis.

Technical considerations:

- Grounding: agents must be reliably grounded in product telemetry and official documentation to avoid hallucinations.

- Tooling: safe tool invocation, authentication, rate limits, and audit trails are essential.

- Orchestration: an orchestration layer must resolve conflicting agent outputs and produce deterministic, auditable decisions.
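The coordination pattern described here (specialized agents running in parallel, with an orchestrator that reconciles their outputs deterministically) can be sketched with Python's standard `concurrent.futures`. The agents below are hypothetical stubs that return fixed hypotheses rather than calling models or tools, and the merge rule (sum per-hypothesis confidence across agents, break ties alphabetically) is one assumed way to make the ranking reproducible; it is not Cisco's orchestration logic.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Finding = tuple  # (hypothesis: str, agent confidence in [0, 1]: float)
Agent = Callable[[dict], list]


# Hypothetical specialized agents; real ones would invoke telemetry, log, or config tools.
def telemetry_agent(ctx: dict) -> list:
    return [("interface_flapping", 0.6)] if ctx.get("link_drops", 0) > 3 else []


def log_agent(ctx: dict) -> list:
    if "LINK_DOWN" in ctx.get("logs", ""):
        return [("interface_flapping", 0.3), ("bad_transceiver", 0.4)]
    return []


def config_agent(ctx: dict) -> list:
    return [("duplex_mismatch", 0.5)] if ctx.get("duplex") == "half" else []


def orchestrate(agents: list, ctx: dict) -> list:
    """Run agents in parallel, then merge their findings into a deterministic ranking."""
    with ThreadPoolExecutor(max_workers=max(1, len(agents))) as pool:
        # pool.map returns results in input order, so the merge below is order-stable.
        results = list(pool.map(lambda agent: agent(ctx), agents))
    scores = defaultdict(float)
    for findings in results:
        for hypothesis, confidence in findings:
            scores[hypothesis] += confidence  # agreement across agents raises the combined score
    ranked = [(hypothesis, round(score, 3)) for hypothesis, score in scores.items()]
    # Highest combined score first; ties broken by name so identical inputs give identical output.
    return sorted(ranked, key=lambda item: (-item[1], item[0]))
```

Summing confidences rewards hypotheses that several independent agents agree on, which is why `interface_flapping` outranks the single-agent hypotheses in a scenario with link drops and `LINK_DOWN` logs. A production orchestrator would more likely weight agents by their track record and record which agent contributed each finding.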

3) Human escalation: human‑in‑the‑loop as a first‑class feature

The top layer treats escalation as a product capability. When human judgment is required, particularly in security incidents, the system packages the AI’s work product: the steps taken, relevant logs, hypothesis ranking, and suggested remediation. The benefits here are twofold: engineers get a curated context bundle that reduces investigative toil, and the customer gets faster, better‑informed human assistance.

This is also where auditability and safety controls must be strongest: every AI recommendation should be logged, traceable to its data sources, and revocable. Cisco emphasizes this point in the podcast, and the theme is consistent with best‑practice guidance for AI systems deployed in security or mission‑critical support.
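A context bundle of this kind is easy to picture as a structured, serializable record. The sketch below is hypothetical: the `EscalationBundle` field names are assumptions chosen to mirror the contents the podcast lists (steps taken, relevant logs, hypothesis ranking, suggested remediation), with added source references and a SHA-256 content digest so a reviewer can check that the bundle has not changed since handoff.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass
class EscalationBundle:
    case_id: str
    steps_taken: list
    log_excerpts: list
    ranked_hypotheses: list            # [(hypothesis, score), ...]
    suggested_remediation: str
    sources: list = field(default_factory=list)  # telemetry or KB references backing the bundle

    def to_json(self) -> str:
        # sort_keys gives a stable serialization, so the digest is reproducible.
        return json.dumps(asdict(self), sort_keys=True)

    def digest(self) -> str:
        """SHA-256 over the serialized bundle; any later edit changes this value."""
        return hashlib.sha256(self.to_json().encode("utf-8")).hexdigest()
```

Recording the digest at handoff time gives the human engineer (and later an auditor) a cheap way to confirm they are looking at exactly what the AI produced.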

Implementation scale and claimed outcomes — what’s verifiable

The podcast reports striking adoption numbers: over 200,000 unique users and 15,000 unique customers engaging weekly with AI Support Fabric, and a 15–20% reduction in mean time to resolution (MTTR). Those are the headline metrics behind the investment case: engagement at product scale, measurable MTTR gains, and reusable knowledge leverage.

Verifiability note: the user counts and MTTR reduction figures come directly from the Cisco engineer interviewed on the Cloud Wars AI Agent & Copilot Podcast. They are credible as first‑party reporting but, as of this article’s publication, have not been independently confirmed in Cisco’s public product pages or third‑party case studies. Readers should treat the figures as Cisco’s operational claims pending corroborating case studies or whitepapers.

Supporting context from public sources:

- Cisco products have long included features that promise proactive monitoring, anomaly detection, and tools to shorten MTTR. Nexus Dashboard Insights is one public example where Cisco explicitly claims MTTR improvements from analytics and automated root‑cause workflows. This shows institutional competence and product continuity between observability and support functions.

- The DAP approach (digital adoption, in‑product guidance) is a widely adopted product pattern across enterprise apps, showing the general soundness of surfacing contextual help inside applications.

Critical analysis: strengths

- Putting support in context is practical and measurable. Reducing cognitive load by surfacing the right content in the product is low friction and aligns with product‑led support strategies that demonstrably lower ticket volume and time‑to‑value for customers.

- Centralized knowledge plus AI multiplies institutional learning. The DICE idea — distilling historic support interactions into reusable outcomes — enables knowledge to be leveraged by agents and people alike, improving consistency and reducing duplicated authoring effort.

- Multi‑agent design fits complex troubleshooting. Complex networking and security problems rarely have a single‑step solution. A coordinated agent approach that runs diagnostics in parallel and composes a ranked list of remediation steps can save significant time versus manual, sequential investigation.

- Human‑in‑the‑loop is respected and engineered. Making escalation a first‑class feature — with curated diagnostic bundles and audit trails — is sensible for security products and lowers operational risk.

- Potential for proactive remediation at scale. The podcast’s zero‑day vulnerability example — where targeted remediation content was pushed to affected customers — showcases how the system can move support from reactive to preventative outcomes.

Critical analysis: risks, gaps, and unanswered questions

- Model grounding and hallucinations. Any system that synthesizes recommendations from LLMs or generative models must rigorously ground suggestions in verified telemetry and product documentation. The podcast references grounding via Tron and DICE, but the technical safeguards (e.g., evidence attribution, model confidence thresholds, verification checks) need public scrutiny.

- Data residency, privacy, and compliance. Embedding telemetry and potentially sensitive logs into an AI pipeline raises questions about data residency, export controls, and customer consent. Enterprises in regulated sectors will demand clear controls for what data is used for model training versus ephemeral inference.

- Auditability and explainability. For security incidents and compliance cases, organizations need deterministic, explainable decisions. The claim that escalation bundles include AI reasoning is good, but auditors will ask for full traceability — which requires careful design of immutable logs, provenance metadata, and retention policies.

- Operational complexity and cost. Running a multi‑agent system with an ingestion pipeline at scale is nontrivial: compute, storage, model updates, and ongoing quality engineering are costly. ROI will depend on managing model lifecycle costs and proving sustained MTTR benefits.

- Content quality and stale knowledge. The “build once, deliver everywhere” model works only if content governance is strong. Reusable remediation can become a single point of failure if outdated content propagates across channels. Editorial review processes and versioning are essential.

- Vendor lock‑in and portability. Customers embedding Cisco’s in‑product assistance risk becoming tied to Cisco’s support fabric for both content and telemetry integration. Enterprises will want clear exit strategies, exportable knowledge artifacts, and interoperability with third‑party support platforms.

- Security of the AI agents themselves. Agents that execute diagnostics or suggest remediation must be hardened against adversarial inputs, prompt injection, and unauthorized tool invocation — particularly when they run in environments handling security telemetry.
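The grounding safeguard raised in the first point can be made concrete with a gate that runs before any recommendation reaches a user. This is a minimal sketch under assumptions: the `Recommendation` type, the evidence identifier format, and the 0.7 confidence floor are hypothetical, and a real system would also need to verify that cited evidence actually supports the claim rather than merely checking that it exists.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    text: str
    confidence: float
    evidence_ids: tuple  # telemetry records or KB articles the recommendation cites


def gate(rec: Recommendation, known_evidence: set, min_confidence: float = 0.7) -> tuple:
    """Return (allowed, reason). Blocks unsupported or low-confidence output."""
    if not rec.evidence_ids:
        return False, "no evidence cited"
    missing = [e for e in rec.evidence_ids if e not in known_evidence]
    if missing:
        # Citations that do not resolve to real records may indicate a hallucinated source.
        return False, f"unknown evidence: {', '.join(missing)}"
    if rec.confidence < min_confidence:
        return False, "below confidence threshold; route to human review"
    return True, "ok"
```

Returning a reason alongside the decision matters for auditability: a blocked recommendation can be logged with why it was blocked, and low-confidence cases can be routed to the human-escalation path instead of being silently dropped.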

Practical implications for IT leaders and product teams

If you’re evaluating or planning to deploy an in‑product AI support capability, consider the following checklist:

Data governance:

- Define what telemetry is allowed into the AI pipeline and what must remain on‑premises.

- Identify regulatory constraints and encryption/retention policies.

Model and content governance:

- Institute authoring workflows with version control for remediation content.

- Require human sign‑off and periodic reviews for critical remediation playbooks.

Safety and escalation design:

- Enforce explicit confirmation thresholds before automated remediation or configuration changes.

- Build clear, auditable escalation paths with packaged context for humans.

Observability and measurement:

- Instrument outcomes to continuously measure MTTR, ticket deflection, and customer satisfaction.

- Run controlled pilots and A/B tests to quantify lift and detect regressions.

Portability and vendor risk:

- Ensure knowledge artifacts are exportable in standard formats.

- Demand SLAs and clear documentation of data flows from vendors.
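The first data-governance item, deciding what telemetry may enter the AI pipeline, is often implemented as an allowlist plus a redaction step at the ingestion boundary. The sketch below assumes hypothetical field names and a simple IPv4 pattern; production redaction would need much broader coverage (IPv6, hostnames, credentials, customer identifiers) and review by security and legal teams.

```python
import re

# Hypothetical policy: only these fields may leave the customer environment.
ALLOWED_FIELDS = {"product", "version", "error_code", "cpu_pct", "log_line"}
# Free-text fields that may contain addresses and must be masked before export.
FREE_TEXT_FIELDS = ("log_line",)
IPV4 = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")


def sanitize(record: dict) -> dict:
    """Drop fields outside the allowlist, then mask IPv4 addresses in free text."""
    clean = {key: value for key, value in record.items() if key in ALLOWED_FIELDS}
    for key in FREE_TEXT_FIELDS:
        if key in clean:
            clean[key] = IPV4.sub("<ip>", str(clean[key]))
    return clean
```

An allowlist fails closed: a new telemetry field added by a product team is excluded until someone deliberately approves it, which is usually the safer default for regulated customers.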

ROI: how to frame the business case

The podcast frames ROI across three dimensions, which is a useful template for buyers:

- Resolution speed: measure reductions in handoff time, diagnostic loops, and overall MTTR.

- Knowledge leverage: estimate the multiplier effect of turning a single engineer’s solution into a reusable playbook that prevents repeated manual work.

- Shift from reactive to proactive: quantify incidents or outages prevented by proactive remediation and early detection.

To build the case in practice:

- Start with a narrow, measurable pilot that targets a well-defined class of incidents.

- Instrument baseline metrics for MTTR, ticket volume, and customer satisfaction.

- Run a time‑boxed pilot and compute incremental improvements; then extrapolate conservatively.
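The arithmetic behind “compute incremental improvements; then extrapolate conservatively” is simple but worth making explicit. The sketch below uses made-up resolution times purely for illustration (they are not Cisco data). It compares mean time to resolution between a baseline period and a pilot, then applies a hypothetical `haircut` factor before extrapolating, so the business case does not assume the pilot's lift carries over in full.

```python
from statistics import mean


def mttr_reduction(baseline_hours: list, pilot_hours: list) -> float:
    """Fractional MTTR reduction: (baseline MTTR - pilot MTTR) / baseline MTTR."""
    base, pilot = mean(baseline_hours), mean(pilot_hours)
    return (base - pilot) / base


def annual_hours_saved(reduction: float, baseline_mttr: float,
                       incidents_per_year: int, haircut: float = 0.5) -> float:
    """Extrapolate conservatively by keeping only a fraction (`haircut`) of the observed lift."""
    return reduction * haircut * baseline_mttr * incidents_per_year


# Illustrative hours per incident, not real data.
baseline = [10.0, 12.0, 8.0, 10.0]   # mean 10.0 h
pilot = [8.0, 9.0, 7.0, 8.0]         # mean 8.0 h
r = mttr_reduction(baseline, pilot)
print(f"MTTR reduction: {r:.0%}")                                  # prints: MTTR reduction: 20%
print(annual_hours_saved(r, mean(baseline), incidents_per_year=1000))  # prints: 1000.0
```

Halving the observed lift is an arbitrary but defensible starting point; the right haircut depends on how representative the pilot's incident class is of the wider estate.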

Security and compliance considerations (special focus)

Security products demand special caution because automated guidance may influence firewall rules, patching behavior, or zero‑day responses. The design principles to insist upon are:

- Immutable audit trails for every AI recommendation and subsequent human action.

- Role‑based approvals for any action that changes network or security posture.

- Separation of duties so an automated agent cannot both identify a vulnerability and apply a high‑impact remediation without human oversight.

- Provenance metadata to trace each recommendation back to raw telemetry and supporting documentation.
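Two of these principles, tamper-evident audit trails and separation of duties, can be sketched together. The example below is an illustration under assumptions, not a Cisco mechanism: it chains each audit entry to the previous one with a SHA-256 hash so that any later edit is detectable, and it refuses a high-impact action unless a human principal other than the proposing agent approved it. The actor naming scheme (`agent:` prefix) and the `high_impact` flag are hypothetical. Note that hash chaining makes tampering evident rather than impossible; true immutability still requires write-once storage.

```python
import hashlib
import json
from typing import Optional

GENESIS = "0" * 64


def _hash(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log where each entry commits to the previous entry's hash."""

    def __init__(self) -> None:
        self.entries = []

    def append(self, actor: str, action: str, evidence: list) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"actor": actor, "action": action, "evidence": evidence, "prev": prev}
        digest = _hash(body)
        self.entries.append({**body, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("actor", "action", "evidence", "prev")}
            if entry["prev"] != prev or entry["hash"] != _hash(body):
                return False
            prev = entry["hash"]
        return True


def apply_remediation(log: AuditLog, proposer: str, approver: Optional[str],
                      action: str, high_impact: bool, evidence: list) -> bool:
    # Separation of duties: high-impact changes need a distinct, human approver.
    if high_impact and (approver is None or approver == proposer or approver.startswith("agent:")):
        log.append(proposer, f"BLOCKED {action}", evidence)
        return False
    log.append(approver or proposer, f"APPLIED {action}", evidence)
    return True
```

Passing the evidence list into every log entry doubles as lightweight provenance: each applied or blocked action records the telemetry and knowledge references it relied on.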

Technical and organizational adoption challenges

- Integration engineering: Instrumenting product telemetry into a central ingestion layer (Tron, per the podcast) requires engineering effort and often changes to observability and logging pipelines.

- Change management: Product teams, support engineers, and customers must be trained to trust and use in‑product guidance without overriding it reflexively.

- Continuous improvement: The knowledge backbone must be fed with new cases and audited regularly; this requires a defined process for triaging new cases and updating content.

- Cross‑team coordination: Product, support, security, and legal teams must collaborate early to set guardrails and SLAs.

Recommendations — how to approach AI‑first support programs

- Begin with a high‑ROI use case: look for repetitive, well‑defined incidents where guided remediation can eliminate manual steps.

- Design for auditability from day one: logs, provenance, and human approval flows must be built in.

- Focus on grounding: make sure every recommendation points to the telemetry and specific knowledge asset that supports it.

- Pilot with a small cohort and measure rigorously: track MTTR, ticket volume, resolution quality, and customer satisfaction.

- Govern content centrally: implement versioning and author review workflows for DICE‑style knowledge artifacts.

- Protect sensitive data: isolate what goes to cloud inference services versus on‑prem inference and ensure encryption and residency controls.

Conclusion

Cisco’s AI Support Fabric, as presented by Nik Kale on the AI Agent & Copilot Podcast, is a compelling articulation of the next stage of product support: in‑product, AI‑assisted, and human‑validated. The architecture addresses real operational problems by centralizing knowledge and automating diagnostic choreography while preserving human judgment where it matters. The idea of Tron + DICE + multi‑agent orchestration maps cleanly onto known enterprise patterns (data foundations, digital adoption, and agent orchestration), and Cisco’s broader product portfolio already demonstrates comparable investments in proactive monitoring and MTTR reduction.

At the same time, the practical success of such platforms hinges on disciplined governance: grounding model outputs in verified telemetry, protecting data privacy, maintaining content quality, and preserving auditability for security actions. The headline metrics reported in the podcast (user counts and MTTR improvements) provide an encouraging signal, but buyers and engineers should require concrete case studies and independent audits before using such systems to automate high‑impact or security‑sensitive remediation. When combined with strict safety controls and a phased adoption plan, AI‑enabled, in‑product support can deliver measurable operational savings and a significantly smoother customer experience, but it will only do so if built with the same rigor we expect from the systems it aims to heal.
