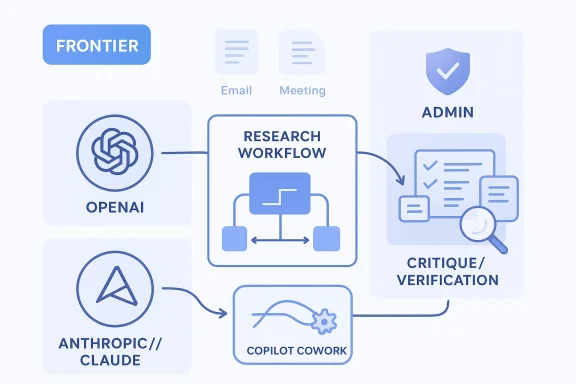

Microsoft’s latest push to make M365 Copilot Researcher smarter is really a bet on multi-model intelligence—and it may be the clearest sign yet that enterprise AI is moving beyond the single-model era. According to Microsoft’s own recent announcements, the company is now blending OpenAI and Anthropic capabilities inside Microsoft 365 Copilot, with Claude available in mainline Copilot Chat via the Frontier program and with Copilot Cowork bringing long-running, multi-step task execution into the product family. The direction is obvious: Microsoft wants AI that not only answers faster, but also checks itself before it speaks.

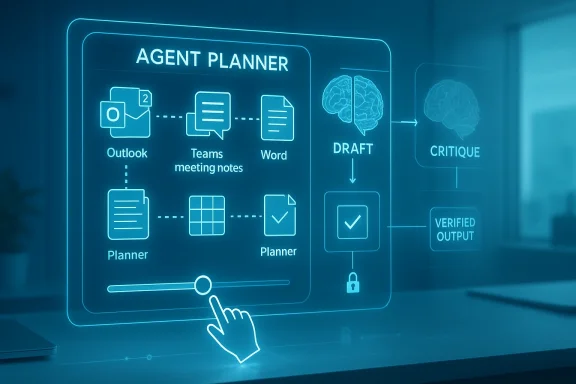

The immediate significance is twofold. First, Microsoft is turning Researcher into a more disciplined research workflow by adding a second model layer for critique, validation, and quality control. Second, the company is making a public case that the future of work AI will be built from a portfolio of models, not a single flagship system. That matters because it changes how enterprises think about accuracy, trust, governance, and vendor dependency all at once.

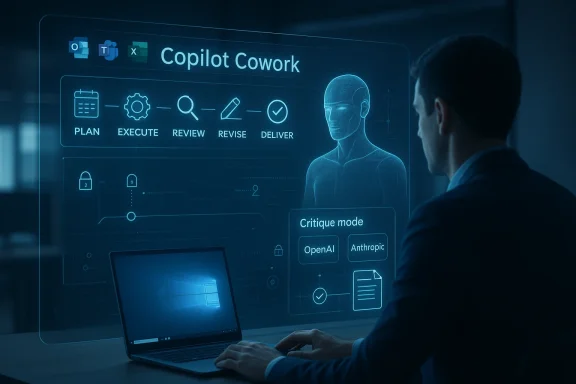

Source: TechRadar Microsoft and OpenAI are making AI research tools smarter to answer the trickiest questions

Overview

Microsoft introduced Researcher and Analyst in March 2025 as first-of-their-kind reasoning agents for Microsoft 365 Copilot, designed to work across emails, meetings, files, chats, and the web. In that first version, Researcher combined OpenAI’s deep research model with Microsoft 365 Copilot’s orchestration and search stack, positioning it as an answer engine for more complex, multi-step work. The company’s message then was that AI could move from chatty assistance to actual knowledge work.

What has changed since then is the architecture of trust. Microsoft has spent the last year broadening model choice across Copilot Studio and Microsoft 365 Copilot, while adding Anthropic models to its enterprise stack and emphasizing that the right model should be chosen for the right job. That includes support for Claude in Researcher, model selection in Copilot Studio, and broader “multi-model” language in Microsoft’s newest frontier messaging.

The result is a new competitive framing. Instead of asking whether OpenAI or Anthropic “wins,” Microsoft is asking whether a workflow can be composed from the best parts of each model. That is a subtle but important shift, because enterprise buyers generally care less about brand loyalty in the model layer than they do about quality, security, and operational reliability.

Microsoft’s Frontier program is central to this strategy. Frontier is Microsoft’s early-access space for new AI features in Microsoft 365, letting eligible users try experimental agents before general availability. In practical terms, that gives Microsoft a controlled way to test model behavior, collect feedback, and stage rollouts without forcing every customer into the same pace of change.

The TechRadar report’s framing of “multi-model agents checking each other” fits squarely inside Microsoft’s published roadmap, even if some of the benchmark claims in that article are not directly verifiable from Microsoft’s public material alone. What Microsoft has confirmed is the broader strategy: OpenAI remains foundational, Anthropic is now part of the stack, and Researcher can use Claude within a session and then revert to the default model once that session ends.

Why this matters now

The enterprise AI market has spent the last two years debating whether one strong general-purpose model is enough. Microsoft’s answer is increasingly no, at least not for work that requires citation quality, multi-step reasoning, or policy-sensitive outcomes. That is especially important in document-heavy environments, where bad synthesis can be more damaging than a simple factual error.

For Microsoft, the strategic value is that model diversity reduces the risk of overcommitting to any one frontier system. For customers, it creates a chance to pair models with different strengths, but it also raises the bar for governance. The more models that touch a workflow, the more important it becomes to know which one made which decision.

From Single-Model Assistants to Multi-Model Workflows

Microsoft’s new direction reflects a broader industry realization: complex work is rarely a one-shot prompt. A research agent may need to gather, summarize, cross-check, and refine information before producing something worthy of business use. That makes a chain of responsibility more valuable than a single model speaking with confidence.

The multi-model idea is compelling because it mirrors how human teams operate. One person drafts, another reviews, and a third verifies facts or raises edge cases. In AI terms, Microsoft is trying to encode that same workflow by having one system generate and another critique. That is not just a product feature; it is a design philosophy.
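To make the draft-and-review idea concrete, here is a minimal Python sketch. It is not Microsoft’s implementation. `generate` and `critique` are hypothetical stand-ins for calls to two different models, and the toy functions exist only so the example runs. The loop keeps revising until the critic has no objections or a round limit is reached. It records which model handled each stage and returns any surviving issues instead of hiding them.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple

# Hypothetical model interfaces; these are stand-ins, not a real Copilot API.
# A generator receives the question plus optional reviewer feedback.
Generator = Callable[[str, Optional[List[str]]], str]
# A critic returns a list of issues; an empty list means "no objections".
Critic = Callable[[str], List[str]]


@dataclass
class ReviewedAnswer:
    text: str
    unresolved_issues: List[str]
    # Provenance: (model name, stage, note) for every step, in order.
    trail: List[Tuple[str, str, str]] = field(default_factory=list)


def draft_and_review(question: str, generate: Generator, critique: Critic,
                     generator_name: str = "model-a",
                     critic_name: str = "model-b",
                     max_rounds: int = 2) -> ReviewedAnswer:
    """One model drafts, a second critiques, and the draft is revised up to
    max_rounds times. Issues that survive are returned, not silently dropped."""
    trail: List[Tuple[str, str, str]] = []
    draft = generate(question, None)
    trail.append((generator_name, "draft", "initial draft"))
    issues = critique(draft)
    trail.append((critic_name, "critique", f"{len(issues)} issue(s)"))
    rounds = 0
    while issues and rounds < max_rounds:
        rounds += 1
        draft = generate(question, issues)
        trail.append((generator_name, "revise", f"round {rounds}"))
        issues = critique(draft)
        trail.append((critic_name, "critique", f"{len(issues)} issue(s)"))
    return ReviewedAnswer(text=draft, unresolved_issues=issues, trail=trail)


# Toy stand-ins so the sketch runs end to end.
def toy_generate(question: str, feedback: Optional[List[str]]) -> str:
    # Adds a source only after the critic has asked for one.
    if feedback:
        return "Revenue grew 12% [source: Q3 report]"
    return "Revenue grew 12%"


def toy_critique(draft: str) -> List[str]:
    return [] if "[source:" in draft else ["claim has no source"]


if __name__ == "__main__":
    result = draft_and_review("How did revenue change?", toy_generate, toy_critique)
    print(result.text)  # Revenue grew 12% [source: Q3 report]
    for step in result.trail:
        print(step)
```

The round limit is a deliberate design choice. If the two models keep disagreeing, the sketch hands the unresolved issues back to a person instead of looping indefinitely.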

Why orchestration matters

Orchestration is where enterprise AI becomes operational rather than experimental. Microsoft has repeatedly emphasized that Researcher depends on Copilot’s deep search and task orchestration, while Copilot Studio now supports multiple model families for specialized tasks. In other words, the model is only one part of the system; the workflow design around it is what creates value.

That matters because many AI failures are not “model failures” in a narrow sense. They are workflow failures: wrong source selection, shallow verification, poor context retention, or a lack of quality gates. A critique pass can help catch some of those issues, but only if the system is designed to expose uncertainty rather than hide it. That is the real test.

- Multi-model orchestration can improve output quality.

- Critique layers may reduce unsupported claims.

- Task delegation can lower the burden on users.

- Governance becomes more complex as workflows span more models.

- The best system may not be the biggest model, but the best chain.

A more human-like division of labor

The clearest benefit of this approach is specialization. One model can be optimized for retrieval-heavy synthesis, another for long-context review, and another for final presentation. Microsoft’s product direction suggests it sees the research agent as a team rather than a solo performer.

That aligns with Microsoft’s broader “Frontier” framing, where agents are meant to complete tasks across time rather than merely answer questions in the moment. The company is no longer just selling a copilot; it is selling a layered workflow fabric.
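At its simplest, specialization comes down to a routing decision for each stage. The sketch below assumes a plain lookup table. The stage names come from the paragraph above, but the model names and the fallback are placeholders, not real Copilot identifiers.

```python
from typing import Dict, List, Tuple

# Hypothetical stage-to-model table. The names are placeholders for whatever
# models a platform exposes; they are not real Copilot model identifiers.
STAGE_ROUTES: Dict[str, str] = {
    "synthesis": "retrieval-tuned-model",
    "review": "long-context-model",
    "presentation": "writing-model",
}


def route(stage: str, routes: Dict[str, str] = STAGE_ROUTES,
          fallback: str = "default-model") -> str:
    """Return the model assigned to a stage, or the fallback if none is set."""
    return routes.get(stage, fallback)


def plan(stages: List[str],
         routes: Dict[str, str] = STAGE_ROUTES) -> List[Tuple[str, str]]:
    """Pair each stage with its model, in order, so the plan can be logged."""
    return [(stage, route(stage, routes)) for stage in stages]


if __name__ == "__main__":
    for stage, model in plan(["synthesis", "review", "presentation", "translation"]):
        print(f"{stage:>12} -> {model}")
```

Keeping the routes in data rather than code makes the plan easy to log, which matters once several models share a single workflow.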

Researcher’s Evolution Inside Microsoft 365 Copilot

Researcher has always been one of the most ambitious parts of Microsoft 365 Copilot because it reaches beyond casual assistance into serious synthesis. Microsoft originally described it as a reasoning agent that could combine secure access to work data with web research to deliver highly skilled expertise on demand. That positioning was important because it framed Copilot not as a chatbot, but as a work-relevant analyst.

The new step is not simply “better answers.” It is better supervised answers. Microsoft’s documentation now shows Claude available in Researcher sessions, with admins able to control access and with the system reverting to the default model after the session ends. That suggests Microsoft is treating Claude as an opt-in specialist rather than a wholesale replacement.

How the Claude option changes the product

There is a strategic reason Microsoft chose to expose model choice in Researcher before many other surfaces. Researcher is exactly where accuracy expectations are highest and where a second-pass critique can have the most visible value. If users trust a research agent on difficult questions, they are more likely to trust the rest of the Copilot ecosystem.

There is also a competitive reason. Google, OpenAI, Perplexity, and others are all staking claims in the deep research category, but enterprise buyers care about more than benchmark bragging rights. They want sources, controllability, and fit with existing productivity software. Microsoft’s advantage is that it can surface research, review, and action inside the same Microsoft 365 environment.

- Researcher is moving from a single-model pattern to a model-choice pattern.

- Claude is being used as an optional session-level capability.

- Enterprise admins retain control over model access.

- The workflow is designed to fit Microsoft 365, not sit beside it.

- Microsoft is prioritizing trust and orchestration over novelty alone.

The importance of session boundaries

Microsoft’s session-based Claude support is easy to overlook, but it matters a lot. By reverting to the default model after a session ends, Microsoft limits some of the operational ambiguity that can come from persistent model switching. That makes the feature easier to govern, audit, and explain to IT teams.

This is a classic enterprise compromise. Flexibility is welcome, but only if it doesn’t make the platform harder to secure. Microsoft seems to understand that the winner in enterprise AI may be the vendor that makes advanced model choice feel boringly manageable.
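Session-scoped model choice maps naturally onto a context manager. This is a hypothetical sketch: the `ModelSettings` class and the model names are illustrative and do not represent a Microsoft 365 API. An optional model can be used only if an admin has enabled it. The `finally` clause restores the default when the session ends, including when the session ends with an error.

```python
from contextlib import contextmanager
from typing import Iterable, Iterator


class ModelSettings:
    """Hypothetical per-user model settings, not a Microsoft 365 API."""

    def __init__(self, default_model: str, admin_enabled: Iterable[str]):
        self.default_model = default_model
        # Optional models an administrator has switched on for this tenant.
        self.admin_enabled = set(admin_enabled)
        self.active_model = default_model

    @contextmanager
    def session(self, requested_model: str) -> Iterator[str]:
        """Use requested_model for one session, then revert to the default."""
        if (requested_model != self.default_model
                and requested_model not in self.admin_enabled):
            raise PermissionError(f"{requested_model!r} has not been enabled by an admin")
        self.active_model = requested_model
        try:
            yield self.active_model
        finally:
            # Runs on normal exit and on errors, so the override never persists.
            self.active_model = self.default_model


if __name__ == "__main__":
    settings = ModelSettings("default-model", admin_enabled={"claude-model"})
    with settings.session("claude-model") as model:
        print("inside session:", model)
    print("after session:", settings.active_model)
```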

Copilot Cowork and the Move Toward Delegated Work

If Researcher is about better answers, Copilot Cowork is about better delegation. Microsoft says the feature is built in close collaboration with Anthropic and brings the technology behind Claude Cowork into Microsoft 365 Copilot to support long-running, multi-step tasks. That is a meaningful evolution because it moves the product from “help me think” toward “handle this workflow.”

This shift is important for a simple reason: enterprises do not buy software to chat with it. They buy software to reduce effort. A copilot that can help draft and review is useful; a copilot that can carry a task forward over time is potentially transformative.

From prompts to task execution

Microsoft has been saying for months that it wants Copilot to do more than answer one-off prompts. Its “Wave 3” messaging focuses on intelligence that understands the context of work, while Frontier provides early access to experimental agents and workflow capabilities. Copilot Cowork fits directly into that narrative.

In practical terms, this may be the more disruptive part of the announcement. Research agents get headlines, but delegated workflow execution is where ROI tends to emerge. If an AI assistant can manage multi-step work with fewer human interventions, organizations can save time in ways that are easier to measure and justify.

What users should expect

Even so, this is still frontier technology, not a mature autopilot. The benefits depend on the task being well-structured, the data being available, and the approval process being clear. Microsoft’s own Frontier framing signals that the company sees these experiences as experimental and subject to change.

That caution is healthy. In enterprise environments, an agent that is occasionally wrong is one thing; an agent that is wrong and persistent is something else entirely. The more work you hand over, the more important traceability becomes.

- Better for multi-step, repeated work.

- More aligned with how business processes actually run.

- Potentially higher ROI than pure chat features.

- Requires tighter governance and auditability.

- Still depends on user oversight and policy controls.
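Here is one way a delegated task with a clear approval process might be structured. `Step`, `run_task`, and the approval callback are hypothetical names used for illustration only. Sensitive steps wait for a yes, and a denial halts the run instead of letting the agent carry on. Every outcome is written to a log, which supports the traceability discussed above.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    name: str
    action: Callable[[], str]      # hypothetical unit of work; returns a status
    needs_approval: bool = False   # e.g. sending mail or changing shared files


def run_task(steps: List[Step], approve: Callable[[Step], bool]) -> List[str]:
    """Run steps in order. A step that needs approval runs only if approve()
    says yes; a denial halts the task instead of skipping ahead. Every outcome
    is logged so the run can be reviewed afterwards."""
    log: List[str] = []
    for step in steps:
        if step.needs_approval and not approve(step):
            log.append(f"{step.name}: halted, approval denied")
            break
        log.append(f"{step.name}: {step.action()}")
    return log


if __name__ == "__main__":
    steps = [
        Step("gather sources", lambda: "done"),
        Step("email summary to client", lambda: "sent", needs_approval=True),
        Step("file summary", lambda: "filed"),
    ]
    for line in run_task(steps, approve=lambda step: False):
        print(line)
```

Halting on a denial, rather than skipping the step and continuing, is the conservative choice. It prevents the agent from being wrong and persistent.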

What Microsoft Is Signaling to the Market

This announcement is about more than product updates. Microsoft is signaling that the AI market should expect composition rather than consolidation. The future, in Microsoft’s view, is not one model to rule them all, but a managed stack of models coordinated by a trusted work platform.

That is a subtle challenge to rivals. OpenAI may still power core capabilities, but Microsoft no longer behaves like a customer dependent on a single supplier. Anthropic is now a visible part of the Copilot story, and Microsoft has publicly described itself as “model-diverse by design.” That phrase is doing a lot of work.

Competitive implications for OpenAI and Anthropic

For OpenAI, the upside is continued platform reach inside Microsoft’s enterprise distribution machine. For Anthropic, the upside is broader enterprise exposure and a strong validation point inside one of the world’s most important productivity suites. For both, the downside is that Microsoft is increasingly the orchestrator, not the captive customer.

That could ultimately be good for buyers. Multi-model competition tends to reduce lock-in and push vendors to differentiate on actual strengths. But it also means customers need to think more carefully about data flows, policy boundaries, and how model outputs are routed across systems.

Why benchmarks matter, but only up to a point

TechRadar highlighted benchmark comparisons such as DRACO, but Microsoft’s public material does not fully expose the testing methodology behind every claim in that report. What Microsoft does have publicly is a growing body of research on deep research systems, citation reliability, and the difficulty of auditing such tools at scale. That research suggests the broader category is still hard to evaluate cleanly.

That is why the market should be careful about treating any single score as destiny. Benchmark wins are useful signals, but enterprise value depends on real workflows, not synthetic leaderboards. The hard part is making intelligence dependable every day.

- Microsoft is positioning itself as a multi-model platform.

- OpenAI remains central, but not exclusive.

- Anthropic gains enterprise legitimacy through Copilot.

- Competition may improve quality and reduce lock-in.

- Benchmarks are informative, but workflow reliability matters more.

Accuracy, Critique, and the Problem of Trust

The most interesting part of the story may be the critique layer itself. AI systems that review other AI systems are a logical response to the problem of hallucinations, but they are not magic. A second model can improve quality, yet it can also inherit blind spots, overconfidence, or shared training assumptions.

Microsoft’s own research on deep-research systems underscores the complexity of evaluation. In its LiveDRBench work, the company argued that deep research should be understood as broad, reasoning-intensive exploration and not merely the generation of long reports. That matters because it suggests the hard part is not prose length but evidence collection and claim formation.

What critique can do well

A critique pass is strongest when the failure mode is obvious: missing sources, weak logic, incomplete coverage, or factual inconsistency. It can also help standardize style and force a system to revisit weak assumptions before delivering the output. In that sense, critique is a quality-control mechanism more than a source of new intelligence.

It is also a psychologically useful feature. Users are more likely to trust a system that visibly checks itself than one that simply delivers a confident answer. That said, visible self-checking only helps if the checks are meaningful and not just ceremonial. A polished mistake is still a mistake.
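A missing source is the easiest failure to catch mechanically. The sketch below assumes a bracketed citation style such as `[1]` or `[source: ...]` and uses a naive sentence splitter. It flags sentences that carry no citation marker at all. It cannot confirm that a cited source actually supports the claim, and that limitation is the reason a check like this counts as quality control rather than new intelligence.

```python
import re
from typing import List

# Assumed citation style: "[1]" or "[source: ...]". Real systems vary.
CITATION = re.compile(r"\[(?:\d+|source:[^\]]+)\]")
# Naive sentence splitter: break after ., ! or ? followed by whitespace.
SENTENCE_END = re.compile(r"(?<=[.!?])\s+")


def uncited_sentences(draft: str) -> List[str]:
    """Return sentences that carry no citation marker at all.

    This only detects a missing marker; it cannot tell whether a cited source
    actually supports the sentence."""
    sentences = [s.strip() for s in SENTENCE_END.split(draft) if s.strip()]
    return [s for s in sentences if not CITATION.search(s)]


if __name__ == "__main__":
    draft = "Revenue grew 12% [1]. Costs fell. Margins rose [source: Q3 report]."
    print(uncited_sentences(draft))  # ['Costs fell.']
```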

Where critique can fail

The biggest risk is correlated error. If two models draw from similar assumptions, datasets, or retrieval patterns, they may agree for the wrong reasons. In that case, a critique layer becomes a confidence amplifier rather than a truth detector.

Another risk is user overreliance. If the system looks more rigorous, people may stop checking as carefully, especially in high-volume enterprise environments. Microsoft’s governance and admin controls will matter a lot here, because trust must be designed into the workflow rather than assumed from the branding.
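A back-of-the-envelope calculation makes the correlated-error point concrete. The function treats "model misses an error" as a yes/no event for each model. It returns the probability that both the drafting model and the reviewing model miss the same error. The miss rates and correlations below are illustrative assumptions, not measurements of any Copilot model. Suppose each model misses 20% of errors. With independent blind spots, 4% of errors get past both models. With a correlation of 0.5, 12% get past both. With fully shared blind spots, the full 20% get past both, so the review catches nothing extra.

```python
import math


def joint_miss_rate(p1: float, p2: float, rho: float) -> float:
    """Probability that a drafting model AND a reviewing model both miss the
    same error, given each one's miss rate and the correlation of their misses.

    For two Bernoulli events, P(both) = p1*p2 + rho*sqrt(p1(1-p1)p2(1-p2)).
    rho = 0 means independent blind spots; rho = 1 means fully shared ones.
    """
    if not (0.0 <= p1 <= 1.0 and 0.0 <= p2 <= 1.0):
        raise ValueError("miss rates must lie in [0, 1]")
    if not -1.0 <= rho <= 1.0:
        raise ValueError("correlation must lie in [-1, 1]")
    joint = p1 * p2 + rho * math.sqrt(p1 * (1 - p1) * p2 * (1 - p2))
    # Not every (p1, p2, rho) triple describes real events; reject impossible ones.
    lower, upper = max(0.0, p1 + p2 - 1.0), min(p1, p2)
    if not (lower - 1e-12 <= joint <= upper + 1e-12):
        raise ValueError("this correlation is infeasible for these miss rates")
    return joint


if __name__ == "__main__":
    for rho in (0.0, 0.5, 1.0):
        print(f"rho={rho}: {joint_miss_rate(0.2, 0.2, rho):.2f} of errors slip past both")
```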

- Critique improves surface quality, but not infallibility.

- Shared blind spots can survive multi-model review.

- Users may over-trust polished outputs.

- Governance controls are essential.

- The best outcomes still depend on human oversight.

The enterprise trust stack

Microsoft’s broader agent strategy shows it understands trust as a stack, not a checkbox. Frontier provides early access, admin controls shape rollout, and Agent 365 is being positioned as the control plane for AI agents. That architecture suggests Microsoft knows the hard problem is not model access; it is lifecycle management.

This is also where Microsoft has an advantage over pure-play AI startups. It already owns the productivity layer, the identity layer, and much of the compliance surface area that enterprises care about. If it can make agent governance feel native, that may matter more than any single benchmark gain.

Enterprise vs. Consumer Impact

For enterprise customers, the new Researcher and Copilot Cowork direction could be genuinely useful, especially in legal, finance, consulting, research, and operations teams. These are environments where the value of AI depends on source quality, workflow consistency, and the ability to reuse existing Microsoft 365 data securely. Microsoft’s own materials repeatedly tie Copilot to emails, files, meetings, chats, and organizational knowledge.

For consumers and smaller teams, the story is slightly different. They are more likely to value speed and simplicity than policy controls and admin governance, but the same multi-model foundation may still improve answer quality. The biggest consumer benefit will likely be better research synthesis and more capable task completion in familiar apps.

The business buyer’s lens

Enterprise buyers will ask whether the multi-model layer improves outcomes, not just demos. They will care about latency, cost, audit trails, access controls, and whether the agent can consistently cite reliable sources. Those questions will matter more than whether the system sounds smarter in a launch video.

Microsoft is clearly trying to answer those concerns ahead of time by emphasizing phased rollout, admin control, and the Frontier program. That is the right instinct. Enterprise AI adoption usually fails when product ambition outruns operational maturity.

The consumer-quality question

Consumers, on the other hand, may not notice the model plumbing at all. What they will notice is whether research answers are more complete, whether outputs need fewer edits, and whether the assistant actually helps finish work. In that sense, the success metric is not model diversity itself, but perceived usefulness.

- Enterprise users need governance and traceability.

- Consumer users care more about ease and usefulness.

- Microsoft can differentiate through native Microsoft 365 integration.

- Multi-model depth may be invisible until something goes wrong.

- Successful rollout depends on confidence, not just capability.

Strengths and Opportunities

Microsoft’s approach has several genuine strengths. It is rare to see a major platform company openly embrace model diversity instead of pretending one architecture should serve every need. That gives Microsoft flexibility, makes the platform more resilient, and creates room for specialized quality gains in difficult workflows.

It also gives Microsoft a strong story for regulated and enterprise-heavy markets. By combining Frontier access, admin controls, and session-scoped model choice, the company can argue that it is innovating without abandoning governance. That balance is likely to be attractive to IT teams.

- Model diversity reduces single-vendor dependency.

- Critique layers can improve answer quality.

- Copilot Cowork extends AI from answering to doing.

- Frontier offers a safe-ish way to test new features.

- Microsoft 365 integration keeps workflows in one place.

- Admin controls help with enterprise governance.

- Anthropic support increases competitive leverage and choice.

A better fit for complex knowledge work

The biggest opportunity is in work that demands synthesis rather than recall. Research, briefing, analysis, and workflow execution are exactly where Microsoft wants Copilot to live. If it succeeds, it could define the next phase of productivity software.

That is why this is more than a feature update. It is a statement about what modern productivity software should be: less chat, more collaboration; less single-answer prompting, more managed reasoning. That is a meaningful product philosophy.

Risks and Concerns

The risks are just as real as the opportunities. Multi-model systems can be more accurate, but they can also become more opaque, especially when users do not know which model handled which stage of a task. Once the workflow gets complicated, troubleshooting and accountability get complicated too.

There is also a reputational risk. If Microsoft markets the system as highly reliable and users encounter confident but wrong outputs, trust could erode quickly. In enterprise software, trust is sticky when things work and very fragile when they don’t.

- Model hallucinations can still slip through critique.

- Shared blind spots may survive multi-model review.

- Governance complexity rises with every added model.

- Admin misconfiguration could limit safe rollout.

- Overpromising benchmarks can backfire if real-world results lag.

- Latency and cost may increase with multi-step review.

- User overreliance could reduce independent checking.

The hidden cost of sophistication

More sophistication usually means more moving parts. That can be fine in a lab, but at enterprise scale it creates support, audit, and compliance burdens. Microsoft’s architecture may be right, but the operational overhead will still need to be justified by measurable gains.

There is also a market risk that the industry becomes fixated on model choreography while underinvesting in better information grounding. If retrieval is weak, multiple models will simply debate weak evidence faster. That is why Microsoft’s own research on deep-research systems is so important: it points to the underlying problem rather than just the product wrapper.

Looking Ahead

The next phase will be about proving that this architecture works outside of controlled previews. Microsoft has already signaled that Frontier access, Claude support, and new Wave 3 experiences are rolling out in stages, which means the real test will be customer adoption, not launch-day enthusiasm. If users consistently see better research quality and smoother task completion, the strategy will gain momentum quickly.

What to watch next is whether Microsoft can preserve simplicity while adding power. That is the central tension in enterprise AI right now. Users want better answers and more automation, but they do not want every task to become a model-selection exercise.

- Frontier expansion beyond early access cohorts

- Wider Claude availability in Researcher and Copilot Chat

- More public detail on critique and validation behavior

- Agent 365 governance adoption by enterprise IT teams

- Evidence of real workflow gains, not just benchmark wins
