Microsoft has moved Copilot Cowork from preview talk to practical deployment, and that matters because it signals a broader shift in enterprise AI: from tools that help people draft work to systems that can actually carry multi-step work across Microsoft 365. The new Frontier rollout puts Work IQ, Anthropic-powered reasoning, and agentic execution into the same commercial frame, which is exactly the kind of change that can redraw adoption patterns inside large organisations. It is also a clear message to customers still treating Copilot as an add-on: the next phase of Microsoft’s AI strategy is not about generating text faster, but about doing work inside governed enterprise boundaries.

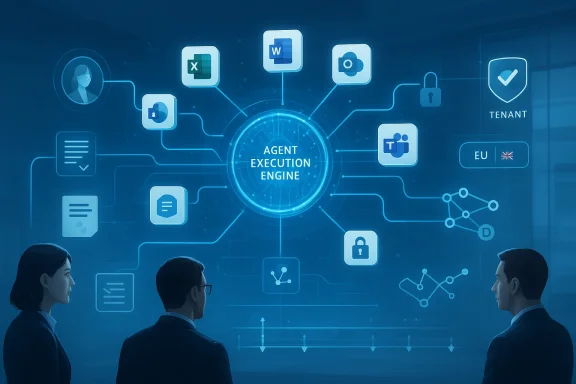

Background

Microsoft’s March 9, 2026 Frontier announcements marked a decisive escalation in its Copilot strategy. The company positioned Wave 3 of Microsoft 365 Copilot as the point where AI begins to move from assistance toward execution, while also introducing Agent 365 and the new Microsoft 365 E7: The Frontier Suite as the commercial wrapper for that change. Microsoft described this as a move toward durable enterprise value rather than isolated experimentation, and it tied the rollout to a broader “Frontier Transformation” narrative.

The central technical idea is Work IQ, Microsoft’s intelligence layer for understanding how employees work across apps, data, and collaboration surfaces. Microsoft says Work IQ powers the new agentic experiences in Word, Excel, PowerPoint, and Outlook, letting Copilot operate with the context of people’s jobs, relationships, and organisational data. That framing is important because it distinguishes Microsoft’s approach from generic model access: the company is selling context plus governance, not just access to a model endpoint.

A second, equally important shift is Microsoft’s embrace of model diversity. The company has made Anthropic models available in Copilot Studio and other Microsoft 365 scenarios, and its documentation now states that Anthropic is a Microsoft subprocessor for Microsoft Online Services, with region-specific controls and exclusions. Microsoft’s own guidance says Anthropic is available by default in some geographies, but disabled by default in the EU, UK, and EFTA, and unavailable in government clouds.

That foundation helps explain why the Copilot Cowork story is getting attention now. If Copilot was the first wave of “AI in the flow of work,” Cowork is Microsoft’s attempt to turn that flow into a managed execution layer. In practical terms, that means more planning, more orchestration, more approvals, and more background processing across multiple Microsoft 365 services. The market should read that as a response to a very simple buyer expectation: if AI is going to sit at the center of work, it has to do more than write a nice first draft.

What Copilot Cowork Actually Changes

Copilot Cowork is best understood as an orchestration layer rather than a chatbot feature. Instead of asking Copilot to produce a document, the user describes an outcome, and the system plans the work, executes steps in the background, and pauses for checkpoints before making changes. Microsoft’s own description emphasizes long-running tasks that can stretch for hours, which is a major departure from the old “prompt-response” model.

That distinction matters because enterprise work is rarely a single generation event. A launch brief, an executive review package, or a competitive analysis usually requires gathering emails, meeting notes, spreadsheets, source documents, and approvals before anything is final. Cowork is designed to reduce the friction between those steps, which is why Microsoft is presenting it as a move from assistant to execution engine.

From drafting to delegation

The strongest part of the Cowork pitch is not that it writes better prose. It is that it can coordinate tasks across Outlook, Teams, Excel, SharePoint, Word, and PowerPoint with less human handoff. That has obvious implications for operational teams, because the time cost in enterprise knowledge work often comes from switching contexts, not from the act of writing itself.

Microsoft also says the experience stays within the customer’s tenant and existing security and governance boundaries. That is not a minor detail; it is the difference between an interesting demo and something an IT department can consider for regulated workflows. In a market where buyers remain wary of AI systems wandering outside approved data and compliance limits, that promise is doing a lot of the selling.

- Outcome-first workflows replace prompt-by-prompt drafting.

- Background execution reduces the need for manual handoffs.

- Checkpoints preserve human oversight.

- Tenant-bound processing supports governance and compliance.

- Multi-app coordination is the real value, not isolated text generation.
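
The plan, execute, and checkpoint pattern described in this section can be illustrated with a small sketch. This is not Microsoft's implementation or any Copilot API: `Step`, `RunLog`, `run_plan`, and the `approve` callback are hypothetical names invented here, and the assumption that a declined checkpoint halts the rest of the plan is ours.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    """One unit of planned work (hypothetical structure, not a Microsoft API)."""
    description: str
    action: Callable[[], str]        # performs the work and returns a short result
    changes_content: bool = False    # True if the step would modify user content


@dataclass
class RunLog:
    completed: List[str] = field(default_factory=list)
    skipped: List[str] = field(default_factory=list)


def run_plan(steps: List[Step], approve: Callable[[Step], bool]) -> RunLog:
    """Run steps in order, pausing for approval before any step that changes content."""
    log = RunLog()
    for step in steps:
        if step.changes_content and not approve(step):
            # Assumption: a declined checkpoint stops the run, so later steps
            # never build on work that was not approved.
            log.skipped.append(step.description)
            break
        log.completed.append(f"{step.description}: {step.action()}")
    return log


# Illustrative plan; the step results are stand-in strings, not real output.
plan = [
    Step("Gather meeting notes", lambda: "3 notes found"),
    Step("Draft launch brief", lambda: "draft created", changes_content=True),
    Step("Send brief to reviewers", lambda: "sent", changes_content=True),
]

# The reviewer approves drafting but declines sending.
log = run_plan(plan, approve=lambda s: "Send" not in s.description)
print(log.completed)
print(log.skipped)
```

Read-only steps run without interruption, while anything that would change content waits for a person. That split is one way to keep oversight without asking for approval at every step.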

Why Anthropic Matters to Microsoft’s Strategy

Microsoft’s partnership with Anthropic is no longer a side story. In November 2025, Microsoft, NVIDIA, and Anthropic announced a strategic relationship that included Anthropic’s commitment to purchase $30 billion of Azure compute capacity and additional capacity up to one gigawatt. That agreement created the economic and technical basis for deeper integration, and Microsoft later confirmed Anthropic as a subprocessor across Microsoft Online Services.

The strategic significance is obvious: Microsoft wants Copilot to be model-agnostic where it helps customers, while still keeping the work inside Microsoft’s trust and identity layer. That lets the company argue that it is not locked to a single frontier lab, even as it continues to use OpenAI models heavily. In a market where model leadership can shift every few months, that flexibility is a defensive advantage as much as a product feature.

A multi-model platform, not a single-model bet

Microsoft’s message is that customers should not have to follow the latest “king of the hill” model from one vendor to another. Instead, Copilot can route work to whichever model best fits the task, while Work IQ supplies the customer context that makes the output useful inside real business workflows. That is a smart strategic posture, because it reduces dependence on any one model brand and keeps Microsoft closer to the customer relationship.

There is also a commercial reality here. If Microsoft can prove that a combination of OpenAI and Anthropic models works better for specific enterprise tasks, the company gains leverage in procurement conversations. Buyers do not just ask which model is strongest; they ask which platform gives them the best combination of quality, control, and deployment simplicity. Microsoft wants the answer to be Copilot.

- Anthropic expands model choice for enterprise users.

- Azure compute becomes part of the broader AI platform story.

- Copilot Studio and Microsoft 365 benefit from shared model access.

- Vendor flexibility becomes a selling point.

- Platform control remains with Microsoft.
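
To make the routing idea concrete, here is a minimal sketch of task-based model selection with fallback. Everything in it is an assumption for illustration: Microsoft has not published its routing logic, and the task types, the neutral `model-a` and `model-b` labels, and the preference orders are placeholders rather than a statement about which vendor handles which work.

```python
from typing import Dict, List, Set

# Hypothetical preference orders per task type (placeholders, not real routing rules).
ROUTES: Dict[str, List[str]] = {
    "drafting": ["model-a", "model-b"],
    "long_running_task": ["model-b", "model-a"],
}
DEFAULT_PREFERENCE: List[str] = ["model-a", "model-b"]


def pick_model(task_type: str, enabled: Set[str]) -> str:
    """Return the first preferred model for the task that the tenant has enabled."""
    for model in ROUTES.get(task_type, DEFAULT_PREFERENCE):
        if model in enabled:
            return model
    raise LookupError(f"no enabled model for task type {task_type!r}")


# Both providers enabled: the task's first preference is used.
print(pick_model("long_running_task", {"model-a", "model-b"}))
# One provider disabled by the admin: routing falls back to the other.
print(pick_model("long_running_task", {"model-a"}))
```

The point of the sketch is the fallback: when an administrator disables one provider, the workflow degrades to another model instead of failing. A platform that sits above the models can offer that, and a single-model product cannot.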

Researcher, Critique, and the Reliability Problem

Microsoft is not only pushing execution; it is also trying to improve trust in AI-generated research. The company says its Researcher agent now uses a Critique feature that separates generation from evaluation, allowing one model to draft a response while another reviews accuracy and citation quality before the result is delivered. Microsoft says the feature uses both OpenAI and Anthropic models, and claims a 13.8% lift on the DRACO benchmark.

The idea is sensible, even if the benchmark claim should be read with caution. Any deep-research tool is vulnerable to confident but weakly supported synthesis, and a second-pass reviewer can reduce some of that risk. Separating generation from critique also reflects a broader industry trend: companies are starting to treat verification as a product feature, not an afterthought.

Why evaluation matters as much as generation

For enterprise users, the challenge is rarely getting an answer at all. The challenge is getting an answer that can survive scrutiny from finance, legal, security, or leadership teams. A research agent that can surface citations, compare model outputs, and flag weaknesses is materially more useful than a system that merely produces fluent prose.

That said, benchmark-driven improvements should not be overinterpreted. Even a 13.8% gain on a benchmark does not guarantee that every real-world briefing will be more accurate or more useful. Benchmarks measure a slice of the problem, and procurement teams should remember that research quality in the wild depends on source selection, domain coverage, and user judgment.

- Critique adds a verification layer to Researcher.

- Model separation may reduce hallucination risk.

- Citation quality becomes part of the product promise.

- Benchmark gains should be treated as directional, not absolute.

- Human review still matters for high-stakes output.
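
The separation of generation from critique can be sketched in a few lines. This is only an illustration of the general pattern, not how Researcher works internally. `Draft`, `Review`, `research`, and the toy generator and critic are hypothetical, and the revise-on-feedback loop is an assumption; the source says only that one model drafts and another reviews before delivery.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Draft:
    text: str
    citations: List[str]


@dataclass
class Review:
    issues: List[str]

    @property
    def passed(self) -> bool:
        return not self.issues


def research(
    question: str,
    generate: Callable[[str, List[str]], Draft],
    critique: Callable[[str, Draft], Review],
    max_rounds: int = 2,
) -> Tuple[Draft, Review]:
    """Draft with one function, review with another, and revise on feedback.

    Assumption: a failed review feeds its issues back to the generator until
    the review passes or max_rounds drafts have been produced.
    """
    draft = generate(question, [])
    review = critique(question, draft)
    rounds = 1
    while not review.passed and rounds < max_rounds:
        draft = generate(question, review.issues)
        review = critique(question, draft)
        rounds += 1
    return draft, review


# Toy stand-ins for two different models (no real model calls are made).
def toy_generator(question: str, feedback: List[str]) -> Draft:
    citations = ["source-A"] if feedback else []
    return Draft(text=f"Answer to: {question}", citations=citations)


def toy_critic(question: str, draft: Draft) -> Review:
    return Review(issues=[] if draft.citations else ["no citations provided"])


draft, review = research("What changed in Wave 3?", toy_generator, toy_critic)
print(review.passed, draft.citations)
```

Returning the final `Review` alongside the draft lets a caller send anything that still has open issues to a human reviewer, which fits the caution above about high-stakes output.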

The Enterprise Economics of Frontier

The commercial framing around Frontier is just as important as the technology. Microsoft says Microsoft 365 E7: The Frontier Suite will be generally available on May 1, 2026, at $99 per user per month, combining Microsoft 365 E5, Microsoft 365 Copilot, and Agent 365 into a single bundle. Microsoft also says Agent 365 will be generally available on the same date at $15 per user per month, and positions the suite as cheaper than buying those capabilities separately.

That pricing structure is telling because it shows Microsoft is no longer selling Copilot as an isolated premium feature. It is building a stack in which productivity, identity, security, and agent governance are bundled into a more complete enterprise AI proposition. The result is not just a new SKU; it is a new argument for platform consolidation.

Licensing as a strategy, not a footnote

Microsoft has always understood that licensing architecture can shape adoption. By bundling Copilot, security, and agent governance into a single suite, it lowers the friction for buyers who want to standardise on one vendor rather than piece together several products. That is especially relevant when CIOs are under pressure to prove AI value without increasing integration complexity.

The broader implication is that Microsoft is creating a pathway from experimentation to operational standardisation. If customers can justify E7 as a simpler, cheaper, and more governable way to deploy AI at scale, the suite could become the enterprise default for Frontier-era AI. That would make it harder for point solutions to compete on total cost of ownership alone.

- E7 bundles AI, productivity, and security.

- Agent 365 provides a control plane for agents.

- Pricing simplicity can speed procurement.

- Standardisation may matter more than isolated feature wins.

- Platform lock-in concerns will still need scrutiny.

Regional Controls, Governance, and Compliance

One of the most important details in the rollout is that Anthropic availability is not uniform. Microsoft’s documentation says Anthropic models in Microsoft 365 Copilot and related services are disabled by default in the EU Data Boundary, the UK, and EFTA regions, and are not available in government clouds such as GCC, GCC High, and DoD. Microsoft also notes a phased rollout, with full availability expected by the end of March 2026.

That means the UK and EU enterprise story is not the same as the U.S. one. Administrators in those regions have to consciously enable Anthropic-based functionality, and some organisations may choose not to do so at all. This is a classic example of how regulation, data residency, and vendor architecture all collide in enterprise AI deployment.

What administrators need to think about

The governance message from Microsoft is that Copilot Cowork can remain inside the tenant and still respect existing control boundaries. But organisations must still decide whether to enable Anthropic processing, how to govern agent permissions, and whether a given workload is appropriate for agentic execution. In practice, that means AI governance teams are becoming a new kind of operations layer.

It also means that companies with multinational footprints may end up with uneven functionality across regions. That is not necessarily a deal-breaker, but it can complicate internal expectations. A workflow available in the U.S. may need a different control profile in Europe, and the resulting inconsistency can slow rollout unless IT teams plan for it in advance.

- EU, UK, and EFTA require explicit admin action.

- Government clouds are excluded from Anthropic use.

- Phased rollout means not every tenant gets the same timing.

- Governance teams will need clearer operating models.

- Regional inconsistency may affect global deployments.
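
The regional rules described in this section reduce to a small decision table, sketched below for planning purposes. This is not a Microsoft admin API. The function, the region and cloud labels, and the `admin_enabled` override are hypothetical, and the sketch assumes administrators in default-on regions can also switch the feature off.

```python
from enum import Enum
from typing import Optional


class Availability(Enum):
    ENABLED = "enabled"
    DISABLED = "disabled"
    UNAVAILABLE = "unavailable"


# Regions where Anthropic models are off by default, per the article.
OPT_IN_REGIONS = {"EU", "UK", "EFTA"}
# Government clouds where Anthropic models are not offered, per the article.
GOVERNMENT_CLOUDS = {"GCC", "GCC High", "DoD"}


def anthropic_availability(
    region: str,
    cloud: str = "commercial",
    admin_enabled: Optional[bool] = None,
) -> Availability:
    """Resolve the effective state from cloud, regional default, and admin choice."""
    if cloud in GOVERNMENT_CLOUDS:
        # No admin setting can turn the feature on in an excluded cloud.
        return Availability.UNAVAILABLE
    default_on = region not in OPT_IN_REGIONS
    # An explicit admin choice overrides the regional default (assumption).
    effective = default_on if admin_enabled is None else admin_enabled
    return Availability.ENABLED if effective else Availability.DISABLED


for region in ("US", "EU", "UK"):
    print(region, anthropic_availability(region).value)
```

A multinational IT team could run a table like this over its tenant list before rollout to see where a workflow will behave differently and where an explicit admin decision is still pending.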

Competitive Implications for OpenAI, Google, and the AI Platform Market

Microsoft’s move has implications well beyond Copilot itself. If the company succeeds, it will not simply have a better assistant; it will have a more credible enterprise execution platform that sits above competing models. That changes the competitive frame from “whose model is best?” to “whose platform is easiest to adopt, govern, and scale?”

Google, OpenAI, Anthropic, and other frontier labs each have their own strengths, but Microsoft’s advantage is distribution inside the productivity stack where work already happens. The company is leveraging that position to offer multi-model access without forcing customers to assemble the infrastructure themselves. That is a powerful combination, and it could make enterprise AI buying decisions more conservative around pure model branding.

The platform war moves up the stack

What Microsoft is really selling is not just inference. It is context, identity, permissions, orchestration, and approval flows, all wrapped around a set of frontier models. That means the competitive field is now centered on work management and governance, not only on response quality or benchmark scores.

For OpenAI, the risk is not that Microsoft stops using its models, but that Microsoft becomes the primary workplace interface regardless of which model is doing the underlying reasoning. For Anthropic, the upside is distribution into Microsoft’s enterprise base. For Google, the challenge is that Microsoft is combining productivity and AI in a way that may be harder to unbundle once the operating model settles.

- Platform depth may matter more than model superiority.

- Distribution inside Microsoft 365 is a major advantage.

- Multi-model routing reduces dependence on one provider.

- Governance and identity are now key differentiators.

- Vendor switching costs may rise as workflows become agentic.

Strengths and Opportunities

Microsoft’s Frontier push has several obvious strengths, and the most important one is that it links AI capability to a real operating model. That gives buyers a clearer path from pilot projects to scaled deployments, especially when the organisation already lives in Microsoft 365. It also means Microsoft can sell value in terms executives understand: time saved, workflows simplified, and governance improved.

The opportunity is even bigger if Microsoft can keep translating demo-ready orchestration into dependable day-to-day outcomes. The companies that win the next phase of enterprise AI will not necessarily be the ones with the flashiest model; they will be the ones that make AI feel like a natural extension of work. Microsoft is clearly aiming for that outcome.

- Stronger enterprise integration across Microsoft 365.

- Better governance through tenant-bound execution.

- Model choice without leaving the platform.

- Long-running workflow support for real business processes.

- Clearer commercial packaging via E7 and Agent 365.

- Potential productivity gains across knowledge work.

- Reduced friction for organisations already standardised on Microsoft.

Risks and Concerns

The biggest risk is that agentic systems can become too persuasive before they become truly reliable. Even with checkpoints and critique layers, AI that can plan, execute, and coordinate work raises the stakes of mistakes. A wrong assumption at the beginning of a workflow can propagate through multiple documents and approvals before anyone notices.

There are also governance and regional compliance risks. Anthropic being disabled by default in the EU, UK, and EFTA, plus its exclusion from government clouds, shows how quickly rollout complexity can arise when AI is embedded in regulated environments. For multinational buyers, that can become a real operational headache.

- Error propagation across multi-step automated workflows.

- Approval fatigue if users are asked to review too many checkpoints.

- Regional inconsistency in feature availability and controls.

- Vendor concentration if AI and productivity become too tightly bundled.

- Benchmark optimism that may not fully reflect real-world performance.

- Security misconfiguration if admins rush rollout.

- User overreliance on automation that still needs supervision.

What to Watch Next

The next few months will reveal whether Cowork is a headline feature or the beginning of a real operating model. The most important question is not whether the demo works, but whether organisations can safely scale it across repeated, business-critical workflows without creating new governance burdens. If Microsoft can answer that, Frontier may become its most important enterprise AI pivot yet.

Watch for adoption signals in three places: how quickly customers enable Anthropic in supported regions, how widely Agent 365 is deployed as a governance layer, and whether Researcher’s critique-based approach improves trust in everyday use. Those will be the clearest indicators of whether Microsoft’s “assistant to execution engine” thesis is real or just well-marketed.

Key things to monitor

- Frontier enrollment among large enterprises.

- Anthropic enablement rates in the EU, UK, and EFTA.

- Agent 365 adoption as the control plane matures.

- Researcher usage in executive and compliance workflows.

- Customer case studies showing measurable time savings.

- Competitive responses from Google, OpenAI, and independent AI vendors.

Microsoft’s latest Copilot move is significant because it changes the question enterprises ask about AI. The debate is no longer whether a model can draft a good answer, but whether a platform can safely execute work across people, data, and systems. That is a much higher bar, and it is also a much more valuable one. If Microsoft can clear it, Frontier will not just widen the gap between early adopters and laggards; it will redefine what workplace AI is expected to do.

Source: uctoday.com Microsoft Copilot Cowork Goes Live on Frontier as Wave 3 Shifts AI from Assistant to Execution Engine - UC Today