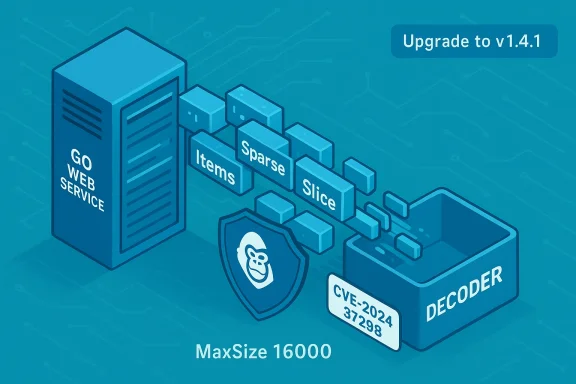

A high‑severity denial‑of‑service vulnerability — tracked as CVE‑2024‑37298 — was disclosed in the popular Go library github.com/gorilla/schema, allowing an attacker to force unbounded memory allocations when the library decodes form or query parameters into structs that contain slices of nested structs. The library was patched in v1.4.1, which introduces a configurable slice size cap and defensive checks; operators and developers should treat this as an urgent availability risk and upgrade or mitigate immediately. (github.com)

Source: MSRC Security Update Guide - Microsoft Security Response Center

Background / Overview

Gorilla's schema package is widely used in Go web applications to convert HTTP form and query values into Go structs via `schema.Decoder.Decode()`. The bug arises from how the decoder supports sparse slice semantics: when a form key names a slice element at an index, the decoder allocates a slice large enough to include that index. If an attacker supplies a very large index in an HTTP parameter, the decoder allocates memory proportional to the index value, with no hard upper bound in vulnerable versions, allowing a remote, unauthenticated client to exhaust process memory and trigger an application-level denial of service.

This is an availability-only issue: there is no evidence the defect exposes confidentiality or integrity concerns, but the operational impact can be total. Services can be forced into OOM (out-of-memory) conditions, crash loops, or sustained unresponsiveness under attack. Public trackers and vendors assign a CVSS v3.1 base score of 7.5 (High) for this defect.

What exactly went wrong: the technical root cause

Sparse slices and uncontrolled growth

Go's slices are dynamic arrays. Gorilla/schema implements convenient semantics for nested form fields: keys like `items.0.Name` and `items.42.Name` produce a slice where indices 0 and 42 exist and the intermediate elements are zero-value placeholders. Vulnerable versions of the decoder translate an index seen in the incoming form into a slice of length index + 1 by calling `reflect.MakeSlice(...)` without imposing limits. An attacker who can control the index (for example, by sending a request containing `arr.10000000.Field=1`) can force the server to allocate memory for ten million elements. The allocation size is the element count multiplied by the element size (a nested struct), so it quickly consumes available memory.

The defensive change in the patch

The upstream fix, merged as a single commit, adds a `defaultMaxSize` constant (16000) and a `maxSize` field to `Decoder`, and includes a defensive check that returns an error if the requested index is larger than the configured `maxSize`. The patch also exposes a `MaxSize(size int)` setter so callers can tune the limit. In short: rather than letting an attacker control slice length directly, the decoder now rejects requests that would create excessively large slices. The commit message and diff show these additions and the new error path. (github.com)

Scope and who's affected

- Any Go application that imports `github.com/gorilla/schema` and calls `schema.Decoder.Decode()` on request data that maps to fields of type `[]struct{...}` is potentially vulnerable if the package version is earlier than v1.4.1.
- Vendors and distributions packaging Go applications (containers, microservices, API gateways) that include the library are also at risk; several Linux distribution trackers and commercial security databases have already recorded advisories and fixes for packages that include `gorilla/schema`.
- Internet-facing endpoints that parse query parameters or form payloads into nested slices are the highest-risk surface because the attacker needs only to control the HTTP parameters to trigger the allocation.

Because `gorilla/schema` is a generic form decoding library, it can appear deep inside business logic, controller helpers, or third-party middleware. An application that does not directly import it might still be affected if a dependency brings it in transitively.

Real-world exploitation scenario (high level)

An attacker targets an application whose handler decodes query parameters into a struct like:

- `type Item struct { Name string; Qty int }`
- `type Payload struct { Items []Item }`

and sends a request such as:

- `GET /api/endpoint?items.10000000.Name=probe`

A vulnerable decoder then allocates a slice of 10,000,001 `Item` values, and the memory allocation multiplies by the struct size. Repeating this request, or combining it with compressed payloads or multiple parameter vectors, drives memory to exhaustion and can bring down the process.

Many public writeups and advisories reference similar proof-of-concept parameter strings to demonstrate the effect; the intent there is to illustrate the class of attack rather than provide weaponized code.

What maintainers changed — specific, verifiable fixes

The upstream fix merged under the commit id referenced in the repository adds:

- A new constant `defaultMaxSize = 16000` and an instance field `maxSize int` on `Decoder`.
- An initialization change: `NewDecoder()` now sets `maxSize` to `defaultMaxSize`.
- A public setter `MaxSize(size int)` to allow callers to tune limits.
- A defensive check in the slice allocation path: if the parsed index exceeds `d.maxSize`, `decode()` returns an error that prevents huge slice creation.

How to prioritize remediation (practical guidance)

Upgrading is the single best action: move to gorilla/schema v1.4.1 or later as soon as possible. The patch is small and backward compatible in most usages, and it removes the largest operational risk.

If immediate upgrade is not possible, apply the following mitigations in priority order:

- Upgrade application dependencies:
  - Update `go.mod` to reference `github.com/gorilla/schema v1.4.1` (or newer) and run `go mod tidy`.
  - Rebuild CI/CD artifacts and redeploy.
- If you cannot upgrade quickly, harden the HTTP surface:
  - Enforce strict request size limits and header length caps at the reverse proxy (NGINX, Envoy, HAProxy) and load balancer.
  - Block or log requests containing parameter names that match nested slice indexing patterns (e.g., a regex matching `\.\d+\.`).
- Add application-level guards:
  - Replace or avoid calling `Decoder.Decode()` on structs that contain `[]struct{...}` for untrusted input. Use manual parsing or a controlled custom decoder for that field.
  - Where possible, call `Decoder.MaxSize(<small cap>)` to set an application-appropriate slice cap after upgrading; for older versions that lack the setter, implement wrapper validation to reject suspicious parameter names.
- Limit runtime resources:
  - Run services with memory limits (cgroups, container memory caps) to prevent host instability, and configure restart policies carefully to avoid thrashing services under attack.
  - Implement per-request memory or CPU timeouts where available.
- Monitoring and alerting:
  - Alert on sharp process RSS growth, frequent OOM kills, or unusual error logs from the decoder.
  - Track requests with extremely large parameter indices or payloads.

The default `maxSize` is 16,000 in the patch. That is a pragmatic, conservative default intended to reduce the risk while preserving legitimate uses. Teams should consider their own expected maximum slice sizes and set `MaxSize()` accordingly. (github.com)

Detection, hunting, and incident response

- Hunting indicators:
  - Unusually large query keys that include numeric indices: search access logs for parameter patterns like `items\.\d+\.` or `arr\.\d+\.`.
  - Sudden, repetitive spikes in memory allocation correlated with specific endpoints or query parameter shapes.
  - Frequent OOM kills, process restarts, or long GC pauses on web workers.

- Runtime defenses:
  - Enable structured request logging that correlates request IDs with memory behavior, particularly for endpoints that accept arbitrary form fields, especially ones used by unauthenticated clients.

- Incident response steps:
  - Identify and isolate the vulnerable service instance.
  - Apply application or proxy request filtering to block obviously malicious parameters.
  - Deploy the patched binary or apply the `MaxSize()` limiter and restart gracefully.
  - Review access logs to estimate exposure and potential exploitation attempts.
  - If multiple tenants were impacted (for example, API-as-a-service), rotate credentials and communicate timelines to affected parties.

These detection techniques mirror those used for other memory-exhaustion issues historically seen across ecosystems; similar attack patterns have appeared in other projects where unbounded parsing behavior allowed resource exhaustion.

Broader context: why this class of bug keeps recurring

The underlying class is simple and recurring: deserialization or parsing code that builds in-memory structures directly from attacker-controlled indices or counts without imposing reasonable upper bounds invites resource-exhaustion attacks. Across languages and libraries we have seen:

- HTTP/2 and QUIC parsers that allow uncontrolled structures to grow state and exhaust memory.
- Archive and compression handling that expands tiny inputs into huge allocations (decompression bombs).
- JSON and form parsers that create deeply nested or massively sparse containers when given crafted payloads.

The practical lessons are the same in each case:

- Parsing logic must treat counts and indices supplied by remote peers as untrusted and enforce caps.
- Libraries that provide convenience for mapping arbitrary form keys into deep structures should document and, by default, enforce safe limits.
- Application authors should avoid assuming that helpers are safe from resource abuse; defensive operational controls are still required at the edge (proxies, rate limits, quotas).

Advisories for `gorilla/schema` note this pattern explicitly and recommend the defensive, configurable cap approach the upstream commit implements.

Vendor and ecosystem response: verification and tracking

Major vulnerability databases and distributors have recorded and published advisories for CVE-2024-37298, giving a consistent view: the issue affects `gorilla/schema` versions prior to 1.4.1, the severity is high for availability impact, and the recommended remediation is to upgrade. Examples include vendor and distribution trackers that list fixes or package updates, and commercial vulnerability feeds that assign CVSS and exploitation guidance. These independent entries provide corroborating evidence that the problem is real and that the upstream patch is authoritative.

For teams that consume vendor or distribution updates, check your distribution advisories (Debian, Red Hat, container base images) and third-party dependency scanners (Snyk, Aqua, Rapid7, etc.); many of these systems now flag the vulnerability and recommend the same upgrade path.

Practical checklist for developers and operations (do this today)

- For maintainers and devs:
  1. Update `github.com/gorilla/schema` to `v1.4.1` or newer in all `go.mod` files; run full CI builds and tests.
  2. Audit code paths that `Decode()` into `[]struct{...}`. Replace or add validation around those fields.
  3. If you accept untrusted form/query data, add server-side rate limiting and input validation.
- For operations and platform teams:
  1. Deploy updated service images that include the patched library.
  2. Apply reverse proxy rules to cap URI/query length and to block suspicious indexed parameter patterns.
  3. Apply memory limits at the container or process supervisor level; configure alerts for memory anomalies.
- For security teams:
  1. Search logs for requests matching sparse-index patterns and correlate with any availability incidents.
  2. Run dependency scans across your fleet to find packages or binaries that include older versions of `gorilla/schema`.
  3. Prioritize remediation for internet-facing endpoints and multi-tenant services.

Risks and remaining gaps

- Upgrading quickly is the correct fix, but in complex dependency trees `gorilla/schema` can be pulled in transitively by many packages. Inventory and rebuild efforts can therefore be non-trivial in large organizations; dependency scanning is essential to avoid overlooked exposures.
- The patch introduces a default cap (16,000). That is a pragmatic safeguard but not a silver bullet: applications with legitimate needs for very large sparse slices must explicitly set `MaxSize()` to an appropriate value and be prepared for the memory behavior that entails. Decisions to increase the cap should be deliberate and accompanied by resource safeguards. (github.com)
- Detection and forensics are still hard: an attacker can blend sparse-index parameters into normal traffic patterns. Proactive request validation and anomaly detection are the most reliable defenses.

Conclusion — what WindowsForum readers must take away

CVE-2024-37298 is a straightforward but consequential example of a common software engineering blind spot: trusting remote input to shape in-memory sizes. The defect is fixed in gorilla/schema v1.4.1 by introducing a configurable `maxSize` and denying slice creation beyond that bound, a pragmatic, verifiable defensive change that upstream has merged. Administrators and developers should:

- Treat the vulnerability as an urgent availability risk (CVSS 7.5).
- Upgrade to `v1.4.1+` across all affected services.
- Harden the HTTP edge with request size caps and parameter filtering.
- Audit and monitor for evidence of exploitation, and apply runtime resource limits.
