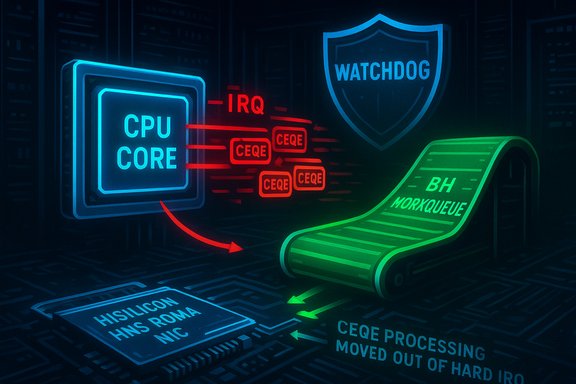

A recently disclosed Linux kernel vulnerability, tracked as CVE‑2024‑43872, exposes a stability risk in the HNS (HiSilicon) RDMA driver. Under heavy Completion Event Queue Entry (CEQE) load, a CPU core can remain in interrupt context for too long, producing sustained soft lockups that degrade or completely deny system availability. The upstream fix moves CEQE processing out of the hard IRQ path into a bottom‑half (BH) workqueue. It also caps the number of CEQEs a single call of the work handler will process. Together these changes give the scheduler regular opportunities to run and prevent long-running interrupt handling from freezing a CPU core. The fix is recorded in public trackers such as NVD and OSV and has been merged into stable kernel trees and vendor packages. Operators should treat the issue as an availability-first risk and prioritize patching hosts that run HiSilicon/Huawei HNS RDMA devices on kernels in the affected version range.

Source: MSRC Security Update Guide - Microsoft Security Response Center

Background / Overview

Remote Direct Memory Access (RDMA) technologies such as RoCE enable high-throughput, low-latency network I/O by allowing NICs to move data directly between host memories with minimal CPU intervention. The HNS family in Linux, commonly referenced as hns, hns‑roce, or hns3, is the HiSilicon / Huawei Net subsystem driver set that implements RoCE/RDMA support and the related NIC framework. The HNS drivers serve hardware identifying as HiSilicon / Huawei RDMA network controllers and are enabled by kernel config options such as CONFIG_INFINIBAND_HNS and CONFIG_HNS3.

A central design trade-off in performance‑oriented drivers is where to handle completion and event processing. Doing more work in interrupt context reduces latency but risks monopolizing the CPU. Deferring work to a bottom half or workqueue lets the kernel schedule other work, at the cost of slightly higher service latency.

The bug fixed by CVE‑2024‑43872 arose because CEQE handling remained in the hard IRQ path. Under heavy CEQE load, it could keep a CPU core busy long enough to trigger the kernel's soft lockup detector (watchdog timeouts). The upstream remedy relocates CEQE handling to a BH workqueue and caps per-call processing to bound the CPU time spent servicing CEQEs.

What went wrong: technical anatomy

CEQE handling in interrupt context

In the vulnerable code path, Completion Event Queue Entries (CEQEs) were processed directly inside the interrupt handler. A CPU core could therefore process a long run of CEQEs in hard IRQ context, where the handler cannot be preempted by the scheduler. As a result, normal scheduling and the watchdog's checks could not make forward progress. Under a sustained flow of CEQEs, the handler could keep consuming CPU time until the kernel's soft‑lockup watchdog declared the core stuck.

This is an availability problem rather than a confidentiality or integrity bug: the system becomes unresponsive or degraded, but it does not leak secrets or allow code execution.

The patch: move work to BH and impose processing limits

The upstream patch converts CEQE processing into a bottom‑half workqueue handler (a deferred execution context outside the hard interrupt handler). It also adds a safety cap on the number of CEQEs processed during a single invocation of the worker. Together, these changes prevent a single long-running handler from monopolizing the CPU while still allowing batched CEQE processing for reasonable performance. The fix is intentionally minimal and conservative: it addresses the specific availability bug without a large redesign of the hns RDMA stack. Multiple independent trackers and vendor advisories summarize the same correction.

Why this is an availability-first defect

The core problem is a classic kernel design trade-off: expensive work inside hard interrupts harms the scheduler's ability to run other work and to service system watchdogs. An interrupt‑context CEQE handler may be safe from a memory‑safety perspective. Even so, the sheer CPU time it can consume under heavy load translates into an operational denial of service: processes stall, heartbeats fail, and automated recovery systems may reboot the machine. Operationally, that is a service outage. The CVSS vectors in tracking records reflect this, rating availability impact High while leaving confidentiality and integrity unaffected.

Affected versions and distribution status

Multiple vulnerability trackers agree on the affected kernel range and on vendor responses:

- Vulnerable upstream kernel range: reported as versions from approximately 4.16 up to, but excluding, 6.10.3 in public vulnerability metadata. Operators running kernels in that range with the HNS driver enabled should consider themselves exposed until they install kernels that include the stable upstream commits or vendor backports.

- Distribution advisories and OSV entries list affected packages and fixed versions; downstream vendors (Debian, Ubuntu, SUSE, and others) have mapped the upstream commits into their package releases and issued kernel updates where appropriate. Check your vendor’s security tracker for the exact fixed package and version for your release.

- EPSS/exploitation telemetry at publication showed low exploitation probability; the primary symptom observed in field reports was soft lockup/availability loss rather than active exploitation or remote code execution. That said, availability primitives are operationally valuable to attackers and should not be ignored.
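As a first-pass triage aid, the sketch below compares a uname -r string against the upstream range quoted above (4.16 up to, but excluding, 6.10.3). It is an illustration, not an authoritative exposure test. Stable-branch and distribution backports mean that a kernel whose version falls inside the range may already contain the fix, so a positive result only means "check your vendor tracker". The bounds come from the public metadata as reported here; the function names are hypothetical.

```python
import re

# Upstream range as reported in public vulnerability metadata:
# affected from 4.16 up to, but excluding, 6.10.3.
AFFECTED_FROM = (4, 16, 0)
FIXED_IN = (6, 10, 3)


def parse_kernel_release(release):
    """Extract (major, minor, patch) from a uname -r string such as
    '6.8.0-45-generic' or '5.14.0-427.el9.x86_64'."""
    match = re.match(r"(\d+)\.(\d+)(?:\.(\d+))?", release)
    if match is None:
        raise ValueError(f"unrecognised kernel release: {release!r}")
    major, minor, patch = match.groups()
    return int(major), int(minor), int(patch or 0)


def in_upstream_affected_range(release):
    """True if the base version lies in [4.16, 6.10.3).

    Backports are ignored, so True means 'verify against the vendor
    tracker', not 'confirmed vulnerable'."""
    return AFFECTED_FROM <= parse_kernel_release(release) < FIXED_IN
```

On a Linux host, in_upstream_affected_range(platform.release()) applies the check to the running kernel (platform is in the Python standard library).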

Exploitability and operational risk

Who can trigger this?

- Attack vector is local or tenant‑adjacent. An unprivileged local process, a malicious container, or a co‑tenant VM that can exercise the RDMA device or cause heavy completion traffic can trigger the condition. In many cloud and multi‑tenant configurations, “local access” may be reasonably available to attackers in shared environments.

- Pure remote unauthenticated exploitation is unlikely because the attacker needs to cause heavy CEQE production on the specific RDMA hardware path. That typically requires local access, driver interaction, or direct control over RDMA traffic generation on the host or across a privileged network fabric.

What the attacker gains

- The practical effect is denial‑of‑service: processes hang or fail, Kubernetes nodes may be marked NotReady, and service orchestration may trigger failovers. The vulnerability does not, as disclosed, provide code execution or data exfiltration paths on its own. However, availability vulnerabilities can be weaponized in targeted disruption campaigns and may enable time windows for other attack steps in complex threat scenarios.

Likelihood of in‑the‑wild exploitation

- Public telemetry and vulnerability feeds recorded no widespread in‑the‑wild exploitation against this CVE at publish time. EPSS values were low. Nevertheless, availability-oriented bugs are straightforward for an adversary with local access to weaponize and therefore should be treated as a priority on systems that host multi‑tenant workloads, CI runners, or untrusted containers.

Detection and forensic signs

This vulnerability produces operational symptoms rather than stealthy artifacts. Look for:

- Kernel log entries showing soft lockup watchdog messages ("BUG: soft lockup - CPU#N stuck for Ns!") around the time of heavy RDMA activity or noisy CEQE flows.

- Sudden unresponsiveness on nodes that run HNS devices: stalled user processes, missed heartbeats, or failing I/O that correlate with RDMA activity.

- If you capture kernel crash dumps or persistent logs, search for backtraces that include the HNS RDMA CEQE handling paths. If you rely on vendor telemetry, consult your vendor's recommended log signatures and the patched commit IDs to map log traces to the fixed code.

Useful triage commands:

- Identify kernel version: uname -r

- Check for HNS modules: lsmod | grep hns (this pattern also matches hns_roce and hns3 modules)

- Inspect kernel log around incidents: journalctl -k --since "YYYY-MM-DD HH:MM" | grep -i -E "soft lockup|watchdog|hns|roce|CEQE"

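- For persistent forensic capture: enable kdump so that a vmcore is retained if the kernel panics. A soft lockup only triggers a panic when the kernel.softlockup_panic sysctl is set. Also collect dmesg output and persist kernel logs to centralized logging before rebooting.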
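The log checks above can be partly automated. The sketch below scans kernel log text for the watchdog's soft-lockup report and records whether "hns" appears in the report or in the lines that follow it. This is a heuristic only. The "Modules linked in:" line printed with the report also contains hns whenever those modules are loaded, so a match is a pointer for manual review of the backtrace, not proof that the CEQE path was involved. The function name and the 40-line context window are arbitrary choices.

```python
import re

# Soft-lockup reports look like:
#   watchdog: BUG: soft lockup - CPU#3 stuck for 23s! [kworker/3:1:123]
SOFT_LOCKUP = re.compile(r"BUG: soft lockup - CPU#(\d+) stuck for (\d+)s!")


def find_soft_lockups(log_text, context_lines=40):
    """Return a list of (cpu, seconds, hns_nearby) tuples.

    hns_nearby is True when 'hns' (case-insensitive) occurs in the report
    line or in the next `context_lines` lines, where the module list and
    backtrace are usually printed."""
    lines = log_text.splitlines()
    reports = []
    for index, line in enumerate(lines):
        match = SOFT_LOCKUP.search(line)
        if match is None:
            continue
        window = lines[index : index + 1 + context_lines]
        hns_nearby = any("hns" in entry.lower() for entry in window)
        reports.append((int(match.group(1)), int(match.group(2)), hns_nearby))
    return reports
```

To use it on a live host, feed it the output of journalctl -k --no-pager, for example captured with subprocess.run(["journalctl", "-k", "--no-pager"], capture_output=True, text=True).stdout.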

Immediate mitigations and remediation steps

The only definitive remediation is to install a kernel that includes the upstream fix or a vendor backport and to reboot into that kernel. Follow this prioritized checklist:

- Inventory: map hosts that run HNS drivers or whose hardware reports PCI vendor ID 0x19e5 (Huawei / HiSilicon) with devices matching HNS PCI IDs. Use lspci and ethtool -i to identify the NICs and the drivers bound to them.

- Check vendor advisories: consult your distribution’s security tracker and kernel package changelogs for entries referencing CVE‑2024‑43872 or the upstream stable commit IDs. Only mark hosts remediated once you confirm the packaged kernel includes the fix.

- Patch and reboot: apply the vendor/kernel update and schedule reboots as required; kernel-level patches require a restart.

- Staged rollout: pilot the patched kernel on representative hosts (especially ones that exercise RDMA/InfiniBand or run latency-sensitive workloads), monitor for regressions, then roll out in waves.

- Compensating controls (short‑term): if immediate patching is impossible, reduce or isolate workloads that generate heavy RDMA completion traffic, and restrict untrusted local access to hosts with HNS hardware. For cloud providers, prioritize multi‑tenant hosts for patching and consider live‑migrating critical workloads to patched hosts during maintenance windows.

In condensed form, the rollout sequence is:

- Identify exposed hosts (inventory).

- Pilot patch on a non‑production representative host.

- Validate with RDMA workloads and monitoring windows.

- Deploy across production in waves, monitor kernel logs for at least one full business cycle after each wave.

- Capture and retain diagnostics for any anomalous post‑patch behavior.
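The inventory step (finding hosts whose hardware reports vendor ID 0x19e5) can also be scripted by reading the vendor, device, and driver entries that Linux exposes under /sys/bus/pci/devices. This minimal sketch only lists matching PCI functions and their bound driver. It does not decide whether a given device ID belongs to the HNS family, and the function name is hypothetical.

```python
from pathlib import Path

HUAWEI_VENDOR_ID = "0x19e5"  # PCI vendor ID for Huawei / HiSilicon devices


def find_vendor_devices(sysfs_root="/sys/bus/pci/devices", vendor=HUAWEI_VENDOR_ID):
    """Return (pci_address, device_id, driver) for each PCI function whose
    sysfs 'vendor' attribute equals `vendor`; driver is None if unbound."""
    root = Path(sysfs_root)
    if not root.is_dir():
        return []
    matches = []
    for dev in sorted(root.iterdir()):
        try:
            vendor_id = (dev / "vendor").read_text().strip().lower()
            device_id = (dev / "device").read_text().strip().lower()
        except OSError:
            continue  # not a PCI device entry, or attribute unreadable
        if vendor_id != vendor.lower():
            continue
        driver_link = dev / "driver"
        # 'driver' is a symlink into /sys/bus/pci/drivers/<name> when bound.
        driver = driver_link.resolve().name if driver_link.exists() else None
        matches.append((dev.name, device_id, driver))
    return matches
```

The sysfs_root parameter exists mainly so that the function can be tested against a fake directory tree. On a real host, the default path is used.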

Why the upstream approach is sensible — strengths and residual risks

Strengths

- The fix is minimal and targeted. Moving CEQE work out of the interrupt handler and capping per-call processing keeps the performance benefit of batching while eliminating unbounded CPU consumption in IRQ context. This conservative remedy is straightforward to backport to stable kernel trees, and multiple vendors incorporated the upstream commits quickly because the change is small.

- The mitigation restores expected kernel scheduling and watchdog behavior without a large refactor of RDMA internals or device firmware. That reduces regression risk for vendors shipping backports.
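To make the effect of the per-call cap concrete, here is a toy model written in plain Python rather than kernel C. It assumes that each handled entry costs one time unit and that the device keeps posting new entries while the handler runs. Without a cap, the handler drains until the queue is empty, which never happens under sustained load. With a cap, each call returns after a bounded amount of work and reports whether it should run again. The budget values and function names are hypothetical; they do not reproduce the upstream hns driver's code or its actual limit.

```python
from collections import deque


def drain_unbounded(queue, arrivals_per_step, max_steps):
    """Pre-fix behaviour: keep handling entries while any are pending.

    Each handled entry costs one step and `arrivals_per_step` new entries
    arrive meanwhile, so under sustained load the loop only stops at
    `max_steps` (a guard that keeps the model finite). Returns steps spent."""
    steps = 0
    while queue and steps < max_steps:
        queue.popleft()  # handle one CEQE
        steps += 1
        for _ in range(arrivals_per_step):
            queue.append("ceqe")  # hardware keeps producing events
    return steps


def drain_budgeted(queue, budget):
    """Post-fix behaviour: one worker call handles at most `budget` entries,
    then returns so other tasks can run.

    Returns (handled, run_again), where run_again is True if entries remain."""
    handled = 0
    while queue and handled < budget:
        queue.popleft()
        handled += 1
    return handled, bool(queue)
```

With one arrival per step, drain_unbounded runs until its artificial guard stops it. drain_budgeted never handles more than budget entries per call, however long the queue is. In a real driver, the run_again flag would typically translate into requeueing the work item, which is where the rest of the system gets the CPU back between batches.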

Residual risks

- Vendor‑supplied kernels and appliance images may lag the upstream fix. Embedded devices, vendor kernels for appliances, and old distribution versions may not receive timely backports; these hosts require special tracking and coordination with the vendor for fixed images.

- Availability bugs can be exploited as part of multi‑stage attacks (for example to create windows for other local escalations); while no such chaining was reported publicly for this CVE, defenders should not assume the absence of a PoC implies zero risk.

- Some operators choose to delay kernel updates. Those teams should rely on staging, comprehensive testing, and compensating controls (isolation, reduced exposure, tenant migration) until a patch can be applied.

Broader context: RDMA drivers and kernel hardening

This CVE is another example of how small design decisions in performance‑sensitive kernel drivers can yield outsized operational impact. Similar RDMA and network driver issues in recent years have produced availability faults or race conditions that required surgical fixes and careful backporting. RDMA drivers in particular must balance strict timing and latency requirements against kernel safety constraints (interrupt context, spinlocks, workqueue deadlines). Operators should treat RDMA-capable hosts as a distinct risk class:

- Inventory RDMA-capable hardware and make patching for those hosts part of a high-priority maintenance stream.

- Monitor kernel changelogs and vendor advisories for RDMA driver fixes, because these drivers are more likely than most to affect cluster stability and service availability.

- Test kernel updates conservatively together with NIC firmware and HBA driver versions, using vendor-provided integration tests where available, because RDMA stacks interact tightly with the fabric and remote controllers. The historical pattern of RDMA driver fixes and the need for careful validation is documented across several similar kernel advisories and post‑mortems.

Practical checklist for administrators (quick reference)

- Confirm exposure:

  - uname -r

  - lsmod | grep -E "hns|hns_roce|hns3"

  - lspci -nn -d 19e5: (lists devices with PCI vendor ID 0x19e5; the vendor name may be printed as Huawei rather than HiSilicon, so filtering on the ID is more reliable than grep -i hisilicon)

- Consult your vendor's security tracker for fixed package versions (Debian, Ubuntu, SUSE, etc.).

- Apply the vendor-packaged kernel update that references CVE‑2024‑43872 or the upstream stable commit IDs.

- Reboot into the patched kernel. Verify with uname -r that the new kernel is running, and confirm that the kernel package changelog lists the CVE or the upstream commit.

- Monitor kernel logs, RDMA telemetry, and service health for two weeks after rollout.
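The changelog check in the list above can be scripted as a simple text search. The example helper assumes an RPM-based system (rpm -q --changelog); on other distributions, pass in the changelog text from your package manager instead. The function names are hypothetical. A missing match is not proof of exposure, because some vendors reference upstream commit subjects or IDs rather than CVE numbers; add those from your vendor's advisory to the needles argument.

```python
import subprocess

CVE_ID = "CVE-2024-43872"


def changelog_mentions(changelog_text, needles=(CVE_ID,)):
    """Return the needles (CVE IDs, commit IDs, ...) found in the text,
    compared case-insensitively, in the order given."""
    lowered = changelog_text.lower()
    return [needle for needle in needles if needle.lower() in lowered]


def rpm_changelog(package="kernel"):
    """Return the output of 'rpm -q --changelog <package>' (RPM systems only).

    Raises FileNotFoundError if rpm is missing and CalledProcessError if the
    package is not installed."""
    result = subprocess.run(
        ["rpm", "-q", "--changelog", package],
        capture_output=True, text=True, check=True,
    )
    return result.stdout
```

On an RPM-based host, changelog_mentions(rpm_changelog()) returns the CVE ID in a list if the installed kernel package's changelog cites it, and an empty list otherwise.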

Caveats and verification notes

- The patch described above is recorded in multiple vendor and vulnerability trackers; however, upstream git commit pages may not be directly reachable from every environment. Where you cannot fetch the commit directly, rely on distribution changelogs and CVE mappings to confirm the fix is present in your packaged kernel. If you need the original patch for auditing, obtain git access or request vendor-supplied evidence that the backport is included.

- Several independent trackers provide overlapping confirmations of the issue and the fix (NVD, OSV, Debian, Ubuntu, vendor advisories). Cross‑checking at least two authoritative sources (for example, the NVD entry plus your distribution security tracker or OSV) is recommended before you conclude remediation.

Conclusion

CVE‑2024‑43872 highlights an operationally significant but conceptually straightforward class of kernel bug: work left too long in interrupt context can choke a CPU core and bring down availability. The HNS RDMA stack's fix, which moves CEQE processing to a workqueue and limits work per invocation, is conservative, effective, and already present in upstream stable branches and vendor updates. Prioritize inventorying hosts that use HiSilicon/Huawei HNS RDMA hardware, consult your distribution's advisory mappings, and plan staged kernel updates and reboots. For multi‑tenant, cloud, or RDMA‑heavy environments, accelerate remediation: availability faults at kernel level are simple to trigger and can have cascading operational impact. Cross‑verify vendor package changelogs to confirm the upstream commit is present, and monitor kernel telemetry closely during and after rollout to validate the fix in your environment.