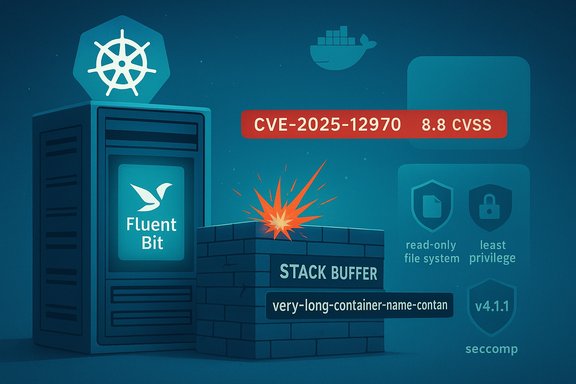

A stack-buffer overflow in Fluent Bit’s Docker input plugin has been cataloged as CVE-2025-12970, and it’s the kind of flaw that turns a seemingly innocuous container name into a potential foothold for attackers. The vulnerability stems from the in_docker plugin’s extract_name routine copying container names into a fixed-size stack buffer without validating length, enabling an attacker who can create or control container names to crash the Fluent Bit process — or, in the worst case, achieve arbitrary code execution. The issue is widely reported as high severity (CVSS ~8.8) and has been addressed by the Fluent Bit project in the 4.1.1 release (with backports for some 4.0.x maintenance releases); operators must treat this as urgent for any deployment where attackers can influence container names or where Fluent Bit runs with elevated privileges.

Background / Overview

Fluent Bit is a lightweight, high-performance log processor and forwarder used extensively in containerized and cloud-native environments. It commonly runs as a DaemonSet on Kubernetes nodes, acting as the agent that collects container logs and metrics and forwards them to logging backends or SIEMs. Because Fluent Bit often runs with wide permissions and is present on virtually every node in many fleets, vulnerabilities in its input plugins have outsized operational consequences.

CVE-2025-12970 targets the Docker input plugin (in_docker), specifically the extract_name function that reads container names. The defect is a classic unsafe stack copy: the code copies an unbounded container name into a fixed-size local buffer without length checks. Multiple vulnerability trackers assign a high-severity rating and note that the bug can cause process crashes (DoS) or escalate to arbitrary code execution if exploited reliably. The NVD and other major trackers list the vulnerability details and severity, while the Fluent Bit project describes the fixes in their security advisory and release notes.

Why this matters: risk to Kubernetes clusters and cloud infrastructure

When a log-collection agent like Fluent Bit is compromised, the consequences ripple outward in predictable and dangerous ways:

- Fluent Bit is frequently deployed as a Kubernetes DaemonSet and runs on every node; a single compromised agent can give an attacker local code execution on a node. This can be escalated to host compromise (container escape & persistence).

- Because Fluent Bit aggregates telemetry and forwards it to central systems, an attacker can tamper with logs (hide activity), inject false telemetry, or poison security pipelines — undermining detection and forensic trails.

- Many operators run Fluent Bit with elevated privileges or as root, and often grant it access to host-level sockets and filesystems; that increases the blast radius of any exploit.

- In multi-tenant clusters, a malicious tenant could intentionally create containers with crafted names to target the agent on the shared node.

Technical analysis: what the bug is, how it works, and exploitation considerations

The defect (at a glance)

- Vulnerable function: extract_name in the in_docker input plugin

- Root cause: copying container name into a fixed-size stack buffer without checking the name’s length (classic stack-buffer overflow)

- Typical outcome: process crash (segfault) or memory corruption that could be turned into arbitrary code execution if the conditions (stack layout, compiler mitigations, ASLR, stack canaries) allow

- Affected versions: 4.1.0 (and likely some earlier 4.x snapshots); patched in 4.1.1 and backported maintenance releases per the Fluent Bit advisory.

Exploitation model and prerequisites

Exploitation requires one of the following capabilities:

- The attacker can create containers on the same host (e.g., access to a Docker daemon, an attacker-controlled container runtime, or a compromised container provisioning system).

- The attacker can influence container naming through CI/CD, container orchestration APIs, or misconfigured tenant environments.

Practical exploitation caveats:

- Memory-safety mitigations (stack canaries, ASLR, DEP/NX) can make reliable remote code execution more difficult. In many modern deployments, turning a stack overflow into reliable code execution requires additional weaknesses (e.g., disabled mitigations, predictable memory layout, or the use of unsafe build flags).

- A crash (denial of service) is trivial to cause and may be the easiest outcome in many environments; however, DoS across a cluster can still be operationally severe.

- On nodes where Fluent Bit runs as root—or is otherwise able to access host resources—successful exploitation could permit local privilege escalation and persistent footholds.

Confirmed technical specifics

Independent sources converge on the key technical claims:

- NVD summarizes the defect as an unsafe copy into a fixed-size stack buffer.

- The Fluent Bit project’s security advisory and release notes confirm a stack-buffer overflow in in_docker and list remediation in v4.1.1 and backports. The advisory enumerates a set of related fixes and the PR numbers used to remediate these issues.

- Multiple security vendors (Snyk, Wiz, PT Security / dbugs) and CERT entries analyze the impact, propagation, and recommended remediations, and report a high CVSS score around 8.8.

What operators must do now: mitigation and remediation checklist

The single most effective action: update Fluent Bit to a patched version.

- Immediate remediation:

  - Update Fluent Bit to v4.1.1 or a later patched release. If you use a 4.0.x maintenance branch, upgrade to the fixed maintenance release the vendor specifies for that branch (consult your vendor’s package channel).

  - Restart Fluent Bit processes and validate that the new binary is running on all nodes.

- If you cannot patch immediately:

  - Restrict who can create containers on hosts: tighten RBAC policies, restrict Docker socket access (docker.sock), and lock down CI/CD pipelines that can create named containers.

  - Run Fluent Bit with least privilege: avoid running the agent as root; use a dedicated unprivileged user where possible.

  - Constrain Fluent Bit’s filesystem and network access with container runtime security features and the Kubernetes Pod Security Standards (enforced through Pod Security Admission; the older PodSecurityPolicy API was removed in Kubernetes 1.25): use read-only file systems, drop unnecessary capabilities, and apply seccomp/AppArmor profiles.

  - Block or monitor Docker API access: if the Docker daemon or API is exposed to users who should not create containers, restrict it behind firewalls or host-level policy.

  - Monitor for process crashes and restarts: set up alerts for Fluent Bit crashes (systemd / k8s pod / DaemonSet restarts) and unusual log gaps.

  - Hunt for suspicious container name creation events (audit logs, orchestration API calls).

  - Validate image and container metadata flows: confirm that only trusted services can create containers on nodes where Fluent Bit runs.

  - Harden your pipeline: ensure CI runners and automated creation flows sanitize container names (enforce length limits and safe character sets).

  - Use network segmentation and host isolation so a low-privilege tenant or runner cannot reach the nodes running Fluent Bit control interfaces.
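The pipeline-hardening item above (length limits and safe character sets for container names) could be sketched roughly as follows. This is a minimal illustration, not part of Fluent Bit or Docker: the function name `is_safe_container_name` and the 63-character limit are hypothetical choices, and the character class mirrors the pattern Docker reports when it rejects an invalid container name.

```python
import re

# Character rule Docker reports for container names: one alphanumeric
# character followed by one or more of [a-zA-Z0-9_.-].
DOCKER_NAME_RE = re.compile(r"[a-zA-Z0-9][a-zA-Z0-9_.-]+")

# Hypothetical policy limit chosen for illustration only. It is not a value
# taken from Fluent Bit or Docker; pick one that suits your environment.
MAX_NAME_LENGTH = 63


def is_safe_container_name(name: str, max_length: int = MAX_NAME_LENGTH) -> bool:
    """Return True if the name is short enough and uses only allowed characters."""
    if len(name) > max_length:
        return False
    return DOCKER_NAME_RE.fullmatch(name) is not None


if __name__ == "__main__":
    for candidate in ["build-runner_01", "A" * 300, "bad name; rm -rf"]:
        print(repr(candidate[:20]), is_safe_container_name(candidate))
```

A CI runner or provisioning service could call a check like this before passing a name to the container runtime and reject anything that fails. It reduces exposure from the creation side only and does not replace patching the agent.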

Patch mapping, version guidance, and validation

- Patched releases: Fluent Bit’s security advisory and release notes list fixes shipped in v4.1.1 and backported fixes for maintenance branches. Some package vendors and trackers list slightly different 4.0.x micro versions as the backport target (4.0.12, 4.0.13, or 4.0.14) — verify the exact fixed version for your distribution’s package mirror. The vendor-authored advisory is the canonical mapping for release numbers.

- How to validate:

  - Confirm the Fluent Bit binary version on each host/pod (fluent-bit --version or check container image tags).

  - Validate the container image digest if you use image pinning.

  - For Kubernetes, ensure DaemonSet rolling-updates completed successfully and check pod restart counts for anomalous spikes post-update.

  - Run configuration scanning or vulnerability scanning tools against deployed images to confirm they no longer include the vulnerable version.
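As a rough sketch of the version check described above, the snippet below parses a version string and compares it against the fixed release. It assumes the text passed in is the output of fluent-bit --version or a bare image tag containing a token of the form vX.Y.Z or X.Y.Z; `parse_version` and `is_patched` are hypothetical helper names. Because trackers disagree on the exact 4.0.x backport version, that value is deliberately left as a parameter rather than hard-coded.

```python
import re
from typing import Optional, Tuple

Version = Tuple[int, int, int]

# v4.1.1 is the fixed release named in this article for the 4.1 line.
FIXED_4_1: Version = (4, 1, 1)


def parse_version(text: str) -> Optional[Version]:
    """Extract the first X.Y.Z triple (optionally prefixed with 'v') from text.

    Pass only version output or a bare tag, not a full registry URL,
    since an IP address in the URL could be mistaken for a version.
    """
    match = re.search(r"\bv?(\d+)\.(\d+)\.(\d+)\b", text)
    if match is None:
        return None
    major, minor, patch = (int(part) for part in match.groups())
    return (major, minor, patch)


def is_patched(version: Version, fixed_4_0: Optional[Version] = None) -> Optional[bool]:
    """Return True or False when the status is known, None when it is not.

    fixed_4_0 is the fixed 4.0.x release for your distribution, taken from
    the vendor advisory. Without it, 4.0.x versions are reported as unknown.
    """
    if version >= FIXED_4_1:
        return True
    if version[:2] == (4, 1):
        return False  # 4.1.0 is listed as affected
    if version[:2] == (4, 0):
        return None if fixed_4_0 is None else version >= fixed_4_0
    return None  # other release lines are not mapped in this article


if __name__ == "__main__":
    found = parse_version("Fluent Bit v4.1.0")
    print(found, is_patched(found) if found else "unparsed")
```

Returning None for unmapped versions is a deliberate choice: an inventory script can flag those hosts for manual review against the vendor advisory instead of silently treating them as safe.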

Detection, forensics, and post-exploitation indicators

Detection is harder when an attacker aims to hide activity by modifying logs, but there are still observable signals to monitor:

- Fluent Bit process crashes and abnormal restarts (systemd logs, Kubernetes pod restarts).

- Sudden suppression, gaps, or manipulation of logs forwarded to central systems; missing time windows are a red flag.

- Unexpected new container names appearing in orchestrator audit logs or via the Docker/K8s API.

- New local binaries, persistence artifacts, or unusual outbound traffic from nodes running Fluent Bit (indicators of post-compromise staging).

- Unusual tags or malformed records forwarded to sinks (attackers sometimes tamper with tags to disrupt downstream processing).

If you suspect a node has been compromised:

- Isolate affected nodes (cordon/drain in Kubernetes).

- Preserve logs and memory captures for forensic analysis.

- Rebuild nodes from known-good images after rotating credentials used by the cluster and the logging pipeline.

- Check for lateral movement and persistence mechanisms such as new DaemonSets, CronJobs, or host crontabs that could reinstate the agent.
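Two of the signals listed earlier in this section, abnormal pod restarts and gaps in forwarded logs, lend themselves to simple automated checks. The sketch below is illustrative only: `restart_counts` assumes its input is the JSON produced by kubectl get pods -o json (where each pod carries status.containerStatuses[].restartCount), and `find_gaps` assumes you can export record timestamps from your log backend. Both function names and the thresholds are hypothetical.

```python
import json
from datetime import datetime, timedelta
from typing import Dict, List, Tuple


def restart_counts(pod_list_json: str) -> Dict[str, int]:
    """Sum container restart counts per pod from a Kubernetes pod list in JSON."""
    counts: Dict[str, int] = {}
    for pod in json.loads(pod_list_json).get("items", []):
        name = pod["metadata"]["name"]
        statuses = pod.get("status", {}).get("containerStatuses", [])
        counts[name] = sum(s.get("restartCount", 0) for s in statuses)
    return counts


def find_gaps(
    timestamps: List[datetime], max_gap: timedelta
) -> List[Tuple[datetime, datetime]]:
    """Return (start, end) pairs where consecutive records are more than max_gap apart."""
    ordered = sorted(timestamps)
    return [(a, b) for a, b in zip(ordered, ordered[1:]) if b - a > max_gap]


if __name__ == "__main__":
    start = datetime(2025, 11, 25, 12, 0, 0)
    stamps = [start, start + timedelta(seconds=30), start + timedelta(minutes=10)]
    for gap_start, gap_end in find_gaps(stamps, timedelta(minutes=5)):
        print("log gap:", gap_start.isoformat(), "->", gap_end.isoformat())
```

A restart count that climbs on one node while others stay flat, or a gap that lines up with a burst of container creation events, would be worth escalating. Neither check proves exploitation on its own.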

Broader supply-chain and cloud-provider implications

Public reporting, including analysis from independent researchers, highlights the systemic risk: Fluent Bit is widely deployed across major cloud providers, container platforms, and managed services. Vulnerabilities that allow log agents to be crashed or exploited can be weaponized to:

- Evade detection by removing or altering telemetry before it reaches centralized monitoring.

- Establish persistent footholds in multi-tenant clusters that are difficult to find if logs are suppressed.

- Move laterally inside a cloud provider environment where the agent runs on many nodes.

Responsible disclosure timeline and community response

- The vulnerability was publicly cataloged in late November 2025; NVD and other trackers populated entries shortly after.

- The Fluent Bit project published a consolidated advisory and release notes describing the fixes and the specific PRs used to harden buffer handling and input validation.

- Multiple security vendors and CERT-like entities produced advisories and detection rules while package maintainers and cloud providers pushed out patched images and configuration guidance.

Practical, prioritized action plan for administrators (30/60/90)

- First 24–72 hours (critical):

  - Inventory: identify all Fluent Bit instances (DaemonSets, host agents, managed services).

  - Patch: upgrade to Fluent Bit v4.1.1 (or the vendor-specified patched maintenance release) and roll the update to all nodes.

  - Validate: ensure updated images are running; monitor for crashes and restarts.

- First 1–2 weeks (stabilize):

  - Harden: drop unnecessary capabilities, run agents as unprivileged users, apply seccomp/AppArmor/Pod Security controls.

  - Audit: ensure Docker API access is restricted; rotate any CI/CD or automation credentials that can create containers.

  - Detection: implement log-integrity checks and alerts for missing telemetry or unusual tag patterns.

- First 30–90 days (sustain and learn):

  - Bake fixes into infrastructure-as-code: pin to patched images and add vulnerability checks in CI pipelines.

  - Review operational policies: limit who can create containers and enforce sanitization of container names.

  - Run threat hunting: look for signs of past tampering in logs and cluster events; check for unusual new objects (DaemonSets, Jobs).
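For the image-pinning step in the plan above, a CI job could flag image references that are not pinned to a digest. The following is a minimal sketch under the assumption that references are available as plain strings (for example, extracted from manifests); `unpinned_images` is a hypothetical helper name, and the registry host in the usage example is a placeholder.

```python
import re
from typing import List

# A digest-pinned reference ends in "@sha256:" followed by 64 hex characters.
DIGEST_RE = re.compile(r"@sha256:[0-9a-f]{64}$")


def unpinned_images(image_refs: List[str]) -> List[str]:
    """Return the image references that are not pinned to a sha256 digest."""
    return [ref for ref in image_refs if DIGEST_RE.search(ref) is None]


if __name__ == "__main__":
    refs = [
        "registry.example.invalid/fluent-bit@sha256:" + "0" * 64,  # placeholder digest
        "registry.example.invalid/fluent-bit:latest",
    ]
    for ref in unpinned_images(refs):
        print("not pinned:", ref)
```

A digest pin only guarantees that the same image is deployed everywhere. It says nothing about whether that image contains the fix, so a check like this belongs alongside a vulnerability scan rather than in place of one.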

Strengths of the vendor response and outstanding risks

What went right:

- The Fluent Bit upstream team published a clear advisory and released patched versions in a matter of weeks.

- Multiple security vendors and database trackers quickly profiled the issue, produced detection guidance, and recommended mitigations.

- Cloud vendors and managed service operators initiated internal remediation on high-priority fleets.

Outstanding risks:

- Inconsistent backport micro-version numbering across community trackers caused confusion for some operators; confirm the exact fixed package for your distribution.

- The exploitability window still depends on environment-specific factors (privilege separation, mitigations), and there is limited public evidence of widespread, successful exploitation — but absence of public PoC is not the same as absence of risk. Multiple advisories urge patching regardless.

- Multi-tenant and poorly segmented clusters are the highest risk; if an attacker can create containers on nodes that run Fluent Bit, they may often obtain the precondition necessary to exploit this bug.

Final assessment and conclusion

CVE-2025-12970 is a textbook example of how a minor implementation oversight, an unchecked string copy, can become a major operational hazard when it affects an agent that runs across an entire fleet. The defect is straightforward to describe and, under permissive conditions, straightforward to exploit for denial-of-service and potentially more serious outcomes.

The remedial path is also clear: update to the patched Fluent Bit release, harden agent runtime privileges, restrict container creation capabilities, and add detection for agent crashes and log tampering. Operators and platform teams must treat telemetry infrastructure as critical security infrastructure: the incident underlines that agents which touch logs and metrics deserve prioritized patching, hardened configurations, and proactive monitoring.

For teams running Fluent Bit at scale, the immediate priorities are inventory, patching, and restriction of container-creation privileges across your build and runtime environments. Apply the vendor guidance, validate your installed versions, and treat any unusual Fluent Bit behavior as a high-priority incident until you can confirm that all nodes are running patched, hardened agents. Community discussions and forum activity reflect the urgency and the operational challenges — patch quickly, but patch carefully and validate fully.

Source: MSRC Security Update Guide - Microsoft Security Response Center