CVE-2026-23313 is a deceptively small Linux kernel fix with outsized value for anyone tracking networking stack reliability, especially in enterprise and virtualized environments. Microsoft’s Security Update Guide identifies the issue as “i40e: Fix preempt count leak in napi poll tracepoint,” which points straight at Intel’s 700-series Ethernet driver and a bug in a tracepoint path that should never have disturbed kernel preemption accounting in the first place. The vulnerability entry sits inside Microsoft’s broader update-guide ecosystem, which continues to surface CVEs assigned by industry partners and to publish machine-readable vulnerability data for faster remediation.

Overview

The i40e driver is Intel’s Linux network driver for 700-series Ethernet hardware, and it is widely used in servers, hypervisors, and high-throughput network appliances. In practice, that makes any flaw in its polling, tracing, or interrupt-handling paths worth attention even when the bug does not look like a classic memory-corruption event. Intel’s driver documentation shows that i40e is a mature, feature-rich driver rather than a niche add-on, which helps explain why kernel maintainers are careful about even seemingly minor correctness defects.

The phrase “preempt count leak” is the real signal here. In Linux, preemption accounting is part of the kernel’s internal bookkeeping for determining when a task may be rescheduled; if that count leaks upward, the system can behave as though it is still inside a critical region. The kernel documentation on preemption makes clear that preemption control is tightly coupled to correctness, and that the preempt counter is not decorative state: it is core scheduler machinery.

What makes this CVE interesting is that it lives in a tracepoint, not a packet parser. Tracepoints are supposed to be observability hooks, not functional side effects. The Linux kernel docs emphasize that tracepoints are part of the event-tracing infrastructure and are meant to be used without invasive instrumentation, which is exactly why a bookkeeping leak in that path is so easy to overlook during routine testing.

Microsoft’s disclosure model also matters. Since 2024, MSRC has been adding machine-readable CSAF alongside its existing vulnerability data channels, while continuing to publish CVEs through the Security Update Guide. That means a CVE like this can land in enterprise tooling more quickly and with more consistent metadata than in the older, more manual workflow.

What the Bug Is Really About

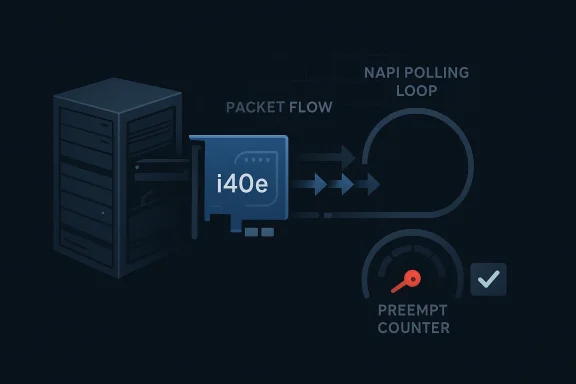

At a technical level, the bug description implies that the NAPI poll tracepoint in i40e temporarily altered preemption state and failed to restore it properly. NAPI is Linux’s polling mechanism for packet processing, and it exists to reduce interrupt overhead under heavy traffic. That makes the polling path performance-sensitive, which is precisely the kind of place where a subtle accounting mistake can linger for a long time before someone notices a side effect.

Why a tracepoint can still be dangerous

Tracepoints are often treated as passive observers, but in kernel code they still execute in a live scheduling context. The kernel docs on tracepoints show that these hooks are integrated into the event system, and the preemption docs explain that any imbalance in preempt_disable/preempt_enable semantics can distort scheduling behavior. In other words, observability code is still kernel code, and kernel code always has rules.

The key operational risk is not necessarily immediate corruption; it is state imbalance. If the preempt count leaks, the scheduler may think it is not safe to preempt when it actually is. That can produce latency spikes, soft lockup symptoms, or hard-to-reproduce timing anomalies, especially under load or in kernels that already have a lot of tracing enabled.

This is also why the wording matters. A “count leak” is not the same as a crash bug, but it can still destabilize a system in ways that are worse for operators because they are intermittent and workload-dependent. Those are the bugs that make troubleshooting expensive and make tracing, ironically, look guilty even when it is just the messenger.

- The bug is in the NAPI poll tracepoint path, not the packet payload path.

- The symptom is a preemption accounting imbalance, not a straightforward buffer overflow.

- The likely outcome is scheduler and latency disruption, especially under heavy networking load.

- Tracepoints are supposed to be low-impact, so a leak here is especially undesirable.

- The i40e path is performance-critical enough that small defects can have disproportionate consequences.
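
To make the imbalance concrete, here is a minimal user-space sketch in Python. It is not kernel code and not the actual i40e patch. It assumes one common way a leak of this kind can arise: a trace hook reads the current CPU through a helper modeled on the kernel's get_cpu(), which disables preemption, and never calls the matching put_cpu(). Whether that is the exact mechanism behind this CVE is not stated in the advisory text quoted here. ToyCpu, both hook functions, and run_polls are hypothetical names invented for illustration.

```python
class ToyCpu:
    """User-space stand-in for one CPU's preemption counter (not kernel code)."""

    def __init__(self):
        self.preempt_count = 0

    def get_cpu(self):
        # Models the kernel's get_cpu(): disable preemption, return the CPU id.
        self.preempt_count += 1
        return 0

    def put_cpu(self):
        # Models put_cpu(): re-enable preemption.
        self.preempt_count -= 1

    def current_cpu_id(self):
        # Models a plain read of the CPU id that leaves the counter untouched.
        return 0

    def preemptible(self):
        # Simplification: the real kernel check also considers interrupt state.
        return self.preempt_count == 0


def leaky_trace_hook(cpu):
    # Hypothetical hook: takes the CPU id but never calls put_cpu().
    return {"cpu": cpu.get_cpu()}


def balanced_trace_hook(cpu):
    # Hypothetical hook: records the CPU id without touching the counter.
    return {"cpu": cpu.current_cpu_id()}


def run_polls(hook, polls, tracing_enabled):
    """Simulate `polls` poll iterations and return the final toy preempt count."""
    cpu = ToyCpu()
    for _ in range(polls):
        if tracing_enabled:
            hook(cpu)
    return cpu.preempt_count


if __name__ == "__main__":
    print("leaky, tracing on:   ", run_polls(leaky_trace_hook, 1000, True))
    print("leaky, tracing off:  ", run_polls(leaky_trace_hook, 1000, False))
    print("balanced, tracing on:", run_polls(balanced_trace_hook, 1000, True))
```

In this toy model the counter only drifts when the hook actually fires, which is one reason a defect of this shape can pass testing on systems where tracing is switched off.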

Why i40e Matters

Intel’s i40e driver sits in a class of networking code that tends to be deployed where performance, virtualization density, and operational stability all matter at once. In those environments, even a bug that only affects accounting can become a production concern because networking drivers are already on the hottest execution paths in the kernel.

The 700-series context

The kernel documentation for Intel’s Ethernet 700-series driver shows that the i40e stack is a long-lived, production-oriented component. That is a clue about impact: bugs in this family do not stay confined to lab systems or toy hardware; they show up in servers, storage networks, virtual switches, and other infrastructure where packet volume is high and latency budgets are tight.

That matters because the more traffic a system handles, the more often it enters the exact code paths where a tracepoint can fire. A defect that is rare on a lightly used workstation may become very visible on a busy NIC in a datacenter. The scale of deployment turns a tiny kernel imbalance into a fleet-level risk profile.

The broader lesson is that driver bugs are not just driver bugs. In the Linux kernel, network drivers sit at the intersection of interrupt handling, softirq processing, polling, tracing, and scheduling. A fault in any one of those layers can ripple across the others, especially when the code is designed for maximum throughput and minimum overhead.

- High-throughput NIC drivers amplify small timing defects.

- Server deployments are more likely than desktops to exercise these code paths continuously.

- Virtualization stacks often depend on stable NIC behavior under sustained load.

- Tracing and performance monitoring are common in exactly the environments that run i40e.

- A “minor” bookkeeping bug can still become a fleet-wide incident if it lands in a hot path.

NAPI, Polling, and Scheduler Semantics

NAPI is one of the Linux networking stack’s most important performance mechanisms. It lets the kernel switch from interrupt-heavy packet processing to a poll-based model when traffic rises, which improves scalability and reduces interrupt storms. That design is efficient, but it also means the NAPI poll loop is a highly sensitive execution region where preemption discipline has to be correct every time.

Why preemption accounting is fragile here

The kernel’s preemption documentation explains that preempt counts are used to determine when scheduling may safely occur. If a path increments the count and fails to decrement it properly, the kernel can carry that state forward beyond the intended critical section. In an ordinary code path that might be annoying; in NAPI polling, it can distort timing across packet bursts and tracing hooks.

What makes tracepoint-related bugs difficult is that they often do not show up as obvious functional failures. The packet still arrives. The interface still comes up. The system still passes a smoke test. But internal accounting has shifted, and that can surface later as latency jitter, stuck polling behavior, or a scheduler that behaves less predictably than it should.

This is exactly the kind of issue that kernel maintainers tend to fix early. The Linux kernel community has long treated preemption correctness as foundational, because once scheduling assumptions are wrong, a lot of downstream debugging becomes misleading. A tracepoint leak is dangerous not because it is flashy, but because it quietly erodes trust in the execution model.

Practical implications for operators

For operators, this means the bug is most relevant where packet processing is both busy and closely observed. Network monitoring, packet capture, performance tracing, and heavy virtualization all increase the odds that this path matters in production. That is why “just a tracepoint” is the wrong mental model here; in a modern kernel, tracepoints sit close enough to critical paths that they deserve the same discipline as functional code.

- NAPI is designed to improve scaling, so its internals are inherently performance-sensitive.

- Preemption state must remain balanced across entry and exit points.

- Tracepoints should not alter functional scheduling behavior.

- Bugs in hot networking paths can have outsized operational impact.

- Monitoring-heavy environments are more likely to expose tracepoint side effects.
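
The balance requirement in the list above can be expressed as a simple before-and-after check around a region of code. The sketch below is a user-space illustration only: the state dictionary, expect_balanced, PreemptImbalance, and the two region functions are hypothetical names and do not correspond to kernel APIs.

```python
from contextlib import contextmanager


class PreemptImbalance(Exception):
    """Raised when a wrapped region exits with a different count than it entered."""


@contextmanager
def expect_balanced(state, label):
    # Snapshot the toy counter, run the wrapped region, then compare.
    before = state["preempt_count"]
    yield
    after = state["preempt_count"]
    if after != before:
        raise PreemptImbalance(
            f"{label}: entered with preempt_count={before}, exited with {after}"
        )


def balanced_region(state):
    state["preempt_count"] += 1  # stands in for preempt_disable()
    state["preempt_count"] -= 1  # stands in for preempt_enable()


def leaky_region(state):
    state["preempt_count"] += 1  # disable with no matching enable


def check(region):
    """Return None if the region is balanced, else the diagnostic message."""
    state = {"preempt_count": 0}
    try:
        with expect_balanced(state, region.__name__):
            region(state)
    except PreemptImbalance as exc:
        return str(exc)
    return None


if __name__ == "__main__":
    print(check(balanced_region))
    print(check(leaky_region))
```

The point of the design is that the check lives at the boundary of the region rather than inside it, so it catches an imbalance regardless of which call inside the region caused it.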

Security Impact and Severity

This CVE should be treated as a kernel correctness and stability issue first, and a security issue second, unless additional vendor guidance says otherwise. There is no indication in the available description that the flaw is an obvious remote code execution primitive or a direct memory corruption path. Instead, the concern is that kernel accounting can drift in a way that affects scheduling and reliability.

Why “non-exploit” bugs still matter

Kernel vulnerabilities are not limited to attack chains that end in shell access. Microsoft and the Linux kernel ecosystem both track defects that affect correctness, portability, and scheduling behavior because those defects can still be security relevant when combined with other conditions. A system that behaves unpredictably under load is harder to defend, harder to monitor, and harder to trust.

That is especially true in infrastructure environments. If a network driver’s polling path holds on to preemption state too long, the resulting latency changes can affect other services on the same host. On a busy server, that can translate into performance degradation that looks like a networking problem, a scheduler problem, or a general system health problem depending on where you start looking.

The likely security posture is therefore conservative but not alarmist: patch it, test it, and keep an eye on whether vendors classify it as a reliability bug, a denial-of-service risk, or something broader. When a kernel issue touches accounting and tracing rather than memory safety, precision matters more than drama.

How to think about exposure

Exposure is highest on systems that actually use the affected driver and exercise the NAPI polling path heavily. In most WindowsForum readers’ environments that may mean servers, cluster nodes, hypervisors, or appliances rather than consumer desktops. The bug is real, but it is not the kind of issue that should trigger panic on a random laptop that never touches Intel 700-series server NICs.

- Treat it as a real kernel update item.

- Do not assume “tracepoint” means “low relevance.”

- Expect the strongest impact in server and infrastructure workloads.

- Avoid overstating exploitability without vendor confirmation.

- Prioritize systems using i40e or closely related Intel server networking stacks.
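
One quick way to scope exposure on a Linux host is to list the interfaces whose bound driver is i40e. The sketch below relies on the standard sysfs layout, where /sys/class/net/<iface>/device/driver is a symlink to the driver's directory. The function name and the sysfs_root parameter are illustrative; the parameter exists only so the logic can be exercised against a test directory.

```python
import os


def interfaces_bound_to(driver, sysfs_root="/sys/class/net"):
    """Return sorted interface names whose bound kernel driver matches `driver`."""
    matches = []
    if not os.path.isdir(sysfs_root):
        return matches  # not a Linux host, or sysfs is not mounted here
    for iface in sorted(os.listdir(sysfs_root)):
        link = os.path.join(sysfs_root, iface, "device", "driver")
        if not os.path.islink(link):
            continue  # virtual interfaces such as lo have no bound driver
        # The symlink target's last path component is the driver name.
        if os.path.basename(os.path.realpath(link)) == driver:
            matches.append(iface)
    return matches


if __name__ == "__main__":
    found = interfaces_bound_to("i40e")
    if found:
        print("i40e-bound interfaces:", ", ".join(found))
    else:
        print("no i40e-bound interfaces found")
```

An empty result only means no interface currently uses the driver. It says nothing about whether the running kernel already carries the fix, which still has to be confirmed against the vendor's advisory.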

Microsoft’s Role in the Disclosure

Microsoft’s Security Update Guide has become a more flexible disclosure platform over time, especially after the addition of industry-partner CVEs and machine-readable advisory formats. That matters here because the CVE is not just a Linux kernel fix in isolation; it is also an enterprise-distribution event that lands inside Microsoft’s vulnerability cataloging and response workflows.

Why this matters to enterprise security teams

For large organizations, the real value of the MSRC system is normalization. A Linux kernel issue can be surfaced, tracked, and triaged alongside Windows, cloud, and application vulnerabilities in one operational process. That reduces the friction of cross-platform remediation, which is increasingly important in mixed estates that run both Microsoft services and Linux infrastructure.

The 2024 MSRC announcements about CSAF and cloud-service CVEs show a clear direction: more machine-readable, more integrated, and more automation-friendly disclosure. For security teams, that means a CVE like this can move from awareness to inventory matching faster, which is exactly what modern patch operations need.

There is also a documentation lesson here. Microsoft’s update-guide ecosystem is no longer just a static listing of vulnerabilities; it is a live feed into vulnerability-management tooling, advisory ingestion, and response automation. That makes the quality of the metadata around a CVE almost as important as the patch itself.

- Cross-platform vulnerability tracking is now a practical necessity.

- Machine-readable advisory formats reduce manual triage work.

- Enterprise remediation depends on consistent identifiers and metadata.

- Linux kernel issues can appear in Microsoft-facing workflows when they affect managed infrastructure.

- Better disclosure plumbing shortens the distance between publication and patching.
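
For teams that ingest advisories programmatically, the sketch below shows how the product status for one CVE could be pulled out of a CSAF 2.0 document. The vulnerabilities, cve, and product_status fields follow the CSAF 2.0 schema. The sample document and its product IDs are invented for illustration and are not taken from Microsoft's actual advisory for this CVE; product_status_for is a hypothetical helper name.

```python
import json


def product_status_for(csaf_doc, cve_id):
    """Return known_affected and fixed product IDs for `cve_id`, or None if absent."""
    for vuln in csaf_doc.get("vulnerabilities", []):
        if vuln.get("cve") == cve_id:
            status = vuln.get("product_status", {})
            return {
                "known_affected": list(status.get("known_affected", [])),
                "fixed": list(status.get("fixed", [])),
            }
    return None


# Invented sample data: the product IDs below are placeholders, not real products.
SAMPLE_CSAF = json.loads("""
{
  "document": {"title": "Illustrative advisory (invented sample data)"},
  "vulnerabilities": [
    {
      "cve": "CVE-2026-23313",
      "product_status": {
        "known_affected": ["EXAMPLE-KERNEL-A"],
        "fixed": ["EXAMPLE-KERNEL-B"]
      }
    }
  ]
}
""")


if __name__ == "__main__":
    print(product_status_for(SAMPLE_CSAF, "CVE-2026-23313"))
```

In a real pipeline the returned product IDs would still need to be resolved against the advisory's product tree and matched to the local inventory, which is where consistent identifiers pay off.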

Why This Fix Is Likely Small but Important

The most interesting kernel fixes are often the ones that change very little code but correct a fundamental assumption. A preempt count leak in a tracepoint falls squarely into that category: the patch is probably tiny, but the conceptual correction is large. It restores the boundary between “observe” and “affect,” which is one of the kernel’s most important design promises.

The engineering tradeoff

Kernel maintainers generally prefer the least disruptive fix that fully resolves the underlying problem. If a tracepoint accidentally disturbs preemption state, the right answer is usually not to redesign the entire driver; it is to remove the side effect, restore balance, and preserve performance. That is especially true in networking code, where additional locking can be more expensive than the bug itself.

This is where surgical fixes shine. They minimize regression risk, they are easier to backport, and they are usually easier for vendors to incorporate into stable branches. Microsoft’s own vulnerability ecosystem, including its update-guide tooling and CSAF publication, is built to distribute exactly these kinds of fixes efficiently across fleets.

The broader implication is that a lot of modern kernel security work is really about making hidden assumptions explicit. If a tracepoint ever behaves as if it were part of the scheduling core, that is a design smell. The repair is not just to eliminate a leak, but to make the boundary visible again.

Operational upside

For administrators, a small patch is good news because it usually carries less rollout risk than a structural refactor. That does not mean “safe to ignore”; it means easier to stage, easier to validate, and easier to backport into vendor kernels. In a fleet, that is often the difference between patching this week and punting to the next maintenance window.

- Small code changes often indicate a well-contained fix.

- Backporting is usually easier when the remediation is surgical.

- Tracepoint side effects are easier to remove than to redesign.

- Reduced patch complexity lowers operational risk.

- Fixes that preserve behavior while removing side effects are ideal for stable kernels.

Strengths and Opportunities

This CVE actually reveals several strengths in the Linux and Microsoft disclosure ecosystem. It shows that sanitizers, tracepoints, stable kernel review, and enterprise vulnerability distribution are all working together to surface and move a subtle bug before it turns into a broader incident. The opportunity for defenders is to use this as a reminder to audit similarly “non-functional” code paths that still live close to scheduler and network hot paths.

- Early detection through kernel accounting and tracing discipline.

- Low patch surface, which usually helps stable backports.

- Clear subsystem ownership in the i40e networking stack.

- Enterprise-friendly disclosure through Microsoft’s update guide.

- Better fleet visibility when vulnerability data is machine-readable.

- Opportunity to audit adjacent tracepoints for similar preemption side effects.

- Improved confidence in the NAPI polling path after remediation.

Risks and Concerns

The biggest concern is not that this one bug is catastrophic; it is that bugs like this are easy to dismiss until they accumulate. A preempt count leak can create latent instability, and because it sits in a tracepoint, the symptoms may be blamed on observability tooling rather than on the underlying driver. That makes it a classic “small bug, expensive diagnosis” problem.

- Latency spikes or scheduling anomalies under heavy traffic.

- Misattribution of symptoms to tracing or monitoring tools.

- Hard-to-reproduce behavior in large fleets.

- Greater sensitivity on server NICs than on casual desktop systems.

- Potential for adjacent bugs if similar code patterns exist elsewhere.

- Operational confusion if different vendors describe the severity differently.

- Delayed remediation if administrators underestimate the impact of a tracepoint leak.

Looking Ahead

The next thing to watch is how downstream vendors classify and backport the fix. Because Microsoft now publishes CVE data through a more machine-readable and integrated model, the operational question is less about whether the CVE exists and more about how quickly the fixed builds propagate into real-world Linux deployments.

A second question is whether maintainers use this as a prompt to review other network-driver tracepoints for similar preemption side effects. That would be the mature response: not treating the bug as an isolated oddity, but as evidence that a particular design pattern deserves closer scrutiny. In large kernel subsystems, one accounting mistake is often the clue to a broader family of risks.

What administrators should watch

- Vendor backports for i40e across stable kernel branches.

- Any follow-up advisories that clarify impact or severity.

- Evidence of similar issues in adjacent networking tracepoints.

- Patch availability in distributions that target server and virtualization workloads.

- Changes in fleet telemetry after the fix is deployed.

The larger lesson is that kernel security is often won not by dramatic exploit narratives, but by relentless attention to bookkeeping, scheduling, and hot-path correctness. CVE-2026-23313 is a good example of that pattern: small in code, meaningful in consequence, and exactly the kind of issue that mature infrastructure teams should respect before it has a chance to become a recurring problem.

Source: MSRC Security Update Guide - Microsoft Security Response Center