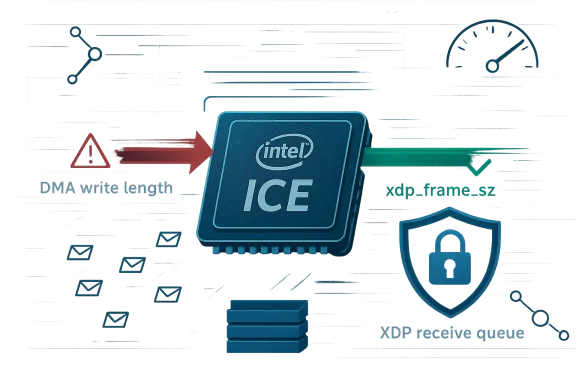

CVE-2026-23377 is a Linux kernel networking issue in Intel’s ice driver, and the patch title itself gives away the core of the problem: the XDP receive queue’s fragment size was being derived from the DMA write length instead of the actual xdp.frame_sz. That sounds small, but in high-performance packet paths, small mismatches between hardware-facing buffer accounting and software-facing frame sizing can become correctness bugs, performance regressions, or even memory-safety hazards. The change has now surfaced in Microsoft’s Security Update Guide entry for CVE-2026-23377, which is a reminder that kernel networking defects can ripple far beyond a single driver tree.

For WindowsForum readers, the interesting part is not just the CVE label, but what it says about the evolving shape of network-stack hardening. Modern NICs, especially those used for cloud, virtualization, and high-throughput packet processing, rely on highly optimized fast paths where XDP, AF_XDP, and DMA-backed buffers must agree on exact sizes and ownership rules. When those layers disagree, the result is often subtle at first and then expensive later: dropped packets, corrupted metadata, broken zero-copy assumptions, or an exploitable out-of-bounds condition.

Background

The ice driver is Intel’s Linux Ethernet driver for E800-series hardware and related deployments where XDP and zero-copy packet processing matter. In that environment, the receive path is tuned for speed, and the software stack tries to keep packet buffers aligned with what the NIC and DMA engine actually consumed or wrote. The patch title indicates that the driver previously treated the DMA write length as the authoritative fragment size, which is not always the same thing as the frame size the XDP subsystem expects to manage.

That distinction matters because DMA write length reflects how much the device transferred, while xdp.frame_sz reflects the intended frame allocation seen by the XDP memory model. In fast-path code, a mismatch can distort packet accounting, especially when pages are shared, fragmented, or recycled in place. The current patch discussion suggests Intel and the Linux networking community are correcting the driver to use the XDP frame size as the canonical value, which is usually a sign that the earlier code path was coupling hardware transfer semantics too tightly to software buffer semantics.
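To make the distinction concrete, here is a minimal toy model in Python. It is not driver code: the class name, field names, and byte counts are all illustrative assumptions. It treats one receive buffer slot as a fixed-size frame with space reserved at the front and back, and shows that the area the device may write into is smaller than the frame the XDP memory model accounts for.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RxBufferLayout:
    """Hypothetical layout of one receive buffer slot (sizes in bytes)."""

    frame_sz: int      # full slot, as the XDP memory model sees it
    headroom: int      # reserved in front of the packet data
    tail_reserve: int  # reserved at the end of the slot for metadata

    @property
    def dma_write_len(self) -> int:
        """Largest number of bytes the device may write into the slot."""
        return self.frame_sz - self.headroom - self.tail_reserve


# Illustrative numbers only; real values depend on the driver and page size.
layout = RxBufferLayout(frame_sz=4096, headroom=256, tail_reserve=320)

print("frame size:      ", layout.frame_sz)
print("DMA write length:", layout.dma_write_len)
```

In this sketch the two values differ by the reserved headroom and tail space, so any code that substitutes one for the other is off by exactly that amount.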

Why this class of bug keeps appearing

High-performance drivers often optimize for the common case, and the common case is not always the safe case. A fast NIC path may skip redundant checks, reuse descriptor metadata, or infer buffer size from the most immediately available field. That is efficient until the underlying abstraction changes, at which point the “obvious” field is no longer the right one.

- DMA and frame sizing solve different problems.

- XDP prefers explicit frame accounting.

- Zero-copy paths magnify the cost of any mismatch.

- Driver optimizations can outlive the assumptions they were written for.

What the Patch Actually Changes

The Linux patch title is concise, but it reveals the important fix: ice should set XDP RxQ fragment size from xdp.frame_sz rather than the DMA write length. That suggests the receive queue’s metadata previously reflected what the device wrote, not what the XDP subsystem allocated or expected to recycle. The correction aligns queue bookkeeping with the XDP memory contract, which is a classic example of making the software abstraction, not the hardware artifact, the source of truth.

The significance of source-of-truth alignment

When a driver uses the wrong reference value, it may still work for common MTU sizes and familiar traffic patterns. But packet paths become fragile when frame recycling, fragment boundaries, or unusual frame sizes enter the picture. By tying frag_size to xdp.frame_sz, the driver reduces the chance that a packet buffer is interpreted differently by the NIC-facing logic and the XDP-facing logic.

- Better buffer accounting.

- More predictable XDP behavior.

- Reduced reliance on DMA-side heuristics.

- Cleaner interaction with zero-copy workloads.
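A rough way to see why the source of frag_size matters is to compute how much room is left behind the data in a fragment under each choice. The sketch below is a simplified model, not the kernel's implementation: the function name, the subtraction it performs, and every number are assumptions chosen for illustration.

```python
def last_frag_tailroom(frag_size: int, frag_offset: int, frag_len: int) -> int:
    """Bytes left after the data in a fragment, given the queue's frag_size.

    Simplified model: the data starts frag_offset bytes into the slot and
    occupies frag_len bytes; whatever remains of frag_size is tailroom.
    """
    return frag_size - frag_offset - frag_len


# Illustrative values: a 4096-byte slot of which the device may fill 3520.
FRAME_SZ = 4096
DMA_WRITE_LEN = 3520
FRAG_OFFSET = 256  # data starts after the reserved headroom
FRAG_LEN = 3520    # the device filled the whole writable area

# Queue metadata derived from the DMA write length (the old behaviour,
# according to the patch title).
old_tailroom = last_frag_tailroom(DMA_WRITE_LEN, FRAG_OFFSET, FRAG_LEN)

# Queue metadata derived from the frame size (the corrected behaviour).
new_tailroom = last_frag_tailroom(FRAME_SZ, FRAG_OFFSET, FRAG_LEN)

print("tailroom from DMA write length:", old_tailroom)
print("tailroom from frame size:      ", new_tailroom)
```

Under these assumed numbers the DMA-derived value yields a negative tailroom, which no real buffer can have, while the frame-size value yields the space that actually remains in the slot.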

Why XDP Makes This More Sensitive

XDP sits in a privileged position in the packet path. It is designed to process packets very early, before the rest of the networking stack gets involved, which is exactly why it is attractive for performance-sensitive applications. But the earlier a packet is handled, the less room there is for sloppy metadata, because there are fewer later checks to catch mistakes.

Zero-copy raises the stakes

In zero-copy architectures, packets are not copied as often between hardware and software, so the same memory may be shared conceptually across multiple layers. If the fragment size is overstated, understated, or derived from the wrong field, the driver can step outside the intended contract for the buffer. That can manifest as data corruption, malformed packet delivery, or a security boundary failure depending on how downstream code consumes the metadata.

- Zero-copy reduces overhead.

- Zero-copy reduces redundancy.

- Zero-copy also reduces safety margins.

- Buffer metadata must be exact, not approximate.

Security impact versus reliability impact

Not every driver bookkeeping issue is automatically exploitable, and that caution matters here. The public patch title alone does not prove exploitability, and it would be irresponsible to overstate the case. Still, the assignment of a CVE, and its listing in Microsoft’s Security Update Guide, means the issue is being tracked as a vulnerability rather than as a mere correctness fix.

Microsoft’s Decision to Track It

Microsoft’s Security Update Guide entry is notable because it places a Linux driver fix into the broader vulnerability-tracking ecosystem that Windows administrators watch closely. That does not mean the issue affects Windows directly in every case; rather, it shows the cross-platform significance of a network-driver flaw that is important enough to warrant formal CVE handling. In practice, security teams should treat it as a signal that packet-processing correctness is under scrutiny across the industry.

What administrators should infer

For operators, the main lesson is that driver-level packet handling is part of the attack surface, even when the affected code lives in a Linux tree rather than a Windows kernel. Enterprises that rely on Intel network hardware in mixed OS environments should care because the same silicon, the same DMA behavior, and the same packet-processing expectations often show up in multiple stacks. When a defect appears in one ecosystem, it can foreshadow engineering attention elsewhere.

- The CVE underscores cross-platform network-driver risk.

- Packet-path bugs can have enterprise consequences.

- Shared hardware often means shared classes of failure.

- Security teams should track vendor driver advisories, not just OS patches.

Competitive and Market Implications

Intel’s networking stack is deeply embedded in cloud infrastructure, telco deployments, appliance vendors, and high-speed packet processing environments. Any driver fix that touches XDP or zero-copy behavior therefore matters to competitors and customers alike, because these are precisely the scenarios where organizations choose hardware based on packet efficiency and software maturity. A frag_size correction may look minor, but in a market where microseconds and cache misses matter, even small stability problems can influence procurement decisions.

Why rivals should care

Competing NIC vendors do not need the same bug to suffer the same scrutiny. Once one major driver family is shown to need a semantics correction in the XDP path, customers begin asking tougher questions about how every vendor handles buffer sizing, metadata propagation, and DMA boundaries. That can accelerate code audits across the broader ecosystem.

- Cloud buyers become more selective about driver updates.

- OEMs may revisit their validation matrices.

- Kernel teams can expect more review of XDP accounting.

- Security teams may expand regression testing for packet paths.

Enterprise versus consumer impact

For consumers, this kind of issue is largely invisible unless it ships in a consumer-facing NIC driver or causes broad system instability. For enterprises, especially those running hypervisors, packet brokers, NAT appliances, or high-throughput edge nodes, the stakes are much higher. These operators may depend on XDP not for novelty but for workload economics, and a fragile queue-sizing bug can translate directly into wasted capacity or unexpected downtime.

Technical Reading of the Vulnerability

At a technical level, the CVE appears to concern a mismatch between the size of the DMA operation and the size of the XDP frame abstraction. That mismatch matters because the receive queue’s frag_size informs how packet buffers are treated after the device writes into them. If the queue reports the wrong size, later code may trust metadata that no longer corresponds to the actual allocation semantics.

How bugs like this tend to surface

These issues are usually discovered by code review, fuzzing, or integration testing rather than by dramatic crash signatures. The reason is simple: packet paths are built to avoid overhead, so they do not always fail loudly when the metadata is slightly wrong. Instead, they fail in ways that look like intermittent packet drops, weird offsets, or difficult-to-reproduce memory issues.

- Incorrect size propagation.

- Metadata inconsistency.

- Edge-case packet handling.

- Potential memory-safety fallout.
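One hypothetical route from a wrong size to memory-safety fallout is unsigned arithmetic. The sketch below assumes, purely for illustration, that a tailroom value is computed by subtraction and stored in an unsigned 32-bit variable before a bounds check. The helper names and numbers are invented, and nothing here claims to reproduce the affected kernel code path.

```python
U32_MASK = 0xFFFFFFFF


def tailroom_u32(frag_size: int, frag_offset: int, frag_len: int) -> int:
    """Tailroom subtraction truncated to 32 bits, as C unsigned math would be."""
    return (frag_size - frag_offset - frag_len) & U32_MASK


def growth_allowed(requested: int, tailroom: int) -> bool:
    """Toy bounds check: permit growth only if it fits in the tailroom."""
    return requested <= tailroom


# frag_size taken from an (assumed) DMA write length that is too small:
# the true result is -256, which wraps to a huge unsigned value.
wrapped = tailroom_u32(frag_size=3520, frag_offset=256, frag_len=3520)

# frag_size taken from the (assumed) full frame size: a small, sane value.
sane = tailroom_u32(frag_size=4096, frag_offset=256, frag_len=3520)

print("wrapped tailroom:", wrapped)
print("sane tailroom:   ", sane)
print("1 MB growth allowed with wrapped value:", growth_allowed(1_000_000, wrapped))
print("1 MB growth allowed with sane value:   ", growth_allowed(1_000_000, sane))
```

In this model the wrapped value passes a bounds check it should fail, which is the general shape of how an accounting mismatch can stop being a bookkeeping problem and start being an out-of-bounds one.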

Why the fix is cleanly scoped

The patch title suggests a straightforward correction, which is often the best kind of security fix: narrow, auditable, and semantically obvious. Replacing DMA write length with xdp.frame_sz is the sort of change reviewers can reason about without needing a redesign of the entire receive path. In security engineering, a fix that aligns abstractions is often more valuable than a broad workaround that merely hides symptoms.

Operational Implications for Large Deployments

Enterprises running Intel-based infrastructure should care less about the CVE label in isolation and more about what it reveals about the validation burden around XDP. If your environment uses AF_XDP, packet steering, DPDK-adjacent workflows, or custom kernel networking features, you want to know exactly how receive buffers are defined and who owns the truth about their size. That is especially true in fleets where a single NIC model may be deployed across many hosts with slightly different kernel revisions.

What IT teams should review

The practical response is not panic; it is inventory discipline. Teams should identify where ice-based adapters are used, whether XDP or zero-copy is enabled, and whether vendor kernel updates have been applied in the affected branches. They should also verify that packet-processing tests include atypical frame sizes and long-running soak runs, because those are the scenarios most likely to expose accounting mismatches.

- Inventory Intel E800-series and related NIC deployments.

- Determine whether XDP or AF_XDP is enabled.

- Check kernel and driver package versions.

- Run regression tests with non-default packet sizes.

- Validate update cadence for maintenance windows.
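Parts of that checklist can be scripted. The sketch below assumes the text of `ethtool -i <interface>` has already been collected from each host, and shows one way to flag interfaces bound to the ice driver and to pick frame sizes clustered around a limit for regression runs. The sample output is made up for illustration, and the helper names are hypothetical.

```python
def parse_ethtool_info(text: str) -> dict:
    """Parse `ethtool -i` style "key: value" lines into a dictionary."""
    info = {}
    for line in text.splitlines():
        # Split on the first colon only, so values such as PCI
        # addresses keep their own colons.
        key, sep, value = line.partition(":")
        if sep:
            info[key.strip()] = value.strip()
    return info


def uses_ice_driver(text: str) -> bool:
    """True if the captured output reports the ice driver."""
    return parse_ethtool_info(text).get("driver") == "ice"


def boundary_sizes(limit: int, spread: int = 2, floor: int = 64) -> list:
    """Frame sizes just below, at, and just above a size limit."""
    return [s for s in range(limit - spread, limit + spread + 1) if s >= floor]


# Made-up sample resembling `ethtool -i` output; not taken from a real host.
SAMPLE = """driver: ice
version: 6.8.0
firmware-version: 4.40 0x8001c967 1.3534.0
bus-info: 0000:3b:00.0
"""

if uses_ice_driver(SAMPLE):
    info = parse_ethtool_info(SAMPLE)
    print("ice interface, kernel/driver version:", info["version"])
    print("sizes to test around a 1500-byte limit:", boundary_sizes(1500))
```

Sizes just above a limit are included on purpose, because they force traffic across buffer boundaries, which is where the article expects accounting mismatches to show up.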

Strengths and Opportunities

This fix has a lot going for it because it points in the direction modern networking stacks should already be moving: clearer abstractions, better metadata discipline, and tighter alignment between device behavior and software expectations. It also gives vendors an opportunity to review a broader family of XDP receive-path assumptions before they become customer-facing outages.

- Correct abstraction choice: using xdp.frame_sz is more semantically accurate than reusing DMA write length.

- Cleaner XDP accounting: the receive queue now reflects the memory model XDP expects.

- Reduced ambiguity: fewer chances for buffer-size disagreements between hardware and software.

- Better auditability: reviewers can trace the meaning of the field more easily.

- Broader ecosystem benefit: adjacent drivers can adopt the same consistency model.

- Enterprise confidence: validation efforts can focus on known packet-path contracts.

- Security posture improvement: narrowing the gap between actual and intended sizes reduces attack surface.

Risks and Concerns

The main risk is that a fix this narrow can look deceptively simple while concealing deeper assumptions elsewhere in the driver family. If one field was wrong, there may be neighboring code paths that rely on similar shortcut logic, especially in older branches or backported builds. Security teams should therefore avoid treating this as a one-line bug and moving on. That would be too optimistic.

- Residual assumptions may remain in related fast paths.

- Backport variance could produce inconsistent behavior across distributions.

- Hidden regressions are possible if downstream code relied on the old value.

- Testing gaps may miss unusual frame-size combinations.

- Operational complexity increases when only some hosts are patched.

- Performance tuning can mask correctness issues during basic validation.

- Mixed environments may make root-cause analysis harder.

Looking Ahead

The next thing to watch is how quickly distributions and vendors carry this change into supported kernels and appliance images. Because the issue is tied to driver semantics rather than a high-level protocol, backports should be straightforward in principle, but network stacks have a long history of turning straightforward backports into version-specific surprises. Teams should expect that the same CVE may look slightly different depending on the kernel branch and NIC firmware combination.

Signals to monitor

The broader signal to watch is whether more Intel networking code is normalized around XDP frame-size semantics. If that trend continues, it would indicate a deliberate cleanup of receive-path assumptions rather than an isolated remediation. That would be good news for long-term maintainability, even if it temporarily increases patch churn.

- Vendor kernel update notes.

- Downstream backport timelines.

- Related fixes in i40e and idpf.

- Regression reports from high-throughput operators.

- Any follow-on advisories for adjacent packet paths.

CVE-2026-23377 is therefore less a headline-grabbing exploit story than a window into how modern networking bugs are found, classified, and corrected. The patch itself is precise, but the lesson is broad: when high-performance drivers blur the line between what hardware wrote and what software expects, security and reliability both suffer. The good news is that the fix is conceptually right, and the industry appears to be converging on a better model where XDP frame semantics, not DMA convenience, define the truth.

Source: MSRC Security Update Guide - Microsoft Security Response Center