CVE-2026-23401 is the kind of Linux kernel vulnerability that looks modest on a scorecard but deserves close attention from anyone running KVM-based virtualization on x86 hosts. The flaw sits in KVM’s x86 memory-management code, where a shadow page table entry can be overwritten as an emulated MMIO entry without first removing the existing present mapping. The result is not a remote internet exploit, but a local virtualization bug with serious availability implications and, according to public technical discussion, potential memory-corruption consequences in host kernel context.

Background

Why this CVE matters

CVE-2026-23401 affects the Linux kernel’s KVM x86/mmu subsystem, specifically the logic that handles shadow page table entries, or SPTEs. KVM is the kernel component that turns Linux into a hardware-assisted hypervisor, powering everything from developer laptops running QEMU to enterprise virtualization stacks and cloud infrastructure.

The issue was published in early April 2026 and later enriched by NVD with a CVSS 3.1 base score of 5.5, rated Medium. That score reflects a local attack vector, low privileges required, no user interaction, unchanged scope, no confidentiality or integrity impact in the base assessment, and high availability impact.

That framing can make the bug sound routine, but virtualization bugs often need a different lens. A flaw that allows a guest-controlled path to destabilize a host hypervisor can affect multiple workloads, interrupt service availability, and complicate assumptions about isolation between tenants, lab environments, or nested virtualization layers.

Historically, KVM’s x86 MMU has been one of the most performance-sensitive and security-sensitive areas of the kernel. It must reconcile the guest’s view of memory, the host’s real memory, hardware virtualization features such as Intel EPT and AMD NPT, and Linux memory-management features like page migration, swapping, dirty tracking, and KSM.

The road to this bug

The vulnerable logic traces back to earlier KVM work intended to optimize or simplify MMIO SPTE handling. A prior commit reasoned that converting a shadow-present SPTE into an MMIO SPTE should not happen through a normal guest write path, because KVM would intercept and invalidate the relevant state.

The missing case was subtler. Host userspace, device-backed activity, or writes outside KVM’s narrow guest-write assumptions can modify guest memory in a way that changes a guest page table entry from a memory-backed mapping into an emulated MMIO mapping. When the guest later faults on the relevant address, KVM may install the MMIO SPTE while leaving behind stale bookkeeping tied to the old present SPTE.

That makes this vulnerability a classic kernel lesson: the impossible state was only impossible under the assumptions of one path. Modern virtualization is full of side paths, accelerators, DMA-like behavior, nested translations, and userspace cooperation, any of which can invalidate a neat local invariant.

What Actually Went Wrong

SPTEs, MMIO, and stale state

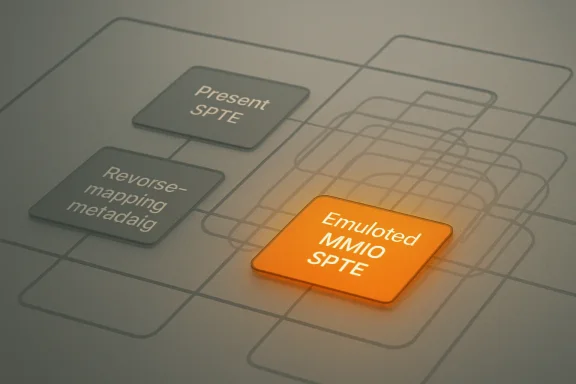

At the heart of the bug is an ordering problem. When KVM installs an emulated MMIO SPTE, it must first drop or zap any existing shadow-present SPTE occupying that slot. CVE-2026-23401 exists because KVM could instead mark the slot as MMIO without properly clearing the previous present mapping’s associated tracking state.

An SPTE is not just a raw pointer to memory. It also participates in KVM’s internal accounting, reverse mappings, dirty tracking, access permissions, and invalidation flows. If the visible entry changes but the metadata around it does not, the hypervisor can later act on stale assumptions.

That stale state matters most when other KVM paths traverse reverse mappings or zap shadow pages. A stale reverse map may point to memory that has already been freed or repurposed, which creates the familiar kernel danger zone of use-after-free behavior.

The public warning trace associated with the issue points into `mark_mmio_spte`, after KVM encounters a shadow-present SPTE where the code path does not expect one. That warning is a symptom, not the full disease: the dangerous part is the mismatch between the entry’s new MMIO role and the cleanup that should have happened first.

The simplified chain

Administrators do not need to memorize every MMU structure to understand the operational risk. The bug is about incorrect transition handling when a mapping changes meaning.

- A guest-visible page table entry may originally resolve to normal guest memory.

- KVM creates a shadow-present SPTE and associated tracking metadata.

- The guest memory backing the page table changes outside the expected KVM guest-write path.

- The same logical entry now resolves to emulated MMIO.

- KVM installs an MMIO SPTE without first removing the old present SPTE state.

- Later cleanup or tracking code can encounter stale metadata and destabilize the host kernel.
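The chain above can be illustrated with a toy model. This is a minimal sketch, not kernel code: the `ToyMMU` class, its dictionaries, and the method names `mark_mmio_buggy` and `mark_mmio_fixed` are all hypothetical stand-ins chosen for illustration, and real KVM reverse maps and SPTEs are far more involved. It only shows why overwriting a slot without first dropping the old present mapping leaves bookkeeping that no longer matches the visible entry.

```python
from dataclasses import dataclass, field


@dataclass
class ToyMMU:
    """Toy stand-in for a shadow MMU (illustrative only, not KVM's structures)."""
    sptes: dict = field(default_factory=dict)  # slot -> ("present" | "mmio", gfn)
    rmap: dict = field(default_factory=dict)   # gfn -> set of slots mapping it

    def map_present(self, slot, gfn):
        # Install a present mapping and record it in the reverse map.
        self.sptes[slot] = ("present", gfn)
        self.rmap.setdefault(gfn, set()).add(slot)

    def drop_spte(self, slot):
        # Remove the entry and, for present mappings, its reverse-map record.
        kind, gfn = self.sptes.pop(slot, (None, None))
        if kind == "present":
            self.rmap[gfn].discard(slot)
            if not self.rmap[gfn]:
                del self.rmap[gfn]

    def mark_mmio_buggy(self, slot, gfn):
        # Overwrites the slot in place; the old reverse-map record survives.
        self.sptes[slot] = ("mmio", gfn)

    def mark_mmio_fixed(self, slot, gfn):
        # Drops the old mapping and its bookkeeping before installing MMIO.
        self.drop_spte(slot)
        self.sptes[slot] = ("mmio", gfn)

    def stale_rmap_entries(self):
        """Return (gfn, slot) pairs whose slot no longer holds that present mapping."""
        return [(gfn, slot)
                for gfn, slots in self.rmap.items()
                for slot in sorted(slots)
                if self.sptes.get(slot) != ("present", gfn)]


buggy = ToyMMU()
buggy.map_present(slot=0, gfn=0x1000)
buggy.mark_mmio_buggy(slot=0, gfn=0xFEE00)

fixed = ToyMMU()
fixed.map_present(slot=0, gfn=0x1000)
fixed.mark_mmio_fixed(slot=0, gfn=0xFEE00)

print("buggy stale entries:", buggy.stale_rmap_entries())
print("fixed stale entries:", fixed.stale_rmap_entries())
```

In the buggy path the reverse map still claims that slot 0 maps the old guest frame, which is the toy equivalent of the stale metadata described above. The fixed path leaves no such record.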

Why the CVSS Score Understates the Operational Impact

Medium does not mean low priority

The 5.5 Medium score assigned by NVD is technically coherent: the attacker needs local access, low privileges are required, no user interaction is needed, and the scored impact is availability rather than data theft. In vulnerability-management dashboards, that may push the issue below remote code execution bugs and browser zero-days.

For virtualization operators, the score can be misleading. The attacker’s “local” position may be inside a guest VM, not necessarily on the host shell. In cloud, hosting, CI, malware-analysis, and lab environments, guests are often intentionally exposed to untrusted or semi-trusted code.

A hypervisor availability bug has a multiplier effect. A single host crash can affect multiple workloads, interrupt live services, and trigger noisy failover. Even if confidentiality and integrity remain unproven in the formal score, host kernel memory corruption is rarely something operators should dismiss.

The distinction is especially important for organizations using KVM as part of a larger platform. A developer workstation running one trusted VM has a very different risk profile from a multi-tenant compute node, a nested virtualization lab, or a public CI runner that launches arbitrary guest images.

Reading the vector correctly

The CVSS vector says a lot if read in context. AV:L tells us the attacker is not reaching the bug over the network directly. PR:L indicates some level of privilege is needed, while UI:N means no victim click or prompt is required once the attacker is in position.

- Attack Vector: Local. Exploitation starts from a local context, such as a guest or host-adjacent execution path.

- Attack Complexity: Low. NVD does not treat the required conditions as especially difficult.

- Privileges Required: Low. The attacker is not assumed to already be root on the host.

- User Interaction: None. The path can proceed without an administrator opening a file or accepting a prompt.

- Availability: High. A successful attack can significantly disrupt the affected system.
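To see how those metrics produce 5.5, the base score can be recomputed from the published CVSS v3.1 equations. The sketch below is a minimal version that handles only unchanged scope. The vector string is reconstructed from the metrics described above rather than copied from the NVD page, and the function names are illustrative.

```python
import math

# Metric weights from the CVSS v3.1 specification (PR values are for unchanged scope).
WEIGHTS = {
    "AV": {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2},
    "AC": {"L": 0.77, "H": 0.44},
    "PR": {"N": 0.85, "L": 0.62, "H": 0.27},
    "UI": {"N": 0.85, "R": 0.62},
    "CIA": {"H": 0.56, "L": 0.22, "N": 0.0},
}


def roundup(value):
    """Round up to one decimal place, following the specification's Roundup rule."""
    scaled = int(round(value * 100000))
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score(vector):
    """Compute a CVSS v3.1 base score for an unchanged-scope vector string."""
    m = dict(part.split(":") for part in vector.split("/"))
    if m["S"] != "U":
        raise ValueError("this sketch only handles unchanged scope")
    cia = WEIGHTS["CIA"]
    iss = 1 - (1 - cia[m["C"]]) * (1 - cia[m["I"]]) * (1 - cia[m["A"]])
    impact = 6.42 * iss
    if impact <= 0:
        return 0.0
    exploitability = (8.22 * WEIGHTS["AV"][m["AV"]] * WEIGHTS["AC"][m["AC"]]
                      * WEIGHTS["PR"][m["PR"]] * WEIGHTS["UI"][m["UI"]])
    return roundup(min(impact + exploitability, 10))


# Vector reconstructed from the metrics listed in this article (an assumption).
VECTOR = "AV:L/AC:L/PR:L/UI:N/S:U/C:N/I:N/A:H"
print(base_score(VECTOR))
```

With these inputs the impact sub-score is about 3.6 and the exploitability sub-score about 1.8, which rounds up to the 5.5 that NVD reports. The arithmetic makes the limitation visible: nothing in the formula knows that the "local" attacker may be a guest on a shared host.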

Affected Kernel Lines and Patch Reality

Version ranges require distro interpretation

The NVD entry lists affected Linux kernel ranges beginning with the 5.13 era and extending across multiple long-term and current series, with fixed thresholds in lines such as 5.15.203, 6.1.168, 6.6.131, 6.12.80, 6.18.21, and 6.19.11. It also identifies 7.0 release candidates in the affected set.

Those upstream version numbers are useful, but they are not the whole story. Enterprise distributions frequently backport security fixes into kernels whose visible version numbers do not match upstream stable releases. A RHEL, Ubuntu, Debian, SUSE, Oracle, or Azure-oriented kernel may contain the fix even if `uname -r` appears older than the upstream fixed version.

That is why administrators should avoid simplistic version comparison. The right question is whether the vendor’s kernel package includes the CVE-2026-23401 fix, not whether the banner string happens to equal an upstream stable release.

For rolling-release users, the answer is often to update to the latest kernel package and reboot. For enterprise users, the answer may involve checking vendor advisories, errata, live-patching status, and whether KVM modules are actually loaded on the affected hosts.
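For hosts that do run unmodified upstream stable kernels, a first-pass triage against the thresholds above might look like the following sketch. The function name and result labels are illustrative, and the thresholds are simply the ones quoted in this article. As discussed, a "below" result on a distribution kernel proves nothing either way, because backports do not change the upstream base version.

```python
import re

# Upstream fixed thresholds as quoted in this article: (major, minor) -> fixed patch level.
UPSTREAM_FIXED = {
    (5, 15): 203,
    (6, 1): 168,
    (6, 6): 131,
    (6, 12): 80,
    (6, 18): 21,
    (6, 19): 11,
}


def classify_upstream(release):
    """Compare a kernel release string against the upstream thresholds only.

    This ignores vendor backports entirely, so it is only meaningful for
    vanilla upstream stable kernels.
    """
    match = re.match(r"(\d+)\.(\d+)(?:\.(\d+))?", release)
    if not match:
        return "unparseable"
    major, minor = int(match.group(1)), int(match.group(2))
    patch = int(match.group(3) or 0)
    fixed = UPSTREAM_FIXED.get((major, minor))
    if fixed is None:
        return "series-not-listed"
    return "at-or-above-upstream-fix" if patch >= fixed else "below-upstream-fix"


# The last string is a hypothetical distribution-style release, included to show
# how a backported kernel would be misread by naive comparison.
for release in ("6.6.131", "6.6.130", "5.15.0-105-generic"):
    print(release, "->", classify_upstream(release))
```

A distribution-style string is reported as below the threshold regardless of what the vendor has backported, which is exactly why the vendor advisory, not this comparison, has to be the deciding input.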

Practical inventory checks

A good response starts with knowing where KVM is in use. Many systems include KVM modules, but fewer actively expose untrusted virtual machines.

- Identify hosts running KVM workloads, including developer machines, CI runners, lab boxes, and production compute nodes.

- Determine whether nested virtualization is enabled, especially on x86 hosts using Intel VMX or AMD SVM.

- Confirm the installed kernel package and vendor security status for CVE-2026-23401.

- Apply the relevant kernel update or live patch where available.

- Reboot or otherwise ensure the fixed kernel code is active, because updating a package alone does not replace a running kernel.
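The first two checks can be scripted. The sketch below assumes KVM is built as loadable modules, so that `/proc/modules` lists `kvm`, `kvm_intel`, or `kvm_amd`, and that the nested setting is exposed under `/sys/module/<module>/parameters/nested` as `Y`/`N` or `1`/`0`. The function names are illustrative, and hosts with KVM compiled into the kernel would need a different check.

```python
from pathlib import Path

KVM_MODULES = ("kvm", "kvm_intel", "kvm_amd")


def loaded_kvm_modules(proc_modules_text):
    """Return the KVM module names found in the text of /proc/modules."""
    names = {line.split()[0]
             for line in proc_modules_text.splitlines() if line.strip()}
    return [name for name in KVM_MODULES if name in names]


def nested_enabled(param_text):
    """Interpret the content of a 'nested' module parameter file."""
    return param_text.strip() in ("Y", "1")


def read_text_or_none(path):
    """Read a file, returning None if it is missing or unreadable."""
    try:
        return Path(path).read_text()
    except OSError:
        return None


def survey_host():
    """Collect loaded KVM modules and nested-virtualization settings."""
    modules_text = read_text_or_none("/proc/modules") or ""
    report = {"modules": loaded_kvm_modules(modules_text), "nested": {}}
    for vendor in ("kvm_intel", "kvm_amd"):
        text = read_text_or_none(f"/sys/module/{vendor}/parameters/nested")
        if text is not None:
            report["nested"][vendor] = nested_enabled(text)
    return report
```

Calling `survey_host()` on a Linux machine returns a small dictionary that can be fed into an inventory system. An empty module list suggests the host is not currently acting as a KVM hypervisor, though it does not show whether the installed kernel package is fixed.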

Enterprise Impact: Cloud, CI, and Virtualization Hosts

Where the risk concentrates

The highest-risk environments are those where users can influence guest behavior while the host remains a shared or valuable asset. That includes cloud compute nodes, hosted desktop infrastructure, automated test farms, security sandboxes, and build systems that run untrusted VM images.

Nested virtualization raises the stakes because it creates more complex translation layers. The public discussion around the bug specifically highlights scenarios involving nested guest behavior and shadow paging paths. Even when hardware-assisted translation is present, KVM still contains logic for shadow MMU cases, emulation, invalidations, and compatibility paths.

Enterprises should also think beyond production. Internal engineering labs often run older kernels, custom KVM builds, and unusual QEMU device configurations. Those environments may not host customer data, but they frequently connect to internal networks and carry privileged credentials.

The biggest operational risk is not necessarily a dramatic VM escape headline. It is a host kernel crash or corruption event that interrupts workloads and forces incident teams to determine whether the crash was accidental, malicious, or a failed exploit attempt.

Operational priorities

For virtualization teams, CVE-2026-23401 belongs in the same workflow as other hypervisor isolation bugs. It should not be lumped into generic Linux desktop patching without context.

- Prioritize shared KVM hosts above single-user workstations.

- Prioritize hosts running untrusted guests above hosts running tightly controlled appliances.

- Prioritize nested virtualization environments above simple VM setups.

- Prioritize systems with KSM, dirty logging, migration, or heavy MMU activity enabled.

- Prioritize internet-facing CI and test infrastructure that accepts user-supplied images.

- Prioritize hosts showing KVM warnings, unexplained panics, or MMU-related traces.

Consumer and Enthusiast Impact

What WindowsForum readers should know

For WindowsForum readers, the obvious question is whether this affects typical Windows users. The answer is usually not directly, but there are important edge cases. If you run Linux as a host with KVM/QEMU, virt-manager, GNOME Boxes, Proxmox, or a Linux-based lab server, then the issue may be relevant.

A Windows 11 user running Hyper-V, Windows Sandbox, WSL2, or VirtualBox on Windows is not typically exercising Linux KVM as the host hypervisor. WSL2 uses Microsoft’s virtualization stack rather than KVM in the normal Windows-hosted configuration. However, a Windows VM running on a Linux KVM host is squarely in scope from the host’s perspective.

Enthusiasts who dual-boot Windows and Linux may overlook the Linux side if they only use it for gaming, VFIO, or experimentation. If that Linux install hosts Windows VMs with GPU passthrough or nested virtualization, it should be patched like any other hypervisor.

Home lab users are also more exposed than they may think. Proxmox, Debian KVM servers, Ubuntu virtualization hosts, and Fedora-based lab systems often run experimental kernels and nested setups that resemble the interesting paths for this bug.

Practical guidance for home labs

The consumer response should be calm but deliberate. This is not a browser bug waiting for a malicious website, but it is a reason to keep Linux virtualization hosts current.

- If you do not run KVM, the practical risk is low.

- If you run only trusted guests, the risk is lower but not zero.

- If you test malware, unknown ISOs, or third-party VM images, patch promptly.

- If you expose VM creation to friends, customers, students, or CI jobs, treat the host as higher priority.

- If you use nested virtualization for Hyper-V inside KVM or KVM inside KVM, update quickly.

- If your host has logged KVM MMU warnings, investigate rather than ignoring them.

Why MMIO Handling Is So Tricky

Emulated devices complicate memory rules

MMIO, or memory-mapped I/O, lets software interact with device registers through address ranges that look like memory but behave like hardware. In virtualization, many of those accesses must be trapped and emulated by KVM or userspace components such as QEMU.

That creates a delicate distinction. A guest physical address might refer to ordinary RAM today and an emulated device region tomorrow, depending on memslot configuration, device state, or nested translation. KVM must recognize that distinction quickly because MMIO exits are expensive and performance-sensitive.

To optimize this, KVM can cache MMIO information in SPTEs. That is sensible engineering: repeated accesses to the same emulated region should not require rediscovering the same facts from scratch. But cached state is safe only if invalidation and transition rules are airtight.

CVE-2026-23401 shows how a seemingly narrow transition can violate that rule. If KVM treats a slot as safely replaceable without removing the old present mapping’s reverse-map state, later code may walk into metadata that no longer matches reality.

The performance-security trade-off

Virtualization MMUs live under constant pressure to be fast. Every unnecessary VM exit, TLB flush, page-table walk, or lock acquisition can degrade workload performance. That pressure encourages shortcuts, early returns, caching, and special handling for common paths.

- Fast paths reduce overhead but can bypass cleanup logic if not carefully structured.

- Caching improves repeated MMIO access but increases invalidation complexity.

- Nested virtualization multiplies translation layers and rare corner cases.

- Dirty tracking supports migration and monitoring but touches reverse maps.

- Shadow paging remains necessary for compatibility and complex virtualization modes.

Microsoft, Linux, and the Cross-Platform Security Angle

Why this appears in Microsoft-facing feeds

The CVE is associated with the Linux kernel, yet it also appears in Microsoft security channels. That is not as odd as it may sound in 2026. Microsoft ships, supports, or depends on Linux in multiple contexts, including Azure infrastructure, Linux distributions for cloud workloads, container platforms, and security guidance that tracks third-party CVEs.

For Windows administrators, the value of a Microsoft-facing CVE entry is often visibility rather than ownership of the original bug. It tells mixed-environment teams that a vulnerability may matter somewhere in their estate, even if it is not a Windows kernel flaw.

This is increasingly normal. Modern Windows environments rarely consist only of Windows endpoints and Windows Server. They include Linux build agents, KVM-based appliances, Azure workloads, Kubernetes nodes, WSL-adjacent developer workflows, and third-party virtual appliances.

The correct response is not to panic about Windows being affected. The correct response is to ask whether any Linux KVM host under your administrative umbrella runs affected kernel code.

Hyper-V versus KVM

Hyper-V and KVM solve similar problems but live in different kernels and ecosystems. A vulnerability in KVM’s x86 MMU does not automatically translate into a Hyper-V vulnerability. The memory-management code, data structures, and device models differ.

However, organizations often run both. A Windows-heavy company may use Hyper-V for on-premises Windows workloads while also using KVM indirectly through cloud services, Linux appliances, or developer machines. Security teams therefore need asset-level clarity, not platform assumptions.

- Hyper-V hosts should be patched through Microsoft’s Windows update process.

- KVM hosts should be patched through their Linux distribution or kernel supplier.

- WSL2 users should not assume KVM is involved merely because Linux user space is present.

- Azure or hosted environments should rely on provider guidance for managed infrastructure.

- Self-managed Linux virtualization nodes remain the customer’s patching responsibility.

Detection, Monitoring, and Incident Response

What signs administrators might see

The public trace for CVE-2026-23401 includes a kernel warning around `is_shadow_present_pte` in KVM’s MMU code. In real environments, symptoms could vary widely. Some systems may show warnings; others may experience crashes, VM instability, or no visible signs before patching.

A warning in this area should not be dismissed as harmless noise. KVM MMU warnings often indicate that an internal invariant failed, which means later behavior may be unpredictable. Even if a workload keeps running, the host may have entered a state that deserves investigation.

Administrators should review kernel logs, hypervisor logs, crash dumps, and monitoring alerts around KVM hosts. Look for KVM MMU warnings, unexpected host reboots, VM exits followed by panics, or errors involving shadow paging, EPT, NPT, reverse maps, dirty tracking, or MMU notifier paths.

Detection is not the same as proof of exploitation. This bug can be found through fuzzing and may be triggered in research conditions, but ordinary bugs, unusual device models, or nested virtualization experiments can also generate alarming traces.
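A simple log filter can help surface candidates for that review. The sketch below assumes the usual kernel warning format, in which a `WARNING:` line names the source file after the word `at`, and it matches only paths under `arch/x86/kvm/mmu/`. The pattern and function name are assumptions for illustration, not a signature for this CVE, and a match is a prompt for investigation rather than evidence of exploitation.

```python
import re

# Matches kernel WARNING lines whose reported source location is in KVM's x86 MMU code.
# The exact line format is an assumption based on the common kernel warning layout.
KVM_MMU_WARNING = re.compile(r"WARNING:.*\bat arch/x86/kvm/mmu/")


def find_kvm_mmu_warnings(lines):
    """Return the lines that look like kernel warnings from KVM's x86 MMU code."""
    return [line.rstrip("\n") for line in lines if KVM_MMU_WARNING.search(line)]
```

One way to use it is to pass an open log stream, for example `find_kvm_mmu_warnings(sys.stdin)` in a script fed by `journalctl -k`, and to preserve any matching lines along with the surrounding trace before patching.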

Response checklist

A disciplined response avoids both complacency and overreaction. Treat the issue as a hypervisor patching event first, and an incident only if logs or business context justify that escalation.

- Confirm whether KVM modules are loaded on the host.

- Identify whether untrusted guests had access before patching.

- Preserve logs if KVM warnings or crashes occurred.

- Patch the kernel through the trusted vendor channel.

- Reboot or activate a validated live patch.

- Re-test VM workloads that use nested virtualization or unusual device emulation.

- Monitor for repeat MMU warnings after remediation.

Competitive and Ecosystem Implications

KVM’s strength is also its exposure

KVM’s deep integration with the Linux kernel is one of its greatest strengths. It benefits from Linux scheduling, memory management, hardware enablement, observability, and a massive developer ecosystem. That same integration means kernel memory-management bugs can become hypervisor bugs with broad reach.

Competitors such as Hyper-V, VMware ESXi, bhyve, and Xen face their own classes of memory-virtualization flaws. The difference is not that one hypervisor model avoids complexity. The difference is how each ecosystem discovers, patches, backports, and communicates those flaws.

KVM’s open development model gives defenders unusual visibility. The public can inspect patches, mailing-list discussion, stable backports, and distribution advisories. That transparency helps operators understand risk, but it also means attackers can study patch diffs quickly.

This is the familiar patch-gap problem. Once a fix is public, unpatched systems become easier to reason about. For kernel vulnerabilities, researchers and attackers can compare vulnerable and fixed code paths to build reliable triggers faster than many enterprises can complete maintenance windows.

Market pressure on virtualization security

The broader market implication is that hypervisor security is becoming a shared concern across operating-system tribes. Windows administrators must understand Linux CVEs. Linux administrators must understand Windows guest behavior. Cloud teams must understand how nested virtualization changes threat models.

- Cloud providers need rapid kernel rollout mechanisms.

- Enterprise Linux vendors need clear backport status.

- Security tools need better KVM host discovery.

- CI providers need stricter guest trust boundaries.

- Home lab users need simpler patch visibility.

- Cross-platform teams need less siloed CVE ownership.

Strengths and Opportunities

What the response ecosystem gets right

The handling of CVE-2026-23401 shows several strengths in the Linux security ecosystem. The issue was identified, discussed in technical detail, assigned a CVE, and patched across multiple stable kernel lines. That gives defenders the raw material they need to act, provided they can translate kernel-level facts into operational priorities.

- Transparent patching lets vendors and administrators verify the exact area of code changed.

- Stable backports help long-term kernel users avoid risky major-version jumps.

- Public technical analysis improves understanding of the root cause and affected paths.

- Distribution advisories help translate upstream commits into package-level action.

- Fuzzing-driven discovery continues to expose subtle hypervisor state bugs before widespread abuse is evident.

- Medium severity scoring still captures high availability impact, even if operators must add context.

- Cross-vendor visibility helps Windows, Linux, and cloud teams converge on the same risk conversation.

Risks and Concerns

Where defenders may underestimate the issue

The main risk is under-prioritization. A Medium CVSS score, local attack vector, and kernel warning trace can lull teams into treating the issue as routine maintenance. In a shared virtualization environment, that would be a mistake.

- Patch gaps may persist on lab hosts, CI runners, and edge virtualization nodes.

- Backport confusion may cause teams to misread fixed vendor kernels as vulnerable or vulnerable kernels as fixed.

- Nested virtualization may create exposure that asset inventories do not record.

- Untrusted guest images may be more common than administrators realize.

- Availability impact may cascade when one host supports many workloads.

- Memory corruption language may be ignored because the formal CVSS impact omits confidentiality and integrity.

- Live patch assumptions may be wrong if the specific KVM MMU path is not covered.

What to Watch Next

Patch adoption and vendor clarity

The next thing to watch is how quickly distributions and downstream kernel providers complete their advisories and backports. Upstream fixed version numbers are useful, but enterprises live by vendor package status. Expect some confusion where long-term kernels carry backported fixes without changing their apparent upstream base.

Security teams should also watch whether exploitability assessments evolve. NVD’s current scoring emphasizes availability, while public discussion of the underlying condition includes memory-corruption concerns. Those two views are not necessarily contradictory; formal scoring often lags the most aggressive technical interpretation.

The operational watchlist is straightforward:

- Vendor advisories confirming fixed packages for your exact distribution and kernel flavor.

- Cloud-provider guidance for managed KVM-based services or Linux compute images.

- Reports of reliable proof-of-concept triggers circulating publicly.

- Follow-up kernel patches in adjacent KVM MMU or MMIO handling code.

- Crash reports involving KVM shadow paging after partial or failed patch rollout.

A broader lesson for virtualization teams

CVE-2026-23401 should prompt teams to revisit how they classify guest-to-host availability risks. If a guest can crash a host, the business impact may exceed what a generic vulnerability scanner reports. That is especially true where one physical machine hosts many tenants, high-value workloads, or security-sensitive analysis jobs.

It should also encourage better separation between trusted and untrusted virtualization pools. Running experimental nested virtualization workloads on the same class of hosts as business-critical VMs is convenient, but convenience is rarely a strong security boundary.

CVE-2026-23401 is ultimately a small ordering bug with large architectural resonance: before KVM can safely mark memory as emulated MMIO, it must first cleanly remove what used to be there. That principle applies well beyond one function in the Linux kernel. In virtualization security, old state is dangerous state, and the safest platforms are the ones that know exactly when to forget.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center