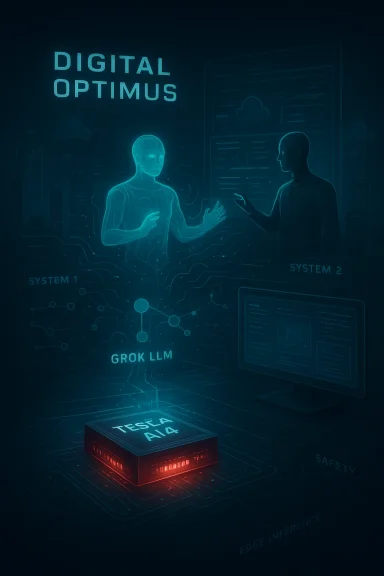

Elon Musk has just resurrected the old “Macrohard” gag — this time as a working-sounding program called Digital Optimus — claiming a joint xAI–Tesla effort can pair xAI’s Grok reasoning model with Tesla-built, screen-controlling AI agents to “emulate the function of entire companies.” In a short run of posts on X on March 11, 2026, Musk described Digital Optimus (aka Macrohard) as a system where Grok acts as a “master conductor/navigator” while Tesla’s agent processes the last five seconds of real‑time screen video and keyboard/mouse actions, runs mainly on Tesla’s AI4 silicon (which he priced at $650), and — in his words — will be “the only real‑time smart AI system.” The announcement arrives against a backdrop of Tesla’s recent financial contraction, ongoing regulatory scrutiny around Full Self‑Driving (FSD), and a contentious web of investments that tie Tesla, xAI and SpaceX ever closer together.

Background

Musk’s Macrohard quip, a deliberate jab at Microsoft, first reappeared publicly in a recruiting-style post on X on August 22, 2025, when he invited engineers to “Join @xAI and help build a purely AI software company called Macrohard.” That trademark-minded signal was later followed by reporting and filings that showed xAI positioning the name and idea as a serious project rather than a tantrum-sized meme. In January 2026 Tesla disclosed a roughly $2 billion investment in xAI; Musk’s March 11, 2026 posts explicitly framed Digital Optimus / Macrohard as part of that investment agreement and as an xAI–Tesla joint initiative.

Those two strands (the August 2025 recruitment and the March 2026 technical tease) are the backbone of the narrative: an AI-first software company concept (Macrohard), now concretized into a system architecture (Digital Optimus) that combines a large language model with a fast, screen‑interacting agent and low‑cost edge inference hardware from Tesla.

What Musk actually announced (and what he claimed)

- On March 11, 2026, Musk said Macrohard or Digital Optimus is “a joint xAI–Tesla project” tied to Tesla’s investment in xAI.

- He described Grok (xAI’s large language model) as “the master conductor/navigator” that directs a Tesla-built Digital Optimus agent which processes the past five seconds of real‑time screen video and keyboard/mouse actions.

- Musk compared the architecture to dual‑process theory: Digital Optimus = System 1 (fast, instinctive), and Grok = System 2 (deliberative reasoning).

- He insisted the system will run competitively on Tesla’s AI4 chip (which he priced at $650) with frugal use of more expensive xAI/NVIDIA cloud hardware.

- He claimed the project is capable, in principle, of “emulating the function of entire companies,” and that “no other company can yet do this.”

Overview: what Digital Optimus actually appears to be

At a technical summary level, Digital Optimus, as described by Musk, is an agent stack with three linked components:

- A perception-and-action agent that observes a user’s screen (video frames of UI) plus keyboard and mouse events in real time, then replays or acts on those interfaces the way a human worker would.

- A local, fast inference layer (Tesla’s AI4 silicon) that handles the time-critical “System 1” tasks — immediate perception, fast reflexive actions, and UI-level control loops.

- A remote or high-level reasoning layer (xAI’s Grok LLM on Nvidia cloud hardware) that acts as the “conductor” for planning, cross-context reasoning, and supervisory decision‑making.
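The split described above can be illustrated with a minimal Python sketch. It is not Tesla or xAI code: every name here (`RollingWindow`, `fast_policy`, `slow_planner`, the confidence threshold, the `dialog_opened` event) is a hypothetical stand-in, and the only details taken from Musk's description are the five-second window and the fast-agent/slow-planner division.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Observation:
    timestamp: float   # seconds
    frame_id: int      # stand-in for a captured screen frame
    input_event: str   # stand-in for a keyboard/mouse event


class RollingWindow:
    """Keeps only observations from the last `horizon` seconds."""

    def __init__(self, horizon: float = 5.0):
        self.horizon = horizon
        self._items = deque()

    def add(self, obs: Observation) -> None:
        self._items.append(obs)
        # Drop everything older than the horizon, relative to the newest item.
        while self._items and obs.timestamp - self._items[0].timestamp > self.horizon:
            self._items.popleft()

    def snapshot(self) -> list:
        return list(self._items)


def fast_policy(window: list) -> tuple:
    """Hypothetical 'System 1': returns (action, confidence) from recent context."""
    last = window[-1]
    if last.input_event == "dialog_opened":
        return ("inspect_dialog", 0.4)   # unfamiliar state, low confidence
    return ("continue", 0.9)


def slow_planner(window: list) -> str:
    """Hypothetical 'System 2': stands in for a call to a remote reasoning model."""
    return "plan:handle_" + window[-1].input_event


def step(window: RollingWindow, obs: Observation, threshold: float = 0.7) -> str:
    """Record the observation, act locally if confident, otherwise escalate."""
    window.add(obs)
    action, confidence = fast_policy(window.snapshot())
    if confidence >= threshold:
        return action                       # handled by the fast local layer
    return slow_planner(window.snapshot())  # escalated to the reasoning layer
```

In this toy version the escalation rule is a single confidence threshold. A real system would presumably need something far richer, which is exactly where the robustness questions discussed later come in.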

Why this is headline‑grabbing: the big promises

- Automation of knowledge work at scale. If Digital Optimus can reliably watch, understand and interact with arbitrary desktop applications, it becomes an agentic replacement for many repetitive clerical tasks: data entry, reconciliation, HR onboarding workflows, routine customer responses, and even software‑driven business processes.

- A new commercial model for “pure AI companies.” Macrohard (as pitched earlier) is the idea of a company where AI writes, ships and supports software — potentially shifting the balance in software engineering labor economics.

- Competitive positioning against incumbents. The name Macrohard and the messaging are clearly designed to provoke: Microsoft and other major software companies are rapidly adopting AI in development and operations, and Musk’s narrative is that a software company built from the ground up around agentic AI can outcompete them.

- Hardware + model stack economics. Musk claims a cost advantage by running most of the inference on relatively cheap, Tesla-produced AI4 chips and using Nvidia‑based servers only for select tasks — an attempt to push both technical and cost differentiation.

Technical feasibility: what’s plausible and what’s not

The plausible parts

- Desktop‑controlling agents already exist in narrow forms: RPA (Robotic Process Automation), scriptable UI automation and task bots have been used for years to automate repetitive GUI tasks.

- Large language models (LLMs) are being used today to orchestrate multi-step tasks and call specialized agents — a pattern that is actively being commercialized in several startups.

- Combining a local visual agent that interprets screen frames with an LLM for planning is technically straightforward at a conceptual level. The real challenge has always been robustness, generalization, and handling ambiguous UI states.

The hard problems that remain

- Robust, general visual understanding of arbitrary UIs. Desktop applications and web pages are wildly heterogeneous; reliably interpreting every possible application screen state is non‑trivial and historically brittle.

- Action safety and error recovery. An agent that types, clicks and triggers financial transactions must be far more conservative and auditable than current RPA bots. Mistakes at scale — especially when an agent is “trusted” to act like a human — amplify risks.

- Long‑horizon reasoning and state management. Simulating business logic and policy enforcement for multi‑step transactions requires deep, auditable models of intent; LLM hallucinations or misinterpretations are dangerous here.

- Latency and bandwidth for “real‑time” mixed local/cloud operation. Musk’s architecture leans on cheap local chips + occasional cloud reasoning; the coordination and failover behavior under network degradation is a material systems engineering problem.

- Security and privacy. Screen‑scraping agents require access to potentially sensitive PII, financial data and credentials. Guaranteeing that such agents do not leak data — either accidentally through misrouting or deliberately via model memorization — is a major challenge.
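The latency and failover concern in the list above can be made concrete with a small sketch. It assumes a hypothetical remote planner call (`ask_planner`, with a simulated delay) and a hypothetical conservative default action. Nothing here reflects how Digital Optimus actually handles network degradation, which has not been described publicly.

```python
import time
from concurrent.futures import ThreadPoolExecutor, TimeoutError as PlannerTimeout

SAFE_FALLBACK = "pause_and_notify_human"  # hypothetical conservative default


def ask_planner(task: str, delay: float) -> str:
    """Hypothetical stand-in for a remote reasoning call; `delay` simulates latency."""
    time.sleep(delay)
    return "plan_for:" + task


def decide(task: str, delay: float, budget: float = 0.2) -> str:
    """Return the planner's answer if it arrives within `budget` seconds, else a safe default."""
    pool = ThreadPoolExecutor(max_workers=1)
    future = pool.submit(ask_planner, task, delay)
    try:
        return future.result(timeout=budget)
    except PlannerTimeout:
        # The cloud layer did not answer in time: do nothing risky.
        return SAFE_FALLBACK
    finally:
        pool.shutdown(wait=False)
```

The design choice worth noting is that the fallback is to stop and ask for help rather than to guess. For an agent that can click and type inside financial systems, a late answer is usually cheaper than a wrong one.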

Business and corporate implications

For Tesla and xAI

- The collaboration folds xAI’s generative reasoning capability into Tesla’s robotics and edge compute playbook. That alignment supports Tesla’s broader pivot toward robotics (Optimus) and recurring‑revenue services.

- Tesla’s $2 billion investment in xAI (announced in January 2026) materially links the companies financially and strategically. That tie also sharpens corporate governance questions already raised by plaintiffs and institutional investors.

- If Digital Optimus works as pitched, Tesla could monetize agent services across enterprise workflows — and leverage Optimus humanoids later as the physical endpoint for hybrid tasks.

For software incumbents (Microsoft et al.)

- Musk’s taunt around Macrohard is partly PR — Microsoft and others already use AI heavily in development and operations. Microsoft’s CEO has publicly said AI generates roughly 20–30% of code in the company’s repos, and large vendors are actively embedding AI into developer toolchains.

- Incumbents’ strengths — massive engineering teams, sophisticated DevOps processes, enterprise sales channels, and legal/regulatory compliance infrastructure — are non‑trivial to displace. A new AI‑only software company will need a razor‑sharp product‑market fit, rigorous QA and credible SLAs to win major enterprise accounts.

For workers and the labor market

- Agentic AI that can interact with software UIs like a human raises immediate displacement questions for back‑office roles. The net impact will depend on deployment speed, regulatory policy, and the ability for human workers to move to higher‑value tasks that require judgment and deep domain expertise.

- There is a real risk of job erosion in repetitive clerical functions — but history shows many technology transitions also create new roles (agent oversight, prompt engineering, SRE for agent fleets, compliance auditors).

Legal, regulatory and governance risks

- Shareholder and fiduciary issues. Musk’s repeated shifting of xAI and Tesla narratives — plus Tesla’s investment in xAI — strengthens the arguments raised by shareholder litigation alleging conflicts of interest and potential misallocation of corporate opportunity. Public statements tying the two companies together may be used as evidence in court.

- Safety and consumer protection. Tesla’s Full Self‑Driving (FSD) operations have been subject to regulatory probes and public criticism after several crashes and investigations. Any software agent that automates workplace tasks will also face consumer‑protection-style questions when errors cause financial harm.

- Privacy and data protection. Screen‑observing agents will potentially process sensitive user data. Different jurisdictions’ privacy rules (GDPR‑style consents, US sectoral laws) will likely apply, and any data breach or misuses would draw swift regulatory action.

- Compliance and auditability. Running mission‑critical workflows through opaque LLM-driven stacks raises auditability and explainability concerns. Enterprises and regulators will demand logging, deterministic fallbacks, and testable compliance guarantees.

Trust, quality and the “AI writes software” debate

Musk’s claim that Macrohard could simulate a software company relies on current trends where AI is already being used to generate code and assist development workflows. Microsoft and other leading tech firms have said that AI contributes a material share of code in repositories today. But adding that code is not the same as ensuring production quality, maintainability, or the long tail of corner cases.

Two important quality vectors to consider:

- Defect rates for AI‑generated code. Internal and academic studies have suggested developers find different and sometimes more subtle bugs in AI‑generated code; review processes change and new tooling is required to maintain velocity without sacrificing quality.

- Operational complexity and tech debt. Autonomous agent fleets that act on behalf of enterprises will require their own DevOps lifecycle, observability, and incident response playbooks. Those operational costs must be counted against labor savings.

Safety and ethics: where the red flags are brightest

- Screen capture as attack surface. An agent that records and replicates screen actions could be repurposed to exfiltrate data or be tricked by malicious UI elements. The trust envelope required is wide and fragile.

- Model hallucinations applied to transaction systems. LLMs hallucinate plausible but incorrect outputs. When those outputs lead to automated transactions, financial loss can occur rapidly.

- Bias and fairness. Corporate processes are full of embedded decisions that require legal or human judgment (e.g., hiring, lending). Delegating such decisions to LLM agents without strong guardrails risks discriminatory outcomes and legal exposure.

- Accountability and transparency. When an agent “does the work,” who signs off on correctness? Enterprises will need robust lineage, human-in-the-loop approval gates, and immutable audit trails.

Strategic and reputational context: Musk’s track record

Musk’s public history of bold timelines and provocative statements is well known. Past examples include ambitious targets for self‑driving autonomy, production goals for products like the Cybertruck, and timelines for human Mars missions. Many of those targets slipped, and that pattern should temper exuberant belief in immediate delivery. At the same time, Musk’s ventures have produced real technical advances and delivered outsized outcomes on other fronts.

For journalists, investors and technical buyers, the sensible posture is cautious optimism: respect the technical plausibility of component pieces, but demand demonstrable, audited, third‑party‑verified results before accepting claims that an AI system can safely run whole business functions.

Practical advice for IT leaders and buyers (if you’re evaluating agentic AI today)

- Require robust safety and auditability guarantees: immutable logs, human approval gates for high‑risk actions, and roll‑back mechanisms.

- Start small with low‑risk pilot domains: invoicing reconciliation, standardized form processing, or internal triage tasks.

- Insist on data‑protection contracts and SOC‑type assurances if agents need access to PII or financial systems.

- Measure error modes, not just throughput: what kinds of failures occur and how quickly can a human detect and remediate them?

- Avoid vendor lock‑in on proprietary, opaque agent stacks; demand clear portability of workflows and a clean separation of data, policies and models.
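Two of the controls in the checklist above, human approval gates and tamper-evident logs, are simple enough to sketch. The example below is a minimal illustration under stated assumptions: the action names in `HIGH_RISK` are hypothetical, and the hash-chained list only makes tampering detectable. It is not truly immutable storage, which would need an external write-once store.

```python
import hashlib
import json

HIGH_RISK = {"send_payment", "delete_records"}  # hypothetical action names


class AuditLog:
    """Append-only log where each entry's hash covers the previous entry's hash."""

    def __init__(self):
        self.entries = []

    def append(self, record: dict) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        payload = json.dumps(record, sort_keys=True)
        digest = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
        self.entries.append({"record": record, "prev": prev_hash, "hash": digest})

    def verify(self) -> bool:
        """Recompute the chain; any edited record breaks it."""
        prev_hash = "0" * 64
        for entry in self.entries:
            payload = json.dumps(entry["record"], sort_keys=True)
            expected = hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


def execute(action: str, approved_by, log: AuditLog) -> str:
    """Run low-risk actions directly; block high-risk ones without a named approver."""
    if action in HIGH_RISK and approved_by is None:
        outcome = "blocked_pending_approval"
    else:
        outcome = "executed"
    log.append({"action": action, "approved_by": approved_by, "outcome": outcome})
    return outcome
```

A buyer could use a check like `log.verify()` as an acceptance test in a pilot: if a vendor's agent stack cannot show that every action, including blocked ones, lands in a log that detects after-the-fact edits, the auditability claim has not been met.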

Why Macrohard is a useful thought experiment — and why it’s dangerous to treat PR as product

Macrohard, as a concept, forces a conversation about the shape of software companies in an era of rapidly improving generative models and lightweight edge inference. It’s plausible that a future business will be built around agentic automation and very small human teams for oversight. But today’s reality is still one of significant gaps:

- Robust perception and action across heterogeneous UIs remains brittle.

- LLM hallucinations and the need for domain‑specific verification remain unresolved.

- Legal, privacy and safety frameworks have yet to catch up with agentic deployments at scale.

Final assessment: what to believe and how to watch this closely

Elon Musk’s March 11, 2026 briefing-style posts about Digital Optimus / Macrohard are a mix of plausible architecture and high‑octane aspiration. The components cited (Grok, a screen‑observing agent, and Tesla’s AI4 silicon) are real pieces in the ecosystem. The financial and corporate ties between Tesla and xAI have been formalized (Tesla’s roughly $2 billion investment in xAI), which makes the collaboration plausible and legally consequential.

At the same time, bold claims that a single product can “emulate the function of entire companies” should be treated as claims, not as verified engineering accomplishments. Independent verification, robust demonstrations under adversarial conditions, and third‑party audits will be the essential next steps to move the idea from clever PR into credible enterprise technology.

If you care about the future of software, AI agents, and the intersection of hardware, models and robotics, watch four things closely over the next 6–12 months:

- Public technical demos with measurable benchmarks and reproducible results.

- Audit reports or third‑party evaluations focused on safety, privacy and reliability.

- Enterprise pilot announcements with named customers and concrete SLAs.

- Regulatory or legal developments tied to Tesla, xAI or agentic deployments that clarify liability and compliance requirements.

Conclusion

Digital Optimus and Macrohard package a headline‑ready narrative: a low‑cost, real‑time intelligent agent stack capable of automating entire companies sounds like a technological leap and a strategic threat to legacy software incumbents. Yet the deeper story is far more mundane and far more important: this is a systems‑integration and safety problem as much as a model capability problem. Realizing the promise will require not just more compute and glitzy claims, but rigorous engineering, exhaustive testing, hardened security and clear governance — the exact things that are often the least glamorous but most essential to turning AI hype into durable business value. Until independent evidence of those things appears, treat Macrohard as a high‑stakes experiment rather than an operating system for the future.

Source: theregister.com Musk makes the Macrohard joke again