Re: DirectX 11 Cards

What are Nvidia's GF104, GF106 and GF108?

As we have been saying for over a year now, Nvidia picked the wrong architecture for Fermi/GF100. It attempts to do everything well, and ends up doing everything in a mediocre way. The Fermi architecture is simply too inefficient to compete against ATI's Evergreen in performance per mm^2, performance/Watt, and yield.

Cutting down an inefficient architecture into smaller chunks does not change the fundamental problem of it being inefficient compared to the competition. In fact, if you cut a GF100 in half, you will get something that loses by almost the same ratio to a Cypress/HD5870 cut in half, a GPU you know as Juniper/5770.

The GF104/106/108 chips were never really meant to be what they are now. They are a desperate stopgap that simply won't work, but that is better than trying to sell DX10 parts for another year. The problem is that Nvidia makes a big, hot, fast part, and then shrinks it relatively quickly. The big chips are at the top of the performance stack, so they sell at a premium; if they didn't, the math wouldn't work out.

From there, the shrink offers about 90% of the performance of the big brother at 'consumer' level prices. The math works out, and Nvidia makes money. They did it with the 90nm G80/8800GTX, and shrunk it to the 65nm G92/8800GT. It worked out nicely. The G200b/GTX285 on 55nm was supposed to be tarted up and shrunk to the 45nm G212, but that project failed, as did its smaller brother the G214. This put NV in a big bind, and explains the utter lack of decent DX10.1 parts from the company.

More importantly, it broke the economics of the G200 line, something that did not get derivatives until late Q3/2009, leaving a huge hole in Nvidia's line. Eventually, that, when combined with the woefully late GT300/Fermi architecture, made them too expensive to manufacture for the price they could command in the market.

Nvidia silently EOLed the chips, then was forced to pretend that they were still in production. They weren't. With Fermi, the problem is worse, much worse. Nvidia designed an unmanufacturable chip and now has to do the same hot-shoe dance to pretend it is viable in the market. It isn't, and was never meant to be. The shrinks to 32nm were supposed to cure that little problem; until then, the architecture is not economically viable.

Then TSMC canceled the 32nm node. This left Nvidia with an economically non-viable high end chip, no mid-range, and a woefully out of date low end. The process tech that was going to save them went bye bye with the 32nm cancellation, and their worst nightmare, status quo, was in force.

The stopgap plan was to take the GF100 and cut it up. Since GF100 is quite modular, it can be cut into four parts easily, and that is exactly what happened. No shrinks, no updates, and no better chance of economic viability until TSMC comes through with 28nm wafers in mid-2011. Maybe. The magic 8-ball of semiconductor process tech just laughed when asked if 28nm would be on time.

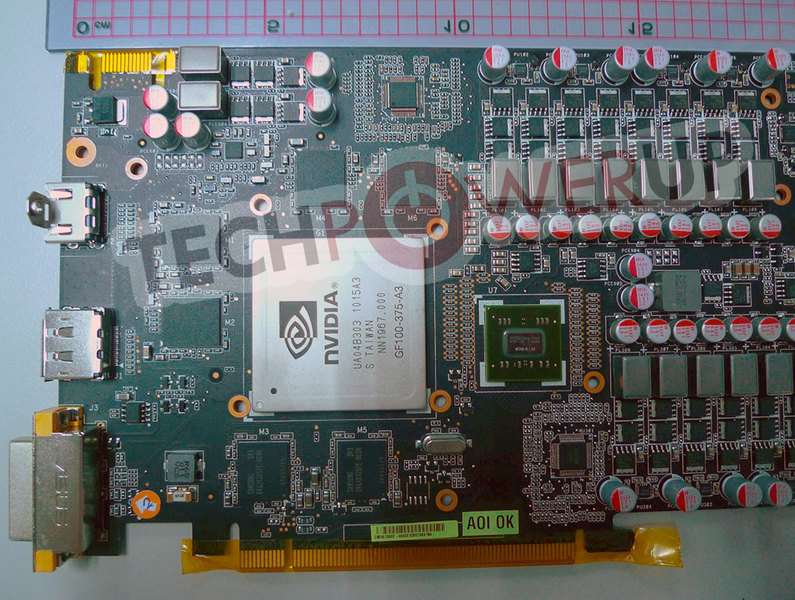

So, what are the derivatives? One of the four, the full shrink/G102, had to die for obvious reasons, leaving three derivatives. The new dice are 3/4 of a GF100 for the GF104, 1/2 GF100 for the GF106, and 1/4 GF100 for the GF108. Using a bit of math brings us the GF104 with 384 shaders and a 256 bit memory bus, the GF106 with 256 shaders and a 192 bit bus, and the GF108 with 128 shaders and a 128 bit bus.
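The shader counts above are straight scaling of the full chip. A minimal sketch, assuming GF100 carries 512 shaders (four quarters of 128 each); the names `GF100_SHADERS`, `DERIVATIVES` and `shader_count` are made up for illustration. Only the shader counts are derived here; the bus widths are taken as quoted.

```python
from fractions import Fraction

# Assumption: full GF100 = four quarters of 128 shaders each.
GF100_SHADERS = 512

# Fraction of a GF100 kept in each derivative, as described in the article.
DERIVATIVES = {
    "GF104": Fraction(3, 4),
    "GF106": Fraction(1, 2),
    "GF108": Fraction(1, 4),
}

def shader_count(fraction, full=GF100_SHADERS):
    """Shaders left when keeping `fraction` of the full chip."""
    return int(full * fraction)

for name, fraction in DERIVATIVES.items():
    print(f"{name}: {shader_count(fraction)} shaders")
```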

With that out of the way, the math starts looking really ugly. Since Nvidia won't release official die numbers, let's go with a little larger than the 530mm^2 we first told you about. For the sake of argument and tidy numbers, let's just go with 540mm^2. Our sources later clarified that GF100 was 23+mm * 23+mm, so that is still likely a bit small. Each quarter of the chip is going to be about 135mm^2, give or take a little.

If you remove some of the GPGPU features and caches, not only do you remove some of the performance, but also some die size. Word has it that Nvidia is basically adding a bit of pixel fill capacity to make it competitive on DX10 performance, so let's just assume the additions and subtractions are a die size wash. Performance of GF10x is said to be very close to GF100 on a per-shader basis, and a bit better than GF100 on older games.

GF100 is also hot. It sucks power like an Nvidia PR agent sucks up the Kool-Aid, and the official 250W is, well, laughable. For the sake of argument, let's lowball and say it takes 280W even though several companies list it as 295W and 320W in their sales literature. Being kind would put power use at 70W per quarter GF100.

Power is the one area where Nvidia can make the most progress on the new chips, but we hear that the progress, like the silicon changes, is minimal at best. The claims of 150W GF104s should be taken with two big grains of salt. First is that there will be a lot of units fused off and clocks 'managed' for thermal reasons more than anything else. Second, since Nvidia is still claiming 250W for GTX480, why not make up a similarly laughable number for the new part? The last claim worked, right?

So, that would put the GF104 at 210W, GF106 at 140W, and the GF108 at 70W, but that is before shaders are fused off. Since the GF10x line is more or less unchanged from the GF100 in most ways, the yields are going to be in the same toilet. Why? Read this.
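Those wattages are nothing more than the 70W per quarter multiplied out. A rough sketch of that arithmetic, assuming power scales linearly with the number of GF100 quarters kept; the 280W input is the lowball estimate from above, not an official figure, and the function name is made up for illustration.

```python
GF100_POWER_W = 280.0    # the article's lowball estimate, not the official 250W
QUARTERS_IN_GF100 = 4

def estimated_power(quarters):
    """Linear estimate: power scales with the number of GF100 quarters kept."""
    return GF100_POWER_W / QUARTERS_IN_GF100 * quarters

for name, quarters in (("GF104", 3), ("GF106", 2), ("GF108", 1)):
    print(f"{name}: ~{estimated_power(quarters):.0f}W before fusing off shaders")

# Gap between the linear estimate and the claimed 150W GF104.
print(f"GF104 estimate minus 150W claim: {estimated_power(3) - 150:.0f}W")
```

The 60W gap on the last line is what fused-off units and 'managed' clocks would have to make up for the 150W claim to hold.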

The same problems that affected GF100 are going to hit the GF10x parts, and Nvidia is going to end up doing the same things to make salable parts: fusing off bad clusters while downclocking like mad. Contrary to their magical statement about 40nm yields, our checks at Computex said quite the opposite. Sources tell SemiAccurate that the only way GF100 yields broke 20% is if you include GTX465. Then again, hitting 50% or so yields with 5/16 shader clusters disabled, 80% of your intended clock rate, and nearly 100% over power budget is one thing I would not recommend putting on your resume. The Fermi architecture is still an unmanufacturable mess.

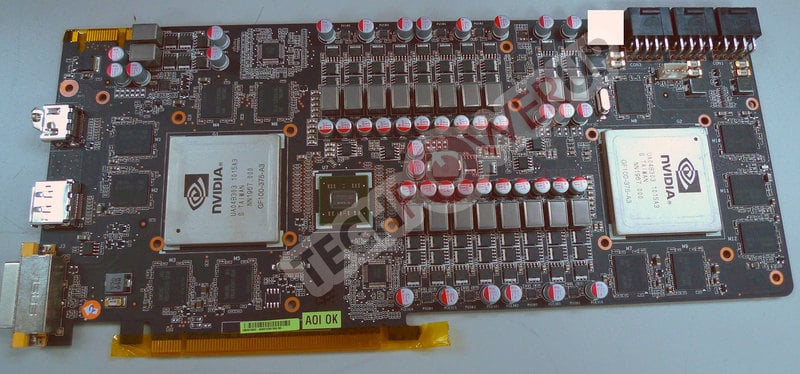

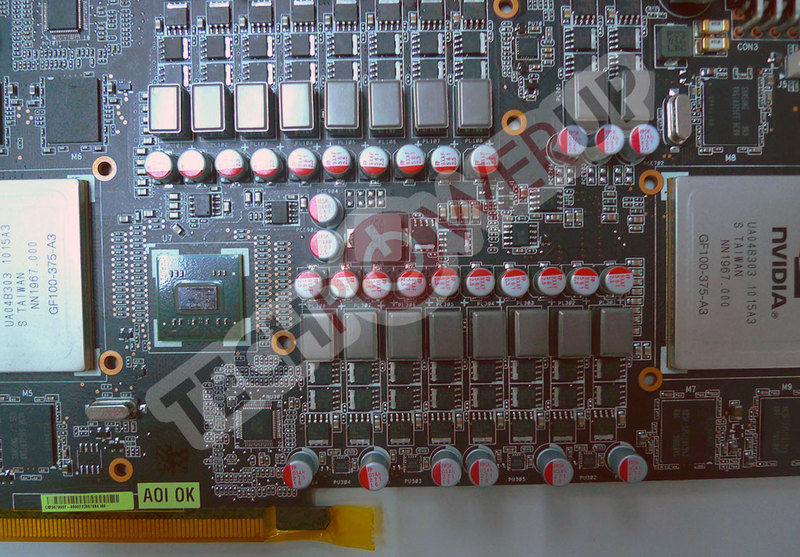

The GF104/6/8 are based on the same architecture, more or less unchanged, so they are going to be a mess as well. Since they are smaller, around 405mm^2, 270mm^2 and 135mm^2, yields should improve proportionately, but still be way behind the competitive ATI chips. If you want a sign of how bad yields are going to be, look at the samples floating around, specifically EXPreview's great find.
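To put rough numbers on how yield moves with die size, here is a sketch using a simple Poisson yield model, Y = exp(-A * D). That model is an assumption brought in for illustration, not something the article or its sources used, and it ignores the via and cluster problems described above. The 20% input is only a placeholder loosely based on the GF100 figure quoted earlier, and all names are hypothetical.

```python
import math

GF100_AREA_MM2 = 540.0
ILLUSTRATIVE_GF100_YIELD = 0.20  # placeholder input, not a measured yield

def defect_density(yield_fraction, area_mm2):
    """Defects per mm^2 implied by the Poisson model Y = exp(-A * D)."""
    return -math.log(yield_fraction) / area_mm2

def poisson_yield(area_mm2, density):
    """Modeled fraction of defect-free dice for a given die area."""
    return math.exp(-area_mm2 * density)

density = defect_density(ILLUSTRATIVE_GF100_YIELD, GF100_AREA_MM2)
for name, area in (("GF104", 405.0), ("GF106", 270.0), ("GF108", 135.0)):
    print(f"{name}: {area:.0f}mm^2 -> {poisson_yield(area, density):.0%} modeled yield")
```

Under this model the smaller dice do yield better, but the relationship is exponential in area rather than strictly proportional, so treat the output as a direction, not a forecast.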

It looks like the initial GF104s are going to have at least one block of 32 shaders disabled if not two. The 336 shader number is likely an artifact of the stats program not being fully aware of the new chip yet, but Nvidia might have added the ability to disable half a cluster. GF108s shown at Computex had 96 of 128 shaders active.
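The half-cluster suspicion falls out of the arithmetic. A small sketch, assuming the 32-shader block size named above and the 384 and 128 shader full configurations given earlier; the function name is made up for illustration.

```python
SHADERS_PER_CLUSTER = 32  # block size named in the article

def disabled_clusters(full_shaders, active_shaders):
    """Number of 32-shader clusters that must be fused off."""
    return (full_shaders - active_shaders) / SHADERS_PER_CLUSTER

# GF104 sample reporting 336 of 384 shaders.
print(disabled_clusters(384, 336))

# GF108 shown at Computex with 96 of 128 shaders.
print(disabled_clusters(128, 96))
```

A whole number means entire clusters are disabled. A fractional result, as with the 336 shader reading, means either a misreporting stats program or the ability to disable half a cluster.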

In any case, the same problems of vias failing and failing clusters don't seem to be fixed this time around, so expect all sorts of yield irregularities, non-fully functional 'fully functional' parts, and the other half-baked solutions many have grown to expect from Nvidia of late.

Another good example of how unchanged the GF104 is can be seen in the shape of the GF104 at EXPreview. If you take three of the four roughly square clusters of 128 shaders from the GF100 and arrange them in a shape that wastes the least area, what do you get? A rectangle, long and thin, like this. That more than anything shows the cursory nature of the cut and paste job done by Nvidia. Don't expect any real changes.

This all leaves Nvidia in quite an economic pickle. The shrink that was going to make the architecture financially viable didn't happen. The updates that were going to make the chips manufacturable didn't happen. The attendant power savings didn't happen. The attendant performance gain didn't happen. What you are going to get is simply a smaller shader count with a slight front end rebalancing of an architecture that wasn't competitive in the first place. These derivatives don't make it any more competitive.

The GF104s are larger than ATI's Cypress by quite a bit, consume more power, and are much slower. The GF104 cards are rumored to sell for less than the lowest end GF100 based 465, so economic viability is, well, questionable from day one. If Nvidia raises the clocks enough to make them competitive, the chips not only blow out the power budget, and drop yields, but they also obsolete the GF100 based 470.

That in turn makes the GF100 chip economically non-viable, because the two most manufacturable variants, relatively speaking, are under water. This is the problem with a broken architecture: damned if you do, damned if you don't, and there is no hope for change.

When will they be coming out? Originally, the GF104 was slated for a Computex launch, but that didn't happen. Contrary to rumors started by people not actually at the show, there were no GF104s at Computex, and partners had not received samples yet. This means launch slipped from June to mid-July at the earliest, maybe later.

GF106 was supposed to launch about the same time as GF104, so it may be out at the same time as well. GF108 originally had an August launch, but that slipped a month as well to September. The open question is what Nvidia can actually make, and at what clock speed?

Nvidia was tweaking clocks and shader counts three weeks before 'shipping' in late March, and we hear much of the same hand wringing is going on now. Maybe the magic 8-ball won't laugh as hard if I ask it again in a few weeks. The more things change at Nvidia, the more they stay the same. GF104, GF106, and GF108 are proof that the company is lost.