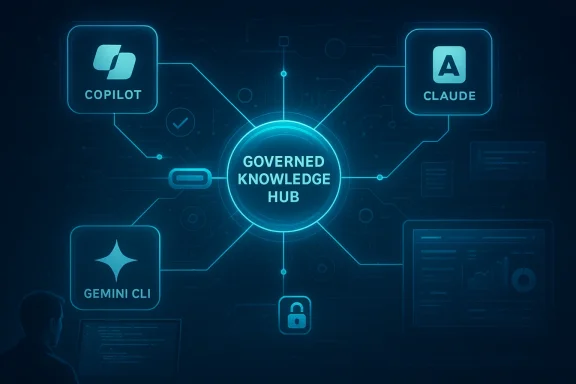

eGain’s new AI platform connectors are a sign that enterprise AI is moving past the novelty phase and into the harder work of governance, consistency, and operational trust. The company is pairing its AI Knowledge Hub with Microsoft Copilot, Anthropic Claude, Google Gemini CLI, and Cursor, framing the release as a way to give AI systems a single governed source of truth instead of forcing employees and developers to improvise across scattered repositories. That pitch matters because AI success in the enterprise is increasingly judged not by flashy demos, but by whether answers are repeatable, auditable, and safe enough to act on.

The announcement also lands at exactly the right moment in the market. Model Context Protocol, or MCP, has become one of the clearest interoperability trends in enterprise AI, and both Anthropic and Microsoft have publicly documented support for MCP-style connections in their ecosystems. eGain’s move therefore is not just about compatibility; it is about inserting itself into the emerging control plane for AI knowledge, where the real competition is over who owns the governed layer beneath the model. In that sense, this is as much a strategy statement as a product release.

Background

For years, eGain has positioned itself as a knowledge management company first and an AI company second, and that framing turns out to be an asset in the current market. The company’s 2025 product direction centered on eGain Composer, a modular platform built on the company’s AI Knowledge Hub, with explicit emphasis on trusted knowledge, compliance, and composable integration. That earlier launch described the problem clearly: enterprise AI systems are often fragmented, disconnected, and inflexible, which makes them difficult to trust at scale.

The new connectors are best understood as the next step in that roadmap. If Composer was about giving developers a stronger internal foundation, the connectors are about extending that foundation into the tools people already use every day. In other words, eGain is trying to make its knowledge layer portable across the broader AI ecosystem rather than locking it into a single interface or assistant.

This is also happening against a backdrop of rising concern about AI accuracy and governance. Enterprises do not just want AI that sounds confident; they want AI that can cite the right policy, retrieve the latest procedural update, and avoid exposing the organization to compliance headaches. eGain’s long-standing focus on regulated industries and customer service workflows gives it a narrative advantage here, because the company can credibly argue that knowledge quality is not a feature, but the prerequisite for agentic AI.

The broader industry has validated that assumption. Microsoft’s own documentation for Copilot Studio describes MCP as a way to connect agents to tools, resources, and prompts through standardized server connections, while Anthropic has described MCP as a universal, open standard for connecting AI systems to external data sources and tools. That means eGain is not inventing the standard; it is exploiting the moment when the standard is becoming normal enough for enterprises to demand concrete implementations.

One more reason this launch matters is that it reflects the shift from chat interfaces to action-oriented AI. As model providers and app vendors race toward agents that can do more than answer questions, the value of a verified knowledge base rises sharply. If the model is the brain, the governed content layer is becoming the memory, and memory is what keeps actions from drifting into error.

What eGain Announced

At the center of the release is a family of AI Knowledge Connectors that link eGain’s knowledge foundation to four major AI surfaces: Microsoft Copilot, Anthropic Claude, Google Gemini CLI, and Cursor. According to the company’s framing, the goal is to provide unified, governed, and traceable answers wherever employees or developers are working. That is a notable shift from isolated knowledge portals toward an AI experience that follows the user across multiple environments.

The company is also leaning hard into MCP compatibility. Its own developer guide explains that the eGain MCP server offers a single interface to portal-managed knowledge and content, with access to search, retrieval, certified or generative answers, and content suggestions. The same guide lists compatibility with environments such as Claude, Cursor, and Gemini CLI, which helps explain why these connectors were positioned as part of a broader ecosystem strategy rather than a standalone integration play.

The practical pitch

The practical value proposition is straightforward. If an employee asks Copilot for a policy explanation, or a developer queries a coding assistant for internal support guidance, eGain wants the response to come from a certified knowledge layer rather than a loosely assembled prompt. That should reduce hallucinations, reduce policy drift, and give governance teams a cleaner audit trail.

The company is also talking about trust in a very specific way. It is not just claiming that the responses will be better; it is claiming that the answers will be certified, traceable, and aligned with enterprise compliance expectations. That distinction matters because most enterprises do not need more AI creativity. They need fewer surprises.

Key implications include:

- Unified knowledge access across assistants and development tools.

- Traceable answers that can be tied back to governed content.

- Reduced duplication of knowledge logic across teams.

- Better compliance posture in regulated workflows.

- More consistent employee experience across platforms.
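As a rough illustration of what "traceable answers tied back to governed content" could mean in practice, the sketch below models an answer that carries its source reference and certification status, then selects only certified content and emits an audit record. Every name here (`Answer`, `article_id`, `version`, `certified`, `pick_certified`, `audit_record`) is a hypothetical stand-in chosen for illustration; none of it is taken from eGain's product or documentation.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Answer:
    """Hypothetical shape of an answer returned by a governed knowledge layer."""
    text: str
    article_id: str   # assumed identifier of the governed source article
    version: int      # assumed content version, used for auditing
    certified: bool   # assumed flag: True once the content has passed review


def pick_certified(candidates: Iterable[Answer]) -> Optional[Answer]:
    """Return the highest-version certified answer, or None if none is certified."""
    certified = [a for a in candidates if a.certified]
    return max(certified, key=lambda a: a.version, default=None)


def audit_record(question: str, answer: Answer) -> dict:
    """Build a minimal record linking a question to the content that answered it."""
    return {
        "question": question,
        "article_id": answer.article_id,
        "version": answer.version,
    }


if __name__ == "__main__":
    candidates = [
        Answer("Refunds within 30 days.", "KB-100", 1, True),
        Answer("Refunds within 45 days (draft).", "KB-100", 3, False),
        Answer("Refunds within 60 days.", "KB-100", 2, True),
    ]
    best = pick_certified(candidates)
    if best is not None:
        print(best.text)
        print(audit_record("What is the refund window?", best))
```

The design point is that an uncertified draft is never surfaced even when it is the newest version, and every answer that is surfaced can be traced to a specific article and version.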

Why MCP Matters

The most important technical detail in this announcement is not the list of brand names. It is the use of Model Context Protocol as the integration pattern. Anthropic introduced MCP as an open standard for connecting AI assistants to the systems where data lives, and Microsoft now documents MCP support inside Copilot Studio and related enterprise tooling. That makes MCP the emerging lingua franca for AI-to-tool integrations, especially in enterprise environments where custom connectors are expensive and brittle.

eGain’s MCP guide says its server provides one endpoint that integrates major knowledge retrieval APIs and works across clients like Claude, Cursor, and other LLM environments. That matters because it lets eGain offer a single governed knowledge interface instead of maintaining separate one-off integrations for every assistant. In enterprise IT terms, this is the difference between a scalable platform and a pile of bespoke plumbing.
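To make the single-endpoint idea more concrete, here is a minimal sketch of the JSON-RPC 2.0 messages an MCP client sends to discover and invoke a server's tools. The `tools/list` and `tools/call` method names come from the MCP specification. The tool name `search_knowledge` and its `query` argument are hypothetical placeholders, not names drawn from eGain's guide, and the sketch only builds messages without handling transport or authentication.

```python
import json
from itertools import count
from typing import Optional

# JSON-RPC 2.0 requests carry an id so responses can be matched to them.
_request_ids = count(1)


def mcp_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request envelope of the kind MCP uses."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


def list_tools() -> dict:
    """Ask the server which tools it exposes."""
    return mcp_request("tools/list")


def call_tool(name: str, arguments: dict) -> dict:
    """Invoke one tool by name with a dictionary of arguments."""
    return mcp_request("tools/call", {"name": name, "arguments": arguments})


if __name__ == "__main__":
    print(json.dumps(list_tools(), indent=2))
    # "search_knowledge" and "query" are assumed names for illustration only.
    print(json.dumps(call_tool("search_knowledge", {"query": "refund policy"}), indent=2))
```

Because every MCP client speaks this same message shape, a server can in principle be written once and reached from any compliant client, which is the property the article credits for reducing one-off integrations.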

The timing is favorable. MCP has gained enough momentum that vendors are no longer debating whether to support it; they are racing to demonstrate support in public. Anthropic said in late 2025 that MCP had already been adopted across multiple platforms, including ChatGPT, Cursor, Gemini, Microsoft Copilot, and Visual Studio Code. That is a powerful signal that interoperability is becoming a procurement requirement, not just an engineering preference.

A standard becomes a battleground

Once a standard starts spreading, vendors compete on what sits above it. That is where eGain sees an opening. If the protocol is the pipe, then the differentiator becomes the quality of the water, the reliability of the source, and the governance around who can change it. eGain’s bet is that enterprises will increasingly care more about the knowledge source than the model brand.

This has several consequences:

- MCP lowers integration friction for enterprise AI deployments.

- It increases pressure on vendors to prove governance and security.

- It shifts the value debate from model capability to context quality.

- It creates opportunities for specialized knowledge platforms like eGain.

- It may reduce the appeal of assistant-specific knowledge silos.

Microsoft Copilot and the Enterprise Desktop

Microsoft is the most consequential part of this story because Copilot is the default AI ambition for many enterprises already invested in the Microsoft stack. Microsoft’s documentation states that Copilot Studio can connect to MCP servers, and that MCP tools and resources can be exposed directly to agents. That means a knowledge layer like eGain’s can be pulled into Microsoft-centered workflows without rethinking the whole desktop experience.

For enterprises, that is a practical win. Employees do not want to toggle between a knowledge portal, a chat assistant, and a policy site just to answer one question. They want the assistant to surface the right answer inside the flow of work, whether that flow is a support queue, a service desk, or a Teams conversation. eGain’s connector story is aimed directly at that expectation.

The significance is even greater in regulated sectors. When answers are embedded into Copilot-based workflows, governance matters more because the assistant becomes an operational layer, not a novelty layer. If the content is stale or inconsistent, the enterprise risks turning convenience into liability. A governed knowledge source therefore becomes a control mechanism as much as an enablement mechanism.

Why Microsoft matters more than the others

Microsoft is not just another AI surface; it is the platform many large organizations already use for identity, productivity, collaboration, and security. That makes Copilot a natural distribution point for enterprise knowledge. If eGain can become the trusted content backbone inside that ecosystem, it gains a strong position in large account deployments where buying decisions tend to favor existing vendor relationships.

That said, Microsoft also raises the bar. The more central Copilot becomes, the less tolerance enterprises have for knowledge errors. A mistake in a side chatbot is annoying; a mistake in a mainstream productivity assistant can become a governance incident. That is exactly why the promise of certified answers matters so much.

Claude, Gemini CLI, and Cursor: Why Developer Surfaces Matter

The inclusion of Claude, Gemini CLI, and Cursor shows that eGain is not only targeting service teams and business users. It is also targeting developers, builders, and technical operators who increasingly live inside AI-native environments. That is important because developers are often the first group to adopt new AI workflows, and their tooling choices can shape the architecture of enterprise deployments.

Anthropic’s own documentation emphasizes MCP as a way to connect Claude and Claude Code to external tools and data sources. eGain’s MCP guide similarly lists Claude and Cursor among supported hosts, while also mentioning Gemini CLI. That alignment suggests eGain is trying to make its knowledge layer available wherever AI-assisted work is happening, not just where business users sit.

This is strategically smart because developer tools are where AI adoption becomes sticky. Once internal teams trust a knowledge source inside their editor, terminal, or coding assistant, they are more likely to reuse it in other workflows. The connector then becomes part of the organization’s operational muscle memory.

Developer productivity meets governance

For technical teams, the promise is not just faster answers. It is fewer context switches and fewer assumptions. If a developer can query internal standards, service procedures, or platform runbooks from inside Cursor or Gemini CLI, the productivity gain can be meaningful. More importantly, the organization gets a better chance of keeping technical advice aligned with official policy.

This can improve several areas at once:

- Onboarding for new developers and support engineers.

- Internal support for IT and operations teams.

- Policy retrieval during incident response.

- Consistency in code-adjacent knowledge workflows.

- Reduction in tribal knowledge held by a few experts.

The Knowledge Management Thesis

This release is really a vote for knowledge management as AI infrastructure. That may sound old-fashioned in a market obsessed with foundation models, but it is precisely the kind of argument enterprises are starting to take seriously. A better model cannot fully compensate for fragmented, outdated, or contradictory enterprise knowledge.

eGain has been making that case for a while. Its earlier product messaging around Trusted Knowledge and AI CX automation stressed that AI needs a reliable content layer to deliver usable answers. The company’s annual reporting also highlights open APIs and third-party connectors as part of its strategy to help customers integrate enterprise assets into a single view of the customer and improve compliance with policies and best practices. The new connectors extend that philosophy into the modern AI stack.

The deeper insight is that enterprise AI is becoming less about raw model capability and more about knowledge operationalization. Companies are discovering that success depends on how quickly they can locate the right answer, certify it, govern it, and keep it current. In that sense, eGain is not selling “knowledge” in the abstract; it is selling a system for maintaining the knowledge that AI should be allowed to use.

From content repository to decision layer

That shift matters because enterprises increasingly want AI to influence decisions, not just answer questions. If an assistant can recommend next steps, draft responses, or trigger workflows, then the knowledge behind those actions must be managed with the same seriousness as policy or code. That is where eGain’s platform story becomes more compelling.

The strongest parts of this thesis are:

- AI performance depends heavily on knowledge quality.

- Governance is harder to bolt on after deployment.

- Shared knowledge reduces inconsistency across teams.

- Traceability supports compliance and auditing.

- Content freshness directly affects user trust.

Competitive Positioning

From a market perspective, eGain is competing in a crowded but still unsettled category. It is not trying to outmodel OpenAI, Anthropic, or Google. Instead, it is trying to sit underneath them as the knowledge governance layer that makes their outputs enterprise-safe. That is a clever position because the model layer remains volatile while the knowledge layer may be stickier over time.

The company also benefits from the fact that many major AI vendors are now embracing ecosystems rather than closed gardens. Microsoft supports MCP in Copilot Studio, Anthropic is actively promoting MCP across its own products, and other vendors are racing to make their tools available through the same protocol. That leaves room for specialists that can supply the content side of the equation.

At the same time, this is not a safe niche. Larger platform companies could eventually bundle enough governance, retrieval, and content controls into their own stacks to compress third-party opportunities. eGain therefore needs to keep proving that its depth in knowledge management, compliance, and content lifecycle management is not just useful, but distinctive.

Where eGain can win

Its best route to differentiation is probably not breadth, but depth. If it can show that its knowledge workflows are more trustworthy, more auditable, and more enterprise-ready than ad hoc connector stacks, it can remain relevant even as the AI tooling market consolidates. That is especially true in service-heavy industries where knowledge quality directly affects cost and customer satisfaction.

Potential advantages include:

- A longstanding knowledge management focus.

- Strong positioning in regulated environments.

- MCP alignment across multiple AI ecosystems.

- Emphasis on certified answers and traceability.

- Existing customer familiarity with eGain’s content workflows.

Enterprise vs. Consumer Impact

The enterprise use case is far more convincing than any consumer story here. Consumers care about convenience and novelty, but enterprises care about accuracy, compliance, and reproducibility. eGain’s connectors are designed for the latter, and that is where the economic value is easier to defend.

For enterprise IT, the benefits are obvious. A governed knowledge source reduces the risk that employees receive inconsistent answers from different assistants. It also helps compliance teams enforce policy, maintain audit trails, and control which knowledge can be surfaced in which environment. In a world where AI is increasingly embedded into everyday work, that control becomes a form of operational hygiene.

Consumer AI, by contrast, is more forgiving. If a consumer assistant gets a recommendation slightly wrong, the outcome is usually annoyance. If an enterprise assistant gets procurement guidance, HR policy, or incident-response advice wrong, the outcome can be far more costly. That asymmetry is why the connector story should resonate more with CIOs than with hobbyists.

Why this matters for buyer behavior

Enterprise buyers are also becoming more skeptical of “AI for AI’s sake.” They want to know what business process improves, what compliance risk drops, and what ownership model keeps the knowledge current. eGain’s platform language speaks directly to those questions. It does not promise magic; it promises governance, consistency, and cleaner integration.

That could support purchases in:

- Customer service and contact center operations.

- Internal help desk and employee support.

- IT knowledge workflows.

- Regulated business units.

- Developer enablement and runbook automation.

Strengths and Opportunities

eGain’s biggest strength is that it is attacking a real pain point rather than chasing AI hype. Enterprises already know that fragmented knowledge creates bad answers, inconsistent policies, and avoidable compliance exposure. By positioning its connectors as a governed layer across major AI platforms, the company can ride a demand wave that is likely to grow as agentic AI becomes more common.

Its second strength is timing. MCP is emerging as a widely accepted interoperability standard, and that makes eGain’s integration story feel future-facing rather than experimental. The company is also benefiting from the market’s growing recognition that context quality matters as much as model quality.

- Strong fit with the enterprise governance agenda.

- MCP alignment reduces integration friction.

- Cross-platform reach across Microsoft, Anthropic, Google, and Cursor.

- Traceable answers support compliance-heavy workflows.

- Developer accessibility broadens adoption beyond service teams.

- Composable architecture helps customers avoid lock-in.

- Knowledge lifecycle controls can improve content freshness.

Risks and Concerns

The biggest risk is that “governed knowledge” sounds essential but can be difficult to prove in practice. Enterprises may initially be enthusiastic about connectors, then discover that the real work is content cleanup, ownership, and process discipline. If the knowledge base is weak, the connector cannot hide that weakness for long.

There is also competitive pressure from the platforms themselves. Microsoft, Anthropic, Google, and other ecosystem players may continue to improve native knowledge and connector capabilities, which could reduce the need for a specialized third-party layer. In that scenario, eGain would need to prove that its depth is worth the additional procurement and integration effort.

- Implementation complexity may slow enterprise adoption.

- Content quality debt can undermine the value of the connectors.

- Native platform features could compete with third-party layers.

- Security and permissions must be handled with great care.

- Buyer confusion may arise if governance is not clearly differentiated.

- Change management could become the hidden cost of deployment.

- Market consolidation might compress specialized vendors over time.

What to Watch Next

The most important question now is whether eGain can turn this connector launch into a broader ecosystem story. If the company follows up with concrete customer wins, proof points, and measurable improvements in answer quality or compliance posture, the release will feel like the start of a durable strategy rather than a one-off announcement. If not, the market may treat it as another incremental integration in a very noisy category.

The next wave of interest will likely focus on adoption patterns. Which customers connect eGain to Copilot first? Do technical teams use it more heavily than service teams? And can the company show that MCP-based access actually reduces support friction, improves answer consistency, and shortens time to resolution? Those are the metrics that will matter.

Key indicators to monitor

- New customer references in regulated or high-compliance industries.

- Expansions beyond pilot deployments into production use.

- Evidence of measurable gains in answer accuracy or deflection.

- Broader support for additional AI clients and agent tools.

- Deeper proof that the MCP layer simplifies enterprise integration.

- Signs that eGain can translate connector adoption into platform stickiness.

- Competitive responses from Microsoft-centric and AI-native rivals.

The most likely near-term outcome is not a dramatic redefinition of the market, but a steady re-rating of what enterprise AI infrastructure actually means. The companies that win may not be the ones with the loudest models, but the ones that can supply the most reliable context. If eGain can keep making that case with real customer traction, it may find that the humble knowledge layer is one of the most valuable positions in the AI stack.

Source: www.quiverquant.com https://www.quiverquant.com/news/eG...+Anthropic+Claude,+Google+Gemini,+and+Cursor/