OpenAI’s GPT-5.5 is arriving in Microsoft Foundry as another clear sign that the enterprise AI race has moved past novelty and into operations. Microsoft is positioning the model not just as a smarter assistant, but as a production-ready component for agentic systems that need persistence, tool use, and governance. The bigger story is not simply that a new frontier model is available; it is that Microsoft is continuing to turn Foundry into the layer where advanced models become manageable enterprise systems.

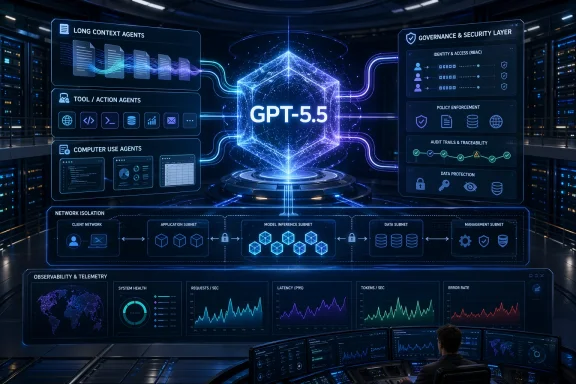

Background

Microsoft’s announcement follows a familiar pattern that has now become central to its AI strategy: OpenAI releases a stronger model, and Microsoft immediately frames it inside a larger platform story. That was true when GPT-5 arrived in Azure AI Foundry, where Microsoft said the model paired frontier reasoning with enterprise-grade deployment and production confidence. The GPT-5.5 announcement extends that arc by emphasizing deeper long-context reasoning, better agentic execution, improved computer-use accuracy, and token efficiency.

The progression also reflects how Microsoft now talks about AI value. The company is no longer pitching models as standalone chat engines. Instead, it is describing an ecosystem in which models, orchestration, identity, telemetry, and policy must all work together. That philosophy is visible in Foundry Agent Service, which Microsoft defines as a fully managed platform for building, deploying, and scaling AI agents with hosting, observability, identity, and enterprise security built in.

This matters because the enterprise AI market has learned, sometimes painfully, that the leap from demo to deployment is not about raw benchmark scores alone. It is about durable workflows, controlled access, testability, and the ability to trace every action an AI system takes. Microsoft’s documentation makes that point directly by describing agents as systems that combine a model, instructions, and tools, then adding that Foundry handles the operational burden around them.

In that context, GPT-5.5 is best understood as a product that serves two audiences at once. For developers, it is a more capable model for difficult reasoning, coding, and computer-use tasks. For platform teams, it is a new workload that Foundry is expected to absorb without making governance harder. That dual audience is the real theme of the launch.

There is also an important historical thread here: Microsoft’s Azure OpenAI Service originally brought premium OpenAI models to the enterprise with Microsoft’s security and cloud controls, and Azure AI Foundry later expanded that story into a broader platform for apps and agents. Microsoft’s current Foundry messaging shows the evolution from access to orchestration to operationalization. GPT-5.5 sits squarely in that third phase.

What GPT-5.5 Adds

GPT-5.5 is being framed as a model for professional work where mistakes are expensive and persistence matters. Microsoft’s own description emphasizes coding, computer use, research, document synthesis, and long-context analysis, which suggests a focus on workflows that break simpler models. The premium GPT-5.5 Pro variant pushes that further for the highest-complexity tasks.

The most notable improvement is not one feature, but the combination of reasoning depth, agentic reliability, and token efficiency. In practical terms, that means the model is being tuned to hold onto a task longer, take more steps with fewer resets, and spend less compute to get to the answer. That is especially valuable for production agents that must operate at scale rather than merely impress in a demo.

Microsoft also stresses computer-use accuracy. That phrase deserves attention, because computer-use systems tend to fail in small but costly ways: clicking the wrong element, losing state, or misunderstanding an interface that changed slightly. A better computer-use model is not just a convenience feature; it is an operational prerequisite for serious agent deployment.

Why the details matter

The distinction between “better reasoning” and “better operations” is subtle but important. Many AI announcements highlight intelligence, but enterprise buyers care about whether the model can keep working after a dozen turns, a partial failure, or a messy file tree. GPT-5.5’s positioning suggests Microsoft thinks those are the real differentiators now.

- Long-context reasoning helps with large documents, repositories, and multi-session histories.

- Agentic execution matters for end-to-end workflows rather than isolated prompts.

- Computer-use reliability reduces brittle UI automation failures.

- Token efficiency lowers cost and latency across repeated production runs.

- GPT-5.5 Pro appears aimed at the highest-value enterprise scenarios.

Why Microsoft Foundry Matters

Microsoft Foundry is doing the heavy lifting in this story. Microsoft describes Foundry Agent Service as a fully managed environment that handles hosting, scaling, identity, observability, and enterprise security, while allowing customers to use many models from the catalog and multiple frameworks. In other words, Foundry is the control plane that makes frontier models feel like enterprise software.

That framing is important because raw model access is no longer enough. Foundry is increasingly being presented as the system that turns a model into a governed service, with policy, telemetry, and deployment boundaries attached. Microsoft is effectively arguing that the differentiator in enterprise AI is not just what model you use, but where and how it runs.

The platform also supports multiple agent styles, including prompt agents, workflow agents, and hosted agents. That gives enterprises a path from quick experiments to structured automation without switching stacks every time they mature a use case. The result is an architecture meant to reduce friction as teams move from prototype to production.

The platform layer as the real product

Microsoft’s pitch is, in effect, that enterprise customers are buying more than a model catalog. They are buying a runtime, a governance model, and an operations layer that can absorb multiple frameworks and multiple deployment patterns. That is a stronger moat than raw benchmark leadership because it creates switching costs around enterprise workflows.

- Identity and RBAC help map AI actions to enterprise access rules.

- Observability makes agent decisions auditable.

- Virtual network isolation supports stricter deployment boundaries.

- Publishing tools let teams version and distribute stable agent endpoints.

- Built-in tools reduce the need to assemble infrastructure from scratch.

Agentic Workflows Move to the Center

The deeper strategic shift here is Microsoft’s belief that AI value increasingly comes from agents, not isolated prompts. Foundry Agent Service is designed around agents that can reason, call tools, access data, and take multi-step actions. That is a very different production problem from classic chatbots.

Microsoft’s own documentation makes this architecture explicit. An agent is made up of a model, instructions, and tools, and Foundry handles the runtime burden around that combination. That approach gives developers room to focus on business logic while the platform manages the mechanics of scaling and deployment.

GPT-5.5 seems tuned for that reality. A model that can sustain context across large systems and multi-session histories is more useful for workflows than one that produces a strong answer in a single turn. In enterprise settings, the ability to stay oriented is often more valuable than a flash of brilliance.
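The model, instructions, and tools split described above can be pictured with a small data structure. This is a minimal sketch in plain Python, not the Foundry Agent Service SDK: the `Agent` class, the `lookup_order` tool, and the `"gpt-5.5"` deployment label are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class Agent:
    """Hypothetical container mirroring the model + instructions + tools split."""
    model: str          # deployment label (assumed, not an official identifier)
    instructions: str   # system-level guidance for the agent
    tools: Dict[str, Callable[..., str]] = field(default_factory=dict)

    def call_tool(self, name: str, **kwargs) -> str:
        # Refuse tools that were never registered rather than guessing.
        if name not in self.tools:
            raise KeyError(f"tool not registered: {name}")
        return self.tools[name](**kwargs)


def lookup_order(order_id: str) -> str:
    # Stand-in for a real data source; returns a canned status string.
    return f"order {order_id}: shipped"


agent = Agent(
    model="gpt-5.5",
    instructions="Answer order questions using the lookup_order tool.",
    tools={"lookup_order": lookup_order},
)
print(agent.call_tool("lookup_order", order_id="A-17"))
```

In a managed platform the hosting, scaling, and identity concerns would sit outside this object, which is the division of labor the documentation describes.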

From prompt to production

Microsoft is also broadening the kinds of agentic systems it supports. Prompt agents are the low-friction starting point, workflow agents help orchestrate repeatable processes, and hosted agents bring in code-based control for more sophisticated behavior. That spectrum matters because different teams mature at different speeds.

- Prompt agents are best for fast prototyping.

- Workflow agents fit repeatable automation with approvals.

- Hosted agents suit custom frameworks and complex logic.

- YAML-based workflows make some orchestration visible and reviewable.

- Human-in-the-loop steps remain part of the design for controlled execution.
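The idea of a repeatable workflow with approval gates can be sketched in a few lines. The example below is an illustration only and does not reflect Foundry's YAML workflow format: the `run_workflow` function, the step names, and the `approve` callback are hypothetical.

```python
from typing import Callable, List, Tuple

# Each step is (name, requires_human_approval).
Step = Tuple[str, bool]


def run_workflow(steps: List[Step], approve: Callable[[str], bool]) -> List[str]:
    """Run steps in order; halt at the first gated step a human rejects."""
    log: List[str] = []
    for name, needs_approval in steps:
        if needs_approval and not approve(name):
            log.append(f"halted:{name}")
            break
        log.append(f"done:{name}")
    return log


steps = [
    ("draft_refund", False),
    ("issue_refund", True),     # gated: a person must sign off
    ("notify_customer", False),
]

# Simulate a reviewer who rejects every gated step.
print(run_workflow(steps, approve=lambda name: False))
# ['done:draft_refund', 'halted:issue_refund']
```

Keeping the gate as an explicit flag on each step is one way to make the controlled parts of a process visible and reviewable, which is the property the list above attributes to declarative workflows.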

Coding, Computer Use, and the Software Lifecycle

One of the strongest implications of GPT-5.5 is its role in software engineering. Microsoft highlights multi-step engineering tasks, architectural diagnosis, and codebase-wide reasoning, all of which point to deeper support for development workflows. That is significant because software engineering remains one of the clearest proving grounds for agentic AI.

The ability to reason through a fix before making a change is especially important. In real codebases, a patch often has ripple effects that affect tests, dependencies, interfaces, and release pipelines. A model that anticipates those relationships is more valuable than one that simply edits a file quickly.

GPT-5.5’s improved computer-use accuracy also broadens its usefulness beyond code generation. UI-driven business systems, legacy admin consoles, and internal enterprise portals still dominate many workflows. A model that can navigate those interfaces more reliably can unlock automation where APIs are missing or incomplete.

Why developers should care

For engineering teams, the most practical benefit may be reduced back-and-forth. Fewer retries and fewer errors mean less time spent validating output and more time shipping. That is where the token efficiency claim becomes more than a cost metric; it becomes a throughput metric.

- Refactoring can benefit from larger contextual awareness.

- Test generation becomes more useful when the model understands dependencies.

- Documentation is more credible when it reflects the structure of the codebase.

- Migration work can be planned with broader system awareness.

- UI automation becomes more viable when computer-use is precise.

Enterprise Governance and Security

The most important enterprise differentiator in this announcement is still governance. Microsoft is not just selling intelligence; it is selling a controlled environment in which intelligence can be supervised. Foundry Agent Service offers Microsoft Entra identity, RBAC, content filters, and virtual network isolation, all of which are designed to reassure security teams.

That stack matters because agents are not passive systems. They can call tools, move data, and take actions. If those behaviors are not bounded, monitored, and permissioned, they become a compliance problem as fast as they become a productivity gain. Microsoft’s messaging is clearly trying to front-load that reality.

The platform also provides end-to-end tracing and Application Insights integration, which is essential for operational debugging. Enterprises rarely fail because they cannot launch an AI agent; they fail because they cannot explain what it did, why it did it, or what data it touched. Observability is not optional in that world.

Governance is the buying criterion

For many organizations, model quality is only one part of the decision. The other part is whether the AI system can be made to fit internal policy, residency requirements, and audit obligations. That is why Microsoft continues to emphasize enterprise-grade trust as a platform feature rather than an afterthought.

- Microsoft Entra identity ties agents to enterprise access controls.

- RBAC helps limit what an agent can do.

- Content filters support safety and policy enforcement.

- Virtual network isolation helps protect sensitive deployments.

- Tracing and metrics support auditability and incident response.
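To make the pairing of access control and auditability concrete, here is a minimal sketch of a permission check that records every decision. It is plain Python under stated assumptions and does not call Microsoft Entra, Azure RBAC, or Application Insights: the `ROLE_TOOLS` mapping, the role names, and the `AuditedGate` class are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set

# Hypothetical role-to-tool mapping; a real deployment would source
# this from its identity provider rather than a hard-coded dict.
ROLE_TOOLS: Dict[str, Set[str]] = {
    "reader": {"search_docs"},
    "operator": {"search_docs", "update_ticket"},
}


@dataclass
class AuditedGate:
    role: str
    trace: List[dict] = field(default_factory=list)

    def authorize(self, tool: str) -> bool:
        allowed = tool in ROLE_TOOLS.get(self.role, set())
        # Record every decision, including denials, so a run can be
        # explained after the fact.
        self.trace.append({"role": self.role, "tool": tool, "allowed": allowed})
        return allowed


gate = AuditedGate(role="reader")
print(gate.authorize("search_docs"))    # True
print(gate.authorize("update_ticket"))  # False
print(len(gate.trace))                  # 2
```

Logging denials alongside approvals matters here: the questions raised above (what the agent did, why, and what data it touched) can only be answered if refused actions leave a record too.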

Pricing, Efficiency, and Scale

Microsoft published pricing for GPT-5.5 and GPT-5.5 Pro alongside the announcement, which tells us a lot about how it wants the model to be used. The standard GPT-5.5 tier is priced at $5 per million input tokens and $30 per million output tokens, while GPT-5.5 Pro rises to $30 and $180 respectively. That spread signals a clear segmentation between routine enterprise use and premium deep-reasoning workloads.

The pricing structure also reinforces the importance of token efficiency. Microsoft is explicitly arguing that GPT-5.5 can reach better outputs with fewer tokens and fewer retries. In production, that matters as much as raw capability because repeated calls and long outputs can become a major cost center.
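The per-token rates quoted above translate into per-request costs with simple arithmetic. The sketch below uses the published figures; the tier keys (`"gpt-5.5"`, `"gpt-5.5-pro"`) are labels chosen for this example, and the 20,000-input, 2,000-output request is a hypothetical workload.

```python
# Per-million-token prices quoted in the announcement (USD).
PRICES = {
    "gpt-5.5": {"input": 5.0, "output": 30.0},
    "gpt-5.5-pro": {"input": 30.0, "output": 180.0},
}


def request_cost(tier: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of one request at the given tier."""
    p = PRICES[tier]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


# Hypothetical request: 20,000 input tokens and 2,000 output tokens.
print(request_cost("gpt-5.5", 20_000, 2_000))      # 0.16
print(request_cost("gpt-5.5-pro", 20_000, 2_000))  # 0.96
```

For this request shape the Pro tier costs six times as much, which matches the sixfold gap in both the input and output rates.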

There is a broader pattern here. In past announcements, Microsoft has repeatedly linked Foundry to cost-efficiency through routing, model choice, and managed deployment. GPT-5 in Azure AI Foundry was positioned as part of a system that could reduce inference costs while preserving fidelity. GPT-5.5 appears to continue that same strategic line.

Economics at enterprise scale

The real economic story is less about sticker price and more about workload shape. A model that finishes tasks faster, uses fewer retries, and preserves context more effectively can reduce hidden operational costs. Those savings often matter more than the nominal per-token rate.

- Lower retries reduce wasteful compute.

- Higher accuracy reduces human review time.

- Better context retention lowers rework.

- Premium pricing allows clear workload separation.

- Platform integration can simplify procurement and operations.
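The claim that retries can outweigh the nominal rate can be checked with a simple model. The sketch below assumes each attempt succeeds independently with a fixed probability and that a task is retried until it succeeds, so the expected number of attempts is 1 divided by the success rate. The costs and success rates are hypothetical figures, not measurements of any model.

```python
def expected_cost_per_success(cost_per_attempt: float, success_rate: float) -> float:
    """Expected spend per completed task when retrying until success.

    Assumes independent attempts with a fixed success probability,
    so the expected attempt count is 1 / success_rate.
    """
    if not 0.0 < success_rate <= 1.0:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_attempt / success_rate


# Hypothetical comparison: a pricier but more reliable option versus
# a cheaper option that needs more retries.
reliable = expected_cost_per_success(0.16, 0.8)
cheaper = expected_cost_per_success(0.12, 0.5)
print(round(reliable, 4), round(cheaper, 4))
```

Under these assumed numbers the option with the higher per-attempt price works out to roughly $0.20 per completed task, against roughly $0.24 for the nominally cheaper one. The model ignores human review time and rework, which would widen the gap further.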

Competitive Implications

GPT-5.5 in Foundry is as much a platform announcement as it is a model announcement. It keeps Microsoft tightly coupled to OpenAI’s frontier roadmap while also reinforcing Azure’s role as the enterprise distribution channel. That combination remains a formidable competitive position.

For rivals, the challenge is not only matching model quality. They must also offer identity, governance, workflow orchestration, observability, and a credible production path. Microsoft is trying to make those platform layers feel inseparable from the model itself. That is a strong retention strategy.

The announcement also shows how the enterprise AI market has matured. Earlier waves were about access to a powerful model. Now the question is which vendor can run the most agents safely, at scale, with the least friction. That shift is subtle but commercially decisive.

Rivals face a broader benchmark

Microsoft’s move raises the bar for the entire category. A platform that combines frontier reasoning, controlled deployment, and managed agents makes it harder for competitors to win purely on model novelty. They need a platform story that is equally credible, or better.

- Cloud vendors must match both model access and enterprise controls.

- Model labs need distribution as well as intelligence.

- Workflow platforms need deeper reasoning and better agent execution.

- SaaS providers must explain why their AI layer is more operationally complete.

- Enterprises gain leverage by comparing ecosystems, not just models.

Strengths and Opportunities

GPT-5.5 in Microsoft Foundry has several clear strengths, and the opportunity set is larger than a simple model launch would suggest. The combination of stronger reasoning, better agentic execution, and enterprise-grade hosting gives Microsoft a credible story for production workloads. It also gives customers a way to move from experimentation to operational deployment inside one platform.

- Stronger enterprise fit for regulated and high-stakes workflows.

- Better agent runtime support through Foundry Agent Service.

- Framework flexibility with Agent Framework, LangGraph, and SDK options.

- Improved observability for tracing and debugging agent behavior.

- Identity and access controls that align with enterprise security models.

- Token efficiency gains that can reduce production costs.

- Premium tiering for complex, high-value use cases.

Risks and Concerns

The launch is impressive, but it is not without risks. Any frontier model introduced into agentic workflows can amplify mistakes if the surrounding guardrails are weak. Microsoft’s own documentation on Agent Framework and third-party systems makes clear that responsibility for testing, safety, and compliance remains with the customer.

- Overreliance on autonomy could lead to silent failures in production.

- Computer-use errors may still create costly mistakes despite improvements.

- Complex integrations can increase operational and security risk.

- Third-party frameworks introduce governance and boundary questions.

- Premium pricing may limit use to high-value workloads.

- Model drift or tool mismatch can undermine repeatability.

- Human oversight gaps remain a concern in high-stakes settings.

Looking Ahead

The next phase will likely be defined by adoption patterns, not just launch headlines. Enterprises will want to know where GPT-5.5 consistently outperforms GPT-5, where the Pro tier is justified, and how much operational improvement Foundry Agent Service actually delivers in live systems. In other words, the proof point will be production telemetry, not marketing language.

Microsoft will also need to show that agentic AI can be both more capable and more governable over time. If Foundry can keep reducing the friction between model experimentation and enterprise deployment, it may become the default home for a growing share of business AI workloads. If not, customers may continue to split their stack across multiple vendors and frameworks.

The strategic watch items are fairly clear. Each one will reveal whether GPT-5.5 is simply a stronger model, or another step toward a more durable enterprise AI operating model.

- How quickly enterprises move GPT-5.5 into production

- Whether GPT-5.5 Pro finds a real market among high-complexity workloads

- How Foundry Agent Service performs under large-scale agent deployment

- Whether token efficiency translates into measurable cost savings

- How rivals respond with their own platform-and-model combinations

Source: Microsoft Azure OpenAI’s GPT-5.5 in Microsoft Foundry: Frontier intelligence on an enterprise ready platform | Microsoft Azure Blog