Microsoft Research's latest paper and demonstrations make a bold promise: a laser-etched glass archival system that can store terabytes of data in a coaster‑sized plate and survive for millennia—potentially 10,000 years or more—without powered maintenance. What was once a laboratory curiosity has been presented as a full end‑to‑end archival system: write, read, decode, and validate, with automated microscopes and machine‑learning decoders doing the heavy lifting. For organizations wrestling with explosive data growth and the repeated costs and risks of media refresh cycles, this approach reframes archival storage as a materials and optics problem rather than a purely magnetic or semiconductor engineering one.

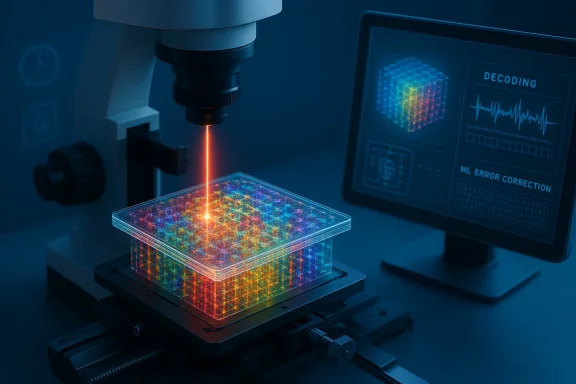

Source: IEEE Spectrum

Background and overview

Glass as a data medium is not new: researchers have been experimenting with femtosecond‑laser inscription in silica and quartz for more than a decade. The recent work from Microsoft’s Project Silica advances the idea from isolated demonstrations to a complete, quantifiable archival system with published density, throughput, energy numbers, and accelerated‑aging evidence. The team demonstrated a glass plate roughly the size of a small coaster, written in hundreds of stacked layers of microscopic marks (voxels), storing about 4.8 terabytes of data in a 120 mm × 120 mm × 2 mm plate, and they report a volumetric density of about 1.59 gigabits per cubic millimeter.

What distinguishes this generation of glass storage is the engineering of the write modalities, the automation of the read path, and an explicit durability evaluation. The researchers developed two voxel types, phase voxels and birefringent voxels, that can each be written reliably with a single femtosecond laser pulse, enabling much faster, energy‑efficient writing than earlier multi‑pulse schemes. Reading is performed with polarization microscopy and an automated stage, while the decoding chain uses machine learning to deconvolve optical interference and correct errors. Critically, the team also ran accelerated ageing tests that support claims of data stability measured in thousands to tens of thousands of years under expected storage conditions.

How the technology works

Laser pulses, voxels, and 3‑D encoding

At the core of the system are ultrashort laser pulses from femtosecond lasers, which deposit energy in a tiny focal volume inside transparent glass. That energy creates localized, permanent modifications to the glass’s optical properties. Instead of etching the surface, the system writes voxels inside the bulk material and stacks hundreds of layers across the glass thickness to exploit the third spatial dimension. The system uses two voxel types:

- Phase voxels change the refractive index uniformly, producing an isotropic optical change that can be detected by microscopy.

- Birefringent voxels create direction‑dependent refractive changes that encode extra degrees of freedom (orientation-dependent signals), increasing per‑voxel information density.

Readout: optics meets machine learning

Reading a written glass plate requires capturing optical images of each layer under polarized light. Rather than relying on a single human‑tuned threshold, the read pipeline in this system digitizes 2‑D images from each layer and feeds them to a decoder that uses machine‑learning models to correct for optical cross talk, slight misalignments, and material heterogeneity.

Automation is central: the microscope captures each layer in series, stitching millions of voxel images into a bitstream. Error correction and redundancy are built in to cope with manufacturing and reading noise, delivering bit‑perfect reconstruction across billions of bits in the experiments.
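To make the role of error correction concrete, here is a minimal sketch of a single-error-correcting code. The article does not say which code the read pipeline uses, so the textbook Hamming(7,4) code is swapped in here purely as an illustration; the function names are hypothetical and a production archive would almost certainly use a far stronger scheme.

```python
# Illustrative only: Hamming(7,4) is an assumption, not the code used in the
# system described in the article.

def hamming74_encode(data_bits):
    """Encode 4 data bits [d1, d2, d3, d4] into a 7-bit codeword."""
    d1, d2, d3, d4 = data_bits
    p1 = d1 ^ d2 ^ d4  # parity over codeword positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over codeword positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # parity over codeword positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(codeword):
    """Correct at most one flipped bit and return the 4 data bits."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    error_pos = s1 + 2 * s2 + 4 * s3  # 1-based position; 0 means no error
    if error_pos:
        c[error_pos - 1] ^= 1
    return [c[2], c[4], c[5], c[6]]


data = [1, 0, 1, 1]
word = hamming74_encode(data)
word[4] ^= 1  # simulate one bit misread during optical readout
recovered = hamming74_decode(word)
print(recovered == data)
```

The point of the sketch is the trade: three extra bits per four data bits buy the ability to repair any single misread bit in the block, which is the kind of redundancy that lets a noisy optical read path still return exact data.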

Speed and energy characteristics

Earlier glass‑writing methods often required multiple pulses per voxel, limiting throughput and inflating energy costs. By engineering voxels that require a single pulse, the system reduces energy per bit and allows continuous scanning of the write focus.

- The laboratory system reports a per‑beam write throughput on the order of tens of megabits per second and an energy efficiency of roughly 10 nanojoules per bit in the demonstrated regimes.

- The researchers also demonstrated parallelization possibilities—for example, splitting the laser into multiple beams or using multiple write heads to scale aggregate write speed.
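A rough sketch of what these rates mean in practice follows. The per-beam rate of 20 Mbit/s is an assumed stand-in for "tens of megabits per second", the plate size is the roughly 4.8 TB reported for the demonstration (decimal terabytes assumed), and linear scaling with beam count is an idealization that ignores stage motion, verification passes, and other overheads. The function names are hypothetical.

```python
import math

PLATE_BYTES = 4.8e12   # ~4.8 TB per plate; decimal terabytes assumed
BEAM_RATE_BPS = 20e6   # ASSUMED 20 Mbit/s per beam, not a figure from the article


def write_time_days(n_beams, plate_bytes=PLATE_BYTES, beam_rate_bps=BEAM_RATE_BPS):
    """Days to fill one plate if throughput scales linearly with beam count."""
    if n_beams < 1:
        raise ValueError("n_beams must be at least 1")
    seconds = plate_bytes * 8 / (beam_rate_bps * n_beams)
    return seconds / 86_400


def beams_for_target(target_bytes_per_s, beam_rate_bps=BEAM_RATE_BPS):
    """Smallest beam count whose ideal aggregate rate meets the target."""
    return math.ceil(target_bytes_per_s * 8 / beam_rate_bps)


for n in (1, 4, 16, 64):
    print(f"{n:3d} beam(s): {write_time_days(n):6.2f} days per plate")
print("beams needed for 100 MB/s:", beams_for_target(100e6))
```

Under these assumptions a single beam needs about three weeks to fill a plate, which is why parallel beams or multiple write heads matter so much for any practical deployment.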

The headline numbers (what was actually demonstrated)

- Capacity demonstrated: ~4.8 terabytes written into a 120 mm × 120 mm × 2 mm glass plate.

- Layering: ~301 layers stacked through the depth of the 2 mm plate.

- Volumetric density: ~1.59 Gbit mm−3.

- Per‑beam write throughput demonstrated: tens of megabits per second (single‑beam numbers in the experimental setup). Parallelization strategies suggest higher effective throughput in future implementations.

- Energy per bit (demonstrated regime): ~10.1 nJ/bit.

- Longevity evidence: accelerated ageing tests and thermal stress experiments that support projected stability measured in thousands to >10,000 years at ambient temperatures.
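The figures above can be cross-checked with simple arithmetic. The sketch below assumes decimal terabytes and treats the whole 120 mm × 120 mm × 2 mm plate as storage volume; the variable names are illustrative. The energy figure counts only the quoted per-bit number, not laser wall-plug efficiency, stage motion, or cooling.

```python
PLATE_MM = (120.0, 120.0, 2.0)  # plate dimensions from the article
CAPACITY_BYTES = 4.8e12         # ~4.8 TB; decimal terabytes assumed
LAYERS = 301
ENERGY_PER_BIT_J = 10.1e-9      # ~10.1 nJ/bit
REPORTED_DENSITY = 1.59         # Gbit/mm^3, as reported

volume_mm3 = PLATE_MM[0] * PLATE_MM[1] * PLATE_MM[2]
total_bits = CAPACITY_BYTES * 8

# Density averaged over the entire plate, margins included.
avg_density = total_bits / 1e9 / volume_mm3

# Average payload per layer, in gigabytes.
gb_per_layer = CAPACITY_BYTES / LAYERS / 1e9

# Upper bound on layer spacing if the layers spanned the full thickness.
max_layer_pitch_um = PLATE_MM[2] / LAYERS * 1000

# Energy to write a full plate at the quoted per-bit figure (1 kWh = 3.6e6 J).
write_energy_kwh = total_bits * ENERGY_PER_BIT_J / 3.6e6

print(f"plate volume:         {volume_mm3:.0f} mm^3")
print(f"whole-plate density:  {avg_density:.2f} Gbit/mm^3 (reported: {REPORTED_DENSITY})")
print(f"data per layer:       {gb_per_layer:.1f} GB")
print(f"layer pitch (max):    {max_layer_pitch_um:.2f} um")
print(f"write energy:         {write_energy_kwh:.3f} kWh")
```

The whole-plate average comes out near 1.33 Gbit/mm³, below the reported 1.59 Gbit/mm³. One plausible reading, which the article does not confirm, is that the reported density is measured over the written region only, excluding unwritten margins near the plate edges and surfaces.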

Why this matters: strengths and practical benefits

1. Durability and low maintenance

Glass, especially high‑purity fused silica, is chemically inert, resistant to moisture and electromagnetic interference, and stable across wide temperature ranges. Once written, a glass plate requires no powered environment to retain its data; there’s no spin, no refresh cycles, and no moving media maintenance. For archivists, the promise is simple: write once, store passively, and avoid the recurring costs and risks of media migration.

2. Density for archival use

Stacking information through the glass thickness multiplies capacity per unit of plate area without resorting to extreme surface lithography techniques. For applications where data are written once and accessed rarely (scientific archives, cultural heritage, legal records), the reported terabyte‑scale per‑plate capacity is compelling. A single, robot‑managed vault of glass plates could hold years of data in a stable, air‑gapped form.

3. Energy and environmental gains over time

The life‑cycle carbon and energy costs of maintaining disk arrays and tape libraries, along with periodic data refresh cycles, are nontrivial. A long‑lived, maintenance‑free archive could reduce ongoing energy usage substantially. For cold, write‑once archival tiers, glass could present an energy‑efficient alternative.

4. Physical security and tamper resistance

A sealed glass plate offers intrinsic protection against electromagnetic tampering and remote alteration. The physical medium is easily sealed and stored offline in secure facilities. For specific classes of irreplaceable records, that physical barrier is a feature.

The caveats, constraints, and real risks

No technology is a silver bullet. The glass‑storage approach, powerful as it is, comes with important limitations and risks that every systems architect and archivist must weigh.

1. Write and read latency vs operational needs

Write speeds, even with single‑pulse voxels and per‑beam rates of tens of megabits per second, are orders of magnitude slower than modern HDDs and SSDs. This makes glass suitable only for the coldest archival tiers, where data are written rarely and read even more rarely.

Reading is also slow in absolute terms: an automated microscope must capture many high‑resolution images across hundreds of layers. Use cases that require frequent retrieval or low‑latency access are not a fit.

2. Specialized hardware and cost

Femtosecond lasers, high‑precision scanners, polarization microscopes, and automated stages are the backbone of the system. These are expensive instruments compared with tape drives and commodity disk arrays. Until economies of scale or alternative low‑cost write paths emerge, capital costs for writer and reader hardware will be significant.

3. Format and reader obsolescence

The longevity of the physical marks is only one half of the preservation equation. The other is readability: future generations must have the hardware, optics, and decoding algorithms necessary to interpret the voxels. If reading systems are proprietary or tied to single vendors, archival value is at risk.

This is a nontrivial concern: preserving the ability to decode data requires careful standards, open formats, or at least widely distributed reference readers and decoders archived alongside the glass plates.

4. Fragility and catastrophic physical damage

Glass is stable chemically, but it is brittle. A smashed plate is difficult to reconstruct. Long‑term archival strategies must therefore factor in physical redundancy, multiple geographically dispersed copies, and protective enclosures. Accelerated ageing tests may not address all failure modes (e.g., impact, deliberate destruction, or chemical corrosion in hostile environments).

5. Vendor and supply‑chain dependency

Large‑scale adoption would create new dependencies: femtosecond laser suppliers, optics manufacturers, and specialized robotics firms. Concentration of these supply chains or closed IP could raise costs, slow innovation, and centralize archival control, conditions that archivists and public institutions may find uncomfortable.

6. Security tradeoffs

While air‑gapped glass plates resist remote hacking, they can be physically stolen and require strict custodial practices. Moreover, because the read chain depends on complex optics and ML decoding, any compromise in the reader or decoder software could corrupt retrieval, so software supply‑chain security is important.

Practical adoption pathways: how organizations could use glass archival plates

Glass-based archival storage is not a drop‑in replacement for existing storage tiers. Instead, reasonable near‑term adoption models focus on write‑once, long‑term copies of high-value datasets. Here are practical steps organizations could take to pilot and adopt the technology responsibly.

Recommended phased approach

- Proof‑of‑concept and integration testing

  - Choose a small set of truly long‑lived assets: raw scientific datasets, master audiovisual archives, legal records.

  - Write duplicate plates and test retrieval under various controlled conditions.

- Metadata and format governance

  - Store metadata and decoding specifications in open, well‑documented formats.

  - Archive reference readers (hardware blueprints, firmware images, decoder software) alongside plates on multiple media types.

- Redundancy and geographic dispersion

  - Implement N‑copy strategies: multiple plates in separate vaults with different environmental profiles.

  - Pair glass plates with traditional backups; glass is an additional layer, not a single point of truth.

- Automated vaults and robotics

  - Integrate glass plates into robot‑managed archival stacks to manage retrieval latency and throughput efficiently.

  - Test robot reliability for handling fragile media across expected life cycles.

- Interoperability and standards

  - Collaborate on open standards for encoding, error correction, and file systems for glass media.

  - Encourage multiple hardware vendors to implement compatible readers.
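The N‑copy recommendation in the list above can be reasoned about with a back-of-the-envelope model. This sketch assumes each copy is lost independently with the same probability over the planning horizon, which is exactly what geographic dispersion tries to approximate; correlated risks such as a shared vendor, a shared decoder, or a regional disaster break that assumption. The loss probabilities are hypothetical placeholders, not measured values.

```python
def prob_all_copies_lost(p_single, n_copies):
    """Probability that every copy is lost, assuming independent losses."""
    if not 0.0 <= p_single <= 1.0:
        raise ValueError("p_single must be between 0 and 1")
    if n_copies < 1:
        raise ValueError("n_copies must be at least 1")
    return p_single ** n_copies


def copies_needed(p_single, target):
    """Smallest number of copies with total-loss probability at or below target."""
    if not 0.0 < p_single < 1.0:
        raise ValueError("p_single must be strictly between 0 and 1")
    if target <= 0.0:
        raise ValueError("target must be positive")
    n = 1
    while p_single ** n > target:
        n += 1
    return n


P_SINGLE = 0.01  # HYPOTHETICAL chance of losing any one plate over the horizon
for n in (1, 2, 3):
    print(f"{n} copies: total-loss probability {prob_all_copies_lost(P_SINGLE, n):.0e}")
print("copies for a 1e-5 target:", copies_needed(P_SINGLE, 1e-5))
```

The model also shows why pairing glass with a different medium is worthwhile: a second technology is less likely to share failure causes with the first, so the independence assumption holds better than it would for identical plates in similar vaults.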

Use cases that make sense today

- National and cultural archives preserving irreplaceable records.

- Scientific data repositories with long‑tail retention requirements.

- Film and media studios storing "master" copies of culturally valuable movies in the highest fidelity.

- Cold cloud archival tiers that require extremely low maintenance and long lifespans.

What the experiments do — and what they don’t yet show

The work that produced the headline 4.8 TB plate and the longevity projections is important because it moves glass inscription from artful demos to a system demonstration: automated writing/reading, error correction, and accelerated ageing tests across billions of bits. The experiments show that the physics and decoding approaches are viable at scale, not just a laboratory trick on a few megabits.

However, several questions remain unresolved or only partially addressed:

- Production economics: what is the cost per terabyte at scale when factoring in hardware amortization, labor, and redundancy?

- Operational tooling: can write/read systems be ruggedized, miniaturized, and made affordable for routine use by archives?

- Damage recovery: how well can data be recovered if a plate is cracked into multiple fragments?

- Standards and long‑term readability: will future archivists be able to reconstruct and decode data if vendor‑specific software and hardware are unavailable?

Security, legal and ethical considerations

Adopting a new physical format for storing society’s memory raises governance questions.

- Access control and custody: physical media require secure custody models. Policy should define who is authorized to access plates and how chain of custody is maintained.

- Authenticity and provenance: long‑lived archives must support provenance tracking so future readers can verify authenticity and detect tampering.

- Privacy and retention law: countries and institutions have differing retention requirements for personal and sensitive data. A 10,000‑year retention medium obliges archivists to consider legal limits and ethical risks of indefinite retention.

- Open standards and public interest: to avoid vendor lock‑in and maintain public trust, nations and cultural institutions should push for open encoding standards and broad access to reader specifications.

The competitive landscape and who else is working on long‑term media

Glass inscription is one of several approaches aimed at long‑term archival preservation. Other contenders include:

- DNA data storage, which promises extremely high density but currently faces read/write cost and stability questions.

- 5D glass memory explored by other groups and startups, which uses additional optical degrees of freedom to push density claims even higher.

- Ceramic and quartz inscription projects from legacy electronics firms, using different laser/etching and encoding strategies.

Roadmap: technical milestones to watch

For glass archival to become a mainstream archival tier, expect the following milestones to be crucial:

- Reduction in per‑unit writer and reader hardware cost via industrialization or alternative low‑cost write heads.

- Increased parallelism in writing (many beams / many heads) to raise aggregate throughput to tens or hundreds of MB/sec.

- Standardized, open encoding formats and published reader specifications to guarantee long‑term decodeability.

- Ruggedized plate enclosures and validated physical‑damage recovery techniques.

- Broader third‑party validation of accelerated‑aging claims across different glass chemistries and environmental stressors.
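The last milestone concerns how short, hot laboratory tests get turned into multi-millennium projections. The article does not say which extrapolation model the researchers used; the sketch below assumes the Arrhenius relation, a common choice for thermally activated degradation. The activation energy, temperatures, and test duration in the usage lines are hypothetical placeholders, not values from the experiments.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def acceleration_factor(ea_ev, t_use_c, t_stress_c):
    """Arrhenius acceleration factor between a stress and a use temperature.

    ea_ev is the activation energy (eV) of the assumed degradation mechanism.
    """
    t_use_k = t_use_c + 273.15
    t_stress_k = t_stress_c + 273.15
    return math.exp(ea_ev / BOLTZMANN_EV_PER_K * (1.0 / t_use_k - 1.0 / t_stress_k))


def projected_lifetime_years(hours_at_stress, ea_ev, t_use_c, t_stress_c):
    """Use-temperature lifetime implied by surviving a given time at stress."""
    factor = acceleration_factor(ea_ev, t_use_c, t_stress_c)
    return hours_at_stress * factor / (24 * 365.25)


# HYPOTHETICAL inputs, chosen only to show how the functions are called.
factor = acceleration_factor(ea_ev=1.0, t_use_c=25.0, t_stress_c=200.0)
years = projected_lifetime_years(100.0, ea_ev=1.0, t_use_c=25.0, t_stress_c=200.0)
print(f"acceleration factor: {factor:.3g}, projected lifetime: {years:.3g} years")
```

Because the activation energy sits inside an exponential, small errors in it change the projection by orders of magnitude, and the model covers only thermally driven decay. That sensitivity is the reason independent validation across glass chemistries and other stressors matters.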

Practical checklist for IT and archive managers

If you’re responsible for long‑term archives and want to evaluate glass storage, start here:

- Evaluate which assets truly require millennial‑scale retention versus typical long‑term retention (30–100 years).

- Insist on open, documented encoding formats and archived decoders before committing to any proprietary plates.

- Require demonstrable tests for damage recovery and validation of accelerated‑age extrapolations under varied environmental stresses.

- Plan for physical redundancy: multiple plates, multiple sites, and at least one alternative media copy.

- Budget for hardware lifecycle: writers and readers will need maintenance and eventually replacement; archive the tools and firmware images used for write/read operations.

Conclusion

Laser‑etched glass as an archival medium marks an important convergence of optics, materials science, and systems engineering. The recent system demonstrations show that glass plates can hold terabytes of data in a compact, chemically inert form and that carefully controlled inscriptions can survive accelerated ageing tests consistent with lifetimes measured in thousands of years. For institutions whose primary concerns are long‑term stability and low ongoing maintenance costs, glass archival plates are a compelling addition to the archival toolkit.

That said, the technology is not a turnkey replacement for existing storage layers. Real adoption will demand careful handling of cost, standardization, physical custody, and reader availability. The promise of “write once, preserve for millennia” is real, but fulfilling it responsibly requires a broader ecosystem: open formats, multiple vendors, standards bodies, and archival best practices that treat the reader and decoder as part of the object being preserved. If those pieces come together, glass could become one of the most important technologies we design for cultural and scientific continuity.
