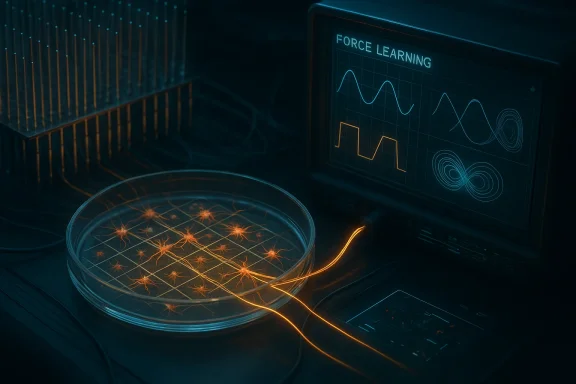

Researchers at Tohoku University and Future University Hakodate have turned a living rat cortical network into something that behaves, in a limited but striking sense, like a real-time AI computer. In experiments published in the Proceedings of the National Academy of Sciences on March 12, the team trained cultured neurons to generate target waveforms through a closed-loop reservoir computing system, using electrical stimulation, high-density electrode recording, and real-time FORCE learning. The result is not a brain in a box, but it is a meaningful step toward biohybrid computing and, potentially, future brain-machine interfaces that use living tissue as part of the control loop.

Overview

The headline result is easy to oversell if stripped of context, so it is worth being precise about what the researchers actually built. They did not teach rat neurons to “think” in a human sense, nor did they create a general-purpose biological replacement for a GPU. They created a carefully engineered system in which cultured neurons, arranged in a controlled physical structure, could be trained to produce time-varying signals and then adapt to feedback in a way that resembles machine learning. That is a smaller claim than sci-fi headlines suggest, but it is also much more scientifically important.

This work sits at the intersection of neuroscience, microfabrication, and reservoir computing. The living neurons provided a dynamic substrate, the microelectrode array captured activity, and the FORCE learning algorithm adjusted the output mapping in real time. According to the report, the setup could learn periodic signals such as sine, triangle, and square waves, and could even approximate a Lorenz attractor, which is a classic chaotic system used to test whether a model can reproduce complex temporal dynamics. The study’s broader implication is that living neural cultures may function as a novel computational medium under the right physical constraints.

The key technical insight was that structure matters. Unpatterned neuronal cultures tend to become highly synchronized and relatively low in computational diversity, which is a problem for reservoir computing because the system needs rich, varied dynamics rather than lockstep firing. By using PDMS microfluidic films to constrain where cell bodies could grow and how they could connect, the researchers increased the dimensionality of the network’s activity and reduced pairwise correlations. That transformed the cultures from noisy biological soup into something closer to an engineered information-processing substrate.

The implications are intriguing for both future brain-machine interfaces and the broader neuromorphic field. If living tissue can be shaped into a stable, trainable dynamic system, it could someday contribute to adaptive controllers, low-power biological sensors, or hybrid prosthetic interfaces. But the research also underscores how fragile these systems are: when feedback was removed, performance degraded quickly, and the loop latency limited the ability to track fast-changing waveforms. In other words, this is a proof of concept, not a production-ready platform.

Background

Biohybrid computing has long promised something unusual: not just copying the brain’s algorithms in silicon, but integrating actual living neurons into computational systems. That idea has surfaced in different forms over the years, from dissociated neural cultures on electrodes to organoid-based experiments and neuromorphic hardware inspired by synapses and plasticity. What makes the Japanese team’s work notable is that it does not merely observe spontaneous neural activity; it actively trains a living network in a closed loop, using feedback to shape output behavior in real time.

This matters because biological networks are not naturally convenient computers. They are messy, adaptive, and nonlinear, which is exactly why they are so interesting to scientists. Yet that same messiness makes them hard to control. The challenge is to preserve enough diversity in the activity patterns to support computation without letting the network collapse into synchronized behavior that carries little useful information. The study’s use of microfluidic patterning is a direct response to that problem, converting a biological culture into a more intentionally arranged computational substrate.

Reservoir computing is a particularly apt framework for such experiments. In classical reservoir systems, one keeps a complex dynamic core relatively fixed and trains only a readout layer to map those dynamics to desired outputs. FORCE learning takes that idea further by adjusting the decoder online as the system runs. In this experiment, the neuronal culture served as the reservoir, the linear readout extracted the target signal, and the feedback loop sent the output back into the neurons through stimulation. That architecture is elegant because it uses the network’s natural dynamics instead of trying to force the biology to behave like conventional digital logic.
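
The online readout update described above can be sketched in a few lines. This is a minimal illustration of the recursive least squares (RLS) rule that FORCE learning uses to adjust readout weights, not the study's implementation. The reservoir is a hypothetical stand-in built from six sinusoids (`toy_state`), the regularization constant `alpha` and the target phase are assumed values, and the feedback of the output into the reservoir, which the living system depends on, is left out for brevity.

```python
import math

DT = 0.333  # seconds per update, matching the roughly 333 ms loop in the article


def toy_state(t):
    """Hypothetical reservoir state: sin/cos pairs at 4 s, 10 s and 30 s periods."""
    state = []
    for period in (4.0, 10.0, 30.0):
        phase = 2.0 * math.pi * t / period
        state.extend([math.sin(phase), math.cos(phase)])
    return state


def rls_step(w, P, r, target):
    """Update readout weights w and matrix P in place; return the pre-update error."""
    n = len(w)
    error = sum(wi * ri for wi, ri in zip(w, r)) - target
    Pr = [sum(P[i][j] * r[j] for j in range(n)) for i in range(n)]
    gain = 1.0 / (1.0 + sum(ri * pri for ri, pri in zip(r, Pr)))
    for i in range(n):
        for j in range(n):
            P[i][j] -= gain * Pr[i] * Pr[j]  # rank-one update of P
        w[i] -= gain * error * Pr[i]         # move the weights against the error
    return error


def train(steps=600, alpha=1.0):
    """Train the readout toward a 10 s sine; return the absolute error per step."""
    n = 6
    w = [0.0] * n
    P = [[(1.0 / alpha if i == j else 0.0) for j in range(n)] for i in range(n)]
    errors = []
    for k in range(steps):
        t = k * DT
        target = math.sin(2.0 * math.pi * t / 10.0 + 0.5)
        errors.append(abs(rls_step(w, P, toy_state(t), target)))
    return errors


errors = train()
print(f"first error {errors[0]:.3f}, mean of last 30 {sum(errors[-30:]) / 30:.4f}")
```

Only the readout weights change here, which mirrors the reservoir computing idea of leaving the dynamic core alone. In the experiment the core was the culture itself, and its state also depended on the stimulation fed back from the output.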

The approach also reflects a broader shift in AI engineering toward embodied or physical computation. In silicon systems, the appeal of analog or neuromorphic approaches is often energy efficiency, latency reduction, or richer event-driven processing. Living neurons take that logic one step further by bringing actual biological plasticity into the loop. That does not automatically make them better than digital systems, but it does open a different design space—one where computation is shaped not only by code and circuits, but by growth, connectivity, and cellular excitability.

How the Living Neuron System Worked

The hardware stack was central to the experiment’s success. The researchers combined cultured rat cortical neurons with a 26,400-electrode array on a 17.5-micrometer pitch, then used microfluidic films to impose a physical layout on the cells. The array recorded spike trains, those trains were filtered into continuous signals, and a linear decoder translated them into output waveforms. That output was then fed back as electrical stimulation, creating the closed loop that allowed the culture to adapt.

The timing also mattered. The loop cycled at roughly 333 milliseconds, which is slow by digital-computing standards but still fast enough to support real-time training of seconds-scale waveforms. The researchers used FORCE learning to optimize the readout weights continuously, minimizing the distance between the culture’s output and the desired target. That allowed the same neural preparation to be retrained for different frequencies and waveform shapes, which is an important sign that the system was not merely a one-off coincidence.
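
The path from recorded spikes to an output value can be illustrated with a small sketch. The article does not say which filter the researchers used, so the exponential (leaky integrator) filter, its time constant `tau`, the spike counts, and the weights below are all assumptions chosen for illustration.

```python
import math


def filter_spikes(spike_counts, dt=0.333, tau=1.0):
    """Turn binned spike counts into a smooth trace with a leaky integrator.

    dt is the bin width in seconds (the article's roughly 333 ms cycle);
    tau is an assumed decay constant, not a value from the study.
    """
    decay = math.exp(-dt / tau)
    trace, level = [], 0.0
    for count in spike_counts:
        level = decay * level + count  # old activity fades, new spikes add
        trace.append(level)
    return trace


def decode(weights, states):
    """Linear readout: weighted sum of the filtered channel values."""
    return sum(w * x for w, x in zip(weights, states))


# Two hypothetical channels with made-up spike counts per bin.
channels = [[2, 0, 0, 1, 0], [0, 1, 1, 0, 3]]
traces = [filter_spikes(c) for c in channels]
weights = [0.5, -0.25]  # illustrative values; in the study these were learned
output = [decode(weights, [tr[k] for tr in traces]) for k in range(5)]
print([round(v, 3) for v in output])
```

In the closed loop, each decoded value would also set the next electrical stimulus, so the filter and the decoder sit on the critical path of every cycle.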

Why Feedback Is the Core Innovation

In ordinary neural culture experiments, activity is recorded passively. Here, the culture was not just observed; it was conditioned through feedback. That is the difference between a biological recording and a computational loop. The stimulation altered the future state of the network, and the readout adapted as the network responded, which is closer to how control systems work in robotics than how traditional bench neuroscience is usually done.

The feedback architecture also exposes an important limitation. When the loop stopped, the system’s autonomous performance deteriorated sharply, and the reported mean squared error increased in 99% of trials. That suggests the learned behavior was not deeply self-sustaining in the way a stable digital model would be. Instead, it was a fragile emergent pattern that depended on continued interaction with the decoder and stimulation pathway.
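
Mean squared error, the metric cited above, is simple to compute. The sketch below uses synthetic sequences only: `tracking` and `drifting` are made-up stand-ins for an output that follows the target and one that fades, and neither reproduces the study's data.

```python
import math


def mean_squared_error(output, target):
    """Average of squared differences between two equal-length sequences."""
    if len(output) != len(target):
        raise ValueError("sequences must have the same length")
    return sum((o - t) ** 2 for o, t in zip(output, target)) / len(target)


# Synthetic illustration only: a 10 s sine target sampled every 0.333 s.
times = [k * 0.333 for k in range(90)]
target = [math.sin(2 * math.pi * t / 10.0) for t in times]
tracking = [0.95 * y for y in target]  # stays close to the target
drifting = [y * math.exp(-t / 5.0) for y, t in zip(target, times)]  # fades over time

print(mean_squared_error(tracking, target) < mean_squared_error(drifting, target))
```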

Key takeaways from the architecture:

- The system used living rat cortical neurons as the dynamic core.

- A high-density 26,400-electrode array captured the electrical activity.

- FORCE learning tuned the readout in real time.

- Electrical feedback completed the loop roughly every 333 milliseconds.

- The network could be retrained for different target signals.

Why Physical Patterning Mattered

The most important engineering lesson in the study may be that neurons need architectural discipline if they are going to compute reliably. Without patterning, cultured neurons tend to form densely interconnected, highly correlated networks that behave in a synchronized way. That kind of synchrony is biologically natural, but computationally limiting, because it reduces the network’s ability to produce diverse trajectories.

The researchers addressed this by confining neuronal cell bodies to 128 square wells, each around 100 by 100 micrometers, with about 14.6 neurons per well on average. Those wells were linked by microchannels, creating two different layouts: a lattice design with uniform nearest-neighbor connectivity and a hierarchical design with sparser multi-scale links. Both patterns significantly reduced pairwise correlations compared with unpatterned cultures, which increased computational diversity and improved learning.
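
The link between synchrony and low diversity can be made concrete with two statistics. The sketch below computes the mean pairwise Pearson correlation and a participation ratio, one common way to summarize effective dimensionality. The article does not say which dimensionality measure the study used, so the participation ratio is an assumption, and both data sets are synthetic.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(vx * vy)


def mean_pairwise_correlation(channels):
    """Average correlation over all distinct channel pairs."""
    n = len(channels)
    pairs = [(i, j) for i in range(n) for j in range(i + 1, n)]
    return sum(pearson(channels[i], channels[j]) for i, j in pairs) / len(pairs)


def participation_ratio(channels):
    """tr(C)^2 / tr(C^2) for the correlation matrix C; ranges from 1 to n."""
    n = len(channels)
    C = [[pearson(channels[i], channels[j]) for j in range(n)] for i in range(n)]
    trace = sum(C[i][i] for i in range(n))
    trace_sq = sum(C[i][j] * C[j][i] for i in range(n) for j in range(n))
    return trace ** 2 / trace_sq


random.seed(0)
shared = [random.gauss(0, 1) for _ in range(500)]
# Synthetic "synchronized" culture: every channel follows one shared signal.
synchronized = [[s + 0.2 * random.gauss(0, 1) for s in shared] for _ in range(8)]
# Synthetic "diverse" culture: channels fluctuate independently.
independent = [[random.gauss(0, 1) for _ in range(500)] for _ in range(8)]

for name, data in (("synchronized", synchronized), ("independent", independent)):
    print(name, round(mean_pairwise_correlation(data), 2), round(participation_ratio(data), 2))
```

When every channel follows the same signal, the participation ratio collapses toward 1, so a linear readout effectively has one feature to work with. Lower pairwise correlation leaves more independent directions for the decoder to combine.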

Lattice Versus Hierarchical Design

The lattice configuration outperformed the hierarchical one across the tested waveforms, likely because its denser intermodular connectivity produced higher firing rates and gave the decoder more usable signal. That is a useful reminder that “more brain-like” is not automatically “better” in an engineering sense. Sometimes the best design is the one that preserves enough structure to keep the network expressive, while still allowing the readout to extract enough information to work with.

The results also help explain why this field keeps returning to microfabrication. Biological computation is not just about the cells themselves; it is about how they are arranged. The microfluidic patterning effectively turned a culture dish into a programmable physical environment. That is a subtle but powerful move, because it makes the biology more legible to engineering methods without completely removing its adaptive character.

Important design lessons:

- Unpatterned cultures were too synchronized to learn the targets effectively.

- Physical confinement increased network dimensionality.

- The lattice performed better than the hierarchical layout.

- Higher firing rates improved decoder performance.

- Connectivity is as important as cell viability.

What the Neurons Learned

The demonstrator tasks were deliberately chosen to test temporal computation rather than simple classification. The system learned sine waves with periods of 4, 10, and 30 seconds, plus triangle and square waves. It also approximated a Lorenz attractor, which is particularly notable because chaotic systems are a tougher benchmark than periodic oscillations. If a biological reservoir can follow that kind of target during training, it suggests the network has enough dynamical richness to support more than rote signal replay.

That said, the achievement should not be mistaken for robust autonomous intelligence. The report notes that performance degraded after training was halted, which means the network did not naturally hold its learned state very well. In practical terms, the system behaved more like a trained adaptive controller than a self-sufficient biological model. That distinction matters a great deal when translating the work into future devices.
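
For reference, the three periodic target shapes can be generated as follows. This is a sketch under stated assumptions: the unit amplitude, the phase conventions, and the use of a 4-second period for the triangle and square waves are illustrative choices, since the article gives periods only for the sine targets.

```python
import math


def sine_wave(t, period):
    """Unit-amplitude sine with the given period in seconds."""
    return math.sin(2.0 * math.pi * t / period)


def square_wave(t, period):
    """+1 for the first half of each cycle, -1 for the second half."""
    return 1.0 if (t % period) < period / 2.0 else -1.0


def triangle_wave(t, period):
    """Linear ramp from -1 at the cycle start to +1 at mid-cycle and back."""
    phase = (t % period) / period  # position within the cycle, 0 to 1
    return 1.0 - 4.0 * abs(phase - 0.5)


# Sample one 4 s cycle at the article's roughly 333 ms loop interval.
times = [k * 0.333 for k in range(12)]
for name, wave in (("sine", sine_wave), ("triangle", triangle_wave), ("square", square_wave)):
    print(name, [round(wave(t, 4.0), 2) for t in times])
```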

Periodic and Chaotic Targets

Periodic waveforms are useful because they test whether the system can maintain timing and phase relationships. Chaotic targets are useful because they stress whether the network can track nonlinear, highly sensitive trajectories. Together, they provide a compact but meaningful range of benchmarks, showing that the culture can do more than emit generic oscillations.

The Lorenz attractor result is especially interesting because it points to the system’s ability to inhabit a higher-dimensional state space. That is one of the central promises of reservoir computing: the complex internal dynamics do the hard work, while the decoder handles the mapping. In this case, the living neurons were not just a novelty; they were a dynamic reservoir with enough richness to approximate a canonical chaos problem.
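
A Lorenz trajectory of the kind used as a chaotic target can be generated by numerically integrating the Lorenz equations. The sketch below uses the classic parameters (sigma = 10, rho = 28, beta = 8/3) and a fourth-order Runge-Kutta step. The parameters, step size, starting point, and any rescaling to the culture's seconds-scale loop are assumptions, because the article does not state how the target was prepared.

```python
def lorenz_derivative(state, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Right-hand side of the Lorenz equations for state (x, y, z)."""
    x, y, z = state
    return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)


def rk4_step(state, dt):
    """Advance the Lorenz system by dt with a fourth-order Runge-Kutta step."""
    def shifted(base, slope, scale):
        return tuple(b + scale * s for b, s in zip(base, slope))

    k1 = lorenz_derivative(state)
    k2 = lorenz_derivative(shifted(state, k1, dt / 2.0))
    k3 = lorenz_derivative(shifted(state, k2, dt / 2.0))
    k4 = lorenz_derivative(shifted(state, k3, dt))
    return tuple(
        s + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def lorenz_trajectory(steps, dt=0.01, start=(1.0, 1.0, 1.0)):
    """Return the start state followed by `steps` integrated states."""
    states = [start]
    for _ in range(steps):
        states.append(rk4_step(states[-1], dt))
    return states


trajectory = lorenz_trajectory(5000)
xs = [s[0] for s in trajectory]
print(f"x stays within [{min(xs):.1f}, {max(xs):.1f}] over {len(xs)} samples")
```

Unlike a sine wave, this signal never repeats exactly, and small errors grow quickly. That sensitivity is what makes it a harder target for a readout to follow.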

A few practical observations stand out:

- The culture could learn both simple periodic and more complex chaotic targets.

- The same preparation could be retrained for different outputs.

- Performance was better during active training than in autonomous mode.

- Faster, sharper waveforms remained hard to track.

- The system’s learning is real, but still bounded by biology and latency.
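
A rough calculation shows why latency bounds what the loop can track. The arithmetic below is a back-of-the-envelope sketch based on the article's approximate 333-millisecond cycle, not an analysis taken from the paper.

```python
LOOP_SECONDS = 0.333  # approximate loop cycle reported in the article


def updates_per_cycle(period_seconds, loop_seconds=LOOP_SECONDS):
    """How many feedback updates fit into one period of the target."""
    return period_seconds / loop_seconds


for period in (4, 10, 30):  # sine periods mentioned in the article
    print(f"{period:>2} s period: about {updates_per_cycle(period):.0f} updates per cycle")

# Highest frequency representable at this update rate (Nyquist limit), in Hz.
nyquist_hz = 1.0 / (2.0 * LOOP_SECONDS)
print(f"Nyquist limit: about {nyquist_hz:.1f} Hz")
```

A 4-second sine gets only about a dozen corrections per cycle, and the edge of a square wave cannot be resolved more finely than one loop step, which is consistent with sharper waveforms being harder to follow.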

Why This Matters for AI Research

This experiment is part of a wider conversation about what kinds of systems can serve as computational substrates in an era when conventional scaling faces physical and economic limits. Silicon AI keeps getting larger and more power-hungry, which has renewed interest in neuromorphic chips, analog accelerators, and biologically inspired architectures. Living neurons occupy the farthest end of that spectrum, but they also bring something unique: intrinsic adaptivity and native temporal processing that electronics still struggle to reproduce efficiently.

The work also challenges a common assumption in AI engineering, namely that intelligence must be fully digital to be useful. This study suggests that biological tissue can participate in a learning loop if the environment is engineered carefully enough. That does not displace transformer models or other mainstream AI systems, but it broadens the conversation about where computation can happen and what kind of physical substrate can support it.

Biohybrid Computing Versus Conventional AI

A biohybrid system is not competing on the same axis as a large language model. It is likely to matter more for control, sensing, adaptation, and perhaps low-power specialized tasks than for text generation or general reasoning. That means the most realistic near-term path is not consumer AI, but niche scientific and medical applications where the biology itself provides unique value.

It is also possible that the real breakthrough is methodological rather than product-oriented. The researchers showed that living networks can be patterned, trained, and interrogated with enough precision to support controlled computation. That kind of platform could become valuable even if it never becomes a mass-market computer. In science, useful tools are often more important than flashy end-state visions.

Potentially relevant implications:

- Neuromorphic design could borrow more from biological dynamics.

- Closed-loop training may become more important in hybrid systems.

- Low-power computation remains a long-term goal.

- Temporal signal processing may be a strong fit for living reservoirs.

- Scientific instrumentation could be the first beneficiary of this research.

Brain-Machine Interface Potential

The most obvious application path is not a laptop made of neurons, but a future brain-machine interface that blends living tissue with electronics for sensing or control. Because the system is already built around stimulation and recording, it naturally resembles the basic architecture of a neural interface. The difference is that here the cultured neurons are not just being read; they are actively being used as part of the computational loop.

That could matter for prosthetics, adaptive controllers, or neural repair platforms, where a biohybrid layer might one day help translate between biological signals and machine commands. A living reservoir might respond to subtle temporal patterns in a way that conventional digital models find expensive to simulate. But those possible uses remain speculative, and the jump from cultured neurons in a lab to therapeutic hardware in patients is enormous.

Medical and Assistive Uses

If the field matures, the first beneficiaries are more likely to be patients than consumers. Brain-machine interfaces need systems that can adapt to noisy, changing neural signals in real time. A living network that naturally exhibits rich dynamics could be a useful intermediary, although only if stability, safety, and reproducibility can be solved.

There is also a broader scientific payoff. Even if these systems never become implantable, they can provide a new way to study how structured neural populations process time-varying information. That could improve our understanding of cortical dynamics, learning, and synchronization. In that sense, the platform is both an engineering prototype and a neuroscience instrument.

Possible application lanes:

- Neural prosthetics with adaptive signal translation.

- Closed-loop sensing systems for biomedical devices.

- Research platforms for studying cortical computation.

- Hybrid controllers for low-power edge devices.

- Experimental interfaces between living tissue and silicon electronics.

Strengths and Opportunities

The study’s most obvious strength is that it transforms a speculative idea into a repeatable lab result. The use of patterning, feedback, and online learning shows a coherent engineering strategy rather than a one-off biological curiosity. That makes the work more credible as a foundation for future platforms, especially where time-domain computation matters.

This is also a genuinely interdisciplinary breakthrough. It sits at the overlap of neural engineering, microfluidics, and real-time machine learning, which means improvements in one area can improve the whole stack. That kind of cross-disciplinary leverage is often where major platform shifts begin.

- It demonstrates that living neurons can participate in closed-loop computation.

- It shows that physical patterning improves performance.

- It uses a high-density recording platform that captures fine-grained activity.

- It demonstrates real-time adaptation with FORCE learning.

- It suggests a path toward biohybrid neural interfaces.

- It offers a new tool for studying dynamic neural computation.

Risks and Concerns

The biggest caution is that the system remains deeply dependent on feedback and careful laboratory conditions. When the loop stops, performance falls off quickly, which means the learned dynamics are not yet robust enough for real-world deployment. That fragility is not a trivial detail; it defines the current ceiling of the technology.

There are also ethical and translational concerns. Even though these are cultured rat neurons rather than brains with consciousness, the use of living tissue in computational systems will inevitably raise questions about welfare, oversight, and acceptable use. As the field advances, those questions will become more prominent, not less.

- The system shows degraded autonomous performance when feedback is removed.

- The 333-millisecond latency limits fast waveform tracking.

- Biological cultures are inherently variable and fragile.

- Scaling from lab demo to device is a major translation challenge.

- Animal-derived neurons introduce ethical and regulatory complexity.

- Stability, reproducibility, and contamination control remain unresolved.

Looking Ahead

The most important next step is not to ask whether neurons can replace silicon, but to identify where living computation offers a genuine advantage. The most promising areas are likely to be narrow: adaptive control, time-series processing, and interface problems where biological dynamics are a feature rather than a bug. If the field stays disciplined, it could produce tools that complement conventional AI instead of competing with it head-on.

Researchers will also need to shrink latency and improve autonomy if they want these systems to move beyond demonstration experiments. The paper itself points toward specialized hardware and alternative filtering as possible fixes, which is exactly the sort of pragmatic engineering that can move a lab curiosity toward a platform technology. In the short term, that may matter more than grand claims about artificial general intelligence or synthetic brains.

- Reduce feedback delay with faster hardware.

- Improve long-term autonomous stability.

- Test whether other patterned geometries outperform the lattice.

- Explore scaling to more complex temporal tasks.

- Investigate whether similar methods work with other cell types.

Source: Tom's Hardware Researchers train living rat neurons to perform real-time AI computations — experiments could pave the way for new brain-machine interfaces