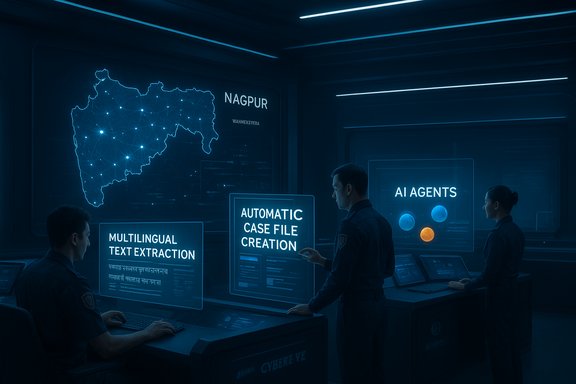

Maharashtra’s government and Microsoft unveiled MahaCrimeOS AI on December 12, 2025 — an AI-powered, cloud-native platform that promises to accelerate cybercrime investigations across the state by combining Microsoft Azure OpenAI Service, Microsoft Foundry, local ISV development, and a new state-level governance vehicle called MARVEL. The system is already live in a pilot covering 23 police stations in Nagpur and, according to officials, is slated to scale to all 1,100 police stations in Maharashtra — a deployment that, if completed, would make it the largest state-level AI policing rollout in India to date.

Background / Overview

MahaCrimeOS AI was unveiled during the Microsoft AI Tour event in Mumbai, where Maharashtra Chief Minister Devendra Fadnavis met Microsoft Chairman and CEO Satya Nadella. The public announcement framed the project as a model for “ethical and responsible AI for public good,” a phrase repeated by state and industry leaders during the launch. Microsoft described the platform as a collaboration between Microsoft India Development Center (IDC), CyberEye (the vendor/ISV), and the Maharashtra government’s Special Purpose Vehicle MARVEL. The rollout is presented as a targeted response to a steep rise in cybercrime complaints in India: government reporting and multiple press outlets cite roughly 3.6 million cybercrime and financial-fraud complaints logged on national portals in 2024, a number used to justify rapid investment in tools that can scale investigative capacity. Parallel to the announcement, Microsoft reiterated a broader investment and infrastructure push in India, including major cloud and AI spending commitments that are intended to support initiatives like MahaCrimeOS AI.

What is MahaCrimeOS AI?

Core components and partners

MahaCrimeOS AI is described as an integrated platform that pairs two Microsoft building blocks with three implementation partners:

- Microsoft Azure OpenAI Service — provides access to large language models and generative capabilities used for assistant-style interactions and information extraction.

- Microsoft Foundry — acts as the production platform for building, orchestrating and governing AI agents, enabling multi-agent workflows, observability, and enterprise governance.

- CyberEye — the vendor/ISV that built the solution tailored to police workflows.

- MARVEL — the Maharashtra government’s Special Purpose Vehicle (Maharashtra Advanced Research and Vigilance for Enforcement of Reformed Laws), created to pilot AI-driven public-safety solutions.

- Microsoft India Development Center (IDC) — providing engineering support and cloud infrastructure.

Key features being promoted

Officials and Microsoft executives highlighted a set of features aimed specifically at cyber investigators and frontline officers:

- Instant digital case-file creation: officers can create case records and structured digital files quickly using prebuilt workflows.

- Multilingual data extraction: AI-driven extraction and normalization of evidence from unstructured text, images and audio in regional languages.

- Contextual legal and procedural assistance: an AI-assisted knowledge base offering quick references to relevant statutes, procedural checklists and locally applicable guidance.

- Case-linking and pattern analysis: automated linking of related reports and identification of emergent patterns across cases.

- Secure cloud storage and role-based access: leveraging Azure security controls, RBAC and audit trails for chain-of-custody and investigation integrity.
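The extraction and normalization feature can be illustrated with a small deterministic sketch. CyberEye's actual pipeline has not been published, so the regular expressions, the assumed 12-digit payment-reference format and the helper names `normalize_digits` and `extract_indicators` are all hypothetical. One plausible design is for a language model to handle free-form regional-language text while a deterministic pass like this one validates and canonicalizes the identifiers it returns, so that the same phone number written in Devanagari or ASCII digits ends up as a single indicator.

```python
import ipaddress
import re
import unicodedata

# Hypothetical patterns; real phone, transaction and URL formats vary by provider.
PHONE_RE = re.compile(r"(?<!\d)(?:\+?91[\s-]?|0)?([6-9]\d{4})[\s-]?(\d{5})(?!\d)")  # Indian mobile
TXN_RE = re.compile(r"(?<!\d)\d{12}(?!\d)")  # assumed 12-digit payment reference
URL_RE = re.compile(r"https?://[^\s<>\"']+")
IPV4_RE = re.compile(r"(?<![\d.])(?:\d{1,3}\.){3}\d{1,3}(?!\.?\d)")


def normalize_digits(text: str) -> str:
    """Replace every Unicode decimal digit (Devanagari, Gujarati, ...) with ASCII 0-9."""
    out = []
    for ch in text:
        value = unicodedata.decimal(ch, None)  # None for anything that is not a digit
        out.append(str(value) if value is not None else ch)
    return "".join(out)


def extract_indicators(text: str) -> dict[str, list[str]]:
    """Return sorted, de-duplicated, canonicalized indicators found in free text."""
    text = normalize_digits(text)
    phones = {"+91" + head + tail for head, tail in PHONE_RE.findall(text)}
    txns = set(TXN_RE.findall(text))
    urls = {url.rstrip(".,;:)") for url in URL_RE.findall(text)}
    ips = set()
    for candidate in IPV4_RE.findall(text):
        try:
            ips.add(str(ipaddress.ip_address(candidate)))
        except ValueError:  # e.g. "999.1.1.1" looks like an address but is not one
            pass
    return {
        "phones": sorted(phones),
        "transaction_ids": sorted(txns),
        "urls": sorted(urls),
        "ips": sorted(ips),
    }
```

For example, a complaint mentioning `९८७६५ ४३२१०` and another mentioning `+91-98765-43210` both yield the indicator `+919876543210`. Canonical forms like this are what allow case-linking to treat the two complaints as involving the same number.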

Why Maharashtra is scaling this now

A sharp rise in reported digital crime

India’s public statistics and parliamentary disclosures point to a rapid increase in reported cyber fraud and complaints in recent years. Multiple government briefings and media reports cite roughly 3.6 million complaints in 2024, together with hundreds of billions of rupees lost to online scams and a growing need for specialized enforcement capacity. That surge is central to the government’s argument for large-scale digital tooling for police forces.

Strategic fit with Microsoft’s India program

Microsoft is deepening its investments in India, having announced multi-billion-dollar cloud and AI commitments during the same visit, which makes Azure and Foundry natural choices for a large public-safety deployment. The pairing lets Maharashtra tap a managed AI stack with enterprise-grade governance aids (observability, logging, RBAC) while benefiting from vendor integration through CyberEye and MARVEL.

Technical anatomy: how MahaCrimeOS AI is built (high level)

Foundry + Azure OpenAI as the foundation

Microsoft Foundry provides an enterprise-ready framework for building AI agents, orchestrated workflows and observability. In practice, the platform gives teams:

- Tools to build agents that perform defined tasks (e.g., extract entities from a complaint).

- Access to model catalogs (OpenAI and other models) and tooling to evaluate, monitor and rank models by performance and safety.

- Centralized monitoring, logging and policy enforcement that enterprises need for auditability.
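The evaluate-and-rank capability is easier to reason about with a concrete metric. The sketch below does not use any Foundry API; it assumes a small hand-labelled evaluation set in which each complaint has a gold-standard set of indicators, and scores each candidate model's extractions with micro-averaged precision, recall and F1. The model names and data are placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Score:
    model: str
    precision: float
    recall: float
    f1: float


def score_model(model: str, predictions: list[set[str]], gold: list[set[str]]) -> Score:
    """Micro-averaged precision, recall and F1 over a labelled evaluation set."""
    if len(predictions) != len(gold):
        raise ValueError("need one prediction set per labelled complaint")
    tp = fp = fn = 0
    for pred, truth in zip(predictions, gold):
        tp += len(pred & truth)  # indicators the model found correctly
        fp += len(pred - truth)  # spurious indicators (potential false leads)
        fn += len(truth - pred)  # indicators the model missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return Score(model, precision, recall, f1)


def rank_models(outputs: dict[str, list[set[str]]], gold: list[set[str]]) -> list[Score]:
    """Rank candidate models by F1, breaking ties on precision."""
    scores = [score_model(name, preds, gold) for name, preds in outputs.items()]
    return sorted(scores, key=lambda s: (s.f1, s.precision), reverse=True)
```

Breaking ties on precision is a judgment call: in an investigative setting a spurious indicator can send officers after the wrong account, so fewer false leads may be worth a little recall.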

Data flows and security

According to the platform descriptions, MahaCrimeOS integrates secure cloud storage, role-based access control, and automated audit trails. Typical flows described publicly include:

- Evidence intake (complaint, screenshots, logs) into a secure case workspace.

- AI-assisted extraction and normalization (phone numbers, transaction IDs, URLs, IP addresses).

- Automated linking against existing cases and open-source intelligence (OSINT) sources.

- Investigator review, annotations, and downstream actions (FIR drafting, evidence preservation orders).
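The automated-linking step can be sketched as grouping cases that share an indicator, either directly or through a chain of other cases. This is an assumption about how linking might work, not a description of MahaCrimeOS internals, and the case IDs and indicators are invented. The sketch uses a disjoint-set (union-find) structure, so that if case A shares a phone number with B and B shares a URL with C, all three land in one cluster.

```python
from collections import defaultdict


class DisjointSet:
    """Union-find over string keys, with path halving."""

    def __init__(self) -> None:
        self.parent: dict[str, str] = {}

    def find(self, x: str) -> str:
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def union(self, a: str, b: str) -> None:
        ra, rb = self.find(a), self.find(b)
        if ra != rb:
            self.parent[rb] = ra


def link_cases(cases: dict[str, set[str]]) -> list[list[str]]:
    """Group case IDs that share at least one indicator, directly or transitively."""
    dsu = DisjointSet()
    first_case_for: dict[str, str] = {}  # indicator -> first case that mentioned it
    for case_id, indicators in cases.items():
        dsu.find(case_id)  # register cases that share nothing, too
        for indicator in indicators:
            if indicator in first_case_for:
                dsu.union(first_case_for[indicator], case_id)
            else:
                first_case_for[indicator] = case_id
    groups: dict[str, list[str]] = defaultdict(list)
    for case_id in cases:
        groups[dsu.find(case_id)].append(case_id)
    return sorted(sorted(group) for group in groups.values())
```

Treating every shared indicator as a hard link is deliberately simple. A production system would likely need to weight or exclude very common indicators (a large payment gateway's domain, for instance), or a single popular value would merge unrelated cases.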

Deployment scale and timelines

- Pilot status: the platform is live in 23 police stations in Nagpur (pilot beginning in April 2025, expanded and hardened over the following months).

- Planned expansion: political and administrative leaders have stated an intention to expand MahaCrimeOS AI to all 1,100 police stations across Maharashtra, making it a statewide program if the timelines hold.

Comparative context: are other states doing the same?

MahaCrimeOS AI is being presented as a first-of-its-kind, state‑level AI copilot for cybercrime investigations. That claim is best read as a scale and focus distinction: other Indian states and agencies have adopted AI-assisted cybersecurity tools and built security operations centers (SOC) and indigenous AI platforms. For instance, Kerala has launched an Advanced Security Operations Centre (SOC) using a domestically developed AI platform called TRINETRA, aimed primarily at protecting critical infrastructure and police systems. National platforms also exist for reporting and certain automated FIR processes. MahaCrimeOS differs in being marketed as an AI-first investigative workflow that directly integrates with frontline police casework and intends to operate at an entire-state policing scale.

Strengths and potential upsides

- Operational acceleration: Automating extraction and triage can materially reduce the time from complaint to investigative lead, easing backlog pressure and enabling faster victim relief.

- Multilingual capability: India’s linguistic diversity makes automated multilingual processing a force-multiplier in evidence handling, particularly in rural and semi-urban stations.

- Standardized workflows: A common digital case format can improve evidence preservation, reduce inconsistent practices across stations, and support analytics that detect patterns across jurisdictions.

- Vendor + cloud governance: Using Foundry and Azure buys enterprise features—observability, RBAC, audit logs and policy control—out of the box, which is crucial for public accountability and court defensibility.

- Scale and public-private collaboration: The MARVEL vehicle and CyberEye partnership provide a mechanism to iteratively adapt the product to real police workflows, rather than imposing an off-the-shelf tool.
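A “common digital case format” is, at minimum, a shared schema that every station serializes the same way. The field names below are hypothetical and chosen only to show the idea: a typed record that round-trips through JSON unchanged, with `ensure_ascii=False` so regional-language summaries stay readable in exports.

```python
import json
from dataclasses import asdict, dataclass, field

HEX = set("0123456789abcdef")


@dataclass
class EvidenceItem:
    kind: str         # e.g. "screenshot", "call_log", "bank_statement"
    sha256: str       # content hash recorded at intake
    received_at: str  # ISO 8601 timestamp, UTC

    def __post_init__(self) -> None:
        if len(self.sha256) != 64 or not set(self.sha256) <= HEX:
            raise ValueError("sha256 must be 64 lowercase hex characters")


@dataclass
class CaseFile:
    case_id: str
    station: str
    complaint_language: str  # e.g. an ISO 639-1 code such as "mr" for Marathi
    summary: str
    indicators: list[str] = field(default_factory=list)
    evidence: list[EvidenceItem] = field(default_factory=list)

    def to_json(self) -> str:
        # ensure_ascii=False keeps Devanagari and other scripts human-readable.
        return json.dumps(asdict(self), ensure_ascii=False, sort_keys=True)

    @classmethod
    def from_json(cls, raw: str) -> "CaseFile":
        data = json.loads(raw)
        data["evidence"] = [EvidenceItem(**item) for item in data.get("evidence", [])]
        return cls(**data)
```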

Risks, unknowns and red flags (what to watch for)

While the promise is significant, large-scale AI deployments in policing also carry measurable risks. The following sections identify operational, technical, legal and civil-liberties concerns.

1) Data privacy, retention and scope creep

Police casework involves highly sensitive personal data, including financial records, device identifiers and private communications. Cloud-hosted processing raises questions about:

- Where data is physically stored and whether localized data-residency requirements apply.

- Retention policies and deletion rights for complainants and suspects.

- Clear limits on secondary use (analytics, model training) to prevent mission creep.
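Retention and secondary-use limits are only enforceable if they are expressed as checks that actually run. The sketch below encodes a hypothetical policy: the status labels, retention periods and permitted purposes are placeholders, not Maharashtra's rules, which have not been published in this form.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional

# Hypothetical policy values for illustration only.
RETENTION = {
    "closed_no_fir": timedelta(days=365),
    "closed_fir": timedelta(days=7 * 365),
}
PERMITTED_PURPOSES = {"investigation", "court_disclosure", "oversight_audit"}


@dataclass
class Record:
    case_id: str
    status: str  # e.g. "open", "closed_no_fir", "closed_fir"
    closed_on: Optional[date]


def due_for_deletion(records: list[Record], today: date) -> list[str]:
    """Return IDs of closed records held longer than their retention period."""
    due = []
    for r in records:
        period = RETENTION.get(r.status)  # open cases have no retention clock
        if period is not None and r.closed_on is not None and today - r.closed_on > period:
            due.append(r.case_id)
    return due


def check_purpose(purpose: str) -> None:
    """Refuse any use of case data outside the published purposes."""
    if purpose not in PERMITTED_PURPOSES:
        raise PermissionError(f"use of case data for {purpose!r} is not permitted")
```

Under this policy a request to reuse case data for `"model_training"` raises `PermissionError` rather than silently succeeding, which is the behavior a "clear limit on secondary use" implies.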

2) Model limitations, hallucinations and legal admissibility

Large language models can produce confident but incorrect answers (“hallucinations”). In a legal or investigative context, unsourced or mistaken AI suggestions could misdirect investigations or be misinterpreted as investigative findings. Critical considerations:

- AI outputs must be treated as assistive, not determinative.

- Audit logs must preserve the provenance of AI-suggested leads and distinguish AI-generated content from human findings.

- Courts and prosecutors will need robust chains of custody and documentation to accept AI-assisted evidence. Microsoft Foundry provides tracing and observability tooling, but operational discipline is required to ensure court-ready provenance.
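One way to keep AI-generated content distinguishable from human findings is to attach provenance to every lead when it is created and refuse to treat it as a finding until a named officer accepts it. The `Lead` record below is a hypothetical sketch of that rule; the field names and the `ai_lead` helper are assumptions. Hashing the prompt, rather than storing it inline, is just one option for keeping sensitive text out of summary views while still letting auditors match a lead to the exact prompt held in the full log.

```python
import hashlib
from dataclasses import dataclass
from typing import Optional


@dataclass
class Lead:
    text: str
    origin: str                          # "ai" or "human"
    model_id: Optional[str] = None       # required when origin == "ai"
    prompt_sha256: Optional[str] = None  # hash of the exact prompt sent to the model
    reviewed_by: Optional[str] = None    # officer who signed off
    accepted: Optional[bool] = None      # None until reviewed

    def __post_init__(self) -> None:
        if self.origin not in ("ai", "human"):
            raise ValueError("origin must be 'ai' or 'human'")
        if self.origin == "ai" and (self.model_id is None or self.prompt_sha256 is None):
            raise ValueError("AI-generated leads must record model and prompt provenance")

    def sign_off(self, officer_id: str, accepted: bool) -> None:
        """Record the human decision taken on this lead."""
        self.reviewed_by = officer_id
        self.accepted = accepted

    @property
    def usable_as_finding(self) -> bool:
        # Nothing becomes a finding without a named officer accepting it.
        return self.reviewed_by is not None and self.accepted is True


def ai_lead(text: str, model_id: str, prompt: str) -> Lead:
    """Create an AI-originated lead with its provenance attached."""
    digest = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
    return Lead(text, "ai", model_id=model_id, prompt_sha256=digest)
```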

3) Over-reliance on automation & skill erosion

If routine investigative tasks are fully offloaded to automated flows, there’s a risk that personnel stop developing or maintaining essential digital-forensics skills. Training programs and periodic manual-exercise requirements should be instituted to prevent institutional skill decay.

4) Bias and fairness in triage/prioritization

Algorithms that prioritize cases could inadvertently embed bias — deprioritizing victims from certain geographies or communities if training data reflects prior enforcement biases. Transparent evaluation metrics and fairness testing (a standard part of modern responsible AI toolchains) must be reported publicly to maintain trust. Microsoft’s model-evaluation and safety ranking tools can help, but independent third-party audits strengthen credibility.

5) Connectivity and digital divide

Rural stations may lack reliable bandwidth, modern devices, or trained staff. A statewide rollout to 1,100 stations will need to address last-mile connectivity and offline workflows that guarantee secure evidence capture even when cloud sync is delayed.

Governance, oversight and mitigation strategies

To responsibly operationalize MahaCrimeOS AI at scale, the following governance and operational safeguards are essential:

- Transparent data governance framework: publish clear policies on data residency, retention, access controls, and allowable secondary uses. Ensure citizens can understand how their complaint data is handled.

- Human-in-the-loop and fail-safes: require investigator signoff for AI-suggested leads, and document the human decision that acted on AI outputs.

- Auditability and immutable logs: enable immutable logging of evidence accession, AI prompts and model outputs so forensic reconstruction is feasible for courts and oversight bodies. Microsoft Foundry’s observability and tracing features provide technical support for this requirement.

- External audits and red-team testing: commission independent audits for privacy, fairness, security and legal defensibility. Regular red‑teaming should stress-test agent behaviors against adversarial prompts.

- Public accountability mechanisms: create an ombuds or review board with civil-society representation to review sensitive cases and policy decisions.

- Training and capacity-building: invest in continuous training programs for investigators on digital forensics, AI literacy, and evidence handling to prevent overdependence on automation.
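Immutable logging is often approximated with a hash chain: each entry commits to the hash of the previous entry, so editing or reordering an earlier record breaks verification of everything after it. The class below is a minimal sketch under that assumption and does not reflect Foundry's tracing format. A hash chain makes tampering detectable rather than impossible, and on its own it cannot detect truncation of the newest entries; in practice the latest hash would also be stored somewhere the log's operator cannot rewrite (write-once storage or an external notary), and each entry would carry a timestamp.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same body always hashes identically.
    canonical = json.dumps(body, sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log in which each entry commits to the previous entry's hash."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, actor: str, action: str, detail: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        # Snapshot detail so later changes to the caller's dict cannot alter the log.
        body = {"actor": actor, "action": action,
                "detail": json.loads(json.dumps(detail)), "prev": prev}
        digest = _digest(body)
        self.entries.append({**body, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every hash; an edited, reordered or removed non-final entry fails."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: entry[k] for k in ("actor", "action", "detail", "prev")}
            if entry["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```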

Operational recommendations (practical next steps)

- Prioritize pilot evaluation metrics: measure time-to-triage, lead conversion rate, false positives introduced by AI, and user satisfaction among investigators.

- Publish a phased expansion plan tied to objective milestones (connectivity readiness, training completion, audit results).

- Maintain a strict “no training on live crime data” rule unless explicit consent and anonymization safeguards are in place; create synthetic datasets for ongoing model tuning.

- Create a transparent appeals and correction process for citizens who believe AI-assisted handling of their case caused harm.

- Develop offline-first client applications and lightweight evidence ingestion tools for low-bandwidth environments.
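The pilot metrics in the first recommendation need precise definitions before they can be compared across stations. The sketch below assumes one possible set: median hours from registration to first triage, the share of AI-suggested leads that investigators acted on, and the share they rejected as wrong. These definitions and the `PilotCase` fields are hypothetical; an actual evaluation report would need to publish its own.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class PilotCase:
    registered: datetime  # complaint registered
    triaged: datetime     # first triage decision recorded
    ai_leads: int         # leads the AI suggested for this case
    leads_actioned: int   # AI leads an investigator accepted and acted on
    leads_rejected: int   # AI leads an investigator marked as wrong


def pilot_metrics(cases: list[PilotCase]) -> dict[str, float]:
    if not cases:
        raise ValueError("no pilot cases supplied")
    hours = [(c.triaged - c.registered).total_seconds() / 3600 for c in cases]
    total_leads = sum(c.ai_leads for c in cases)

    def share(n: int) -> float:
        return n / total_leads if total_leads else 0.0

    return {
        "median_hours_to_triage": median(hours),
        "lead_conversion_rate": share(sum(c.leads_actioned for c in cases)),
        "ai_false_positive_rate": share(sum(c.leads_rejected for c in cases)),
    }
```

Using the median rather than the mean keeps a handful of stalled cases from dominating the time-to-triage figure, which matters when comparing a small pilot against a statewide baseline.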

The political and social dimension

AI for policing sits at the intersection of public safety, civil liberties and governance. MahaCrimeOS AI arrives in a charged environment: citizens face a growing tide of online fraud and demands for faster relief; governments are under pressure to demonstrate responsive action; and global technology vendors are increasing investments in regional digital infrastructure.

This dynamic creates political momentum for rapid deployment. It also increases the imperative for legally grounded policies, independent oversight and public transparency. Without those safeguards, even well-intentioned systems risk eroding trust if errors, misuse, or opaque practices emerge.

What this means for other Indian states and the broader public-safety market

The MahaCrimeOS AI announcement signals a maturing market for vendor‑built, cloud-hosted investigative platforms tailored for public safety. States and federal agencies will watch the Nagpur pilot and Maharashtra’s expansion for evidence of measurable benefit and for lessons on governance and procurement.

Other states already investing in AI and SOC capabilities — such as Kerala’s TRINETRA SOC — indicate a plural marketplace: some governments prefer indigenous stacks for critical infrastructure; others prioritize rapid scale via established cloud providers. Maharashtra’s approach demonstrates one viable model: an ISV-built solution layered on top of a major cloud provider, managed through a governmental SPV for oversight.

Conclusion: potential, but conditional

MahaCrimeOS AI is a high‑profile example of how generative AI and agent frameworks can be applied to a concrete public-good problem: reducing the time and friction of cybercrime investigations across a large, diverse jurisdiction. The combination of Microsoft Foundry, Azure OpenAI Service and a local ISV working with a state SPV is technologically plausible and operationally attractive.

However, the program’s success will hinge on rigorous governance, independent oversight, careful operational rollouts, and ongoing public transparency about data use and model behavior. If Maharashtra can marry technical capability with robust legal and ethical guardrails — and demonstrate measurable improvements in investigative outcomes without compromising rights — MahaCrimeOS AI could become a template for responsible, scaled AI deployment in public safety. Conversely, if the project shortcuts governance for the sake of speed, the reputational and civil-liberties costs could be significant.

For voters, advocates and technologists watching this space, the clear markers to watch in the coming months are pilot evaluation reports, audit results, published data‑governance policies, and the practical experience of frontline investigators using the system in low-connectivity environments. The technology’s promise is real; whether it becomes a durable public‑service improvement depends on governance as much as code.

Source: Malaysia Sun https://www.malaysiasun.com/news/27...s-meets-satya-nadella-discusses-ai-potential/