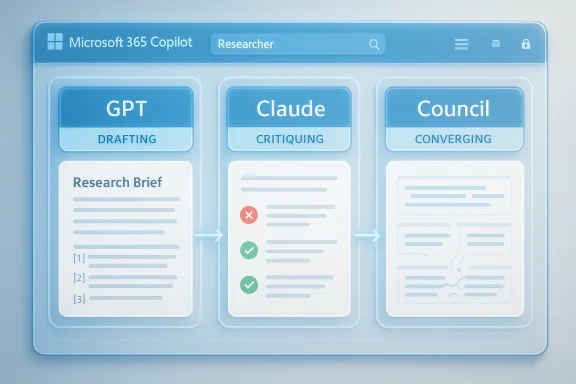

Microsoft 365 Copilot’s latest evolution is not just another model swap; it is a signal that the AI assistant race has moved from “who has the strongest single model” to “who can orchestrate the best multi-model workflow.” Microsoft is now positioning Researcher to draft with GPT and then apply Claude as a second-pass reviewer for accuracy, completeness, and citation integrity, a design that Microsoft says improves DRACO benchmark performance by 13.8%. That may sound like a minor product tweak, but it is a meaningful shift in how enterprise AI is being sold: not as a monolith, but as a managed system of specialized reasoning steps. (support.microsoft.com)

Source: XDA Microsoft 365 Copilot's new agent uses Claude to fact-check GPT's work

Background

For most of the last two years, the public AI conversation revolved around model supremacy. OpenAI, Anthropic, Google, and others competed on benchmark charts, while platform vendors mostly chose one primary engine and wrapped it in a product layer. Microsoft has already been moving away from that single-model framing, but the new Researcher setup makes the company’s direction unmistakable: the real value is not one model doing everything, but a workflow that can combine drafting, verification, and refinement in sequence. (blogs.microsoft.com)

That shift did not happen overnight. Microsoft first launched Researcher and Analyst as first-of-their-kind reasoning agents in the Frontier program, then made them generally available in June 2025. At that stage, Researcher relied on OpenAI’s deep research model plus Microsoft 365 Copilot orchestration and deep search. The new Claude-enabled experience shows Microsoft widening the model pool rather than doubling down on a single vendor relationship. (microsoft.com)

The practical logic is easy to understand. Long-form research work often fails for two different reasons: the answer may be well written but wrong, or it may be factually cautious but incomplete. By separating drafting from critique, Microsoft is trying to reduce both failure modes at once. That is a sensible enterprise answer to a problem that consumer chat tools still struggle with: confidence is not the same thing as correctness. (support.microsoft.com)

This also fits Microsoft’s broader “Frontier” narrative. The company has repeatedly said Copilot is being built as a model-diverse system that can use OpenAI and Anthropic models depending on the job. In Microsoft’s own framing, Copilot is meant to be open, heterogeneous, and governed, rather than locked to one AI stack. That is both a technical strategy and a market strategy, because enterprises increasingly want choice without having to manage every integration themselves. (blogs.microsoft.com)

What Microsoft Actually Announced

The headline feature is Claude support inside the Researcher agent in Microsoft 365 Copilot. Microsoft support says users can select Claude in Researcher, but only if their organization’s administrator has enabled Anthropic access in the Microsoft 365 admin center. The rollout is phased, and full availability is expected by the end of March 2026. (support.microsoft.com)

What makes the announcement more interesting is the Critique behavior described in Microsoft’s own messaging. Microsoft says GPT drafts the response, Claude reviews it for accuracy, completeness, and citation integrity, and the final output is only delivered after that second pass. In other words, Microsoft is treating model disagreement as a feature rather than a defect. That matters because many of the best enterprise use cases are less about raw creativity and more about reducing the odds of a costly mistake. (blogs.microsoft.com)

The company also highlights a Council capability, which sends the same prompt to multiple models and surfaces where they agree or disagree. Even if that sounds like a niche research tool, it reflects a broader product philosophy: turn model pluralism into an interface, not a backend complexity that users have to manage themselves. For analysts, consultants, and knowledge workers, that can be a lot more useful than another generic chatbot reply.

Why this matters

The business case is obvious. Companies do not only need answers; they need answers they can defend. A system that shows model disagreement, then forces a critique pass, fits governance-heavy workflows better than a single-shot assistant does. It also reduces the organizational friction that comes from asking employees to trust an AI response without showing how the response was checked. (support.microsoft.com)

- Drafting becomes faster when one model focuses on synthesis.

- Verification becomes stronger when a different model checks citations and completeness.

- Auditability improves when disagreement is surfaced instead of hidden.

- Adoption becomes easier when admins can control model availability.

- Enterprise trust improves when the process is visible, not magical.

The fine print

Microsoft’s support documentation makes clear that Claude is not universally available. Anthropic is being introduced gradually, some organizations may see limited functionality during rollout, and government and sovereign clouds are excluded. The EU Data Boundary and UK also have restrictions, which means the feature is as much a compliance story as a product story. That is the kind of detail that often gets lost in hype-driven coverage, but it will matter enormously in real deployments.

Why Multi-Model AI Is Becoming the Default

The new Copilot experience reflects a larger trend: the best AI products are increasingly designed as systems rather than isolated models. In practical terms, that means one model may excel at drafting, another at critique, and a third at task orchestration. Microsoft is betting that customers will value the composition layer more than the identity of any single model. (blogs.microsoft.com)

That approach has a few advantages. First, it lowers dependence on a single vendor’s roadmap. Second, it lets Microsoft match a task to the model best suited for it. Third, it creates room for product differentiation at the workflow level, which is where enterprise software has always made its money. A spreadsheet that knows when to use a different calculation engine is less important than a system that helps workers finish a report safely and quickly. (blogs.microsoft.com)

There is also a psychological effect. Users tend to over-trust polished outputs, especially when those outputs look confident and well formatted. By making one model critique another, Microsoft can position the second model as a safeguard against false certainty. That is a powerful idea in research and compliance-heavy work, even if it is not a perfect guarantee of correctness. No LLM can promise truth; it can only reduce error rates. (support.microsoft.com)

Model diversity versus model loyalty

Microsoft’s public messaging now repeatedly stresses that Copilot is model diverse by design. That phrase is doing a lot of work. It signals openness to customers, flexibility for Microsoft, and a strategic hedge against any single model family becoming a bottleneck. It also acknowledges a simple fact that the market has learned the hard way: different models are genuinely better at different jobs. (blogs.microsoft.com)

- Drafting and reasoning are not always the same skill.

- Creativity and citation discipline are not identical.

- Single-model purity is less useful than workflow reliability.

- Enterprise buyers want optionality with controls.

- Product value is increasingly in orchestration.

The competitive subtext

This is also a competitive move against Google, OpenAI, and Anthropic themselves. If Microsoft can present Copilot as the place where the best models are assembled into a business workflow, then the company becomes less of a model vendor and more of an operating layer for AI at work. That is a stronger strategic position than trying to win every benchmark with a single model. (blogs.microsoft.com)

Researcher, Critique, and Council

Researcher has always been Microsoft’s showcase for deep work inside Copilot. It is meant to gather information across web sources and Microsoft 365 data, then deliver something closer to a structured research brief than a quick chat response. Microsoft’s June 2025 GA announcement described it as a tool for multi-step research, with citations as a core trust feature. The new Claude integration pushes that concept further by formalizing verification as part of the workflow. (microsoft.com)

The Critique layer is the most important part of that story. Instead of asking users to notice hallucinations after the fact, Microsoft is trying to catch issues before the output leaves the system. That may not eliminate errors, but it is a better failure mode than silently shipping a polished mistake to an executive team. In enterprise software, reducing the probability of bad decisions is often more valuable than trying to guarantee perfection. (support.microsoft.com)
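
The draft-then-review pattern behind Critique can be illustrated with a minimal Python sketch. This is not Microsoft’s implementation: the names `Review`, `draft_then_critique`, `stub_drafter`, and `stub_reviewer` are hypothetical, the two "models" are plain functions standing in for real model calls, and the revision loop with `max_rounds` is an assumption rather than documented behavior.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Review:
    """Outcome of the second-pass check (hypothetical structure)."""
    approved: bool
    issues: List[str] = field(default_factory=list)


def draft_then_critique(
    prompt: str,
    drafter: Callable[[str], str],
    reviewer: Callable[[str, str], Review],
    max_rounds: int = 2,
) -> str:
    """Return the draft once the reviewer approves it, or the latest
    revision when max_rounds is exhausted (assumed policy)."""
    draft = drafter(prompt)
    for _ in range(max_rounds):
        review = reviewer(prompt, draft)
        if review.approved:
            return draft
        # Feed the reviewer's objections back to the drafter for a revision.
        feedback = "; ".join(review.issues)
        draft = drafter(f"{prompt}\n\nRevise to address: {feedback}")
    return draft


def stub_drafter(prompt: str) -> str:
    # Stand-in for a drafting model: adds a citation only when asked to revise.
    if "Revise" in prompt:
        return "Revenue grew 12% [source: annual report]"
    return "Revenue grew 12%"


def stub_reviewer(prompt: str, draft: str) -> Review:
    # Stand-in for a reviewing model: flags drafts with no citation marker.
    if "[source:" in draft:
        return Review(approved=True)
    return Review(approved=False, issues=["missing citation"])


if __name__ == "__main__":
    print(draft_then_critique("Summarize revenue growth", stub_drafter, stub_reviewer))
```

The point of the sketch is the separation of roles: the reviewer never writes the answer, it only accepts the draft or returns concrete objections. A real reviewer would check accuracy and completeness as well as citations, and could still miss errors.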

Council is conceptually different, but just as interesting. Where Critique is about judgment, Council is about comparison: multiple models answer the same prompt, and the user can inspect convergence and disagreement. That is especially useful in ambiguous or policy-sensitive research, where the correct answer is not always obvious and the path to confidence matters. For advanced users, this could become a kind of model debate interface that helps them triangulate truth.
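
The comparison idea behind Council can likewise be sketched in a few lines of Python. Again this is an illustration under stated assumptions, not the product’s design: `council` and `summarize` are hypothetical names, the models are stub functions, and answers are compared by exact normalized text, which is a crude stand-in for the semantic comparison a real system would need.

```python
from collections import defaultdict
from typing import Callable, Dict, List


def council(prompt: str, models: Dict[str, Callable[[str], str]]) -> Dict[str, List[str]]:
    """Send one prompt to every model and group model names by answer."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for name, ask in models.items():
        # Normalize whitespace and case so trivially different answers match.
        answer = ask(prompt).strip().lower()
        groups[answer].append(name)
    return dict(groups)


def summarize(groups: Dict[str, List[str]]) -> str:
    """Report consensus when only one answer exists, otherwise list the split."""
    if len(groups) == 1:
        return "consensus: " + next(iter(groups))
    parts = [f"{', '.join(names)} -> {answer}" for answer, names in groups.items()]
    return "disagreement: " + " | ".join(parts)


if __name__ == "__main__":
    # Hypothetical stand-ins for three different models.
    models = {
        "model_a": lambda p: "Yes",
        "model_b": lambda p: "yes ",
        "model_c": lambda p: "No",
    }
    print(summarize(council("Is the clause enforceable?", models)))
```

Surfacing the split, rather than silently picking a winner, is what lets the user decide where to look more closely.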

How this changes the user experience

Instead of one final answer arriving as if it dropped from the sky, the user gets a workflow that exposes more of the machine’s internal reasoning process. That is valuable because it makes the result easier to challenge, which is exactly what serious users want. It also gives teams a way to create more repeatable research habits instead of relying on whatever the chatbot happened to produce that day. (support.microsoft.com)

- Critique improves confidence by checking the draft.

- Council improves judgment by surfacing disagreement.

- Citations matter because they support verification.

- Workflow transparency matters because enterprise users need accountability.

- Human review remains essential for high-stakes decisions.

The benchmark claim

Microsoft says the combined workflow scores 13.8% higher on DRACO, which it describes as an industry standard for deep research quality. Even if readers should treat benchmark claims carefully, the direction of travel is plausible: multi-stage systems often outperform single-pass generation when the goal is accurate synthesis rather than fluent text. Still, benchmark improvements are not the same thing as universal real-world gains, and procurement teams should remember that distinction. (support.microsoft.com)

Enterprise Impact

For enterprise customers, the important question is not whether Claude or GPT is “better.” The real question is whether the combined system reduces rework, errors, and review time enough to justify adoption. Microsoft is clearly pitching Researcher as a way to get from question to defensible answer faster, especially in work that crosses documents, web sources, and internal knowledge. (microsoft.com)

This matters most in roles where mistakes are expensive. Legal teams, sales operations, consultants, analysts, and strategy groups all benefit when AI can synthesize data while preserving traceability. A tool that can draft, critique, and show model disagreement is likely to be more useful in those settings than a faster but opaque assistant. (microsoft.com)

It also reinforces Microsoft’s broader enterprise pitch: AI should live inside the productivity suite, inherit the tenant’s security and governance model, and respect organizational controls. Microsoft says Cowork and related experiences operate within its security, identity, and governance framework, and that framing will resonate with IT leaders who have spent years trying to make AI safer without killing adoption. The promise is not just better answers; it is managed answers. (microsoft.com)

What IT teams will care about

The admin story is just as important as the user story. Microsoft says Anthropic access must be enabled in the Microsoft 365 admin center, and the company notes that Claude availability is controlled by region and cloud boundary policy. That means deployment will be gated by compliance, not merely by enthusiasm. In practice, the rollout will probably vary widely by industry and geography. (support.microsoft.com)

- Policy controls will shape real-world availability.

- Cloud boundary rules may delay adoption in some regions.

- Admin approval will be a prerequisite for broad use.

- Security teams will want visibility into model routing.

- Procurement teams may ask which model does what, and when.

The productivity angle

If Microsoft’s workflow really does reduce the amount of manual fact-checking required after a first draft, the productivity gains could be significant. The strongest enterprise AI tools are not the ones that merely write faster; they are the ones that shorten the path from messy input to usable artifact. That is the lane Microsoft is trying to own. (microsoft.com)

Consumer and Knowledge Worker Impact

For individual users, the appeal is simpler. The average worker does not care which model wrote the first draft, as long as the output is useful, accurate, and easy to refine. By layering critique on top of drafting, Microsoft is trying to make Copilot feel less like a chatbot and more like a second set of hands. (support.microsoft.com)

That said, consumers and small teams will experience the feature differently from large enterprises. Microsoft says Claude in Researcher is tied to a Microsoft 365 Copilot license, and full rollout depends on organizational enablement. So while the product story sounds universal, the practical availability is still enterprise-shaped. That is a reminder that the modern AI market remains split between mass-market hype and licensed business reality. (support.microsoft.com)

The user-facing value may be easiest to see in research-heavy personal workflows: comparing product options, summarizing sources, preparing for a presentation, or synthesizing notes into an organized brief. In those cases, a built-in fact-check pass is welcome because it reduces the burden on the user to verify every line. Still, users should remember that better than before is not the same as correct enough to trust blindly. (microsoft.com)

Practical use cases

The most obvious day-to-day wins will likely come from jobs where workers repeatedly turn scattered material into polished outputs. Think account planning, internal strategy memos, launch briefs, or competitive research. The more a task depends on synthesis and source quality, the more the draft-and-critique pattern becomes useful. (microsoft.com)

- Research summaries become easier to assemble.

- Meeting prep can start from a structured brief.

- Competitive analysis benefits from citation checks.

- Executive memos gain a second layer of review.

- Knowledge workers spend less time formatting and more time deciding.

The trust issue

For individuals, trust is still the biggest psychological barrier. Users may like the idea of a fact-checking assistant, but they will still need confidence that the verification step is meaningful and not just another model producing a different kind of confident prose. That is why Microsoft’s emphasis on citations, visibility, and disagreement is so important. Trust has to be earned in the interface, not asserted in the marketing. (support.microsoft.com)

Microsoft Versus the Competition

Microsoft’s move should be read as part of a wider competitive shift among AI platforms. Instead of asking, “Which company has the smartest model today?” buyers are increasingly asking, “Which platform can route work across multiple models safely and efficiently?” That is a much more enterprise-friendly question, and Microsoft is clearly trying to own it. (blogs.microsoft.com)

OpenAI still matters because GPT remains central to Copilot’s drafting and reasoning flows. Anthropic matters because Claude is being positioned as the sharper reviewer and multi-step worker. Microsoft matters because it is the one orchestrating the environment, the governance, and the user experience. In a market where model leadership can change quickly, being the platform that sits above the models is a strategically powerful place to be. (support.microsoft.com)

This also gives Microsoft a way to answer a recurring enterprise objection: “Why should we commit to one AI vendor when the market is moving so fast?” The answer is now, effectively, “You do not have to.” Copilot can be sold as a managed layer that incorporates multiple best-in-class models as needed. That reduces buyer anxiety and makes Microsoft look unusually pragmatic in a market full of grand claims. (blogs.microsoft.com)

The market signal

The broader signal is that AI product differentiation is shifting upward. Raw model quality still matters, but workflow design, governance, and multi-model composition matter more than they did a year ago. Microsoft is betting that enterprises will pay for reliability plus choice rather than for one headline model improvement at a time. That is a smart bet, even if competitors will try to catch up. (blogs.microsoft.com)

- Platform orchestration is becoming a differentiator.

- Model diversity is becoming a selling point.

- Governance is becoming a product feature.

- Verification is becoming part of the workflow.

- Enterprise AI is moving beyond single-turn chat.

The long game

If Microsoft can keep proving that multi-model workflows outperform single-model interactions in high-value tasks, it could redefine what “good Copilot” means. The product would no longer be judged only on fluency or speed, but on how often it helps users reach a defensible answer with less manual effort. That is a much stronger foundation for enterprise loyalty. (microsoft.com)

Strengths and Opportunities

Microsoft’s new Researcher design has several clear advantages. It pairs the speed and structure of drafting with a second-pass review that can catch gaps, which is exactly the kind of hybrid workflow enterprises pay for. It also aligns with Microsoft’s security and governance pitch, giving IT buyers a stronger story than “the model is smart, trust us.” (support.microsoft.com)

- Better research quality through layered model review.

- More defensible outputs thanks to citation integrity checks.

- Stronger enterprise appeal because of admin controls and governance.

- Workflow transparency from model disagreement surfaced in Council.

- Vendor flexibility through a model-diverse architecture.

- Potential productivity gains for research-heavy teams.

- Improved user trust when the system shows its work.

The opportunity beyond research

The bigger opportunity is that Microsoft could extend this pattern across more Copilot surfaces. If drafting plus critique works for research, it could work for document creation, analysis, planning, and maybe even workflow automation. That would make Copilot less like a product feature and more like an AI operating layer for the Microsoft 365 ecosystem. (blogs.microsoft.com)

Risks and Concerns

The biggest risk is overpromising benchmark-driven quality gains that do not fully translate into messy real-world work. A 13.8% improvement on DRACO is encouraging, but businesses will care more about how the system performs on their own internal documents, policy constraints, and domain-specific edge cases. Benchmarks are useful; they are not a substitute for pilot testing. (support.microsoft.com)

- Benchmark gains may not generalize to every workflow.

- Residual hallucinations can still survive critique layers.

- Regional restrictions may frustrate global rollouts.

- Admin complexity may slow adoption in larger organizations.

- Model confusion could occur if users do not understand which model did what.

- Governance gaps may emerge if teams treat AI output as final.

- Compliance issues could arise where Anthropic availability is restricted.

The hidden operational cost

There is also a cost to multi-model sophistication. More routing, more model choices, and more review steps can mean more latency, more training requirements, and more support burden. Users may love the extra rigor in a research workflow, but they may not love it if the experience feels slower or harder to predict than a simpler assistant. Complexity is a tax, even when it is worth paying. (blogs.microsoft.com)

The trust paradox

There is a second-order risk as well: the more Microsoft emphasizes verification, the more visible any failure becomes. If a fact-checking layer misses something, that can undermine confidence more than a plain chatbot error would. In other words, higher expectations raise the reputational stakes. That is the paradox of trust features in AI products. (support.microsoft.com)

Looking Ahead

The next few weeks will tell us whether Microsoft’s Claude rollout is a genuine product evolution or mostly a premium preview feature with a smart narrative. The support documentation says full availability is expected by the end of March 2026, so the near-term test will be whether organizations can actually turn it on, use it, and see measurable value without too much administrative friction. If that works, Microsoft will have a strong story for how multi-model AI should look inside the enterprise. (support.microsoft.com)

Over the longer term, the most important question is whether this becomes the template for all serious AI work. If drafting, critique, and model council become normal parts of Copilot, then Microsoft could make the case that enterprise AI should behave less like a chatbot and more like a controlled decision-support system. That would be a meaningful shift in both product design and market expectations. (blogs.microsoft.com)

Key things to watch

- Whether Critique expands beyond Researcher into other Copilot experiences.

- Whether Council becomes a mainstream interface or stays niche.

- Whether enterprises report real-world gains that match the benchmark claims.

- Whether regional and compliance restrictions slow adoption in regulated industries.

- Whether Microsoft continues to add more model choices without making Copilot harder to use.

- Whether competitors respond with their own multi-model verification workflows.
