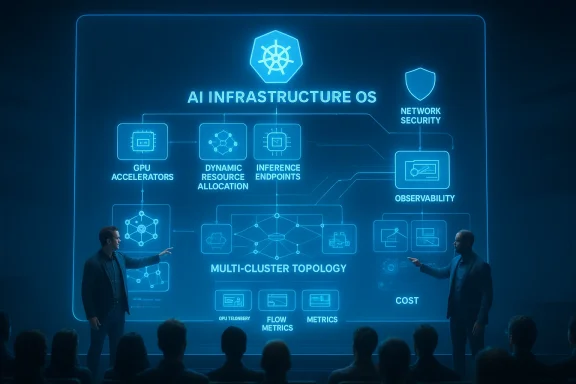

Microsoft is using KubeCon Europe 2026 to make a bigger point than a product pitch: the company wants Kubernetes to become the operating system for AI infrastructure as much as it already is for cloud-native applications. In practice, that means pushing GPU scheduling, inference APIs, network security, observability, and cluster lifecycle management closer to open standards and upstream projects, while also folding those ideas into Azure Kubernetes Service. The result is a strategy aimed at turning today’s fragmented AI operations into something closer to repeatable, shared practice. That is a familiar cloud-native playbook, but the AI layer is still early enough that it can reshape the market.

Source: HPCwire, “Microsoft Advances Open-Source AI Infrastructure on Kubernetes at KubeCon Europe 2026”

Background

The current wave of AI infrastructure has exposed a problem that cloud teams know well: when every platform team builds its own stack, operational knowledge does not compound cleanly. Instead, it fragments. Model serving, GPU placement, networking, telemetry, security, and rollout patterns all become separate concerns, and each one tends to grow local workarounds before the broader ecosystem agrees on common abstractions. Kubernetes solved part of that problem for containers by making safe change management and declarative operations the baseline; now the industry is trying to do something similar for AI workloads.

Microsoft has been steadily positioning itself at that intersection. Over the past several KubeCon cycles, the company has layered open-source contributions on top of managed Azure capabilities, using the same feedback loop that made Kubernetes itself useful: build in the open, learn from production, and upstream the primitives that matter. That pattern was already visible in Microsoft’s earlier KubeCon messaging, where the company emphasized reliability, security, and AI-native workloads rather than isolated feature announcements. The 2026 story is that those efforts are now maturing into a broader platform strategy.

What makes this moment different is that AI is no longer just a model-training concern. Enterprises are now asking how to run inference continuously, securely, and economically, often across multiple clusters and regions. That changes the operational requirements. A training job can be bursty and expensive; an inference platform has to behave like production infrastructure, with predictable capacity, careful routing, and strong observability.

Microsoft’s European KubeCon announcements reflect that shift. The company is not just adding AKS features. It is also contributing upstream to Dynamic Resource Allocation, Cilium, and CNCF projects that support troubleshooting, image building, and inference orchestration. That matters because the more AI workloads resemble standard distributed systems, the more the industry needs shared tools instead of one-off acceleration hacks.

The broader competitive backdrop is equally important. NVIDIA, cloud providers, open-source AI projects, and enterprise platform vendors are all converging on the same question: how do you make GPUs behave like first-class Kubernetes resources without burying developers in infrastructure complexity? Microsoft’s answer is to work from both ends of the stack at once. It is adding managed capabilities in AKS while also helping define the open interfaces that other vendors will have to support.

The Open-Source AI Infrastructure Thesis

At the center of Microsoft’s KubeCon Europe 2026 message is a simple but consequential idea: AI infrastructure needs a common operational grammar. If that sounds abstract, it isn’t. A platform team cannot reliably scale AI if scheduling, networking, telemetry, and image construction each require bespoke logic. Shared APIs and upstream projects reduce that burden by making the environment more predictable for both operators and developers.

The company’s emphasis on open source is not merely ideological. It is strategic. When a technology layer is young and rapidly changing, the vendor that helps define the shared primitives often ends up shaping the market’s default assumptions. Kubernetes did that for clusters; Microsoft now wants to help do it for GPU-backed inference and multi-cluster AI operations.

Why Kubernetes Matters Here

Kubernetes is attractive for AI not because it is perfect, but because it already provides the control loop, declarative state, and scheduling discipline that production AI needs. The challenge is that AI workloads stress those abstractions in new ways, especially when accelerators, high-bandwidth networking, and large model artifacts enter the picture. That is why Microsoft’s announcements focus on primitives rather than just add-ons.

The significance is operational, not cosmetic. If the ecosystem can standardize how GPUs, NICs, storage, and model-serving endpoints are represented, then platform teams can treat AI as a managed workload instead of a custom project. That opens the door to automation, policy enforcement, and safer change management.

Key implications include:

- Less bespoke orchestration for every AI platform team.

- More portable workloads across clusters and potentially across clouds.

- Better cost control because resource use becomes more visible and schedulable.

- Faster platform reuse as common patterns harden upstream.

- A smaller gap between cloud-native app operations and AI operations.

Microsoft’s Two-Layer Strategy

Microsoft is clearly working on two layers at once. The upstream layer is about standards, open-source projects, and CNCF participation. The managed layer is about AKS capabilities that make those same patterns available to enterprise customers without forcing them to assemble everything themselves.

That dual approach is smart because it captures both communities. Open source wins mindshare and interoperability, while AKS gives Microsoft a concrete operational footprint. The risk, of course, is that the two layers drift apart if the managed experience becomes too Azure-specific. For now, Microsoft appears to be trying to avoid that trap by pushing useful work upstream.

Scheduling, GPUs, and the Resource Model

One of the most important themes in the announcement is Dynamic Resource Allocation. In AI infrastructure, the old model of generic CPU and memory scheduling is no longer enough. GPUs, high-speed interconnects, and topology-aware placement matter directly to performance, and the operational consequences are visible in both training and inference.

Microsoft says DRA has graduated to general availability, alongside the DRA example driver and DRA Admin Access. That is a strong signal because it suggests the resource model is becoming stable enough to support production use. The deeper point is that accelerator scheduling is moving from special-case extensions toward a first-class Kubernetes pattern.

Hardware Becomes a Kubernetes Citizen

The moment GPUs and RDMA NICs are represented as schedulable resources, platform teams can reason about performance more systematically. This is especially relevant for distributed training, where data movement can matter as much as raw compute. If the GPU-to-NIC topology is poor, throughput suffers and expensive hardware sits underutilized.

Microsoft’s work on DRANet compatibility for Azure RDMA NICs matters here because it extends DRA concepts beyond the GPU itself. That’s an important evolution. In a real AI cluster, performance is shaped by the whole fabric, not just the accelerator.
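To make the GPU-to-NIC topology point concrete, here is a minimal Python sketch of topology-aware pairing. It is not the DRA API or anything Microsoft has published: the `Device` type, the `numa_node` attribute, and `pick_aligned_pair` are hypothetical names chosen for illustration, and real drivers may describe topology with different attributes.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Device:
    name: str
    kind: str        # "gpu" or "nic" (hypothetical labels)
    numa_node: int   # hypothetical topology attribute


def pick_aligned_pair(devices: list[Device]) -> Optional[tuple[Device, Device]]:
    """Return the first GPU/NIC pair that shares a NUMA node, or None."""
    # Index the first NIC seen on each NUMA node.
    nics_by_node: dict[int, Device] = {}
    for d in devices:
        if d.kind == "nic":
            nics_by_node.setdefault(d.numa_node, d)
    # Pick the first GPU that has a NIC on the same node.
    for d in devices:
        if d.kind == "gpu" and d.numa_node in nics_by_node:
            return d, nics_by_node[d.numa_node]
    return None


inventory = [
    Device("gpu0", "gpu", 0),
    Device("gpu1", "gpu", 1),
    Device("nic0", "nic", 1),
]
pair = pick_aligned_pair(inventory)
```

In this toy inventory the only aligned pair is `gpu1` with `nic0`; a scheduler that ignored topology could just as easily hand out `gpu0`, which is the mismatch the paragraph above warns about.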

KubeRay and Workload-Aware Scheduling

The update also notes Workload Aware Scheduling for Kubernetes 1.36, including DRA support in the Workload API and integration into KubeRay. That is meaningful because KubeRay has become a practical path for distributed AI and data workloads on Kubernetes. When scheduling awareness reaches that layer, the platform begins to resemble a coordinated AI control plane rather than a generic cluster.

For enterprises, this is about more than speed. It is about predictability, repeatability, and cost. If the platform can place workloads more intelligently, it can also avoid the wasted spend that comes from ill-matched hardware or scattered provisioning.

- DRA makes accelerators more structured and portable.

- Topology-aware networking improves distributed training efficiency.

- Integration with KubeRay reduces the need for custom scheduling glue.

- Better resource description supports policy-driven capacity management.

Inference as a Platform, Not an App

Microsoft’s new AI Runway project may be the most revealing announcement in the entire set. The project introduces a common Kubernetes API for inference workloads, which is a big deal because inference is becoming the dominant production AI workload. Training gets the headlines, but inference pays the bills and creates the operational load.

The most important design choice is not just that AI Runway exists, but that it tries to abstract serving technology behind a common interface. That suggests Microsoft sees the market moving away from one serving framework to many. Platform teams need a way to manage model deployments without retooling every time the ecosystem changes.

Centralized Control Without Forcing Kubernetes Knowledge

AI Runway also includes a web interface for users who should not need to know Kubernetes just to deploy a model. That is a practical acknowledgment of a persistent problem in enterprise AI: the people building models are often not the same people running clusters. If the deployment workflow is too infrastructure-heavy, adoption slows and shadow platforms emerge.

The built-in Hugging Face discovery, GPU memory fit indicators, and real-time cost estimates are especially interesting. They suggest a product philosophy that combines operational guardrails with developer ergonomics. In other words, Microsoft wants the platform to answer the question “Can this model run here, and what will it cost?” before a deployment becomes a support problem.
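The idea behind a memory fit indicator and a cost estimate can be sketched with simple arithmetic. The Python below is an illustrative back-of-the-envelope check, not AI Runway's actual logic: the function name, the 20% overhead allowance for runtime state, and the hourly price are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FitEstimate:
    required_gib: float
    fits: bool
    hourly_cost_usd: float


def estimate_fit(
    params_billion: float,
    bytes_per_param: int,
    gpu_memory_gib: float,
    gpu_count: int,
    gpu_hourly_usd: float,            # hypothetical price, not a real quote
    overhead_fraction: float = 0.2,   # assumed headroom for runtime state
) -> FitEstimate:
    """Rough check: do the weights plus headroom fit in total GPU memory?"""
    weight_bytes = params_billion * 1e9 * bytes_per_param
    required_gib = weight_bytes * (1 + overhead_fraction) / 2**30
    available_gib = gpu_memory_gib * gpu_count
    return FitEstimate(
        required_gib=round(required_gib, 1),
        fits=required_gib <= available_gib,
        hourly_cost_usd=round(gpu_hourly_usd * gpu_count, 2),
    )


small = estimate_fit(7, 2, gpu_memory_gib=80, gpu_count=1, gpu_hourly_usd=3.0)
large_one_gpu = estimate_fit(70, 2, gpu_memory_gib=80, gpu_count=1, gpu_hourly_usd=3.0)
large_two_gpus = estimate_fit(70, 2, gpu_memory_gib=80, gpu_count=2, gpu_hourly_usd=3.0)
```

Under these assumptions, a 7-billion-parameter model at 2 bytes per parameter fits on a single 80 GiB device, while a 70-billion-parameter model needs two. A production indicator would also have to account for context length, batch size, and quantization, which this sketch deliberately ignores.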

Supporting Multiple Runtimes

The support for runtimes including NVIDIA Dynamo, KubeRay, llm-d, and KAITO is important because it reflects a pluralistic ecosystem rather than a single blessed path. That is likely the right move. The serving layer is still changing quickly, and organizations will want to swap runtimes as needs evolve.

The larger implication is that Microsoft is trying to make inference portable across serving back ends, while keeping the operational model consistent. That could be a strong differentiator if it reduces lock-in without sacrificing managed convenience. It is also a competitive response to the broader market’s tendency to create isolated AI platforms.

Security, Supply Chain, and the Zero-Trust Angle

AI infrastructure is often discussed through the lens of performance, but security may be the more durable differentiator. Microsoft’s KubeCon Europe 2026 update makes that clear by pairing new AI primitives with work on Cilium, Dalec, and security-oriented runtime tooling. That combination matters because production AI systems are not just expensive; they are also attractive targets.

The most interesting security pattern in the announcement is the push toward identity-aware networking and build-time hardening. Instead of relying only on perimeter controls, Microsoft is emphasizing authenticated workload communication, provenance, and smaller attack surfaces. That is exactly the direction zero-trust architecture has been moving in for years.

Build-Time Security Matters More in AI

Dalec is noteworthy because it focuses on declarative package builds, minimal container images, and provenance attestations. This is not glamorous work, but it is foundational. AI teams routinely assemble complex container images with model dependencies, system libraries, and runtime glue, which can inflate the attack surface if build discipline is weak.

A minimal image strategy reduces risk by trimming unnecessary components. Provenance and SBOM generation add traceability, which is increasingly important when regulated enterprises need to know what exactly is running in production. For AI workloads, where model-serving stacks are changing constantly, that visibility is not optional.

Cilium and Identity-Aware Traffic

Microsoft also highlighted contributions to Cilium, including native mTLS ztunnel support, Hubble metrics cardinality controls, flow log aggregation, and Cluster Mesh feature proposals. The practical value here is that security and observability are being welded together. That is useful because the more distributed a platform becomes, the more important it is to connect traffic telemetry with policy enforcement.

This reinforces a broader point: modern AI platforms need to secure traffic without turning every request into an operations project. Sidecarless encryption and better flow intelligence are attractive because they reduce overhead while preserving control.

- SBOMs and provenance improve software trust.

- Minimal images shrink the attack surface.

- mTLS and identity-based networking support zero trust.

- Flow aggregation helps control observability costs.

- Cluster Mesh improves cross-cluster consistency.
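The cost argument for flow aggregation and cardinality controls comes down to dropping high-cardinality fields before storing data. The following Python sketch illustrates that general idea only; it does not reflect how Cilium or Hubble implement it, and the `Flow` fields and `aggregate_flows` function are hypothetical.

```python
from collections import Counter
from typing import Iterable, NamedTuple


class Flow(NamedTuple):
    src_workload: str
    dst_service: str
    dst_port: int
    src_port: int     # ephemeral port: high cardinality, low diagnostic value
    bytes_sent: int


def aggregate_flows(flows: Iterable[Flow]) -> dict[tuple[str, str, int], int]:
    """Sum bytes per (source workload, destination service, destination port)."""
    totals: Counter = Counter()
    for f in flows:
        # The ephemeral source port is dropped from the key on purpose.
        totals[(f.src_workload, f.dst_service, f.dst_port)] += f.bytes_sent
    return dict(totals)


raw = [
    Flow("gateway", "model-server", 8000, 41001, 1200),
    Flow("gateway", "model-server", 8000, 41002, 800),
    Flow("retriever", "vector-db", 6333, 52010, 500),
]
summary = aggregate_flows(raw)
```

Three raw records collapse into two aggregated series here. The same trade-off applies at scale: fewer stored series, at the cost of losing per-connection detail.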

Observability Becomes an AI Requirement

Kubernetes observability used to mean a mix of logs, metrics, and traces for application troubleshooting. With AI workloads, observability expands into accelerator telemetry, flow-level diagnostics, and cost-aware data collection. Microsoft’s AKS updates reflect that shift in a way that feels overdue.

The big theme is that operators need better visibility without drowning in data. AI systems are expensive enough already; they should not become even more expensive because every metric is collected at full fidelity forever. The trick is balancing detail with operational signal.

GPU Telemetry Finally Joins the Stack

Surfacing GPU performance and utilization directly into managed Prometheus and Grafana is a practical improvement with real enterprise value. Previously, GPU visibility often depended on custom exporters or separate monitoring paths. That created blind spots and fragmented the operational picture.

Once GPU telemetry sits alongside standard Kubernetes metrics, capacity planning becomes easier. Teams can correlate GPU saturation with deployment patterns, model sizes, or traffic spikes instead of inferring those relationships after the fact. That shortens incident response and improves ROI on expensive hardware.

Network Flow Intelligence at Scale

Microsoft also added per-flow L3/L4 and supported L7 visibility across HTTP, gRPC, and Kafka traffic. That matters because AI platforms are increasingly service-heavy, with model gateways, retrieval systems, orchestration layers, and downstream microservices all talking to each other. If operators cannot see those interactions clearly, troubleshooting becomes guesswork.

The addition of Azure Monitor dashboards and one-click onboarding reduces friction, but the deeper value is organizational. Platform teams can share a common operational view instead of building one-off observability stacks for each cluster. That should help standardize how incidents are diagnosed.

Agentic Troubleshooting

The mention of HolmesGPT joining the CNCF as a Sandbox project is especially timely. Agentic troubleshooting can reduce mean time to resolution by correlating logs, metrics, and cluster state faster than a human can do manually. But it also raises trust and governance questions.

That tension is real. Helpful agents are easy to admire in demos, but production operations require auditability and guardrails. Microsoft appears aware of that, and the Azure community hub explicitly framed the tool as useful only when strict RBAC, approval workflows, and audit trails remain in place.

Multi-Cluster and Cross-Cloud Reality

The KubeCon Europe 2026 announcement also makes it clear that the “single cluster” era is over for many AI teams. Workloads now span regions, clusters, and sometimes clouds, especially when GPU supply is fragmented or when latency and compliance constraints force distributed deployments. Microsoft’s answer is to make multi-cluster networking and centralized management much less painful.

That’s where Azure Kubernetes Fleet Manager and the managed Cilium cluster mesh come in. They offer unified connectivity, global service discovery, and centrally managed routing across AKS clusters. In enterprise terms, this is about reducing the tax of operating many clusters like a coherent platform.

Cross-Cluster Networking Gets Real

Multi-cluster networking has long been one of the hardest parts of Kubernetes at scale. It often requires custom service discovery, duplicated policy work, and complicated failure handling. Microsoft’s managed cluster mesh approach suggests a desire to make those patterns routine instead of exotic.

This matters especially for AI inference. If one region is saturated or a model needs to fail over to another cluster, the routing and identity model need to be consistent. Otherwise, cross-cluster failover becomes a support incident instead of a resilience feature.
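A routing decision of the kind described above, where traffic leaves a saturated region for a healthy cluster elsewhere, can be sketched in a few lines of Python. This is an assumed policy for illustration, not the behavior of Fleet Manager or Cilium cluster mesh; `ClusterState`, `choose_cluster`, and the 0.9 saturation threshold are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ClusterState:
    name: str
    region: str
    healthy: bool
    gpu_utilization: float  # 0.0 to 1.0


def choose_cluster(
    clusters: list[ClusterState],
    preferred_region: str,
    saturation_threshold: float = 0.9,  # assumed cutoff
) -> Optional[ClusterState]:
    """Pick a healthy, unsaturated cluster, preferring the local region."""
    candidates = [
        c for c in clusters
        if c.healthy and c.gpu_utilization < saturation_threshold
    ]
    if not candidates:
        return None
    # Sort key: local region first (False sorts before True), then least loaded.
    return min(
        candidates,
        key=lambda c: (c.region != preferred_region, c.gpu_utilization),
    )


fleet = [
    ClusterState("aks-west", "westeurope", healthy=True, gpu_utilization=0.95),
    ClusterState("aks-north", "northeurope", healthy=True, gpu_utilization=0.40),
]
target = choose_cluster(fleet, preferred_region="westeurope")
```

With the local cluster saturated, the request is routed to `aks-north`. The policy only works in practice if service discovery and workload identity behave the same way in both clusters, which is the consistency requirement the paragraph above describes.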

Storage and Capacity Flexibility

The announcement that clusters can consume storage from a shared Elastic SAN pool is less flashy, but still important. Stateful AI and analytics workloads often have variable storage demands, and per-workload disk provisioning can become a bottleneck. Shared capacity models simplify planning and can improve efficiency.

That said, shared infrastructure always brings tradeoffs. The operational gain is obvious, but the management model must stay clear or the simplicity disappears. Storage abstractions only help when the team can still reason about performance and failure domains.

- Unified routing helps multi-cluster AI failover.

- Central service discovery reduces duplicate operations work.

- Shared storage improves capacity utilization.

- Cross-cluster standards reduce custom network glue.

- Fleet-based management strengthens enterprise governance.

Safer Upgrades and Faster Recovery

One of the most enterprise-friendly parts of the announcement is the focus on safer upgrade paths. Upgrading Kubernetes in production is always a risk, and the consequences get more severe when AI workloads are involved because GPU nodes are expensive and often tightly tuned. Microsoft’s response is to make cluster change management more reversible and less nerve-wracking.

That includes blue-green agent pool upgrades, agent pool rollback, and prepared image specification. These are not headline-grabbing features, but they speak directly to the part of Kubernetes that operators care about most: whether change can happen without downtime or panic.

Blue-Green Infrastructure for Confidence

Blue-green upgrades are appealing because they preserve a known-good environment while the new configuration is validated in parallel. For AI workloads, that can be invaluable. Drivers, images, and node-level changes can all influence performance, and a parallel pool gives operators a cleaner rollback path.

The benefit is not just technical. It is also organizational. Teams are more likely to adopt upgrades when they believe recovery is straightforward. That confidence can shorten the lag between security patches and actual deployment.
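The blue-green pattern itself is simple enough to express as a small Python sketch. It models only the decision logic, not AKS's implementation: `blue_green_upgrade` and the `validate` callback are hypothetical, and a real validation step would run smoke tests or performance checks against the new pool.

```python
from typing import Callable


def blue_green_upgrade(
    active_pool: str,
    candidate_pool: str,
    validate: Callable[[str], bool],
) -> str:
    """Return the pool that should serve traffic after the upgrade attempt."""
    # The active (blue) pool keeps serving while the candidate (green)
    # pool is validated in parallel.
    if validate(candidate_pool):
        return candidate_pool  # cut over to the upgraded pool
    # Rollback is trivial: the known-good pool never stopped serving.
    return active_pool


serving_after_success = blue_green_upgrade("blue", "green", lambda pool: True)
serving_after_failure = blue_green_upgrade("blue", "green", lambda pool: False)
```

The point of the sketch is the failure branch: because the original pool is untouched until validation passes, rolling back requires no rebuild, which is what makes upgrades feel lower-risk to operators.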

Faster Provisioning, Less Drift

Prepared image specification helps by preloading containers, OS settings, and initialization scripts. In environments that need burst capacity, this can reduce provisioning time significantly. It also improves consistency, which is critical when reproducibility matters to both performance and troubleshooting.

The larger implication is that AKS is continuing to move from “a place to run clusters” toward “a place to run production operating models.” That is an important distinction. Enterprise buyers do not just want raw infrastructure; they want less entropy.

Rollback as a Product Philosophy

Rollback deserves its own attention because it changes the psychological model of Kubernetes operations. When rollback is easy, teams are more willing to move forward. When rollback is hard, organizations freeze.

That is why these capabilities matter so much for AI infrastructure. Rapid innovation is useful only if the platform can absorb mistakes. Microsoft seems to understand that the best infrastructure products are the ones that make recovery feel routine.

Competition and Market Positioning

Microsoft’s message at KubeCon Europe 2026 is not happening in a vacuum. Every major cloud and infrastructure vendor is trying to claim a piece of the AI platform stack, and many are using open source as the battleground. What distinguishes Microsoft is not just breadth, but the combination of upstream influence and managed enterprise delivery.

That combination creates pressure on rivals. If Microsoft can make AKS the most complete environment for open-source AI operations while also contributing the underlying primitives to the ecosystem, it can shape the default architecture for enterprise inference. That is a meaningful advantage.

Why This Matters for Rivals

For cloud competitors, the challenge is that AI infrastructure is becoming a platform category, not a feature. If customers begin to expect standard Kubernetes APIs for inference, resource allocation, meshless encryption, and multi-cluster networking, vendors will need to match that experience or explain why their stack is different.

That is especially true for enterprise buyers who want portability. They are increasingly suspicious of AI stacks that look convenient but lock them into proprietary control planes. Open-source alignment is becoming a buying criterion, not a nice-to-have.

The Open-Source Credibility Factor

Microsoft’s long-term bet is that credibility compounds when contributions are real and useful upstream. That is why the company’s emphasis on projects like Cilium, DRA, HolmesGPT, Dalec, and KAITO matters. It’s not just about what Azure can do today. It’s about shaping what the community expects tomorrow.

To be clear, this is still a competitive strategy. But it is one that aligns with how cloud-native markets actually mature. The vendor that helps define the shared standard often wins even when it does not own every layer.

- Open-source work builds ecosystem trust.

- Managed services convert that trust into enterprise adoption.

- Standards reduce customer switching friction.

- AI infrastructure becomes more portable and comparable.

- Platform differentiation shifts toward operational maturity.

Strengths and Opportunities

Microsoft’s KubeCon Europe 2026 package has several clear strengths. It ties together upstream credibility, AKS productization, and a coherent vision for AI infrastructure that treats Kubernetes as the shared operating substrate. That combination should appeal to enterprise platform teams that want innovation without abandoning operational discipline.

- Strong upstream story across DRA, Cilium, Dalec, HolmesGPT, and KAITO.

- Practical AKS enhancements that target real production pain points.

- Better GPU and network visibility for AI and distributed workloads.

- Identity-aware security without relying entirely on heavy service-mesh overhead.

- Cleaner multi-cluster operations through Fleet Manager and cluster mesh.

- Improved upgrade safety with blue-green pools and rollback.

- More accessible inference workflows through AI Runway and its web UI.

Risks and Concerns

There is a lot to like here, but the strategy is not without risk. The biggest concern is complexity masquerading as simplification. Microsoft is adding many capabilities at once, and platform teams can still end up with a large surface area to understand, even if each individual feature is better than what came before.

- Feature sprawl may overwhelm smaller platform teams.

- Azure-specific implementation details could limit portability if not handled carefully.

- CNCF project maturity will vary, and not every sandbox project will become production-grade quickly.

- Operational trust in agentic troubleshooting will require strict guardrails.

- Multi-cluster networking remains inherently difficult despite better tooling.

- Security assumptions can break if teams misconfigure identity or policy layers.

- AI resource contention may still cause expensive performance surprises even with better scheduling.

Looking Ahead

The next phase will be about adoption, not announcement. The important question is whether platform teams use these tools to build repeatable AI operations, or whether they remain a set of promising but separate capabilities. If Microsoft can prove that the pieces fit together in the real world, the company will have a strong case that Kubernetes is becoming the default substrate for enterprise AI.

The more interesting test may be cultural rather than technical. Do developers trust the abstractions enough to use them, and do operators trust them enough to standardize on them? Those are harder questions than feature parity, but they are the ones that decide whether platforms endure.

What to watch next:

- Upstream traction for AI Runway, HolmesGPT, and Dalec.

- Customer adoption of DRA-based scheduling in real production environments.

- AKS usage growth for GPU-heavy inference and multi-cluster deployments.

- How quickly meshless security and Gateway API support mature in AKS.

- Whether competitors mirror Microsoft’s open-source-first AI infrastructure model.

Source: HPCwire, “Microsoft Advances Open-Source AI Infrastructure on Kubernetes at KubeCon Europe 2026”