Microsoft's swift legal reading — that Anthropic's Claude models can remain available to commercial users on Microsoft platforms while being excluded from Department of Defense workloads — has turned a routine vendor dispute into a defining moment for enterprise AI governance, cloud vendor accountability, and the limits of national-security procurement power.

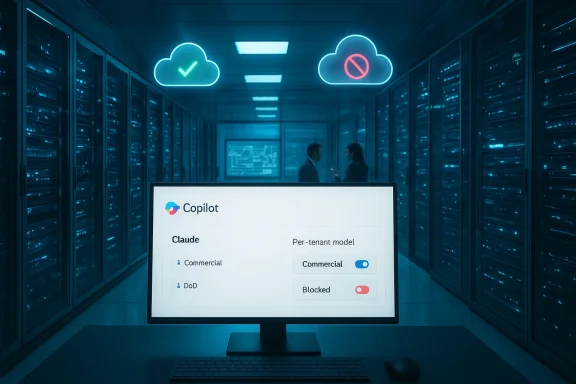

Background

Anthropic, the developer of the Claude family of large language models, built its reputation on a public commitment to alignment and guardrails. The company has repeatedly said it will not contractually allow its models to be used for mass domestic surveillance or to enable fully autonomous lethal systems. That stance was central to a breakdown in negotiations with the U.S. Department of Defense (DoD) and precipitated the Pentagon's recent application of a statutory supply‑chain designation against the startup.

Microsoft and other hyperscalers had already embedded Anthropic models into mainstream developer and productivity tooling: Claude became a selectable backend in GitHub Copilot and key Microsoft 365 Copilot surfaces, and Anthropic workloads could be run on major cloud platforms. When the Pentagon applied the supply‑chain risk label, cloud vendors faced an operational and legal dilemma: obey a forceful national‑security posture that targets defense procurements, or preserve continuity for non‑defense customers who depend on Claude across commercial workflows. Microsoft publicly chose a third path, keeping Claude available for commercial users while blocking DoD access, after its legal team concluded the DoD designation applies narrowly to defense contracting contexts.

What the Pentagon’s supply‑chain designation actually does, and does not, do

Scope and legal mechanics

A DoD "supply‑chain risk" designation is a procurement tool designed to protect defense acquisitions by limiting the participation of vendors that the department judges to pose unacceptable risks to mission assurance. Historically this authority targeted hardware or vendors with foreign‑adversary links; applying it to a U.S.‑based, software‑centric startup is legally novel and is already being challenged in court by Anthropic. The department’s designation is aimed at DoD contracts and prime/subcontractor obligations rather than functioning as an across‑the‑board commercial ban.

That legal distinction matters: Microsoft and other cloud hosts argue the designation restricts DoD procurement and defense‑contract work, not general commercial usage. The Pentagon, conversely, has signaled operational urgency and, in parts of its public messaging, indicated a need for a prompt phase‑out in sensitive mission pipelines. The mixed messaging, amplified by rapid government, industry, and media attention, has created near‑term compliance confusion for any organization that straddles both commercial and defense work.
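Because the designation attaches to DoD contracts and prime/subcontractor obligations, its practical reach depends on which downstream agreements inherit ("flow down") the restriction. The Python sketch below illustrates that reachability idea under a hypothetical data model: the contract IDs and the `FLOW_DOWN` mapping are invented for illustration, and the function is not a legal test of what any real clause covers.

```python
from collections import deque

# Hypothetical contract graph: each contract ID maps to the subcontracts that
# inherit ("flow down") its clauses. All IDs are invented for illustration.
FLOW_DOWN: dict[str, list[str]] = {
    "prime-A": ["sub-B", "sub-C"],
    "sub-B": ["sub-D"],
    "sub-C": [],
    "sub-D": [],
    "commercial-X": [],  # unrelated commercial work with no defense links
}


def bound_by_restriction(root: str, graph: dict[str, list[str]]) -> set[str]:
    """Return every contract reachable from `root` through flow-down links.

    Breadth-first search: if a supplier restriction sits on `root`, each
    contract reached here is assumed to inherit it.
    """
    seen = {root}
    queue = deque([root])
    while queue:
        current = queue.popleft()
        for child in graph.get(current, []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


if __name__ == "__main__":
    print(sorted(bound_by_restriction("prime-A", FLOW_DOWN)))
    # prints ['prime-A', 'sub-B', 'sub-C', 'sub-D']
```

A conservative compliance team would treat everything in the returned set as in scope until contracting guidance says otherwise; the commercial-only contract stays out because nothing flows into it.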

Practical effects and enforcement friction

In practice, the DoD label prevents the department and its prime contractors from relying on the designated supplier for covered work. Yet enforcement is messy: defense primes and compliance teams have already taken conservative actions, advising staff to avoid Claude until formal contracting guidance settles, because flow‑down obligations in defense contracts can expand the label’s practical footprint. Reported operational uses, including alleged deployments of Claude in analytics roles tied to recent air operations, make a rapid disentanglement both sensitive and technically difficult. Removing a model from classified or mission‑critical systems can require code rewrites, recertification, and programmatic changes.

Microsoft's decision: lawyering, product design, and commercial calculus

A narrow legal reading plus engineering controls

Microsoft moved quickly to reassure customers that it can continue offering Anthropic models (except to DoD tenants and classified workloads), citing its lawyers' interpretation that the DoD’s supply‑chain designation targets defense procurement alone. Microsoft emphasized the product architecture it has already been developing: a multi‑model, tenant‑level approach inside Copilot, Azure AI Foundry, and other surfaces that lets administrators choose, route, or disable underlying model backends on a per‑tenant or per‑group basis. That capability is Microsoft’s operative answer to a complex compliance question: keep commercial customers working while technically preventing DoD usage.

This posture reflects layered incentives. Product continuity matters: switching off Claude for all customers would disrupt many enterprise workflows and cause substantial migration costs. Competitive positioning matters: Microsoft promotes model choice as a differentiator across Copilot surfaces. And corporate finances and partnerships matter: Microsoft has long‑standing commercial ties with Anthropic that complicate a unilateral termination of the relationship.
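The per‑tenant and per‑group gating described above can be modeled abstractly. This is a minimal Python sketch, not Microsoft's admin API: `BackendPolicy`, the backend labels, and the tenant and group names are all hypothetical, and real products expose these controls through their own consoles and policies.

```python
from dataclasses import dataclass, field


@dataclass
class BackendPolicy:
    """Hypothetical backend allow/deny settings at three levels of scope."""

    default_allowed: set[str]
    # Backends blocked for an entire tenant, keyed by tenant ID.
    tenant_blocked: dict[str, set[str]] = field(default_factory=dict)
    # Backends blocked for one group inside a tenant, keyed by (tenant, group).
    group_blocked: dict[tuple[str, str], set[str]] = field(default_factory=dict)

    def allowed_backends(self, tenant: str, group: str) -> set[str]:
        """Start from the default set and subtract every block that applies."""
        allowed = set(self.default_allowed)
        allowed -= self.tenant_blocked.get(tenant, set())
        allowed -= self.group_blocked.get((tenant, group), set())
        return allowed

    def route(self, tenant: str, group: str, requested: str) -> str:
        """Return the requested backend if permitted; otherwise refuse."""
        if requested not in self.allowed_backends(tenant, group):
            raise PermissionError(f"{requested} is disabled for {tenant}/{group}")
        return requested


if __name__ == "__main__":
    # Placeholder labels: "anthropic" and "openai" stand for model backends.
    policy = BackendPolicy(
        default_allowed={"anthropic", "openai"},
        tenant_blocked={"defense-tenant": {"anthropic"}},
        group_blocked={("mixed-tenant", "dod-programs"): {"anthropic"}},
    )
    print(policy.route("mixed-tenant", "marketing", "anthropic"))  # prints anthropic
    print(sorted(policy.allowed_backends("mixed-tenant", "dod-programs")))  # prints ['openai']
```

Blocks subtract from the default rather than overriding it, so a group‑level setting can never re‑enable a backend that was disabled for the whole tenant. That one‑way design is a choice made for this sketch; it suits an organization that wants defense gating to be impossible to undo from below.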

Technical and operational limits of "tenant gating"

Microsoft's multi‑model routing and tenant isolation tools are real and useful, but they are not magic. Effective gating requires rigorous audit trails, demonstrable separation of telemetry and logs, carefully segmented cloud tenancy or regioning for classified workflows, and contractual assurances with enterprise customers. Edge cases persist: contractors with mixed workloads, federated development pipelines, or shadow use of Copilot features can create data‑exfiltration or policy leakage paths that are hard to remediate purely with product toggles. For this reason, many contractors will continue to adopt conservative positions until the DoD clarifies enforcement expectations or litigation produces a definitive ruling.

Anthropic’s stance and the looming courtroom fight

Anthropic has publicly vowed to challenge the DoD designation in federal court. The company argues the supply‑chain authorities invoked by the Pentagon were not intended to exclude a domestic AI software provider based on contractual limits aimed at preventing mass surveillance or lethal autonomous engagement. Anthropic has also described the letter it received as narrower in scope than some public statements suggest, and it hopes a court will block or narrow the DoD’s action while the litigation proceeds.

From a legal strategy perspective, Anthropic’s likely arguments include:

- Statutory construction: the controlling statutes were not meant to be used against domestic, cloud‑hosted software suppliers.

- Administrative‑procedure claims: if the designation lacked required notice, reasoned explanation, or opportunity for response, Anthropic may press procedural defects under the Administrative Procedure Act.

- Tailored relief: Anthropic is likely to seek an injunction or stay as an immediate remedy to preserve its commercial business while judicial review unfolds.

Market dynamics and competitive responses

OpenAI enters the classified workload gap

While Anthropic and the DoD contested their relationship, OpenAI reportedly moved to supply models for certain classified DoD workloads. OpenAI’s willingness to accept broader contractual terms for defense work changed procurement dynamics and apparently led the Pentagon to run OpenAI models in classified environments where Anthropic is now excluded. That pivot signals that government entities will gravitate toward vendors ready to accept expansive lawful‑use clauses, with implications for companies that attempt to preserve ethical guardrails through contractual limits.

Financial stakes and partnership claims: treat numbers with caution

Reports circulating in industry coverage mention substantial commercial commitments underpinning the Microsoft‑Anthropic relationship. Specific claims, such as the multi‑year compute purchases or investment totals often cited in public conversation, vary across outlets, and some figures have not been independently corroborated in the public record we reviewed. Readers should treat precise dollar figures reported in early coverage as provisional until they are confirmed in public filings or regulatory disclosures. Microsoft and Anthropic have strong commercial incentives to preserve a working relationship where legally possible; those incentives shape the public statements and technical postures we see today.

Enterprise impact: an IT leader's practical playbook

For WindowsForum readers (IT managers, security officers, and contractors), the situation demands immediate and practical steps. Below is a prioritized operational checklist.

Immediate actions (first 72 hours)

- Conduct a rapid inventory of all Copilot‑enabled features, GitHub Copilot usage, and Azure AI Foundry deployments that could send requests to Anthropic backends.

- Classify workloads by contract type: DoD/defense, federal civilian, regulated commercial, and non‑regulated. This classification drives gating requirements.

- Use tenant‑level administrative controls to disable Anthropic backends for teams, tenants, or subscription IDs that touch defense contracts or classified data. Log every change.
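The three immediate actions (inventory, classify, disable and log) can be chained into a simple planning step. The sketch below assumes a hypothetical inventory format (`InventoryItem`) and hypothetical contract‑class labels mirroring the checklist; it only produces a change plan and does not call any Microsoft or GitHub API.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical labels mirroring the checklist's contract types.
KNOWN_CLASSES = {"dod_defense", "federal_civilian", "regulated_commercial", "non_regulated"}
# Classes this sketch gates away from Anthropic backends (an organizational choice).
GATED_CLASSES = {"dod_defense"}


@dataclass(frozen=True)
class InventoryItem:
    feature: str         # hypothetical label for a Copilot or Foundry surface
    scope_id: str        # tenant, group, or subscription ID
    backend: str         # provider currently configured, e.g. "anthropic"
    contract_class: str  # ideally one of KNOWN_CLASSES


def plan_changes(items: list[InventoryItem]) -> list[dict]:
    """Return one log-ready change record per item that must leave Anthropic.

    Items with an unknown classification fail closed and are gated too,
    since an unclassified workload might still touch defense work.
    """
    changes = []
    for item in items:
        if item.backend != "anthropic":
            continue
        if item.contract_class in GATED_CLASSES or item.contract_class not in KNOWN_CLASSES:
            changes.append({
                "timestamp": datetime.now(timezone.utc).isoformat(),
                "scope_id": item.scope_id,
                "feature": item.feature,
                "action": "disable_backend:anthropic",
                "reason": f"contract_class={item.contract_class}",
            })
    return changes


if __name__ == "__main__":
    inventory = [
        InventoryItem("copilot-chat", "tenant-1", "anthropic", "dod_defense"),
        InventoryItem("code-completion", "tenant-2", "anthropic", "non_regulated"),
        InventoryItem("summarizer", "tenant-3", "anthropic", "unclassified"),
    ]
    for change in plan_changes(inventory):
        print(change["scope_id"], change["action"], change["reason"])
```

Unclassified workloads are gated along with defense ones (a fail‑closed choice made for this sketch), and leaving federal‑civilian or regulated‑commercial work on Anthropic is likewise an assumption each organization would set for itself.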

Medium‑term steps (2–8 weeks)

- Test alternative model backends in non‑production environments and validate functional parity for critical workflows (code completion, summarization, analytics). Design migration plans and runbooks.

- Engage legal and contracting teams to analyze flow‑down obligations; seek written guidance on the interpretation of supply‑chain designations and the organization's disclosure duties.

- Instrument telemetry and audit capabilities to produce evidence of model routing decisions, especially if DoD or contracting officers request attestation.
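For the attestation point, one common way to make routing logs tamper‑evident is a hash chain, in which each entry includes the hash of the previous one. This Python sketch uses only the standard library (`hashlib`, `json`); the record fields and the `RoutingAuditLog` class are hypothetical, and this is one technique among several, not a format DoD or contracting officers have specified.

```python
import hashlib
import json


def _digest(prev_hash: str, record: dict) -> str:
    """Hash the previous entry's hash together with a canonical JSON record."""
    payload = prev_hash + json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class RoutingAuditLog:
    """Hypothetical append-only log where each entry commits to the one before.

    Editing or deleting an earlier entry changes its hash and breaks every
    later link, which `verify` detects.
    """

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, record: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        stored = dict(record)  # copy so later edits by the caller do not leak in
        entry_hash = _digest(prev, stored)
        self.entries.append({"record": stored, "prev": prev, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["record"]):
                return False
            prev = entry["hash"]
        return True


if __name__ == "__main__":
    log = RoutingAuditLog()
    log.append({"tenant": "tenant-1", "requested": "anthropic", "decision": "denied"})
    log.append({"tenant": "tenant-2", "requested": "anthropic", "decision": "allowed"})
    print(log.verify())  # prints True
    log.entries[0]["record"]["decision"] = "allowed"  # simulate tampering
    print(log.verify())  # prints False
```

A hash chain makes tampering detectable rather than impossible: whoever controls the log could rebuild the whole chain, so in practice the latest hash would also need to be stored somewhere the log's operator cannot rewrite.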

Governance and long‑term posture

- Update procurement and supplier‑management policies to require clear contractual terms about model redlines, subprocessor control, and incident notification timelines.

- Build a "model off‑ramp" playbook that identifies training‑data dependencies, migrates prompts and integration points, and re‑certifies altered systems for regulated workloads.

- Consider a multi‑model, multi‑cloud architecture for high‑risk workloads to avoid single‑vendor operational chokepoints.
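A multi‑model architecture is, at its simplest, an ordered list of acceptable backends per workload plus a selection rule. The sketch below assumes hypothetical workload names and backend labels (`anthropic`, `openai`, `self_hosted` are placeholders, not configuration values for any real product).

```python
# Hypothetical per-workload preference lists; backend labels are placeholders.
PREFERENCES: dict[str, list[str]] = {
    "code_completion": ["anthropic", "openai", "self_hosted"],
    "summarization": ["anthropic", "self_hosted"],
}


def pick_backend(workload: str, blocked: set[str], healthy: set[str]) -> str:
    """Return the first preferred backend that is neither blocked nor down.

    Policy blocks and outages go through the same rule, so removing one
    provider degrades to the next option instead of stopping the workflow.
    """
    for backend in PREFERENCES.get(workload, []):
        if backend not in blocked and backend in healthy:
            return backend
    raise RuntimeError(f"no permitted, healthy backend for {workload!r}")


if __name__ == "__main__":
    up = {"anthropic", "openai", "self_hosted"}
    print(pick_backend("code_completion", blocked={"anthropic"}, healthy=up))  # prints openai
```

With this shape, off‑boarding a provider becomes a configuration change (adding it to `blocked`) rather than a code rewrite, which is the kind of off‑ramp the governance bullets describe.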

Policy and ethical analysis: where safety and sovereignty collide

This episode is more than contract law. It exposes a deep tension at the heart of modern AI governance: can a private company impose limits on how its models are used, for ethical reasons, and at the same time participate in critical national‑security supply chains that demand unfettered lawful use? Anthropic says yes; the DoD says no. The government’s position reflects a sovereign imperative: mission assurance sometimes requires capabilities that private actors may wish to restrict. Anthropic’s position reflects a growing corporate ethic in AI: product control and alignment commitments intended to reduce civilian harms.

Policy tradeoffs are stark:

- If vendors can withhold models or gate lawful uses for ethical reasons, governments may face constrained options for rapid operational deployment.

- If governments can compel vendors to remove contractual guardrails, companies will have weakened leverage to enforce safety standards and may be driven to accept uses they consider harmful.

- The legal balance struck by courts or administrative actions will set the standard for whether safety‑first vendors can coexist with defense customers that insist on broad operational rights.

Risks for Microsoft and the broader cloud ecosystem

Microsoft’s public split, excluding Anthropic from DoD use while serving commercial customers, creates immediate risks:

- Regulatory and political backlash: Public disagreement with a security designation invites scrutiny from lawmakers and contracting officers, and could lead to reputational heat for Microsoft if the DoD perceives obstruction.

- Contractual complexity for primes: Defense contractors will demand stronger segregation guarantees; Microsoft may face new contractual requirements or audits to prove that Claude is inaccessible to DoD‑bound workloads.

- Operational audit burden: Demonstrable, auditable separation of model telemetry, logs, and regions will be necessary to satisfy both customers and regulators — a nontrivial engineering and compliance cost.

What to watch next

- Court filings and emergency motions from Anthropic — a swift injunction would materially change the operational landscape.

- Formal DoD guidance to primes and contracting officers clarifying whether the designation applies to any use of Anthropic models in a contractor's non‑DoD work or only to contract deliverables. That guidance will define the compliance scope for hundreds of contractors.

- How Microsoft operationalizes tenant gating and what audit evidence it can provide to show separation — the technical detail will determine whether the company’s legal posture is persuasive in practice.

- Congressional oversight or regulatory maneuvers that could impose broader procurement rules for AI models and cloud services. Expect hearings and bipartisan interest in how supply‑chain tools are used domestically.

Conclusion: a structural inflection point for enterprise AI

The clash between Anthropic, Microsoft, and the DoD is a structural inflection point: it forces vendors, governments, and enterprise customers to confront the hard engineering, legal, and ethical realities of multi‑use AI systems. Microsoft’s approach, preserving commercial availability while excluding DoD use, is a pragmatic attempt to balance commercial continuity, contractual commitments, and legal risk. But the arrangement is temporary, fragile, and likely to be tested in courts and on contracting floors.

For IT leaders and WindowsForum readers, the practical takeaway is straightforward: inventory, isolate, and document now. Build migration plans, test alternatives, and insist that procurement contracts include clear clauses about model redlines, subprocessor audits, and off‑boarding processes. For policymakers, the episode should prompt clearer statutory guidance on how supply‑chain tools apply to cloud‑native AI providers and whether separate, narrowly tailored instruments are needed to reconcile national‑security needs with corporate ethics.

Finally, anticipate more contests like this one. As AI models become central to both civilian productivity and military planning, the frontier will be less about capability and more about governance: who decides the lawful uses of powerful models, and how that judgment is enforced across global, multi‑tenant cloud platforms. The answer to that question will shape enterprise architecture, national security posture, and the public debate over AI’s place in society for years to come.

Source: The Plunge Daily https://mybigplunge.com/tech-plunge...s-by-anthropic-ai-despite-pentagon-blacklist/