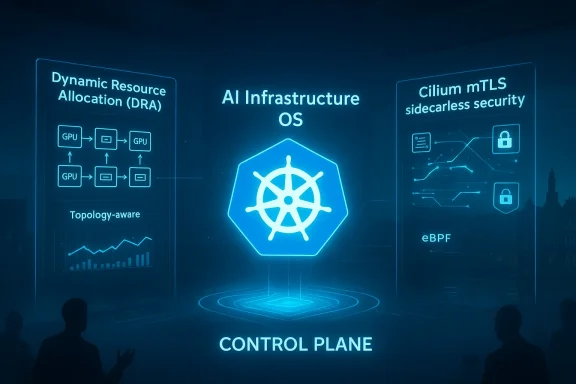

Microsoft used KubeCon Europe 2026 to send a clear message: Kubernetes is no longer just the control plane for cloud-native apps but the operational foundation for modern AI infrastructure. The company shipped Dynamic Resource Allocation as generally available, unveiled AI Runway as an open-source inference API, and expanded its Cilium-based networking and security stack for AKS, while also contributing new projects to the CNCF that strengthen the upstream ecosystem. Taken together, the announcements show Microsoft trying to close the gaps between model deployment, GPU scheduling, networking, observability, and supply-chain security. That is a meaningful shift for enterprises that have spent the last two years bolting AI workflows onto platforms that were not originally built for them.

Source: WinBuzzer Microsoft Ships GPU Scheduling, AI Runway at KubeCon 2026

Background

Microsoft’s KubeCon push arrives at a point where the industry has moved beyond the novelty stage of generative AI and into the gritty problem of operating it at scale. That matters because training and inference behave very differently, and inference is where the volume lives. The company’s own framing, echoed across its recent infrastructure work, is that AI has become a production workload with real scheduling, networking, security, and cost constraints rather than a lab experiment with some extra GPUs attached.

The biggest backdrop is the shift from static capacity planning to elastic, topology-aware scheduling. Traditional Kubernetes scheduling was designed around CPU, memory, and generic storage patterns, but AI clusters need more than just “enough” GPU capacity. They need the right GPU type, proximity to the right NICs, the right memory fit, and increasingly the right network path for distributed inference and training. That is why Microsoft’s emphasis on Dynamic Resource Allocation is so important: it reflects a broader industry acknowledgement that vendor-specific device plugins and ad hoc cloud-specific logic no longer scale cleanly across multi-cloud fleets.

Another key piece of context is the steady erosion of sidecar-heavy network designs. For years, service meshes and policy systems often depended on per-pod proxies, which improved consistency but imposed overhead that became painful at scale. In GPU-dense environments, where every core and every gigabyte of memory is expensive, that overhead is no longer an abstract architectural nuisance. Microsoft’s move toward sidecarless mTLS and meshless Istio support shows the company aligning with a wider eBPF-driven trend: move security and telemetry closer to the data plane and keep application pods lean.

The CNCF angle is equally important. Microsoft is not just trying to make Azure Kubernetes Service better; it is trying to shape the upstream primitives the whole market will rely on. The contributions around HolmesGPT and Dalec suggest a bet that the future of AI infrastructure will be won as much through open governance and shared interfaces as through proprietary platform features. That strategy is especially compelling in an era when cloud buyers increasingly want portability, transparency, and reduced lock-in.

Finally, the timing matters. KubeCon Europe 2026 in Amsterdam provided a stage where cloud vendors could position their AI stories in front of the exact audience that has to operationalize them: platform engineers, SREs, infrastructure architects, and open-source maintainers. Microsoft’s message was not subtle. It wants Kubernetes to become the AI infrastructure OS for the enterprise, and it wants AKS to be the most complete expression of that vision.

Dynamic Resource Allocation Goes GA

The most consequential headline is the graduation of Dynamic Resource Allocation (DRA) to general availability in Kubernetes. In practical terms, this replaces the static device-plugin model, in which a pod can only ask for an integer count of an opaque resource, with a declarative, Kubernetes-native approach to specialized hardware, including GPUs and other accelerators. Instead of bolting on vendor-specific scheduling logic, clusters can now reason about hardware as first-class resources in a more portable way.

That change matters because AI workload orchestration has been one of the most fragmented parts of the cloud stack. Each cloud provider has historically tried to solve GPU placement, topology awareness, and accelerator lifecycle management in its own way. DRA narrows that gap by turning GPU allocation into an upstream standard rather than a cloud-specific trick, which reduces the amount of custom glue platform teams have to maintain. In a multi-cloud environment, that is not a small convenience; it is a cost and risk reducer.
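
If you have only used device plugins, a short sketch shows the difference. The Python below builds a DRA request as plain dictionaries and prints it as JSON, which `kubectl apply -f -` also accepts. It assumes the `resource.k8s.io/v1` API that upstream Kubernetes serves for GA DRA. The device class `gpu.example.com`, the `memory` capacity name, the image, and the object names are all hypothetical. A real cluster also needs a DRA driver (for example, one from the GPU vendor) that publishes matching devices.

```python
import json

# Hypothetical names: a real cluster uses the DeviceClass its DRA driver installs.
DEVICE_CLASS = "gpu.example.com"
CLAIM_TEMPLATE = "single-gpu"


def gpu_claim_template(min_memory: str = "40Gi") -> dict:
    """ResourceClaimTemplate requesting exactly one device from DEVICE_CLASS.

    The CEL selector keeps only devices whose advertised memory capacity is at
    least `min_memory`; the capacity name under "gpu.example.com" is assumed.
    """
    selector = (
        'device.capacity["gpu.example.com"].memory'
        f'.compareTo(quantity("{min_memory}")) >= 0'
    )
    return {
        "apiVersion": "resource.k8s.io/v1",
        "kind": "ResourceClaimTemplate",
        "metadata": {"name": CLAIM_TEMPLATE},
        "spec": {"spec": {"devices": {"requests": [{
            "name": "gpu",
            "exactly": {
                "deviceClassName": DEVICE_CLASS,
                "allocationMode": "ExactCount",
                "count": 1,
                "selectors": [{"cel": {"expression": selector}}],
            },
        }]}}},
    }


def inference_pod(image: str = "registry.example.com/llm-server:latest") -> dict:
    """Pod that consumes the claim; the scheduler picks a node with a matching device."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": "llm-server"},
        "spec": {
            # A ResourceClaim is generated for this pod from the template.
            "resourceClaims": [{"name": "gpu", "resourceClaimTemplateName": CLAIM_TEMPLATE}],
            "containers": [{
                "name": "server",
                "image": image,
                # Containers opt in to a pod-level claim by name.
                "resources": {"claims": [{"name": "gpu"}]},
            }],
        },
    }


if __name__ == "__main__":
    # kubectl accepts JSON as well as YAML, e.g. `python gen.py | kubectl apply -f -`.
    manifest = {"apiVersion": "v1", "kind": "List",
                "items": [gpu_claim_template(), inference_pod()]}
    print(json.dumps(manifest, indent=2))
```

A device-plugin limit such as `nvidia.com/gpu: 1` can only count devices. The claim instead carries a selector that the scheduler evaluates against the attributes and capacities a driver advertises.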

Why DRA changes the economics

The economic significance is easy to miss if you only look at the API surface. When a scheduling layer becomes standard, platform teams can move workloads with less rewriting, which lowers switching costs and weakens the gravitational pull of proprietary GPU tooling. That in turn makes procurement, capacity planning, and cluster design more modular, which is exactly what large enterprises want when they are trying to compare providers on something other than raw chip availability.

Microsoft’s broader point is that GPU scheduling should live in the Kubernetes control plane, not in vendor-specific outer layers. That is a strategic statement as much as a technical one. If DRA becomes the shared abstraction, cloud vendors compete more on execution quality, availability, and ecosystem integration than on hidden scheduling features that are difficult to port.

- Vendor-neutral scheduling reduces lock-in.

- Topology-aware placement improves performance for AI jobs.

- Declarative APIs simplify provisioning and automation.

- Upstream adoption encourages ecosystem consistency.

- Cluster portability becomes more realistic for multi-cloud teams.

The importance of topology

The article’s mention of Azure RDMA NIC compatibility under DRA is especially telling. For high-performance AI workloads, GPU-to-NIC alignment can be a performance differentiator, particularly when distributed training or low-latency communication is involved. This is one of those infrastructure details that sounds arcane until you realize it can decide whether a cluster is merely expensive or actually efficient.

KubeRay integration broadens the implications further. If higher-level AI runtimes can request specific accelerator characteristics through a standard Kubernetes interface, then orchestration becomes less of a bespoke engineering project and more of a documented platform capability. That is the real promise here: not just better GPU allocation, but a saner operational model for the entire AI stack.
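
The GPU-to-NIC point can be shown with a small sketch. It assumes that each device reports a PCIe-root attribute and that a job wants one NIC per GPU on the same root. Both the attribute and the one-to-one pairing rule are simplifying assumptions. The search below is an illustration, not the algorithm used by the Kubernetes scheduler or any DRA driver. In DRA itself, a request can require that several devices share an attribute value through a `matchAttribute` constraint.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Device:
    name: str
    kind: str        # "gpu" or "nic"
    pcie_root: str   # hypothetical topology attribute published per device


def pair_gpus_with_nics(devices: list[Device], count: int) -> Optional[list[tuple[str, str]]]:
    """Return `count` (gpu, nic) pairs that share a PCIe root, or None if impossible."""
    # Index free NICs by PCIe root so each GPU can look up a local NIC.
    free_nics: dict[str, list[str]] = {}
    for d in devices:
        if d.kind == "nic":
            free_nics.setdefault(d.pcie_root, []).append(d.name)

    pairs: list[tuple[str, str]] = []
    for d in devices:
        if len(pairs) == count:
            break
        if d.kind != "gpu":
            continue
        local = free_nics.get(d.pcie_root)
        if local:  # skip GPUs whose root has no NIC left
            pairs.append((d.name, local.pop(0)))
    return pairs if len(pairs) == count else None


node = [
    Device("gpu0", "gpu", "root0"), Device("gpu1", "gpu", "root0"),
    Device("gpu2", "gpu", "root1"), Device("gpu3", "gpu", "root1"),
    Device("nic0", "nic", "root0"), Device("nic1", "nic", "root1"),
]
print(pair_gpus_with_nics(node, 2))  # [('gpu0', 'nic0'), ('gpu2', 'nic1')]
print(pair_gpus_with_nics(node, 3))  # None: only one NIC per root
```

A GPU may only use a NIC on its own root, so a single greedy pass finds a valid pairing whenever one exists. Richer constraints, such as NUMA distance or bandwidth tiers, would need a real matching algorithm.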

AI Runway as an Inference Control Plane

Microsoft’s other major reveal, AI Runway, is best understood as an attempt to standardize the serving side of AI on Kubernetes. The project exposes a common API for inference workloads and wraps that API in a web interface that integrates with Hugging Face’s model catalog, shows GPU memory fit indicators, and estimates cost in real time. In other words, it is trying to bring order to the messy middle between “we have a model” and “we have a service.”

That is a smart move because inference is where AI becomes a business process rather than a science project. Platform teams need to know what model can fit on what hardware, what it will cost to run, and what runtime stack it should use. AI Runway’s support for NVIDIA Dynamo, KubeRay, llm-d, and KAITO suggests Microsoft is not trying to dictate a single runtime but to unify them under a common operational umbrella. That feels pragmatic rather than ideological.
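
The source does not show AI Runway's API, so what follows is only a generic sketch of the pattern the paragraph describes. It uses one runtime-neutral request type, with per-runtime adapters behind a single entry point. Every class, field, and output key here is hypothetical. The adapters return placeholder dictionaries, not real KAITO or KubeRay resources.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class InferenceSpec:
    """Runtime-neutral request; every field name here is hypothetical."""
    model_id: str        # e.g. a Hugging Face repository id
    gpu_count: int
    max_replicas: int = 1


# Each adapter translates the neutral spec into what its runtime expects.
# The output shapes below are placeholders, not real CRD schemas.
Adapter = Callable[[InferenceSpec], dict]
ADAPTERS: dict[str, Adapter] = {}


def register(runtime: str) -> Callable[[Adapter], Adapter]:
    """Decorator that makes an adapter available under a runtime name."""
    def wrap(fn: Adapter) -> Adapter:
        ADAPTERS[runtime] = fn
        return fn
    return wrap


@register("kaito")
def to_kaito(spec: InferenceSpec) -> dict:
    return {"runtime": "kaito", "model": spec.model_id, "gpus": spec.gpu_count}


@register("kuberay")
def to_kuberay(spec: InferenceSpec) -> dict:
    return {"runtime": "kuberay", "model": spec.model_id,
            "gpus_per_worker": spec.gpu_count, "max_workers": spec.max_replicas}


def deploy(spec: InferenceSpec, runtime: str) -> dict:
    """Single entry point: callers choose a runtime by name, not by API."""
    try:
        return ADAPTERS[runtime](spec)
    except KeyError:
        raise ValueError(f"unsupported runtime: {runtime!r}") from None


spec = InferenceSpec("example-org/example-model", gpu_count=1)
print(deploy(spec, "kaito"))
```

In this design, supporting another runtime means registering one more adapter. Callers keep using the same `deploy` call.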

A better user experience for platform teams

The user experience angle should not be underestimated. Kubernetes is powerful, but it often exposes complexity rather than hiding it. By adding a web interface and model catalog integration, Microsoft is acknowledging that many AI platform users are not just cluster operators; they are ML engineers, application teams, and data scientists who need guided choices rather than raw YAML.

This also gives Microsoft a way to connect infrastructure economics to operational decisions. GPU fit and cost estimates turn model selection into a more explicit tradeoff, which is exactly what finance-conscious AI teams need. The organization that can see the cost impact before deployment is often the one that avoids the most painful surprises later.

- Model catalog integration lowers discovery friction.

- GPU fit indicators reduce trial-and-error provisioning.

- Cost estimates improve budget planning.

- Multiple runtime support avoids premature lock-in.

- Web-based workflows broaden usability beyond cluster specialists.
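
A GPU fit indicator ultimately rests on arithmetic like the following. This sketch uses a common rule of thumb, weights plus KV cache, and is not AI Runway's formula. It ignores activations, runtime overhead, and the extra memory that tensor parallelism needs. The model shape, the 90% usable-memory factor, and the hourly price are hypothetical.

```python
import math
from dataclasses import dataclass

GB = 1e9  # decimal gigabytes, for simple arithmetic


@dataclass(frozen=True)
class ModelShape:
    params: float              # parameter count
    layers: int
    kv_heads: int
    head_dim: int
    bytes_per_value: int = 2   # fp16/bf16


def memory_needed_gb(m: ModelShape, context_tokens: int, batch: int) -> float:
    """Weights plus KV cache, in GB."""
    weights = m.params * m.bytes_per_value
    # KV cache: one K and one V vector per layer, per KV head, per cached token.
    kv_cache = (2 * m.layers * m.kv_heads * m.head_dim * m.bytes_per_value
                * context_tokens * batch)
    return (weights + kv_cache) / GB


def gpus_required(need_gb: float, gpu_mem_gb: float, usable: float = 0.9) -> int:
    """Smallest GPU count whose usable memory covers the estimate."""
    return math.ceil(need_gb / (gpu_mem_gb * usable))


def hourly_cost(gpus: int, price_per_gpu_hour: float) -> float:
    return gpus * price_per_gpu_hour


m = ModelShape(params=8e9, layers=32, kv_heads=8, head_dim=128)
need = memory_needed_gb(m, context_tokens=8192, batch=4)
print(round(need, 2))                        # 20.29
print(gpus_required(need, gpu_mem_gb=24))    # 1
print(gpus_required(need, gpu_mem_gb=16))    # 2
```

In this example, 16 GB of weights plus about 4.3 GB of KV cache fits on one hypothetical 24 GB card but needs two 16 GB cards. Multiplying by a per-GPU price turns that into the kind of cost comparison the paragraph describes.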

Comparing Microsoft’s path with rivals

Microsoft’s approach contrasts with Google Cloud’s parallel efforts, which the article describes as more modular, with Google open-sourcing its GKE Cluster Autoscaler and submitting llm-d as a CNCF Sandbox project. That modularity can be attractive for teams that want to assemble a bespoke stack from upstream parts. Microsoft’s tighter pairing of DRA and AI Runway, by contrast, offers a more integrated story for organizations that prefer a guided platform experience.

Neither approach is inherently better. The integrated model reduces implementation burden, while the modular path can preserve flexibility and make it easier to swap components. The more important point is that the market is converging on a shared assumption: inference is now a core cloud workload, and it deserves dedicated orchestration primitives.

Cilium, mTLS, and the End of the Sidecar Tax

Microsoft’s networking story at KubeCon is almost as consequential as the GPU story. The company highlighted Cilium mTLS support for sidecarless encrypted communication, along with Hubble metrics controls, flow-log aggregation, and Cluster Mesh feature proposals. This is not just incremental polish; it is a sign that Microsoft sees the networking layer as a critical lever for AI-era performance and security.

The AKS public preview for Cilium mTLS encryption is particularly notable because it secures pod-to-pod traffic with X.509 identities and SPIRE-based management without requiring sidecars. That matters because sidecars consume CPU and memory at precisely the moment when operators want to maximize application density and GPU utilization. For many teams, the appeal of sidecarless security will be immediate: less overhead, fewer moving parts, and simpler upgrades.
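
SPIRE-issued X.509 identities (X.509-SVIDs) carry a SPIFFE ID such as `spiffe://<trust-domain>/<path>` in the certificate's URI SAN. Once the TLS handshake has verified the certificate chain, a data plane can make an authorization decision from that ID. The sketch below covers only that last step: parse the ID, check the trust domain, and consult an allowlist. The trust domain, the `/ns/<namespace>/sa/<service-account>` path layout, and the policy table are hypothetical. Cilium and AKS use their own identity schemes, and nothing here validates certificates.

```python
import re
from urllib.parse import urlsplit

TRUST_DOMAIN = "prod.example.com"  # hypothetical trust domain

# Character sets follow the SPIFFE ID format: lowercase trust domain,
# path segments limited to letters, digits, '.', '-' and '_'.
_TRUST_DOMAIN_RE = re.compile(r"[a-z0-9._-]+")
_SEGMENT_RE = re.compile(r"[A-Za-z0-9._-]+")

# Hypothetical policy: destination workload path -> caller paths it accepts.
ALLOWED_CALLERS = {
    "/ns/ml/sa/model-server": {"/ns/ml/sa/gateway"},
}


def parse_spiffe_id(uri: str) -> tuple[str, str]:
    """Split a workload SPIFFE ID into (trust_domain, path) or raise ValueError."""
    if not uri.startswith("spiffe://"):
        raise ValueError("scheme must be lowercase 'spiffe'")
    if "?" in uri or "#" in uri:
        raise ValueError("query and fragment are not allowed")
    parts = urlsplit(uri)
    if not _TRUST_DOMAIN_RE.fullmatch(parts.netloc):  # also rejects ports and user info
        raise ValueError("invalid trust domain")
    segments = parts.path.split("/")[1:]
    if not segments or any(s in (".", "..") or not _SEGMENT_RE.fullmatch(s) for s in segments):
        raise ValueError("invalid path")
    return parts.netloc, parts.path


def authorize(peer_id: str, destination_path: str) -> bool:
    """Allow the call only for a well-formed ID from our trust domain on the allowlist."""
    try:
        trust_domain, caller_path = parse_spiffe_id(peer_id)
    except ValueError:
        return False
    return (trust_domain == TRUST_DOMAIN
            and caller_path in ALLOWED_CALLERS.get(destination_path, set()))


print(authorize("spiffe://prod.example.com/ns/ml/sa/gateway", "/ns/ml/sa/model-server"))   # True
print(authorize("spiffe://other.example.com/ns/ml/sa/gateway", "/ns/ml/sa/model-server"))  # False
```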

Why sidecarless matters in AI clusters

In a traditional web app, sidecar overhead is an annoyance. In a GPU-bound AI cluster, it can become an expensive tax. Every CPU cycle spent on a proxy is a cycle not spent feeding the accelerator, and every megabyte a proxy occupies lowers packing density. Microsoft’s move recognizes that operational elegance and compute efficiency are now linked.

The broader architectural trend is toward eBPF-based data-plane intelligence. That direction aligns with a decade of cloud-native evolution, but it now has a more urgent rationale because AI workloads are so resource-sensitive. When security can live closer to the kernel and away from per-pod proxies, infrastructure teams get a more streamlined path to scale.

- Reduced CPU overhead improves pod density.

- Lower memory pressure benefits GPU-heavy nodes.

- Fewer sidecars simplify deployment and upgrades.

- Certificate-based identity strengthens service-to-service trust.

- eBPF data planes align with modern cloud-native design.
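
The density argument is easy to quantify. The sketch counts how many identical pods fit on one node by CPU and memory requests, with and without a per-pod proxy. All figures are hypothetical. The comparison slightly flatters the sidecarless case, because an eBPF data plane still runs a per-node agent that uses some CPU and memory.

```python
def pods_per_node(alloc_cpu_m: int, alloc_mem_mi: int,
                  app_cpu_m: int, app_mem_mi: int,
                  proxy_cpu_m: int = 0, proxy_mem_mi: int = 0) -> int:
    """How many identical pods fit, by CPU (millicores) and memory (MiB) requests.

    Each pod's footprint is the app container plus an optional per-pod proxy;
    whichever resource runs out first sets the limit.
    """
    by_cpu = alloc_cpu_m // (app_cpu_m + proxy_cpu_m)
    by_mem = alloc_mem_mi // (app_mem_mi + proxy_mem_mi)
    return min(by_cpu, by_mem)


# Hypothetical node and pod sizes.
NODE_CPU_M, NODE_MEM_MI = 94_000, 640_000
APP_CPU_M, APP_MEM_MI = 2_000, 8_192
PROXY_CPU_M, PROXY_MEM_MI = 500, 512

with_proxy = pods_per_node(NODE_CPU_M, NODE_MEM_MI, APP_CPU_M, APP_MEM_MI,
                           PROXY_CPU_M, PROXY_MEM_MI)
sidecarless = pods_per_node(NODE_CPU_M, NODE_MEM_MI, APP_CPU_M, APP_MEM_MI)
print(with_proxy, sidecarless)  # 37 47
print(f"proxies hold {with_proxy * PROXY_CPU_M / NODE_CPU_M:.1%} of node CPU")  # proxies hold 19.7% of node CPU
```

With these made-up numbers, removing a 500m/512Mi proxy lets 47 pods fit instead of 37. CPU remains the limiting resource in both cases.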

AKS networking as a platform advantage

Microsoft is also extending Azure Kubernetes Application Network with mutual TLS, application-aware authorization, and traffic telemetry across ingress and in-cluster communication. Paired with Application Routing with Meshless Istio, the company is effectively trying to offer service-mesh value without the full proxy burden. That is a sensible evolution for teams that want policy and observability without the cost curve of older mesh architectures.

The message to competitors is clear: if infrastructure buyers can get secure service communication, metrics, and routing without sidecars, the old “mesh at all costs” narrative becomes harder to defend. This does not eliminate the need for advanced traffic management, but it reframes the performance-security tradeoff in a way that should resonate with platform owners.

CNCF Sandbox Projects and the Open-Source Bet

Microsoft’s contributions of HolmesGPT and Dalec to the CNCF Sandbox are a strategic signal, not just a contribution list. HolmesGPT brings AI-assisted troubleshooting into the observability stack, while Dalec focuses on minimal container builds with SBOM generation and provenance attestations. Both projects speak directly to the two pain points AI infrastructure teams now face: diagnosing increasingly complex systems and proving that the software supply chain is trustworthy.

HolmesGPT is especially interesting because it mixes telemetry, reasoning, and runbooks into a troubleshooting workflow. That suggests Microsoft sees AI not only as the workload being hosted, but also as the tool used to keep the host infrastructure healthy. In a world of sprawling microservice estates and GPU clusters, that kind of assistance may become less of a novelty and more of a necessity.

Supply chain security becomes part of the AI story

Dalec points to another uncomfortable truth: AI teams are not just fighting runtime complexity, they are also fighting build-time trust issues. Minimal images, reproducible builds, SBOMs, and attestations are increasingly essential when the software chain includes accelerators, model servers, and orchestration layers from multiple vendors. Security is no longer a separate track from AI infrastructure; it is part of the deployment architecture.

This is also where Microsoft’s upstream posture helps its credibility. A lot of vendors talk about open source while trying to keep the critical abstractions proprietary. By placing these projects in the CNCF ecosystem, Microsoft is signaling that it wants influence through contribution, not just consumption. That is a meaningful distinction for enterprise buyers who care about governance and longevity.

- HolmesGPT targets AI-assisted operations.

- Dalec strengthens build transparency and provenance.

- Sandbox placement encourages experimentation.

- Open governance lowers adoption anxiety.

- Shared tooling helps multi-vendor environments.
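
Provenance attestations are only useful if something checks them. Suppose the attestation is an in-toto Statement, the format used for SLSA provenance. The core question is then whether the artifact you are about to run hashes to a digest listed among the statement's subjects. This sketch shows only that comparison, and the artifact name and contents are hypothetical. Real verification must first check the signature on the envelope that wraps the statement.

```python
import hashlib
import io
import json
from typing import BinaryIO

IN_TOTO_STATEMENT_V1 = "https://in-toto.io/Statement/v1"


def sha256_stream(f: BinaryIO) -> str:
    """Hash an artifact in 1 MiB chunks so large images need not fit in memory."""
    h = hashlib.sha256()
    for chunk in iter(lambda: f.read(1 << 20), b""):
        h.update(chunk)
    return h.hexdigest()


def subject_matches(statement_json: str, name: str, sha256_hex: str) -> bool:
    """True if the statement lists `name` with exactly this sha256 digest.

    Only compares digests: the signature over the statement must be verified
    separately, before trusting any of its contents.
    """
    stmt = json.loads(statement_json)
    if stmt.get("_type") != IN_TOTO_STATEMENT_V1:
        return False
    return any(
        s.get("name") == name and s.get("digest", {}).get("sha256") == sha256_hex
        for s in stmt.get("subject", [])
    )


# Hypothetical artifact and statement.
artifact = b"example container image layer"
digest = sha256_stream(io.BytesIO(artifact))
statement = json.dumps({
    "_type": IN_TOTO_STATEMENT_V1,
    "subject": [{"name": "model-server.tar", "digest": {"sha256": digest}}],
    "predicateType": "https://slsa.dev/provenance/v1",
    "predicate": {},
})
print(subject_matches(statement, "model-server.tar", digest))  # True
tampered = sha256_stream(io.BytesIO(b"tampered"))
print(subject_matches(statement, "model-server.tar", tampered))  # False
```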

Observability closes the loop

The article also notes that AKS now surfaces GPU performance and utilization in managed Prometheus and Grafana, which is more important than it might first appear. AI teams cannot optimize what they cannot see, and GPU visibility has historically been one of the nastier gaps in managed Kubernetes environments. Better telemetry means better packing, better scaling, and faster root-cause analysis when performance slips.

This is where the open-source story and the platform story meet. If Kubernetes is the orchestration layer and CNCF projects are the building blocks, then telemetry is the feedback mechanism that keeps the whole thing usable. Microsoft seems to understand that “more AI” is not enough; teams also need better answers when the AI stack misbehaves.
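
Once GPU metrics land in Prometheus, finding idle accelerators takes a query plus a little post-processing. The sketch assumes the NVIDIA DCGM exporter's `DCGM_FI_DEV_GPU_UTIL` gauge with `Hostname` and `gpu` labels. Whether AKS exposes exactly these names is an assumption to verify. The code parses the standard Prometheus HTTP API instant-query response. It leaves out the HTTP call and whatever authentication the managed endpoint requires.

```python
import json
from urllib.parse import urlencode

# Assumed metric: the DCGM exporter's per-GPU utilization gauge, in percent.
QUERY = "avg_over_time(DCGM_FI_DEV_GPU_UTIL[1h])"


def query_url(base_url: str, promql: str = QUERY) -> str:
    """Build a Prometheus HTTP API instant-query URL (GET /api/v1/query)."""
    params = urlencode({"query": promql})
    return f"{base_url.rstrip('/')}/api/v1/query?{params}"


def underused_gpus(response_json: str, threshold_pct: float = 30.0) -> list[tuple[str, float]]:
    """Return (host/gpu, utilization) pairs below `threshold_pct`, lowest first."""
    body = json.loads(response_json)
    if body.get("status") != "success" or body["data"]["resultType"] != "vector":
        raise ValueError("expected a successful instant-vector response")
    low = []
    for series in body["data"]["result"]:
        labels = series["metric"]
        # Label names are assumptions; adjust to what your exporter emits.
        key = f"{labels.get('Hostname', '?')}/gpu{labels.get('gpu', '?')}"
        value = float(series["value"][1])  # samples arrive as [timestamp, "string"]
        if value < threshold_pct:
            low.append((key, value))
    return sorted(low, key=lambda kv: kv[1])


sample = json.dumps({"status": "success", "data": {"resultType": "vector", "result": [
    {"metric": {"Hostname": "node-a", "gpu": "0"}, "value": [1700000000, "12.5"]},
    {"metric": {"Hostname": "node-a", "gpu": "1"}, "value": [1700000000, "88"]},
    {"metric": {"Hostname": "node-b", "gpu": "0"}, "value": [1700000000, "4"]},
]}})
print(underused_gpus(sample))  # [('node-b/gpu0', 4.0), ('node-a/gpu0', 12.5)]
```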

Azure Kubernetes Service as the Integration Layer

AKS is where Microsoft is turning these upstream ideas into a managed customer experience. The service now ties together DRA, Cilium mTLS, observability improvements, cluster lifecycle automation, and cross-cluster networking. The result is not just a longer feature list, but a more coherent platform identity: AKS as the place where cloud-native infrastructure becomes AI-native.

One important piece is Azure Kubernetes Fleet Manager, which now offers cross-cluster networking through managed Cilium Cluster Mesh with unified service registry and intelligent routing. That capability matters for organizations running fleets of clusters across regions or environments, because it reduces the burden of stitching together service connectivity manually. In multi-region AI deployments, those details quickly become make-or-break operational concerns.
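
A unified service registry with local-first routing can be modeled in a few lines. This is a toy sketch, not the implementation in Fleet Manager or Cilium. A service name maps to endpoints in several clusters. A caller gets a healthy endpoint in its own cluster when one exists and otherwise falls back to any healthy remote endpoint. That roughly mirrors the local-affinity option Cilium Cluster Mesh documents for global services. Cluster names and addresses are hypothetical.

```python
import random
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Endpoint:
    cluster: str
    address: str
    healthy: bool = True


class ServiceRegistry:
    """Toy fleet-wide registry: one service name, endpoints in many clusters."""

    def __init__(self) -> None:
        self._endpoints: dict[str, list[Endpoint]] = {}

    def register(self, service: str, endpoint: Endpoint) -> None:
        self._endpoints.setdefault(service, []).append(endpoint)

    def pick(self, service: str, local_cluster: str,
             rng: Optional[random.Random] = None) -> Endpoint:
        """Prefer healthy endpoints in the caller's cluster; fail over to remote ones."""
        healthy = [e for e in self._endpoints.get(service, []) if e.healthy]
        if not healthy:
            raise LookupError(f"no healthy endpoints for {service!r}")
        local = [e for e in healthy if e.cluster == local_cluster]
        return (rng or random).choice(local or healthy)


registry = ServiceRegistry()
registry.register("embeddings", Endpoint("westeurope", "10.0.0.5"))
registry.register("embeddings", Endpoint("northeurope", "10.1.0.7"))
print(registry.pick("embeddings", "westeurope").address)  # 10.0.0.5
```

A real mesh also weighs latency, capacity, and health-check freshness. The sketch keeps only the local-first, fail-over-remote ordering.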

Cluster lifecycle gets smarter

Microsoft also pointed to blue-green agent pool upgrades and agent pool rollback, both of which are practical but highly consequential operational enhancements. Blue-green upgrades reduce risk by introducing a parallel pool rather than mutating production nodes in place, while rollback gives teams a cleaner escape hatch when a version turns out to be problematic. These are the kinds of features that platform teams appreciate most after the second or third outage.

The release of AKS desktop as a general-availability local development environment is another quiet but useful move. Matching production configuration locally has always been difficult, and anything that reduces the “works on my laptop, fails in the cluster” problem is a win. Microsoft is clearly trying to make the developer experience feel more continuous from workstation to cloud.

- Fleet Manager helps unify multi-cluster operation.

- Blue-green upgrades reduce deployment risk.

- Rollback support improves recovery options.

- AKS desktop narrows the local-production gap.

- Shared storage options simplify stateful workloads.
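
The blue-green and rollback pattern is simple to express as code. This is a hypothetical in-memory model, not the AKS API. AKS orchestrates the real steps itself, such as provisioning nodes, cordoning, and draining pods. What matters is the ordering: the old pool stays intact until the new one passes a health check, which is what makes rollback cheap.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Pool:
    name: str
    version: str
    schedulable: bool = True


@dataclass
class Cluster:
    pools: dict[str, Pool] = field(default_factory=dict)
    log: list[str] = field(default_factory=list)


def blue_green_upgrade(cluster: Cluster, blue: str, target: str,
                       healthy: Callable[[Pool], bool]) -> str:
    """Upgrade via a parallel pool; return the name of the pool left serving.

    `healthy` stands in for real validation such as readiness probes,
    error rates, or GPU health checks.
    """
    green = f"{blue}-green"
    cluster.pools[green] = Pool(green, target)        # 1. add a new pool
    cluster.log.append(f"create {green} at {target}")
    cluster.pools[blue].schedulable = False           # 2. shift work off blue
    cluster.log.append(f"cordon and drain {blue}")
    if healthy(cluster.pools[green]):                 # 3. promote green
        del cluster.pools[blue]
        cluster.log.append(f"delete {blue}")
        return green
    # Rollback: blue was only cordoned, never modified, so re-enable it.
    cluster.pools[blue].schedulable = True
    del cluster.pools[green]
    cluster.log.append(f"rollback: uncordon {blue}, delete {green}")
    return blue


c = Cluster({"gpupool": Pool("gpupool", "1.33")})
print(blue_green_upgrade(c, "gpupool", "1.34", healthy=lambda p: False))  # gpupool
print(c.log[-1])  # rollback: uncordon gpupool, delete gpupool-green
```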

Storage and scale in the real world

The mention of shared Elastic SAN for AKS is easy to overlook, but it fits the same pattern. Stateful AI-adjacent services and supporting application layers still need storage that is manageable at scale, and reducing per-workload disk sprawl can lower both administrative overhead and provisioning time. In a platform stack this broad, small simplifications compound.

What emerges is a strategy that aims to make AKS feel like a comprehensive operational environment rather than a generic managed Kubernetes option. That is a competitive play against both the raw flexibility of DIY Kubernetes and the opinionated simplicity of alternative managed platforms. Microsoft is betting that the middle ground (managed, integrated, but still open-source aligned) will win the largest share of enterprise AI deployments.

Enterprise Impact Versus Developer Experience

For enterprises, the value proposition is obvious: less fragmentation, more standardization, and better cost control. DRA reduces lock-in, AI Runway simplifies inference planning, Cilium mTLS removes some network overhead, and the CNCF contributions support a broader open governance story. That combination is especially appealing to platform teams that are already tired of stitching together one-off solutions around GPUs.

For developers and ML engineers, the appeal is more immediate and more tactile. A model catalog with memory fit hints and cost estimates is much easier to use than a pile of low-level cluster objects. The same applies to routing, telemetry, and diagnostics tools that expose the right information without forcing every user to become a Kubernetes specialist overnight.

Two audiences, two benefits

That split matters because enterprise buyers and individual practitioners often want different things. Enterprises want governance, portability, and compliance; builders want speed, clarity, and less setup friction. Microsoft’s announcement is notable because it tries to serve both audiences without making one look like an afterthought.

The risk, of course, is that the stack becomes broad enough to intimidate smaller teams. An all-in-one platform can be powerful, but it can also feel like a lot to absorb at once. Microsoft will need to prove that the pieces can be adopted incrementally, not only as part of a full AKS-centric strategy.

- Enterprises gain standardization and policy control.

- Developers get simpler model and runtime choices.

- Platform teams benefit from better telemetry.

- Ops teams get safer upgrade and rollback paths.

- Security teams get stronger identity and provenance tools.

Competitive Implications for Google, AWS, and the CNCF Ecosystem

The competitive read is straightforward: Microsoft is trying to make Kubernetes the neutral layer where AI infrastructure becomes portable, but it is also trying to make AKS the best integrated expression of that neutrality. That puts pressure on cloud rivals to either match the upstream-first strategy or differentiate through deeper specialization.

Google’s parallel work, including upstream contributions around autoscaling and inference routing, suggests that the market is converging on similar conclusions from different directions. AWS is likely to respond in its own way, but the broader signal is that no major cloud vendor can afford to treat GPU orchestration as a secondary concern anymore. If AI inference is now a mainstream production workload, then the control plane needs to understand it natively.

Open standards as the battleground

This is where the CNCF becomes strategically important. The more the industry standardizes around upstream APIs and shared projects, the less room there is for cloud-specific scheduling secrets to define market power. That is good news for customers, but it also means vendors have to compete harder on execution quality, ecosystem breadth, and operational polish.

Microsoft seems comfortable with that contest. By contributing upstream while packaging the result inside AKS, it can say it supports portability without surrendering differentiation. That is a clever position, and it may prove especially effective with large enterprises that want a credible open-source story without giving up the convenience of a managed platform.

- Google is pushing modular upstream building blocks.

- Microsoft is pairing upstream standards with managed integration.

- AWS will need to defend its own AI orchestration story.

- CNCF projects are becoming strategic infrastructure, not side quests.

- Customers gain leverage as standards mature.

Strengths and Opportunities

Microsoft’s KubeCon package has several strengths that go beyond the novelty of the individual announcements. The most compelling opportunity is that the company is addressing the full lifecycle of AI infrastructure: from GPU scheduling to inference serving, from networking to troubleshooting, and from build provenance to cluster operations. That breadth makes the story feel less like a feature dump and more like a platform strategy.

- Standardization of GPU scheduling through DRA can reduce lock-in.

- AI Runway can lower the barrier to inference deployment.

- Cilium mTLS offers better security with less overhead.

- HolmesGPT could reduce operational toil in complex environments.

- Dalec strengthens supply-chain trust and reproducibility.

- AKS telemetry improvements can improve capacity efficiency.

- Fleet-level networking helps multi-cluster and multi-region deployments.

Risks and Concerns

The biggest risk is complexity. Even if Microsoft has assembled a coherent platform vision, the number of moving parts is still substantial, and some customers will struggle to understand where DRA ends and AI Runway begins, or how AKS-specific capabilities map back to upstream projects. A platform that promises simplicity can lose trust quickly if it feels too layered or too dependent on Microsoft-curated defaults.

- Adoption friction if teams cannot integrate incrementally.

- Perceived lock-in if the AKS experience feels too bundled.

- Operational learning curve for teams new to DRA and eBPF networking.

- Tool sprawl if telemetry and inference layers are not well integrated.

- Project maturity risk for newer CNCF Sandbox contributions.

- Implementation variability across clouds and runtimes.

- Security configuration complexity if identity and policy are misaligned.

Looking Ahead

The next phase will be about proof, not presentation. Microsoft has laid down a credible architecture for Kubernetes-based AI operations, but the market will now test whether DRA, AI Runway, Cilium mTLS, and the broader AKS stack actually reduce friction in production. If the answer is yes, the company may have set a template for how enterprise AI infrastructure should be built in the next several years.

The most important question is whether this vision remains portable. If the shared primitives continue to mature upstream, customers can adopt them with confidence and retain bargaining power across clouds. If, however, the best experience only exists inside a tightly integrated Microsoft environment, the portability story will weaken and competitors will have a clearer opening.

- Watch DRA adoption in Kubernetes 1.36 and beyond.

- Track AI Runway’s runtime support as it expands.

- Monitor AKS preview feedback on Cilium mTLS and sidecarless security.

- See whether HolmesGPT and Dalec gain real CNCF momentum.

- Compare Microsoft’s integration model with Google’s modular approach.
