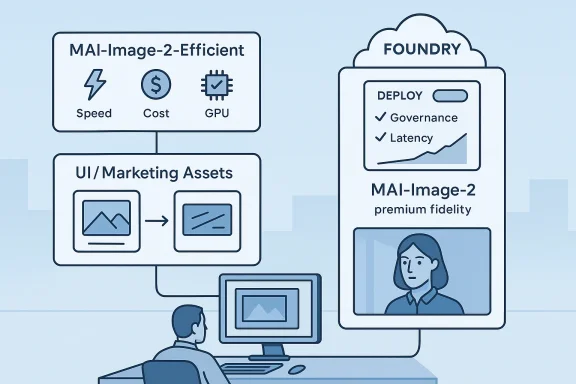

Microsoft’s launch of MAI-Image-2-Efficient signals a clear shift in how the company wants enterprises to think about image generation: not as a single premium model for every job, but as a tiered production stack where speed, cost, and output quality can be matched to the workload. The new model is positioned as the faster, leaner workhorse for commerce, marketing, UI concepts, and branded assets, while MAI-Image-2 remains the higher-fidelity option for demanding visuals. In practical terms, Microsoft is not just releasing another image generator; it is formalizing a two-model strategy that looks designed to win budget-conscious, high-throughput customers. (techcommunity.microsoft.com)

There is also a bigger strategic question about where Microsoft places the model in its flagship products. If MAI-Image-2-Efficient becomes the default for conversational or lightweight image generation, while MAI-Image-2 stays reserved for premium renderings, the company could establish a very efficient internal routing layer that users never see but businesses depend on heavily. That would be a meaningful competitive advantage because it blends user experience with infrastructure economics. (techcommunity.microsoft.com)

Most importantly, Microsoft has signaled that more announcements are coming. The company explicitly teased further developments around Microsoft Build 2026, which suggests the MAI stack is still early in its commercial arc. If Microsoft can keep pairing model quality with platform controls and credible economics, it may become one of the more formidable enterprise AI image providers in the market. (techcommunity.microsoft.com)

Source: TestingCatalog Microsoft launches faster MAI-Image-2-Efficient for business

Background

Microsoft’s image-model push has accelerated quickly in 2026, and the pace matters because the company is building this stack in public, in stages, and in tight sequence. Just a week before the efficient variant arrived, Microsoft previewed MAI-Image-2, MAI-Voice-1, and MAI-Transcribe-1 as first-party models in Foundry, framing them as a multimedia suite for developers. The sequencing shows that the company no longer treats image generation as a side feature; it is part of a broader first-party AI platform meant to live inside Microsoft Foundry and, by extension, Azure’s enterprise ecosystem. (techcommunity.microsoft.com)

The official launch note says MAI-Image-2-Efficient is built on the same architecture as MAI-Image-2 and is available in public preview in Microsoft Foundry and the MAI Playground. Microsoft says it is up to 22% faster than MAI-Image-2 and 4x more efficient when normalized by latency and GPU usage. It also says the model outpaces leading text-to-image models by 40% on average in Microsoft’s internal tests. These are not subtle claims; they are a direct attempt to reframe competition among image models around throughput per GPU, not only visual polish. (techcommunity.microsoft.com)

The pricing also gives the launch strategic weight. Microsoft says the new model starts at $5 per 1 million text input tokens and $19.50 per 1 million image output tokens. That is well below premium image-generation pricing and consistent with the company’s pitch that this model is meant for large-scale production flows. It suggests Microsoft is trying to make the economics of image generation more predictable for teams that need to create thousands of assets rather than a few prized hero images. (techcommunity.microsoft.com)
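
The sketch below puts the per-token pricing in concrete terms by estimating the token cost of a batch at the published starting prices. It is a minimal illustration, not an official calculator. The per-image token counts in the example are hypothetical, because the launch note quoted here does not say how many image output tokens a single render consumes. Actual billing may also involve other meters.

```python
# Published starting prices for MAI-Image-2-Efficient, in USD per 1 million tokens.
TEXT_INPUT_USD_PER_M = 5.00
IMAGE_OUTPUT_USD_PER_M = 19.50


def estimate_cost_usd(text_input_tokens: int, image_output_tokens: int) -> float:
    """Estimate the token cost of a workload at the published starting prices."""
    if text_input_tokens < 0 or image_output_tokens < 0:
        raise ValueError("token counts must be non-negative")
    text_cost = text_input_tokens / 1_000_000 * TEXT_INPUT_USD_PER_M
    image_cost = image_output_tokens / 1_000_000 * IMAGE_OUTPUT_USD_PER_M
    return round(text_cost + image_cost, 4)


if __name__ == "__main__":
    # Hypothetical workload: 10,000 images, assuming 200 prompt tokens
    # and 4,000 image output tokens per image (illustrative numbers only).
    n_images = 10_000
    print(estimate_cost_usd(200 * n_images, 4_000 * n_images))
```

With those hypothetical counts, 10,000 images come to about $790, and almost all of that comes from image output tokens. The output-token rate is therefore the figure to watch for volume workloads.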

This also fits Microsoft Foundry’s broader product direction. The Foundry model catalog is designed to give customers a large selection of models, with direct Azure models receiving the kind of integration, support, and enterprise controls that large organizations want. Microsoft’s documentation emphasizes security, scalability, responsible AI review, content safety, and deployment flexibility, all of which make it easier for procurement teams and platform owners to justify adopting a first-party model family instead of stitching together third-party tools. (learn.microsoft.com)

Why the timing matters

The release lands while Microsoft is trying to establish momentum around its in-house AI brand. A variant that arrives so soon after the flagship launch can be read as incremental engineering, but commercially it functions more like a message: Microsoft intends to ship fast-follow variants that match specific enterprise needs before rivals can lock in the market. That is especially important in image generation, where buyers often compare not just model quality but rendering speed, cost, and ease of integration into existing workflows. (techcommunity.microsoft.com)

What Microsoft actually launched

At its core, MAI-Image-2-Efficient is a text-to-image model meant for production-scale use. Microsoft says it supports a 32,000-token context window, PNG output, and English-language prompts. Width and height are configurable, with a minimum size of 768×768 and a maximum pixel budget equivalent to 1024×1024. Those limits are a clue to the product philosophy: this is not being presented as a sprawling, multi-format creative suite, but as a focused generation engine for workflows that need reliable, manageable outputs. (techcommunity.microsoft.com)

The company also says the model is intended for three kinds of work: real-time and conversational workflows, short-form text rendering such as labels and headlines, and batch pipelines where compute cost is important. That positioning is significant because it acknowledges a reality many enterprises already know: not every image task needs the finest photorealistic nuance. Sometimes the real business value lies in generation that is fast enough, at a volume the finance team can tolerate. (techcommunity.microsoft.com)
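
These size limits can be enforced before a request ever reaches the model. The sketch assumes that "minimum size of 768×768" means each side must be at least 768 pixels. It also assumes that the 1024×1024 budget caps the total pixel count (width × height). Both readings are assumptions, and the model documentation should be checked for exact rules, such as any required multiples.

```python
from typing import Tuple

MIN_SIDE = 768            # assumed: each side must be at least 768 px
MAX_PIXELS = 1024 * 1024  # assumed: total pixel budget equal to 1024x1024


def check_size(width: int, height: int) -> Tuple[bool, str]:
    """Return (ok, reason) for a requested output size under the assumed limits."""
    if width < MIN_SIDE or height < MIN_SIDE:
        return False, f"each side must be at least {MIN_SIDE}px"
    if width * height > MAX_PIXELS:
        return False, f"{width * height} px exceeds the {MAX_PIXELS} px budget"
    return True, "ok"


if __name__ == "__main__":
    for size in [(1024, 1024), (768, 1365), (1280, 1024), (512, 512)]:
        print(size, check_size(*size))
```

Under these assumptions a wide 768×1365 frame fits the budget, while 1280×1024 does not.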

Microsoft’s blog is also careful to distinguish the two models in the family. MAI-Image-2-Efficient is recommended when latency and scale matter, while MAI-Image-2 is the better fit for precise text rendering, deep photorealism, and smoother contrast. In other words, Microsoft is separating the stack by job to be done, not just by price. That is a classic enterprise software move, and it reduces the pressure to claim that one model can do everything perfectly. (techcommunity.microsoft.com)
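
The job-to-be-done split maps naturally onto a simple routing rule. This is a hypothetical policy, not Microsoft's routing logic. The `ImageJob` fields and the deployment-name strings are illustrative. Keeping latency-sensitive jobs on the efficient tier even when quality matters is a design choice each team would tune for itself.

```python
from dataclasses import dataclass

# Illustrative deployment names; real names depend on how the models are deployed.
EFFICIENT = "MAI-Image-2-Efficient"
FLAGSHIP = "MAI-Image-2"


@dataclass
class ImageJob:
    needs_precise_text: bool = False       # exact typography beyond short labels or headlines
    needs_deep_photorealism: bool = False  # subtle contrast and lighting
    latency_sensitive: bool = False        # e.g. chat turns or interactive previews


def pick_model(job: ImageJob) -> str:
    """Route a job to one of the two tiers described in the launch post."""
    quality_critical = job.needs_precise_text or job.needs_deep_photorealism
    # Quality-critical work goes to the flagship unless a user is waiting on the result.
    if quality_critical and not job.latency_sensitive:
        return FLAGSHIP
    # Everything else defaults to the faster, cheaper tier.
    return EFFICIENT


if __name__ == "__main__":
    print(pick_model(ImageJob()))
    print(pick_model(ImageJob(needs_precise_text=True)))
    print(pick_model(ImageJob(needs_deep_photorealism=True, latency_sensitive=True)))
```

A latency-sensitive but quality-critical job could receive a quick draft from the efficient tier and a final render from the flagship, following a preview-then-final pattern.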

Model identity and deployment

Microsoft says the efficient variant is now available in public preview in Microsoft Foundry and the MAI Playground, which immediately gives developers something to test inside the company’s own governance model. That matters because the Foundry layer is not merely a hosting wrapper; it is the mechanism through which Microsoft presents its models as enterprise-ready, supported, and policy-aware. (techcommunity.microsoft.com)

Key product facts stand out:

- Public preview is live in Foundry and the MAI Playground. (techcommunity.microsoft.com)

- The model uses the same architecture as MAI-Image-2. (techcommunity.microsoft.com)

- Microsoft says it is tuned for high-volume workflows and interactive experiences. (techcommunity.microsoft.com)

- The new model is priced lower and aims to reduce GPU cost. (techcommunity.microsoft.com)

- It is designed with sharpness and defined lines in mind, not only soft photorealism. (techcommunity.microsoft.com)

The efficiency story

The headline number is not just that the model is faster; it is that Microsoft is framing efficiency in terms of latency plus GPU usage. That is a much more enterprise-relevant metric than raw benchmark bragging because it maps directly to throughput economics and capacity planning. A model that is only marginally better on aesthetic quality but dramatically better on operational cost can be far more attractive to a large deployment team. (techcommunity.microsoft.com)

Microsoft says the model is up to 22% faster than MAI-Image-2 and 4x more efficient when normalized by latency and GPU usage, based on testing on April 13, 2026. The blog also states that the comparison used an NVIDIA H100 at 1024×1024 with optimized batch sizes and matched latency targets. That detail is important because it shows the company is not making a generic “it’s faster” claim; it is describing a specific throughput scenario that matters to cloud operators. (techcommunity.microsoft.com)
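
A per-request speedup and a per-GPU efficiency ratio can differ substantially. At a matched latency target, efficiency also depends on how many images fit in each batch. The sketch below computes images per GPU-hour from batch size and batch latency. The numbers in the example are invented for illustration and are not Microsoft's measurements.

```python
def images_per_gpu_hour(batch_size: int, batch_latency_s: float, gpus: int = 1) -> float:
    """Images produced per GPU per hour when batches run back to back."""
    if batch_size <= 0 or batch_latency_s <= 0 or gpus <= 0:
        raise ValueError("batch_size, batch_latency_s and gpus must be positive")
    return batch_size * 3600 / batch_latency_s / gpus


def efficiency_ratio(candidate_per_hour: float, baseline_per_hour: float) -> float:
    """How many times more images the candidate produces per GPU-hour."""
    return candidate_per_hour / baseline_per_hour


if __name__ == "__main__":
    # Hypothetical: both models meet the same 10 s latency target on one GPU,
    # but the candidate fits a batch of 16 where the baseline fits 4.
    baseline = images_per_gpu_hour(batch_size=4, batch_latency_s=10.0)
    candidate = images_per_gpu_hour(batch_size=16, batch_latency_s=10.0)
    print(baseline, candidate, efficiency_ratio(candidate, baseline))
```

In this made-up case, per-request latency is identical, yet throughput per GPU differs fourfold. This is why benchmark conditions such as batch size and latency target matter as much as the headline figure.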

The comparison to other leading systems is even more telling. Microsoft says MAI-Image-2-Efficient outpaces leading text-to-image models by 40% on average, with comparisons that included Gemini 3.1 Flash variants and GPT-Image-1.5-High under defined measurement conditions. As always, benchmark framing matters as much as the result, because changing prompt sets, reasoning modes, or latency targets can shift outcomes. Still, Microsoft clearly wants buyers to see the model as a performance-per-dollar play, not just a quality upgrade. (techcommunity.microsoft.com)

What efficiency means in practice

For enterprises, efficiency is not an abstract score. It determines how many assets can be generated per minute, how many users can be served in parallel, and how much room a team has to expand usage before budgets start to break. A model like this is especially compelling when image generation sits inside a larger workflow, such as dynamic ads, product mockups, or creative copilots. (techcommunity.microsoft.com)

The practical benefits include:

- More images per GPU hour.

- Lower marginal cost for A/B testing creative variations.

- Better feasibility for conversational image generation.

- Reduced latency in design and commerce tools.

- More room for batch jobs without scaling infrastructure aggressively.

How it changes the MAI image stack

The introduction of MAI-Image-2-Efficient creates a simple but powerful two-tier model stack. One tier now emphasizes throughput and cost control, while the original MAI-Image-2 occupies the premium lane for nuanced photorealism and more demanding text rendering. That separation gives Microsoft a more mature product portfolio and helps avoid the common trap where one model is forced to carry every creative use case at once. (techcommunity.microsoft.com)

This is a smart platform move because different workloads really do have different quality thresholds. A marketing team making social graphics may care more about speed and batch size than subtle shadow gradients, while a product rendering team may need the original model’s smoother contrast and text fidelity. By acknowledging this split, Microsoft is aligning the product family with actual enterprise behavior rather than an idealized one-model-fits-all story. (techcommunity.microsoft.com)

The blog also notes that the two models have distinct visual signatures. The efficient model produces sharper, more defined lines, making it strong for illustration, animation, and attention-grabbing photoreal content. The flagship model produces smoother, more nuanced contrast, which Microsoft says is better for deeper photorealism and subtlety. That distinction may sound cosmetic, but in creative workflows it can determine whether a model is chosen for prototyping, production, or both. (techcommunity.microsoft.com)

A two-model strategy is a platform strategy

This kind of product layering is a classic cloud strategy because it creates internal upsell paths and use-case segmentation. Microsoft can steer some customers toward the efficient model for most workloads while keeping the flagship model available when polish matters more than cost. That is a tidy way to preserve premium positioning without overloading the highest-end model with every routine request. (techcommunity.microsoft.com)

It also strengthens the Foundry catalog narrative. Microsoft’s model catalog already includes a broad range of first-party and partner models, but MAI gives Microsoft something more valuable: a branded family it can shape around enterprise demand. The more the company can anchor customers in that family, the more the platform starts to look like a long-term operating layer rather than a temporary model marketplace. (learn.microsoft.com)

Enterprise use cases

Microsoft is openly targeting business workflows rather than consumer novelty. The blog names high-volume production, real-time conversational experiences, and rapid prototyping as the core scenarios where MAI-Image-2-Efficient should shine. That focus is unsurprising, but it is strategically important because enterprise buyers often care less about headline sample images and more about how a model behaves when embedded in a pipeline. (techcommunity.microsoft.com)

The clearest fit is commerce. E-commerce platforms need product banners, ad creatives, lifestyle variations, seasonal assets, and localized visuals at scale, often under aggressive deadlines. A model that can reduce latency and GPU consumption while remaining visually competitive could become a backend staple for merchant tooling, especially if it is paired with policy controls and deployment governance already present in Foundry. (techcommunity.microsoft.com)

Marketing teams are another obvious audience. They often need rapid concept exploration rather than museum-grade final art, which makes the new model’s speed and lower price point especially appealing. If a creative team can generate dozens of prompt variations quickly, the business value is not just saving money; it is shortening the time from idea to approved campaign. (techcommunity.microsoft.com)

Where enterprises may see the most value

The model’s strongest enterprise uses are likely to cluster around operational creativity rather than fine art. That means workflows where image generation is one step inside a larger system, not the system itself. In those scenarios, latency is product quality because slow generation breaks the experience. (techcommunity.microsoft.com)

High-value enterprise scenarios include:

- E-commerce product and ad imagery.

- Marketing automation with large variant sets.

- Creative copilot experiences in design tools.

- UI concepting for product teams.

- Batch asset generation for localizable campaigns.
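
Variant sets grow multiplicatively, which is what makes per-image cost decisive for marketing automation. The following minimal sketch expands a prompt template over every combination of a few creative axes. The template and axis values are hypothetical, and the headline text stays in English because the model is described as English-language.

```python
from itertools import product
from typing import List


def build_variant_prompts(template: str, **axes: List[str]) -> List[str]:
    """Expand a prompt template over every combination of the given axes."""
    names = list(axes)
    prompts = []
    # itertools.product yields one tuple per combination, in axis order.
    for combo in product(*(axes[name] for name in names)):
        prompts.append(template.format(**dict(zip(names, combo))))
    return prompts


if __name__ == "__main__":
    prompts = build_variant_prompts(
        "Ad banner for {item}, {season} setting, {style} look, headline text: 'New arrivals'",
        item=["running shoes", "rain jacket", "backpack"],
        season=["spring", "autumn"],
        style=["flat illustration", "studio photo"],
    )
    print(len(prompts))
    print(prompts[0])
```

Three items, two seasons, and two styles already yield 12 prompts. Adding a fourth axis with five values would raise that to 60.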

Consumer and creator impact

Although Microsoft is framing the release for builders, the knock-on effect will likely be felt by consumer-facing products too. The company says MAI-Image-2 has begun rolling out across Copilot, with phased expansion into Bing and PowerPoint. That means improvements in the model family may eventually surface in tools that ordinary users already know, even if they never touch Foundry directly.

For creators, the most visible change may be responsiveness. If a model can generate acceptable visuals faster, then iterative ideation becomes more natural and less disruptive. That matters in consumer tools because people are far more likely to experiment when results appear immediately, or at least quickly enough to preserve the flow of thought. (techcommunity.microsoft.com)

There is also a subtle UX implication here: Microsoft may increasingly use the efficient model for background or conversational generation, while keeping the higher-fidelity model for premium moments. That split mirrors how software often separates preview-quality outputs from final renders. It could lead to a more responsive feel across Copilot-style experiences without sacrificing the ability to deliver polished visuals when needed. (techcommunity.microsoft.com)

The consumer experience may become less “art demo” and more “workflow utility”

That shift is important because it changes expectations. Consumer AI image generation often gets marketed through wow-factor demos, but repeated everyday use depends on speed, reliability, and predictable output. A model built for production efficiency can quietly improve the whole experience by making generation feel instant enough to be habitual rather than special. (techcommunity.microsoft.com)

It also helps Microsoft differentiate surface-level access from back-end infrastructure. Users may only notice that Copilot feels quicker or more consistent, while the real architectural change happens inside Foundry and Azure. That is a classic enterprise-cloud advantage: the value is visible in the app, but the leverage comes from the platform underneath. (learn.microsoft.com)

Positioning against rivals

Microsoft’s launch arrives in a crowded market where Google, OpenAI, and other image-model vendors are all competing on quality, latency, and integration. The company’s announcement explicitly references comparisons to Gemini-based and GPT-based offerings in its latency testing, which indicates that Microsoft wants the market to think of MAI-Image-2-Efficient as a credible rival in mainstream production scenarios, not a niche internal model. (techcommunity.microsoft.com)

That matters because the image-model race is no longer only about who can generate the most beautiful single image. It is increasingly about who can deliver the best blend of speed, controllability, governance, and cost inside a cloud ecosystem. Microsoft’s advantage is that it can pair the model with Foundry, Azure billing, enterprise identity, and content-safety tooling in one environment. (learn.microsoft.com)

The company is also benefiting from a broader platform narrative. Microsoft says Foundry’s model catalog offers over 1,900 models, with direct Azure models, partner models, performance leaderboards, benchmark metrics, and deployment options. That breadth can make the MAI family feel less like a standalone model release and more like part of a mature AI procurement story. Buyers do not just buy the model; they buy the platform that makes the model governable. (learn.microsoft.com)

Why the rivalry is about more than raw quality

This is where Microsoft may have an advantage that pure-play model vendors do not always enjoy. Enterprise customers often care about the boring but decisive stuff: access controls, region availability, billing predictability, and security posture. By embedding MAI inside Foundry, Microsoft is effectively competing on the whole operational stack rather than the model alone. (learn.microsoft.com)

Competitive takeaways:

- Speed is now a first-class marketing claim.

- Cost efficiency is a core differentiator, not an afterthought.

- Foundry integration gives Microsoft a distribution edge.

- Model choice lets Microsoft cover both premium and volume use cases.

- Enterprise controls remain a major selling point versus standalone APIs.

Availability, regions, and governance

Microsoft says the new model is available now in Microsoft Foundry and the MAI Playground, though the playground remains limited to select markets, including the US, with EU countries coming later. The company also lists regional availability for the model in West Central US, East US, West US, West Europe, Sweden Central, and South India. That region list matters because enterprise teams often need to plan around latency, residency expectations, and local deployment constraints. (techcommunity.microsoft.com)

The Foundry model catalog documentation helps explain why Microsoft keeps emphasizing these details. Models sold directly by Azure are described as deeply integrated into Azure’s ecosystem, with enterprise-grade SLAs, support, responsible AI review, and transparent documentation. The same documentation also emphasizes content safety, deployment types, and the importance of selecting appropriate models for each use case. (learn.microsoft.com)
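
The region list can be encoded as a simple planning check. In this sketch, the Azure region identifiers correspond to the regions named in the launch post. Grouping them into geographies (West Europe and Sweden Central as EU, South India as India) is a simplification for illustration. Real residency requirements need compliance review, and availability may change during the preview.

```python
from typing import List, Sequence, Set

# Regions named in the launch post, keyed by Azure region identifier,
# with a simplified geography label (an assumption for planning purposes).
LISTED_REGIONS = {
    "westcentralus": "US",
    "eastus": "US",
    "westus": "US",
    "westeurope": "EU",
    "swedencentral": "EU",
    "southindia": "IN",
}


def candidate_regions(allowed_geos: Set[str], preferred: Sequence[str] = ()) -> List[str]:
    """List regions whose geography is allowed, with preferred regions first."""
    matches = [region for region, geo in LISTED_REGIONS.items() if geo in allowed_geos]

    def rank(region: str) -> int:
        # Preferred regions keep their given order; the rest follow in listed order.
        return preferred.index(region) if region in preferred else len(preferred)

    return sorted(matches, key=rank)


if __name__ == "__main__":
    print(candidate_regions({"EU"}))
    print(candidate_regions({"EU"}, preferred=["swedencentral"]))
```

For example, an EU-only deployment would be limited to `westeurope` and `swedencentral`. A team that prefers Sweden Central can pass it as the first preference.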

That governance layer gives Microsoft something of a moat. A customer evaluating MAI-Image-2-Efficient is not just buying a faster generator; they are buying into an operational environment with Microsoft support, network controls, and policy tools that can be aligned with enterprise compliance processes. In many organizations, that is the difference between an experimental demo and a model that makes it into production. (learn.microsoft.com)

What the Foundry context adds

Foundry is doing a lot of quiet work here. It turns model access into a standardized procurement and deployment story, which reduces the friction of experimentation and the fear of hidden platform risk. In an era where AI adoption can stall over governance concerns, that kind of integration is more valuable than it may look at first glance. (learn.microsoft.com)

Key governance advantages include:

- Enterprise support from Microsoft.

- Model transparency and documentation.

- Content safety integration for supported deployments.

- Region-based availability planning.

- Billing clarity via pay-per-token or compute-based deployment models.

Why the pricing matters

Pricing often sounds like a footnote in AI launch coverage, but here it is one of the most important parts of the story. Microsoft says MAI-Image-2-Efficient starts at $5 per 1 million text input tokens and $19.50 per 1 million image output tokens, which is positioned as roughly 41% lower than the flagship model’s pricing. That is a direct invitation for enterprises to route volume workloads to the cheaper tier. (techcommunity.microsoft.com)

This matters because image generation economics can be deceptively harsh. When a team needs thousands of iterations for ads, catalogs, layout concepts, or localization variants, even moderate per-image costs can balloon quickly. A model that reduces the unit economics while preserving acceptable creative quality can change whether a project is viable at all. (techcommunity.microsoft.com)
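
The routing incentive can be quantified. Suppose the efficient tier costs about 41% less than the flagship for the same job. That is an assumption, since token consumption per image may differ between the two models. Under it, the blended bill relative to an all-flagship setup falls linearly with the share of traffic routed to the cheaper tier.

```python
EFFICIENT_DISCOUNT = 0.41  # "roughly 41% lower" than the flagship, per the pricing claim


def blended_cost_ratio(share_on_efficient: float, discount: float = EFFICIENT_DISCOUNT) -> float:
    """Cost of a mixed workload relative to sending every request to the flagship.

    Assumes each request costs the same on both tiers apart from the price discount.
    """
    if not 0.0 <= share_on_efficient <= 1.0:
        raise ValueError("share_on_efficient must be between 0 and 1")
    return 1.0 - share_on_efficient * discount


if __name__ == "__main__":
    for share in (0.0, 0.5, 0.8, 1.0):
        print(f"{share:.0%} on efficient tier -> {blended_cost_ratio(share):.1%} of flagship cost")
```

Under that assumption, routing 80% of traffic to the efficient tier brings the bill to about two-thirds of the all-flagship cost.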

The pricing also reinforces Microsoft’s message that the model is a production tool. If a model is being marketed with high-level artistic language but priced like an expensive premium asset, customers may hesitate to use it broadly. By contrast, MAI-Image-2-Efficient is being priced to encourage routine use. That is usually a sign that a vendor wants to become embedded in a workflow, not merely admired in a demo. (techcommunity.microsoft.com)

A lower price can also reshape internal buying behavior

Inside enterprises, the signal sent by a lower sticker price can matter as much as the absolute savings. Once a model becomes cheap enough for experimentation, teams are more likely to test it in product prototypes, internal tools, and pilot workflows. That can create a compounding effect where usage expands because it is no longer gated by budget anxiety. (techcommunity.microsoft.com)

That said, cheaper does not mean free, and usage management still matters. Organizations that let generative workloads scale unchecked can discover that "efficient" models still become expensive at high volume. Microsoft's pricing pitch is therefore both an opportunity and a warning: cost discipline remains a design requirement, not a post-launch cleanup task. (techcommunity.microsoft.com)
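One way to treat cost discipline as a design requirement is to put a spend check in front of the generation call. The Python sketch below is a hypothetical in-process guard, not a Foundry or Azure feature: it prices each request with the published starting rates and refuses work once an assumed budget would be exceeded. Production systems would more likely lean on Azure's own budgeting and quota tooling, with a check like this as an application-level backstop.

```python
class SpendGuard:
    """Hypothetical application-level budget guard for image generation.

    Prices requests with MAI-Image-2-Efficient's published starting rates
    (USD per 1M tokens) and rejects any request that would push estimated
    spend past the budget.
    """

    def __init__(
        self,
        budget_usd: float,
        text_input_usd_per_m: float = 5.00,
        image_output_usd_per_m: float = 19.50,
    ) -> None:
        self.budget_usd = budget_usd
        self.text_input_usd_per_m = text_input_usd_per_m
        self.image_output_usd_per_m = image_output_usd_per_m
        self.spent_usd = 0.0

    def request_cost(self, input_tokens: int, output_tokens: int) -> float:
        """Estimated USD cost of one request on both token meters."""
        return (
            input_tokens * self.text_input_usd_per_m
            + output_tokens * self.image_output_usd_per_m
        ) / 1_000_000

    def try_charge(self, input_tokens: int, output_tokens: int) -> bool:
        """Record the request and return True only if it fits the budget."""
        cost = self.request_cost(input_tokens, output_tokens)
        if self.spent_usd + cost > self.budget_usd:
            return False  # caller should skip, queue, or escalate the job
        self.spent_usd += cost
        return True


if __name__ == "__main__":
    guard = SpendGuard(budget_usd=0.10)
    # Ten hypothetical requests of 200 input and 1,000 output tokens each
    # (about $0.0205 apiece), so only four fit under the $0.10 budget.
    accepted = sum(guard.try_charge(200, 1_000) for _ in range(10))
    print(accepted, round(guard.spent_usd, 4))
```

Rejecting before charging keeps the recorded spend at or below the budget; whether a rejected job is dropped, queued, or escalated is a policy choice left to the caller.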

Strengths and Opportunities

Microsoft has built MAI-Image-2-Efficient around a very practical enterprise thesis: most image workloads do not need the absolute best visual polish every time, but they do need speed, cost control, and dependable output. That makes the launch commercially sensible and strategically coherent, especially inside Foundry where Microsoft can bundle governance and deployment controls with the model itself. (techcommunity.microsoft.com)

- Clear workload segmentation between premium and efficient image generation.

- Lower per-unit cost for high-volume creative operations.

- Better fit for real-time experiences in chat and copilot-style workflows.

- Strong enterprise framing through Foundry and Azure Direct models.

- Useful visual signature for illustration, animation, and attention-grabbing assets.

- Potential Copilot and Bing spillover as Microsoft expands the MAI family.

- Improved procurement story for teams needing predictable model economics.

Risks and Concerns

The biggest risk is that the model's speed-first identity could invite overuse in scenarios where quality still matters. A sharp, efficient output is helpful, but if teams apply it to premium brand work or detailed photorealism, the trade-off could be visible to end users and clients. Microsoft itself acknowledges that MAI-Image-2 remains the better choice for some fidelity-sensitive tasks. (techcommunity.microsoft.com)

- Quality trade-offs may frustrate users who expect flagship-level realism.

- Benchmark comparisons can vary depending on prompt set and latency targets.

- Regional availability gaps may slow adoption outside key markets.

- Public preview status means the product is still maturing.

- Cost savings can be offset by runaway volume if governance is weak.

- Text rendering limits may still matter in branding-heavy workflows.

- Competitive reactions from rivals could compress Microsoft’s edge quickly.

Looking Ahead

The next phase to watch is whether Microsoft turns MAI into a broader family with more clear-cut specialization. If the company follows the same pattern it has used here, we may see more variants optimized for distinct task types, latency tiers, or creative domains. That would push Foundry further toward being a true model operating system rather than a simple catalog. (techcommunity.microsoft.com)

There is also a bigger strategic question about where Microsoft places the model in its flagship products. If MAI-Image-2-Efficient becomes the default for conversational or lightweight image generation, while MAI-Image-2 stays reserved for premium renderings, the company could establish a very efficient internal routing layer that users never see but businesses depend on heavily. That would be a meaningful competitive advantage because it blends user experience with infrastructure economics. (techcommunity.microsoft.com)
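The routing layer described above can be sketched as a small policy function that a team might run in its own application. This is purely illustrative: the `ImageJob` fields, the set of fidelity-sensitive use cases, and the rule order are assumptions about how one could encode the efficient-by-default, flagship-for-premium split, and the tier strings are the public model names rather than guaranteed deployment identifiers in Foundry.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(str, Enum):
    # Public model names; actual Foundry deployment names may differ.
    EFFICIENT = "MAI-Image-2-Efficient"
    FLAGSHIP = "MAI-Image-2"


@dataclass
class ImageJob:
    use_case: str                 # e.g. "catalog", "ui_concept", "hero_campaign"
    photorealistic: bool = False  # detailed photorealism requested


# Hypothetical policy: use cases a team treats as fidelity-sensitive.
PREMIUM_USE_CASES = {"hero_campaign", "premium_brand"}


def route(job: ImageJob) -> Tier:
    """Send fidelity-sensitive work to the flagship, everything else to the efficient tier."""
    if job.photorealistic or job.use_case in PREMIUM_USE_CASES:
        return Tier.FLAGSHIP
    return Tier.EFFICIENT


if __name__ == "__main__":
    for job in (
        ImageJob("catalog"),
        ImageJob("ui_concept"),
        ImageJob("hero_campaign"),
        ImageJob("catalog", photorealistic=True),
    ):
        print(job.use_case, job.photorealistic, "->", route(job).value)
```

Defaulting to the efficient tier and opting into the flagship keeps the expensive path explicit, which also guards against the speed-first overuse risk in reverse: premium work has to be named to get premium treatment.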

Most importantly, Microsoft has signaled that more announcements are coming. The company explicitly teased further developments around Microsoft Build 2026, which suggests the MAI stack is still early in its commercial arc. If Microsoft can keep pairing model quality with platform controls and credible economics, it may become one of the more formidable enterprise AI image providers in the market. (techcommunity.microsoft.com)

- Watch for broader Copilot integration.

- Watch for region expansion, especially in Europe.

- Watch for model-card updates and benchmark refinements.

- Watch for partner adoption in commerce and media.

- Watch for pricing or throughput changes as preview usage grows.

Source: TestingCatalog, "Microsoft launches faster MAI-Image-2-Efficient for business"