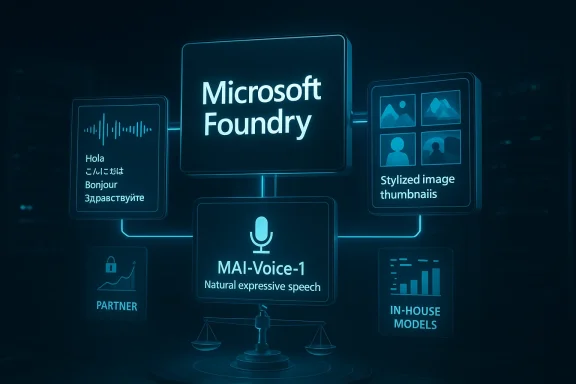

Microsoft’s release of three in-house AI models marks more than a routine product expansion. It is a signal that the company is no longer content to be seen primarily as OpenAI’s biggest backer and cloud host; it wants to be a model maker in its own right. By launching MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2 inside Microsoft Foundry, the company is now competing directly in the same enterprise lanes where OpenAI’s transcription, speech, and image tools already live. The message is clear: Microsoft wants greater independence, broader platform control, and a tighter grip on the economics of AI.


Background

The Microsoft-OpenAI relationship has always been unusual: part investment, part partnership, and part strategic hedge. Microsoft became OpenAI’s largest investor and deeply embedded itself in OpenAI’s growth by supplying Azure infrastructure while also using OpenAI models to power Copilot across its software stack. That arrangement gave Microsoft access to frontier AI without having to build everything from scratch, but it also created a dependency that looked increasingly uncomfortable as AI became central to both consumer and enterprise strategy.

Over the past year, Microsoft has made a series of moves that suggest it wants optionality, not just alliance. The company reorganized around Microsoft AI under Mustafa Suleyman, and in 2025 he publicly framed the work in terms of creating AI companions and broader consumer experiences. More recently, Microsoft announced a leadership update that explicitly tied Suleyman’s remit to superintelligence efforts and “world class models” over the next five years. That wording matters because it reads less like a product support function and more like the foundation of an independent model strategy.

At the same time, Microsoft has been widening the surface area of its own AI platform. Foundry now serves as the company’s central place for building, customizing, and deploying AI applications at scale, and its model catalog includes not only OpenAI offerings but also models from Anthropic, Meta, Mistral, Cohere, NVIDIA, Hugging Face, and others. Microsoft is clearly positioning Foundry as a brokerage layer for enterprise AI, one that makes Microsoft the default marketplace rather than merely the favorite tenant hosting someone else’s frontier models.

The timing of this release also reflects a broader market shift. By 2026, enterprise buyers no longer want a single model story; they want a portfolio, with specialized tools for transcription, voice, image generation, search, and agents. Microsoft’s in-house models fit neatly into that need. They are not pitched as universal replacements for GPT-class systems. Instead, they are task-specific models that can be sold into workflows where accuracy, latency, price, or governance matter more than raw generality.

What Microsoft Actually Released

The three models are narrowly scoped but strategically important. According to Microsoft’s own announcement, MAI-Transcribe-1 handles transcription across 25 languages, MAI-Voice-1 generates natural, expressive speech, and MAI-Image-2 is described as Microsoft’s most capable image model yet. The company says they are available on Microsoft Foundry and the MAI Playground, with Foundry being the enterprise-facing route.

That matters because Microsoft is not merely experimenting in a lab. It is productizing these models as commercial services for developers and businesses. This immediately places them in the same category as OpenAI’s Whisper, text-to-speech tools, and DALL·E family, which Microsoft also sells through Foundry in one form or another. In other words, Microsoft is now competing with a partner whose models still remain part of its own sales story.

A targeted rather than general-purpose approach

This release is best understood as a specialized model bundle, not a grand declaration that Microsoft has matched OpenAI across the board. Each model solves a specific problem, which is exactly what enterprise customers often need when deploying AI into production workflows. Transcription, speech synthesis, and image generation are all highly monetizable infrastructure tasks that can be sold independently of the broader chatbot stack.

The narrowness is actually a strength. Microsoft can tune each model for a defined business scenario, integrate them tightly into Foundry, and market them as building blocks for applications rather than as headline-grabbing general intelligence. That makes them easier to govern, easier to benchmark, and potentially easier to sell to regulated industries. It also lets Microsoft compete where the margin is good and the switching costs are high.

Key implications:

- Task-specific AI is now a core Microsoft product strategy.

- Enterprise distribution may matter more than raw model prestige.

- Foundry becomes the commercial center of gravity.

- OpenAI overlap is no longer theoretical; it is a sales reality.

- Model specialization supports pricing and governance advantages.

Why Foundry Matters More Than the Models Themselves

The models are important, but the platform is the real story. Microsoft Foundry is designed to be the place where customers discover, test, customize, and deploy a wide range of AI models within Azure. Microsoft’s documentation presents it as an “AI app and agent factory,” which is a telling phrase because it frames AI not as a single chatbot capability but as a production pipeline.

By placing MAI models inside Foundry, Microsoft can bundle them with its broader cloud, security, compliance, and enterprise tooling. That gives Microsoft a classic platform advantage: model choice becomes part of a larger procurement and governance relationship. A customer evaluating transcription or voice generation is no longer buying only model quality; they are also buying Microsoft identity, Azure integration, compliance posture, and operational simplicity.

The enterprise distribution moat

For enterprises, distribution often matters more than novelty. A model can be technically excellent and still lose if it is hard to procure, harder to secure, or awkward to integrate with existing systems. Microsoft’s advantage is that Foundry already sits inside a huge enterprise ecosystem where Azure contracts, security frameworks, and developer familiarity can accelerate adoption.

That is why this announcement should be read as a platform maneuver as much as a model launch. Microsoft is using in-house AI to deepen the value of its cloud relationship and reduce the risk that an enterprise customer might drift toward another provider for specific workloads. If a customer can buy OpenAI and Microsoft-trained models in the same place, Microsoft benefits from being the default broker.

What this means for developers

Developers gain more choice, but also more complexity. They now need to compare not just model performance, but how each model fares on latency, region availability, pricing, guardrails, and workflow integration. Microsoft’s Foundry documentation already emphasizes model variety and deployment options, which suggests the company wants developers to think in terms of architecture selection rather than brand loyalty.

That could be good news for teams building production applications. If Microsoft can offer a transcription model that is cheaper or faster, a voice model that sounds more natural, or an image model that better suits enterprise content pipelines, the company can win by incrementally displacing OpenAI in specific jobs. That is a classic platform strategy: win the workflow, not the ideology.

The OpenAI Overlap Is Real

Microsoft is not launching these models in a vacuum. OpenAI already supplies transcription, voice, and image capabilities through Whisper, text-to-speech, and DALL·E, and those capabilities are already available in Microsoft’s own ecosystem. That means Microsoft is effectively both hosting and competing with its own partner in adjacent product categories.

This overlap is not necessarily a breakup signal. If anything, it reflects how mature AI markets work once they move from novelty into procurement. Enterprises want benchmarks, alternatives, and negotiating leverage. Microsoft can preserve its OpenAI relationship while still building substitutes where the economics or strategic control make sense. The real question is not whether the partnership ends tomorrow; it is whether Microsoft gradually reduces the share of workloads that depend on OpenAI alone.

Competitive tension without open conflict

The public tone remains careful. Microsoft has not framed the models as replacements for OpenAI, and OpenAI remains central to Copilot and Azure’s AI story. But the product architecture tells a more interesting story: Microsoft is making sure it can answer a customer request without having to route every use case through OpenAI. That is a subtle but meaningful power shift.

The same logic applies to investor dynamics. Microsoft’s continued role as OpenAI’s biggest backer gives it a seat at the table, but not necessarily full control over the model roadmap. Building its own models gives Microsoft insurance against shifts in pricing, access, or strategic direction. In a fast-moving AI market, insurance is often worth as much as innovation.

Why specialization can beat generality

OpenAI’s biggest strengths are broad capability and brand leadership. Microsoft’s opening is different: specialize aggressively where the customer wants dependable, production-grade infrastructure. Transcription and voice, in particular, are often judged by a few painful metrics such as word error rate, latency, and stability under noisy conditions. If Microsoft can outperform on those dimensions, it can win business even without dethroning OpenAI’s broader reputation.

Image generation is similarly ripe for segmentation. Enterprise buyers care about control, safety, watermarking, style consistency, and integration with content systems. A model that is slightly less famous but better governed can be more attractive in corporate environments. Microsoft’s challenge is proving that its models are not just “good enough,” but commercially superior for real workloads.

Why Voice, Speech, and Images Are the Right Beachhead

Microsoft’s choice of categories is not random. Speech-to-text, text-to-speech, and image generation are among the most practical, widely deployable AI functions in enterprise software. They sit close to customer service, media workflows, accessibility, content moderation, documentation, and knowledge capture, which means they can generate value quickly.

These tasks are also easier to benchmark than open-ended chat. A company can measure transcription accuracy, voice naturalness, or image quality with internal evaluation sets and user feedback. That makes them ideal for a new entrant that wants to prove itself without needing to win the entire frontier model race on day one.
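To make the benchmarking point concrete, here is a minimal sketch of how a team might score transcription output against its own reference transcripts using word error rate, the metric most often cited for speech-to-text. It is an illustration only: the function name and sample sentences are hypothetical, the text normalization (lowercasing and whitespace splitting) is a simplifying assumption, and nothing here reflects how Microsoft or OpenAI evaluate their models.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    # Simplifying assumption: lowercase and split on whitespace only.
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] is the edit distance between the reference prefix processed
    # so far and the first j words of the hypothesis.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # reference word dropped (deletion)
                curr[j - 1] + 1,     # extra hypothesis word (insertion)
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    # Hypothetical sample pair: one word is missing from the model output.
    reference = "please transfer the call to billing"
    hypothesis = "please transfer call to billing"
    print(f"WER: {word_error_rate(reference, hypothesis):.3f}")
```

Averaged over a few hundred in-house recordings, a score like this gives a buyer a like-for-like number for any two transcription services, which is the kind of evidence vendor claims would need to be checked against.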

Enterprise use cases are obvious

The most immediate enterprise uses are straightforward. Call centers can transcribe interactions, internal teams can convert meetings into searchable records, and customer-facing products can add voice interfaces or image tools. Microsoft already has the distribution pathways to put these capabilities into Azure-based apps, Copilot-adjacent experiences, and custom enterprise workflows.

That practical angle is important because the AI market is maturing. Buyers are less impressed by demos than by reliability, compliance, and integration. Microsoft is betting that the winning pitch is not “our model is the most magical,” but “our model is integrated, governable, and deployable inside your existing stack.”

Consumer and creator spillover

The consumer opportunity is different. A voice model can power narration, assistants, accessibility tools, and creation features; an image model can support design, marketing, and productivity. Microsoft may eventually push these capabilities deeper into consumer products, but the current rollout is clearly enterprise-first. That is sensible because enterprise sales can validate the technology while consumer branding catches up.

It also gives Microsoft room to iterate under lower public scrutiny. Consumer AI features are judged instantly and emotionally, while enterprise tools can be improved through controlled pilots and account-level deployment. Microsoft’s likely playbook is to prove it in business, refine it in the platform, then surface it more broadly.

Mustafa Suleyman’s Role Changes the Interpretation

This release would mean less if Microsoft AI were still viewed as a small product team. But Mustafa Suleyman’s position changes the stakes. Since joining Microsoft to lead Copilot and later being tasked with a broader Microsoft AI mandate, he has been one of the company’s clearest voices for building more of the stack in-house.

His public language has increasingly emphasized self-sufficiency, frontier model building, and systems that reinforce Microsoft’s own product roadmap. That framing matters because it turns model development into a strategic necessity rather than an optional experiment. When the CEO of Microsoft AI uses phrases like “world class models” and “self-sufficient in AI,” the company is not signaling dependence reduction as a side effect; it is making it the point.

A more vertically integrated Microsoft

Microsoft’s history in cloud and software has always favored integration. The company understands that owning more of the stack can improve margins, simplify support, and create lock-in. In AI, that instinct is now becoming explicit, and Suleyman is the executive most closely associated with that turn.

That vertical integration is especially relevant in enterprise AI, where customers often want fewer vendors, not more. If Microsoft can provide the models, the deployment layer, the security stack, and the application layer, it can capture a much larger share of the AI budget. OpenAI, by contrast, remains primarily a model and product company, even as it expands its own ecosystem.

A hedge against partner dependency

There is also a geopolitical and business continuity angle. Dependence on a single external model supplier can become a risk if prices rise, access changes, or strategic priorities diverge. Microsoft’s in-house models provide a hedge, and hedge-building is what disciplined enterprise platforms do when a capability becomes too important to outsource.

That does not mean the OpenAI relationship is fraying. It means Microsoft is acting like a company that expects AI to remain a strategic battleground for years, not months. The smarter move is to preserve partnership optionality while building internal muscle at the same time.

The Market Reaction Will Depend on Benchmark Proof

Announcements like this tend to generate excitement first and scrutiny later. The real test will not be the launch blog post, but the comparative performance data Microsoft releases, the customer benchmarks it can stand behind, and the adoption it drives inside Foundry. Without that proof, the models risk being seen as symbolic rather than transformative.

Microsoft’s strongest claim so far is directional, not definitive. The company says MAI-Transcribe-1 is the most accurate transcription model in the world and MAI-Voice-1 sets a new standard for natural speech. Those are bold claims, but they will need independent validation, especially because transcription and voice quality are easy to assert and harder to settle in a universally accepted way.

How rivals may respond

OpenAI will likely respond by continuing to improve its own audio and image offerings. It has already positioned newer audio models as outperforming Whisper on established benchmarks, and it has a broader multimodal roadmap than the narrow categories Microsoft is emphasizing here. The competitive response may therefore be less about panic and more about acceleration.

Other cloud rivals will also pay attention. If Microsoft can successfully sell homegrown models alongside outside models in Foundry, it reinforces the idea that cloud providers should be marketplaces for multiple AI suppliers rather than single-brand showcases. That is potentially good for enterprise buyers and potentially less good for model makers who want direct customer relationships.

What will matter most

The most important factors over the next few quarters will be practical, not theatrical. Customers will want to know whether the models are cheaper, faster, easier to govern, or better integrated than the alternatives. If Microsoft can answer yes on even one or two of those dimensions, the launch could matter far more than the headline suggests.

Watch for:

- Independent benchmarks on transcription and speech quality.

- Enterprise adoption inside regulated industries.

- Pricing and packaging changes in Foundry.

- Whether Microsoft surfaces these models in consumer products.

- Any sign that OpenAI usage in Microsoft workflows becomes more selective.

Strengths and Opportunities

Microsoft’s move has several obvious strengths. It deepens the company’s AI sovereignty, improves platform leverage, and creates room to tailor models to enterprise needs that may be underserved by general-purpose frontier systems. It also turns Foundry into a more complete commercial destination, which could increase customer stickiness and reduce reliance on any single outside supplier.

The opportunity is bigger than the immediate product set. If Microsoft can prove that it can build competitive models internally, it gains strategic flexibility across pricing, procurement, and roadmap planning. It also sends a message to the market that the company is not merely an OpenAI distribution channel, but a credible AI platform builder in its own right.

- Greater strategic independence from OpenAI

- Tighter enterprise integration inside Azure and Foundry

- More pricing flexibility for specialized workloads

- Better fit for regulated customers seeking governance and compliance

- Expanded model choice for developers building production apps

- Potential consumer spillover into Copilot and accessibility features

- Stronger negotiating position in future AI partnerships

Risks and Concerns

The biggest risk is that Microsoft overpromises and underdelivers relative to its own claims. Phrases like “most accurate” or “new standard” invite scrutiny, and if the models fail to clearly beat or at least match the competition, the launch could look like strategic theater. That would be especially damaging because Microsoft is now setting expectations for self-sufficiency in AI.

There is also the possibility of channel conflict. Microsoft benefits from selling OpenAI models through Foundry, but it now also benefits from replacing some of that usage with its own models. Managing that tension without confusing customers or weakening the partnership will require careful packaging and messaging. That balance may be harder than the model training itself.

- Benchmark risk if claims are not independently confirmed

- Partner friction if OpenAI sees direct substitution

- Customer confusion over which Microsoft-branded model to choose

- Fragmentation risk if the product catalog becomes too complex

- High expectations for future in-house frontier model releases

- Possible pricing pressure if competitors undercut enterprise rates

- Execution risk as Microsoft scales model operations and governance

Looking Ahead

The next phase will be about evidence. Microsoft needs to show that these models are not just available, but adopted, benchmarked, and embedded into real enterprise workflows. If the company starts publishing comparative performance data, case studies, or workload-specific pricing advantages, the announcement will look much more consequential in hindsight.

The broader strategic question is whether Microsoft continues to expand its in-house model family beyond speech and images. If it does, then the company is effectively building a parallel AI stack that can stand beside OpenAI rather than beneath it. If it does not, the current release may end up as a useful but limited proof point.

What to watch:

- New benchmark disclosures for MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2.

- Enterprise customer announcements tied to Microsoft Foundry.

- Any expansion of MAI Playground or broader availability.

- Pricing comparisons against OpenAI and other cloud model providers.

- Whether Microsoft introduces additional in-house frontier models later in 2026.

Source: Business Insider Microsoft released 3 new AI models, ramping up competition with its close partner, OpenAI