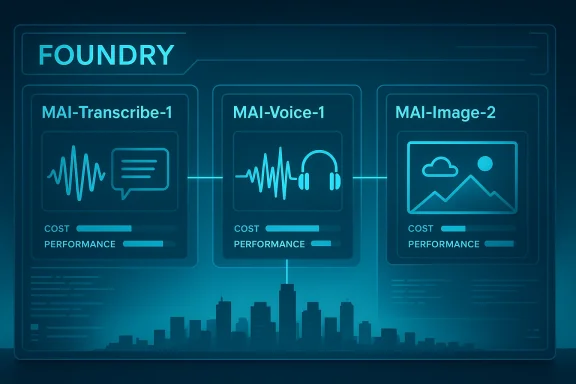

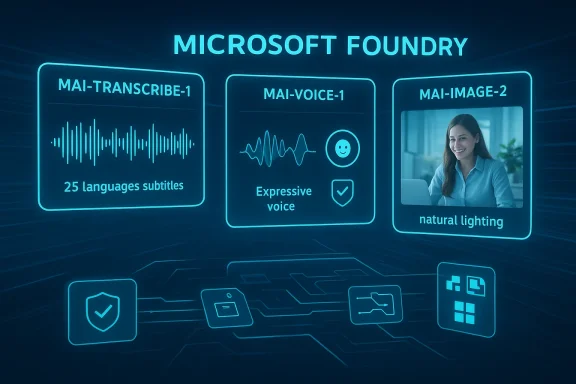

Microsoft’s new MAI transcription model lands at an important moment for the company, for enterprise AI buyers, and for anyone watching the balance of power between Redmond and OpenAI. On April 2, 2026, Microsoft began broadly surfacing its in-house MAI model family in Microsoft Foundry, including MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2, signaling a more assertive push to own more of the AI stack rather than depend so heavily on outside frontier models. The move is not just about speech-to-text speed; it is about control, cost, product differentiation, and the ability to ship AI features that are more tightly aligned with Microsoft’s own platform priorities. (news.microsoft.com)

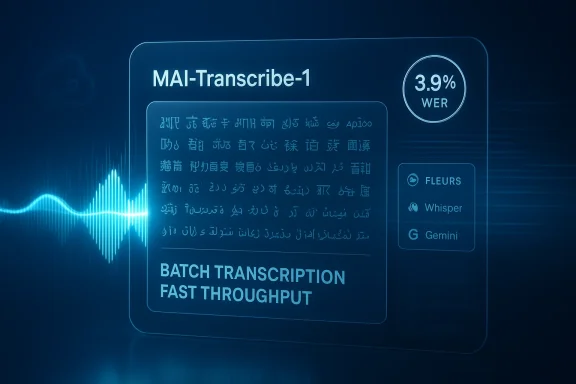

Overview

Microsoft has been building toward this moment for more than a year. Mustafa Suleyman joined Microsoft in March 2024 to lead Microsoft AI, with the company explicitly saying it would continue to invest in both OpenAI’s foundation models and its own infrastructure, custom systems, and research. That original arrangement made strategic sense while Microsoft was racing to bring Copilot to market, but it also left the company exposed to cost, latency, product timing, and roadmap dependence that come with leaning on a partner for the core intelligence layer.

The new MAI family looks like Microsoft’s answer to that dependency problem. The company’s Source news page says the models are now available to developers in Foundry, with MAI-Transcribe-1 described as a transcription model across 25 languages, MAI-Voice-1 positioned as expressive speech generation, and MAI-Image-2 framed as Microsoft’s most capable image model yet. Microsoft’s public messaging also emphasizes that these models are arriving for commercial use, which matters because it moves them from internal experimentation and selective deployment into the hands of customers building production workloads. (news.microsoft.com)

This launch also arrives after a period in which Microsoft has steadily broadened its Foundry model catalog. In November 2025, Microsoft was already publishing guidance around OpenAI’s GPT-4o audio models in Foundry, including transcription and text-to-speech use cases. Then, in March 2026, Microsoft Research introduced VibeVoice ASR, a long-form transcription model capable of handling up to 60 minutes of continuous audio in a single pass while preserving speaker structure and timestamps. The MAI announcement therefore fits a broader pattern: Microsoft is no longer treating voice AI as a single model dependency, but as a portfolio where it can mix third-party, open-source, and first-party systems. (devblogs.microsoft.com)

That matters because speech is one of the most commercially valuable forms of AI. Meetings, call centers, compliance recordings, closed captioning, note-taking, accessibility, dictation, multilingual translation, and customer support all sit on top of transcription quality. If Microsoft can deliver lower latency, lower cost, and better product integration than rivals, it has a chance to turn a model announcement into a platform advantage. If it cannot, the company risks adding another model family without changing the underlying economics of enterprise AI. (devblogs.microsoft.com)

Why Transcription Matters More Than It Sounds

Speech-to-text often gets treated as a commodity feature, but in enterprise AI it is a gateway capability. The quality of the transcript determines whether downstream tools can summarize, search, classify, redact, translate, or trigger workflows with confidence. A weak transcription layer forces human cleanup and destroys much of the ROI that vendors promise when they pitch AI meeting assistants. (techcommunity.microsoft.com)

Microsoft’s framing of MAI-Transcribe-1 suggests it wants to move transcription out of the “good enough” category and into something strategically differentiated. Windows Central’s reporting says the model is built for speed and accuracy across meetings and audio, while Microsoft’s own Source page calls it the most accurate transcription model in the world across 25 languages. That is a bold claim, and it should be treated carefully, but the product direction is unmistakable: Microsoft wants transcription to be a first-class AI workload, not an afterthought. (news.microsoft.com)

The enterprise use case is the real prize

For consumers, transcription means notes and captions. For enterprises, it means searchable records, compliance support, analytics, and operational automation. A model that reliably handles multi-speaker meetings, jargon, and multilingual conversations can save real money across sales, legal, HR, and support teams. Microsoft’s earlier VibeVoice ASR announcement made that logic explicit by emphasizing long-form meetings, speaker diarization, and structured output. (techcommunity.microsoft.com)

The competitive implication is straightforward: if Microsoft can own the audio pipeline from recording to summarization to action, it can make Copilot more indispensable. That is especially important in Microsoft 365, where meeting data already lives inside Teams, Outlook, and related workflows. Better transcription is not just a feature; it is infrastructure for the next wave of AI productivity tools.

MAI-Transcribe-1 and the New Foundry Pitch

Microsoft Foundry is becoming the company’s central message for developers who want choice without chaos. The new MAI models are being positioned as part of a broader commercial catalog, which means Microsoft wants developers to view Foundry as a place where speech, voice, image, and eventually more specialized AI components can be mixed and matched. That is a smart play in a market where buyers increasingly dislike lock-in but still want one vendor to handle governance, deployment, and support. (news.microsoft.com)

Microsoft’s official wording matters here. The company says the MAI family is being brought to “every developer in Foundry,” and it places MAI-Transcribe-1 alongside MAI-Voice-1 and MAI-Image-2 as part of a coherent platform story. In other words, this is not merely a model release. It is a statement that Microsoft intends to compete on the model layer while also retaining the distribution layer through Foundry and the product layer through Copilot and Microsoft 365. (news.microsoft.com)

Why commercial availability changes the stakes

A model can be impressive in a lab and still fail commercially if it is hard to operationalize. By putting MAI models into Foundry, Microsoft is saying the models are intended for real workloads, not just demos. That matters for procurement teams, because commercial availability raises expectations around support, privacy, reliability, and integration with enterprise controls. (news.microsoft.com)

It also changes the internal politics of Microsoft’s own product stack. If the company builds a strong first-party transcription path, then Copilot experiences can be tuned more aggressively for cost and latency. That gives Microsoft flexibility to reserve premium third-party models for the hardest jobs while keeping the bulk of everyday workloads on its own systems.

- Lower model dependence could reduce exposure to partner pricing.

- Better integration could improve Copilot’s end-user experience.

- Tighter governance could help enterprise buyers standardize on one platform.

- Stronger margins may follow if Microsoft shifts common tasks to in-house models.

- More product control lets Microsoft tune features for its own apps first.

The Mustafa Suleyman Strategy

Mustafa Suleyman has been clear that Microsoft wants to build off-frontier models that still matter in production. That phrase is doing a lot of work. It implies Microsoft is not trying to win every benchmark race against the very best frontier systems; instead, it wants models that are good enough, fast enough, cheaper, and easier to embed into products at scale.

This is an important philosophical shift. A lot of AI discourse still treats “best model” as the only meaningful metric, but enterprise software is usually won by the system that reduces friction, integrates cleanly, and keeps unit economics under control. Microsoft is trying to use that reality to its advantage by turning model design into product strategy rather than a pure research contest.

Off-frontier does not mean off-relevance

There is a temptation to read off-frontier as second-tier. That would be too simplistic. In practical enterprise settings, a model that is 95% as capable but materially cheaper and faster can be a better business decision than a more expensive best-in-class option. Microsoft appears to believe there is a large market for that middle ground, especially when the models are wrapped in its security, identity, and management stack. That is a defensible theory, even if it is not the most glamorous one.

The danger is that Microsoft becomes trapped between categories. If its own models are too modest, customers may still choose OpenAI, Anthropic, or Google for demanding workloads. If they are too similar to the best external options, Microsoft may not achieve enough differentiation to justify the investment. That balancing act will define the next year of Microsoft AI.

Copilot Reorganization and Product Control

Microsoft’s March 2026 Copilot leadership update makes this strategy even clearer. The company reorganized around four connected pillars: Copilot experience, Copilot platform, Microsoft 365 apps, and AI models. That structure is not just administrative housekeeping. It shows that Microsoft views model development as one leg of a larger system designed to ship AI across consumer and commercial products more coherently.

The appointment of Jacob Andreou to lead Copilot experience also matters. Microsoft is creating a sharper separation between product experience and model development, which is a classic move when a company wants to iterate faster on customer-facing design without letting research priorities dominate the roadmap. Suleyman’s role on the model side suggests Microsoft wants a stronger internal engine for the intelligence layer, while the experience team focuses on packaging and adoption.

Why the reorg and the model launch belong together

If Microsoft were only trying to show off new model names, the organizational change would be a side story. But together, the reorg and the MAI launch tell a much bigger story: Microsoft is separating the question of what the model should be from the question of how the user experiences it. That should help the company move faster, especially when different segments need different trade-offs on speed, cost, tone, and accuracy.

For enterprise buyers, this can be attractive. It suggests Microsoft is building a stack where the model, the application, and the management layer are planned together rather than stitched together afterward. The risk, of course, is that stronger internal integration can also mean weaker openness if Microsoft starts steering customers toward preferred paths in ways that limit flexibility. That trade-off will be closely watched.

- Four pillars imply a more modular AI organization.

- Model ownership should improve product coordination.

- Experience leadership can focus on adoption and usability.

- Platform leadership can unify tooling for commercial customers.

- Microsoft 365 integration could become the main distribution engine.

How This Compares With OpenAI and Other Rivals

Microsoft’s relationship with OpenAI has always been symbiotic and awkward. Microsoft benefited enormously from early access to OpenAI’s models, while OpenAI gained cloud scale, distribution, and enterprise credibility. But once a company becomes deeply dependent on a partner for the core engine of its flagship products, the strategic pressure to diversify is inevitable.

The MAI launch suggests Microsoft wants optionality. In practical terms, that means it can use in-house models where they are good enough, use partner models where they are clearly superior, and mix the two in ways that improve cost and reliability. That is a much stronger position than being locked into a single provider for every workload. It also gives Microsoft leverage in future negotiations, which is probably not lost on anyone in Redmond or at OpenAI.

The speech stack is a competitive battlefield

Speech and voice are now a serious differentiator across the AI industry. Microsoft has recently been shipping Foundry audio capabilities, while OpenAI’s GPT-4o audio family is already integrated into Microsoft Foundry as well. This means Microsoft is competing both with and against its own ecosystem partners, and the customer benefit is choice. But the strategic consequence is that Microsoft must prove its own models are not just available, but genuinely better for specific workloads. (devblogs.microsoft.com)

Google, Anthropic, and a growing set of open-source alternatives add more pressure. Each competitor is trying to own part of the voice and multimodal stack. Microsoft’s advantage is distribution through Windows, Microsoft 365, Teams, Azure, and enterprise relationships. Its disadvantage is that users now expect AI features to be both seamless and inexpensive, which is a hard bar to clear across a huge installed base.

- OpenAI remains the benchmark partner and rival.

- Google remains a strong multimodal competitor.

- Anthropic pressures enterprise AI buyers on safety and reasoning.

- Open-source models keep pushing down price expectations.

- Microsoft’s distribution may be its biggest moat.

What It Means for Meetings, Dictation, and Accessibility

The most immediate consumer-facing payoff from a better transcription model is not some futuristic agent. It is the humble meeting transcript. If MAI-Transcribe-1 is as fast and accurate as Microsoft suggests, then the quality of notes, captions, summaries, and searchable records could improve quickly across enterprise apps and potentially some consumer workflows too. (news.microsoft.com)

Accessibility is another major angle. Reliable transcription underpins captions for hearing-impaired users, supports language learning, and helps workers in noisy environments or with limited bandwidth. Microsoft has a long history of positioning accessibility as a core product principle, and a transcription model that handles 25 languages well can reinforce that message in a very practical way. (news.microsoft.com)

Why speed matters as much as quality

A transcript that arrives a minute late can still be useful. A transcript that arrives in seconds can change the workflow entirely. Real-time or near-real-time transcription is what turns audio into an interactive medium, allowing search, summarization, and follow-up prompts to feel immediate rather than retrospective. That is why speed claims are not just marketing fluff; they shape how the product is actually used. (news.microsoft.com)

The best transcription models also reduce the hidden tax of cleanup. Anyone who has spent time correcting names, technical terms, or speaker labels knows that a “mostly right” transcript can still be expensive. Microsoft’s emphasis on structured output and long-form context suggests it knows the value proposition is not simply fewer errors, but less human intervention after the model finishes. (techcommunity.microsoft.com)

- Meetings become easier to search and summarize.

- Closed captioning can become more timely and accurate.

- Dictation benefits from better punctuation and structure.

- Accessibility tools gain broader language support.

- Post-call workflows can be automated with less cleanup.

Voice Cloning, Branding, and the Commercial Stakes

MAI-Voice-1 may be the most commercially sensitive part of the launch. Microsoft says it can preserve speaker identity over long-form content and includes a voice-prompting feature that can create custom brand voices from one minute of audio. That is powerful, but it is also the sort of capability that forces companies to think carefully about consent, authenticity, and abuse prevention. (news.microsoft.com)

For legitimate use cases, this could be a strong fit for branded assistants, training content, customer service, and multilingual media. Businesses have long wanted synthetic voice systems that sound less robotic and more consistent across campaigns. Microsoft is clearly betting that the demand for high-quality voice generation will outweigh the concerns, at least in properly governed enterprise settings. (news.microsoft.com)

Brand voice is a product, not just a feature

If a company can generate a recognizable voice from a minute of source audio, then voice becomes part of identity management. That is attractive for marketing and customer support, but it also raises the stakes around policy and auditability. The more realistic the voice, the more important it becomes to control who can create it, where it can be used, and how it is labeled. (news.microsoft.com)

Microsoft’s advantage here is that it can bundle voice creation with enterprise governance. That could make the feature much more acceptable to large organizations than a standalone consumer app would be. Still, the line between legitimate synthesis and deceptive impersonation remains thin, and customers will expect Microsoft to provide strong guardrails.

- Marketing teams may want branded synthetic voices.

- Training departments can reduce production costs.

- Contact centers may use expressive assistants.

- Localization teams can scale audio content faster.

- Security teams will need clear usage controls.

Image Generation and the Broader MAI Portfolio

MAI-Image-2 may look like the least surprising part of the announcement, but it still matters. Microsoft says the model excels at natural lighting, skin tones, and in-image text, and it reportedly ranked among the top three on the Arena.ai text-to-image leaderboard. That suggests Microsoft is trying to compete on practical visual quality rather than just abstract benchmark prestige. (news.microsoft.com)

The real significance is that Microsoft is packaging transcription, speech, and image generation together. That is exactly how modern AI platforms win enterprise mindshare: not by offering one dazzling model, but by making the surrounding ecosystem coherent enough that buyers can standardize on it. The more customers use one vendor for voice, image, and app integration, the harder it becomes to switch later. (news.microsoft.com)

The portfolio strategy reduces product risk

A company that depends on a single model family exposes itself to bottlenecks and surprise price changes. A portfolio strategy gives Microsoft the freedom to route workloads based on latency, quality, and cost. That should be especially useful in Foundry, where developers need to test and deploy models for different tasks without reconstructing their entire stack every time. (news.microsoft.com)

It also gives Microsoft a way to speak to different audiences with one narrative. Developers want APIs. Enterprises want governance. Product teams want integrated features. The MAI family tries to serve all three by making the message about a platform, not a research demo. That is a more mature AI story than the industry often gives Microsoft credit for. (news.microsoft.com)
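To make the routing idea in this section concrete, here is a minimal Python sketch of how a developer might pick a model per workload by latency, quality, and cost. It is an illustration only: the model labels, quality scores, latencies, and prices are invented placeholders, not figures from Microsoft or any Foundry API, and the `route` helper is a hypothetical function rather than part of any SDK.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class ModelOption:
    """One entry in a hypothetical model catalog (all values are made up)."""
    name: str               # illustrative label, not a real deployment name
    quality: float          # 0..1 score from your own evaluation, higher is better
    latency_ms: int         # typical response latency you measured
    cost_per_minute: float  # price per audio minute in USD (placeholder)


def route(options: Sequence[ModelOption],
          min_quality: float,
          max_latency_ms: int) -> Optional[ModelOption]:
    """Return the cheapest option that meets both constraints, or None."""
    eligible = [
        o for o in options
        if o.quality >= min_quality and o.latency_ms <= max_latency_ms
    ]
    if not eligible:
        return None
    # Cheapest first; break price ties in favor of higher quality.
    return min(eligible, key=lambda o: (o.cost_per_minute, -o.quality))


# Placeholder catalog mixing first-party, partner, and open-source options.
CATALOG = [
    ModelOption("first-party-fast", quality=0.92, latency_ms=300, cost_per_minute=0.004),
    ModelOption("partner-premium", quality=0.97, latency_ms=900, cost_per_minute=0.012),
    ModelOption("open-source-batch", quality=0.90, latency_ms=2500, cost_per_minute=0.002),
]

# Different workloads tolerate different trade-offs.
live_captions = route(CATALOG, min_quality=0.90, max_latency_ms=500)
legal_review = route(CATALOG, min_quality=0.95, max_latency_ms=5000)
archive_job = route(CATALOG, min_quality=0.85, max_latency_ms=10000)

for label, choice in [("live captions", live_captions),
                      ("legal review", legal_review),
                      ("archive job", archive_job)]:
    print(label, "->", choice.name if choice else "no eligible model")
```

With these placeholder numbers, the latency-sensitive workload lands on the fast first-party option, the accuracy-sensitive one on the premium partner model, and the batch job on the cheapest option. That is the kind of mix-and-match outcome a portfolio strategy is meant to allow.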

Strengths and Opportunities

The biggest strength of Microsoft’s move is that it aligns product, platform, and model strategy in one visible step. This is not just about chasing benchmarks; it is about reducing dependency and giving customers a clearer path to deploy AI in production. The timing is also strong, because enterprises are now asking harder questions about cost, reliability, and vendor concentration.

- Diversifies Microsoft’s AI supply chain and reduces overreliance on OpenAI.

- Improves Foundry’s value proposition by adding first-party models across modalities.

- Strengthens Copilot economics if common workloads move to cheaper in-house models.

- Enhances enterprise adoption by combining model access with governance and tooling.

- Boosts accessibility and meeting productivity through better transcription and speech support.

- Creates leverage in partner negotiations by proving Microsoft has viable alternatives.

- Reinforces Microsoft’s platform moat across Teams, Microsoft 365, Azure, and Windows.

Risks and Concerns

The most obvious risk is that Microsoft overpromises on model quality while underestimating how hard speech and generation edge cases can be in production. Benchmarks and marketing claims do not guarantee real-world success, especially in noisy meetings, specialized terminology, and multilingual environments. There is also the strategic risk that Microsoft’s in-house models are good but not good enough, leaving the company with added complexity and only modest differentiation.

- Benchmark claims may not hold up under real-world enterprise conditions.

- Voice cloning features raise safety, consent, and impersonation concerns.

- Model fragmentation could confuse developers if too many options feel similar.

- Governance gaps could slow deployment in regulated industries.

- Cost savings may be incremental rather than transformative.

- OpenAI dependence may persist for frontier workloads despite the new models.

- Customer expectations may rise faster than product maturity if Microsoft markets the models too aggressively.

Looking Ahead

The next few months will tell us whether MAI-Transcribe-1 is a symbolic milestone or a real platform shift. If Microsoft can demonstrate that its models improve cost, speed, and accuracy in everyday workloads, then Foundry becomes more than a model catalog; it becomes the place where Microsoft gradually internalizes more of its AI stack. If not, the launch will still matter, but mostly as evidence that Microsoft wants control, even if it still leans on partners for the hardest problems.

The most important thing to watch is not whether Microsoft has one model that beats everyone else in a vacuum. It is whether the company can make the full experience better: model selection, deployment, governance, latency, reliability, and product integration. That is where Microsoft has historically been strongest, and also where a platform company can most plausibly build a durable moat in the AI era.

- Real-world transcription demos with meetings, interviews, and noisy audio.

- Pricing and inference economics compared with OpenAI and other partners.

- Integration depth in Copilot and Microsoft 365 across consumer and commercial experiences.

- Security and policy controls around voice generation and brand voice creation.

- Developer adoption in Foundry as a measure of whether customers trust the MAI family.

- Future model releases that show whether Microsoft is building a broad in-house stack or just filling gaps.

Source: Windows Central Microsoft just launched a powerful new AI that can transcribe meetings and audio in seconds