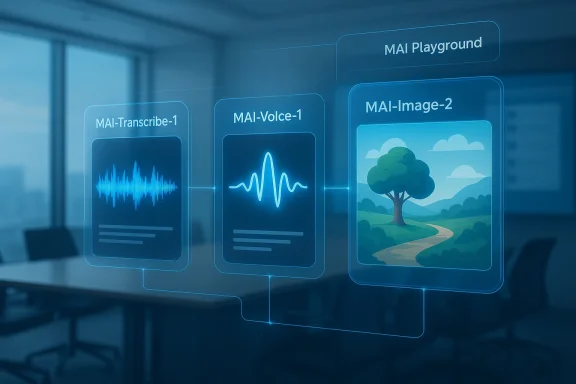

Microsoft’s latest AI push is less about flashy chatbot demos and more about filling the missing pieces of a complete multimodal stack. With MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2, the company is broadening Microsoft Foundry and MAI Playground beyond text into speech, transcription, and image generation. That matters because the real battleground in 2026 is no longer whether AI can answer questions, but whether it can reliably move work across formats, workflows, and products at enterprise scale. Microsoft is now making a clear play to own that end-to-end layer. (news.microsoft.com)

Overview

The headline development is straightforward: Microsoft has moved from a text-first AI posture to a multimodal platform strategy. The company says these new models are available in Microsoft Foundry and MAI Playground, which means developers can test and deploy them in the same ecosystem where Microsoft has been steadily consolidating its AI tooling. That is a meaningful evolution, because model availability is increasingly a distribution story as much as a research story. (news.microsoft.com)

The strongest signal is not just that Microsoft built these models, but that it built them for specific production tasks. MAI-Transcribe-1 is positioned as a multilingual speech-to-text model for real-world audio, while MAI-Voice-1 is designed for natural speech generation and custom voice experiences. MAI-Image-2 rounds out the stack on the visual side, giving Microsoft a more coherent in-house creative pipeline than it had only a year ago.

This is also an ecosystem move. Microsoft has spent the past year pushing Copilot deeper into Microsoft 365, Teams, and Foundry, while simultaneously building out Microsoft AI as a more distinct model and product identity. The new release suggests the company wants to own not just the assistant layer, but the infrastructure beneath the assistant layer. In practical terms, that gives Microsoft more control over quality, pricing, latency, and product integration. (news.microsoft.com)

The timing is important too. Competitors are still racing to prove that multimodal AI can be useful, affordable, and dependable rather than merely impressive. Microsoft’s move suggests the company believes the market is now ready for specialized models that solve production problems, not just general-purpose models that demo well on stage. That distinction is subtle, but strategically decisive.

Why This Release Matters

Microsoft is not simply adding features; it is expanding the number of surfaces where AI can generate value. Transcription, voice generation, and image creation each map to different business workflows, and each can be monetized separately inside enterprise and consumer products. That creates a broader moat than a single general model ever could.

The most obvious beneficiary is Microsoft 365. Meeting transcription, captioning, document summarization, and internal knowledge workflows all become stronger when the underlying speech model is built for noisy, multilingual environments. Voice generation adds another layer for content creation, customer-facing agents, and accessibility use cases. In other words, Microsoft is moving toward a full content supply chain.

From assistant to infrastructure

For years, the narrative around Microsoft AI centered on Copilot. Copilot still matters, but the company is now clearly treating models as infrastructure rather than just product garnish. That is a more durable business position because it lets Microsoft serve developers, OEMs, and enterprises even when end-user interfaces shift. (news.microsoft.com)

- Transcription supports meetings, subtitles, call centers, and voice assistants.

- Voice generation supports audiobooks, podcasts, narrations, and interactive agents.

- Image generation supports marketing, design, productivity, and creative workflows.

- Foundry access lowers friction for developers who want one platform for multiple modalities.

MAI-Transcribe-1 and the Speech-to-Text Race

MAI-Transcribe-1 is the most operationally significant of the three models because transcription is one of the most common and least glamorous AI workloads. Microsoft says the model covers 25 languages and is aimed at noisy, real-world settings rather than clean benchmark audio. That emphasis matters because enterprises do not record perfect studio audio; they record meetings, calls, and field conversations.

Microsoft frames the model as highly accurate and efficient, with a batch transcription speed advantage over existing Microsoft Azure Fast offerings. It is also already being phased into Copilot Voice and Microsoft Teams, which gives it immediate product relevance beyond developer experimentation. This is the kind of rollout that can quietly transform daily usage patterns without a splashy consumer launch.

The enterprise use case is bigger than subtitles

The obvious use case is captioning and transcription, but the deeper value lies in structured knowledge extraction. Once speech is reliably converted into text, downstream systems can search it, classify it, summarize it, and feed it into agents. That turns an audio file into a first-class enterprise asset.

A few implications stand out:

- Meetings become queryable memory rather than disposable audio.

- Customer calls can be analyzed for intent, compliance, and sentiment.

- Education and accessibility workflows become more scalable.

- Voice assistants improve because they depend on accurate transcription upstream.
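As a rough illustration of how a transcript becomes "queryable memory", the sketch below builds a tiny keyword index over timestamped transcript segments. Everything in it is hypothetical: the `Segment` shape, the sample text, and the `build_index` and `search` helpers are assumptions made for illustration and do not reflect the output format of MAI-Transcribe-1 or any Microsoft API.

```python
import re
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    """Hypothetical transcript segment: start time in seconds, speaker, text."""
    start: float
    speaker: str
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase word tokens; a real system would use language-aware analysis."""
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def build_index(segments: list[Segment]) -> dict[str, list[int]]:
    """Map each word to the positions of the segments that contain it."""
    index: dict[str, list[int]] = defaultdict(list)
    for position, segment in enumerate(segments):
        for word in sorted(tokenize(segment.text)):
            index[word].append(position)
    return index


def search(query: str, segments: list[Segment], index: dict[str, list[int]]) -> list[Segment]:
    """Return segments that contain every query word, in original order."""
    words = tokenize(query)
    if not words:
        return []
    hits = set.intersection(*(set(index.get(word, [])) for word in words))
    return [segments[i] for i in sorted(hits)]


# Made-up sample data standing in for a transcribed meeting.
segments = [
    Segment(12.0, "Ana", "The budget review moves to Friday."),
    Segment(47.5, "Ben", "Compliance needs the call recordings by Friday."),
    Segment(63.0, "Ana", "I will send the budget numbers today."),
]
index = build_index(segments)

for hit in search("budget", segments, index):
    print(f"[{hit.start:>5.1f}s] {hit.speaker}: {hit.text}")
```

A production system would more likely layer summarization, classification, or embedding-based retrieval on top of the text, but the principle is the same: once audio is text with timestamps, ordinary search tooling applies.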

MAI-Voice-1 and the Economics of Audio Generation

If transcription is the plumbing, voice generation is the performance layer. MAI-Voice-1 is designed for natural speech, emotional range, and speaker identity preservation, which places it in the crowded but commercially promising market for AI narration and conversational audio. Microsoft says the model can generate 60 seconds of audio in about one second, which is the sort of performance claim that immediately invites both excitement and scrutiny.

The significance here is not merely speed. Audio generation becomes valuable when it is cheap, consistent, and easy to personalize. If Microsoft can deliver high-quality voice output at scale, it can power everything from accessibility features to branded voice assistants to podcasting tools. That broadens the company’s addressable market beyond enterprise productivity into creator tools and media workflows.
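To put the throughput claim in concrete terms, the quoted figure (60 seconds of audio in about one second) corresponds to a real-time factor of roughly 1/60. The sketch below is illustrative arithmetic only: the function names and the 10-hour audiobook workload are assumptions, not Microsoft figures, and it assumes the rate holds constant, which real systems may not do across hardware, batching, and input length.

```python
def real_time_factor(generation_seconds: float, audio_seconds: float) -> float:
    """Seconds of compute per second of audio produced (lower is faster)."""
    if audio_seconds <= 0:
        raise ValueError("audio_seconds must be positive")
    return generation_seconds / audio_seconds


def estimated_generation_seconds(audio_seconds: float, rtf: float) -> float:
    """Estimated wall-clock time to synthesize a clip at a constant RTF."""
    return audio_seconds * rtf


# Claimed figure from the article: about 1 s to generate 60 s of audio.
rtf = real_time_factor(generation_seconds=1.0, audio_seconds=60.0)

# Hypothetical workload: a 10-hour audiobook, assuming the rate stays constant.
audiobook_seconds = 10 * 60 * 60
estimate_minutes = estimated_generation_seconds(audiobook_seconds, rtf) / 60

print(f"Real-time factor: {rtf:.4f}")
print(f"Speed-up over real time: {1 / rtf:.0f}x")
print(f"Estimated time for 10 h of audio: {estimate_minutes:.0f} min")
```

Under those assumptions, ten hours of narration would take on the order of ten minutes of generation time, which is why the claim matters for cost as much as for latency.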

Why voice is a strategic category

Voice is one of the most emotionally sticky interfaces in AI. Users often trust or reject a system based on how it sounds, not just what it says. That makes voice generation a product layer with unusual leverage, because it shapes perceived quality even when the underlying model is similar to competitors’.

The commercial upside is broad:

- Content creators can produce localized narration faster.

- Enterprises can build branded virtual agents.

- Accessibility teams can create more natural assistive audio.

- Training and learning products can generate spoken explanations cheaply.

MAI-Image-2 and Microsoft’s Creative Ambitions

MAI-Image-2 represents Microsoft’s next step toward owning the visual layer of AI creation. Microsoft says it is the company’s most capable image model yet and that it is already available in Microsoft Foundry and MAI Playground. The emphasis on creative quality suggests this is meant to compete in a market where visual polish, prompt adherence, and production speed all matter. (news.microsoft.com)

Microsoft has been building toward this for months. Earlier releases of MAI-Image-1 signaled a willingness to invest in in-house image generation rather than rely entirely on outside model partners. MAI-Image-2 now pushes that strategy forward, suggesting Microsoft sees image generation as too important to outsource in the long run.

Why image matters inside the Microsoft stack

Image generation is not an isolated creative toy in Microsoft’s world. It feeds directly into presentation building, marketing materials, visual storytelling, and product documentation. If integrated into PowerPoint, Bing, or other Microsoft surfaces, it can become one of the most visible consumer-facing demos of the company’s AI stack. (news.microsoft.com)

- PowerPoint could use it for charts, illustration, and slide imagery.

- Bing could use it for search-adjacent creation experiences.

- Copilot could use it for embedded visual responses.

- Enterprise design teams could use it for rapid concept generation.

Foundry, Playground, and Platform Control

The choice to ship these models in Microsoft Foundry and MAI Playground is just as important as the models themselves. Foundry is where Microsoft wants developers to build, evaluate, and deploy AI systems, and the Playground is where they experiment before committing to production. Together, those environments shape how quickly a model can become a product. (news.microsoft.com)

That matters because Microsoft has spent the past year making Foundry feel less like a side project and more like the company’s central AI hub. By bringing in models across speech, voice, and vision, Microsoft reduces the incentive for developers to stitch together external services. The more workloads that stay inside Foundry, the stronger the lock-in and the better the telemetry for Microsoft.

Developer experience is becoming the moat

A model’s technical performance is only part of the story. Developers care about SDKs, deployment simplicity, billing clarity, evaluation tools, and region support. Microsoft has been steadily investing in all of those layers, which makes a new model release more likely to stick.

That is why the platform packaging is so important:

- Developers can test models quickly in the Playground.

- They can deploy them into Foundry-backed workflows.

- Microsoft can then route them into Copilot and adjacent products.

- Enterprise admins get a familiar governance environment.

Competitive Implications: OpenAI, Google, and the Multimodal Squeeze

Microsoft’s move lands in a crowded market, but the competitive picture is changing. OpenAI has been narrowing some of its projects around core products, while Google continues to push efficiency and cost optimization in generative models. Against that backdrop, Microsoft’s in-house stack looks like a bet on breadth, distribution, and enterprise control rather than pure model spectacle.

The most important competitive implication is that Microsoft is reducing dependency. It still has a deep relationship with OpenAI, but it now has enough in-house capability to shape its own roadmap in speech, image, and voice. That gives the company leverage when product priorities diverge or when pricing and access terms become strategic pressure points.

A different kind of AI race

The market used to reward “biggest model wins” headlines. Now it rewards platforms that can ship usable AI into everyday work. That shift favors companies with infrastructure, distribution, and enterprise relationships, which is exactly where Microsoft is strongest. (news.microsoft.com)

The competitive dynamics break down like this:

- OpenAI remains strong on frontier brand and consumer mindshare.

- Google has broad research depth and multimodal integration across its own ecosystem.

- Microsoft has the richest enterprise distribution layer and strong cloud leverage.

Enterprise vs. Consumer Impact

For enterprises, the value proposition is immediate and practical. Better transcription lowers meeting friction, voice generation improves customer service and accessibility, and image generation accelerates content production. These are workflow gains that can be measured in time saved, fewer manual handoffs, and faster content turnaround.

For consumers, the impact is more subtle but potentially more visible. Users may first encounter these models through Bing, Copilot, Teams, or PowerPoint rather than through a standalone model interface. That makes the technology feel less like a research milestone and more like a quiet upgrade to software people already use. (news.microsoft.com)

Different expectations, different risks

Enterprises expect stability, security, and compliance. Consumers expect convenience, quality, and speed. Microsoft has to serve both without letting one side degrade the other. That is a hard balancing act, especially when voice and image generation can create safety concerns if deployed too broadly or too loosely.

There is also a pricing dimension. Enterprise buyers will tolerate premium pricing if the workflow return is clear, while consumer tools need to feel effectively free inside larger bundles. Microsoft’s advantage is that it can cross-subsidize across its product portfolio, something smaller rivals cannot easily do. That may prove to be one of its most underappreciated strengths.

Product Integration and the Next Layer of Copilot

The natural question after a release like this is where the models will surface first. Microsoft has already signaled phased rollouts into Copilot Voice and Teams, and it has repeatedly shown a willingness to inject new model capabilities into existing products rather than ask users to adopt entirely new apps. That makes integration the real product story.

The potential path is clear. Transcription can strengthen meetings, voice can deepen audio experiences, and image generation can improve presentations and visual storytelling. Together, those capabilities could make Copilot less like a single assistant and more like a distributed layer across Microsoft’s productivity stack.

Where users may see the biggest change first

The most likely first-touch experiences are the ones that save time immediately. Meeting transcripts, auto-generated recaps, voice-driven content, and visually enriched slides all fit that category. Users do not need to understand the model names to feel the benefit.

Potential near-term integration points include:

- Teams for transcription and meeting understanding.

- Copilot for voice and multimodal assistance.

- PowerPoint for visual generation.

- Bing for creative and search-adjacent experiences.

Strengths and Opportunities

Microsoft’s release stands out because it combines technical breadth with distribution discipline. It is not just building models; it is building a route to adoption through Foundry, Copilot, Teams, and the rest of the Microsoft stack. That creates a set of strengths that are hard for rivals to replicate quickly. (news.microsoft.com)

- Broad modality coverage across text, speech, voice, and images.

- Strong enterprise distribution through Microsoft 365 and Azure.

- Immediate developer access via Foundry and MAI Playground.

- Product integration potential across Teams, Copilot, Bing, and PowerPoint.

- Better workflow continuity from raw audio to structured output.

- Cross-subsidy power from Microsoft’s cloud and software business.

- Greater strategic independence from external model partners.

Risks and Concerns

The bigger Microsoft’s AI footprint becomes, the more exposed it is to execution risk. Speech and voice systems are especially unforgiving because mistakes are immediately noticeable to users, and failures in high-stakes environments can erode trust fast. Enterprise buyers will also ask hard questions about accuracy, latency, privacy, and governance.

- Hallucinated or inaccurate transcripts can distort decisions.

- Synthetic voice misuse can support impersonation and fraud.

- Image generation bias can create reputational and compliance problems.

- Integration complexity may slow real-world deployment.

- Pricing pressure could limit adoption if costs are not competitive.

- Security and data handling will be scrutinized in regulated sectors.

- Overpromising on speed or quality could backfire if results vary in practice.

Looking Ahead

The next phase will be measured by integration, not announcement volume. If Microsoft begins surfacing these models broadly inside Teams, Copilot, Bing, and PowerPoint, the market will start to see whether this is a genuine platform shift or just another product cycle. The best sign of success will be when the models disappear into the workflow and users simply notice that work got faster. (news.microsoft.com)

The broader industry takeaway is that AI is moving from proof of concept to production utility. That favors companies with large installed bases, deep distribution, and enough capital to absorb the heavy infrastructure costs of multimodal AI. Microsoft fits that profile better than most, which is why this release feels strategically important even if individual model launches can look incremental on paper.

What to watch next:

- Wider rollout of MAI-Transcribe-1 into Microsoft 365 and Teams.

- Product integrations for MAI-Voice-1 in consumer and business audio tools.

- Whether MAI-Image-2 reaches Bing, PowerPoint, or other flagship products.

- Pricing and usage limits inside Microsoft Foundry.

- Any new safety, moderation, or enterprise governance features.

- Whether Microsoft continues reducing reliance on outside model ecosystems.

Source: Laodong.vn Microsoft AI transforms strongly, far ahead of traditional document processing
