Microsoft and OpenAI dropped a carefully worded joint statement that reads less like a press release and more like a legal safety net: despite OpenAI’s headline-grabbing new funding and an expanded Amazon partnership, the companies say the core terms of their multi‑year relationship remain unchanged — notably Microsoft’s exclusive role as the host for stateless OpenAI API calls, continued IP licensing and revenue‑share arrangements, and the existing contractual framework set out in last year’s partnership update.

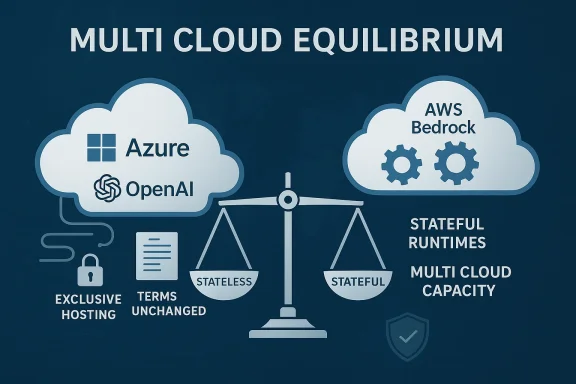

Background

The landscape shifted fast on February 27, 2026: OpenAI announced a record‑scale funding package and a strategic collaboration with Amazon, while Microsoft and OpenAI immediately published a joint clarification intended to keep enterprise customers, partners, and markets steady. The headlines focused on the money (a combined funding round widely reported as roughly $110 billion, with Amazon, NVIDIA and SoftBank among the lead investors), but the corporate counter‑message was procedural: nothing about today’s announcements changes the previously negotiated commercial terms between Microsoft and OpenAI.

Why does that matter? The October 2025 partnership rework, the deal that reshaped equity, licensing and long‑term cloud commitments between the two companies, created a set of commercial and technical guardrails that Microsoft and OpenAI now say continue to govern the relationship. The February joint statement explicitly references that October 2025 blog post and reiterates the most sensitive elements: IP access and license terms for Microsoft, revenue sharing that extends across third‑party collaborations, and Azure’s exclusivity for stateless OpenAI APIs.

What Microsoft and OpenAI actually said

- Microsoft maintains an exclusive license and access to OpenAI’s models and intellectual property.

- The pre‑existing commercial and revenue‑sharing arrangements remain in place and continue to include revenue generated through OpenAI’s partnerships with other cloud providers.

- Azure remains the exclusive cloud provider for stateless OpenAI APIs. The joint statement specifically says that stateless API calls to OpenAI models resulting from any third‑party collaboration (including with Amazon) would be hosted on Azure.

- OpenAI’s first‑party products, including “Frontier” (the enterprise agent/orchestration product referenced in recent announcements), will continue to be hosted on Azure.

Decoding “stateless” vs “stateful”: why the distinction matters

What “stateless API” means in practice

A stateless API call is a single, self‑contained request and response: send a prompt (for example, “Summarize this contract”), get a response, and the system does not retain session memory between calls. These calls are the typical pattern for general model inference and are how developers integrate foundational models into web apps, bots, and many microservice architectures.

By contrast, stateful workloads maintain context across interactions: agent systems, multi‑step workflows, persistent conversation histories, and orchestrated AI agents that coordinate tasks over time require stateful architectures or additional runtime layers that manage context, memory, and long‑lived data. Statefulness can be implemented at the application layer, inside a managed runtime that maintains session objects, or via integrated state stores designed to persist context across calls.
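The difference can be sketched in a few lines of Python. This is an illustration only: `call_model` is a hypothetical stand-in for any stateless inference endpoint (not a real OpenAI or Azure API), and `Session` shows one way state might be kept at the application layer by replaying history on each request.

```python
from dataclasses import dataclass, field


def call_model(messages: list[dict]) -> str:
    """Hypothetical stand-in for a stateless inference endpoint.

    It sees only what is passed in this one request and keeps nothing afterwards.
    """
    last = messages[-1]["content"]
    return f"reply to {last!r} (saw {len(messages)} message(s))"


@dataclass
class Session:
    """Application-layer state: the caller, not the model, remembers the conversation."""

    history: list[dict] = field(default_factory=list)

    def ask(self, prompt: str) -> str:
        self.history.append({"role": "user", "content": prompt})
        # Each underlying call is still stateless; context is replayed explicitly.
        reply = call_model(self.history)
        self.history.append({"role": "assistant", "content": reply})
        return reply


# Stateless: two independent calls with no shared context.
first = call_model([{"role": "user", "content": "Summarize this contract"}])
second = call_model([{"role": "user", "content": "Now shorten it"}])

# Stateful at the application layer: the second call carries the first exchange.
session = Session()
session.ask("Summarize this contract")
followup = session.ask("Now shorten it")
```

In this sketch the endpoint itself never stores anything, yet the user experiences a continuous conversation. That is one reason the stateless/stateful boundary can be hard to draw in production systems.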

The joint statement secures Microsoft’s exclusivity over stateless API hosting, while leaving stateful runtimes and bespoke infrastructure more open for other providers — the kind of environment Amazon said it will target through expanded AWS services (including a “Stateful Runtime Environment” and deeper OpenAI integrations on Amazon Bedrock). This bifurcation is the technical axis of the new multi‑cloud reality.

Practical consequences for developers and enterprises

- If you call the stateless OpenAI model API (a simple inference request), Microsoft says that call will be hosted on Azure even when delivered via a third‑party commercial solution. That implies routing, billing, telemetry and SLA dependencies tied to Azure.

- If you build a stateful agent on AWS Bedrock that composes OpenAI models with long‑running memory, Amazon’s announced partnership suggests those runtime components will run on AWS — but the line between stateful and stateless is often blurred in production systems. Engineers building multi‑step pipelines must understand where each component runs, who controls logs and telemetry, and how egress and latency will be handled across providers.
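One lightweight way to reason about where each component runs is to write the pipeline down as data and look for provider boundaries. The sketch below is a hypothetical example: the step names, provider labels and placements are assumptions for illustration, not a description of how any vendor actually routes traffic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    name: str
    provider: str  # illustrative label such as "azure" or "aws"
    kind: str      # "stateless" or "stateful"


def cross_provider_hops(pipeline: list[Step]) -> list[tuple[str, str]]:
    """Return (from_step, to_step) pairs where consecutive steps sit on different providers."""
    return [
        (a.name, b.name)
        for a, b in zip(pipeline, pipeline[1:])
        if a.provider != b.provider
    ]


# Hypothetical agent pipeline; placements are assumptions for illustration only.
pipeline = [
    Step("load_memory", "aws", "stateful"),
    Step("model_inference", "azure", "stateless"),
    Step("save_memory", "aws", "stateful"),
    Step("audit_log", "aws", "stateful"),
]

hops = cross_provider_hops(pipeline)
for src, dst in hops:
    print(f"cross-cloud hop: {src} -> {dst}")
```

Each hop flagged here is a place to ask who holds the logs, who bills the egress, and how much latency the boundary adds.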

Financial and commercial mechanics: who benefits, who pays

The headlines about a $110 billion funding round and large capital commitments (with Amazon reportedly investing $50 billion, and NVIDIA and SoftBank investing tens of billions more) are attention‑grabbing but require careful parsing. Multiple outlets reported the size and composition of the round, and Amazon and OpenAI described expanded technical and commercial commitments, including multi‑year AWS consumption commitments tied to Trainium chips. Microsoft’s joint statement stressed that its revenue share and IP deals are unchanged and that those arrangements were constructed with third‑party partnerships in mind.

Key commercial points to note:

- Microsoft’s previously negotiated equity stake and revenue arrangements continue to be core to its economic exposure to OpenAI’s growth; those rights were reaffirmed in October 2025’s restructured terms and reiterated in the February 2026 statement. Public reporting has consistently described Microsoft’s economic position as significant and long‑running.

- The joint statement explicitly notes revenue sharing also covers partnerships with other cloud providers, which is Microsoft’s contractual recognition that OpenAI will pursue external infrastructure deals as it scales. That language preserves Microsoft’s financial upside even as OpenAI diversifies compute.

- Amazon’s strategic investment and AWS commitments are framed around stateful enterprise agent products and training/inference capacity on Trainium, not replacing Azure’s role in stateless API hosting. AWS’s role appears aimed at expanding enterprise agent runtime and large‑scale infrastructure capacity.

Technical and product implications

For Azure and Microsoft products

- Microsoft preserves a central integrator role for stateless model access — which means Microsoft remains the primary gateway for the simplest, highest‑volume API interactions that underpin chatbots, webhooks, and many Copilot features. That preserves Microsoft’s leverage for enterprise product integration across Microsoft 365, Teams, GitHub, and Azure services.

- Microsoft’s control of stateless hosting reduces the near‑term risk of developer fragmentation for those core API surfaces; enterprises that rely on Azure SLAs and compliance certifications will see continuity.

For AWS and Amazon’s strategy

- Amazon appears to be targeting stateful runtimes, specialized inference and training scale, and deep commercial integrations for enterprise agent deployments. AWS and OpenAI described plans to build a stateful runtime offered through Amazon Bedrock and to be a distribution partner for OpenAI’s Frontier platform in third‑party clouds. That positions AWS as a provider of production runtime and vertical integration for agent‑style enterprise workflows.

- The technical contours of that stateful runtime — how it handles memory, data residency, governance, and model updates — will determine whether organizations view it as a true alternative to Azure or as a complementary option for specific workloads.

Risks, tensions and open questions

1) The devil is in the definition: what is “stateless” in legal and technical practice?

The joint statement uses stateless as a contractual fulcrum. In practice, many desirable features (session continuity, personalization, retrieval‑augmented generation) are implemented by composing stateless model calls with external state stores. Enterprises need clarity on where hosting obligations and revenue allocations apply when state is split across providers or when third parties implement hybrid runtimes. Expect ambiguous edge cases to generate negotiation and potential disputes.

2) Vendor lock‑in and operational coupling

Enterprises that standardize on Azure for stateless inference will remain tied to Microsoft for many model‑access paths, including the billing, compliance attestations and telemetry necessary for regulated workloads. That dependency could create exposure to future price increases, changes to API terms, or operational constraints, especially because OpenAI’s commercial growth is capital‑intensive and cloud economics are a major part of its runway. The February statement reassures customers but does not eliminate lock‑in risk.

3) Regulatory and antitrust scrutiny

The October 2025 restructuring and Microsoft’s continuing economic entanglement with OpenAI have already drawn regulator attention. Expanding AWS ties to OpenAI and preserving Azure’s stateless exclusivity could invite regulatory questions in multiple jurisdictions about market power, exclusivity clauses and anti‑competitive effects, particularly as cloud providers and AI model suppliers grow into adjacent markets like enterprise software and agent orchestration. Historical precedent suggests competition authorities will watch these developments closely.

4) Cross‑cloud performance, latency and data gravity

Routing stateless API calls through Azure while executing stateful runtimes on AWS or other clouds introduces technical overhead: increased latency, egress costs, complex networking and potential performance variability. For latency‑sensitive applications (real‑time agents, voice assistants, financial trading tools), these cross‑cloud hops could materially affect user experience and total cost of ownership. Architects will need to plan data locality and caching strategies carefully.

5) Intellectual property and governance opacity

The joint statement reaffirms Microsoft’s exclusive intellectual property access, but the long‑term governance of models, model updates, safety controls and AGI‑related clauses remains a subject of industry speculation. The companies explicitly reaffirmed that the contractual definition and process for determining AGI remain unchanged, language that preserves the prior safety and escrow mechanisms but leaves interpretation and independent verification as potential flashpoints.

Market reaction and community response

Industry coverage treated the joint statement as a measured attempt to steady markets and reassure enterprise customers that previously agreed terms remain intact. Journalists and analysts highlighted the strategic choreography: OpenAI’s dramatic funding headline plus Amazon partnership, immediately followed by Microsoft and OpenAI clarifying the contractual boundaries that protect Microsoft’s core commercial interests.

At the community level, WindowsForum threads and other industry forums lit up with debate: some community voices framed the move as a pragmatic multi‑cloud normalization, while others warned about the erosion of Microsoft’s exclusive compute leverage. Those discussions reflect real operational concerns from admins and developers who must plan deployments across divergent cloud architectures.

What enterprises should do now: an operational playbook

Enterprises planning or already running AI systems tied to OpenAI models should treat this moment as an inflection point and act accordingly.

- Map workloads and classify them as stateless or stateful. Prioritize migration or containment strategies for latency‑sensitive flows.

- Revisit contracts and SLAs. Confirm how your vendor agreements address multi‑cloud routing, egress costs, telemetry access, and compliance evidence. Consider adding explicit language that clarifies where stateful vs stateless pieces run and who bears the operational cost.

- Architect for portability. Implement abstraction layers (model adapters, API gateways, and standardized data schemas) to make it easier to swap model providers or move runtime components between clouds.

- Test cross‑cloud performance. Run end‑to‑end tests that simulate real user loads and evaluate latency, cost and failure modes when parts of the stack cross provider boundaries.

- Strengthen governance and access controls. Confirm that audit trails, model‑update logs, and content moderation pipelines meet internal and regulator requirements regardless of hosting topology.
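The portability item in the playbook above can be illustrated with a small adapter pattern. This is a minimal sketch under stated assumptions: `ModelBackend`, `AzureHostedBackend`, `AlternateBackend` and `summarize` are hypothetical names, and the adapters return placeholder strings where a real implementation would wrap a vendor SDK.

```python
from typing import Protocol


class ModelBackend(Protocol):
    """The only interface application code is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class AzureHostedBackend:
    """Placeholder adapter; a real one would wrap the vendor SDK here."""

    def complete(self, prompt: str) -> str:
        return f"[azure-hosted] {prompt}"


class AlternateBackend:
    """Second placeholder adapter, for example another provider or model."""

    def complete(self, prompt: str) -> str:
        return f"[alternate] {prompt}"


# Backends are registered by name so that selection is a configuration concern.
BACKENDS: dict[str, ModelBackend] = {
    "primary": AzureHostedBackend(),
    "fallback": AlternateBackend(),
}


def summarize(text: str, backend_name: str = "primary") -> str:
    """Application code selects a backend by name and never imports a vendor SDK."""
    backend = BACKENDS[backend_name]
    return backend.complete(f"Summarize: {text}")
```

Because callers depend only on `ModelBackend`, swapping providers becomes a matter of registering another adapter rather than rewriting application code.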

Competitive and strategic implications for cloud vendors

- Microsoft: Preserving exclusivity over stateless APIs secures Microsoft’s role as the primary distribution gateway for foundational model access — a high‑volume, sticky revenue stream that integrates tightly with Microsoft 365 and Copilot products. That positioning preserves Azure’s importance to enterprise customers who prioritize compliance and an integrated Microsoft stack.

- Amazon: By investing heavily and focusing on stateful runtimes, AWS seeks to dominate the orchestration and production runtime layer for complex, persistent AI systems. If AWS successfully establishes itself as the production runtime for agentic or domain‑specific AI, the cloud war will be fought on the turf of operational tooling, data pipelines and enterprise integration.

- NVIDIA, SoftBank and chip partners: Investments and commitments to provide training/inference capacity (on GPU or Trainium architectures) are a parallel axis of competition. Control over specialized silicon and inference capacity will constrain which providers can economically run frontier models at scale.

- Other vendors (Google Cloud, Anthropic, Oracle, AMD, etc.): Expect further strategic tie‑ups, sovereign cloud offerings and verticalized stacks aimed at customers with strict data residency, compliance or performance needs. The market is accelerating toward a multi‑vendor equilibrium where choice is defined by workload type, regulatory requirements and commercial terms.

Legal and policy watch‑list

- Regulators: Antitrust agencies in multiple jurisdictions will be watching whether exclusivity clauses materially foreclose competition or harm customers. The October 2025 restructuring attracted regulatory attention; renewed cross‑cloud deals will likely sustain that scrutiny.

- Cross‑cloud architectures complicate data residency guarantees. Organizations subject to strict regional controls must verify that routing rules and data storage locations meet local law.

- AGI definitions and triggers: The joint statement reiterates that the contract definition of AGI and associated triggers are unchanged. Companies and policy makers should seek clarity about independent verification, governance triggers and contingency plans should claims about AGI arise. Ambiguity here could create legal and ethical complexity in the future.

Bottom line: a recalibrated equilibrium, not a simple divorce

The joint statement from Microsoft and OpenAI is simultaneously reassuring and revealing. On one hand, it preserves the commercial and technical continuity many enterprises demanded after OpenAI’s sudden broadening of partners and capital sources. On the other hand, the document codifies a practical division of labor: Microsoft retains the high‑volume stateless gateway and enduring IP/revenue ties, while OpenAI can expand infrastructure partnerships and scale compute capacity through third‑party providers like Amazon and NVIDIA for stateful runtimes and large training/inference fleets.

That division is strategically sensible: it lets OpenAI accelerate infrastructure scale and diversify supply, while Microsoft keeps the distribution channel and enterprise integration that underpin its Copilot and Azure ambitions. But it also creates operational complexity and potential points of friction for enterprises that must now plan around hybrid hosting, cross‑cloud latency and layered governance.

For IT leaders, the takeaway is clear: the future will be multi‑cloud and multi‑model, but the path there will be defined by contractual clarity, technical abstraction and rigorous testing. The joint statement preserves important guarantees — but it also highlights the pragmatic reality that operational design, not press releases, will determine which vendor wins in any specific enterprise workload.

Final recommendations (quick checklist)

- Confirm where your applications call OpenAI models and whether those calls are stateless or stateful.

- Review vendor contracts for routing, billing, and SLA language that references third‑party partnerships.

- Build abstraction layers and adapter patterns so model backends can be swapped with minimal friction.

- Run cross‑cloud performance and failure‑mode tests under realistic loads.

- Monitor regulatory developments closely; new frameworks or inquiries could change terms or impose new compliance requirements rapidly.
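For the performance item in the checklist, a starting point might be a small timing harness that reports median and tail latency. The sketch below uses only the Python standard library. `fake_cross_cloud_call` is a hypothetical stand-in that simply sleeps, so a real test would replace it with an actual end-to-end request.

```python
import statistics
import time
from typing import Callable


def measure_latency(call: Callable[[], object], runs: int = 50) -> dict[str, float]:
    """Time `call` repeatedly and report median and 95th-percentile latency in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000.0)
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    p95 = statistics.quantiles(samples, n=20)[-1]
    return {"p50_ms": statistics.median(samples), "p95_ms": p95}


def fake_cross_cloud_call() -> None:
    """Hypothetical stand-in for a request that crosses provider boundaries."""
    time.sleep(0.002)


report = measure_latency(fake_cross_cloud_call, runs=20)
print(report)
```

Comparing the p95 figure for a single-provider path against a cross-provider path is usually more informative than comparing averages, since tail latency is what users tend to notice.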

Source: The Tech Buzz https://www.techbuzz.ai/articles/microsoft-and-openai-reaffirm-exclusive-partnership-terms/