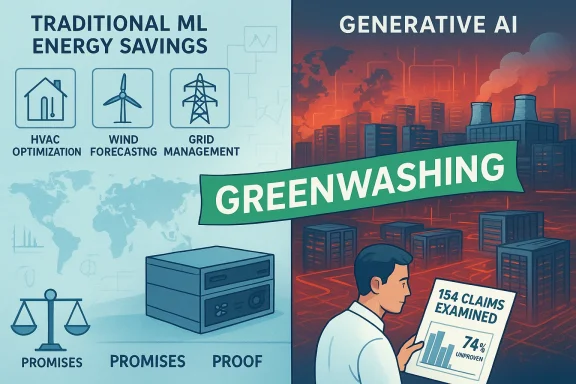

A damning new analysis by climate and energy researcher Ketan Joshi — backed by a coalition of environmental groups — accuses major tech companies of deliberately conflating low‑energy, long‑established machine‑learning techniques with the new, energy‑hungry wave of generative AI in order to present an upbeat, oversold narrative: that AI will “save the planet.” The report, which reviewed 154 prominent claims from industry reports and international agencies, concludes that roughly three‑quarters of these statements lack robust evidence. Even more strikingly, the analysis found no verified example where consumer generative systems such as Google’s Gemini, Microsoft’s Copilot, or OpenAI’s ChatGPT have produced material, verifiable, and substantial emissions reductions. The consequence is not merely puffery — according to Joshi and allied researchers, it is a form of modern greenwashing that masks the very real environmental and community harms of rapid data‑centre expansion.


Source: The Cool Down, “New report uncovers dangerous tactics used by Google and Microsoft to promote their products: 'Serves as a distraction'”

Background


The debate unfolded against a backdrop of accelerating data‑centre builds, growing grid stress, and intensifying local pushback. Data centres, the physical plants that power cloud services and AI, are electricity‑intensive and often water‑intensive operations. As the industry pivots to support large language models (LLMs) and multimodal generative systems, power demand has surged and, with it, scrutiny from regulators, utilities and communities.

Public resistance is now a political force. Reports from 2025–2026 show a sharp rise in cancelled or contested data‑centre projects, with community opposition cited as the proximate cause in scores of cases. Policymakers and advocates cite concerns ranging from noise and air pollution to water withdrawals for cooling and the long‑term strain on aging transmission systems. At the same time, Big Tech is publicly committing to renewables and even nuclear power to backstop expected demand, a move that has drawn both praise and skepticism.

This article unpacks the new report’s central findings, lays out the technical realities behind different AI workloads, highlights where corporate claims and the evidence diverge, assesses the environmental and social risks of current deployment choices, and proposes concrete steps for policy, industry and civil society to align AI’s growth with real climate responsibility.

What the new report actually found

A large sample, a stark conclusion

The analysis examined 154 public statements and claims, drawn from corporate sustainability reports, spokespeople, and influential institutions, that framed AI as a net climate benefit. The headline finding: about 74% of those claims were unproven or supported by weak evidence. Only about a quarter cited peer‑reviewed research. A sizable fraction relied on internal white papers, vague case studies, or no citation at all.

Beyond the raw percentages, the authors identified a recurring rhetorical pattern: the broad, ill‑defined use of “AI” to mean everything from small predictive models used in grid management to sprawling generative systems that power image and video creation. That conflation enables companies to point to modest wins in narrow, efficient use cases while glossing over the outsized environmental footprint of the infrastructure needed for modern generative AI.

No documented wins from mainstream generative systems

The report’s most provocative claim, and the one getting the most public attention, is that the analysis did not find a single verified instance where widely deployed generative AI tools led to material, verifiable emissions reductions. This is not a claim that AI cannot, in principle, reduce emissions; rather, it is an evidence‑driven observation: the current public record does not show generative AI delivering the kind of measurable, large‑scale decarbonisation some corporate statements imply.

Evidence is skewed toward “traditional” AI

Where verifiable benefits were documented, they were overwhelmingly tied to what the report calls traditional AI: targeted machine‑learning models used for specific optimisation tasks such as improving HVAC controls, predicting wind power output, or reducing idle time in industrial processes. These systems are typically much smaller, less compute‑intensive, and easier to evaluate against concrete baselines.

By contrast, generative AI applications (text, image, video generation and certain types of “reasoning” services) are far more compute‑intensive. The report cautions that treating the two as interchangeable in headline claims is misleading and enables a kind of bait‑and‑switch when companies present an aggregate climate narrative.

The technical difference that matters: traditional ML vs generative AI

What counts as “traditional” machine learning

Traditional machine‑learning systems generally solve narrow tasks with relatively compact models. Examples include:

- Forecasting short‑term wind or solar generation with regression models or small neural nets.

- Detecting anomalies in grid telemetry to avoid outages.

- Optimising building HVAC schedules via reinforcement learning or rule‑based tuning.

What makes generative AI different — and costlier

Generative AI, especially large language models and state‑of‑the‑art multimodal systems, is different in three fundamental ways:

- Scale: Modern LLMs contain billions to trillions of parameters and are trained on terabytes to petabytes of text, images, and other data. Training requires sustained, high‑performance GPU/TPU clusters running for days or weeks.

- Inference intensity: Many consumer services run millions to billions of inference queries per day. For complex queries (long text generation, high‑resolution video creation), per‑call compute and memory demands spike.

- Operational footprint: Hyperscale deployment requires dense racks, sophisticated cooling (often water‑assisted), uninterruptible power supplies, and large onsite electrical infrastructure. These elements compound both electricity and, in some regions, water consumption.
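How per‑query cost and query volume compound can be seen in a back‑of‑the‑envelope calculation. The sketch below is illustrative only: the function name and every number in it are placeholder assumptions chosen to show how the terms multiply, not measurements from the report or from any provider.

```python
def daily_inference_energy_mwh(queries_per_day: float, wh_per_query: float) -> float:
    """Return fleet-wide inference energy in MWh per day.

    Both inputs are assumptions supplied by the caller; no provider
    publishes these figures in a standard form.
    """
    if queries_per_day < 0 or wh_per_query < 0:
        raise ValueError("inputs must be non-negative")
    return queries_per_day * wh_per_query / 1_000_000  # convert Wh to MWh


# Hypothetical inputs: same traffic, two very different per-query costs.
small_model = daily_inference_energy_mwh(queries_per_day=100_000_000, wh_per_query=0.01)
large_model = daily_inference_energy_mwh(queries_per_day=100_000_000, wh_per_query=1.0)

print(f"compact model: {small_model:.1f} MWh/day")
print(f"large model:   {large_model:.1f} MWh/day")
```

With identical traffic, the total scales linearly with the per‑query figure, which is why published per‑query estimates matter for any fleet‑level claim.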

Where corporate claims fall short — and common tactics identified

The report documents several recurring promotional techniques that weaken the evidentiary value of corporate climate claims:

- Semantic conflation: Lumping “AI” benefits drawn from small, traditional applications together with generative AI development to suggest an aggregate net benefit.

- Selective case studies: Highlighting a handful of high‑profile pilots (e.g., AI that optimised a factory’s energy use by a few percent) while ignoring the much larger emissions associated with the company’s expanding AI cloud footprint.

- Misleading accounting: Favouring adjusted metrics that rely on procurement of offsite renewables, credits, or hypothetical future technologies to offset current fossil‑fuel consumption without demonstrating real‑world, location‑based emissions reductions.

- Future‑tense promises: Emphasising projected efficiencies and speculative breakthroughs (e.g., “AI will enable gigatonne‑scale reductions by 2030”) without disclosing the assumptions and sensitivities undergirding such projections.

The real harms: communities, grids and climate

Local environmental impacts

Data centres generate localized environmental and quality‑of‑life impacts that communities increasingly cite when opposing new builds:

- Noise: Mechanical cooling systems and backup generators can create persistent noise around 24/7 facilities.

- Air pollution: Backup diesel generators and nearby gas‑fired peaker plants used for grid support emit NOx, particulate matter and other pollutants.

- Water use: In water‑stressed regions, evaporative and closed‑loop cooling approaches still require nontrivial water volumes, intersecting with local supply constraints.

- Land use and local tax base distortions: Rapid, large footprint deployments change land availability and local service demands without always delivering commensurate long‑term local employment.

Grid stress and economic impacts

Rapid load growth from clustered data‑centre expansion strains transmission and distribution networks. Utilities and ratepayers often shoulder costs of grid upgrades, and in some regions the build‑out contributes to rising electricity prices and congestion during peak demand. The mismatch between where data centres want to locate and where low‑carbon power is available turns into a political and regulatory flashpoint.

Climate and lifecycle risks

Even if a data centre operates on contracted renewables, lifecycle emissions remain: manufacturing servers and chips consumes energy and raw materials; mining and refining critical minerals (copper, aluminium, rare earths) has environmental costs; and end‑of‑life e‑waste handling remains a weak link in many markets. Add rebound effects (AI enabling more energy‑intensive processes because they become cheaper or more efficient) and the net climate balance becomes even less certain.

What companies are doing, and why that doesn’t settle the debate

Many hyperscalers have responded to scrutiny by doubling down on clean energy procurement and publicly backing advanced technologies like small modular reactors (SMRs) and, in some cases, fusion PPAs. Those initiatives are significant: long‑term power purchase agreements for wind and solar, investments in grid‑scale storage, and exploratory deals with nuclear developers can provide low‑carbon energy and capacity that help decouple data‑centre growth from fossil fuels.

But there are several caveats:

- Timing mismatch: Large clean projects and SMRs take years to deploy; meanwhile, demand is growing now. Interim power often still comes from gas or coal.

- Accounting gaps: Procuring renewables via offsite PPAs does not necessarily reduce the marginal fossil generation on a constrained grid at the time of a data‑centre’s peak demand.

- Supply chain and footprint: Clean energy contracts do not erase embodied emissions in hardware manufacturing or the water impacts of cooling systems.

- Political and social risk: Nuclear projects bring their own siting controversies and regulatory hurdles, and are unlikely to be a universal local solution.
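The accounting gap in the list above comes down to when clean energy is counted. A minimal sketch, assuming a made‑up four‑interval profile and hypothetical function names, compares matching over a whole period with matching interval by interval:

```python
def period_match(load_mwh, clean_mwh):
    """Share of load covered when clean energy is netted over the whole period."""
    total_load = sum(load_mwh)
    if total_load <= 0:
        raise ValueError("total load must be positive")
    return min(sum(clean_mwh), total_load) / total_load


def time_matched(load_mwh, clean_mwh):
    """Share of load covered when clean energy only counts in the interval it is generated."""
    if len(load_mwh) != len(clean_mwh):
        raise ValueError("profiles must have the same number of intervals")
    total_load = sum(load_mwh)
    if total_load <= 0:
        raise ValueError("total load must be positive")
    matched = sum(min(load, clean) for load, clean in zip(load_mwh, clean_mwh))
    return matched / total_load


# Hypothetical profile: flat data-centre load, contracted supply that peaks mid-period.
load = [10, 10, 10, 10]
clean = [0, 20, 20, 0]

print(period_match(load, clean))   # 1.0: volumes balance over the period
print(time_matched(load, clean))   # 0.5: half the load had no matching clean supply
```

The same contract reads as fully matched under the first method and half matched under the second. In this toy profile, the unmatched intervals are the ones in which the grid would have to supply the facility from whatever generation is available at the time.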

Why a more rigorous approach matters

The implications are practical and urgent. If widespread data‑centre expansion continues under a greenwashed narrative, the result may be:

- Greater reliance on fossil generation during transition periods, locking in emissions.

- Local conflicts that slow deployments and add costs.

- Diversion of climate policy attention away from more impactful sectors and solutions.

- Misallocation of public subsidies and incentives toward projects that do not deliver commensurate climate benefits.

How to restore truthfulness and get to real climate outcomes

The report and subsequent analysis point to a set of policy, corporate and civil‑society actions that would improve accountability and guide the sector toward genuine climate mitigation.

For policymakers and regulators

- Require standardized disclosures for AI and data‑centre emissions that mirror established reporting frameworks and are scoped to include training, inference, embodied hardware emissions and water intensity.

- Demand location‑based, time‑matched accounting for renewable procurement (so that claimed “clean” power corresponds to actual system impacts when the facility operates).

- Enforce community engagement and impact assessments for new data‑centre permits, including cumulative impacts on water, air quality, noise and grid reliability.

- Consider conditional approvals or moratoria in regions where grid upgrades or water availability have not been concretely planned or financed.

For companies and cloud providers

- Publish model‑level and workload‑level energy and water metrics: training energy use, expected per‑query inference cost, and typical query volumes for public products.

- Commission and publish independent third‑party audits of claimed emissions savings and offset arrangements.

- Prioritise efficiency at the software and model architecture level: sparsity, quantisation, and model‑distillation techniques can substantially reduce inference costs.

- Offer “right‑sized” compute tiers: encourage smaller, specialist models where appropriate instead of defaulting to large LLMs for every task.

- Align procurement with local grid decarbonisation efforts rather than relying exclusively on distant PPAs.
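Of the efficiency techniques named above, quantisation is the simplest to show in a few lines. The sketch below assumes symmetric 8‑bit quantisation with a single shared scale, applied to a handful of made‑up weights. It illustrates the arithmetic only; production toolchains differ, and any energy saving depends on hardware support that this example does not measure.

```python
def quantise_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantised = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantised, scale


def dequantise(quantised, scale):
    """Recover approximate float values from the integer codes."""
    return [q * scale for q in quantised]


# Hypothetical weights, not taken from any real model.
weights = [0.5, -1.27, 0.003, 1.0]
q, scale = quantise_int8(weights)
restored = dequantise(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)                      # integer codes, each storable in one byte
print(max_error <= scale / 2 + 1e-12)  # rounding error is bounded by half a step
```

Stored as 8‑bit integers rather than 32‑bit floats, the same weights need a quarter of the memory, at the cost of a bounded rounding error. Whether that error is acceptable is a per‑task judgement, which is why the recommendation is framed around required accuracy.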

For researchers and auditors

- Develop agreed‑upon measurement protocols for AI lifecycle emissions that include hardware manufacturing, data‑centre operations, and software development.

- Produce reproducible, open benchmarks for inference energy per query across representative tasks and model sizes.

- Partner with civil society to ensure methodologies are accessible and not obscured behind proprietary claims.

For communities and advocates

- Demand transparency and enforceable community benefit agreements tied to measurable environmental outcomes.

- Push for cumulative impact assessments rather than siloed, project‑by‑project reviews.

- Advocate for public investment in grid upgrades and equitable energy planning so communities don’t absorb disproportionate costs.

Practical steps companies can take today (a short checklist)

- Publish per‑model energy estimates for training and typical inference workloads.

- Disclose exact renewable procurement instruments, timing and location; avoid misleading “net‑zero” language that hides remaining marginal emissions.

- Test and deploy model compression and adaptive inference techniques that drastically cut compute without sacrificing required accuracy.

- Prioritise edge deployment for low‑latency, low‑energy tasks rather than always routing workloads to hyperscale cloud instances.

- Commission independent lifecycle analyses (including hardware manufacturing) and make summaries publicly available.

Strengths and limits of the new report

The report’s strengths are clear: it applies a consistent evidentiary standard across a broad set of public claims and names specific failures of logic and documentation. That fills an important gap in public debate, which has often been dominated by corporate messaging and forward‑looking scenarios.

At the same time, the report has limits that readers should note. It analyses public‑facing statements and documentation; it does not contain proprietary energy data that only companies or utilities might hold. The absence of a documented, material emissions reduction from generative AI in public records is therefore a powerful and valid finding, but not definitive proof that such benefits are impossible. Rather, it underlines the present lack of verifiable evidence and the need for industry transparency.

A second methodological caveat is scope: lifecycle analyses are complex and sensitive to boundary choices. Comparing the carbon impact of a generative AI deployment against the avoided emissions from an optimisation use case requires careful, standardized assumptions; when those assumptions are not disclosed, apples‑to‑apples comparisons are impossible.

Where the report excels is in exposing that ambiguity and in calling for standardization and verification rather than optimistic storytelling.
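The sensitivity to boundary choices can be illustrated with a toy calculation. The function name and all figures below are hypothetical; the only point is that the same deployment can look like a net reduction or a net addition depending on whether embodied hardware emissions fall inside the boundary.

```python
def net_emissions_tco2e(avoided, operational, embodied=0.0, include_embodied=True):
    """Return net emissions in tCO2e.

    Positive means the deployment adds emissions on balance;
    negative means it reduces them.
    """
    footprint = operational + (embodied if include_embodied else 0.0)
    return footprint - avoided


# Hypothetical figures for one deployment, assessed under two boundaries.
narrow = net_emissions_tco2e(avoided=100, operational=80, embodied=50, include_embodied=False)
full = net_emissions_tco2e(avoided=100, operational=80, embodied=50, include_embodied=True)

print(narrow)  # negative: looks like a net reduction
print(full)    # positive: net addition once hardware is counted
```

Unless a claim states which terms it includes, two analysts can start from the same facts and report opposite signs.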

The road forward: reality, not rhetoric

AI and machine learning have real potential to assist the energy transition: in forecasting demand, optimising renewables integration, aiding grid resilience and improving industrial efficiency. Those benefits should be pursued vigorously. But the path from potential to impact requires rigorous measurement, transparent accounting, and a realistic conversation about trade‑offs.

The industry, regulators and civil society must separate hopeful projection from demonstrable benefit. That means demanding traceable, time‑sliced, location‑specific evidence for claims that AI will materially reduce emissions at scale. It also means rethinking deployment choices: favouring efficiency, smaller models for appropriate tasks, and distribution of compute in ways that reduce environmental stress and respect local environmental constraints.

Generative AI is not inherently a climate solution — it can be a tool in the toolbox, but only if its owners and operators are transparent about costs, accountable for impacts, and committed to rigorous verification. Anything less risks converting an exciting set of technologies into a rhetorical smokescreen that obscures hard choices and delays genuine climate action.

Conclusion

The new report is a wake‑up call: it challenges the tidy narrative that AI, treated as a single, uniform technology, will effortlessly offset the environmental costs of its own expansion. The evidence to date shows real, narrow wins from traditional, small‑scale ML. It does not show, in public records, that mainstream generative systems are delivering material emissions reductions that meaningfully counterbalance the surging energy demands they create.

That reality should change how policy is crafted, how communities are consulted, and how tech companies account for their climate impacts. The choice before the sector is stark: pursue a mature, transparent approach that measures and minimises real impacts, or continue to rely on broad, unverified claims that invite regulatory backlash, community resistance, and a deeper credibility crisis. Climate policy and corporate reputations will both be better served if the next chapter of AI growth is rooted in accountability, measurable progress, and a willingness to prioritise verifiable climate outcomes over optimistic marketing.
