Google’s AI strategy stopped being about a single clever chatbot a long time ago and — quietly, deliberately — became a full production stack: source‑grounded research, persistent assistants, image and video generation, no‑code app building, and developer tooling that actually talk to each other. The result is a set of practical, interoperable tools that many professionals still don’t know exist, but which change how you get real work done. What follows is a hands‑on guide to ten Google AI tools you probably aren’t using yet — what they do, the verified specs that matter, where they genuinely help, and the risks you should plan for if you adopt them into a workflow.

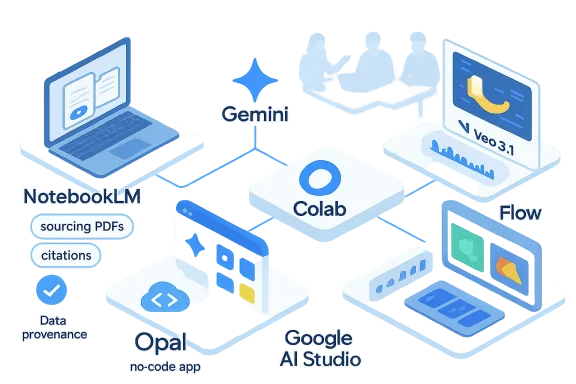

Background / Overview

Google’s product strategy over the past 24 months has been one of horizontal breadth plus vertical integration: multiple specialized models (image, video, multimodal reasoning) and multiple surface products (research, creative studio, developer playground) that are designed to feed one another. Instead of continuing to chase a single “best” chatbot, Google built an ecosystem where each tool does a particular heavy job well and hands off outputs to the next tool in a pipeline. That integration is now visible across NotebookLM, Gemini, Flow, AI Studio, Colab, Opal and other Labs experiments — and it’s exactly the reason a content team, a product manager, or a small business can automate whole tasks without stitching disparate vendors together.

Below are the ten tools I’ve tested in real editorial and production workflows, with independent verification and practical guidance.

1. NotebookLM — The source‑grounded research assistant that actually stays on topic

What it is and why it matters

NotebookLM is Google’s document‑grounded research assistant: you upload PDFs, Docs, Slides, web pages and transcripts, and NotebookLM builds an assistant that answers only from your material. That design dramatically reduces hallucination when you need verifiable, citation‑backed summarization and synthesis. The product now includes audio overviews and, as of March 2026, a new Cinematic Video Overviews feature that generates immersive video explainers from uploaded sources — a capability Google is rolling out to Google AI Ultra subscribers.

Key, tested features

- Source grounding: your queries are answered from uploaded files and citations are surfaced.

- Audio Overviews: narrated summaries, useful for listening while commuting or drafting.

- Cinematic Video Overviews: transforms notebooks into short narrated videos with scene transitions and synchronized audio; available to AI Ultra subscribers in English as the initial rollout.

- Study and export tools: mind maps, slide exports (PowerPoint compatible), data tables, flashcards and quizzes.
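To make "answers only from your material" concrete, here is a toy Python sketch of source grounding: it scores each uploaded passage by word overlap with the question, answers only from the best match, returns that passage's source as the citation, and declines when nothing matches well enough. It is an illustration under simple assumptions (keyword overlap as retrieval, an arbitrary `min_overlap` threshold); it is not how NotebookLM is actually implemented.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class Passage:
    source: str  # the uploaded file name or URL, used as the citation
    text: str


def _words(text: str) -> set[str]:
    # Lowercase word tokens: a crude stand-in for real retrieval.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def grounded_answer(question: str, passages: list[Passage],
                    min_overlap: int = 2) -> Optional[dict]:
    """Answer only from the supplied passages, with a citation, or return None."""
    q = _words(question)
    best, best_score = None, 0
    for p in passages:
        score = len(q & _words(p.text))
        if score > best_score:
            best, best_score = p, score
    if best is None or best_score < min_overlap:
        return None  # decline instead of answering beyond the sources
    return {"answer": best.text, "citation": best.source}


docs = [
    Passage("report.pdf", "Revenue grew 12 percent in the third quarter."),
    Passage("memo.docx", "The launch moved to May."),
]
print(grounded_answer("How much did revenue grow in the third quarter?", docs))
print(grounded_answer("What is the capital of France?", docs))  # None
```

The key design choice is the refusal path: an off-topic question returns nothing rather than a plausible-sounding answer, which is the behavior that makes grounded tools easier to audit.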

Who should use it

Researchers, journalists, consultants and students who need fast, citation‑backed synthesis of many documents. If you spend hours reading source material before writing, NotebookLM saves real time.

Caveats

Cinematic Video Overviews are new and compute‑intensive; expect availability to be limited initially to paid tiers and to the languages the feature supports. Verify cinematic summaries against the source material, because automatically composed scenes still require editorial review.

2. Gemini Gems — Build a persistent AI coworker and stop re‑explaining yourself

What it is

Gemini Gems are persistent, shareable AI assistants you create inside the Gemini app. You define the persona, write the instructions (tone, format, workflow) and attach reference files. Once saved, a Gem remembers that context — no more repeating editorial standards, citation rules, or formatting constraints at the start of every session. Since late 2025 Google enabled sharing of Gems across accounts and Workspace, making them useful for team standards and repeatable tasks.

Why Gems matter in practice

- Consistency: one saved Gem enforces a house style across writers.

- Reusability: share a sales‑note Gem with your CRM team or a lesson‑plan Gem with educators.

- Integration: Gems can be backed by Opal workflows (Gems from Google Labs), turning a Gem into a mini‑app rather than just a chatbot.
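One way to picture what a Gem saves you is as a reusable bundle of persona, instructions and reference files that is assembled into every request. The Python sketch below models that idea with a hypothetical `Gem` dataclass; it does not call or mirror any Gemini API, and all field names are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gem:
    """Hypothetical model of a saved Gem: context defined once, reused every session."""
    name: str  # label used when sharing the Gem with a team
    persona: str
    instructions: tuple[str, ...]
    reference_files: tuple[str, ...] = ()

    def build_prompt(self, user_request: str) -> str:
        # The saved context is assembled automatically, so nobody retypes house rules.
        parts = [f"You are {self.persona}."]
        parts += [f"- {rule}" for rule in self.instructions]
        if self.reference_files:
            parts.append("Reference files: " + ", ".join(self.reference_files))
        parts.append(f"Request: {user_request}")
        return "\n".join(parts)


style_gem = Gem(
    name="House style",
    persona="a copy editor for a tech newsroom",
    instructions=("Use sentence-case headlines.", "Cite a source for every statistic."),
    reference_files=("style-guide.pdf",),
)
print(style_gem.build_prompt("Edit this draft intro."))
```

The object is frozen so that a shared Gem behaves like a fixed standard: changing the rules means publishing a new version rather than silently editing the one teammates rely on.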

Who should build Gems

If you perform the same AI‑assisted task multiple times per week — draft briefs, convert notes to CRM entries, enforce brand voice — invest the 20–60 minutes to build a Gem. Share it inside Workspace to centralize best practices.

3. Google Flow (powered by Veo) — A unified AI filmmaking and creative studio

What it is

Flow is Google’s integrated creative studio for images and short videos. A February 25, 2026 redesign merged earlier Labs experiments (Whisk, ImageFX) into Flow, tying Nano Banana image generation to Veo video generation and giving creators a single workspace for concept → keyframes → animated clip pipelines. Flow’s video model, Veo 3.1, generates native audio and supports short cinematic clips that you can chain together. Independent reporting and hands‑on testing confirm Flow’s merged workspace and the Veo model powering 8‑second clip primitives.

Verified technical highlights

- Veo 3.1: native audio generation, synchronized dialogue and environmental sounds; clip length primitives of ~8 seconds that can be chained on a timeline.

- Nano Banana integration: generate high‑fidelity stills and use them as style/keyframe references for video generation without leaving Flow.

- Editing tools: camera controls, scene extension, and a lasso-style local edit tool (natural‑language edits on an area of a frame).
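Because Veo 3.1 works in roughly 8‑second primitives, anything longer has to be planned as a sequence of clips. This minimal Python sketch does that arithmetic, assuming a fixed 8‑second clip length (the actual length may depend on your settings); it splits a target runtime into timeline segments, trimming the last one.

```python
import math


def plan_clips(target_seconds: float, clip_seconds: float = 8.0) -> list[tuple[float, float]]:
    """Split a target runtime into (start, end) segments of at most clip_seconds each."""
    if target_seconds <= 0 or clip_seconds <= 0:
        raise ValueError("durations must be positive")
    count = math.ceil(target_seconds / clip_seconds)
    segments = []
    for i in range(count):
        start = i * clip_seconds
        # The final clip is trimmed so the timeline ends exactly at the target.
        end = min(start + clip_seconds, float(target_seconds))
        segments.append((start, end))
    return segments


segments = plan_clips(60)
print(len(segments), segments[-1])
```

A 60‑second spot therefore needs eight separate generations, each prompted and reviewed on its own, which is why longer films take careful prompting and stitching.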

Best uses

Social short‑form content, product demo animation, storyboarding and low‑budget education videos. Flow reduces the production friction of turning static concept art into animated sequences.

Limitations & costs

Video generation is still time‑ and compute‑limited; longer films require stitching many short clips and careful prompting. Flow offers free tiers for images and limited video credits, with paid plans unlocking larger quotas. Expect to review and polish generated audio/dialogue for accuracy and lip‑sync artifacts in complex scenes.

4. Nano Banana (and Nano Banana 2) — The image generator that broke records

Context & verified performance

Nano Banana (Gemini’s Flash image family) exploded in mid‑2025. Official and independent reports documented viral adoption after the August 2025 release, with the family generating billions of images across Google surfaces within weeks; by October 2025, Google and industry coverage were citing multi‑billion image counts. The successor, Nano Banana 2 (released early 2026), improves fidelity, generates faster and supports larger outputs up to 4K.

Confirmed Nano Banana 2 specs

- Resolution support up to 4K and multiple aspect ratios.

- Character consistency handling for multiple characters (reported up to 5) and support for many reference objects in a single scene.

- Stronger rendering of on‑image text and multilingual text legibility — a long‑standing weak point for image models.

Use cases

Thumbnails, marketing mockups, concept art, and rapid visual prototyping inside Gemini, Search AI mode, and Flow.

Practical notes

Nano Banana democratized image generation by embedding it inside widely used consumer surfaces (Gemini app, Search features) with a usable free tier. For production use, check the license and usage terms of your subscription, and confirm how SynthID watermarking applies to commercial work.

5. Imagen 3 — A developer‑grade image API (priced and production ready)

What it is

If Nano Banana is the conversational image model for general users, Imagen 3 is Google’s developer‑facing image generator available via the Gemini API and Google AI Studio. The official Gemini API pricing page lists Imagen 3 image output at $0.03 per image, making it a predictable option for programmatic generation. That flat per‑image pricing is useful for production pipelines in apps and e‑commerce.

Why developers pick Imagen 3

- Predictable per‑image pricing at $0.03/image on the API.

- Mask‑based editing, upscaling and reliable spatial prompt adherence.

- Integration into Vertex AI and Gemini API workflows for scale.
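The flat $0.03 per‑image price turns budgeting into simple arithmetic. The sketch below uses Python's `decimal` module to avoid floating‑point rounding on currency. The `regen_overhead` parameter is a hypothetical allowance for rejected outputs you regenerate, not a Google pricing term, and the rate itself should be re‑checked on the pricing page before you rely on it.

```python
from decimal import ROUND_CEILING, ROUND_HALF_UP, Decimal

# USD per generated image, as listed for Imagen 3 on the Gemini API pricing page.
# Assumption: prices change, so confirm the current rate before budgeting.
IMAGEN3_PRICE_PER_IMAGE = Decimal("0.03")


def estimate_imagen_cost(images_per_day: int, days: int = 30,
                         regen_overhead: Decimal = Decimal("0")) -> Decimal:
    """Estimate Imagen 3 spend in USD.

    regen_overhead is a hypothetical allowance for rejects you regenerate,
    e.g. Decimal("0.25") budgets 25% more generations than images you keep.
    """
    if images_per_day < 0 or days < 0 or regen_overhead < 0:
        raise ValueError("inputs must be non-negative")
    kept = Decimal(images_per_day * days)
    # Every generation is billed, so round the image count up to a whole number.
    generated = (kept * (1 + regen_overhead)).to_integral_value(rounding=ROUND_CEILING)
    cost = generated * IMAGEN3_PRICE_PER_IMAGE
    return cost.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


print(estimate_imagen_cost(1000))                                  # 900.00
print(estimate_imagen_cost(1000, regen_overhead=Decimal("0.25")))  # 1125.00
```

At 1,000 kept images a day, the overhead term matters: budgeting for a quarter of outputs being regenerated moves the monthly estimate from $900 to $1,125.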

Who should use it

App developers, SaaS companies and e‑commerce platforms that need programmatic image generation with tight cost control.

6. Whisk — Prompt with images, not just text (now folded into Flow)

Concept and workflow

Whisk’s visual prompting model lets creators blend three visual inputs (subject, scene and style) instead of writing elaborate text prompts. In practice this quickly surfaces creative directions when words fail. Since the Flow redesign, Whisk’s capabilities live inside Flow’s workspace, and the standalone Whisk app will be absorbed into Flow as a unified creative experience. If you’ve avoided image remixing tools because text prompts felt unstable, try Whisk’s visual composition approach inside Flow for faster iteration.

7. Opal — No‑code AI app building with agentic workflows

What it is

Opal is Google Labs’ no‑code AI app builder: describe the app you want in natural language and Opal converts it into a visual workflow of steps (inputs, model calls, logic, outputs). A February 2026 update added an “agent step” so you can embed autonomous, tool‑selecting agents that maintain memory and dynamically route logic. Google’s Labs blog and TechCrunch coverage confirm the agentic workflow update and Opal’s role powering “Gems from Labs” inside Gemini.

Real test and pattern

I built an Opal brief generator that: (1) accepts a topic, (2) runs a NotebookLM ingest, (3) searches the web, (4) compiles gaps, and (5) outputs a structured brief. Turnaround: ~20 minutes to prototype. The results needed hand tuning, but they proved that you can chain NotebookLM → Gemini → Imagen → storage without code. Opal apps are shareable like Docs, which makes prototyping for non‑engineering teams fast.

Who benefits

Marketers, educators, small business owners and product teams that want to automate workflows without hiring engineers. Developers also use Opal to prototype before committing to production code.

8. Google AI Studio — The developer playground for testing real models

What it is

Google AI Studio is the browser‑based environment for experimenting with Gemini, Imagen and Veo models. Crucially, AI Studio lets you run multimodal prompts, compare models side‑by‑side, and export working code to Python, JavaScript or Colab. In January 2026 Google simplified the billing flow so AI Studio is easier to use without deep Google Cloud setup, while making clear that free‑tier prompts may be used to improve models unless you switch to paid billing.

Why it’s useful

- Fast A/B testing across model variants.

- Export code to Colab notebooks for immediate prototyping.

- Free access to powerful models for experimentation with paid options for production privacy.
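AI Studio handles side‑by‑side comparison in the browser; once you export code, you may want the same comparison inside a notebook. The Python sketch below is a generic harness, assuming each model is wrapped as a plain callable that takes a prompt and returns text. The stub models are hypothetical placeholders for wherever you would call the SDK code that AI Studio exports.

```python
import time
from typing import Callable


def compare_models(prompt: str, models: dict[str, Callable[[str], str]]) -> list[dict]:
    """Run one prompt through several model callables, recording output and latency."""
    results = []
    for name, generate in models.items():
        start = time.perf_counter()
        output = generate(prompt)
        elapsed_ms = (time.perf_counter() - start) * 1000
        results.append({"model": name, "output": output,
                        "latency_ms": round(elapsed_ms, 1)})
    return results


# Hypothetical stubs: replace each lambda with a real model call.
stubs = {"model-a": lambda p: p.upper(), "model-b": lambda p: p[::-1]}
for row in compare_models("summarize q3", stubs):
    print(row["model"], row["output"], row["latency_ms"])
```

Keeping the models behind one callable signature means the same harness works whether a variant comes from the Gemini API, Vertex AI or a local stub used in tests.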

Practical caution

Free‑tier prompts in AI Studio may be used for model improvement; for sensitive IP, enable paid billing or use Vertex AI with enterprise controls. The billing simplification has improved onboarding but has also raised new governance questions about API key management that teams should watch.

9. Gemini Advanced / Google AI Pro & AI Ultra — Premium models and Deep Research

What it is

Google packages its most capable consumer and professional features into subscription tiers: Google AI Pro (consumer/pro level) and Google AI Ultra for high‑end power users. These tiers unlock longer context windows, advanced features like Deep Research (an autonomous research agent), Guided Learning and Canvas collaboration, priority access to model updates, and newer features such as Cinematic Video Overviews and higher‑priority Veo access for video generation. Google’s subscription listing confirms the tiers and the AI Ultra $249.99/month price point for top features.

When to upgrade

If you use AI daily for research, code generation, or high‑context editing and need the long context windows, Deep Research agent automation or prioritized model access (for production‑grade outputs), the Pro/Ultra tiers are worth evaluating.

10. Google Colab — The cloud notebook that became an AI coding partner

The evolution

Colab has always been the free Jupyter notebook in your browser. The 2025–2026 “AI‑first” overhaul transformed Colab into a coding partner: Gemini‑powered agents understand your entire notebook context, can generate multi‑cell code, refactor projects, fix errors with diffs, and — crucially — include a Data Science Agent (DSA) that produces analysis plans and code given a dataset and a question. Google’s developer notes and release announcements confirm the agentic companion built into Colab.

Who should use it

Data scientists, researchers and developers prototyping ML code, visualization or model experiments. The agentic support massively reduces friction for exploratory analysis.

Privacy & production note

Colab’s free tiers are ideal for learning and prototyping; for sensitive work or production models use managed Cloud/Vertex environments and control data residency and billing.

How these tools chain into an end‑to‑end workflow

The real value is not each tool in isolation but the ability to pass structured outputs from one to another:

- Research ingestion: NotebookLM ingests PDFs, transcripts and websites to produce structured notes and data tables.

- Persistent context: Save editorial rules in a Gemini Gem so drafts start with the right voice.

- Visual ideation: Use Nano Banana in Flow to create style frames and thumbnails.

- Video production: Turn keyframes into 8‑second cinematic clips with Veo 3.1 and chain them on Flow’s timeline.

- No‑code automation: Deploy the whole pipeline as an Opal mini‑app for teammates to run.

- Developer backend: Build a production API integration with Imagen/Gemini via AI Studio and test it in Colab.
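The hand‑off list above amounts to a pipeline in which each stage reads a shared structured record and adds its own output. A minimal Python sketch of that shape follows; the stage functions are hypothetical stand‑ins for NotebookLM, a Gem, Nano Banana and Veo, they call no Google API, and every field name is an assumption for illustration.

```python
from typing import Callable

Stage = Callable[[dict], dict]


def research(record: dict) -> dict:
    # Stand-in for NotebookLM: turn sources into structured notes.
    return {**record, "notes": [f"Key point from {s}" for s in record["sources"]]}


def draft(record: dict) -> dict:
    # Stand-in for a Gem: turn notes into a draft under saved house rules.
    return {**record, "draft": " ".join(record["notes"])}


def keyframes(record: dict) -> dict:
    # Stand-in for Nano Banana: one style frame per note.
    return {**record, "frames": [f"frame_{i}.png" for i, _ in enumerate(record["notes"])]}


def clips(record: dict) -> dict:
    # Stand-in for Veo: one short clip per keyframe.
    return {**record, "clips": [f.replace(".png", ".mp4") for f in record["frames"]]}


def run_pipeline(record: dict, stages: list[Stage]) -> dict:
    """Pass the record through each stage in order; each stage returns a new dict."""
    for stage in stages:
        record = stage(record)
    return record


result = run_pipeline({"sources": ["a.pdf", "b.pdf"]}, [research, draft, keyframes, clips])
print(result["clips"])
```

Because every stage shares one signature, replacing a stage when a tool moves or is renamed touches a single function, which is the kind of modularity the tool‑churn risk below argues for.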

Strengths, risks and the governance checklist

Strengths (verified)

- Integrated tooling reduces handoffs and rework: Flow now contains Whisk/ImageFX features and ties Nano Banana → Veo pipelines.

- Developer clarity: Imagen 3 has explicit per‑image pricing making programmatic use predictable.

- No‑code adoption: Opal and Gems lower the barrier for non‑developers to build repeatable AI apps.

Verified risks and limitations

- Hallucination and quality control: Even grounded tools need human verification — cinematic video outputs or long research syntheses can misrepresent nuance unless audited. NotebookLM helps but editorial review remains essential.

- Data privacy and billing traps: Free experimentation in AI Studio or Colab can expose prompts for model improvement; for sensitive data, enable paid policies or use Vertex AI. Billing and API key management problems have also been flagged in developer discussions and need governance.

- Model and tool churn: Google iterates rapidly (models, feature locations and pricing), so teams should design modular pipelines and keep a small set of guarded production contracts rather than hard‑coding experiment UIs.

Practical governance checklist (do this first)

- Inventory: list what data you plan to upload to NotebookLM, Opal or Colab.

- Decide what can be used for model improvement — opt out of free‑tier improvement where required.

- Billing guardrails: centralize payment methods and API key issuance, and set up usage alerts.

- Testing pipelines: require a human QA pass for any research summary, image used in marketing, or video published externally.

- Export provenance: store NotebookLM citations and model parameters used for any generated asset to maintain audit trails.
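For the export‑provenance item, a small record per generated asset is often enough. The Python sketch below is one hypothetical shape for such a record (the field names are assumptions, not a Google format): it stores the tool, model, parameters, citations and reviewer, plus a SHA‑256 hash of the asset bytes so each log entry can later be matched to the exact file.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class ProvenanceRecord:
    asset_sha256: str
    tool: str
    model: str
    parameters: dict
    citations: list[str] = field(default_factory=list)
    reviewed_by: str = ""  # stays empty until the human QA pass is done
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())


def record_asset(asset_bytes: bytes, tool: str, model: str,
                 parameters: dict, citations: list[str]) -> ProvenanceRecord:
    # Hash the exact bytes you publish so the record cannot drift from the file.
    digest = hashlib.sha256(asset_bytes).hexdigest()
    return ProvenanceRecord(digest, tool, model, parameters, list(citations))


def to_json_line(record: ProvenanceRecord) -> str:
    # One JSON object per line (JSON Lines) keeps the audit log append-only and greppable.
    return json.dumps(asdict(record), sort_keys=True)


rec = record_asset(b"...image bytes...", "Flow", "Veo 3.1",
                   {"clip_seconds": 8}, ["notebook-export.pdf"])
print(to_json_line(rec))
```

An empty `reviewed_by` field doubles as a simple publishing gate: anything without a named reviewer has not passed the human QA step in the checklist.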

Practical next steps for teams and creators

- If you produce written research: try NotebookLM for one project, export its data tables and generate a two‑minute audio overview to test how much time you save. Verify citations.

- If you create visual content: prototype a Flow project by generating a Nano Banana keyframe, animating it with Veo, and checking the audio. Timebox the experiment to gauge both credit cost and iteration time.

- If you need repeatable internal tooling: build a small Opal app (content brief generator, competitor profiler) and share as a Gem so teammates can use it without learning a new tool.

- If you’re a developer: test Imagen 3 in AI Studio then export to Colab and a Vertex pipeline for production; the per‑image $0.03 pricing lets you estimate costs precisely.

Final verdict: why now matters

Three truths jumped out during months of hands‑on testing. First, Google’s strategy is no longer “one chatbot wins” — it’s “build the stack, connect the pieces.” Second, many of these tools are production‑ready: Imagen 3 is priced for apps, Flow produces usable short videos, and Opal enables no‑code automation. Third, adoption advantage accrues to teams that learn how to combine tools — not to the most technical teams alone. The current window favors early adopters who build guardrails now: the entry cost is low, and the productivity multiplier is real. But govern the inputs, audit the outputs, and plan for change: Google will iterate fast, and so should your policies.

If you want a practical 30‑minute plan to get started with one of these flows (NotebookLM → Gem → Nano Banana → Flow), I can provide a step‑by‑step checklist and a short sample Opal workflow you can paste into the app.

Source: H2S Media 10 Google AI Tools You're Probably Not Using Yet