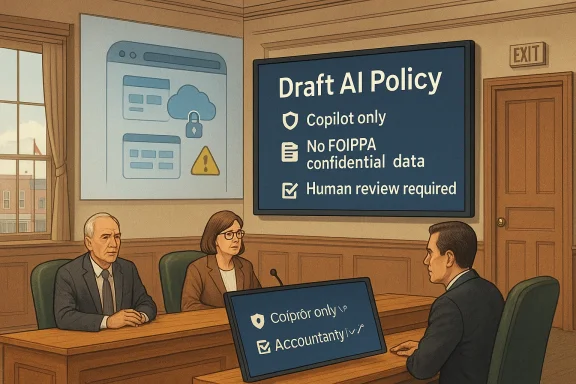

The Town of Oliver’s draft AI policy is a small local-government story with outsized significance, because it captures a problem every public body is now facing: how to use generative AI without leaking sensitive information, confusing accountability, or pretending the technology is easier to govern than it is. The April 7 Committee of the Whole discussion showed council trying to draw a narrow lane for Microsoft Copilot while blocking consumer-facing tools such as ChatGPT and Gemini, with privacy, records handling, and human review all at the center of the debate. The issue is not unique to Oliver; it reflects a broader municipal shift from informal experimentation to formal AI governance, and the town’s approach is already revealing both the promise and the limits of “safe” AI use in government.

Source: Times Chronicle Reality of AI policy proves challenging

Background

Oliver’s AI debate is happening at a moment when local governments can no longer treat generative AI as an optional side project. AI features are now embedded inside email, office suites, search, transcription, and analytics tools, which means even councils that never bought a standalone chatbot still find AI showing up in day-to-day work. That makes a simple ban almost impossible to enforce, and it explains why public-sector policy language has started shifting toward controlled use, disclosure, and explicit prohibitions on sensitive data handling.

What makes Oliver’s case notable is the town’s decision to center the policy on Copilot, a product already installed on council and staff devices, rather than allowing broad use of consumer AI systems. The CAO’s explanation was straightforward: keep work inside the town’s enterprise environment, where access controls and data boundaries are more defensible, and avoid exposing municipal information to systems that may preserve histories or create discoverable traces outside the town’s control. That is a classic public-sector instinct: reduce the number of places data can go.

The policy discussion also reveals how quickly the conversation moves from technology to governance. Council was not merely debating whether AI is useful; it was deciding who counts as a “user,” whether contractors should be included, what kinds of outputs need human review, and whether sensitive information protected under FOIPPA can ever be entered into an AI tool at all. Those are not abstract questions. They determine whether AI becomes a practical assistant or a liability generator.

Oliver is also not starting from zero. The broader BC public-sector environment has already emphasized privacy protection, information handling, and responsible AI guidance. The province’s own 2024/25 FOIPPA administration report explicitly highlights responsible AI as something guided by policy, regulation, and privacy and security frameworks, reinforcing the idea that municipal AI policy is now part of a wider compliance culture rather than a novelty item. In that sense, Oliver’s draft is less a local curiosity than a municipal version of a much bigger provincial and national trend.

The town’s discussion also mirrors similar local-government debates elsewhere, where councils are trying to distinguish between AI as a drafting aid and AI as a decision-making system. That distinction matters because government institutions are not just producing text; they are producing public records, resident correspondence, and decisions that may later need to be audited, disclosed, or defended. Once AI enters that process, the policy question becomes not whether it saves time, but whether it changes responsibility.

Why Copilot and Not Open AI

The most striking part of Oliver’s draft policy is the preference for Microsoft Copilot over “open” AI services. In practical terms, that is a bet on enterprise controls, identity management, and the assumption that a tool embedded in the town’s existing Microsoft environment is less risky than a consumer chatbot accessed through a personal account. The logic is familiar: if staff already work in Microsoft 365, then keeping AI inside that ecosystem should reduce the chance that sensitive content leaks into an outside service.

That argument is not baseless. Microsoft says its commercial Copilot offerings are built around existing permissions and access controls, and that organizational data is not used to train foundation models without permission. For a municipal government, that is the kind of assurance procurement teams and CAOs want to hear, because it suggests a tighter privacy envelope than consumer AI tools usually provide. It also supports the town’s effort to create a single approved path for AI use rather than a patchwork of unofficial accounts.

Enterprise Boundary as the Real Policy Goal

The real policy goal is not Copilot itself; it is the enterprise boundary around Copilot. The town wants AI use to stay inside a managed environment where prompts, documents, and outputs do not freely spill into the wider internet or into personal consumer histories. That is why the CAO’s comments focused on the risk of content becoming discoverable elsewhere and framed the policy as a way to protect municipal information from casual exposure.

This is where municipal AI governance becomes more than a technology preference. A Copilot-only rule is really a records-management and privacy rule in disguise. It reduces the number of possible failure points, which is useful when staff have varying levels of technical literacy and when the same town uses AI for documents, presentations, and bylaw-related work.

Still, the distinction is not perfect. Enterprise tools can be safer, but they are not magically safe. They still depend on user behavior, access configuration, vendor terms, and internal discipline. If staff paste confidential information into a prompt, the fact that the prompt sits inside an enterprise environment does not erase the underlying privacy issue; it only changes the risk profile.

Key implications of the Copilot-only approach include:

- a tighter data boundary around AI-assisted work;

- fewer consumer tools in circulation;

- easier training and support;

- clearer enforcement if a misuse occurs;

- stronger alignment with existing Microsoft 365 workflows.

Privacy, FOIPPA, and the Municipal Duty of Care

Privacy is the legal and political foundation of Oliver’s policy discussion. Under the draft, staff, council, and contractors would be barred from entering personal or confidential information into AI systems, with the term “confidential” anchored to FOIPPA definitions. That is a significant line in the sand, because municipal work often involves resident records, service files, correspondence, and material that cannot be treated like ordinary office text.

The policy’s emphasis on prohibited data is exactly what one would expect from a public body that handles sensitive information. BC’s FOIPPA framework exists to govern collection, use, disclosure, and protection of personal information, and the province continues to underline privacy security as a core public-sector obligation. In that context, Oliver’s caution is not reactionary; it is basic administrative hygiene.

Why “Personal Information” Is the Critical Line

The problem with generative AI is not just that it can be wrong. It is that it can be fed with information the user may not fully control afterward. Once data is entered into a third-party service, the municipality has to understand retention, logging, model handling, and whether prompts or outputs are stored in ways that create future exposure. That is a much harder question than “can the tool draft a memo?”

Oliver’s draft policy seems to recognize that reality by making data input restrictions central, not optional. That is smart, because data discipline is easier to enforce than trying to audit every output after the fact. If the sensitive data never enters the system, the town avoids a long tail of compliance headaches.

The town is also confronting a familiar public-sector dilemma: the more useful a tool is, the more tempting it is to feed it richer material. That is precisely why municipal AI policy cannot rely on trust alone. It needs rules, training, and visible enforcement.

Important privacy takeaways:

- sensitive resident data should stay out of AI prompts;

- enterprise controls matter, but they are not a substitute for judgment;

- FOIPPA is the floor, not the ceiling;

- contractors need the same rules as internal users;

- the policy’s credibility depends on daily compliance.
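
The idea of keeping sensitive data out of prompts can be illustrated with a small pre-submission check. The following is only a minimal sketch, not anything described in Oliver’s draft: the function name `flag_prompt` and the three patterns are hypothetical, and simple pattern matching catches only obvious identifiers. It is not a FOIPPA definition of personal information and would not replace training or judgment.

```python
import re

# Hypothetical, illustrative patterns only. They catch a few obvious
# identifiers and are NOT a FOIPPA definition of personal information.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "phone": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "sin_like": re.compile(r"\b\d{3}[-\s]\d{3}[-\s]\d{3}\b"),
}


def flag_prompt(text: str) -> list[str]:
    """Return the sorted names of the patterns that match the prompt text."""
    return sorted(name for name, pattern in PATTERNS.items() if pattern.search(text))


if __name__ == "__main__":
    prompt = "Summarize the complaint from jane.doe@example.com, 250-555-0199"
    hits = flag_prompt(prompt)
    if hits:
        print("Do not submit; possible personal information:", ", ".join(hits))
    else:
        print("No obvious identifiers found; human judgment still applies.")
```

A check like this can only warn about patterns it knows; a resident’s name or the details of a service file would pass straight through, which is why the rule itself still rests on the user.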

Human Review and Official Voice

Another crucial element of the draft is the requirement that staff review and confirm AI-generated content before it becomes formal or an “official Town position”. That safeguard is common sense, but it carries real institutional weight. It reinforces the idea that AI can assist drafting, yet never replace the human responsibility attached to public communication.

This is especially important for governments because their words are not private notes. A memo, press release, bylaw draft, or presentation can shape public understanding, future decisions, and legal obligations. If a machine drafts the text but a human does not meaningfully verify it, accountability starts to blur in ways that councils should avoid.

The Risk of Polished Mediocrity

The danger is not only factual error. It is also the rise of smooth but generic government language. AI tools are very good at producing polished prose, but public communication often requires local nuance, policy specificity, and the judgment to say what should not be said. If officials over-rely on machine-generated text, they risk flattening the town’s voice into something that sounds confident but feels hollow.

That issue is not trivial. Residents do not just care that their town communicates quickly; they care that the communication is accurate, specific, and clearly owned by human decision-makers. If AI starts to shape the voice of the town too heavily, the public may reasonably wonder who is actually speaking.

Council’s discussion shows an awareness of that risk. The requirement to note when Copilot is used is a move toward transparency and accountability, and it gives the town a clearer audit trail if questions arise later. That is the right instinct, especially when a technology is being used in official settings.

Practical benefits of mandatory human review include:

- clearer responsibility for final wording;

- lower risk of factual mistakes becoming official;

- better alignment with municipal branding and tone;

- reduced chance of AI hallucinations going unchecked;

- stronger public confidence in published material.
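
One way to picture the review requirement is as a gate on a document record. The sketch below is an assumption for illustration only: the `Draft` fields and the `can_publish` function are hypothetical names, not part of the town’s draft or of any Microsoft product. It models just two ideas from the discussion: noting when AI was used, and requiring a named human reviewer before AI-assisted text becomes official.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Draft:
    """Hypothetical record for a document headed toward official use."""
    title: str
    ai_assisted: bool                  # noted when an AI tool helped produce the text
    reviewed_by: Optional[str] = None  # name of the human who confirmed the content


def can_publish(draft: Draft) -> bool:
    """Allow AI-assisted drafts only when a named human reviewer is recorded.

    This sketch models only the AI-specific rule; any other approval steps
    the town applies to ordinary documents are out of scope here.
    """
    if draft.ai_assisted:
        return bool(draft.reviewed_by and draft.reviewed_by.strip())
    return True


if __name__ == "__main__":
    notice = Draft(title="Road closure notice", ai_assisted=True)
    print(can_publish(notice))   # no reviewer recorded yet
    notice.reviewed_by = "Corporate Officer"
    print(can_publish(notice))   # reviewer recorded
```

A recorded name does not prove the review was meaningful, so a gate like this supports accountability rather than guaranteeing it.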

Defining the User Base

One of the more mundane parts of the Oliver discussion may turn out to be one of the most important: who exactly counts as a user. The original draft reportedly used a broad “users” category, but council then considered a more precise “staff, council, and contractors” wording. That kind of definitional cleanup sounds bureaucratic, but it is the difference between a policy that can be enforced and one that merely sounds prudent.

Broad categories create loopholes if they are not carefully tied to actual access. Narrower categories make the policy easier to explain, easier to train against, and easier to audit. If a contractor is handling town business, the town needs the same privacy expectations for that contractor that it expects from employees.

Why Precise Language Matters

Policy language is often where governance succeeds or fails. A vague “users” term may look harmless until someone argues that a volunteer, intern, or temporary consultant was never clearly covered. Then the town has an enforcement problem and, potentially, a compliance problem. Precision is not just legal neatness; it is operational protection.

In a municipal setting, that matters because different classes of personnel may use different devices, accounts, or workflows. Councillors may use one set of tools, staff another, and contractors another still. If the policy does not map cleanly onto those realities, the result is confusion rather than control.

There is also a cultural dimension. People comply better with rules that are clearly written and obviously meant for them. Ambiguity creates the opposite effect: it encourages quiet workarounds and “I thought that was allowed” explanations after the fact. A good AI policy should reduce that sort of ambiguity as much as possible.

Useful reasons to sharpen the user definition:

- easier onboarding and training;

- clearer accountability after incidents;

- better contractor oversight;

- fewer interpretive disputes;

- more consistent enforcement across departments.

The Living Policy Problem

Mayor Martin Johansen’s comment that the policy should function as a working document was probably one of the wisest things said in the discussion. AI policies age quickly because the software landscape changes faster than most councils can revise bylaws, procedures, or internal standards. A rule written today may already be incomplete by the time staff begin using the next version of a productivity suite.

Councillor Petra Veintimilla’s support for a “living document” approach points to the same reality: the policy will need regular updating as the world changes. That is not weakness. The weak AI policies are the ones written as if the technology will stand still long enough for a static rulebook to remain useful.

Why Static Rules Fail

A static policy invites two dangers. First, it becomes obsolete as vendors add AI features to products that did not previously have them. Second, it creates a false sense of security, leading staff to believe that anything not explicitly banned is safe. In a field moving this quickly, both assumptions are dangerous.

A living policy, by contrast, signals that governance is continuous. It can adapt to changes in software defaults, privacy settings, document-retention practices, and vendor terms. It also gives council a mechanism to revisit the balance between efficiency and risk without pretending the first draft is the last word.

That said, a living policy only works if the town actually lives with it. Regular review has to mean more than polite annual housekeeping. It needs a formal cadence, clear ownership, and enough staff attention to catch changes in how AI is embedded across the workplace.

A useful review model would include:

- periodic policy reassessment;

- staff training refreshers;

- vendor and procurement checks;

- incident review and lessons learned;

- update notes when software behavior changes.
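
A formal cadence can combine a calendar interval with event triggers. The sketch below is illustrative only: the 180-day interval, the event names, and the `review_due` function are all hypothetical choices, not anything council has adopted.

```python
from datetime import date, timedelta

# Hypothetical values chosen for illustration; the town has not set these.
REVIEW_INTERVAL = timedelta(days=180)
TRIGGER_EVENTS = {"vendor_terms_changed", "new_ai_feature", "privacy_incident"}


def review_due(last_review: date, today: date, events: set[str]) -> bool:
    """Return True when the policy should be revisited.

    A review is due if the calendar interval has elapsed, or if any
    recognized trigger event has occurred since the last review.
    """
    calendar_due = today - last_review >= REVIEW_INTERVAL
    event_due = bool(events & TRIGGER_EVENTS)
    return calendar_due or event_due


if __name__ == "__main__":
    last = date(2025, 1, 1)
    print(review_due(last, date(2025, 3, 1), set()))                     # too early, no events
    print(review_due(last, date(2025, 3, 1), {"vendor_terms_changed"}))  # event-driven
    print(review_due(last, date(2025, 7, 1), set()))                     # calendar-driven
```

Combining both conditions means a quiet year still produces a review, while a vendor change or incident does not have to wait for the calendar.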

How This Fits the Broader Public-Sector Pattern

Oliver is not alone in wrestling with AI governance. Across local government, the same themes keep appearing: data protection, human oversight, vendor risk, and the need to distinguish assistive use from decision-making. British Columbia’s own governance posture and Microsoft’s enterprise messaging around Copilot both point toward a model where AI is allowed only inside controlled environments with strong accountability hooks.

What makes Oliver’s discussion interesting is that it exposes the practical trade-off. Councils want the efficiency gains of AI because administrative work is heavy and budgets are tight. Yet the same systems that help draft documents can also expose sensitive data, muddy authorship, or encourage staff to rely on text they have not fully checked. That is why AI governance is increasingly less about ideology and more about operational discipline.

Enterprise Governance Versus Consumer Convenience

Consumer AI tools are attractive because they are easy, fast, and familiar. Enterprise AI tools are attractive because they are governable. That difference sounds simple until you try to implement it in a municipality where people use personal phones, personal accounts, and mixed software stacks every day.

Oliver’s preference for Copilot suggests the town is trying to make the governable choice, not the most dazzling one. That is a sensible position. It may not satisfy every user who wants broad experimentation, but municipal governments are not supposed to optimize for novelty. They are supposed to optimize for public trust, continuity, and defensible decision-making.

There is also a reputational component. A council that can say it uses AI carefully, inside a closed system, and with human review in place is in a much stronger position than one that looks improvisational. In the public sector, credibility is often built not through boldness but through restraint.

Broad lessons from the Oliver debate:

- AI should be governed like a records issue, not just a productivity issue;

- enterprise tools are easier to police than consumer tools;

- policy clarity matters more than ambition;

- human review remains essential;

- privacy is the first principle, not an afterthought.

Strengths and Opportunities

Oliver’s draft policy has several strengths that make it more promising than a vague “be careful with AI” memo. It draws an understandable boundary around approved use, keeps sensitive information out of consumer tools, and insists that humans remain responsible for anything that becomes official town output. That combination gives the town a reasonable path to use AI productively without surrendering oversight.

- Clear preference for an enterprise-controlled environment.

- Strong privacy protection grounded in FOIPPA.

- Human review before formal publication or official positions.

- Contractor inclusion reduces loopholes.

- Copilot-only use limits tool sprawl.

- A living-policy mindset makes future updates easier.

- The framework supports safer experimentation inside town workflows.

Risks and Concerns

The biggest risk is that the policy becomes more symbolic than practical. A good rule on paper can still fail if staff ignore it, if contractor use is not monitored, or if AI features are embedded in software in ways the town does not fully track. Shadow AI is the obvious concern, but there are also subtler risks involving overconfidence in outputs and unclear responsibility when mistakes happen.

The town will also need to be careful not to overstate Copilot’s safety. Enterprise tools reduce exposure, but they do not eliminate it, and users may still enter information they should not. If the policy is treated as a substitute for training and monitoring, the town could end up with a false sense of control.

- Staff may use personal or unapproved AI accounts anyway.

- Embedded AI features in existing software may be overlooked.

- Human review can become perfunctory if workloads are heavy.

- Vendor terms can change faster than policy updates.

- Sensitive data can still be mishandled by careless users.

- Too much restriction could drive experimentation underground.

- Residents may not understand how AI is actually being used.

Looking Ahead

What happens next will depend on how Oliver turns this draft into an actual operating policy. The key test is whether the town can translate broad principles into clear instructions, training, and enforcement that staff will understand and follow. If that happens, Oliver could end up with a pragmatic model for small-municipality AI governance.

The next phase should also reveal how much appetite council really has for ongoing review. A living document only works when the updates are real, not ceremonial. That means the town will need to revisit not just the wording, but also the software stack, vendor terms, and the practical habits of staff who may already be experimenting quietly with AI.

Things to watch next:

- the formal Regular Council meeting discussion;

- whether the draft gets tightened or expanded;

- staff guidance on acceptable AI prompts and outputs;

- whether Copilot attribution rules become more explicit;

- how contractors are brought into compliance;

- whether training is scheduled alongside adoption;

- whether future reviews are calendar-based or event-driven.
