Netguru’s internal sales agent Omega started as a pragmatic experiment, a Slack‑embedded assistant to reduce busywork for reps, and ended up as a textbook case of how AI systems fail forward: every real production win in Omega’s evolution traces back to a specific, painful breakdown that forced a better architecture, clearer assumptions, and stronger guardrails.

Background

Netguru built Omega to do useful, bounded work: generate call agendas, summarize discovery and expert calls, surface documents from Google Drive, suggest proposal features from past deals, and nudge teams when opportunities stalled. The ambition was deceptively simple (bring dispersed sales context into Slack without adding yet another silo), but the implementation challenges were anything but. Netguru’s practical tale highlights six failure modes that recur across enterprise agent projects: infrastructure mismatch, context size and summarization, agent orchestration loops, lack of deep research capability, blind spots on visual/structured documents, and framework lock‑in. Those failures and the fixes Netguru used are instructive for any team building AI agents today.

This article reconstructs Omega’s lessons, verifies the technical claims that mattered, and explains trade‑offs with actionable recommendations for engineering and product teams aiming to ship reliable agentic systems in production.

Why Omega matters (overview)

Omega is not a chatbot for curiosity; it’s an embedded workflow assistant tied to opportunity channels in Slack. That placement raises two immediate constraints every architect must accept:

- Low latency: Slack interactive payloads require an acknowledgement within a few seconds to avoid client timeouts and duplicate behavior.

- Multi‑step, stateful work: useful agent tasks often span retrieval, reasoning, tool calls, and follow‑ups, which look like long‑running stateful workflows rather than single-shot RPCs.

Netguru discovered that these two constraints push teams away from naive serverless deployments and naive prompt dumping.

Technical reality check: the three system constraints you’ll hit first

- Short acknowledgment windows for conversational platforms — Slack expects an ack within 3 seconds for actions and slash commands, after which the client may show a timeout and reissue events.

- Serverless function semantics — AWS Lambda is excellent for short, stateless event handlers but enforces a maximum synchronous execution window and has cold‑start characteristics that matter for latency‑sensitive workloads. The maximum invocation duration is 15 minutes and cold starts can add measurable seconds depending on runtime, packaging, and VPC usage.

- LLM context limitations and hallucinations — even the newest models have finite context windows and hallucination risk when fed noisy, unstructured inputs. Avoid assuming a single LLM can reliably synthesize long, messy histories without structured retrieval and summarization. Recent deep‑context models raise the ceiling but don’t remove the need for disciplined context engineering.

AI Failure #1 — Infrastructure that couldn't keep up

What went wrong

Omega initially ran on AWS Lambda because it’s serverless, cost‑effective for event triggers, and fast to iterate with. In real Slack flows, Lambda’s cold starts, absence of durable local state, and the 15‑minute maximum execution duration caused timeouts, duplicate messages (because Slack retried), and complex debugging when flows spanned many micro‑steps. Netguru’s engineering notes include hacks like two‑phase Lambda replies (ack then re‑invoke) and moving Python imports into functions to speed initial response: valid short‑term fixes, but brittle in the long term.

Technical verification

- Slack requires an ack/HTTP 200 within ~3 seconds for interactive actions to avoid client timeouts; Slack’s developer docs recommend returning 200 OK immediately and using response_url for async replies.

- AWS Lambda enforces a maximum synchronous invocation time (15 minutes) and has documented cold starts that vary by language/runtime, dependency size, and packaging; AWS advises strategies such as provisioned concurrency and SnapStart to reduce cold starts for Java/Python/.NET.
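The ack-then-reply pattern described above can be sketched as follows. This is a minimal illustration, not Netguru's code: `handle_command`, `do_work`, and the injectable `post` parameter are hypothetical names, and the background thread assumes a long-lived process such as a container. On Lambda, where the execution environment is frozen once the handler returns, the hand-off would go to a queue or a second invocation instead.

```python
import json
import threading
import urllib.request
from typing import Callable


def post_json(url: str, body: dict) -> None:
    """POST a JSON body to Slack's response_url using only the standard library."""
    req = urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=10) as resp:
        resp.read()


def handle_command(
    payload: dict,
    do_work: Callable[[dict], str],
    post: Callable[[str, dict], None] = post_json,
) -> tuple[dict, threading.Thread]:
    """Return an immediate ack body; finish the slow work in the background."""

    def worker() -> None:
        text = do_work(payload)  # slow part: retrieval, LLM calls, and so on
        post(payload["response_url"], {"text": text})

    thread = threading.Thread(target=worker, daemon=True)
    thread.start()
    # This body goes out with the HTTP 200, well inside the 3-second window.
    ack = {"response_type": "ephemeral", "text": "Working on it..."}
    return ack, thread
```

The web handler returns `ack` as the HTTP 200 body right away; the real answer arrives later through `response_url`, so Slack never sees a timeout and has no reason to retry.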

Why the design failed

Serverless functions are optimized for ephemeral, stateless work. Agent workflows look like conversations with memory and multi‑agent choreography; they need reliable state, long‑running orchestration, and deterministic retries. The Lambda pattern (ack + re‑invoke) traded one problem for another: it introduced orphaned executions, duplicated side effects when retries occurred, and brittle debugging traces.

How Netguru fixed it (and what to consider)

- Move orchestration to a stateful workflow engine (Netguru chose AWS Step Functions for state management, retry, and visibility). Step Functions gives durable state between steps, error handling, and a visual trace to debug flows. This aligns with best practices for multi‑stage orchestration of async workloads.

- Offload heavier or longer tasks to EC2 or containerized services (or internal MCP servers) where you control runtime, keep persistent connections, and avoid cold starts.

- Use provisioned concurrency or SnapStart selectively for handlers that must maintain sub‑second latency, but treat these as cost and ops trade‑offs, not a universal fix.
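To make the Step Functions option concrete, here is a hedged sketch of a state machine definition in Amazon States Language, built as a Python dictionary. The state names, ARNs, timeouts, and retry numbers are all hypothetical; the source does not describe Omega's actual flow. The small `undefined_targets` helper is likewise an illustrative sanity check, not part of any AWS SDK.

```python
import json

# Hypothetical Lambda ARNs; replace with real resources.
RETRIEVE_ARN = "arn:aws:lambda:eu-west-1:123456789012:function:omega-retrieve"
GENERATE_ARN = "arn:aws:lambda:eu-west-1:123456789012:function:omega-generate"
POST_ARN = "arn:aws:lambda:eu-west-1:123456789012:function:omega-post"


def build_definition() -> dict:
    """Three-step flow with declarative retries and a failure branch."""
    return {
        "Comment": "Illustrative agent flow: retrieve, generate, post",
        "StartAt": "RetrieveContext",
        "States": {
            "RetrieveContext": {
                "Type": "Task",
                "Resource": RETRIEVE_ARN,
                "TimeoutSeconds": 60,
                "Retry": [{
                    "ErrorEquals": ["States.Timeout", "States.TaskFailed"],
                    "IntervalSeconds": 2,
                    "MaxAttempts": 3,
                    "BackoffRate": 2.0,
                }],
                "Next": "GenerateAnswer",
            },
            "GenerateAnswer": {
                "Type": "Task",
                "Resource": GENERATE_ARN,
                "TimeoutSeconds": 300,
                "Catch": [{"ErrorEquals": ["States.ALL"], "Next": "NotifyFailure"}],
                "Next": "PostToSlack",
            },
            "PostToSlack": {"Type": "Task", "Resource": POST_ARN, "End": True},
            "NotifyFailure": {"Type": "Task", "Resource": POST_ARN, "End": True},
        },
    }


def undefined_targets(definition: dict) -> list[str]:
    """Return state names that are referenced but never defined."""
    states = definition["States"]
    targets = [definition["StartAt"]]
    for state in states.values():
        if "Next" in state:
            targets.append(state["Next"])
        targets += [catch["Next"] for catch in state.get("Catch", [])]
    return sorted({t for t in targets if t not in states})


if __name__ == "__main__":
    print(json.dumps(build_definition(), indent=2))
```

The point of the design is that retries, timeouts, and the failure path live in the definition rather than in handler code, which is what removes the orphaned executions and duplicated side effects of the ack + re‑invoke hack.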

AI Failure #2 — Token limits and Slack summarization at scale

What went wrong

Omega attempted to summarize entire Slack threads for context, which is an obvious idea, but long conversations exceed model context windows and introduce noisy, nonlinear data that confuses models. Naively stuffing entire histories created both token overflow and hallucinations: the model omitted important facts or invented details when forced to compress huge, fragmentary threads.

Technical verification

- Every LLM has a context window (tokens). While context windows have expanded dramatically in 2024–2025 for newer models (some vendors now offer 128K or 1M token models), most production setups still mix smaller models for routing with larger models for deep synthesis — and practical costs and latency still push teams to chunk and retrieve. OpenAI and other vendors have launched models with very large context lengths, but those models introduce new cost/latency trade‑offs and are not a silver bullet.

How Netguru adapted

- Implement a nightly summarization pipeline rather than pulling raw Slack history in‑line. They used orchestration (Step Functions + SQS hybrid) to summarize older messages into condensed records stored in a database, and then only injected a fixed number of “starting messages” plus a short recent window into the agent runtime. This reduced noise, fixed token drift, and let Omega retrieve more detailed history on demand.

Engineering guidance

- Never assume a single turn should carry full raw history. Build incremental summarization and versioned conversation snapshots.

- Use retrieval‑augmented generation (RAG) patterns: short, high‑precision retrieval windows and a knowledge DB that’s updated asynchronously.

- Instrument and measure hallucination counts relative to different summarization thresholds; optimize for the lowest token budget that preserves factuality.
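The context-assembly idea (a few starting messages, a stored summary of the middle, and a short recent window) can be sketched as below. All names and defaults here are assumptions for illustration, and `estimate_tokens` is a crude stand-in for a real tokenizer.

```python
from dataclasses import dataclass


@dataclass
class Message:
    author: str
    text: str


def estimate_tokens(text: str) -> int:
    """Crude estimate (about 4 characters per token); swap in a real tokenizer."""
    return max(1, len(text) // 4)


def build_context(
    messages: list[Message],
    stored_summary: str,
    n_start: int = 2,
    n_recent: int = 5,
    budget: int = 2000,
) -> str:
    """Assemble starting messages + stored summary + recent window under a budget."""
    start = messages[:n_start]
    tail = messages[n_start:]
    recent = tail[-n_recent:] if n_recent > 0 else []

    parts = [f"{m.author}: {m.text}" for m in start]
    # Only include the summary when some messages are actually left out.
    if stored_summary and len(messages) > n_start + n_recent:
        parts.append(f"[summary of earlier messages] {stored_summary}")
    recent_lines = [f"{m.author}: {m.text}" for m in recent]

    def total(lines: list[str]) -> int:
        return sum(estimate_tokens(line) for line in lines)

    # Drop the oldest recent lines first; start messages and summary always stay.
    while recent_lines and total(parts + recent_lines) > budget:
        recent_lines.pop(0)
    return "\n".join(parts + recent_lines)
```

In this sketch the summary is assumed to be produced offline (for example by a nightly job) and simply read from storage at request time, so the in‑line path never touches raw history beyond the two small windows.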

AI Failure #3 — Multi‑agent flows that got stuck in loops

What went wrong

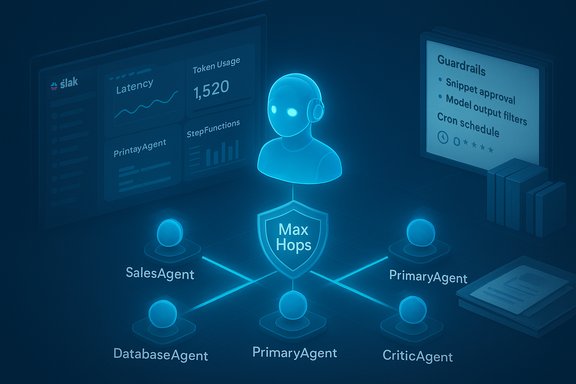

Netguru introduced multiple specialist agents (SalesAgent, DatabaseAgent, PrimaryAgent, CriticAgent) and initially used simple round‑robin or selector logic. Agents began ping‑ponging control, restarting each other, or favoring a single agent repeatedly, resulting in infinite loops, token burn, and no final output.

Technical verification & context

- Multi‑agent orchestration is an active research and engineering area. Frameworks like Microsoft’s AutoGen provide structured APIs for multi‑agent messaging and coordination, but language models themselves don’t natively self‑coordinate reliably without explicit routing, role constraints, and termination conditions. AutoGen and similar frameworks help, but they don’t automatically prevent loops — good orchestration and constraints do.

Why it failed

The system lacked explicit routing rules, hard timeouts, and token budgets per agent. The CriticAgent, intended as quality control, sometimes triggered unnecessary restarts, compounding the loop problem.

How Netguru stabilized flows

- They framed two candidate architectures for experiments: a swarm of specialized agents with strict handoff protocols, and a single main agent that treats specialized agents as tools (invoked deterministically). Both approaches require explicit termination policies, per‑agent token and call budgets, and a “max hops” policy to avoid infinite delegation.

Implementation checklist

- Enforce a global max‑turn budget and per‑agent invocation limits.

- Use deterministic Graph/Flow definitions for common tasks and only permit dynamic routing in well‑instrumented experiments.

- Treat Critic or reviewer functions as optional feedback tools invoked with thresholds (e.g., only when confidence < X).

- Instrument task success rate and token efficiency as primary metrics for choosing an orchestration pattern.
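The budget items in the checklist above can be enforced with a small guard that is independent of any agent framework. This is a sketch under assumptions: `RunBudget`, `BudgetExceeded`, and the agent signature (a callable returning output, tokens used, and the next agent name) are hypothetical, and the default limits are placeholders.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Callable, Optional


class BudgetExceeded(RuntimeError):
    """Raised when a run exceeds its hop, call, or token budget."""


@dataclass
class RunBudget:
    max_hops: int = 8
    max_calls_per_agent: int = 3
    max_tokens: int = 20_000
    hops: int = 0
    tokens: int = 0
    calls: Counter = field(default_factory=Counter)

    def before_call(self, agent: str) -> None:
        """Refuse the call up front if it would break a hop or call limit."""
        if self.hops + 1 > self.max_hops:
            raise BudgetExceeded(f"max hops ({self.max_hops}) reached")
        if self.calls[agent] + 1 > self.max_calls_per_agent:
            raise BudgetExceeded(f"{agent} exceeded {self.max_calls_per_agent} calls")
        self.hops += 1
        self.calls[agent] += 1

    def after_call(self, tokens_used: int) -> None:
        """Account for tokens spent and stop once the budget is blown."""
        self.tokens += tokens_used
        if self.tokens > self.max_tokens:
            raise BudgetExceeded(f"token budget ({self.max_tokens}) exceeded")


# An agent takes the current text and returns (output, tokens_used, next_agent).
AgentFn = Callable[[str], tuple[str, int, Optional[str]]]


def run(agents: dict[str, AgentFn], start: str, task: str, budget: RunBudget) -> str:
    """Follow handoffs until an agent returns no successor or a budget trips."""
    current: Optional[str] = start
    output = task
    while current is not None:
        budget.before_call(current)
        output, used, nxt = agents[current](output)
        budget.after_call(used)
        current = nxt
    return output
```

Two agents that keep handing control to each other now fail fast with `BudgetExceeded` instead of burning tokens indefinitely, and the counters on `RunBudget` double as the per‑agent invocation metrics.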

AI Failure #4 — Expecting deep reasoning without research tooling

What went wrong

Omega was asked to produce consultant‑level deliverables (industry case studies, competitor benchmarks) but had only internal data and no web research capability. The result: shallow answers or hallucinated facts.

Technical verification

- LLMs are pattern learners. Without external knowledge sources or a structured web research tool, they will synthesize plausible but unverified statements. Perplexity’s Sonar and other “deep research” models provide APIs that can do source‑backed research and return citations; Azure and other stacks provide large context engines suited to multi‑step research workflows. Netguru examined these options (Perplexity Sonar, Azure Deep Research, and custom research agents) and weighed trade‑offs (context size, cost, integration complexity).

How to avoid the same mistake

- Build a dedicated research toolchain or integrate a citation‑capable research model for high‑stakes outputs. Options to consider:

  - Perplexity Sonar for API‑driven, citation‑oriented research (128K context in some offerings, async APIs for long jobs).

  - Azure’s research and large‑context tooling for enterprise workloads when you need deep context windows and integration with Azure security.

  - A custom research agent using curated crawlers plus RAG, which is slower to build but gives full control and predictable provenance.

AI Failure #5 — Ignoring visual and structured inputs (tables, screenshots, PDFs)

What went wrong

Early Omega processed plain text only. Pitch decks, pricing tables, and screenshots were ignored or flattened, which lost crucial meaning and produced misleading summaries.

Technical verification

- Document processing services (Azure Document Intelligence / Form Recognizer and Amazon Textract) are specifically built to extract tables, key‑value pairs, and layout from PDFs and images. Azure Document Intelligence provides APIs to extract tables and preserve page/structure, and it integrates with modern Azure stacks for reliable downstream processing. Amazon Textract also extracts tables but has limitations in certain layouts and orientation cases; practitioners report mixed results with complex or vertical text. The commercial and open‑source ecosystems (pdfplumber + Tesseract, or a multimodal LLM that accepts documents directly) offer different trade‑offs.

Netguru’s solution

- Netguru evaluated multiple extraction routes and settled on Azure Document Intelligence for consistent table extraction and layout preservation — then fed structured Markdown (tables + image metadata) to downstream agents. This allowed Omega to reason about structured data rather than guess from flattened text.

Implementation options and trade‑offs

- Amazon Textract: good managed service priced per page, with mixed results on exotic layouts; carries vendor lock‑in risk.

- Azure Document Intelligence (Form Recognizer): robust table/key‑value extraction with SDKs and long‑running operations; integrates well in Azure environments.

- Open‑source stack (pdfplumber + Tesseract): cheaper and flexible but requires engineering to handle noisy inputs and complex table detection.

- Multimodal LLMs that accept PDFs: simplest pipeline but expensive in tokens and potentially inconsistent for precise data extraction.
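Whichever extractor is chosen, the hand-off to downstream agents is the same step: turn extracted cells into a Markdown table. The sketch below assumes the extractor's output has already been reduced to `(row_index, column_index, text)` tuples with row 0 as the header; that shape and the function name are assumptions for illustration, not any vendor's response format.

```python
def cells_to_markdown(cells: list[tuple[int, int, str]]) -> str:
    """Render (row_index, column_index, text) cells as a Markdown table.

    Row 0 is treated as the header. Missing cells become empty strings.
    """
    if not cells:
        return ""
    n_rows = max(r for r, _, _ in cells) + 1
    n_cols = max(c for _, c, _ in cells) + 1
    grid = [[""] * n_cols for _ in range(n_rows)]
    for r, c, text in cells:
        # Escape pipes and flatten newlines so one cell stays one cell.
        grid[r][c] = text.replace("|", "\\|").replace("\n", " ").strip()

    def render(row: list[str]) -> str:
        return "| " + " | ".join(row) + " |"

    lines = [render(grid[0]), render(["---"] * n_cols)]
    lines += [render(row) for row in grid[1:]]
    return "\n".join(lines)


if __name__ == "__main__":
    pricing = [(0, 0, "Plan"), (0, 1, "Price"), (1, 0, "Basic"), (1, 1, "$10")]
    print(cells_to_markdown(pricing))
```

Keeping rows and columns explicit is what lets a model answer "which plan costs $10?" from the table rather than guessing from flattened text.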

AI Failure #6 — Picking a framework before knowing the trade‑offs

What went wrong

Netguru adopted AutoGen’s AgentChat high‑level API to accelerate development. That gave a fast prototype path but later limited customization, model portability, and deep debugging. As the system matured they moved from the high‑level API down into AutoGen Core for more control while adding wrappers to avoid framework coupling.

Technical verification

- AutoGen is a widely used multi‑agent framework that provides layered APIs (Core, AgentChat, Extensions); it’s well‑suited for rapid prototyping but, like all abstractions, imposes trade‑offs when you need low‑level control or custom model integrations. Microsoft maintains AutoGen and documents the layered approach clearly.

Lessons and engineering controls

- Start with the simplest tooling that validates your business assumptions, not the one that seems “most production ready.” But design for portability:

  - Encapsulate framework calls behind thin adapters/wrappers to enable future migration.

  - Keep your orchestration logic in versioned, well‑documented graphs or state machines rather than hard‑wiring behavior into framework glue.

  - Maintain a fallback agent path: e.g., a single agent + tools mode that can replace a complex multi‑agent swarm if coordination proves too brittle.
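The adapter and fallback ideas can be combined in a few lines. This is a sketch with hypothetical names: `AgentRuntime` is an application-owned interface, `FrameworkRuntime` wraps whatever callable the chosen framework exposes (no real framework API is shown), and `SingleAgentRuntime` is a deliberately simple fallback path.

```python
from typing import Callable, Protocol


class AgentRuntime(Protocol):
    """The only interface the rest of the application depends on."""

    def run(self, task: str) -> str: ...


class FrameworkRuntime:
    """Adapter around a framework-backed team.

    `team_call` is a hypothetical callable wrapping the framework; nothing
    outside this class needs to import the framework itself.
    """

    def __init__(self, team_call: Callable[[str], str]) -> None:
        self._team_call = team_call

    def run(self, task: str) -> str:
        return self._team_call(task)


class SingleAgentRuntime:
    """Fallback path: one agent that calls plain Python tools in a fixed order."""

    def __init__(self, tools: dict[str, Callable[[str], str]]) -> None:
        self._tools = tools

    def run(self, task: str) -> str:
        return "\n".join(f"{name}: {tool(task)}" for name, tool in self._tools.items())


class WithFallback:
    """Try the primary runtime; on any failure, use the simpler one."""

    def __init__(self, primary: AgentRuntime, fallback: AgentRuntime) -> None:
        self._primary = primary
        self._fallback = fallback

    def run(self, task: str) -> str:
        try:
            return self._primary.run(task)
        except Exception:
            return self._fallback.run(task)
```

Swapping frameworks then means writing one new adapter class; callers that hold an `AgentRuntime` do not change.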

Operational and governance lessons (cross‑cutting)

Across these failures, consistent themes emerged:

- Design for observability from day one. Flow‑level traces, token usage logs, per‑agent invocation counts, and human corrections are essential signals for tuning.

- Human‑in‑the‑loop (HITL) is not an afterthought. For high‑impact outputs, embed verification gates and audit trails. Log prompts, model versions, and the evidence used for claims.

- Data governance and DLP matter. Agents that can access documents and CRM fields must obey the principle of least privilege and tenant scoping policies. Don’t assume vendor promises are sufficient; validate contractual and telemetry protections for sensitive data.

- Be conservative with autonomy. Let agents act only when you can trace and roll back side effects. Automated writes to CRMs, contract systems, or billing engines require explicit approvals and robust rollback playbooks.
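A human approval gate with an audit trail might look like the sketch below. The action kinds, field names, and the in-memory list used as a log are all assumptions for illustration; a production system would write to durable storage and attach model versions and prompts as well.

```python
import json
import time
from dataclasses import asdict, dataclass
from typing import Callable, Optional


@dataclass
class ProposedAction:
    kind: str            # e.g. "crm_update" or "doc_search"
    target: str          # record or resource the action touches
    payload: dict
    evidence: list[str]  # what the agent based the proposal on


# Hypothetical set of action kinds that change external systems.
SIDE_EFFECTING = {"crm_update", "send_email"}


def execute(
    action: ProposedAction,
    apply: Callable[[ProposedAction], None],
    audit_log: list[str],
    approved_by: Optional[str] = None,
) -> str:
    """Apply an action only if it is read-only or a human has approved it."""
    record = {"ts": time.time(), "action": asdict(action), "approved_by": approved_by}
    if action.kind in SIDE_EFFECTING and approved_by is None:
        record["status"] = "pending_approval"
    else:
        apply(action)
        record["status"] = "applied"
    # Every decision is logged, including the ones that were held back.
    audit_log.append(json.dumps(record))
    return record["status"]
```

Because held-back actions are logged alongside applied ones, the same log answers both "what did the agent do?" and "what did it want to do?", which is useful when tuning how much autonomy to grant.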

Practical blueprint: how to build an agent the Netguru way (do / don’t checklist)

- Start small and measure:

  - Pick one narrow, high‑value workflow (meeting prep, triage, or doc retrieval). Define KPIs: time saved, error rate, and verification time.

- Architect for state and orchestration:

  - Use a workflow engine (Step Functions, durable tasks, or similar) for multi‑step flows. Avoid trying to keep long state in ephemeral functions.

- Design context rigorously:

  - Summarize and store conversation snapshots nightly. Use retrieval‑augmented patterns with short, relevant windows injected at runtime.

- Control agent routing:

  - If using multiple agents, define deterministic handoffs and enforce max‑hops and token budgets. Evaluate single‑agent + tools vs. swarm approaches with measurable experiments.

- Add visual/structured extraction early:

  - For sales and proposal work, integrate a document intelligence step (Azure Document Intelligence or equivalent). This prevents “silent misses” in decks and PDFs.

- Bake governance and logs into the product:

  - Log prompt content, model version, retrieval evidence, agent flow traces, and user actions. Keep records for audit and debugging.

- Invest in research tooling for high‑stakes answers:

  - For competitive analyses or industry reports, have a research agent that returns citations, or integrate Perplexity/Sonar or enterprise web research services.

Critical analysis: strengths, risks, and what Netguru’s story hides

Netguru’s approach is pragmatic and replicable: modular services, staged summarization, and experimenting with orchestration patterns are strong engineering responses. Their use of Azure Document Intelligence to fix visual extraction is a sensible match for document‑heavy sales work, and their decision to move orchestration into Step Functions is the correct operational trade‑off for reliability and observability.

However, two risk areas deserve explicit caution:

- Model evolution changes the economics. New long‑context models (some vendors now advertise 128K–1M token models) alter trade‑offs but bring higher costs and new operational complexity (latency, token accounting). Don’t assume “more context” eliminates the need for retrieval and summarization. Validate cost vs. benefit with real workload simulations.

- Third‑party research / citation services can return fragile outputs. Early user reports and community threads show Sonar and research APIs sometimes truncate or vary in output length, and vendor APIs may have quirks you’ll need to handle. Expect to wrap such services with retry, chunking, and cascade fallback strategies.
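A retry-plus-cascade wrapper for research providers could be sketched like this. The provider callables, the `min_chars` truncation heuristic, and the default delays are assumptions; no vendor API is called here, and the `sleep` parameter exists only so the backoff can be stubbed out.

```python
import time
from typing import Callable, Sequence

Provider = tuple[str, Callable[[str], str]]


def call_with_fallback(
    providers: Sequence[Provider],
    query: str,
    retries: int = 2,
    base_delay: float = 0.5,
    min_chars: int = 200,
    sleep: Callable[[float], None] = time.sleep,
) -> tuple[str, str]:
    """Try providers in order; retry each with backoff; reject short answers.

    Returns (provider_name, answer) or raises RuntimeError with all failures.
    """
    errors: list[str] = []
    for name, fn in providers:
        for attempt in range(retries + 1):
            try:
                answer = fn(query)
                if len(answer) >= min_chars:
                    return name, answer
                # Treat suspiciously short output as a likely truncation.
                errors.append(f"{name}: answer too short ({len(answer)} chars)")
            except Exception as exc:
                errors.append(f"{name}: {exc!r}")
            if attempt < retries:
                sleep(base_delay * 2 ** attempt)  # exponential backoff
    raise RuntimeError("all providers failed: " + "; ".join(errors))
```

Ordering the list from preferred to last-resort provider keeps the cascade policy in one place, and the collected `errors` give a per-provider failure trail for the observability logs.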

Conclusion

Omega’s best lessons were learned the hard way: failure is the design teacher that reveals hidden assumptions. The six failure modes Netguru encountered (serverless limitations, token window exhaustion, agent orchestration loops, missing research tooling, visual‑document blind spots, and framework lock‑in) are practical, common obstacles. Each has clear engineering remedies: durable orchestration, summarization pipelines, disciplined agent routing, dedicated research agents, document intelligence, and cautious choice of framework with adapter patterns.

For teams in the Windows and enterprise ecosystem, the core message is simple and urgent: build agents as systems, not as prompts. Architect for state, observability, governance, and human oversight from day one; measure everything that matters (latency, token spend, task success, hallucination rate); and keep the option to replace pieces simple by wrapping framework dependencies. The wins are tangible (faster meeting prep, fewer repetitive tasks, and better-informed sellers), but they only arrive when the hard engineering and governance work is done.

Netguru’s Omega proves a sober point: useful AI agents are less about model novelty and more about glue, control, and the discipline to learn from failure.

Source: Netguru 6 AI Failure Examples That Showed Us How to Build an AI Agent