OpenText’s latest push makes an urgent point for enterprise IT: the limit to scaling generative AI is rarely the models — it’s the content that feeds them. The company’s Content Cloud 26.1 release, the new Content Aviator–Microsoft Copilot integration, and the industry-focused Content Next collaboration with Fiserv collectively aim to convert promising pilots into repeatable, auditable, and governed AI outcomes by making enterprise content AI-ready.


Source: OpenText Blogs Delivering impactful AI outcomes with AI-ready content

Background


Generative AI has matured rapidly, but enterprise adoption has followed a familiar trajectory: high-impact pilots, quick productivity wins for early users, then plateauing benefits driven by governance, traceability, and content quality challenges. Industry research highlights the gap: independent analyses show only a very small percentage of organizations are truly prepared to scale AI securely today, with security and governance frequently cited as the gating factors. OpenText positions its Content Cloud and companion services as the content-centric infrastructure designed to close that gap.

This article dissects the recent announcements, validates headline claims against independent industry research and vendor material, and offers practical analysis for IT leaders who must decide whether to build, buy, or partner to make their content backbone AI-ready.

Overview: What OpenText announced and why it matters

OpenText’s recent product and partnership news falls into three linked themes:

- AI-to-AI integration: Content Aviator, OpenText’s content-aware AI assistant, is now integrated as an agent inside Microsoft Copilot so Copilot can query governed enterprise content without leaving Microsoft 365 apps. The feature is part of OpenText Core Content Management’s 26.1 release.

- Industry-tailored productization: A co-innovation with Fiserv produced Content Next, a cloud-native, multi-tenant content and workflow platform for regulated financial services that embeds AI-enabled classification, summarization, and automation.

- Market recognition and validation: Industry analyst reports have recognized OpenText as a leader in knowledge discovery and intelligent document processing, reinforcing the narrative that content platforms are foundational to operational AI.

Why content — not models — is the scaling bottleneck

Generative models are powerful and accessible, but when deployed in enterprises, their outputs must be accurate, auditable, compliant, and contextual. These requirements expose four common content problems:

- Fragmentation: Content resides across repositories, collaboration tools, archives, and vertical systems. An assistant that cannot access the right repositories will produce incomplete or misleading responses.

- Poor metadata and discoverability: Without reliable classification and metadata, retrieval is noisy and relevance suffers.

- Governance and traceability gaps: Compliance teams demand answers that can be traced to source documents and policy decisions — something most early AI pilots did not prioritize.

- Data quality and privacy: Incomplete or stale content, sensitive information mixed with non-sensitive content, and inconsistent redaction policies create legal and operational risk.
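To make these problems concrete, here is a minimal Python sketch of a content-readiness audit that flags missing metadata, stale documents, and sensitive content without a label. It assumes a hypothetical set of required fields (`owner`, `doc_type`, `retention_class`), a one-year review window, and a `contains_pii` flag set by some upstream scanner; none of these names come from OpenText’s products.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta

# Hypothetical canonical fields; each organization defines its own taxonomy.
REQUIRED_FIELDS = ("owner", "doc_type", "retention_class")


@dataclass
class ContentItem:
    doc_id: str
    repository: str
    last_reviewed: date
    metadata: dict = field(default_factory=dict)


def audit_item(item, today, max_age_days=365):
    """Return readiness issues for one item: missing metadata, staleness, unlabeled PII."""
    issues = []
    missing = [f for f in REQUIRED_FIELDS if not item.metadata.get(f)]
    if missing:
        issues.append("missing metadata: " + ", ".join(missing))
    if today - item.last_reviewed > timedelta(days=max_age_days):
        issues.append(f"stale: not reviewed in over {max_age_days} days")
    # `contains_pii` is assumed to be set by a hypothetical upstream scanner.
    if item.metadata.get("contains_pii") and not item.metadata.get("sensitivity"):
        issues.append("PII present but no sensitivity label")
    return issues


def audit(items, today):
    """Map doc_id -> issues, listing only items that need remediation."""
    report = {}
    for item in items:
        issues = audit_item(item, today)
        if issues:
            report[item.doc_id] = issues
    return report


items = [
    ContentItem("hr-001", "sharepoint", date(2024, 1, 10),
                {"owner": "HR", "doc_type": "policy", "retention_class": "7y"}),
    ContentItem("loan-042", "legacy-ecm", date(2020, 5, 1),
                {"owner": "Lending", "contains_pii": True}),
]
report = audit(items, today=date(2024, 6, 1))
print(report)
```

Only the legacy loan file is reported; the well-maintained HR policy passes. Keeping `repository` on each item makes it easy to see which source systems carry the most remediation work.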

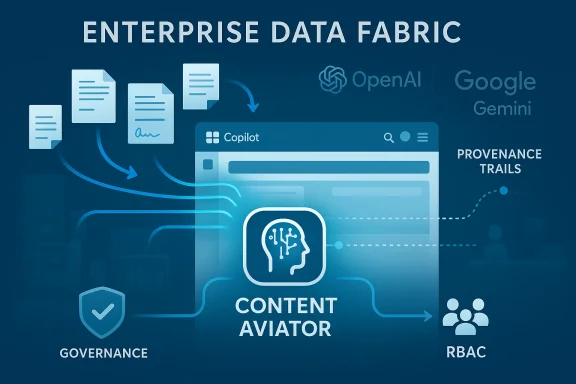

The AI-to-AI approach: Content Aviator inside Microsoft Copilot

What the integration delivers

The Content Aviator–Copilot integration is an example of what OpenText calls “AI-to-AI”: one AI (a content-aware agent) acting as a trusted data source for another AI (Copilot). In practice, the integration promises four core capabilities inside Microsoft 365:

- Surface summaries and key insights that are grounded in enterprise content.

- Provide real-time access to source documents and supporting records from within the Copilot experience.

- Offer traceable references so users can validate AI responses against governed content.

- Reduce app switching by keeping workers inside familiar Microsoft apps while accessing enterprise-managed knowledge.
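One way to picture “traceable references” is an answer object that carries its citations alongside the generated text. The sketch below is a hypothetical data shape in Python, not the actual Content Aviator or Copilot response format; the `Citation` and `GroundedAnswer` names are illustrative.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Citation:
    doc_id: str    # identifier in the governed repository (hypothetical)
    title: str
    passage: str   # the excerpt the answer relies on


@dataclass
class GroundedAnswer:
    text: str
    citations: list = field(default_factory=list)

    def is_grounded(self):
        """An answer with no citations cannot be validated against governed content."""
        return len(self.citations) > 0

    def render(self):
        """Format the answer with numbered source references the user can check."""
        lines = [self.text, "", "Sources:"]
        for n, c in enumerate(self.citations, start=1):
            lines.append(f'[{n}] {c.title} ({c.doc_id}): "{c.passage}"')
        return "\n".join(lines)


answer = GroundedAnswer(
    "Remote work requires written manager approval.",
    [Citation("hr-001", "Remote Work Policy",
              "Employees must obtain written approval from their manager.")],
)
print(answer.render())
```

Treating an uncited answer as ungrounded gives the user interface a simple rule: show the sources when they exist, and warn when they do not.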

Technical posture and model choices

Content Aviator is designed to work with models from multiple providers, including OpenAI, Google Gemini, and the model families offered by the major cloud platforms, while remaining repository-aware and permission-aware. That design lets enterprises choose model runtimes while preserving content governance and audit trails.

Two practical implications:

- Enterprises can continue to use their preferred large models while centralizing trust at the content layer.

- Security teams can restrict which content is exposed to which models and retain audit logs that show what content was used to produce a particular response.
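Both implications, together with the point that access policy should be applied before a model sees any content, can be sketched as a filter that runs before prompt assembly. In this minimal Python sketch the policy table, model names (`model-a`, `model-b`), and document fields are hypothetical; a real deployment would take them from the content platform’s own permission and classification services.

```python
from datetime import datetime, timezone

# Hypothetical policy: which model endpoints may receive content at each sensitivity level.
MODEL_POLICY = {
    "public": {"model-a", "model-b"},
    "internal": {"model-a"},
    "restricted": set(),  # never sent to any model
}

audit_log = []


def select_context(docs, model, user, user_groups):
    """Return only documents the user may read AND the model may receive; log the decision."""
    allowed, withheld = [], []
    for doc in docs:
        user_can_read = bool(doc["acl"] & user_groups)
        # Unknown sensitivity labels fall back to an empty set, so they fail closed.
        model_permitted = model in MODEL_POLICY.get(doc["sensitivity"], set())
        if user_can_read and model_permitted:
            allowed.append(doc)
        else:
            withheld.append(doc["id"])
    audit_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "model": model,
        "sent": [d["id"] for d in allowed],
        "withheld": withheld,
    })
    return allowed


docs = [
    {"id": "kb-1", "sensitivity": "public", "acl": {"all-staff"}},
    {"id": "hr-7", "sensitivity": "internal", "acl": {"hr"}},
    {"id": "fin-3", "sensitivity": "internal", "acl": {"all-staff"}},
    {"id": "deal-9", "sensitivity": "restricted", "acl": {"exec"}},
]
context = select_context(docs, model="model-a", user="alice", user_groups={"all-staff"})
print([d["id"] for d in context], audit_log[-1]["withheld"])
```

Because every call appends to `audit_log`, the log records what was withheld as well as what was sent, which helps reviewers reconstruct what a given response could and could not have drawn on.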

Why embedding matters more than connectors

APIs and connectors can stitch applications together, but they do not by themselves create a knowledge fabric: consistent metadata, robust classification, and a single source of truth. Embedding an agent like Content Aviator into a platform such as Copilot means the content system can apply enterprise rules, access policies, and explainable retrieval workflows before the model consumes the content. That order matters for compliance, for controlling hallucination risk, and for improving answer accuracy.

Industry focus: Content Next and the financial services use case

The challenge for banks and credit unions

Financial institutions operate under strict regulatory regimes: KYC/AML, record retention, auditability, and consumer privacy regulations. They also handle high volumes of documentation, including loan files, transaction records, regulatory filings, and customer communications. For these organizations, a misplaced or ungoverned AI response isn’t just embarrassing; it can be illegal.

What Content Next promises

Developed in partnership with Fiserv, Content Next is a multi-tenant, cloud-native content and workflow suite purpose-built for financial institutions. The offering emphasizes:

- Role- and process-based workspaces tailored to banking workflows (e.g., lending, compliance, customer service).

- Embedded AI for permission-aware search, automated document classification, summarization, and process automation.

- Self-service administrative controls enabling institutions to manage permissions, onboard users, and configure roles without heavy IT intervention.

- Multi-tenant, regulated-cloud architecture intended to support secure scaling.
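As an illustration of automated classification feeding role- and process-based workspaces, here is a deliberately simple Python sketch. Content Next’s classification is described as AI-enabled; this stand-in swaps in hypothetical keyword rules so the routing logic stays visible, and it sends anything it cannot classify to manual review rather than guessing.

```python
import re

# Hypothetical keyword rules standing in for an AI classifier:
# (document type, target workspace, pattern). First match wins.
RULES = [
    ("loan_application", "lending",
     re.compile(r"\b(loan|mortgage|collateral)\b", re.IGNORECASE)),
    ("kyc_record", "compliance",
     re.compile(r"\b(passport|proof of address|beneficial owner)\b", re.IGNORECASE)),
    ("customer_complaint", "customer_service",
     re.compile(r"\b(complaint|dispute|refund)\b", re.IGNORECASE)),
]


def classify(text):
    """Return (doc_type, workspace); unmatched documents go to manual review."""
    for doc_type, workspace, pattern in RULES:
        if pattern.search(text):
            return doc_type, workspace
    return "unclassified", "manual_review"


for sample in ("Mortgage application with collateral appraisal",
               "Customer dispute regarding a card refund",
               "Board meeting minutes"):
    print(sample, "->", classify(sample))
```

The explicit `manual_review` fallback matters more than the matching technique: in a regulated workflow, an unrouted document should land with a person, not in the nearest plausible workspace.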

Why industry-built matters

General-purpose content platforms can be adapted for regulated industries, but starting with a domain-specific blueprint accelerates time-to-value. Content Next bundles workflow templates, compliance guardrails, and integration patterns with core banking systems, reducing the customization burden that often stalls enterprise AI projects.

Independent validation and analyst recognition

OpenText’s strategy is not just marketing; it has earned recognition in analyst research. Notably, an IDC MarketScape assessment and other industry analyses identify OpenText as a leader in knowledge discovery and intelligent document processing. Those judgments reinforce the claim that enterprise-grade content platforms, with governance, search, and metadata enrichment, are central to scaling AI in regulated and complex environments.

At the same time, independent readiness studies show a large gap between intention and operational maturity. A notable industry survey found that only a very small fraction of organizations qualify as highly AI-ready, with most firms occupying a “moderately ready” band and struggling with governance and security. That gap explains why OpenText’s content-first positioning resonates: it directly targets the structural impediments that keep pilots from becoming enterprise-wide.

Strengths: What is compelling about OpenText’s approach

- Governance-first design: Applying content governance before the model consumes content reduces compliance risk and makes traceability part of normal operations rather than an afterthought.

- Contextual AI: When AI answers are rooted in permission-aware content stores, accuracy and confidence rise — particularly for knowledge worker scenarios that require a defensible audit trail.

- Vendor and model neutrality: Supporting multiple model providers allows organizations to standardize on content controls while experimenting with models.

- Industry partnerships: Co-innovation with Fiserv, a major payments and banking technology provider, shortens time-to-compliance and demonstrates real-world applicability in a heavily regulated sector.

- Operationalization focus: The roadmap and product releases emphasize moving from pilots to production — a missing step in many enterprise AI journeys.

Risks and limitations: What to watch for

- Integration complexity in heterogeneous environments: Many large enterprises run legacy content systems, custom ECMs, and archived repositories. Even with migration tools, the process of consolidating metadata, access policies, and data quality across diverse systems will be non-trivial.

- False sense of “magic”: Embedding a content agent doesn’t eliminate the need for robust data hygiene, continuous labeling, and active governance processes. Organizations must still invest in content remediation, retention policies, and human-in-the-loop validation.

- Operational metadata debt: If organizations rush to connect content but neglect to model business context appropriately (who owns a workspace, what retention applies, which fields are authoritative), auditability will be shallow and brittle.

- Model–content boundary risks: Even with permission-aware gates, models might still generate outputs that misrepresent context if retrieval relevance is weak. Guardrails need to include confidence scoring, fallback behaviors (e.g., “I don’t know” responses), and human review for high-risk outputs.

- Vendor lock-in perceptions: While Content Aviator aims to be model-agnostic, deep integration with a vendor’s content platform raises procurement questions. Organizations must evaluate total cost of ownership and exit strategies.

- Regulatory interpretation: Embedding AI into decision workflows can change legal responsibilities and audit expectations; banks and regulated entities must align product features with legal counsel and regulators before broad rollouts.
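The guardrails named under model–content boundary risks (confidence scoring, an “I don’t know” fallback, and human review) can be combined into a single gate that runs after retrieval and drafting. This Python sketch assumes retrieval scores normalized to [0, 1] and an upstream flag marking an answer as high-risk; the 0.6 threshold and the fallback wording are placeholders to tune, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class Retrieved:
    doc_id: str
    score: float  # retrieval relevance, assumed normalized to [0, 1]


FALLBACK = "I don't know: no sufficiently relevant governed content was found."


def gate_answer(draft, retrieved, high_risk, min_score=0.6, min_sources=1):
    """Return the draft, a fallback, or a hold-for-review decision."""
    supporting = [r.doc_id for r in retrieved if r.score >= min_score]
    if len(supporting) < min_sources:
        # Weak retrieval: refuse rather than let the model improvise.
        return {"status": "fallback", "text": FALLBACK, "sources": []}
    if high_risk:
        # Grounded but consequential: route to a human reviewer before release.
        return {"status": "pending_review", "text": draft, "sources": supporting}
    return {"status": "answered", "text": draft, "sources": supporting}


print(gate_answer("The rate is 4%.", [Retrieved("kb-1", 0.3)], high_risk=False)["status"])
```

Checking retrieval strength first means a high-risk question with weak evidence is refused outright instead of consuming reviewer time on an answer that has nothing to stand on.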

Practical guidance: How to get content AI-ready

Making content AI-ready is both technical and organizational. Here are pragmatic steps IT and data leaders should follow to move from pilot to scale:

- Inventory and classify repositories: Identify all content sources, owners, formats, and regulatory obligations.

- Prioritize high-impact domains: Start where AI answers create measurable business value and where traceability is manageable (e.g., HR policies, contract summaries, customer support knowledge).

- Establish metadata and taxonomy standards: Create consistent classification, tagging rules, and canonical fields that drive retrieval relevance.

- Apply permission-aware retrieval: Ensure content access for AI agents is governed by the same RBAC and policy rules as for human users.

- Instrument audit and provenance logging: Record which documents and passages contributed to every AI response; surface that provenance to users.

- Define human-in-the-loop thresholds: Require human validation for decisions above risk or monetary thresholds; automate low-risk summarizations.

- Continuously measure accuracy and business outcomes: Track model answer precision, user trust metrics, time saved, and compliance KPIs.

- Plan migration and parallel operations: Use migration tooling for legacy systems, but maintain parallel audits until provenance and quality are verified.
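For the measurement step, a small aggregation over human-reviewed answers is often enough to start. This Python sketch assumes a hypothetical review record per answer (reviewer verdict, number of attached sources, self-reported minutes saved, and a thumbs-up trust signal); compliance KPIs would need organization-specific fields.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ReviewedAnswer:
    correct: bool         # reviewer judged the answer accurate
    cited_sources: int    # provenance references attached to the answer
    minutes_saved: float  # self-reported estimate, so treat as indicative
    thumbs_up: bool       # user trust signal


def kpis(records):
    """Aggregate simple accuracy, provenance, trust, and time-saved indicators."""
    if not records:
        raise ValueError("no reviewed answers to measure")
    n = len(records)
    return {
        "answer_precision": sum(r.correct for r in records) / n,
        "citation_coverage": sum(r.cited_sources > 0 for r in records) / n,
        "trust_rate": sum(r.thumbs_up for r in records) / n,
        "avg_minutes_saved": mean(r.minutes_saved for r in records),
    }


sample = [
    ReviewedAnswer(True, 2, 10.0, True),
    ReviewedAnswer(True, 1, 5.0, True),
    ReviewedAnswer(False, 0, 0.0, False),
    ReviewedAnswer(True, 3, 15.0, False),
]
print(kpis(sample))
```

Tracking citation coverage next to answer precision helps separate two failure modes: answers that are wrong, and answers that may be right but cannot be traced.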

Operational considerations for Microsoft Copilot integration

Embedding Content Aviator in Copilot is operationally attractive, but it introduces specific administrative considerations:

- Permission propagation: Verify that content permissions are enforced consistently when content is surfaced inside Copilot, and ensure that sharing artifacts don’t leak beyond intended audiences.

- User experience: Educate knowledge workers about provenance features — e.g., how to view source citations or escalate uncertain answers for review.

- Monitoring and alerting: Set up automated alerts for anomalous retrieval patterns that could indicate misconfigured permissions or model misuse.

- Retention and legal hold: Ensure AI query logs and provenance traces are accounted for in legal holds and retention policies.

- Model governance: Decide which models are permitted for which workloads and enforce those choices via platform controls.
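The monitoring item can start with simple per-user baselines. This hypothetical Python sketch flags two patterns: a retrieval volume spike relative to a user’s usual daily count, and a high share of permission-denied results, which can point to misconfigured permissions. The event fields, thresholds, and baseline source are assumptions; a production setup would read from the platform’s audit logs and feed an alerting system.

```python
from collections import Counter


def flag_anomalies(events, baseline, factor=3.0, min_events=20, max_denial_rate=0.25):
    """Return (user, reason) alerts for volume spikes and high permission-denial rates."""
    totals = Counter(e["user"] for e in events)
    denials = Counter(e["user"] for e in events if e["denied"])
    alerts = []
    for user, n in sorted(totals.items()):
        if n < min_events:
            continue  # too little activity to judge
        # Users with no baseline get a floor of 1, so any active new user is flagged.
        expected = baseline.get(user, 0)
        if n > factor * max(expected, 1):
            alerts.append((user, "volume_spike"))
        if denials[user] / n > max_denial_rate:
            alerts.append((user, "high_denial_rate"))
    return alerts


events = ([{"user": "alice", "denied": False}] * 12
          + [{"user": "bob", "denied": False}] * 30
          + [{"user": "carol", "denied": True}] * 10
          + [{"user": "carol", "denied": False}] * 15)
baseline = {"alice": 10, "bob": 8, "carol": 20}  # usual daily retrievals (hypothetical)
print(flag_anomalies(events, baseline))
```

Here bob is flagged for pulling far more content than usual, and carol for hitting permission denials on 40% of her retrievals; alice’s activity is too low to judge.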

Business impact: What success looks like

Organizations that nail the content layer will see several tangible benefits:

- Faster decision cycles because knowledge workers can retrieve validated summaries without manual searches.

- Reduced operational risk as outputs are traceable and auditable.

- Lower friction for scaling AI across teams because governance becomes a reusable service rather than a one-off project.

- Higher ROI on AI initiatives as efforts move from single-use pilots to embedded workflows that touch many users.

When to buy, build, or partner

- Buy (choose a packaged content platform) if you:
  - Need rapid compliance and pre-built governance for regulated workflows.
  - Prefer a single-vendor path for integrated provenance, RBAC, and UI experience.
  - Want vendor-managed upgrades and audit features.

- Build (augment existing ECM with custom capabilities) if you:
  - Have significant legacy investments that cannot be migrated quickly.
  - Need highly specialized business logic tightly coupled to in-house systems.
  - Possess the governance discipline and engineering bandwidth to operate a custom stack.

- Partner (co-innovate with a vendor or systems integrator) if you:
  - Operate in an industry with specific regulatory needs and need accelerators (for example, banking, insurance, or healthcare).
  - Want domain templates and workflow patterns that shorten time-to-value.

Final verdict and recommendations

OpenText’s content-first articulation of enterprise AI readiness is a practical answer to a persistent problem: models are only as useful as the data and governance that shape them. The company’s 26.1 release (particularly the Content Aviator integration with Microsoft Copilot) and the Content Next program with Fiserv are sensible steps toward operationalizing trustworthy, accountable, and scalable AI.

But technology alone will not solve the problem. To realize the promise, organizations must reorganize around content as an asset, invest in metadata and taxonomy, and align legal, compliance, and business teams to define acceptable risk thresholds and human oversight policies.

For IT leaders evaluating these options:

- Treat content readiness as the critical path for AI scale.

- Insist on provenance, auditability, and permission-aware retrieval as non-negotiable features.

- Pilot with business value metrics, then harden governance and migration plans before broad rollouts.

- Consider industry-specific offerings when regulatory complexity is high — they accelerate compliance and reduce integration debt.

Conclusion

The path to reliable enterprise AI runs through the content estate. OpenText’s 26.1 innovations, Content Aviator’s Microsoft Copilot integration, and the Content Next partnership articulate a coherent answer to the toughest scaling problems: fragmentation, governance, traceability, and industry compliance. These are not silver bullets, but they are necessary plumbing for responsible, scalable AI.

Enterprises that prioritize trusted content, clear provenance, and permission-aware AI will get measurable business value from generative models sooner, and with far less downstream risk, than those that chase model performance alone. The next phase of AI adoption will reward organizations that see content management not as a legacy cost center, but as the strategic bedrock of trustworthy, operational AI.
