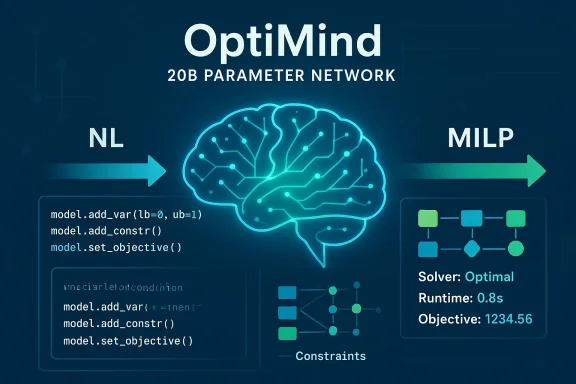

OptiMind is Microsoft Research’s new, domain-specialized small language model that translates natural-language optimization problems into solver-ready mixed-integer linear programs (MILPs) and executable code, promising to make optimization modeling faster, more reproducible, and—critically—more auditable for real-world decision systems.

Background

Optimization models power everything from supply‑chain planning to last‑mile routing and energy grid management, but converting informal business requirements into precise mathematical formulations remains an expert task. OptiMind is explicitly built to sit at that interface: a 20‑billion‑parameter Mixture‑of‑Experts transformer fine‑tuned to output both the math (variables, objective, and constraints) and executable Python code (GurobiPy by default) that a solver can run. The project pairs a careful data‑cleaning pipeline and a library of domain hints with a lightweight model architecture to reduce common conceptual errors in NL→MILP translation. (huggingface.co)

At a glance:

- Model: OptiMind‑SFT, 20B parameters (MoE variant). (huggingface.co)

- Primary task: Translate natural language problem descriptions into MILP formulations and runnable GurobiPy code.

- Data approach: Cleaned public OR datasets plus a hint library of expert rules used at training and inference.

- Availability & license: Released as microsoft/OptiMind‑SFT on Hugging Face under an MIT license; cleaned benchmarks and tooling are published with the research. (huggingface.co)

Why OptiMind matters: the gap it targets

Converting human requirements into an optimization-ready MILP is a brittle, error‑prone process. Typical pain points include:

- Ambiguous or missing constraints in problem statements.

- Incorrect or inconsistent reference solutions in public datasets.

- Repeated, systematic modeling mistakes for particular problem families (e.g., subtour elimination in TSP).
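
To make the subtour-elimination example concrete, here is one standard way to rule out subtours in a TSP over nodes 1 to n: the Miller-Tucker-Zemlin (MTZ) formulation. It is shown purely as an illustration; the source does not say which formulation OptiMind's data or hints rely on. The symbols are assumptions introduced here: c_ij is the travel cost, x_ij selects arc (i, j), u_i is an auxiliary visiting-order variable, and node 1 is taken as the start node.

```latex
\begin{aligned}
\min\ & \sum_{i=1}^{n} \sum_{j \ne i} c_{ij}\, x_{ij} \\
\text{s.t.}\ & \sum_{j \ne i} x_{ij} = 1 && \forall i \in \{1,\dots,n\} \\
& \sum_{i \ne j} x_{ij} = 1 && \forall j \in \{1,\dots,n\} \\
& u_i - u_j + n\, x_{ij} \le n - 1 && \forall i, j \in \{2,\dots,n\},\ i \ne j \\
& 1 \le u_i \le n - 1 && \forall i \in \{2,\dots,n\} \\
& x_{ij} \in \{0, 1\} && \forall i \ne j
\end{aligned}
```

The ordering constraints deliberately skip node 1. If they were also imposed on the start node, the order variables would have to increase all the way around a closed tour, which is impossible, so every tour would become infeasible. That is the kind of repeatable, family-specific mistake this list refers to.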

How OptiMind works

1. Data triage and hint‑driven cleaning

The project began by auditing existing NL→MILP datasets and benchmarks and discovering that many test and training examples were flawed: in some benchmarks, roughly 30–50% of instances were problematic (missing constraints, ambiguous instructions, or incorrect reference models). Rather than manually repair every training example, the authors developed a semi‑automated workflow:

- Classify problems into known optimization families (routing, scheduling, network design, etc.).

- Identify repeatable error modes within each class.

- Codify expert hints that correct those error modes (for example: how to treat a start node in TSP subtour‑elimination constraints; enforce flow conservation; use symbolic “big‑M” bounds instead of hard constants).

- Apply hints to guide a teacher model to regenerate corrected solutions and use majority voting to produce robust cleaned labels.
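
The majority-voting step in the last bullet can be sketched as follows. This is a minimal illustration, not the authors' pipeline: it assumes each regenerated solution has already been executed and reduced to an optimal objective value (or `None` if it failed), and that agreement is judged on rounded objective values. The function name, the rounding precision, and the majority threshold are all hypothetical.

```python
from collections import Counter
from typing import Optional, Sequence


def majority_label(
    objective_values: Sequence[Optional[float]],
    ndigits: int = 6,
    min_share: float = 0.5,
) -> Optional[float]:
    """Return the objective value most samples agree on, or None without a clear majority.

    None entries stand for samples whose code failed to run or solve.
    """
    solved = [round(v, ndigits) for v in objective_values if v is not None]
    if not solved:
        return None
    value, count = Counter(solved).most_common(1)[0]
    # Require agreement from more than `min_share` of *all* samples, failures included.
    if count / len(objective_values) > min_share:
        return value
    return None


# Five teacher samples for one problem: three agree, one differs, one failed.
print(majority_label([10.0, 10.0, 12.5, None, 10.0]))
```

Returning `None` when no value wins a clear majority lets a pipeline drop or escalate that example rather than keep a doubtful label.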

2. Supervised fine‑tuning

OptiMind is fine‑tuned from a 20B base in the gpt‑oss family (unsloth/gpt‑oss‑20b BF16 variant referenced in the model card) using the cleaned datasets (OR‑Instruct, OptMATH subsets). The fine‑tuning objective encourages the model to produce both:

- A structured mathematical formulation (variables, indices, constraints, objective), and

- An executable code block (GurobiPy is the primary target in published examples). (huggingface.co)

3. Inference: classification, hint retrieval, and optional solver‑in‑the‑loop self‑correction

At inference, OptiMind follows a multi‑stage flow:

- Classification: detect the optimization class of the input problem.

- Hint retrieval: fetch the relevant expert hint(s) for that class and prepend them to the prompt as a guardrail.

- Generation: produce MILP math and GurobiPy code.

- (Optional) Self‑correction: run generated code against a solver, capture error messages, and iterate—using solver feedback as an additional check to ensure the code is executable and the formulation is feasible.
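
The flow above can be sketched in a few dozen lines. Everything here is a hedged illustration rather than OptiMind's actual code: the `HINTS` table, the keyword-based `classify`, and the `generate` callable (which stands in for a call to the served model) are hypothetical. A plain subprocess is used only to capture error output; it is not a security sandbox.

```python
import subprocess
import sys
import tempfile
from pathlib import Path
from typing import Callable, Optional

# Hypothetical hint library: problem class -> expert hint text.
HINTS = {
    "tsp": "Exclude the start node from subtour-elimination constraints.",
    "network_flow": "Enforce flow conservation at every intermediate node.",
}


def classify(problem: str) -> str:
    """Toy keyword classifier standing in for the real classification step."""
    text = problem.lower()
    if "tour" in text or "salesman" in text:
        return "tsp"
    if "flow" in text:
        return "network_flow"
    return "unknown"


def build_prompt(problem: str, feedback: Optional[str] = None) -> str:
    """Prepend the retrieved hint (if any) and append execution feedback (if any)."""
    parts = []
    hint = HINTS.get(classify(problem))
    if hint:
        parts.append(f"Hint: {hint}")
    parts.append(f"Problem: {problem}")
    if feedback:
        parts.append(f"The previous attempt failed with:\n{feedback}\nPlease fix the code.")
    return "\n\n".join(parts)


def run_script(code: str, timeout_s: int = 60) -> tuple[bool, str]:
    """Run generated code in a separate process; return (ok, stdout or error text)."""
    with tempfile.TemporaryDirectory() as tmp:
        script = Path(tmp) / "model.py"
        script.write_text(code)
        try:
            proc = subprocess.run(
                [sys.executable, str(script)],
                capture_output=True, text=True, timeout=timeout_s, cwd=tmp,
            )
        except subprocess.TimeoutExpired:
            return False, f"timed out after {timeout_s}s"
    if proc.returncode == 0:
        return True, proc.stdout
    return False, proc.stderr


def solve_with_feedback(
    problem: str, generate: Callable[[str], str], max_rounds: int = 3
) -> tuple[Optional[str], Optional[str]]:
    """Generate code, execute it, and retry with the error message on failure."""
    feedback = None
    for _ in range(max_rounds):
        code = generate(build_prompt(problem, feedback))
        ok, output = run_script(code)
        if ok:
            return code, output
        feedback = output
    return None, feedback
```

Passing `generate` in as a parameter keeps the loop independent of how the model is served, and makes it easy to test with a stub. A script that exits cleanly is only known to be executable; whether the formulation is right still needs a separate check.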

Verified technical specifications (checked against public artifacts)

Below are the load‑bearing technical claims and the public sources that verify them:

- OptiMind is a 20 billion parameter model with a Mixture‑of‑Experts architecture; the Hugging Face model card lists 20B parameters and a 128k token context window. (huggingface.co)

- The team reports systematic accuracy improvements from its pipeline: the arXiv paper reports a 20.7% improvement in formulation accuracy across multiple benchmarks, while Microsoft’s blog highlights an improvement of roughly 10% over the base model on cleaned benchmarks and notes that cleaning removed a large fraction of bad test data. These are different but complementary summary metrics reported by the authors. (arxiv.org)

- The model outputs solver‑ready GurobiPy code and the Hugging Face README shows sample usage that expects a valid Gurobi license to run the generated scripts. (huggingface.co)

- The project paper and blog both describe the hint library and the training / inference workflows that use hints and solver feedback. (arxiv.org)

Evaluation and benchmark performance: what OptiMind actually achieves

The OptiMind team evaluated the model on classical NL→MILP benchmarks, most prominently IndustryOR, Mamo‑Complex, and OptMATH, after manually repairing the public test sets. Two key takeaways emerge:

- Raw public benchmarks contained many flawed examples; after those test sets were cleaned, OptiMind’s absolute performance rose substantially, and it outperformed open‑source models under 32B parameters on the corrected benchmarks.

- The authors report that their error‑classification + hint pipeline yields consistent gains under test‑time scaling methods (self‑consistency, multi‑turn feedback), enabling OptiMind to approach or match the performance of much larger frontier systems in certain settings. The arXiv paper quantifies a 20.7% improvement in formulation accuracy across several benchmarks after applying the method. (arxiv.org)
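
To see why flawed reference solutions distort these numbers, consider how formulation accuracy might be scored. The sketch below assumes a scoring rule the source does not spell out: a generated model counts as correct when its optimal objective value matches the reference within a tolerance. The function names and tolerances are hypothetical.

```python
import math
from typing import Optional, Sequence


def is_match(
    predicted: Optional[float],
    reference: float,
    rel_tol: float = 1e-6,
    abs_tol: float = 1e-6,
) -> bool:
    """Treat a prediction as correct if its optimal objective matches the reference."""
    if predicted is None:  # code failed, or the solver found no optimal solution
        return False
    return math.isclose(predicted, reference, rel_tol=rel_tol, abs_tol=abs_tol)


def accuracy(
    predictions: Sequence[Optional[float]], references: Sequence[float]
) -> float:
    """Fraction of problems whose predicted objective matches the reference."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        return 0.0
    hits = sum(is_match(p, r) for p, r in zip(predictions, references))
    return hits / len(references)


# Four problems: one exact match, one failed run, one near match, one miss.
print(accuracy([10.0, None, 3.0000000001, 7.0], [10.0, 5.0, 3.0, 8.0]))
```

Under a rule like this, a wrong reference value marks a correct formulation as a failure, so the share of flawed test items limits the score any model can reach. That is why repairing the test sets changes the reported results so much.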

Strengths and practical benefits

- Domain alignment reduces error modes. By encoding expert practices as hints and cleaning the labels, OptiMind focuses on structural correctness rather than surface fluency—this is exactly what the MILP task requires. The result is fewer infeasible or logically inconsistent formulations. (arxiv.org)

- Smaller model, local deployment. At 20B parameters OptiMind is modest relative to the multi‑hundred‑billion‑parameter giants. That makes local or on‑prem deployment feasible for organizations that must keep sensitive supply‑chain or operational data inside their network. The Hugging Face model card explicitly targets local serving via SGLang and recommends ≥32GB GPU VRAM for comfortable inference. (huggingface.co)

- Reproducible research artifacts. The team published cleaned benchmarks, the training pipeline, the model card, and an arXiv paper—this level of openness is rare for domain‑specialized models and helps the community reproduce and extend the work. (arxiv.org)

- Solver‑in‑the‑loop validation. Running generated code against an actual solver and leveraging its error messages for iterative correction brings an engineering maturity that avoids purely syntactic evaluation and emphasizes executability.

Risks, limitations, and where to be cautious

No model output should be trusted blindly in operational settings. OptiMind’s own documentation and model card list multiple explicit limitations and safety warnings:

- Generated code can still be incorrect (invalid constraints, wrong objective, or erroneous feasibility declarations). The model card cautions that OptiMind “can still produce incorrect formulations or invalid code” and warns against automatic execution without sandboxing and human oversight. (huggingface.co)

- Solver dependency and licensing: The default example path targets GurobiPy, a commercial solver. That creates practical constraints: users need a Gurobi license to execute generated scripts, and the model’s examples/infrastructure are structured around that ecosystem. Organizations relying on open solvers will need adapter tooling and additional verification. (huggingface.co)

- Benchmark sensitivity to cleaning decisions: The project’s performance uplifts depend heavily on the cleaned test sets. While cleaning is sensible and necessary, it also means that reported numbers are conditional on specific manual repair choices. The community should treat benchmark numbers with that context in mind and prefer the cleaned benchmarks for apples‑to‑apples comparisons. (arxiv.org)

- Overconfidence and automation risk: The model can produce plausible, executable code that nevertheless encodes incorrect assumptions. Deploying generated optimization models directly into production without a human‑in‑the‑loop and operational safety checks risks misallocation of resources, safety failures, or regulatory non‑compliance (for high‑stakes domains like healthcare, finance, or critical infrastructure). The Hugging Face card explicitly marks fully automated execution and safety‑critical use as out‑of‑scope. (huggingface.co)

- Security and injection risks: Any system that generates executable code must be treated as an attack surface. Prompt injection, model manipulation, or accidental generation of destructive commands requires hardened runtime controls, sandboxing, and policy enforcement—none of which are solved solely by a better model.
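
As one small piece of such runtime controls, generated code can be screened statically before it ever runs. The sketch below uses Python's standard `ast` module to reject scripts that import modules outside an allowlist or call a few risky built-ins. The allowlist and blocklist are assumptions chosen for illustration. A filter like this is easy to bypass, so it complements sandboxing and does not replace it.

```python
import ast

# Assumed allowlist for illustration; adjust to the solver stack you actually use.
ALLOWED_MODULES = {"gurobipy", "math", "itertools", "collections"}
BLOCKED_CALLS = {"eval", "exec", "compile", "open", "__import__", "input"}


def find_violations(code: str) -> list[str]:
    """Return reasons to reject the generated code (an empty list if none are found)."""
    try:
        tree = ast.parse(code)
    except SyntaxError as exc:
        return [f"syntax error: {exc.msg} (line {exc.lineno})"]

    problems = []
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            modules = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            modules = [node.module or ""]
        else:
            modules = []
        for name in modules:
            # Compare only the top-level package, e.g. "os" for "os.path".
            if name.split(".")[0] not in ALLOWED_MODULES:
                problems.append(f"import of '{name}' is not allowed")
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in BLOCKED_CALLS
        ):
            problems.append(f"call to '{node.func.id}' is not allowed")
    return problems
```

The code is only parsed, never imported or executed, so the check itself is safe to run on untrusted output and does not need the solver installed.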

Deployment considerations for practitioners

If you plan to evaluate or use OptiMind in a research or production context, here are pragmatic recommendations:

- Keep a human expert in the loop. Always have an OR practitioner review generated formulations before execution, particularly in production scenarios. The model card emphasizes this. (huggingface.co)

- Use solver feedback as a gate. Automate a verification pipeline that executes generated scripts in a sandboxed environment, captures solver logs, and checks for logical correctness (feasibility, constraint satisfaction, objective sanity) before any downstream action. OptiMind is designed to support such a solver‑in‑the‑loop flow; use it.

- Plan for solver portability. If you cannot rely on a commercial solver like Gurobi, develop adapter layers (e.g., generate Pyomo or CVXPY code as alternatives) and extend the hint library to reflect solver‑specific quirks and performance characteristics. The community contributions promised by the authors (cleaned benchmarks and tooling) make this tractable. (huggingface.co)

- Audit and provenance. Log model prompts, retrieved hints, generated math, and solver outputs. This traceability is essential for incident analysis, auditing decisions, and regulatory compliance when optimization outputs affect real assets or people.

- Hardware and cost planning. For inference the Hugging Face model card recommends ≥32GB of GPU VRAM for comfortable usage, and training used multi‑GPU clusters (8×B200 for training, 8×H100 for evaluation in the reference setup). Plan capacity accordingly. (huggingface.co)
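
The audit-and-provenance recommendation above can be made concrete with a small append-only log. The sketch below is one possible shape, not something prescribed by the model card: the `FormulationRecord` fields, the JSON Lines format, and the status strings are all assumptions.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class FormulationRecord:
    """One generated formulation plus the context needed to audit it later."""

    prompt: str
    hints: list[str]
    generated_code: str
    solver_status: str  # e.g. "OPTIMAL", "INFEASIBLE", "ERROR"
    objective_value: Optional[float]
    reviewer: Optional[str] = None  # set once a human expert has signed off
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json_line(self) -> str:
        record = asdict(self)
        # A hash of the code lets auditors confirm which script actually ran.
        record["code_sha256"] = hashlib.sha256(
            self.generated_code.encode("utf-8")
        ).hexdigest()
        return json.dumps(record, sort_keys=True)


def append_record(path: str, record: FormulationRecord) -> None:
    """Append one record to a JSON Lines audit log."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(record.to_json_line() + "\n")
```

Keeping `reviewer` empty until sign-off gives downstream systems a simple field to gate on: no reviewer recorded, no deployment.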

Research critique and evaluation of the methodology

OptiMind’s central methodological contribution, explicitly encoding expert failure modes as hints and using them both for label regeneration and test‑time conditioning, is elegant and pragmatic. It addresses a real problem: noisy labels are not an inevitable limitation of LLMs; domain knowledge can be operationalized to create much cleaner supervision signals.

Strengths of the method:

- It reduces the need for prohibitive manual repair of massive datasets by focusing human effort on identifying systematic errors. (arxiv.org)

- It yields outputs that are easier to validate with programmatic checks (solver runs) than purely textual outputs.

Limitations and open questions:

- The approach is, by design, domain specific. Hints and cleaning rules that work for classical MILP families may not generalize to newer or hybrid modeling families (nonlinear programs, stochastic optimization, reinforcement learning style formulations) without substantial new engineering. The authors acknowledge this and invite the community to expand the method.

- Reliance on cleaned benchmarks to demonstrate large gains means reproducibility depends on the community adopting the same cleaned sets and cleaning protocols. The authors’ public release of cleaned benchmarks mitigates this risk, but independent replications will be necessary to fully validate broad claims. (huggingface.co)

- There is a research opportunity (and risk) in automating hint generation: the authors discuss future work to have frontier models propose hints, but automating expert knowledge risks introducing subtle, compounding errors unless those autogenerated hints are themselves validated by human experts and by empirical checks.

Where this fits in the ecosystem: practical use cases

OptiMind is not a general‑purpose chatbot; it is a domain‑specific LLM for optimization formulation. It fits best in workflows where:

- Teams have well‑defined NL problem descriptions and need rapid prototyping of MILPs and solver code.

- Data privacy requires local/on‑prem inference (a 20B model can be served on‑prem, unlike very large models that are available only through cloud services).

- Educational contexts need examples that link informal problem text to the exact math modeling steps.

Representative application areas include:

- Supply‑chain production planning and inventory optimization.

- Vehicle routing and last‑mile logistics.

- Scheduling problems (job‑shop, workforce scheduling).

- Resource allocation and network design prototypes.

Final assessment and recommendations

OptiMind represents a timely and important demonstration of how domain knowledge, encoded as hints and applied systematically, can make small, specialized LLMs behave like experts in narrow but high‑impact tasks. The combination of (1) publicly released artifacts (paper, model card, cleaned benchmarks), (2) a practical inference pipeline that uses solver feedback, and (3) a focus on reproducibility is notable and should accelerate follow‑on work in operational decision intelligence. (arxiv.org)

That said, practitioners should proceed cautiously:

- Do not execute generated models without expert review and sandboxed verification. The model card explicitly lists fully automated deployment with no human oversight as out of scope. (huggingface.co)

- Treat head‑to‑head claims against proprietary frontier models as contextual until you can reproduce the comparisons on the same, cleaned benchmarks.

In closing, OptiMind is an instructive case study: specialization plus structured domain knowledge produces a model that is smaller, faster to run locally, and—when used with careful verification—capable of producing much more reliable optimization formulations than unguided LLMs. The work reframes a practical question for organizations building decision systems: invest in larger models, or invest in domain knowledge and robust validation pipelines? OptiMind makes a persuasive argument for the latter.

Source: Microsoft https://www.microsoft.com/en-us/res...hink-like-optimization-experts-with-optimind/