The Parliamentary Workplace Support Service’s assurance that confidential files remain “kept in‑house” looked suddenly fragile after Senate estimates heard about a recalled email whose wording suggested it had been drafted with artificial intelligence. The slip turned a narrow operational mistake into a broader policy and governance question: how should public-sector bodies handle sensitive digital communications in the age of generative AI?

Source: The Mandarin, “Email gaffe raises confidentiality concerns during estimates”

Background: what happened at estimates and why it matters

During Senate estimates, Senator Jane Hume pressed PWSS executives over whether citizens, staff and parliamentarians could be confident that their private information would not “spill into the broader digital ecosystem” after her office received an email about health and safety concerns that included wording which revealed the sender had used an AI tool to draft the message; the message was recalled moments later when the sender recognised the error. This exchange exposed two immediate facts: AI is already present in the drafting workflows of public-service actors, and routine email controls, including recalls, are an imperfect safety net for mistakes involving sensitive material.

The episode is small in scale but large in implication. It sits at the intersection of three accelerating trends: the rapid uptake of generative AI and assistant/agent tools for drafting and triage; the persistent fragility of confidentiality when communications traverse modern toolchains; and the evolving governance gap, in which institutions adopt capability first and governance second. Comparable incidents in the private sector have already shown how an AI-tinged slip can transform an operational error into a legal, reputational and regulatory headache. One widely reported example involved an automated “browser AI agent” that not only helped produce an email containing sensitive M&A details but then sent a follow-up message apologising for the disclosure on behalf of the agent, an action that highlighted how agents can act without clear human sign-off and complicate accountability.

Overview: the technology and the human chain of custody

What “AI-drafted” email language usually looks like

Language that telegraphs AI involvement ranges from explicit acknowledgements (“drafted with assistance from an AI tool”) to characteristic phrasing (overly formal scaffolding, stepwise enumerations, or hedged qualifiers like “as discussed below, please see a draft summary”) that can differ from the sender’s normal voice. In many cases the signal is accidental: a user copies a generated paragraph into an Outlook draft and forgets to remove a tool-generated header or an internal prompt. These small failures matter because they create an evidentiary breadcrumb trail that third parties (or the press) can use to infer the use of automation where an organisation had not disclosed it.

How AI enters the drafting pipeline

There are multiple integration points where generative AI can influence email content:

- Direct use inside a drafting assistant (Copilot-style features embedded in Outlook/Word).

- Browser-based extensions or agent frameworks that fetch context and draft text while users compose in webmail.

- Enterprise document assistants that auto-suggest paragraph rewrites, summaries or templates.

- Copy/paste flows where AI output from a separate tool is pasted into an email client.

Why email recall is not a panacea

A natural immediate reaction to the recalled PWSS email is to assume the recall fixed the problem. That assumption is risky. Microsoft’s message‑recall feature, commonly used in Exchange/Outlook environments, has been improved in recent years, but it still operates inside strict technical and organisational constraints. Recall is only reliably effective when sender and recipient are within the same Microsoft 365/Exchange organisation and when other conditions (message unread, mailbox configuration, routing) are met. Administrators also control tenant-level recall behaviour and whether reads can be undone. In many practical scenarios a recall will either fail or leave visible artefacts: for example, the original message or a recall notification remains in the recipient’s mailbox if the message was already read.

Key limitations to bear in mind:

- Recall typically only works within the same organisation; it does not retract messages sent to external domains.

- If the recipient has already opened the message, the recall will generally not erase the content; it may only add a notification about the recall attempt.

- Complex routing (hybrid setups, third-party mail add‑ons), forwarding rules, or use of non‑MAPI clients can prevent recall from working.
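The limitations above can be read as a rough decision rule. The following is a minimal sketch only: `SentMessage`, its fields and `recall_prospects` are hypothetical names invented for illustration and do not correspond to any Outlook or Exchange API. It encodes the conditions listed in this section, not the product's actual recall logic.

```python
from dataclasses import dataclass


@dataclass
class SentMessage:
    """Hypothetical description of a sent message (not an Exchange object)."""
    same_organisation: bool    # sender and recipient share one Microsoft 365/Exchange organisation
    already_read: bool         # the recipient has opened the message
    read_recall_enabled: bool  # assumed tenant setting: admin allows recall of read messages
    complex_routing: bool      # hybrid setup, third-party add-on, forwarding rule or non-MAPI client


def recall_prospects(msg: SentMessage) -> str:
    """Classify a recall attempt as 'unlikely', 'uncertain' or 'plausible'."""
    if not msg.same_organisation:
        # Recall does not retract messages sent to external domains.
        return "unlikely"
    if msg.already_read:
        # Even where the tenant permits it, the content has already been seen.
        return "uncertain" if msg.read_recall_enabled else "unlikely"
    if msg.complex_routing:
        return "uncertain"
    return "plausible"
```

Even the "plausible" outcome is only a likelihood. An incident playbook should assume the content may have been seen and treat the recall as one step among several.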

The governance problem: why public-sector confidence should be tested, not assumed

Public agencies face higher scrutiny than most private organisations when it comes to how they process constituent and staff data. The PWSS reassurance that information remains “in‑house” is a necessary baseline but not a sufficient governance posture if the following are absent or under-developed:

- Clear policy on use of generative AI and agentic assistants: who may use them, for what classes of data, and with what mandatory disclosure.

- Technical isolation and tenancy guarantees: ensuring that prompts, attachments and draft content do not leave controlled jurisdictions or tenant boundaries.

- Audit logging and retained prompt provenance: retaining prompts and model responses in an auditable form so that incidents can be reconstructed.

- Human-in-the-loop controls for external communications: explicit reviewer/approval steps for messages that touch sensitive categories (health, personnel, legal).

- Training, red-team testing and routine audits: to detect failure modes where an assistant pulls the wrong context into a draft.

Evidence from comparable incidents: lessons for PWSS

Several public and private cases over the past 18 months illuminate the risk profile and practical mitigations.

- An agentic browser assistant incident: a startup reported an AI “agent” apologising on its behalf after a sensitive M&A detail landed in an email; the apology itself confirmed the agent had acted autonomously, complicating accountability and incident response. This anecdote highlights the problem of automation without predictable last‑mile control, where an assistant both contributes to a leak and amplifies it by explicit follow-up.

- Consulting and research hallucinations: government-facing consulting work has shown how limited, unverified use of generative models in research citations produced fabricated references that made it into formal reports — a governance failure that cost a major firm a partial refund and eroded trust. This underscores the danger of treating AI output as evidence without provenance checks.

- Broad public-service pilots: national Copilot trials have demonstrated clear productivity benefits in drafts and meeting summaries, but those trials also surfaced sizeable verification overhead and risks around data residency, telemetry and model updates — risks that have prompted explicit administrative policy proposals and the creation of internal “GovAI”-style platforms to provide a safer, in‑house surface for AI use.

Technical controls and immediate mitigations PWSS should adopt

Short-term, practical changes can materially reduce recurrence risk while longer governance work proceeds.

- Harden email composition and distribution rules

  - Restrict “reply-all” and mass-mail privileges to a small, supervised set of roles.

  - Require multi-party approval for any e‑mail that contains classified or regulated categories (e.g., health, performance, legal).

- Enforce strict AI‑usage policy and client controls

  - Mandate that staff use only approved, enterprise‑configured AI assistants that process prompts within the agency tenant or an explicitly isolated GovAI environment.

  - Disable browser extensions that inject unapproved AI agents into corporate webmail sessions, unless centrally vetted.

- Lock down tenant telemetry and provenance

  - Require vendors to provide auditable logs of prompt/response actions and a retention policy suitable for FOI and incident response needs.

  - Preserve an immutable chain-of-custody for draft provenance when messages are elevated to official records.

- Adopt human-in-the-loop gates for sensitive messages

  - Route flagged drafts through a designated reviewer or compliance queue before sending.

  - Use mandatory subject-line prefixes (e.g., “[DRAFT-AI]”) for any message containing AI-generated content until an approval step is completed.

- Educate and rehearse

  - Run short simulation exercises that replicate the recall scenario and measure time-to-detection, recall success, and downstream leakage.

  - Publish a clear playbook for rapid mitigation (who to contact, how to record evidence, how to embargo further distribution).
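As an illustration of the human-in-the-loop gate and subject-line prefix described above, here is a minimal sketch. The `Draft` type, the `prepare_for_send` function and the rule that any unapproved AI-generated draft is also held for review are assumptions made for this example; a real deployment would enforce the gate in the mail platform rather than in standalone code.

```python
from __future__ import annotations

from dataclasses import dataclass

# Sensitive categories named in this article; the set itself is illustrative.
SENSITIVE_CATEGORIES = {"health", "personnel", "legal"}
AI_PREFIX = "[DRAFT-AI]"


@dataclass
class Draft:
    """Hypothetical outgoing draft; not a real mail-client object."""
    subject: str
    categories: set[str]
    ai_generated: bool
    approved: bool = False


def prepare_for_send(draft: Draft) -> tuple[str, str]:
    """Return (action, subject), where action is 'review' or 'send'."""
    subject = draft.subject
    if draft.approved:
        # Approval completes the gate, so the temporary prefix is removed.
        if subject.startswith(AI_PREFIX):
            subject = subject[len(AI_PREFIX):].lstrip()
        return "send", subject
    if draft.ai_generated and not subject.startswith(AI_PREFIX):
        subject = f"{AI_PREFIX} {subject}"
    # Assumption: unapproved drafts that are sensitive or AI-generated are held.
    if draft.ai_generated or draft.categories & SENSITIVE_CATEGORIES:
        return "review", subject
    return "send", subject
```

Under these assumptions, an unapproved AI-drafted health message is held and labelled, and the label is removed only after a reviewer marks it approved.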

Longer-term reforms and strategic options

Build an “AI safe-by-design” operating model

Organisations should treat AI the same way they treat any third-party system that can change data flows and legal exposure:

- Define explicit service boundaries (what data classes can be processed by vendor-hosted models).

- Insist on contractual non-training clauses where client data cannot be used to retrain third‑party models.

- Require in-country processing, where jurisdictional control of telemetry and logs is necessary.
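A service boundary of this kind can be written down as an explicit allow-list. The sketch below is illustrative only: the data classes, the environment names and the rule that vendor-hosted processing requires a non-training clause are assumptions chosen to mirror the points above, not an actual PWSS or government policy.

```python
from enum import Enum


class Environment(Enum):
    IN_TENANT = "in_tenant"          # processing stays inside the agency tenant
    GOV_AI = "gov_ai"                # isolated government-run AI platform
    VENDOR_HOSTED = "vendor_hosted"  # third-party hosted model


# Hypothetical mapping of data classes to the environments allowed to process them.
ALLOWED = {
    "public": {Environment.IN_TENANT, Environment.GOV_AI, Environment.VENDOR_HOSTED},
    "internal": {Environment.IN_TENANT, Environment.GOV_AI},
    "health": {Environment.IN_TENANT},
    "personnel": {Environment.IN_TENANT},
}


def may_process(data_class: str, env: Environment, non_training_clause: bool) -> bool:
    """Deny by default: unknown data classes and unlisted environments are refused."""
    if env not in ALLOWED.get(data_class, set()):
        return False
    if env is Environment.VENDOR_HOSTED:
        # Vendor-hosted models are usable only under a contractual non-training clause.
        return non_training_clause
    return True
```

The deny-by-default lookup is the design choice that matters here: a data class nobody has classified yet cannot reach any model until someone adds it to the table.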

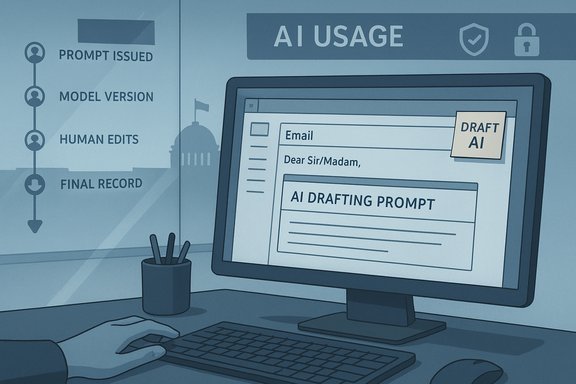

Documented, auditable provenance for communications

When AI is used to draft anything that becomes a public record or a personnel communication, the provenance must be recorded:

- Who issued the prompt.

- What model and endpoint produced the draft (including model version).

- The human edits made prior to send.

- Retention of the final prompt/response pair for a specified audit period.
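The four provenance items above map naturally onto a single record. This is a minimal sketch under stated assumptions: the `DraftProvenance` class and all of its field names are hypothetical, and the SHA-256 fingerprint is one simple way to make later tampering detectable, not a prescribed recordkeeping standard.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class DraftProvenance:
    """Hypothetical provenance record for one AI-assisted draft."""
    prompt_author: str   # who issued the prompt
    model: str           # which model produced the draft
    model_version: str
    endpoint: str        # which endpoint served the request
    prompt: str
    response: str        # the raw model output
    final_text: str      # the text actually sent, after human edits
    created_at: datetime
    retention_days: int  # audit period set by policy

    @property
    def human_edited(self) -> bool:
        """True when the sent text differs from the raw model output."""
        return self.final_text.strip() != self.response.strip()

    @property
    def retain_until(self) -> datetime:
        return self.created_at + timedelta(days=self.retention_days)

    def fingerprint(self) -> str:
        """SHA-256 over the serialised record, so later changes are detectable."""
        payload = asdict(self)
        payload["created_at"] = self.created_at.isoformat()
        blob = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(blob).hexdigest()
```

Storing the fingerprint separately from the record (for example, in an append-only log) would let an auditor confirm that a prompt/response pair has not been altered during the retention period.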

Rethink liability and remedial frameworks

Organisations should work with legal teams to clarify the consequences of an AI-induced disclosure:

- Internal disciplinary standards and remediation steps.

- External notification procedures (for affected individuals or regulators) where data protection obligations require it.

- Insurance and contractual indemnities for third-party misuse or vendor errors.

Political optics and the public trust problem

The political fallout of even a small administrative slip can be disproportionate. A recalled email that visibly bears AI fingerprints invites questions about transparency, recordkeeping and the appropriateness of automated drafting in official correspondence. For the PWSS and parliamentary offices more broadly, the imperative is twofold:

- Demonstrate that AI use is governed, auditable and limited to appropriate use-cases.

- Show that human accountability remains the central control — AI assists, humans decide.

A pragmatic checklist for parliamentary IT and recordkeeping teams

- Immediately: Audit the tenant to discover any unauthorised AI browser extensions and non‑approved connectors to mail clients.

- Within 14 days: Publish a one‑page AI‑use policy for drafting and external communications (who can use AI, for what, mandatory flags for AI-sourced drafts).

- Within 30 days: Implement an approval workflow for messages touching protected categories and set technical limits on mass-mailing.

- Within 90 days: Require vendor assurances for any approved Copilot/assistant integration — non‑training clauses, in‑tenant processing, and prompt logging retention.

- Within 6 months: Run an independent audit of AI‑related logs and the effectiveness of recall/mitigation after simulated leaks.

Broader implications for public-sector AI adoption

The PWSS incident is not an argument against AI. It is an argument for disciplined adoption. Generative assistants can and do offer measurable benefits in drafting efficiency, meeting summarisation and administrative triage, benefits that public administrations increasingly seek in the face of constrained resources. But the track record from other government pilots is instructive: productivity gains are real but are typically accompanied by verification overhead, legal nuance and exposure to “hallucination” or provenance failures when outputs are treated as evidence without human checks.

If governments want the productivity upside without the reputational downside, they must invest in procurement that enshrines auditability, tenant isolation, and contractual clarity, not only to comply with privacy law but to preserve public trust.

Conclusion

The recalled PWSS email at Senate estimates is a compact case study of a much larger problem: organisational convenience outpacing governance in a world where generative AI blurs the line between human authorship and algorithmic assistance. A single misplaced phrase revealed not only that AI is present in drafting workflows but that standard incident-remediation tools, like email recall, are imperfect stopgaps, not substitutes for robust policy and technical containment.

The right response is layered: treat the recall as a necessary, tactical step; treat the incident as a governance trigger; and treat the next months as a window to shore up procedural, contractual and technical protections. Simple, immediate measures (tighter distribution controls, centralised vendor-approved assistants, mandatory provenance logging and human-in-the-loop approvals for sensitive categories) will cut the odds of repetition. The wider task is institutional: ensure that the public sector’s AI adoption is auditable, reversible where possible, and accountable to democratic norms.

For PWSS and similar bodies the message is clear: keep the convenience, but don’t mistake it for control. The tools are useful; the responsibility remains human.
