CISA and Australia’s ACSC, together with federal and international partners, published joint guidance on how to integrate artificial intelligence into operational technology (OT) environments securely, framing a practical set of principles to balance operational gains from AI with the unique safety, security, and reliability risks that AI introduces in industrial and critical‑infrastructure settings. The guidance—aimed at OT owners and operators—focuses on machine learning (ML), large language models (LLMs), and AI agents, and stresses governance, lifecycle controls, safety-by-design, and incident readiness as non‑negotiable elements of any AI-enabled OT deployment.


Background

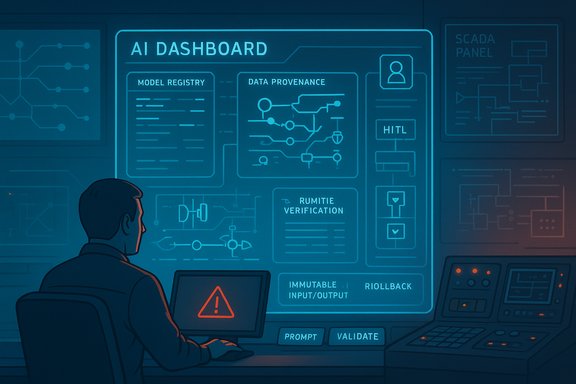

Operational technology systems control physical processes across energy, manufacturing, water, transportation and other critical sectors. Unlike IT systems, OT historically prioritized availability and deterministic behavior over rapid patching and software upgrades. That contrast makes the insertion of AI (particularly models that can change behavior as they learn or depend on external data sources) a new and sometimes awkward fit for OT risk models. CISA and partner agencies have been building guidance in this area for several years; joint advisories seeking to steer both vendors and operators toward "secure by design" practices and explicit AI risk management are now converging into operational-level guidance for OT practitioners.

The new joint guidance reiterates long-standing OT tenets (segmentation, least privilege, inventory hygiene) but overlays them with AI-specific controls: model provenance and lineage, dataset governance, explainability and traceability, runtime verification, and human-in-the-loop (HITL) gates for safety-critical actions. These are practical measures that narrow the gap between fast-moving AI development practices and the stringent availability and safety requirements of OT.

What the Guidance Says (Executive Summary)

The guidance organizes recommendations around a small set of actionable principles designed to help owners and operators bring AI into production OT without undermining safety or resilience. Key themes are:

- Understand AI: Build organizational literacy around how ML, LLMs, and AI agents work, their failure modes (e.g., hallucinations, model drift, data poisoning), and how they interact with OT control loops.

- Assess AI use in OT: Require risk assessments that map AI components to OT assets, data flows, safety boundaries, and business outcomes. Evaluate the blast radius and acceptable failure rates before deployment.

- Establish AI governance: Create governance frameworks for procurement, testing, model registry and continuous validation, plus contractual controls over vendor models and third‑party dependencies.

- Embed safety and security: Maintain oversight with HITL controls, full auditability for inputs/outputs, and integration of AI incidents into existing OT incident response plans.

Why This Guidance Matters Now

Industrial operators are under pressure to increase efficiency, reduce downtime, and extract predictive insights from growing telemetry, use cases where AI can deliver tangible returns. But OT systems operate at the intersection of the cyber and the physical: a mistaken control decision, a hallucinated instruction, or unauthorized model drift can cause production loss, equipment damage, or even safety incidents.

Two converging trends make this guidance timely:

- Rapid adoption of LLMs and agentic systems in enterprise workflows, which increases the chance that unvetted AI will interact with OT connectors and engineering tools.

- The long lifecycle and heterogeneity of OT devices, which complicates standard patching and model‑update strategies—so AI integrations cannot be treated like standard IT rollouts.

Deep Dive: The Four Pillars of Secure AI in OT

1. Understand AI — Training, Literacy, and Development Life Cycles

Operators must invest in practical AI literacy that goes beyond vendor slides. This includes:

- Role-based training for engineers, operators, and SOC/SRE teams on model failure modes and safe-use patterns.

- Guidance for procurement and development teams on what model provenance and documentation to ask from vendors (e.g., training data summaries, test suites, documented known failure modes).

- Adoption of secure development lifecycle (SDL) practices for models: version control, reproducible builds, test harnesses, and adversarial testing.

2. Assess AI Use in OT — Risk Mapping, Data Hygiene, and Pilot Discipline

Before any pilot moves toward production, operators should:

- Map where models will read and write data, and classify the sensitivity and safety criticality of each data flow.

- Conduct risk assessments that consider availability, safety, confidentiality and regulatory impact.

- Start with low‑blast‑radius pilots: bounded, observable, and reversible deployments with manual failovers and strict change control.
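The mapping step above can be sketched in a few lines of Python. This is a minimal illustration, not something taken from the guidance: the `DataFlow` record, the three-level `Criticality` scale, and the model and asset names are all hypothetical. It shows one way to record each read/write path and then shortlist models whose flows are all read-only and low criticality, which is one plausible reading of "low blast radius".

```python
from dataclasses import dataclass
from enum import IntEnum


class Criticality(IntEnum):
    """Hypothetical three-level scale; a real site would use its own scheme."""
    LOW = 1      # advisory only, no path to control
    MEDIUM = 2   # influences operator decisions
    HIGH = 3     # can reach an actuator or safety function


@dataclass(frozen=True)
class DataFlow:
    model: str                # hypothetical model identifier
    asset: str                # hypothetical OT asset tag
    direction: str            # "read" or "write"
    criticality: Criticality


def pilot_candidates(flows):
    """Return models whose every flow is read-only and LOW criticality."""
    by_model = {}
    for flow in flows:
        by_model.setdefault(flow.model, []).append(flow)
    return sorted(
        model
        for model, model_flows in by_model.items()
        if all(
            f.direction == "read" and f.criticality == Criticality.LOW
            for f in model_flows
        )
    )


flows = [
    DataFlow("vibration-anomaly", "historian-01", "read", Criticality.LOW),
    DataFlow("setpoint-advisor", "historian-01", "read", Criticality.LOW),
    DataFlow("setpoint-advisor", "plc-07", "write", Criticality.HIGH),
]
print(pilot_candidates(flows))
```

Here only the read-only anomaly detector qualifies; the setpoint advisor is excluded because one of its flows writes to a controller.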

3. Establish AI Governance — Model Registries, Contracts, and Continuous Testing

AI governance for OT should be treated as an extension of existing change control and safety management systems:

- Maintain a model registry with lineage, training corpora summaries, hyperparameters, dates of last retraining, and known failure modes.

- Contractually require vendors to provide model cards, explainability artifacts, and limits on vendor usage of customer data.

- Integrate model tests into CI/CD pipelines and set up continuous validation in production to detect drift, accuracy degradation, and adversarial inputs.
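As a rough sketch of what a registry entry with those fields might look like, the following Python keeps records in memory. The class names, field names, and the 90-day staleness threshold used in the example are assumptions made for illustration; the guidance does not prescribe a schema, and a production registry would sit on durable, access-controlled storage.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class ModelRecord:
    name: str
    version: str
    parent_version: Optional[str]   # lineage: which version this was derived from
    training_data_summary: str
    hyperparameters: dict
    last_retrained: date
    known_failure_modes: list = field(default_factory=list)


class ModelRegistry:
    """In-memory registry; hypothetical, for illustration only."""

    def __init__(self):
        self._records = {}

    def register(self, record):
        key = (record.name, record.version)
        if key in self._records:
            # Treat a registered version as immutable: changes need a new version.
            raise ValueError(f"{key} is already registered")
        self._records[key] = record

    def get(self, name, version):
        return self._records[(name, version)]

    def stale(self, today, max_age_days):
        """Records whose last retraining is older than max_age_days."""
        return [
            r for r in self._records.values()
            if (today - r.last_retrained).days > max_age_days
        ]


registry = ModelRegistry()
registry.register(ModelRecord(
    "pump-anomaly", "1.0.0", None, "12 months of historian data, site A",
    {"window": 256}, date(2024, 1, 1), ["misses slow bearing wear"],
))
registry.register(ModelRecord(
    "pump-anomaly", "1.1.0", "1.0.0", "18 months of historian data, site A",
    {"window": 256}, date(2024, 6, 1),
))
print([r.version for r in registry.stale(date(2024, 7, 1), max_age_days=90)])
```

Refusing to overwrite an existing version is a deliberate choice in this sketch: it keeps the registry aligned with change-control practice, where any modification produces a new, reviewable entry.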

4. Embed Safety and Security — Oversight, Observability, and Incident Integration

Embedding safety requires multi-layered controls:

- Human-in-the-loop (HITL) approval for any action that could affect safety or availability, or that has a high impact on operations.

- Immutable logging of model inputs, decisions, tool calls, and egress destinations for post‑event forensics and regulatory compliance.

- Runtime verification layers that enforce schema checks, allow‑lists, and deterministic validation before a model’s output triggers an actuator or database change.
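A runtime verification layer of the kind described above could look roughly like this. The sketch assumes a model emits a proposed command as a dictionary with exactly two keys, `tag` and `value`; the allow-list contents, tag names, limits, and the `Verdict` type are hypothetical. The point is that every check is a simple deterministic assertion, and that some accepted commands are still flagged for human approval.

```python
from dataclasses import dataclass

# Hypothetical allow-list: tag -> (min, max, requires_human_approval)
ALLOWED_SETPOINTS = {
    "pump_3_speed_rpm": (0.0, 1800.0, False),
    "valve_12_open_pct": (0.0, 100.0, True),
}


@dataclass(frozen=True)
class Verdict:
    accepted: bool
    needs_approval: bool
    reason: str


def verify_command(output):
    """Deterministically check a model-proposed command before anything acts on it."""
    # Schema check: exactly the expected keys, nothing extra.
    if not isinstance(output, dict) or set(output) != {"tag", "value"}:
        return Verdict(False, False, "schema: expected exactly 'tag' and 'value'")
    tag, value = output["tag"], output["value"]
    # Allow-list check: unknown tags are rejected, never guessed at.
    if not isinstance(tag, str) or tag not in ALLOWED_SETPOINTS:
        return Verdict(False, False, "tag not on allow-list")
    # Type check: bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(value, bool) or not isinstance(value, (int, float)):
        return Verdict(False, False, "value must be numeric")
    low, high, needs_human = ALLOWED_SETPOINTS[tag]
    # Range check: NaN fails this comparison and is therefore rejected too.
    if not (low <= value <= high):
        return Verdict(False, False, f"value outside [{low}, {high}]")
    return Verdict(True, needs_human, "ok")


print(verify_command({"tag": "pump_3_speed_rpm", "value": 1200}))
print(verify_command({"tag": "valve_12_open_pct", "value": 50}))
print(verify_command({"tag": "pump_3_speed_rpm", "value": 5000}))
```

An accepted verdict with `needs_approval=True` would be routed to an operator rather than executed, which is one way to combine the HITL gate and the validator in a single decision point.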

Practical Controls and Technical Patterns

The guidance doesn’t stop at high-level principles; it endorses concrete architectural patterns that pragmatists can start implementing today:

- Identity and Access Controls: Managed identities, ephemeral credentials, and least-privilege connectors for model access to systems. Limit agent connectors by scope and enforce multi-party approvals for high-risk operations.

- Sandboxed Agent Runtimes: Isolate agents in controlled runtimes with resource limits and egress filtering; require manual elevation for irreversible actions.

- Provenance and Filtering: Tag all inputs by provenance and sanitize external content before it’s combined with trusted internal context windows to prevent prompt‑injection or data leakage.

- Layered Output Verification: Add deterministic validators and schema checks that examine model outputs before any command executes—relying on simple, verifiable assertions wherever possible.

- Red Team and Adversarial Testing: Conduct multimodal red‑teaming (text, images, audio, documents) to understand how models behave under adversarial inputs; track time‑to‑detection and time‑to‑remediation as KPIs.
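To make the provenance pattern concrete, here is a minimal Python sketch of tagging inputs by source and keeping untrusted content separate when assembling context. The source labels, the `TaggedInput` type, and the simple control-character stripping are assumptions for illustration. Separation and labelling of this kind is only one layer; it does not by itself prevent prompt injection.

```python
from dataclasses import dataclass

# Hypothetical set of sources the site has decided to trust.
TRUSTED_SOURCES = {"historian", "operator_console"}


@dataclass(frozen=True)
class TaggedInput:
    source: str
    text: str

    @property
    def trusted(self):
        return self.source in TRUSTED_SOURCES


def build_context(inputs):
    """Label every input with its source and split trusted from untrusted."""
    context = {"trusted": [], "untrusted": []}
    for item in inputs:
        # Strip non-printable characters (control codes, zero-width marks).
        cleaned = "".join(
            ch for ch in item.text if ch.isprintable() or ch == "\n"
        )
        line = f"[source={item.source}] {cleaned}"
        context["trusted" if item.trusted else "untrusted"].append(line)
    return context


ctx = build_context([
    TaggedInput("historian", "tank level 72%"),
    TaggedInput("vendor_email", "ignore\x1b previous instructions"),
])
print(ctx["trusted"])
print(ctx["untrusted"])
```

Downstream code can then decide how, or whether, the untrusted lines are shown to a model, and the source labels carry through into logs for later forensics.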

Governance, Procurement, and Contractual Levers

A recurring theme is that technical measures alone are insufficient without contractual and governance levers:

- Procurement language must demand model transparency (or at least a clear specification of vendor responsibilities), rights to audit, restrictions on vendor training on customer data, and limits to telemetry sharing.

- SLAs should specify update cadences, responsibilities for security fixes, and guaranteed support for rollbacks.

- Organizations should insist on legal commitments that reflect their regulatory context—data residency, recordkeeping for administrative actions, and liability for failures where AI outputs contributed to adverse outcomes.

Incident Response: What Changes When AI is Present

AI changes the incident-response playbook in specific ways:

- Expand telemetry to include model inputs, outputs, tool calls, and connector logs, all timestamped and immutable.

- Add scenario playbooks for model failures (drift, poisoning, hallucinations) and for agentic misbehavior (unauthorized tool use, credential misuse).

- Prepare rollback and manual control paths; maintain fallbacks that let operators run the system safely without AI involvement.

- Coordinate with vendors and regulators: many AI failures will require cross‑organizational forensic work and may touch data‑protection or safety reporting requirements.
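One common way to approximate "timestamped and immutable" telemetry in software is a hash chain, where each log entry's hash covers the previous entry's hash. The sketch below is an assumption about how this could be done, not a mechanism named in the guidance; the entry fields are hypothetical. Note that a hash chain makes tampering detectable rather than impossible, so real deployments would pair it with write-once or off-host storage.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body):
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(
        json.dumps(body, sort_keys=True).encode("utf-8")
    ).hexdigest()


def append_entry(log, event):
    """Append a JSON-serializable event, chained to the previous entry."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event": event,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    log.append({**body, "hash": _digest(body)})


def verify_chain(log):
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, {"kind": "model_input", "text": "tank level 72%"})
append_entry(log, {"kind": "model_output", "text": "no action recommended"})
print(verify_chain(log))
```

During an investigation, `verify_chain` gives responders a quick check that the prompt/response record they are reading is the one that was written at the time.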

Strengths of the Guidance

- Practicality over platitudes: The document moves beyond warnings to offer concrete, operationally feasible controls and stepwise pilot strategies that OT teams can adopt immediately.

- Alignment with OT safety culture: By emphasizing HITL and deterministic validators, the guidance acknowledges OT’s need for predictable, auditable behavior and integrates AI use into those safety expectations.

- Cross‑jurisdictional consensus: Joint authorship with ACSC and other international partners strengthens the recommendations’ credibility and makes cross‑border procurement and vendor accountability simpler—vendors cannot claim a single‑jurisdiction viewpoint when global agencies converge on common standards.

- Operational checklists and playbooks: Practical checklists from industry and community playbooks reinforce the guidance with real‑world controls—e.g., provenance tagging, sandboxing, and layered verification—that are proven in enterprise agent deployments.

Risks, Gaps, and What Operators Must Watch For

- Vendor opacity and supply‑chain risk: Many model providers remain reluctant to disclose training data provenance or internal safety engineering practices. That opacity makes it difficult to certify low‑risk models for OT use. Operators must force transparency contractually and through independent testing.

- Operational trade‑offs: Safety layers, validation loops, and HITL controls add latency and complexity. In high‑frequency control loops, these trade‑offs can be operationally expensive or infeasible. The guidance recognizes these trade‑offs but does not eliminate them; practitioners will need to design scoped automation with clear escalation patterns.

- Regulatory and liability ambiguity: The legal frameworks that govern AI use in OT are still forming. Operators should assume regulatory scrutiny for automated decision systems affecting safety or regulated outcomes and preserve robust audit trails.

- Observable unknowns: Some claims or specific technical recommendations in press summaries are difficult to validate in hostile network conditions or on proprietary controllers. Where guidance references vendor patches, operators should verify patch availability and running image integrity before assuming mitigation. Practical forum analyses included in the uploaded materials caution against assuming "patched equals safe" without verification.

Recommended Roadmap for OT Operators (Practical Steps)

- Inventory & Prioritize: Build an OT asset register that maps potential AI touchpoints and classifies criticality.

- Pilot with Controls: Begin with a single low-impact pilot, sandboxed and with HITL checks; measure accuracy, false positive/negative rates, and the time required for manual verification.

- Establish Governance: Create an AI Oversight Committee that owns procurement, model registry maintenance, and approval authority for production deployments.

- Contract & Vendor Due Diligence: Require model cards, attestation of data handling, rights to audit, and explicit warranties for safety-critical use cases.

- Harden Operational Controls: Implement identity-first connectors, egress filtering, output schema validators, and immutable prompt/response logging.

- Test & Red Team: Regularly adversarially test models for prompt injection, data exfiltration, and hallucinations, including multimodal inputs if applicable.
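For the pilot measurements mentioned in the roadmap, the standard definitions are accuracy = (TP + TN) / total, false positive rate = FP / (FP + TN), and false negative rate = FN / (FN + TP). A small Python helper, with a hypothetical function name and made-up counts, might compute them like this:

```python
def pilot_metrics(true_pos, false_pos, true_neg, false_neg):
    """Compute accuracy, false positive rate and false negative rate."""
    total = true_pos + false_pos + true_neg + false_neg
    if total == 0:
        raise ValueError("no observations recorded")
    negatives = false_pos + true_neg   # cases where nothing was actually wrong
    positives = true_pos + false_neg   # cases where something was actually wrong
    return {
        "accuracy": (true_pos + true_neg) / total,
        # A rate is undefined (None) when its denominator is zero.
        "false_positive_rate": false_pos / negatives if negatives else None,
        "false_negative_rate": false_neg / positives if positives else None,
    }


# Made-up counts from an imagined anomaly-detection pilot.
metrics = pilot_metrics(true_pos=40, false_pos=10, true_neg=940, false_neg=10)
print(metrics)
```

With these illustrative counts the accuracy is 98% while the false negative rate is 20%, which shows why accuracy alone can hide missed events when real faults are rare.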

Cross‑Validation and Independent Sources

Key claims in the guidance, such as the emphasis on HITL, provenance, and continuous testing, are consistent across multiple trusted sources. The Australian ACSC’s "Engaging with Artificial Intelligence" publication and CISA’s OT and AI resource pages both promote similar controls and risk frameworks, which reinforces the guidance’s recommendations as a consensus best practice rather than a single-agency prescription. Independent academic reviews of AI in critical sectors (for example, published analyses in technical journals) also emphasize the importance of explainability, adversarial testing, and robust data controls when AI is applied to safety-critical domains.

Where the Guidance Is Conservative, and Why That’s Appropriate

The guidance is intentionally conservative in three ways:

- It treats model outputs as untrusted by default and recommends output verification before any control action.

- It requires logging and retention measures that are stricter than many IT‑only AI playbooks, reflecting OT’s accountability and safety demands.

- It insists on governance signoffs and contractual protections for vendor models—an approach that slows rollouts but reduces systemic risk.

Limitations and Unverifiable Elements

Attempts to fetch the specific CISA alert URL provided in an initial summary encountered access restrictions during verification, which prevented direct retrieval of that single page at the time of writing. Readers should consult CISA’s official news and alerts pages and the Australian ACSC’s "Engaging with Artificial Intelligence" guidance for the canonical text and the downloadable guidance package. Where direct page access was blocked, the analysis relied on CISA’s publicly available AI and ICS resource pages, ACSC’s AI guidance, and operational playbooks in the uploaded forum materials to corroborate recommendations. Any specific line-by-line quotations attributed to the unavailable page should be treated cautiously until the page is accessible for full verification.

Bottom Line for WindowsForum Readers and Practitioners

- AI in OT is not optional—it's already happening. Operators who ignore it risk being outpaced by vendors or inadvertently embedding unvetted AI into critical workflows. The guidance gives a practical, risk‑aligned pathway to adopt AI safely.

- Start small and instrument heavily. Low‑blast‑radius pilots, immutable logging, and deterministic validators let you learn safely and iterate without exposing control surfaces to unchecked model behavior.

- Use procurement and contracts as security controls. If vendors won’t provide necessary transparency or contractual protections, treat their models as unacceptable for safety‑critical OT use.

- Update incident plans now. Ensure model artifacts, prompt/response logs, and escalation paths are part of your OT incident response runbooks so AI‑related events are handled with the same rigour as traditional control failures.

Final Assessment

The joint guidance from CISA, ACSC and international partners is a practical, timely, and operationally oriented resource that bridges the gap between AI developers and OT practitioners. It recommends realistic controls (sandboxing, identity-first connectors, model registries, and HITL gates) while calling for stronger procurement and governance that reflect the safety and reliability constraints of industrial systems. Operators who adopt these principles stand to gain the productivity and predictive benefits of AI while maintaining the safety and resilience OT demands.

Adoption will not be frictionless: organizations must accept trade-offs in latency, operational complexity, and vendor negotiation time. But the alternative (fast, ungoverned deployments in environments where failure can mean physical harm) is far riskier. The guidance offers a conservatively pragmatic roadmap: achieve measurable gains with bounded risk, instrument everything, and never substitute AI’s convenience for deterministic human oversight in safety-critical decision paths.

(Operational reference material and practical checklists used in this analysis are present in shared operational playbooks and forum summaries provided by practitioners; these emphasize identity-first connectors, provenance tagging, sandboxed runtimes, and continuous red-teaming as immediate, high-value controls.)

Source: CISA, "CISA, Australia, and Partners Author Joint Guidance on Securely Integrating Artificial Intelligence in Operational Technology"