The Senate quietly cleared the way this week for aides to use ChatGPT and other generative chatbots in official work — a practical leap that brings obvious productivity gains but also reopens familiar security and legal fault lines for Congress and the wider federal enterprise.

Source: The New York Times https://www.nytimes.com/2026/03/10/us/politics/us-senate-chatgpt-ai-chatbots.html

Background

The move follows months of pressure across government to make generative AI tools part of everyday workflows while simultaneously tightening controls around what those tools may see and store. For years, federal agencies and congressional offices have wrestled with a paradox: AI assistants can accelerate research, drafting, and briefing preparation, yet many of the most common consumer-grade chatbots route prompts through vendor systems that may retain or repurpose inputs. The Senate’s new internal guidance, reported by major outlets after a review of internal materials, signals an operational acceptance of that tradeoff, with caveats.

Historically, Congress has lagged some federal agencies in publishing formal AI use policies, though that trend changed in 2024–2025 as the House and several executive-branch agencies issued risk-based rules for staff. The Senate’s guidance appears to align with those broader federal developments: use allowed under policy controls, human verification required, and sensitive materials explicitly restricted. Where the Senate’s document goes beyond earlier guidance is in naming specific consumer and enterprise products approved for staff use.

What the guidance reportedly permits — and what it does not

Tools explicitly named

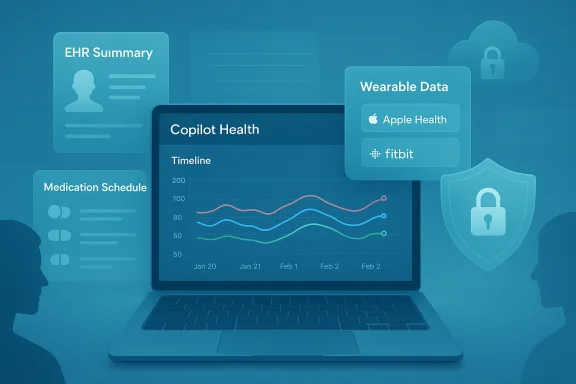

- The internal guidance lists OpenAI’s ChatGPT, Google’s Gemini, and Microsoft Copilot among the chatbots staffers may use for official tasks.

Typical, approved use cases

- Research and fact-gathering (preparatory queries, summarization of public materials)

- Drafting and editing (first drafts of memos, reports, and talking points)

- Briefing preparation (speaker notes, draft briefing slides)

- Proofreading and formatting (copy-editing and language polishing)

Explicit restrictions and caveats

- Staff are reportedly barred from feeding classified materials and certain “sensitive” categories of internal information into public, consumer-grade chatbots. The memo — as described in reporting — leaves room for limited, controlled exceptions when enterprise-grade arrangements and contractual protections are in place. Reporters who reviewed the guidance state the policy is intended to let staff use AI for routine, non-sensitive tasks while keeping classified work and sensitive contracting materials off public models.

Why the Senate changed course now

There are three practical drivers behind the shift.

- Efficiency pressure: AI assistants demonstrably cut time on routine tasks (drafting, summarizing, and searching), freeing staff to focus on policy judgment and constituent engagement. Lawmakers and chiefs of staff have increasingly asked for pragmatic, sanctioned ways to let staff use tools they already rely on informally.

- Alignment with federal adoption: Agencies from the Office of Personnel Management to line departments have been piloting and, in some cases, rolling out enterprise AI products with stronger privacy and compliance guarantees. The Senate guidance is the legislative branch’s counterpart to that trend.

- Risk normalization: Months of high-profile missteps — including a notable incident at the Department of Homeland Security where the agency’s acting cyber chief uploaded documents marked “for official use only” into a public ChatGPT instance — have sharpened the conversation about safe, governed adoption rather than prohibition. That episode highlighted the cost of informal, uncontrolled use and pushed overseers toward formalized rules instead of blanket bans.

The technical and security reality: what staff need to understand

Public versus enterprise models: a crucial distinction

Not all versions of a chatbot are equal from a security perspective. Commercial offerings typically come in at least two forms:

- Consumer/public instances: Free or paid consumer access often routes prompts and outputs through vendor-managed systems with varying retention and reuse policies.

- Enterprise / government-deployed instances: These products offer contractual commitments such as no-training clauses (vendors commit not to use customer prompts to train their base models), enterprise-grade encryption, single-tenant or workspace isolation, and administrative controls for audit and deletion.

Data flow and retention

Even when a model vendor offers a “no training” promise for enterprise customers, telemetry, abuse monitoring, logging, and metadata retention may still occur for short periods. Default consumer product settings often retain prompt/response data unless a paid privacy feature or enterprise contract is in place. That means organizations that intend to let staff use AI must pair policy with technical controls: DLP (data loss prevention) that blocks sensitive text from leaving the network, enforced use of enterprise instances, and endpoint protections.

The “hallucination” problem isn’t academic

Generative models can present incorrect facts confidently. For staffers drafting briefings, that risk translates directly into reputational and legal exposure: a legislator quoting an AI-only source in a briefing or floor statement could be misled by invented dates, citations, or legal claims. The Senate’s required human verification step is a necessary mitigation, but it is not sufficient on its own without training and auditing for accuracy.

Governance, compliance and liability: where things get tricky

Data classification and permitted uses

The central governance question is simple: what data classification levels can flow into which class of model? Without a clear mapping, routine practices will drift back toward risky behavior. The Senate guidance reportedly attempts to codify that mapping by barring classified materials from public chatbots and restricting “for official use only” (FOUO) content there, but the enforcement model will determine whether the policy actually sticks. Past incidents show that staff fall back on personal accounts when a sanctioned tool is inconvenient, so controls must be both enforceable and usable.

Vendor contracts and procurement law

Federal and congressional entities must secure contractual commitments from vendors to meet privacy, auditability, and data-residency requirements. That means moving beyond clickwrap consumer licenses to full enterprise agreements that specify:

- No-training commitments for submitted prompts

- Audit logs and exportable records

- Encryption and KMS options under customer control

- Incident response and breach notification SLAs

Legal teams should treat those contracts like any other high-risk technology procurement.
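One way a technology or legal office might track the contract terms listed above is a simple checklist record per vendor agreement. The sketch below is illustrative only: the `VendorAgreement` fields, the `missing_terms` helper, and the 72-hour notification threshold are assumptions made for this example, not terms drawn from the Senate guidance or from any vendor's actual contract.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class VendorAgreement:
    """Hypothetical summary of one enterprise AI agreement."""
    vendor: str
    no_training_commitment: bool       # prompts are not used to train base models
    exportable_audit_logs: bool        # audit logs and exportable records
    customer_managed_keys: bool        # encryption with KMS under customer control
    breach_notification_hours: Optional[int]  # None means no SLA was agreed


def missing_terms(agreement: VendorAgreement,
                  max_notification_hours: int = 72) -> List[str]:
    """Return the checklist items this agreement fails to satisfy.

    The 72-hour default is an assumed placeholder, not a legal requirement.
    """
    gaps = []
    if not agreement.no_training_commitment:
        gaps.append("no-training commitment")
    if not agreement.exportable_audit_logs:
        gaps.append("exportable audit logs")
    if not agreement.customer_managed_keys:
        gaps.append("customer-controlled KMS")
    if agreement.breach_notification_hours is None:
        gaps.append("breach notification SLA")
    elif agreement.breach_notification_hours > max_notification_hours:
        gaps.append("breach notification SLA too slow")
    return gaps
```

An empty list from `missing_terms` would mean only that the four checklist items are recorded as present; it says nothing about whether the underlying contract language is adequate, which remains a question for counsel.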

Oversight and accountability

Given the public-interest nature of congressional work, transparency and oversight are essential. Committees will likely want to know:

- Which vendor instances are in use and under what contractual terms.

- How the Sergeant at Arms (or equivalent technology office) enforces DLP and logging.

- How staff training and audits are conducted and the results of those audits.
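To make the logging question concrete, here is a minimal sketch of what one audit record for an AI interaction could look like. Everything in it is an assumption for illustration: the field names, the `build_audit_record` and `to_log_line` helpers, and in particular the choice to log SHA-256 digests rather than the prompt text itself, so the log does not become a second copy of potentially sensitive content. An office that needs full-text retention would store the content elsewhere under its own records rules.

```python
import hashlib
import json
from datetime import datetime, timezone


def _sha256(text):
    """Hex digest of the UTF-8 encoding of text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def build_audit_record(user_id, tool_instance, prompt, response, now=None):
    """Build a dictionary describing one interaction (hypothetical schema).

    Only digests and lengths are recorded, not the prompt or response text.
    """
    timestamp = now if now is not None else datetime.now(timezone.utc)
    return {
        "timestamp": timestamp.isoformat(),
        "user_id": user_id,
        "tool_instance": tool_instance,
        "prompt_sha256": _sha256(prompt),
        "response_sha256": _sha256(response),
        "prompt_chars": len(prompt),
        "response_chars": len(response),
    }


def to_log_line(record):
    """Serialize a record as one JSON line with stable key order."""
    return json.dumps(record, sort_keys=True)
```

With this design, an auditor holding a retained copy of a prompt can confirm it matches a logged interaction by recomputing the digest, without the log itself exposing the text.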

Productivity upside — and the measurable benefits

When configured correctly and used for appropriate tasks, AI assistants can deliver real productivity gains that translate into better constituent service and legislative output.

- Faster first drafts reduce iteration time on memos and policy briefs.

- Executive summaries of long reports let staffers triage material more effectively.

- Automated formatting and redaction tooling can shave hours from routine production tasks.

Practical recommendations for Senate offices (and any public-sector team)

Below are practical, prioritized steps that any office adopting chatbots for official use should take immediately.

- Require enterprise-level procurement: Only permit AI usage through enterprise contracts that include no-training clauses, audit logs, and KMS options.

- Enforce data loss prevention (DLP) on endpoints: Block or detect classified and otherwise sensitive content before it leaves the local environment.

- Implement mandatory training and certification: Staff must complete short, role-based AI safety training with periodic recertification.

- Maintain human verification and bibliographic standards: Any factual claim or quotation produced by AI must be corroborated with an authoritative human-checked source.

- Deploy logging, monitoring, and periodic audits: Keep an auditable trail of AI interactions used in official work and schedule external compliance audits.

- Map data classifications to permitted tool classes: Create and enforce a simple table, e.g., “Public web → consumer models allowed; FOUO → only enterprise-model instances with DLP; Classified → prohibited.”

- Create a rapid incident response playbook: If sensitive data is leaked or a model hallucination yields an erroneous public statement, the office must have a pre-approved correction and notification procedure.
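The classification-to-tool mapping and the DLP check recommended above can be sketched in a few lines. This is a toy illustration under stated assumptions: the `Classification` and `ToolClass` names, the regular expressions used to spot markings, and the decision to let public material flow to enterprise instances as well as consumer ones are all hypothetical choices made for this example. A production DLP system would rely on far more than marking strings, and unmarked sensitive text would pass this check undetected.

```python
import re
from enum import Enum


class Classification(Enum):
    PUBLIC = "public"
    FOUO = "fouo"
    CLASSIFIED = "classified"


class ToolClass(Enum):
    CONSUMER = "consumer"
    ENTERPRISE_WITH_DLP = "enterprise_with_dlp"


# Mirrors the example table in the text; a real office would substitute its own.
ALLOWED_TOOLS = {
    Classification.PUBLIC: {ToolClass.CONSUMER, ToolClass.ENTERPRISE_WITH_DLP},
    Classification.FOUO: {ToolClass.ENTERPRISE_WITH_DLP},
    Classification.CLASSIFIED: set(),  # prohibited everywhere
}

# Hypothetical marking patterns, checked from most to least restrictive.
# Classified markings are matched in upper case only, to avoid flagging
# ordinary prose that happens to contain the word "secret".
MARKING_PATTERNS = [
    (Classification.CLASSIFIED,
     re.compile(r"\b(TOP SECRET|SECRET|CONFIDENTIAL)\b")),
    (Classification.FOUO,
     re.compile(r"\b(FOUO|FOR OFFICIAL USE ONLY)\b", re.IGNORECASE)),
]


def detect_marking(text):
    """Return the most restrictive marking found, or PUBLIC if none is found."""
    for level, pattern in MARKING_PATTERNS:
        if pattern.search(text):
            return level
    return Classification.PUBLIC


def is_prompt_allowed(text, tool):
    """True if the detected classification may be sent to this tool class."""
    return tool in ALLOWED_TOOLS[detect_marking(text)]
```

For example, `is_prompt_allowed("FOR OFFICIAL USE ONLY: draft memo", ToolClass.CONSUMER)` returns `False`, while the same text is permitted for `ToolClass.ENTERPRISE_WITH_DLP`. Keeping the table as data rather than scattered conditionals makes it easier to audit and to update when policy changes.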

Legal and reputational exposure: what to watch for

- Discovery and litigation risk: AI-assisted drafting that is inaccurate or misrepresents sources can become an evidentiary problem in litigation or oversight investigations.

- Privacy/regulatory risk: Depending on the content (e.g., personal data), use of consumer tools could violate privacy laws or trigger data-protection investigations.

- Public trust: If a lawmaker’s briefing or floor statement cites AI-produced claims that later prove false, the reputational damage can be severe and fast.

What this means for AI policy and future oversight in Congress

The Senate’s shift from blanket restrictions to a controlled-allowance model mirrors a broader movement in government: adopt cautiously, govern tightly, and assume that informal use will continue unless controls are both strong and usable. Expect these downstream effects:

- More enterprise AI procurements across congressional offices and committees.

- Increased budget requests for secure AI tooling, DLP, and training programs.

- Congressional oversight hearings examining the adoption of AI across the executive branch and legislative operations — particularly after high-profile missteps.

Strengths and weaknesses of the Senate’s approach

Notable strengths

- Pragmatism: The guidance accepts reality — staff will use AI — and seeks to govern it rather than pretend prohibition will stop adoption. That pragmatic posture lets the Senate reap efficiency gains while establishing guardrails.

- Alignment with enterprise practice: By explicitly permitting enterprise-grade AI tools and naming major vendors, the Senate can leverage contractual privacy and security features that consumer versions lack.

Real risks and blind spots

- Implementation is everything: Guidance without enforcement, DLP, and procurement discipline will devolve into shadow usage via personal accounts.

- Human verification is necessary but not sufficient: Training and auditing standards matter; a checkbox “verify outputs” without a defined verification process leaves room for error.

- Public transparency: The lack of a public version of the guidance limits external oversight and undermines trust; the Senate should publish the memo or a sanitized summary to promote accountability.

Bottom line and next steps

The Senate’s reported policy, which allows tools like ChatGPT, Gemini, and Copilot for official use under specific, governed conditions, is a practical step that recognizes the productivity value of AI while attempting to mitigate risk. But the success of this policy will be decided by procurement choices, technical enforcement, and sustained training programs.

- Offices must insist on enterprise contracts with clear no-training guarantees and admin controls.

- Technology offices should pair policy with robust DLP and monitoring, not rely solely on user discipline.

- Congress should publish the guidance publicly and schedule oversight hearings to ensure compliance and to capture lessons learned.

The challenge now is not whether to use AI — that ship has sailed — but how to do it in ways that are auditable, contractually constrained, and safe. The cadence of future oversight hearings and procurement decisions will determine whether this policy becomes a durable template for government AI, or a cautionary tale about rushing governance after the fact.
