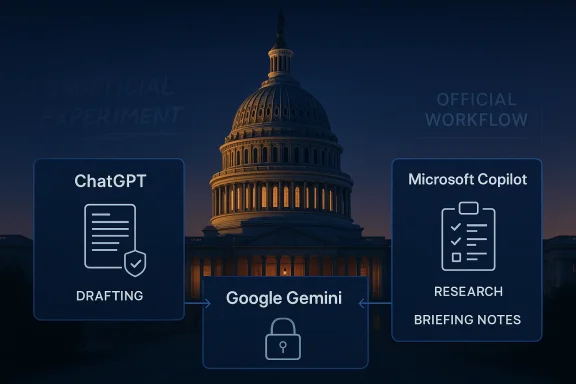

The Senate has now formally opened the door to ChatGPT, Google Gemini, and Microsoft Copilot for official work, a notable milestone in Capitol Hill’s slow but unmistakable embrace of generative AI. According to a memo sent to Senate offices and reported by Business Insider, staffers can use the tools for drafting, summarizing, research, and briefing prep, with Copilot immediately available and the other two requiring Senate-assigned licenses. The move matters not just because it legitimizes AI use in a high-stakes environment, but because it signals that the Senate is trying to convert a messy, unofficial experiment into a governed workflow.

Overview

For much of the last two years, Congress has treated AI the way many large institutions do at the start of a technology shift: cautiously, inconsistently, and with a fair amount of private experimentation. Staffers were already using consumer AI tools behind the scenes, often without formal approval, while offices debated how much risk they were willing to tolerate. This memo suggests that the Senate has finally decided that avoidance is less realistic than setting rules.

The approval is significant because it turns generative AI from an informal productivity hack into an official Senate capability. That is a subtle but important distinction. Once a platform is sanctioned for Senate data, the institution is no longer merely observing the technology; it is embedding it into its own security and compliance model.

The memo’s emphasis on Microsoft Copilot Chat is especially telling. Copilot is already woven into Microsoft 365, which means many staffers can use it without shifting to a new interface or retraining entire teams. That lowers friction, but it also raises the odds that AI becomes invisible infrastructure rather than a clearly bounded tool, which is where governance gets tricky.

The broader backdrop is a federal government that has become more comfortable buying and deploying AI services, even as agencies and oversight bodies continue to worry about data handling, hallucinations, and mission creep. The Senate move fits that larger pattern. It is not an endorsement of unrestricted AI use; it is a controlled admission that the technology is already part of modern legislative work.

Why This Memo Matters

The biggest significance of the memo is institutional, not technical. By naming approved tools and clarifying use cases, the Senate is creating a policy boundary that staff can follow, managers can audit, and IT can support. That matters because the lack of a formal path often pushes employees toward shadow IT, where convenience outruns security.

The approved list also reflects a pragmatic balance between innovation and familiarity. ChatGPT Enterprise, Google Workspace with Gemini Chat, and Copilot Chat all sit inside ecosystems that are already widely used in government and enterprise environments. The Senate is not betting on a fringe tool; it is choosing products backed by major vendors with government cloud offerings.

That said, the memo is just as notable for what it does not include. Claude, Anthropic’s chatbot, was not approved in this round, even though the House has reportedly already authorized Claude alongside the other three tools. The omission hints at a slower Senate risk posture, vendor evaluation differences, or simply the bureaucratic reality that approvals often arrive in stages rather than all at once.

The hidden policy shift

The memo suggests the Senate is moving from “Can staff use AI?” to “Under what controls can staff use AI?” That is a much more mature question. It acknowledges that the real issue is not whether people will use AI, but whether the institution can define acceptable use before habits harden.

- It normalizes AI in legislative workflows.

- It reduces the appeal of unofficial tools.

- It creates a path for training and oversight.

- It pushes the Senate toward standardized governance.

What the Memo Actually Authorizes

According to the notice, Copilot Chat is available immediately to all Senate employees at no cost, while Google Workspace with Gemini Chat and OpenAI ChatGPT Enterprise are approved with Senate licenses. The memo also says each Senate employee will receive one generative AI license at no cost for either Google or OpenAI, with more licensing details to follow within 30 days. That suggests a phased rollout rather than a single, fully baked deployment.

The practical implication is that the Senate is not simply saying “use AI.” It is building a controlled access model with licensing, training, and policy references. That helps manage cost and usage, but it also means staffers may experience uneven availability depending on which tool they want and how quickly the CIO distributes licenses.

The memo also places the tools under the Senate AI Policy and office-level policies. That is a quiet but crucial line. It means the institutional rules do not end with technical approval; each office can still set boundaries about what should and should not go into a prompt.

The approved workflows

The memo specifically identifies routine productivity tasks. Those include drafting and editing documents, summarizing information, preparing talking points and briefing materials, and conducting research and analysis. In other words, the Senate is authorizing the kinds of work where generative AI is most tempting and most useful.

- Drafting internal memos and constituent replies.

- Summarizing hearings and reports.

- Preparing committee briefs and talking points.

- Speeding early-stage research.

- Helping staff refine language for members.

Copilot Gets the Spotlight

It is no accident that Copilot received the most detailed treatment in the memo. Because it is integrated into Microsoft 365, it can meet staff where they already work instead of asking them to adopt a separate platform. That is a huge adoption advantage, especially in an environment where time is scarce and workflow disruption is politically expensive.

The memo’s language about Copilot is also designed to reduce fear. It says Copilot does not access Senate data unless a user explicitly shares it in a prompt, and that it does not roam through drives, shared folders, email, or Teams on its own. That reassurance matters because the greatest fear in government AI deployments is often accidental overexposure, not intentional misuse.

Still, that promise should be read carefully. A tool that only sees what users paste into it is safer than a tool that indexes everything, but it also puts more responsibility on staff to judge what is safe to share. In practice, that creates a human controls problem as much as a software controls problem.

Why integration changes behavior

When AI sits inside Word, Excel, or the broader Microsoft environment, people use it more casually. That can improve productivity, but it also increases the odds of overreliance. Staff may begin to trust a machine-generated first draft too quickly, which is where factual errors can slip into official work.

- Lower friction encourages broader use.

- Broad use increases the need for training.

- Embedded AI can blur the line between drafting and decision-making.

- Simple access can create hidden dependency.

Why Claude Was Left Out

The most obvious omission in the Senate’s approved list is Claude, especially because the House appears to have already authorized it. The memo and accompanying Senate IT references suggest Claude is still under evaluation, which is a reminder that federal adoption is not always uniform even inside Congress. Different chambers, different administrators, and different security teams can all move at different speeds.

There may also be a vendor-policy layer here. Anthropic has been in dispute with the Trump administration over restrictions tied to mass surveillance and autonomous weapons, and the administration has reportedly ordered federal agencies to stop using Anthropic technology, though that does not necessarily reach the legislative branch. That context may not fully explain the Senate’s delay, but it helps explain why Claude has become entangled in broader political and procurement questions.

More broadly, the absence of Claude reveals how quickly AI vendor positioning has become a governance issue. In a market where major model providers are competing for government credibility, each approval is both a technical decision and a signal about trust. That is especially true on Capitol Hill, where staff use can shape future procurement norms.

Approval is not endorsement

A platform can be evaluated, delayed, or excluded for reasons that have nothing to do with model quality. Security posture, contracting terms, cloud dependencies, and policy sensitivities all matter. That is why the lack of Claude should be read as a governance outcome, not a definitive technical verdict.

What This Means for Senate Staff

For staffers, the immediate impact is simple: the gray area is shrinking. Many offices already used AI unofficially, but this memo gives them a sanctioned path and a clearer standard for acceptable behavior. That should reduce uncertainty, especially for junior staff who are often the first to adopt new tools but the last to know what their office considers acceptable.

The memo also creates a stronger argument for time savings. If AI can handle a first-pass draft, summarize a long report, or help shape talking points, then staff can spend more effort on legislative judgment and less on mechanical work. The best-case scenario is not replacing staff expertise; it is giving that expertise more room to operate.

But the new policy also implies new discipline. Staff will need to learn where prompts end and judgment begins, especially when handling sensitive or politically consequential material. Convenience without caution can turn a productivity gain into an embarrassingly public mistake.

Office-by-office realities

Not every Senate office will adopt AI the same way. Some will build strict internal rules, while others will allow broader experimentation with minimal supervision. That variability may create a two-speed Senate, where digitally mature offices move faster than more traditional ones.

- Some offices will embrace AI quickly.

- Some will limit it to non-sensitive tasks.

- Some will require supervisor review.

- Some may avoid it altogether until policies stabilize.

Enterprise, Government Cloud, and Security

One reason this memo is important is that it leans heavily on security language. The notice says Copilot Chat runs in Microsoft’s secure government cloud and meets federal and Senate cybersecurity requirements. That framing is designed to reassure staff that this is not consumer-grade AI being loosely tolerated; it is an approved enterprise deployment.

That distinction matters because government use cases are not just about productivity. They involve retention, privacy, access control, and auditability. When a legislative body handles constituent data, policy drafts, and internal strategy, the threshold for acceptable data handling is far higher than in casual consumer use.

At the same time, government cloud does not eliminate risk. It reduces some exposure, but it cannot eliminate hallucinations, prompt misuse, or the possibility that staff will over-share information in a way that weakens policy boundaries. The Senate is therefore solving a security problem and creating a training problem at the same time.

Why “secure” still needs supervision

Secure hosting is necessary, but not sufficient. Human behavior remains the weakest link in many enterprise deployments, especially when tools become easy enough to use that employees stop thinking about the boundary conditions. The memo’s training references are therefore as important as the approval itself.

The Competitive Implications

The Senate’s approval has symbolic value for the AI vendors involved. Official congressional use is a credibility marker, especially when public-sector clients often become reference accounts that others in government and enterprise study closely. If Senate staff can use these tools safely, that strengthens the vendors’ pitch to other agencies and institutions.

For Microsoft, the win is obvious because Copilot is embedded in the productivity suite the Senate already uses. For OpenAI and Google, the approval shows that standalone AI assistants can still secure a place inside a highly regulated environment. The fact that the Senate selected three platforms rather than one also suggests that competition in public-sector AI is still open.

The exclusion of Claude, however, creates a pressure point. Anthropic’s absence may not be permanent, but every delay in federal authorization matters in a market where procurement momentum can influence long-term adoption. In government AI, being first often matters almost as much as being best.

The broader market signal

This memo may seem narrow, but vendors will read it as part of a larger procurement trend. The federal government wants AI tools that can be governed, audited, and deployed without drama. That favors companies that can package their models with compliance-ready infrastructure over those that simply offer the most impressive chatbot demo.

- Government credibility can accelerate enterprise adoption.

- Secure cloud offerings are now table stakes.

- Integration into existing office tools is a major advantage.

- Procurement success may depend on policy clarity as much as model quality.

The Political and Institutional Angle

Congress is both a lawmaker and a workplace, which makes AI policy uniquely awkward there. Legislators are trying to write rules for the technology while also deciding how much of it their own staff can use. That creates a built-in tension between regulation and self-interest, but it also forces a more honest conversation about productivity in public service.

The Senate memo suggests lawmakers are reaching a practical consensus: staff should not be left to improvise indefinitely. The fact that some senators publicly said they were comfortable with AI use as early as late 2025 indicates that the cultural shift was already underway. This approval merely formalizes what many offices were already doing in private.

That matters politically because AI is no longer a futuristic side topic. It is now part of the normal machinery of governance, with implications for speed, quality, and accountability. Once an institution like the Senate blesses the use of these tools, the debate becomes less about whether they belong and more about what guardrails are necessary.

A workplace issue, not just a tech story

The smartest way to read this memo is as a workplace modernization story. The Senate is acknowledging that its staff need better digital tools and that generative AI has matured enough to be considered in official workflows. That may sound mundane, but institutional modernization often happens through exactly this kind of procedural change.

Strengths and Opportunities

The Senate’s AI rollout has several clear advantages, especially if it is implemented with real training and office-level discipline. It can reduce rote work, make research faster, and free staff to spend more time on policy judgment. It also gives the institution a better chance to define acceptable use before the practice becomes too fragmented to govern.

- Productivity gains for drafting and summarization.

- Faster briefing prep for hearings and floor work.

- Better standardization across offices.

- Reduced shadow IT through official approval.

- Stronger security posture than ad hoc consumer use.

- Training opportunities that can raise staff competence.

- More competitive procurement across government vendors.

Risks and Concerns

The downside is that AI tools can accelerate mistakes just as easily as they accelerate work. Hallucinated facts, overconfident wording, and careless prompt sharing can all create reputational and operational risk for Senate offices. There is also a danger that staff will trust polished output more than they should, especially when deadlines are tight.

- Hallucinations could contaminate memos or talking points.

- Data leakage remains possible through careless prompting.

- Overreliance may weaken staff judgment over time.

- Uneven adoption could create policy inconsistency.

- Vendor lock-in may deepen as workflows standardize.

- Training gaps could leave junior staff exposed.

- Governance drift could happen if offices ignore policy updates.

Looking Ahead

The next phase will depend less on the memo itself and more on execution. License distribution, training quality, and office compliance will determine whether this becomes a useful productivity upgrade or just another policy announcement that staff ignore. The Senate CIO’s next 30 days will therefore matter a great deal, because rollout details often decide whether a policy takes root.

The bigger question is whether this becomes the template for more formalized AI use across Congress. If Senate offices adopt these tools responsibly, the House and other legislative support agencies may follow with broader approval frameworks, tighter guardrails, and more standardized guidance. If problems emerge, however, the reaction could be a familiar Washington pattern: cautious expansion followed by abrupt retrenchment.

- License rollout and user access timing.

- Whether Claude is eventually added.

- Office-level rules for sensitive information.

- Training completion rates and compliance.

- Any future audits or policy updates.

Source: AOL.com https://www.aol.com/news/read-memo-authorizing-senate-offices-152506973.html