SoDa’s decision to place TAIM Insight Hub on Microsoft Azure is more than a routine cloud deployment. It signals where the market for enterprise knowledge systems is heading: away from static repositories and toward natural-language business intelligence that can interpret fragmented information at scale. If the promise holds, the move could help organizations turn sprawling internal data into something employees can actually use, not just store.

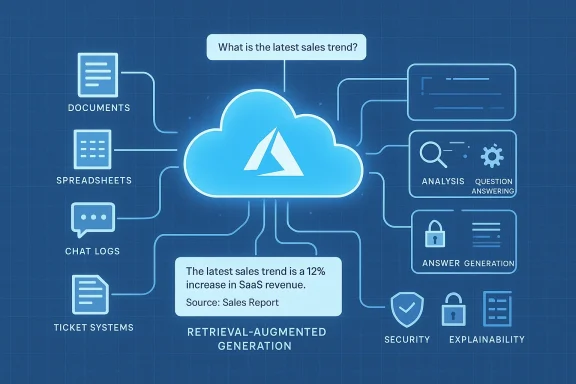

The timing matters. Enterprises are under pressure to do more with less, while also proving that AI outputs are trusted, explainable, and secure. In that environment, SoDa’s pitch for TAIM Insight Hub — as an AI layer for discovering and applying corporate knowledge — fits neatly with the broader shift toward RAG-based and search-grounded AI systems. Microsoft’s own Azure guidance emphasizes knowledge mining, retrieval-augmented generation, and enterprise grounding as core building blocks for these kinds of experiences. (azure.microsoft.com)

For SoDa, the Azure deployment also reframes the product as an enterprise platform rather than a niche information tool. The practical appeal is clear: scalable infrastructure, security controls, and a cloud foundation that can expand with demand. The strategic question is harder: can TAIM deliver enough accuracy, governance, and workflow integration to become a durable business system rather than just a polished conversational layer?

There is also a procurement risk. Enterprises are increasingly cautious about AI products that sit on top of sensitive data without clear governance. Even with Azure underneath, SoDa will need to demonstrate how TAIM handles access control, data lineage, retention, and compliance in real deployments.

It will be especially important to watch whether SoDa expands the platform into adjacent capabilities such as workflow automation, analytics integration, or domain-specific knowledge packs. Those extensions could turn TAIM from a useful point solution into a broader strategic platform. If the company can combine trust, usability, and integration depth, it may have a credible long-term position.

In the end, the significance of TAIM Insight Hub on Azure is not just that another vendor chose another cloud. It is that enterprise BI is being redefined around natural language, grounded answers, and trusted infrastructure. If SoDa can deliver that combination consistently, the launch could be remembered as part of a much larger shift in how organizations find, validate, and act on their own knowledge.

The market is moving quickly from data abundance to decision acceleration. Platforms that can bridge that gap with speed, security, and explainability will shape the next phase of enterprise intelligence, and SoDa is now making its case to be counted among them.

Source: TechAfrica News, "SoDa Launches TAIM Insight Hub in Microsoft Azure to Transform Business Intelligence"

Background

Enterprise knowledge management has spent years trying to escape the limits of the old intranet era. Traditional document libraries, SharePoint sites, line-of-business apps, and email archives often contain the answer, but not in a form employees can find quickly or trust confidently. That is why the modern market has shifted from storage to search, and from search to contextual answer engines.

Microsoft’s own Azure knowledge-mining positioning reflects this evolution. The company describes a three-step pattern: ingest, enrich, and explore, using connectors, AI capabilities, and search experiences to make hidden content useful. It also highlights common enterprise use cases like content research, compliance, customer support, and digital asset management, all of which depend on making unstructured data easier to navigate. (azure.microsoft.com)
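To make the ingest, enrich, and explore pattern concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not SoDa's implementation or an Azure API: the `Document` record, the tiny `STOP_WORDS` list, and the keyword-overlap scoring are all hypothetical stand-ins for real connectors, AI enrichment, and a search service.

```python
from dataclasses import dataclass, field


@dataclass
class Document:
    doc_id: str
    text: str
    keywords: set[str] = field(default_factory=set)


# Hypothetical stop-word list, kept tiny for illustration.
STOP_WORDS = {"the", "a", "an", "of", "and", "to", "for", "in", "is", "on"}


def normalize(text: str) -> set[str]:
    """Lowercase, strip basic punctuation, and drop stop words."""
    words = (w.strip(".,;:!?").lower() for w in text.split())
    return {w for w in words if w and w not in STOP_WORDS}


def ingest(raw_sources: dict[str, str]) -> list[Document]:
    """Ingest: pull raw text from each source into a common record."""
    return [Document(doc_id=name, text=text) for name, text in raw_sources.items()]


def enrich(docs: list[Document]) -> list[Document]:
    """Enrich: attach keyword metadata (a stand-in for AI enrichment)."""
    for doc in docs:
        doc.keywords = normalize(doc.text)
    return docs


def explore(docs: list[Document], query: str) -> list[str]:
    """Explore: return ids of documents that share terms with the query, best first."""
    terms = normalize(query)
    scored = [(len(terms & d.keywords), d.doc_id) for d in docs]
    ranked = sorted(scored, key=lambda s: (-s[0], s[1]))
    return [doc_id for score, doc_id in ranked if score > 0]


sources = {
    "policy.txt": "Travel policy for the sales team.",
    "contract.txt": "Supplier contract and renewal terms.",
}
index = enrich(ingest(sources))
results = explore(index, "What is the travel policy?")
print(results)
```

Keeping the three stages as separate functions mirrors the pattern itself: each stage can be swapped for something more capable without touching the others.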

That broader trend explains why the SoDa announcement matters. TAIM Insight Hub is being framed not merely as another database front end, but as an AI-powered environment that lets employees ask questions in natural language and receive grounded answers. In practical terms, that is the same market category now occupied by copilots, enterprise search assistants, and document intelligence platforms.

Microsoft’s architecture guidance reinforces the relevance of this model. Its Azure AI architecture documentation describes RAG as a way to scope generative AI to content sourced from vectorized documents and other data formats, while emphasizing enterprise data grounding as the mechanism that improves response quality. In other words, the industry is moving toward AI systems that are useful precisely because they are tethered to company knowledge rather than free-floating model memory. (learn.microsoft.com)
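The grounding idea behind RAG can be sketched in a few lines of Python. This is a toy under explicit assumptions: the bag-of-words `vectorize` function stands in for learned embeddings, the `corpus` entries and document ids are invented for illustration, and no language model is actually called. The sketch only shows how retrieved content scopes the prompt.

```python
import math
from collections import Counter


def vectorize(text: str) -> Counter:
    """Toy bag-of-words vector; a real system would use learned embeddings."""
    words = (w.strip(".,;:!?").lower() for w in text.split())
    return Counter(w for w in words if w)


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, corpus: dict[str, str], k: int = 2) -> list[str]:
    """Return the ids of the k documents most similar to the query."""
    q = vectorize(query)
    ranked = sorted(corpus, key=lambda doc_id: cosine(q, vectorize(corpus[doc_id])), reverse=True)
    return ranked[:k]


def build_grounded_prompt(query: str, corpus: dict[str, str], k: int = 2) -> str:
    """Assemble a prompt that restricts the model to the retrieved sources."""
    doc_ids = retrieve(query, corpus, k)
    context = "\n".join(f"[{d}] {corpus[d]}" for d in doc_ids)
    return (
        "Answer using only the sources below and cite them by id.\n"
        f"{context}\n"
        f"Question: {query}"
    )


# Hypothetical company documents.
corpus = {
    "hr-001": "Employees accrue 20 days of annual leave per year.",
    "it-014": "Laptops are refreshed every three years.",
    "fin-007": "Expense reports are due by the fifth of each month.",
}
question = "How many days of annual leave do employees get?"
top = retrieve(question, corpus, k=1)
prompt = build_grounded_prompt(question, corpus, k=1)
print(prompt)
```

The point of the design is that the model only ever sees the retrieved passages, so the answer is tethered to company content and each claim can be traced to a document id.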

Why this category is accelerating

The biggest reason is simple: organizations are drowning in disconnected information. Data lives in databases, file shares, chat threads, CRM systems, ERP platforms, support desks, and project tools, but employees need answers in seconds. That gap has made enterprise AI attractive because it promises a single interface over multiple systems.

There is also a governance angle. Business leaders want less searching and more certainty, especially in regulated or high-stakes environments. A system that can show its sources, apply access controls, and respect permissions is much easier to defend than an opaque chatbot.

- Unstructured content is still the dominant bottleneck.

- Natural language access reduces training and search friction.

- Grounding and explainability are now competitive necessities.

- Security and permissions shape enterprise adoption as much as model quality.

- Workflow integration increasingly matters more than model novelty.

What SoDa Is Claiming With TAIM

According to the TechAfrica News report, SoDa says TAIM Insight Hub is designed to help organizations transform fragmented enterprise data into a single trusted source of actionable intelligence. The company positions the platform as a way to make internal information easier to discover, interpret, and apply, using natural-language interaction and explainable AI. That is a strong value proposition, especially for organizations that have spent years accumulating content they cannot effectively operationalize.

The product positioning is interesting because it moves beyond simple retrieval. SoDa is not only promising search; it is promising comprehension. That difference matters, because most businesses do not merely need a better indexing layer. They need systems that can synthesize, summarize, and guide decisions while still pointing back to the underlying evidence.

From retrieval to interpretation

This is the core product challenge. A good enterprise knowledge platform must do three things at once: find the right material, interpret it in context, and present it in a way users can act on. If one of those pieces fails, the whole experience degrades into either a search box with a chat skin or a chatbot with weak factual grounding.

That is why the phrase explainable AI is important here. In enterprise settings, answer quality is not enough on its own. The system also has to show why an answer is credible, what sources support it, and where confidence should stop.

SoDa’s messaging suggests that TAIM is intended to behave like a knowledge layer rather than a simple conversational assistant. If implemented well, that could help it stand apart from generic chatbot products that look impressive in demos but fall apart under governance, permissions, and document provenance requirements.

A platform for scattered enterprise data

The platform’s value also depends on how well it handles disparate sources. TechAfrica News says TAIM is built for enterprises managing growing volumes of disconnected and unstructured data, which is exactly the problem space that knowledge mining and RAG patterns were designed to address. Microsoft’s Azure documentation notes that knowledge mining can ingest content from a wide range of sources and then enrich it before it is explored through search or business apps. (azure.microsoft.com)

That makes TAIM more credible as an enterprise proposition. It suggests a system that does not merely sit on top of one repository, but instead harmonizes content across the organization. If that harmonization includes access controls and metadata preservation, the result could be a meaningful productivity layer rather than another isolated AI experiment.

- Natural language lowers the barrier to discovery.

- Explainability helps build trust with enterprise users.

- Multi-source ingestion is essential for real business value.

- Context-aware answers are more useful than keyword hits.

- Grounded responses reduce the risk of hallucination-driven decisions.

Why Azure Matters Here

The choice of Microsoft Azure is not accidental. For enterprise AI products, cloud platform selection often determines whether a solution scales cleanly, survives security review, and integrates with existing identity and governance frameworks. Azure’s own documentation emphasizes security capabilities, high availability, global scalability, and enterprise cloud controls as part of its core foundation. (learn.microsoft.com)

That foundation is particularly relevant for an AI knowledge platform. A system like TAIM needs to ingest documents, run searches, manage permissions, support authentication, and potentially orchestrate AI workloads that can spike with user demand. Azure’s positioning around Azure Firewall, Azure Front Door, and globally scalable entry points aligns with those workload patterns. (learn.microsoft.com)

Security as a product feature

For enterprise customers, cloud security is not a background concern. It is part of the product. The Azure infrastructure security page points users toward Microsoft Defender for Cloud and other controls for preventing, detecting, and responding to threats, while the general security overview describes a platform designed to host millions of customers securely. (learn.microsoft.com)

That matters because knowledge platforms often deal with sensitive data: contracts, performance reviews, policy documents, incident reports, and financial records. If TAIM can live inside a secure Azure environment, SoDa can make a stronger pitch to compliance-conscious buyers. Security is not just about encryption; it is about reducing procurement friction.

Scalability and reliability

The other major advantage is elasticity. AI systems are unpredictable from an infrastructure standpoint because demand can vary by department, by time of day, and by business cycle. Azure’s documentation highlights unrestricted scalability in services like Azure Firewall and describes Azure Front Door as a global, scalable entry point. (learn.microsoft.com)

That matters for a platform intended to serve many internal users simultaneously. If adoption grows, the architecture must absorb that usage without breaking response times or forcing expensive overprovisioning. In enterprise software, reliability is often the difference between a pilot and a permanent procurement line item.

Enterprise integration advantages

Azure also lowers the integration burden for organizations already invested in Microsoft ecosystems. Identity, monitoring, storage, and search components can be composed into a broader enterprise architecture more naturally than in a fragmented multi-vendor setup. Microsoft’s AI architecture guidance explicitly discusses private endpoints, monitoring, Microsoft Entra ID, and grounding via enterprise data sources as part of a reference pattern for chat-based AI solutions. (learn.microsoft.com)

That kind of alignment can be decisive. Buyers do not just ask whether the AI works; they ask how it will fit into their existing security model, tenant structure, and operations stack. Azure gives SoDa a credible answer to those questions.

- Security controls help satisfy IT and compliance teams.

- Global infrastructure supports distributed enterprise use.

- Identity integration reduces operational friction.

- Monitoring and governance improve trust and manageability.

- Cloud elasticity allows growth without immediate replatforming.

The Business Intelligence Angle

The phrase business intelligence is doing important work in this announcement. TAIM Insight Hub is not being framed as a pure AI chatbot or a consumer-style assistant. Instead, it is being positioned as a decision support layer that helps employees move from raw information to usable insight. That shifts the discussion from novelty to operational value.

This is significant because the BI market is already crowded. Traditional analytics vendors, cloud data platforms, and emerging AI copilots are all competing to own the front end of insight delivery. SoDa’s differentiation appears to be centered on knowledge accessibility: less dashboard fatigue, more direct answers, and a tighter bridge between unstructured content and business action.

What makes BI “AI-native”

An AI-native BI platform does not simply visualize data. It helps users ask questions they might not know how to formulate in advance, then turns mixed-format enterprise content into coherent responses. Microsoft’s architecture guidance notes that language models can support semantic search and natural language interaction, while RAG helps tie answers to enterprise data sources. (learn.microsoft.com)

That combination is powerful because it reduces the translation burden on the user. Employees do not need to know every table, file path, or report name. They can ask direct questions and let the system orchestrate the search and synthesis process.

Decision velocity versus data volume

The key economic benefit is faster decision-making. When information is scattered, the real cost is not just storage or software licensing. It is time lost to manual searching, inconsistent answers, and duplicated effort. SoDa’s pitch suggests TAIM can compress that cycle by giving workers a single conversational access layer.

That is especially valuable in teams that are heavily document-driven. Compliance, operations, support, sales enablement, and project delivery groups all spend time hunting for context rather than acting on it. If TAIM shortens that cycle, the return on investment could be substantial.

The limits of “insight” branding

Still, insight is a strong claim and a hard one to prove. Many products promise intelligence but merely surface summaries. True BI value depends on whether the platform can preserve nuance, support drill-down, and distinguish between factual retrieval and analytical inference.

That is why explainability matters again. Business users need confidence that an answer was derived from approved sources and not from a brittle guess. If TAIM can consistently show provenance, it may earn a place in real workflows. If not, it risks becoming yet another layer of AI theater.

- Faster answers can improve productivity.

- Conversational access reduces reliance on specialist training.

- Grounded outputs are essential for BI credibility.

- Workflow fit matters more than flashy demos.

- Provenance determines whether users trust the system.

Enterprise Use Cases and User Experience

One of the strongest aspects of the announcement is the emphasis on user experience. SoDa says employees can interact with corporate knowledge in natural language rather than navigating multiple systems and repositories. That is not a minor convenience; it is a usability shift that can reshape how people expect internal systems to work.

The best enterprise tools often hide complexity rather than amplify it. If TAIM can become a front door to information that is otherwise spread across documents, knowledge bases, and applications, it has a chance to become sticky. The more employees use it, the more it becomes part of the organization’s operating rhythm.

Who benefits first

The first beneficiaries are likely to be knowledge workers who repeatedly ask the same kinds of questions. Support teams, analysts, managers, legal staff, and operations personnel all spend time seeking answers that may already exist somewhere inside the company. A tool that can aggregate and contextualize those answers could save hours each week.

There is also a strong onboarding case. New employees often struggle with hidden knowledge, scattered documentation, and informal expertise. A natural-language interface could reduce ramp time and help standardize how information is accessed across teams.

What a good enterprise UX must include

A successful enterprise AI interface needs more than chat. It needs clear source references, permission-aware results, and easy escalation paths when the system is uncertain. It should also support search, browse, and visualization patterns so that users can move from answer to evidence without friction.

This is where AI products often fail in practice. They optimize for conversation but neglect the decision trail. Businesses need both speed and auditability, especially when the output may influence a policy decision, customer response, or internal approval.

The importance of adoption design

Enterprise adoption is not automatic just because the technology is interesting. People have to believe the tool will save time, not create another place to check. Training, change management, and clear governance matter as much as model quality.

That is why the most durable AI platforms will feel less like experiments and more like infrastructure. If TAIM is being deployed in Azure with enterprise controls, SoDa is clearly aiming for that kind of operational permanence rather than a short-lived pilot.

- Onboarding should be faster and easier.

- Support teams can gain immediate efficiency.

- Managers need answer trails, not just summaries.

- New hires benefit from centralized knowledge access.

- Adoption will depend on trust, not hype.

Competitive Context

SoDa is entering a market where the competitive bar is already high. Microsoft, Google, AWS, and numerous specialist vendors all want a share of the enterprise AI and knowledge discovery stack. The winners will not simply be the companies with the most impressive model demos; they will be the ones that solve permissioning, ingestion, governance, and user trust better than everyone else.

This is why Azure is strategically useful. It places TAIM closer to the ecosystem enterprises already know, while also aligning it with the cloud patterns that Microsoft promotes for AI workloads. That does not guarantee success, but it reduces the sense that SoDa is asking customers to adopt an unfamiliar or risky foundation.

How this compares to mainstream AI platforms

Microsoft’s architecture docs describe generative AI systems, copilots, agents, and RAG as part of a broader enterprise AI design toolkit. That framing matters because it shows the market’s direction: users will increasingly expect AI to be embedded into workflows and grounded in enterprise content rather than bolted on as a novelty feature. (learn.microsoft.com)

In that sense, SoDa’s TAIM Insight Hub is competing less against a single product and more against a direction of travel. Its real rivals are the products that already have distribution, trust, and integration depth. If TAIM can focus on a specific pain point and do it exceptionally well, it can still carve out space.

Where a specialist can win

Specialist vendors often win by being narrower but deeper. They can tailor ingestion pipelines, refine answer quality for a specific business context, and support domain-specific governance requirements. That can make them more valuable than broad platforms that do many things but excel at none.

SoDa’s messaging suggests it wants to win on clarity and practical enterprise usefulness. That is a sensible strategy. In a crowded market, the products that survive are usually the ones that solve a painful problem elegantly rather than promising to reinvent everything.

What rivals should notice

The most important lesson for competitors is that enterprises want AI with boundaries. They want models that understand corporate knowledge, but they also want secure infrastructure, access control, and explainability. Azure’s own guidance around grounding and private enterprise data underscores that the market is moving toward controlled intelligence, not open-ended experimentation. (learn.microsoft.com)

- Platform ecosystems matter as much as raw model capability.

- Vertical focus can be a major differentiator.

- Enterprise trust beats flashy demonstrations.

- Workflow integration is a moat if done well.

- Security review can make or break sales cycles.

The Role of Explainable AI

The inclusion of explainable AI in SoDa’s messaging is one of the more important details in the announcement. As AI systems become more embedded in business processes, companies are increasingly unwilling to accept answers that cannot be justified. Explainability is moving from a nice-to-have to a procurement requirement.

Microsoft’s documentation repeatedly points toward architectures that use grounding data, private endpoints, and carefully designed AI workflows. That is a strong signal that the future of enterprise AI is not just generative, but governable. The model may produce the response, but the organization still needs to understand and control the path that led there. (learn.microsoft.com)

Why explainability changes buying behavior

Explainability affects far more than user confidence. It affects legal exposure, auditability, and cross-functional approval. When a system can identify its sources and show how it reached an answer, IT leaders have a far easier time defending deployment decisions.

That matters in sectors where knowledge is highly regulated or operationally sensitive. Even in less regulated environments, explainability reduces internal skepticism. Users are more likely to rely on a system that can point to a source document than one that simply asserts a conclusion.

What “good” looks like in practice

In a robust enterprise setup, explainability should include source citations, confidence indicators, permission-aware retrieval, and a way to trace which content influenced the answer. It should also preserve human override. AI can accelerate decisions, but it should not become a black box that removes accountability.

This is where many products stumble. They emphasize a conversational interface but do not provide enough structural transparency behind it. If SoDa gets this right, TAIM could become a much more defensible enterprise proposition.
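To make the four elements of a robust setup concrete (source citations, confidence indicators, permission-aware retrieval, and traceability, plus a hook for human override), here is a minimal Python sketch. It is an illustration only, not TAIM's or Azure's actual API: every name in it (`Document`, `Answer`, `answer`, `DOCS`) is hypothetical, and the confidence value is a toy word-overlap score rather than a calibrated measure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset  # groups permitted to read this document


@dataclass
class Answer:
    text: str
    citations: list           # ids of the documents that shaped the answer
    confidence: float         # toy indicator in [0, 1], not calibrated
    needs_human_review: bool  # human-override hook for weak answers


def score(query: str, doc: Document) -> float:
    """Toy relevance: fraction of query words that appear in the document."""
    q = set(query.lower().split())
    d = set(doc.text.lower().split())
    return len(q & d) / len(q) if q else 0.0


def answer(query, docs, user_groups, top_k=2, review_threshold=0.5):
    # Permission-aware retrieval: drop unreadable documents BEFORE ranking,
    # so restricted content can never influence the answer.
    visible = [d for d in docs if d.allowed_groups & user_groups]
    ranked = sorted(visible, key=lambda d: score(query, d), reverse=True)
    hits = [d for d in ranked[:top_k] if score(query, d) > 0]
    if not hits:
        return Answer("No accessible source found.", [], 0.0, True)
    confidence = score(query, hits[0])
    return Answer(
        text=" ".join(d.text for d in hits),  # stand-in for a generated reply
        citations=[d.doc_id for d in hits],   # trace of contributing content
        confidence=confidence,
        needs_human_review=confidence < review_threshold,
    )


# Hypothetical sample corpus for illustration.
DOCS = [
    Document("hr-001", "Annual leave policy allows 25 days", frozenset({"hr", "all"})),
    Document("fin-007", "Executive bonus policy is confidential", frozenset({"finance"})),
]
```

Calling `answer("bonus policy", DOCS, {"all"})` cites only `hr-001`, because the finance document is filtered out before ranking. The same query from a member of `finance` cites `fin-007` instead. Filtering before ranking, rather than after generation, is the design choice that keeps restricted content out of both the answer and its citation trail.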

The risk of overstating it

At the same time, the industry overuses the term. “Explainable” is often used loosely, especially in vendor marketing. A system can be somewhat transparent without being fully explainable in a rigorous technical sense, and buyers should be careful about the distinction.

That is why real-world proof will matter. The best evidence will be how TAIM behaves in production, not how convincingly it is described in a launch announcement.

- Source transparency increases trust.

- Auditability supports compliance.

- Confidence signals help users interpret results.

- Human oversight remains necessary.

- Marketing language should not be confused with technical guarantees.

Strengths and Opportunities

The SoDa-Azure combination has several clear strengths. First, it aligns with a real and growing enterprise need: making scattered knowledge easier to discover and use. Second, it rides on a cloud platform that already has a strong story around security, scalability, and enterprise AI architecture. Third, it targets a use case, natural-language access to corporate knowledge, that is easy for buyers to understand and difficult to ignore once they see it working.

It also creates room for expansion. A platform that starts with knowledge access can often grow into process support, analytics, compliance assistance, and workflow automation. If TAIM proves itself as a trusted interface to enterprise information, SoDa may have an opening to move into higher-value use cases.

- Clear enterprise pain point

- Strong alignment with Azure’s AI stack

- Natural-language UX

- Potential for workflow expansion

- Better onboarding and productivity gains

- Security-friendly cloud foundation

- Opportunity for domain specialization

Risks and Concerns

The biggest risk is that the platform may promise more than it can consistently deliver. Enterprise AI systems are easy to demo and hard to operationalize, especially when they must respect permissions, sources, and changing content at scale. If answers are incomplete, inconsistent, or difficult to verify, user trust will erode quickly.

There is also a procurement risk. Enterprises are increasingly cautious about AI products that sit on top of sensitive data without clear governance. Even with Azure underneath, SoDa will need to demonstrate how TAIM handles access control, data lineage, retention, and compliance in real deployments.

- Hallucination and answer quality

- Permissioning and access-control complexity

- Governance and audit requirements

- Integration friction with existing systems

- Adoption risk if UX is not intuitive

- Vendor overpromising versus real-world performance

- Dependence on clean, well-managed source data

What to Watch Next

The next phase will be about proof. Buyers will want to know what TAIM looks like in production, how quickly it can index and refresh knowledge, and whether it can support enterprise-scale usage without degrading response quality. They will also want to see whether SoDa can quantify productivity gains rather than simply describe them.

It will be especially important to watch whether SoDa expands the platform into adjacent capabilities such as workflow automation, analytics integration, or domain-specific knowledge packs. Those extensions could turn TAIM from a useful point solution into a broader strategic platform. If the company can combine trust, usability, and integration depth, it may have a credible long-term position.

Key things to monitor

- Customer deployments and reference cases

- Measured accuracy and grounding performance

- Support for compliance and governance

- Integration with common enterprise systems

- Hybrid and multi-cloud deployment options

- Evidence of real productivity impact

- Whether SoDa builds industry-specific variants

In the end, the significance of TAIM Insight Hub on Azure is not just that another vendor chose another cloud. It is that enterprise BI is being redefined around natural language, grounded answers, and trusted infrastructure. If SoDa can deliver that combination consistently, the launch could be remembered as part of a much larger shift in how organizations find, validate, and act on their own knowledge.

The market is moving quickly from data abundance to decision acceleration. Platforms that can bridge that gap with speed, security, and explainability will shape the next phase of enterprise intelligence, and SoDa is now making its case to be counted among them.

Source: TechAfrica News, “SoDa Launches TAIM Insight Hub in Microsoft Azure to Transform Business Intelligence”