When Bill Gates and Charles Simonyi began talking about “softer software” in the early 1980s, they did not reject intelligence — they rejected mythology. Their idea was simple and practical: build programs that learn from users, adapt over time, and serve as partners rather than oracles. That distinction — between marketing fireworks and incremental, user-centered engineering — mattered then and matters even more in 2026, when generative models dominate headlines, venture capital, and enterprise road maps. This article revisits the moment Gates and his colleagues sketched a vision that anticipated today’s personalization engines and copilots, weighs what they got right and wrong, and extracts practical lessons for technologists, product leaders, and regulators wrestling with AI’s promises and pitfalls.

Source: TechRadar In 1983, Bill Gates rejected the AI hype and championed “softer software”

Background

The scene: early personal computing and the 1985 AI moment

By the mid-1980s the personal-computer revolution had moved from hobbyist basements into offices and classrooms. Software was transitioning from single-purpose utilities to integrated suites, and the industry was hungry for the “next big thing.” In January 1985, industry leaders and commentators were already warning about an approaching rush toward artificial intelligence: some voices called it hype, others a genuine opportunity. The debate spun around two competing impulses: the desire to sell imagination and the need to build reliable, useful features that actually improved users’ work.

At stake was a fundamental product-design question: should companies promise machines that “think” for people, or should they pursue incremental software that remembers and assists? Gates and Simonyi positioned themselves in the latter camp with the phrase softer software, a deliberately pragmatic rebranding of “AI” that emphasized learning through usage and small, testable improvements in UX.

Overview: What “softer software” meant in the 1980s

A practical reframe of intelligence

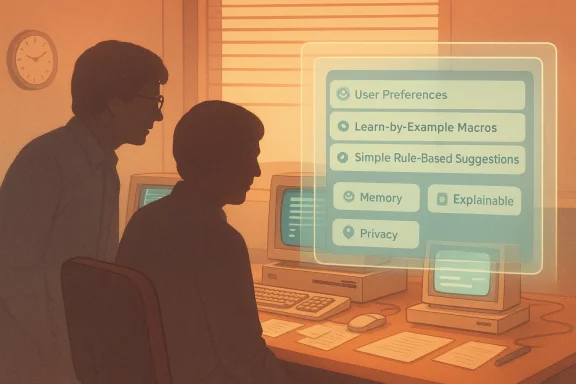

Softer software was never intended to be a philosophical definition of cognition. Instead, it was an engineering discipline: design software that modifies its behavior over time based on experience with the user. The goal was not to create general intelligence but to reduce friction in routine workflows via pattern recognition and adaptive defaults. That meant three concrete capabilities:

- Remembering user preferences and applying them automatically.

- Observing repeated sequences of actions and offering automation (macros, templates).

- Using rule-based or expert-system logic to recommend next steps without insisting on complete autonomy.
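The second capability, spotting repeated sequences of actions and offering to automate them, can be illustrated with a minimal Python sketch. This is not how any 1980s product worked; the action names, the window length, and the `frequent_sequences` helper are all hypothetical, chosen only to show the idea of counting recurring runs in an action log.

```python
from collections import Counter


def frequent_sequences(actions: list[str], length: int = 3,
                       min_count: int = 3) -> list[tuple[tuple[str, ...], int]]:
    """Return action runs of `length` steps seen at least `min_count` times."""
    # Slide a fixed-size window over the log and count each distinct run.
    windows = (tuple(actions[i:i + length])
               for i in range(len(actions) - length + 1))
    counts = Counter(windows)
    return [(seq, n) for seq, n in counts.most_common() if n >= min_count]


# Hypothetical action log: the user formats a range the same way three times.
log = ["select_range", "bold", "set_currency"] * 3 + ["save"]

for seq, n in frequent_sequences(log):
    print(f"Seen {n} times: {' -> '.join(seq)}. Offer to save as a macro?")
```

The software only offers the automation; the user decides whether to accept it, which is the "partner, not oracle" stance the article describes.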

Why the term mattered

The phrase “artificial intelligence” in the early 1980s carried baggage: images of thinking machines, dystopian surveillance, and inaccessible academic research. By contrast, “softer software” reframed the conversation toward concrete product design and human-centered outcomes. It was a rhetorical move with a technical backbone: it invited engineering tradeoffs (how much memory? what telemetry? what algorithms?) instead of speculative metaphysics.

Key actors and moments

Bill Gates and Charles Simonyi — engineering first

In August 1983, Bill Gates and Charles Simonyi jointly articulated the notion of software that learns from use and anticipates needs. Their emphasis on empirical improvements, meaning software that changes behavior after observing user actions, foreshadowed modern personalization engines. Simonyi’s engineering pedigree (lead architect of early word processing and spreadsheet innovations) grounded the idea in product reality, not academic hype.

InfoWorld’s editorial skepticism — February 25, 1985

Industry press picked up the debate. A 1985 editorial and contemporary commentary worried that the market would split between vacuous “AI-hype” products and overdesigned systems that tried to solve grand problems instead of practical ones. The editors distinguished between:

- AI-hype: superficial products that promised decision-making magic with trivial inputs.

- Rube Goldberg overdesign: feature-laden systems that solved scientifically interesting but commercially irrelevant problems.

Mitch Kapor’s warning — January 1985

At a Personal Computer Forum in January 1985, Mitch Kapor cautioned that the industry could fall into a “lemming-like rush” toward AI. His comment was not anti-innovation; it was a cautionary call for clarity about customer value. Kapor’s voice represented a market-oriented pragmatism: hype can distort product priorities and damage customer trust.

Excel’s “learn-by-example” macros — May 27, 1985

One of the earliest practical embodiments of softer software ideas appeared in spreadsheet automation. Spreadsheet software began shipping with learn-by-example macro features that recorded user actions and enabled repeatable automation without requiring programming knowledge. Those macros are a direct ancestor of modern “record-and-playback” automation tools and the personalized automation pathways embedded in today’s productivity copilots.

How the 1980s blueprint maps to 2026 AI

The lineage from softer software to today’s copilots is direct and instructive. Consider these parallels:

- Remembering habits → Long-term memory in assistants. Modern copilots are explicitly designed to remember user preferences across sessions, an idea Gates described in the 1980s.

- Learn-by-example macros → Low-code/no-code action pipelines. “Record-and-repeat” remains a major UX pattern in automation tooling.

- Expert systems → Domain-specific models and rules. Early rule-based systems evolved into hybrid architectures where rules augment statistical models for reliability in critical domains.

What Gates and Simonyi got right

- Practicality beats mystique. Gates’ reframing away from sensationalist “AI” toward usable, incremental software anticipated a long-term trajectory where practical tools — not grand promises — win adoption.

- Pattern recognition as the lever. The prediction that software that records and uses behavioral histories would become valuable was prescient. Personalization and context-aware assistance are central to modern productivity and consumer software.

- The user-as-partner model. The idea that software should be a working partner, suggesting actions rather than replacing judgment, matches current best practices for human-AI collaboration.

What they underestimated

- Scale of data and compute. The mid-1980s vision naturally assumed local, user-level learning. The explosion of cloud-scale compute, large datasets, and pretraining was not foreseeable in full. That shift enabled capabilities (and risks) that local, rule-based systems could not match.

- Model opacity and societal risk. Softer software imagined explainable rules and patterns. Modern deep models are often inscrutable and can produce confidently wrong answers — a new kind of product failure mode.

- Business incentives and platform dynamics. The economics of modern AI — platform lock-in, data network effects, and concentrated model ownership — create dynamics far more complex than the company-versus-user tradeoffs of the spreadsheet era.

Strengths and risks of the softer-software legacy in today’s AI era

Strengths (what to preserve)

- Human-centered design: prioritize assistive over autocratic agents.

- Incrementalism: ship features that demonstrably reduce friction, measure, and iterate.

- Explainability by design: where possible, favor architectures that yield interpretable reasoning or combine models with rule-based systems.

Risks (what to actively mitigate)

- Overpromising: marketing that frames models as decision-makers will erode trust when mistakes occur.

- Data governance failures: personalization is valuable, but persistent memory models risk privacy violations and hard-to-revoke inferences.

- Automation complacency: making humans overly reliant on assistants without clear oversight increases operational and regulatory risk.

Concrete product lessons for engineers and teams

- Instrument every assistive feature. Measure accuracy, error modes, and user override behavior. If a copilot suggests work, track whether users accept, edit, or reject that suggestion.

- Design for reversible memory. Long-term memories and preferences must be auditable and erasable by users. From a product perspective, provide explicit controls for retention and sharing.

- Combine models with rules for critical tasks. Use rule-based guardrails where model outputs could cause harm (finance, legal, healthcare). Hybrid pipelines improve reliability while retaining flexibility.

- Prioritize graceful degradation. When a model is uncertain, prefer lightweight prompts that ask clarifying questions rather than producing definitive-but-wrong answers.

- Keep humans in the loop for consequential decisions. Design workflows that surface model confidence and require human sign-off for irreversible actions.
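The last three lessons (rule-based guardrails, graceful degradation, and human sign-off) can be combined in a single routing step. The sketch below is one possible arrangement under stated assumptions: the `Proposal` fields, the two thresholds, and the `route` function are hypothetical, and it assumes the model exposes some usable confidence score, which many real systems do not provide reliably.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    SUGGEST = "suggest"              # show as an editable suggestion
    ASK_CLARIFICATION = "clarify"    # model is unsure: ask instead of guessing
    REQUIRE_SIGNOFF = "signoff"      # irreversible: a human must approve
    BLOCK = "block"                  # violates a hard rule


@dataclass
class Proposal:
    action: str
    amount: float
    confidence: float    # assumed model score in [0, 1]
    irreversible: bool


# Illustrative thresholds only; real values would come from policy and testing.
MAX_AMOUNT = 10_000.0
MIN_CONFIDENCE = 0.8


def route(p: Proposal) -> Decision:
    # Hard rules run first so that no confidence score can override them.
    if p.amount > MAX_AMOUNT:
        return Decision.BLOCK
    # Low confidence degrades to a question rather than a definitive answer.
    if p.confidence < MIN_CONFIDENCE:
        return Decision.ASK_CLARIFICATION
    # Confident but irreversible actions still need a human sign-off.
    if p.irreversible:
        return Decision.REQUIRE_SIGNOFF
    return Decision.SUGGEST


print(route(Proposal("categorize_expense", 42.0, 0.93, irreversible=False)))
print(route(Proposal("wire_transfer", 500.0, 0.95, irreversible=True)))
```

Ordering the checks this way keeps the deterministic rules auditable on their own, independent of whatever model sits behind the confidence score.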

Governance and regulation: policy implications grounded in the past

The 1980s admonitions about hype and overdesign map to current regulatory debates. Three policy directions emerge as pragmatic:

- Require transparency about capabilities and limits. Public-facing products should declare the confidence and typical failure modes of their assistants.

- Mandate data provenance and revocation rights. Users should be able to view what historical data informs an assistant’s behavior and request deletion or correction.

- Regulate high-risk autonomous behaviors. For agents that act across accounts or execute transactions, require explicit consent flows and auditable logs.
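On the product side, provenance, revocation, and auditable logs could look something like the following Python sketch. It is an in-memory illustration only: the `UserMemory` class, its field names, and the opt-in flag are hypothetical, and a real system would also need durable storage, authentication, and deletion that propagates to any derived data.

```python
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class MemoryItem:
    key: str
    value: str
    source: str       # provenance: where the assistant learned this
    stored_at: str    # ISO 8601 timestamp, UTC


@dataclass
class UserMemory:
    opted_in: bool = False
    items: dict[str, MemoryItem] = field(default_factory=dict)
    audit_log: list[tuple[str, str]] = field(default_factory=list)

    def remember(self, key: str, value: str, source: str) -> bool:
        """Store a preference only if the user has opted in."""
        if not self.opted_in:
            return False
        now = datetime.now(timezone.utc).isoformat()
        self.items[key] = MemoryItem(key, value, source, now)
        self.audit_log.append(("remember", key))
        return True

    def export(self) -> list[dict]:
        """Let the user see everything that informs the assistant."""
        return [asdict(item) for item in self.items.values()]

    def forget(self, key: str) -> bool:
        """Erase one item; the log keeps the key but not the value."""
        removed = self.items.pop(key, None) is not None
        if removed:
            self.audit_log.append(("forget", key))
        return removed


memory = UserMemory(opted_in=True)
memory.remember("tone", "formal", source="edited 5 drafts in March")
print(memory.export())
memory.forget("tone")
print(memory.audit_log)
```

Whether an audit log may retain even the key of a deleted item is itself a policy question; the sketch simply makes that choice visible.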

Business strategy: how companies should think about “softer” AI today

- Compete on utility, not mystique. Products that demonstrably save time will outlast those that sell narratives. Prioritize measurable UX improvements.

- Leverage edge-local personalization where feasible. Not every assistant needs full cloud memory; keeping personalization on the device reduces privacy risk and compliance friction.

- Use partnerships for domain expertise. Combine general models with domain-specific knowledge curated by experts to raise reliability and lower regulatory risk.

Tactical playbook: from prototype to production

- Start with a low-risk pilot. Identify a repetitive task and instrument it for automation trials.

- Build a feedback loop. Track user corrections and use them to refine model prompts, rules, or training datasets.

- Add transparency surfaces. Show users why a suggestion was made (e.g., “Based on your last five filings, we suggest…”).

- Introduce memory controls. Allow users to opt in to remembering context for specific tasks, with clear undo and export options.

- Scale with hybrid architectures. Move from rule-based prototypes to model-augmented systems only after establishing guardrails.
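The feedback loop in this playbook depends on recording what users actually do with each suggestion. A minimal sketch of that bookkeeping follows; the `SuggestionStats` class, the three outcome labels, and the feature name are hypothetical, and a production system would persist events rather than hold them in memory.

```python
from collections import Counter
from dataclasses import dataclass, field

OUTCOMES = ("accepted", "edited", "rejected")


@dataclass
class SuggestionStats:
    counts: Counter = field(default_factory=Counter)

    def record(self, feature: str, outcome: str) -> None:
        """Log what the user did with one suggestion."""
        if outcome not in OUTCOMES:
            raise ValueError(f"unknown outcome: {outcome!r}")
        self.counts[(feature, outcome)] += 1

    def rates(self, feature: str) -> dict[str, float]:
        """Share of each outcome for a feature (all zeros if no data yet)."""
        total = sum(self.counts[(feature, o)] for o in OUTCOMES)
        if total == 0:
            return {o: 0.0 for o in OUTCOMES}
        return {o: self.counts[(feature, o)] / total for o in OUTCOMES}


stats = SuggestionStats()
for outcome in ["accepted", "accepted", "edited", "rejected"]:
    stats.record("autofill_filing", outcome)

print(stats.rates("autofill_filing"))
```

A rising "edited" share is often the most useful signal: the suggestion is close enough to keep but wrong enough to need correction, which is exactly the material a feedback loop can learn from.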

Cultural lessons for product teams

- Avoid jargon-driven roadmaps. Terms like “autonomous agent” should be translated into user stories with accept/reject rates, safety checks, and audit requirements.

- Maintain a humility-first posture. Celebrate assistive wins and document failure cases publicly to build trust.

- Reward explainability. Incentivize engineers to produce interpretable signals that product teams can surface to users.

Case studies: echoes of softer software in modern products

- Productivity copilots that remember editorial tone and preferred formatting emulate the 1980s idea of macros that “remember” your habits.

- Email triage models that learn which messages a user prioritizes are direct descendants of rule-based, user-focused automation systems.

- Low-code automation platforms that allow non-programmers to record workflows and add conditions reflect the same usability priorities that drove early spreadsheet macros.

Where the analogy breaks down — and why that matters

While the lineage from softer software to modern AI is useful, important differences require attention:

- Scale and externalities. Modern models trained on massive third-party corpora introduce cross-user effects and copyright disputes that early personal-computing tools never faced.

- Economic concentration. Platform-level model ownership creates competitive dynamics and vendor lock-in risks that the 1980s ecosystem (dominated by desktop apps) did not introduce.

- Novel failure modes. “Hallucinations,” biased inferences, and syntactic plausibility without semantic grounding are problems without close analogues in classic rule-based systems.

A balanced conclusion: use the past as a compass, not a map

The softer-software story is more than nostalgia. It is a disciplined product philosophy that emphasizes measurable, incremental improvements over grand rhetorical claims. Gates and Simonyi’s instincts were right when they prioritized usefulness, predictability, and partnership between users and machines. Their framing offers vital guardrails for 2026: resist hype, engineer explainability, and design for user control.

At the same time, the landscape has changed. Cloud-scale models, centralized platforms, and new social harms demand new engineering and regulatory responses. The most productive path forward is hybrid: embrace large-scale model capabilities where they clearly add value, but deploy them under the softer-software constraints that have consistently won out: transparency, reversibility, and human oversight.

If you are building AI today, ask not whether your product is “intelligent,” but whether it reliably makes someone’s work easier, safer, and more efficient — and whether that assistance can be audited and undone. The rhetoric may change across decades, but the engineering imperative remains timeless: build systems that help people do their jobs better, and measure that help in plain, empirical terms.
