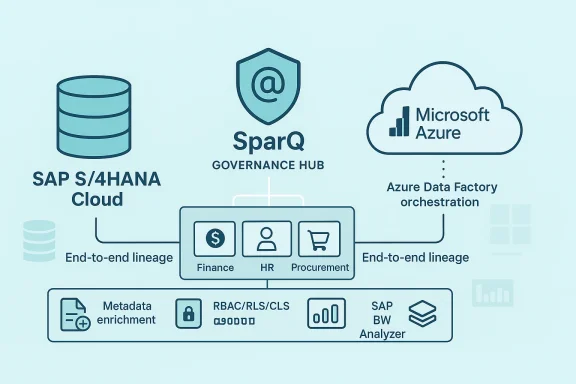

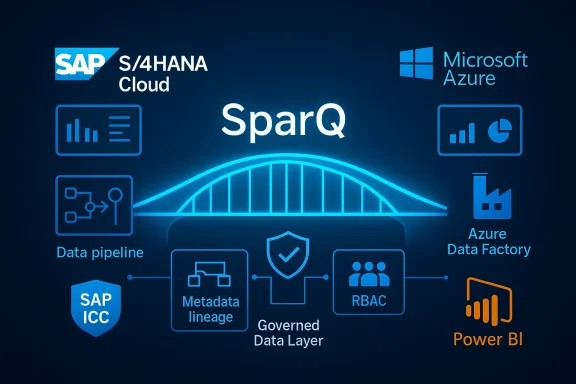

Kagool’s SparQ has been formally recognised by SAP’s Integration and Certification Center as SAP‑certified for clean core with SAP S/4HANA Cloud — a milestone announced on February 24, 2026 that positions the platform as a governed bridge between SAP’s digital core and Microsoft Azure analytics services. The certification covers SparQ integration software, Version 2.3, and reinforces Kagool’s positioning: deliver enterprise-grade, AI‑ready datasets from S/4HANA Cloud into Azure while preserving the integrity of the SAP clean core strategy.

Background: why "clean core" matters now

The concept of a clean core has moved from best practice to board‑level strategy for SAP customers. SAP’s clean core guidance encourages organisations to keep the S/4HANA core as close to standard as possible, push differentiation to side‑by‑side extensions, and manage custom logic through upgrade‑safe approaches. The result is faster upgrades, lower technical debt, and a more resilient digital core ready for business innovation.

At the same time, enterprises are under pressure to democratise analytics and scale AI use cases. That creates a tension: business teams demand fast, flexible access to data for Power BI dashboards and generative AI experiments, while IT and SAP teams must avoid changes that jeopardise upgradeability or create compliance risk in the core ERP.

Kagool’s SparQ is pitched squarely at that tension. The product promises a governed, automated data pipeline to Azure that gives business users analytic agility while maintaining SAP clean core hygiene — and the SAP certification is intended to signal vendor alignment with SAP’s architecture and upgrade commitments.

What Kagool announced: the essentials

- The announcement, dated February 24, 2026, names SparQ integration software, Version 2.3, as SAP‑certified for clean core with SAP S/4HANA Cloud.

- SparQ is framed as a governed enterprise data platform that moves and prepares SAP data into Microsoft Azure for reporting, analytics, and AI.

- Core product themes described by the vendor include:

  - Automated, governed SAP‑to‑Azure data pipelines

  - Pre‑built domain data models (Finance, HR, Procurement, Inventory)

  - Enterprise governance (metadata enrichment, sensitivity classification, compliance tagging, lineage)

  - Data observability (quality monitoring, freshness tracking)

  - Centralised access controls (RBAC, Row‑Level Security, Column‑Level Security, audit trails)

  - SAP BW Analyser capability to inventory and rationalise legacy BW landscapes

  - An Approval Cockpit for delegated approvals of dataset uploads/downloads

  - Example GUI workflows that automate Azure Data Factory (ADF) orchestration and Databricks CDC/transformation in the background

Why the SAP ICC clean core certification matters (and what it does — and doesn’t — guarantee)

The SAP Integration and Certification Center (ICC) clean core designation is an important signal for SAP customers evaluating third‑party solutions for S/4HANA Cloud integration.

What the certification delivers:

- A formal validation that the vendor’s integration approach complies with SAP’s clean core principles and integration patterns.

- A public assurance that the solution has been tested against SAP S/4HANA Cloud integration standards for the certified version.

- Confidence that the solution follows upgrade‑safe practices promoted by SAP, reducing the likelihood of unsupported modifications to SAP objects.

What the certification does not deliver:

- Zero operational work: integration still requires project architecture, SAP Basis and security configuration, and organisational governance.

- Pricing, performance at scale, or fit for a specific customer’s BW migration complexity — those remain implementation matters.

- Permanent compliance across future product versions: customers should verify upgrade and support commitments; SAP’s clean core program generally expects partners to maintain upgrade‑readiness as new S/4HANA releases appear.

What SparQ brings technically: a closer look

SparQ is presented as a unified data platform with several discrete technical layers and capabilities. Breaking these down:

Ingestion & change data capture (CDC)

- The product automates the extraction of SAP S/4HANA Cloud data and delivers it to Azure targets. Kagool references orchestration with Azure Data Factory (ADF) and transformation and Delta Lake processing in Databricks, with CDC support to keep datasets current.

- CDC modes and delta strategies are essential in SAP migrations: timestamp deltas, counter fields, and join‑aware CDC reduce load and preserve transactional integrity. Kagool’s approach appears to combine ABAP‑based extraction logic (proprietary or certified ABAP extractors) with Azure orchestration.
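The announcement does not describe SparQ's delta mechanics, so the following Python is only a generic sketch of the timestamp‑delta pattern named above, not Kagool's implementation. It assumes each extracted row carries a business key (`key`) and a last‑changed timestamp (`changed_at`). The function names, the five‑minute overlap window, and the sample document numbers are all hypothetical.

```python
from datetime import datetime, timedelta

# Illustrative only: rows are plain dicts with a business key ("key") and a
# last-changed timestamp ("changed_at"). Neither name comes from SparQ.


def extract_delta(source, watermark, overlap=timedelta(minutes=5)):
    """Return rows changed after (watermark - overlap) plus the advanced watermark.

    The overlap window deliberately re-reads a few minutes of changes so that
    late-committing transactions are not skipped; merge_into() makes the
    re-read harmless.
    """
    lower_bound = watermark - overlap
    delta = [row for row in source if row["changed_at"] > lower_bound]
    # Never move the watermark backwards, even if only overlap rows were read.
    new_watermark = max([watermark, *(row["changed_at"] for row in delta)])
    return delta, new_watermark


def merge_into(target, delta):
    """Upsert by key, keeping the newest version of each row (idempotent)."""
    for row in delta:
        current = target.get(row["key"])
        if current is None or row["changed_at"] >= current["changed_at"]:
            target[row["key"]] = row


# Usage: two extraction cycles against an in-memory "source table".
source = [
    {"key": "4500000001", "amount": 100.0, "changed_at": datetime(2026, 2, 24, 9, 0)},
    {"key": "4500000002", "amount": 250.0, "changed_at": datetime(2026, 2, 24, 9, 30)},
]
target = {}
watermark = datetime(2026, 2, 24, 8, 0)

delta, watermark = extract_delta(source, watermark)
merge_into(target, delta)

# The first document changes in the source; the next cycle picks it up.
source[0] = {"key": "4500000001", "amount": 120.0, "changed_at": datetime(2026, 2, 24, 10, 0)}
delta, watermark = extract_delta(source, watermark)
merge_into(target, delta)

print(watermark, {k: v["amount"] for k, v in target.items()})
```

The overlap window combined with a key‑based merge is one common way to accept a little re‑read volume in exchange for not missing late commits. A counter‑based delta has the same shape, with a monotonic counter in place of the timestamp.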

Transformation & modelling

- SparQ provides a low‑code/no‑code pipeline studio for configuring transformations, naming conventions, validation, enrichment, and domain data models.

- Pre‑built models for Finance, HR, Procurement and Inventory aim to accelerate delivery and ensure consistent KPI definitions for downstream reporting.
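To make "naming conventions and validation" concrete, here is a minimal Python sketch of a config‑driven transformation step. The SAP field names (`BUKRS`, `BELNR`, `GJAHR`, `DMBTR`) are standard FI fields. The business‑friendly target names, the validation rules, and the functions are hypothetical illustrations, not SparQ's pipeline studio or its pre‑built models.

```python
# Hypothetical naming convention: SAP technical field names mapped to
# business-friendly column names. The SAP fields are standard FI fields;
# the target names are an illustrative choice, not SparQ's.
COLUMN_MAP = {
    "BUKRS": "company_code",
    "BELNR": "document_number",
    "GJAHR": "fiscal_year",
    "DMBTR": "amount_local_currency",
}
REQUIRED = ("company_code", "document_number", "fiscal_year")


def apply_conventions(raw):
    """Rename mapped fields; unmapped fields fall back to lower case."""
    return {COLUMN_MAP.get(name, name.lower()): value for name, value in raw.items()}


def validate(row):
    """Return a list of rule violations (an empty list means the row is valid)."""
    errors = [f"missing {col}" for col in REQUIRED if not row.get(col)]
    fiscal_year = str(row.get("fiscal_year") or "")
    if fiscal_year and not (len(fiscal_year) == 4 and fiscal_year.isdigit()):
        errors.append(f"invalid fiscal_year {fiscal_year!r}")
    return errors


def transform(rows):
    """Split input into accepted rows and (row, errors) rejects for quarantine."""
    accepted, rejected = [], []
    for raw in rows:
        row = apply_conventions(raw)
        errors = validate(row)
        if errors:
            rejected.append((row, errors))
        else:
            accepted.append(row)
    return accepted, rejected


accepted, rejected = transform([
    {"BUKRS": "1000", "BELNR": "0100000001", "GJAHR": "2026", "DMBTR": 150.0},
    {"BUKRS": "1000", "BELNR": "", "GJAHR": "26", "DMBTR": 80.0},
])
print(accepted)
print(rejected)
```

Routing failing rows to a reject list instead of dropping them keeps data quality issues visible to the observability layer.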

Governance, security & observability

- Automated metadata enrichment, sensitivity classification, compliance tagging, and full lineage tracing from SAP tables to reporting datasets are core claims.

- Access controls include RBAC, Row‑Level Security (RLS), Column‑Level Security (CLS), and an Approval Cockpit for delegated approvals when sensitive datasets are uploaded or exported.

- Data quality monitoring and freshness tracking provide operational observability and SLA enforcement.
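The RLS/CLS idea can be shown with a small Python sketch. Rows are filtered by the company codes a user may see, and columns are filtered by sensitivity label, with unlabelled columns hidden by default. In practice this enforcement would typically live in the serving layer (for example Power BI RLS roles or Databricks Unity Catalog row filters and column masks) rather than in application code. The `Principal` type, the labels, and the data are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Principal:
    name: str
    allowed_company_codes: frozenset  # row-level scope
    clearances: frozenset             # sensitivity labels the user may see


# Hypothetical sensitivity labels, as an automated classification step might
# assign them. Columns missing from this map are hidden (default deny).
COLUMN_SENSITIVITY = {
    "company_code": "public",
    "cost_center": "internal",
    "employee_salary": "confidential",
}


def secure_view(rows, principal):
    """Apply row-level, then column-level, filtering for one principal."""
    visible = {
        col for col, label in COLUMN_SENSITIVITY.items()
        if label == "public" or label in principal.clearances
    }
    return [
        {col: val for col, val in row.items() if col in visible}
        for row in rows
        if row.get("company_code") in principal.allowed_company_codes
    ]


rows = [
    {"company_code": "1000", "cost_center": "CC10", "employee_salary": 90000},
    {"company_code": "2000", "cost_center": "CC20", "employee_salary": 85000},
]
analyst = Principal("analyst", frozenset({"1000"}), frozenset({"internal"}))
print(secure_view(rows, analyst))
```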

SAP BW Analyser

- One notable capability is the SAP BW Analyser which inventories legacy BW artifacts, identifies duplicated models and unused reports, maps usage/volumetrics, and estimates the number of Azure models needed. This helps create a migration roadmap and estimate implementation effort.
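Kagool has not published how the BW Analyser works. This Python sketch only illustrates the kind of analysis described: flagging idle queries, grouping providers with identical InfoObject sets as duplicate candidates, and counting the distinct models still needed. The inventory records, the 365‑day idle threshold, and the function names are hypothetical.

```python
from collections import defaultdict
from datetime import date, timedelta

# Hypothetical inventory extract: queries with their InfoProvider and last
# execution date, and the InfoObjects each provider exposes.
QUERIES = [
    {"name": "ZFI_Q001", "provider": "ZFI_C01", "last_run": date(2026, 1, 20)},
    {"name": "ZFI_Q002", "provider": "ZFI_C02", "last_run": date(2024, 6, 1)},
    {"name": "ZMM_Q010", "provider": "ZMM_C01", "last_run": None},
]
PROVIDERS = {
    "ZFI_C01": {"0COMP_CODE", "0FISCPER", "0AMOUNT"},
    "ZFI_C02": {"0COMP_CODE", "0FISCPER", "0AMOUNT"},
    "ZMM_C01": {"0MATERIAL", "0PLANT", "0QUANTITY"},
}


def unused_queries(queries, as_of, max_idle_days=365):
    """Queries never run, or not run within max_idle_days before as_of."""
    cutoff = as_of - timedelta(days=max_idle_days)
    return [q["name"] for q in queries if q["last_run"] is None or q["last_run"] < cutoff]


def duplicate_providers(providers):
    """Groups of providers exposing exactly the same set of InfoObjects."""
    by_signature = defaultdict(list)
    for name, fields in providers.items():
        by_signature[frozenset(fields)].append(name)
    return [sorted(names) for names in by_signature.values() if len(names) > 1]


def estimate_target_models(queries, providers, as_of, max_idle_days=365):
    """Distinct provider signatures still backing at least one active query."""
    unused = set(unused_queries(queries, as_of, max_idle_days))
    active = {q["provider"] for q in queries if q["name"] not in unused}
    return len({frozenset(providers[p]) for p in active})


as_of = date(2026, 2, 24)
print(unused_queries(QUERIES, as_of))
print(duplicate_providers(PROVIDERS))
print(estimate_target_models(QUERIES, PROVIDERS, as_of))
```

Identical field sets are a crude duplicate test. A real assessment would also compare filters, aggregation levels, and data sources before consolidating anything.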

Integration stance

- Kagool positions SparQ as an enterprise data layer, not a BI front end. The platform is designed to feed trusted, governed datasets to Power BI, Databricks, Fabric, and other analytics runtimes.

Strengths: where SparQ can immediately add value

- Alignment with SAP clean core guidance. The certification removes a key vendor evaluation blocker for SAP S/4HANA Cloud customers worried about side‑effects of third‑party integration.

- Speed of delivery for analytics. Pre‑built data models and automated ingestion pipelines reduce the time to generate trusted reporting datasets for Power BI teams.

- Governance by design. Integrated lineage, metadata, sensitivity classification, and approval workflows help enterprises meet audit and compliance requirements while enabling decentralised report creation.

- Targeted BW rationalisation. The BW Analyser is a practical tool: BW estates are often littered with duplicate cubes, unused queries, and obsolete reports. A discovery tool that quantifies re‑use opportunities and rationalisation can substantially reduce migration scope.

- Azure‑native orchestration. Pairing ADF with Databricks is a pragmatic pattern in Microsoft ecosystems (ADF for control and orchestration, Databricks for heavy transformation), and many enterprises already support it operationally.

- Business‑friendly operations. Low‑code/no‑code pipeline configuration and governance workflows enable business analysts to participate without bypassing central controls.

Practical risks, caveats, and areas for buyer due diligence

While the certification and product claims are compelling, there are several practical considerations every CIO and data leader should evaluate before committing.

1. Scope of the certification vs. implementation realities

- Certification covers the integration software for the declared version (2.3). Customers should confirm the scope of the certification, including whether companion components (extraction packages, connectors) used in their rollout are part of the certified artefacts.

- Future S/4HANA updates will require the vendor to maintain upgrade readiness — confirm contractual commitments and the vendor’s roadmap for certification continuity.

2. Hidden complexity in BW migrations

- The SAP BW Analyser can quantify and prioritise assets, but migrating complex BW logic (calculated key figures, process chains, multi‑dimensional models) to relational or lakehouse models is non‑trivial.

- Expect significant effort for semantic parity: KPI definitions, currency translations, time‑dependent attributes and calculated measures must be re‑engineered, tested, and validated.
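Time‑dependent attributes show clearly why semantic parity needs deliberate re‑engineering. BW typically resolves them against a query key date, whereas a naive lakehouse join might attach today's value to all history. The Python sketch below uses hypothetical cost‑centre‑to‑profit‑centre assignments to show how the two interpretations produce different totals from the same postings. The names and data are illustrative only.

```python
from datetime import date

OPEN_END = date(9999, 12, 31)  # BW-style open-ended validity

# Hypothetical time-dependent attribute: cost centre -> profit centre, with
# inclusive validity intervals.
ASSIGNMENTS = {
    "CC10": [
        (date(2024, 1, 1), date(2025, 6, 30), "PC_NORTH"),
        (date(2025, 7, 1), OPEN_END, "PC_CENTRAL"),
    ],
}


def attribute_as_of(key, on):
    """Return the attribute value valid on a given date, or None."""
    for valid_from, valid_to, value in ASSIGNMENTS.get(key, []):
        if valid_from <= on <= valid_to:
            return value
    return None  # no valid assignment on that date


def totals_by_profit_center(facts, key_date=None):
    """key_date=None: use the assignment valid on each posting date (historical view).
    key_date=<date>: restate all postings with the assignment valid on that date."""
    totals = {}
    for fact in facts:
        on = key_date or fact["posting_date"]
        pc = attribute_as_of(fact["cost_center"], on)
        totals[pc] = totals.get(pc, 0.0) + fact["amount"]
    return totals


facts = [
    {"cost_center": "CC10", "posting_date": date(2025, 3, 15), "amount": 100.0},
    {"cost_center": "CC10", "posting_date": date(2025, 9, 1), "amount": 40.0},
]
print(totals_by_profit_center(facts))
print(totals_by_profit_center(facts, key_date=date(2026, 2, 24)))
```

Neither view is wrong. The target model must reproduce whichever behaviour each existing BW report relies on, and that choice has to be tested explicitly.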

3. Cost and vendor lock‑in considerations

- ADF and Databricks have well‑documented, consumption‑based pricing, but large‑scale CDC and heavy transformations can be expensive. Model expected consumption and run a cost projection.

- Organisations should review whether SparQ workflows create dependencies on Kagool‑specific metadata stores, and ensure exportability of models and lineage to avoid vendor lock‑in.
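A first‑pass cost projection can be simple arithmetic over run cadence and duration. The Python sketch below compares the same hypothetical CDC workload at two cadences. Every rate is a placeholder to be replaced with your negotiated Azure and Databricks prices, and the model ignores cluster start‑up time, storage, network egress, and SparQ licensing.

```python
from dataclasses import dataclass


@dataclass
class PipelineProfile:
    name: str
    runs_per_day: int
    minutes_per_run: float             # billed compute time per run
    compute_rate_per_hour: float       # hypothetical blended cluster rate
    orchestration_cost_per_run: float  # hypothetical per-run orchestration charge

    def monthly_cost(self, days=30):
        """Compute hours times rate, plus a flat orchestration charge per run."""
        runs = self.runs_per_day * days
        compute_hours = runs * self.minutes_per_run / 60
        return compute_hours * self.compute_rate_per_hour + runs * self.orchestration_cost_per_run


# Same hypothetical workload at two CDC cadences; all rates are placeholders.
profiles = [
    PipelineProfile("finance_cdc_15min", 96, 6.0, 4.0, 0.01),
    PipelineProfile("finance_cdc_hourly", 24, 10.0, 4.0, 0.01),
]
for p in profiles:
    print(f"{p.name}: {p.monthly_cost():.2f} per month")
```

With these placeholder numbers, moving from 15‑minute to hourly CDC cuts the estimate by more than half. That is why freshness SLAs and cost targets should be agreed together.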

4. Security & compliance posture

- The platform claims encryption, sensitivity classification, and auditability, but buyers must validate:

  - Where encryption keys are managed (customer‑managed keys recommended)

  - How CLS/RLS integrates with the organisation’s identity provider and access management

  - Data residency and retention controls for regulated industries

5. Operational ownership & skillset

- Orchestration across ADF, Databricks, and SAP extraction layers requires a blended skillset: SAP Basis/ABAP, Azure platform engineering, data engineering on Databricks, and data governance teams.

- Organisations must ensure SRE runbooks, incident escalation, and monitoring are defined; certification does not eliminate the need for operational maturity.

6. Vendor claims and performance metrics

- Marketing claims (e.g., “reduce manual reporting effort by X%” or “3x faster insight delivery”) should be validated via proof of value pilots. Ask for customer references, measurable before/after metrics, and architecture blueprints for similar deployments.

Recommended adoption playbook for CIOs and data leaders

If you’re evaluating SparQ (or any certified clean core integration solution), follow a staged adoption approach to reduce risk and accelerate value.

- Pilot selection and business case

  - Pick one domain (Finance or Inventory) with moderate complexity and clear KPIs.

  - Define success metrics: data freshness SLAs, report delivery time, reduction in manual ETL hours.

- Discovery & BW rationalisation

  - Run the SAP BW Analyser to identify candidate reports/models for migration.

  - Rationalise: retire unused artefacts, consolidate duplicates, and prioritise high‑value models.

- Architecture & security design

  - Define target Azure architecture (ADF orchestration, Databricks compute, ADLS/Delta Lake storage).

  - Confirm key management, identity integration (Microsoft Entra ID, formerly Azure AD), and encryption at rest and in transit.

- Proof of Value pipeline

  - Configure a single, governed pipeline for extraction, CDC, and transformation into a gold dataset.

  - Validate lineage, sensitivity labels, and RLS/CLS enforcement with a sample report consumed by Power BI.

- Operationalise observability & runbooks

  - Implement monitoring (pipeline health, freshness, quality alerts) and incident runbooks.

  - Define roles: data owners, data stewards, platform SRE, and consumer champions.

- Scale and expand

  - Expand to other domains, iterating on model templates and governance policies.

  - Optimise compute patterns for cost and performance, e.g. use Databricks job clusters for heavy transforms and ADF for orchestration and light processing.

- Contractual & certification governance

  - Ensure SLAs for vendor support, patching, and upgrade readiness are contractually agreed.

  - Define a cadence to re‑validate certification alignment after major S/4HANA releases.
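The observability step in the playbook can start with an automated freshness check against agreed SLAs. This minimal Python sketch assumes a per‑dataset record of the last successful load time. The dataset names and SLA values are hypothetical. In a real deployment the timestamps would come from pipeline run history and the messages would feed an alerting channel.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical freshness SLAs: maximum age of the last successful load.
SLAS = {
    "finance_gl_gold": timedelta(hours=1),
    "inventory_stock_gold": timedelta(hours=4),
}


def freshness_breaches(last_loaded, now):
    """Return one message per dataset that is stale or has never loaded."""
    breaches = []
    for dataset, sla in SLAS.items():
        loaded = last_loaded.get(dataset)
        if loaded is None:
            breaches.append(f"{dataset}: no successful load recorded")
        elif now - loaded > sla:
            breaches.append(f"{dataset}: {now - loaded} old, SLA {sla}")
    return breaches


now = datetime(2026, 2, 24, 12, 0, tzinfo=timezone.utc)
last_loaded = {
    "finance_gl_gold": datetime(2026, 2, 24, 10, 30, tzinfo=timezone.utc),
    "inventory_stock_gold": datetime(2026, 2, 24, 9, 0, tzinfo=timezone.utc),
}
print(freshness_breaches(last_loaded, now))
```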

Operational and cost considerations to model up front

- Plan CDC cadence and retention: How long will you store historical versions in Delta Lake? Retention impacts storage costs and compliance.

- Model compute patterns: short‑lived interactive Databricks clusters for dev/validation and scheduled job clusters for production transforms can optimise costs.

- Security: use customer‑managed keys, and ensure audit events from SparQ flow into enterprise SIEM for compliance monitoring.

- Licensing: confirm the licensing model — platform subscription, per‑pipeline pricing, or capacity tiers — and how that interacts with Azure consumption.

- Change management: provide training and governance for citizen Power BI developers so that they consume published datasets rather than bypassing governance.
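A rough storage estimate helps answer the retention question above. Delta Lake keeps superseded data files so that time travel works, and VACUUM removes files older than the retention threshold (the `delta.deletedFileRetentionDuration` table property, 7 days by default). The Python sketch below assumes a steady daily change volume and a hypothetical rewrite factor, because MERGE operations rewrite whole files even when only some of their rows change. All sizes are placeholders.

```python
def estimated_storage_gb(table_gb, daily_change_gb, retention_days, rewrite_factor=1.0):
    """Rough steady-state footprint: live table plus superseded data files kept
    for time travel until VACUUM can remove them.

    rewrite_factor (>= 1) is an assumed multiplier for MERGE rewriting whole
    files when only some rows in them change.
    """
    superseded_gb = daily_change_gb * rewrite_factor * retention_days
    return table_gb + superseded_gb


# Placeholder sizes: 500 GB live table, 5 GB of changed data per day.
for days in (7, 30, 365):
    print(days, estimated_storage_gb(500.0, 5.0, days, rewrite_factor=3.0))
```

Long retention for audit purposes may be better served by an explicit history table (for example a slowly changing dimension) than by keeping years of time‑travel files.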

How this fits broader cloud and analytics strategies

- For organisations standardising on Microsoft Azure, a certified integration that automates pipelines into ADF/Databricks reduces integration project risk and speeds analytics enablement.

- Enterprises balancing decentralised BI and centralised governance will benefit from a model where trusted datasets are centrally produced and curated, while report authors retain agility to build business‑focused dashboards.

- For customers migrating off SAP BW, the combination of a discovery/rationalisation tool plus automated pipeline generation can significantly reduce the initial lift — but ongoing semantic reengineering remains the critical workstream.

Final assessment: credible step forward with sensible caveats

Kagool’s announcement that SparQ (Version 2.3) is certified by SAP for clean core with S/4HANA Cloud is a meaningful commercial and technical validation. The certification removes a key hurdle for organisations that must reconcile SAP clean core principles with enterprise demands for faster, AI‑ready analytics. The product’s emphasis on governance, automated pipelines, CDC support, and a BW Analyser makes it a credible option for Azure‑centric SAP customers seeking an accelerated migration and reporting modernisation path.

However, certification is a starting point — not a guarantee of project simplicity. Buyers should validate the vendor’s operational maturity, confirm the scope and limits of the certification for their planned architecture, model ongoing run costs for ADF and Databricks workloads, and factor in the non‑trivial semantic work required to faithfully reproduce BW logic in Azure models.

For CIOs and data leaders evaluating SparQ, recommended next steps are: run a focused proof of value on one domain, validate governance and RLS/CLS integration with your identity management, and verify contractual commitments for upgrade readiness as SAP releases evolve. Done well, a certified platform like SparQ can become the trusted data backbone for enterprise reporting and AI — accelerating insight delivery while honouring the discipline of a clean SAP core.

Conclusion

SparQ’s SAP clean core certification is a timely validation that addresses a real market tension: enabling enterprise analytics and AI without compromising the upgradeability and integrity of S/4HANA Cloud. The platform’s combination of ingestion automation, BW rationalisation tooling, governance controls, and Azure‑native pipeline orchestration positions it as a practical option for organisations embracing Microsoft cloud for analytics. Still, the usual caveats apply: test with a pilot, quantify migration effort for complex BW semantics, verify operational and cost assumptions, and ensure contractual commitments hold across S/4HANA release cycles. With those precautions, SparQ could materially shorten the path from SAP data to trusted, auditable insights in Azure.

Source: Weekly Voice SparQ by Kagool Is Certified by SAP® for clean core with SAP S/4HANA Cloud | Weekly Voice