Enterprise AI’s most consequential lesson in 2026 is surprisingly old-school: start small, stitch carefully, and govern deliberately.

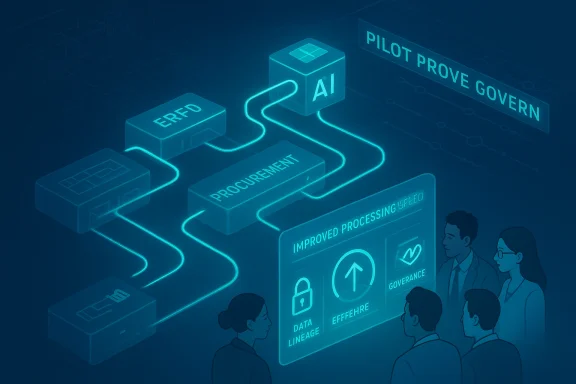


Background / Overview

Enterprises are not failing to adopt artificial intelligence because the technology is immature; they’re choosing restraint because the operational world around AI is messy, regulated, and heterogeneous. The pattern is consistent across industries: organisations are embedding narrowly scoped AI into existing systems and workflows rather than attempting wholesale redesigns. That pragmatic sequencing (pilot, prove, govern, then scale) explains why many early AI wins look pedestrian on the surface but are highly consequential in the aggregate. This measured approach is visible across practitioner playbooks and vendor roadmaps and reflects an industry-wide preference for containment and measurability over grand transformation narratives.

What’s emerging is a reproducible pattern: AI delivers the fastest value when treated as a workflow accelerator, a capability sewn into the seams of established applications, rather than as a new platform that displaces the systems people already know how to operate. This article unpacks why enterprise AI adoption “starts small,” analyzes the technical and organizational constraints that keep it that way, and lays out pragmatic guidance for CIOs and technology leaders who want to move from experimentation to durable value.

Why enterprises choose narrow AI deployments

1) Fit beats novelty: AI as an enhancer, not a revolution

Enterprises prefer AI to enhance familiar processes, because replacing entrenched systems is expensive, risky, and slow. When AI is used to classify spend, monitor suppliers, or improve compliance workflows inside a procurement system, it increases governance and insight without forcing a massive replatforming effort. Narrow pilots let teams confirm that AI behaves correctly against their specific data and control models before expanding its footprint. This is a core takeaway repeatedly observed in practitioner accounts: containment produces confidence and reduces downstream change management costs.

2) Integration complexity outpaces model capability

The technical story is simple: today’s models can do a lot. The hard part is embedding them into enterprise application architectures that were not designed with intelligent services in mind. Years of SaaS proliferation have left many enterprises with distributed data, duplicated processes, and brittle integrations. Adding AI to that mix magnifies coordination problems, and integration complexity often grows faster than model capability. Examples from ERP modernization efforts show how aligning market signals, historical data, pricing rules, and live order-entry systems is the gating factor for scaled value, not the accuracy of a model per se.

3) Data readiness must come first

Reliable AI outcomes are the product of disciplined data environments. Model sophistication matters, but without standardised data pipelines and consistent process mapping, AI tends to amplify noise. Organisations embedding AI into IoT, digital twins, or ERP systems frequently find that intelligence only becomes useful once a unified data architecture exists. That sequencing (structure first, intelligence second) is why many enterprises modernise their data foundations while running narrow AI pilots. Short-cycle pilots against clean, curated datasets produce the trust necessary to proceed.

4) Measurable ROI is easier in narrow scopes

Narrow deployments make value tangible. When AI is tied to a specific operational task (for example, invoice OCR plus automated verification), leaders can measure improvements in speed, error rates, and throughput within weeks. That visibility is critical for justifying additional investment. Broad, ambitious transformations create too many moving parts to isolate the contribution of AI, which inhibits decision-making. The ROI-first approach helps convert experimental budgets into predictable, repeatable projects.

5) Governance constraints force a phased approach

AI introduces new oversight requirements around data privacy, model explainability, access controls, and human accountability. Enterprises are rightly cautious: very few organisations are comfortable letting opaque models make decisions without layered human review and audit trails. Confining pilots to specific functions gives IT and compliance teams time to develop governance playbooks that can later scale. Mature organisations increasingly treat controlled deployment as both a compliance strategy and a risk-management discipline.

6) Organizational muscle: new roles and cross-functional coordination

Scaling AI requires more than models and data; it needs new operational muscles. Enterprises are quietly hiring cross-functional operators (roles sometimes labelled AI productivity director, LLMops owner, or AI product manager) who translate AI capability into repeatable operations. These operators sit at the intersection of IT, data, and the business, shepherding pilots from proof-of-concept to production and establishing the guardrails needed for expansion. That operational capability does not scale overnight; it is built case by case.

Embedding AI inside workflows: practical advantages and examples

Faster time-to-value

Embedding AI in existing tools reduces the friction of change. Workers continue to use familiar interfaces while benefiting from automation and improved insight. The Department for Work and Pensions’ controlled trials of embedded assistants (for example, AI copilots inside productivity suites) demonstrate that time savings and user satisfaction are achievable when governance and training accompany the rollout. Those controlled deployments produce measurable benefits while containing risk exposure.

Lower integration friction

A contained AI service that enriches a business process (e.g., spend classification inside procurement) typically needs fewer integration touchpoints than a platform-level replacement. This reduces the coordination load across vendors, middleware, and internal teams. Practically, this means shorter delivery cycles and faster learning loops: teams can iterate on model prompts, evaluation metrics, and human-in-the-loop patterns without waiting for enterprise-wide architecture changes.

Stronger governance signal

When AI is limited to a defined function, compliance teams can instrument logging, data lineage, and review checkpoints in a focused way. That narrower scope lets organisations test model explainability, bias mitigation, and privacy-preserving techniques in production without exposing the whole estate to risk. Effective governance at small scale creates templates and artifacts that streamline broader adoption later.

The integration problem: architecture, not accuracy

Why integration is the true bottleneck

Many CIOs report that the hardest part of scaling AI is aligning it with existing architecture: reconciling master data, permission models, and business logic across systems. It's not an academic issue: a pricing recommendation generated by an LLM must respect contractual discounts, regional tax rules, legacy overrides, and live inventory constraints before it can be safely surfaced to a seller. Building those guardrails requires engineering work that is often more arduous than training or fine-tuning a model. The result: model innovation can outpace organisational ability to adopt it safely.

Practical integration checklist

- Inventory data sources and owners.

- Map business logic and exception paths for the target workflow.

- Define human-in-the-loop escalation paths.

- Implement secure model access and audit logging.

- Run closed-loop validation with production-like data.
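As a rough illustration of the checklist, the sketch below validates a model-suggested price against a few business rules, routes failures to human review, and appends every decision to an audit log. All names here (`PriceContext`, `Decision`, `check_recommendation`) and the three rules are hypothetical, chosen only to mirror the pricing example above; a real guardrail would also cover tax rules and legacy overrides.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PriceContext:
    """Business facts the recommendation must respect (illustrative fields)."""
    list_price: float
    max_contract_discount: float  # 0.15 means at most 15% off list price
    units_in_stock: int


@dataclass
class Decision:
    approved: bool
    needs_human_review: bool
    reasons: List[str] = field(default_factory=list)


def check_recommendation(suggested_price: float, quantity: int,
                         ctx: PriceContext, audit_log: List[dict]) -> Decision:
    """Validate a model-suggested price against simple business rules."""
    reasons = []
    floor = ctx.list_price * (1 - ctx.max_contract_discount)
    if suggested_price < floor:
        reasons.append("price below contractual discount floor")
    if suggested_price > ctx.list_price:
        reasons.append("price above list price")
    if quantity > ctx.units_in_stock:
        reasons.append("quantity exceeds live inventory")

    # Any rule violation blocks auto-approval and escalates to a person.
    decision = Decision(approved=not reasons,
                        needs_human_review=bool(reasons),
                        reasons=reasons)
    # Record every decision, approved or not, for later audit.
    audit_log.append({"suggested_price": suggested_price,
                      "quantity": quantity,
                      "approved": decision.approved,
                      "reasons": list(reasons)})
    return decision
```

The point of the design is that the model never writes directly to the order-entry system: the rules sit between the suggestion and the seller, and the log captures rejected suggestions as well as accepted ones.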

Data readiness: the unstated prerequisite

Build a disciplined data foundation

AI amplifies the effects of both good and bad data. Teams that invest in canonical data models, consistent ETL pipelines, and operational telemetry get reliable gains from relatively simple models. Conversely, organisations that deploy complex models on inconsistent datasets tend to see noisy or brittle outputs. The practical implication is straightforward: modern enterprises are simultaneously running two programmes, narrow AI pilots and gradual data consolidation, because intelligence without coherence is often misleading.

Tactical steps for data-first AI pilots

- Normalize key entities (customers, suppliers, products).

- Centralize high-value event streams with retention and lineage.

- Implement data quality monitoring on pilot datasets.

- Use synthetic or anonymised data when privacy constraints block access.
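Data quality monitoring on a pilot dataset can start very simply. The sketch below is one possible minimal check, not a prescribed method: it reports the share of rows with missing required fields and the share of duplicate entity keys after basic normalisation. The function name `quality_report` and the field names in the usage example are assumptions made for illustration.

```python
from typing import Dict, Iterable, List, Optional


def quality_report(records: Iterable[Dict[str, Optional[str]]],
                   key_field: str,
                   required_fields: List[str]) -> Dict[str, float]:
    """Return missing-value and duplicate-key rates for a pilot dataset."""
    rows = list(records)
    total = len(rows)
    if total == 0:
        return {"rows": 0, "missing_rate": 0.0, "duplicate_key_rate": 0.0}

    # A row is incomplete if any required field is absent, None, or blank.
    incomplete = sum(
        1 for row in rows
        if any(not (row.get(f) or "").strip() for f in required_fields)
    )

    # Normalise keys (trim, lowercase) so "ACME " and "acme" count as one entity.
    keys = [(row.get(key_field) or "").strip().lower() for row in rows]
    duplicates = total - len(set(keys))

    return {"rows": total,
            "missing_rate": incomplete / total,
            "duplicate_key_rate": duplicates / total}


suppliers = [
    {"supplier_id": "ACME ", "country": "DE"},
    {"supplier_id": "acme", "country": ""},
    {"supplier_id": "globex", "country": "US"},
    {"supplier_id": "initech", "country": None},
]
report = quality_report(suppliers, "supplier_id", ["supplier_id", "country"])
```

Tracking these two rates over the life of a pilot gives an early signal of whether entity normalisation is holding up before model outputs are trusted.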

ROI-first adoption: measuring what matters

How to design pilots that prove value

Start with a hypothesis that links AI to a measurable operational delta. Good pilot metrics are specific: reduction in average processing time, percentage drop in manual exceptions, or improvement in forecast accuracy. Narrow pilots deliver measurable outcomes because they control variance and isolate the AI’s contribution. Once a pilot demonstrates a consistent lift, organisations can begin cost-benefit calculations that include engineering, licensing, and governance overhead.

Avoiding the “transformation trap”

Leaders sometimes succumb to the allure of radical transformation, trying to solve multiple problems with a single AI initiative. That approach increases uncertainty and makes it difficult to measure the AI’s contribution. Instead, pursue a portfolio of small, high-impact pilots that cumulatively deliver broad change. Those pilots also generate case studies and internal advocates that reduce cultural resistance when you scale.

Governance and risk: why controlled adoption is responsible adoption

Practical governance patterns

- Apply role-based access and least-privilege for model calls.

- Record decisions and maintain model versioning for auditability.

- Require human sign-off for model suggestions that affect compliance or finance.

- Maintain a “high‑risk” list of workflows that require stricter controls.
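The patterns above can be combined in a thin wrapper around model calls. The following is a sketch under stated assumptions: the role table, the high-risk list, and the names `governed_call` and `ModelResult` are all hypothetical, and the model is represented by any callable that maps a prompt to text rather than by a specific vendor API.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable, Dict, List, Set

# Illustrative policy tables; a real deployment would load these from a policy store.
ROLE_PERMISSIONS: Dict[str, Set[str]] = {
    "buyer": {"spend_classification"},
    "finance_analyst": {"spend_classification", "invoice_approval"},
}
HIGH_RISK_WORKFLOWS: Set[str] = {"invoice_approval"}


@dataclass
class ModelResult:
    suggestion: str
    model_version: str
    requires_sign_off: bool


def governed_call(role: str, workflow: str, prompt: str,
                  model: Callable[[str], str], model_version: str,
                  audit_log: List[dict]) -> ModelResult:
    """Call a model only if the role is allowed, and record the attempt."""
    allowed = workflow in ROLE_PERMISSIONS.get(role, set())
    # Log before acting, so denied attempts are auditable too.
    audit_log.append({"time": datetime.now(timezone.utc).isoformat(),
                      "role": role,
                      "workflow": workflow,
                      "model_version": model_version,
                      "allowed": allowed})
    if not allowed:
        raise PermissionError(f"role {role!r} may not call workflow {workflow!r}")

    suggestion = model(prompt)
    # Suggestions in high-risk workflows are flagged for human sign-off.
    return ModelResult(suggestion, model_version,
                       requires_sign_off=workflow in HIGH_RISK_WORKFLOWS)
```

Recording the model version with each call is what makes a later audit answerable: a reviewer can tie any suggestion back to the exact model that produced it.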

The accountability imperative

One durable lesson is that responsibility cannot be outsourced. Enterprises must own the governance of models deployed against their data and business logic. That means building internal capabilities (policy, audit, model risk management) rather than relying solely on vendor assurances.

People and process: translating pilots into operational modes

New roles and the factory model for AI

Scaling AI is a socio-technical problem. Organisations are building centralised enablement teams that provide templates, model connectors, and governance controls to business unit pilots. Roles that matter include:

- AI productivity director or AI product manager

- LLMops engineer

- Data steward with domain expertise

- Compliance lead for AI usage

Change management and user adoption

Embedding AI inside existing tools reduces training friction, but leaders must still invest in change management. Simple steps (pilots with early adopter cohorts, clear escalation pathways for false positives, and ongoing user feedback loops) can markedly increase adoption rates and reduce scepticism.

Case studies: what working pilots look like

Procurement automation (representative example)

A procurement team embeds a classification model inside its existing ERP to automate spend categorization and supplier risk scoring. Because the model lives where buyers already work, the team reduces manual reconciliation, increases compliance checks, and can measure a clear uplift in on-time supplier validation. The success hinges on targeted data pipelines and a small set of well-defined outcomes, precisely the conditions that make narrow deployments effective.

Microsoft 365 Copilot trials (controlled deployment example)

Controlled trials of AI assistants inside productivity suites show measurable time savings and improved worker satisfaction when the rollout includes governance and training. These examples indicate that embedding AI in familiar tools, coupled with explicit policy, can yield immediate operational benefits while limiting risk exposure.

ERP decision augmentation (integration-heavy example)

ERP projects that add AI-driven decision support for pricing or inventory must reconcile multiple sources of truth. These efforts require extensive integration work, a reminder that model readiness is necessary but not sufficient; the surrounding architecture often determines whether an AI feature is actionable.

Risks, blind spots, and mitigations

Risk: Overconfidence in model outputs

AI can produce confident-sounding answers that are incorrect. Mitigation: set thresholds that trigger human review, and surface confidence signals and provenance alongside each output.

Risk: Exposed data and compliance gaps

Inadequate data controls can leak sensitive information. Mitigation: apply data minimisation, anonymisation, and secure enclaves; treat model access as an API with least-privilege controls.

Risk: Scaling complexity

What works for one business unit may not translate to another. Mitigation: codify integration patterns, create a central enablement function, and invest in reusable connectors.

Risk: Cost drift and procurement surprises

AI projects can generate unexpected consumption costs without careful FinOps. Mitigation: define cost guardrails and measure cost-per-outcome during pilots.

A pragmatic playbook for leaders

- Start with business outcomes: pick a single, measurable KPI.

- Limit scope: choose a specific workflow or user cohort.

- Prepare data: create a production-like dataset and monitor quality.

- Instrument governance: define model controls, logging, and escalation paths.

- Assign accountability: name an AI product owner and a data steward.

- Measure, iterate, and document: extract learnings and build a reusable template.

- Scale with guardrails: expand to adjacent workflows only after reproducible results.
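The "measure" step of the playbook can be as modest as comparing a baseline sample with a pilot sample for one KPI and tracking spend per processed item. The sketch below assumes average processing time in minutes as the KPI; the function name `pilot_summary` and the figures in the usage example are invented for illustration, not drawn from any real pilot.

```python
from statistics import mean
from typing import Dict, Sequence


def pilot_summary(baseline_minutes: Sequence[float],
                  pilot_minutes: Sequence[float],
                  pilot_cost: float) -> Dict[str, float]:
    """Summarise a pilot: relative time reduction and cost per processed item."""
    if not baseline_minutes or not pilot_minutes:
        raise ValueError("both samples must be non-empty")
    base_avg = mean(baseline_minutes)
    pilot_avg = mean(pilot_minutes)
    return {
        "baseline_avg_minutes": base_avg,
        "pilot_avg_minutes": pilot_avg,
        # Fraction of processing time removed, relative to the baseline.
        "time_reduction": (base_avg - pilot_avg) / base_avg,
        # Total pilot spend divided by items processed during the pilot.
        "cost_per_item": pilot_cost / len(pilot_minutes),
    }


summary = pilot_summary(
    baseline_minutes=[10.0, 12.0, 8.0],
    pilot_minutes=[6.0, 4.0, 5.0, 5.0],
    pilot_cost=200.0,
)
```

A difference in averages on small samples is only a starting signal. Before scaling, it is worth checking that the lift holds across several periods and that the baseline and pilot cohorts handled comparable work.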

Conclusion

Enterprise AI’s early story is not one of dramatic reinvention but of disciplined plumbing: improving the work people already do by carefully inserting intelligence where it delivers measurable value and can be audited. The constraints (integration complexity, data readiness, governance, and organisational capability) are not failures of AI; they are the natural friction of operating technology at scale.

For leaders, the imperative is to treat AI adoption like an operational competency: scaffold pilots with strong data and governance foundations, hire the cross-functional roles that make scale repeatable, and insist on ROI signals before expanding scope. That path is less glamorous than a sweeping platform migration, but it’s how durable, enterprise-grade AI gets built. Start small, instrument everything, and scale only when the architecture, the data, and the governance are aligned, because in the enterprise, fit always outranks novelty.

Source: TechTarget 6 reasons enterprise AI adoption starts small | TechTarget