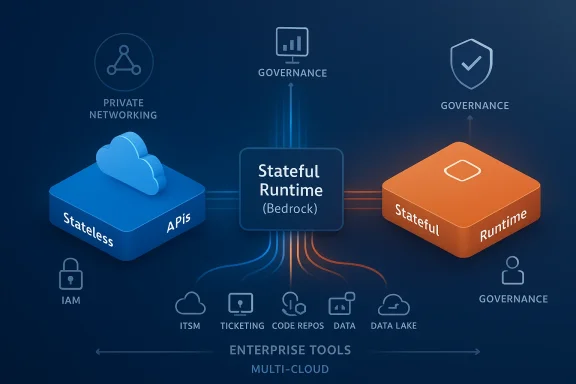

OpenAI’s move to bring a stateful runtime to Amazon Web Services rewrites a key piece of the enterprise AI playbook: models are no longer just stateless engines answering one-off prompts, they’re becoming persistent, orchestrated workers that live inside cloud control planes. Announced February 27, 2026, the collaboration will deliver a Stateful Runtime Environment that runs natively on Amazon Bedrock, positions AWS as the exclusive third‑party distributor for OpenAI’s Frontier enterprise platform, and secures an enormous compute commitment and investment tied into AWS hardware. At the same time, OpenAI and Microsoft publicly reiterated that Azure remains the exclusive home for stateless OpenAI APIs — a careful carve-out that frames this as a control‑plane tug‑of‑war rather than a simple cloud handoff.

Background: why “stateful” matters now

Stateless AI, the familiar model API pattern where each request is independent, has dominated how developers consumed large language models: send a prompt, receive a response, repeat. That pattern works beautifully for many tasks (summaries, translation, coding snippets), but it breaks down when workflows are long-lived, multi-step, permissioned, or reliant on external tools and data systems.

A stateful runtime changes the calculus. Instead of stitching ephemeral API calls together with custom orchestration, the runtime itself preserves context, memory, tool and workflow state, identity boundaries, and the execution environment. In practical terms, that means agents can:

- Remember prior work across hours or days

- Retry and resume long-running tasks safely

- Maintain auditable permission and identity propagation

- Coordinate multiple tool invocations without developer duct‑tape
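The difference between the two patterns can be sketched in a few lines. This is not the OpenAI or AWS API, whose interface is not described here; `WorkflowSession`, `run_step`, `checkpoint`, and the JSON layout are hypothetical names chosen purely for illustration. The sketch shows only the core idea: completed steps are recorded, so a retried or resumed run continues where it left off instead of repeating work.

```python
import json
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class WorkflowSession:
    """Hypothetical session that keeps workflow state between calls."""
    session_id: str
    completed: dict[str, str] = field(default_factory=dict)  # step name -> result

    def run_step(self, name: str, action: Callable[[], str]) -> str:
        # Skip steps that already finished, so a retry resumes instead of repeating.
        if name in self.completed:
            return self.completed[name]
        result = action()
        self.completed[name] = result
        return result

    def checkpoint(self) -> str:
        # Serialize state so another process can pick the session up later.
        return json.dumps({"session_id": self.session_id, "completed": self.completed})

    @classmethod
    def restore(cls, blob: str) -> "WorkflowSession":
        data = json.loads(blob)
        return cls(session_id=data["session_id"], completed=data["completed"])


calls: list[str] = []  # records which actions actually executed


def fetch_claim() -> str:
    calls.append("fetch")
    return "claim-123"


def approve_claim() -> str:
    calls.append("approve")
    return "approved"


session = WorkflowSession("demo")
session.run_step("fetch", fetch_claim)
saved = session.checkpoint()  # pretend the process stops here

resumed = WorkflowSession.restore(saved)
resumed.run_step("fetch", fetch_claim)  # already done, so not re-executed
outcome = resumed.run_step("approve", approve_claim)
```

In a stateless design, the caller would have to carry `saved` around and rebuild this bookkeeping on every request; the pitch for a stateful runtime is that the platform owns it.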

What OpenAI and AWS announced — the headline bullets

- Co-development of a Stateful Runtime Environment for agentic workflows, running natively on Amazon Bedrock and optimized for AWS infrastructure.

- AWS named the exclusive third‑party cloud distribution provider for OpenAI’s Frontier enterprise platform.

- OpenAI committed to consuming roughly 2 gigawatts of AWS Trainium compute, and the companies expanded their cloud commitment substantially. The deal includes a multibillion-dollar investment from Amazon into OpenAI (announced as a $50 billion investment, staged).

- Crucial carve-out: Microsoft and OpenAI said that Azure remains the exclusive cloud provider for stateless OpenAI APIs, and OpenAI’s first‑party products (including Frontier in some contexts) will continue to be hosted on Azure under existing IP and revenue‑sharing terms. That public reassurance frames the AWS work as complementary rather than a full break from Microsoft.

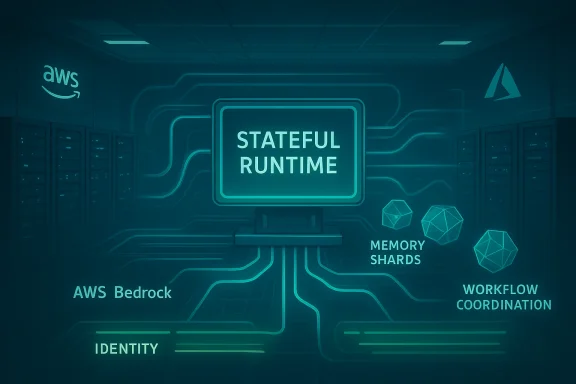

Technical anatomy: what a stateful runtime provides

The pitch from OpenAI and AWS sketches a runtime with several built-in capabilities that matter to engineering and security teams:

- Persistent working memory. Agents retain history and working state across sessions, enabling long‑horizon reasoning and progress on multi‑step tasks without rehydrating context each call.

- Tool and workflow state management. Built-in mechanisms for invoking external services, tracking tool outputs, and coordinating retries and exception handling.

- Identity and permission propagation. The runtime honors AWS identity primitives (IAM, VPC boundaries, audit logging) so actions executed by agents can be correlated with human and system identities for compliance.

- Governance, observability, and audit trails. Enterprise readiness means fine‑grained logging and deterministic workflow replay, both essential for regulated industries.

- Hardware and cost optimizations. The runtime will be tuned for AWS’s Trainium family and Bedrock services, promising better price/performance for OpenAI workloads on AWS silicon.
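To make identity propagation and audit logging less abstract, here is a minimal sketch in plain Python. It does not use AWS IAM or any Bedrock API; `Principal`, `AuditedToolRunner`, and the tool names are assumptions invented for the example. The point is the shape of the mechanism: every tool call carries the acting identity, is checked against that identity's permissions, and is logged whether or not it is allowed.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Principal:
    """Hypothetical stand-in for the human or system identity behind an agent."""
    name: str
    allowed_tools: frozenset[str]


@dataclass
class AuditedToolRunner:
    principal: Principal
    audit_log: list[dict] = field(default_factory=list)

    def invoke(self, tool: str, payload: str) -> str:
        allowed = tool in self.principal.allowed_tools
        # Record every attempt, including denied ones, with the acting identity.
        self.audit_log.append(
            {"principal": self.principal.name, "tool": tool, "allowed": allowed}
        )
        if not allowed:
            raise PermissionError(f"{self.principal.name} may not call {tool}")
        # A real runner would dispatch to the tool here; this sketch just echoes.
        return f"{tool} handled {payload}"


runner = AuditedToolRunner(Principal("alice", frozenset({"read_ticket"})))
result = runner.invoke("read_ticket", "T-1")

try:
    runner.invoke("delete_ticket", "T-1")
    denied = False
except PermissionError:
    denied = True
```

Logging the denied attempt before raising is a deliberate choice: for compliance reviews, what an agent tried to do is often as interesting as what it did.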

Why this is a control‑plane story, not just a compute play

Historically, the AI race emphasized models and compute. But the next frontier is the runtime control plane: the systems that run, manage, observe, and govern agents in production. Whoever controls the runtime shapes operational behavior, interoperability, cost model, and, ultimately, vendor lock‑in.

Analysts quoted in coverage call this a “control plane shift”: models are commoditized to some degree, but the runtime stack that guarantees continuity, auditability, and orchestration becomes the strategic asset. The AWS/OpenAI move plants a flag in the territory where enterprises actually run mission‑critical automation, not merely experiment with prompts.

Key implications:

- Enterprises that standardize agent orchestration on Bedrock + OpenAI runtime will implicitly adopt AWS as the operational control plane for agentic workloads.

- Security and compliance postures will be shaped by the runtime’s integration with AWS IAM, networking, and audit systems — a convenience that doubles as an anchoring mechanism.

- Portability becomes a trade‑off: the ease of running on a hyperscaler‑native runtime reduces the attractiveness of building cloud‑agnostic orchestration layers.

The Microsoft factor: exclusivity, carve‑outs, and carefully worded assurances

OpenAI’s relationship with Microsoft has been foundational for years, spanning investments, licensing, engineering integration, and product co‑development. In the wake of the AWS announcement, OpenAI and Microsoft issued clarifying language: the core IP relationship, revenue-sharing, and the exclusivity of Azure as the cloud provider for stateless OpenAI APIs remain intact. That means simple model access (the classic request/response API experience) is still Microsoft‑centric.

Why that matters:

- Azure continues to be the primary hosting fabric for the majority of stateless API traffic, and Microsoft retains licensing and revenue rights that flow from that traffic.

- The AWS deal does not cannibalize Azure’s stateless model hosting role; instead, it redefines where stateful agent orchestration can flourish.

- Functionally, enterprises could use stateless OpenAI APIs hosted on Azure for simple interactions and adopt AWS‑native stateful runtimes for agent orchestration and long‑running automations.

Economic and hardware dynamics: Trainium, scale, and why Amazon spent big

A striking part of the announcement is the compute and investment commitments. OpenAI pledged to consume an estimated 2 gigawatts of AWS Trainium capacity and expanded an earlier cloud commitment substantially, while Amazon disclosed a staged $50 billion investment in OpenAI. Those numbers are eye‑watering, and they underscore two realities:

- Compute supply is strategic. Large‑scale model training and inference require predictable access to specialized hardware. Securing Trainium capacity provides OpenAI guaranteed silicon, lowering supply risk and cost volatility.

- Capital as strategic alignment. Amazon’s investment aligns its long‑term incentives with OpenAI’s success on AWS; it’s not merely a financial transaction but a stake in the future revenue and product trajectories that OpenAI will realize when its stateful runtime drives enterprise consumption. Independent reporting corroborates the scale of the investment and compute commitments.

Opportunities for IT teams and developers

The AWS/OpenAI stateful runtime promises near‑term practical gains for organizations wrestling with agentization:

- Faster time to production. Less custom engineering to hold state across tool calls means prototypes can become production services faster.

- Safer long‑running automation. Built‑in identity and environment boundaries reduce the operational risk of agents acting with excessive privileges.

- Better observability and governance. Native logging, replay, and audit facilities target enterprise compliance needs out of the box.

- Lowered integration burden. For teams already on AWS, Bedrock integration reduces the cost and friction of connecting agents to data sources, VPCs, and identity systems.

Risks, trade‑offs, and technical caveats

No architectural shift is risk‑free. The very properties that make stateful runtimes powerful create new attack surfaces, governance headaches, and lock‑in vectors.

- Expanded attack surface. Persistent memory and long‑running workflows multiply the opportunities for data leakage or exploitation. Enterprises must demand encryption‑at‑rest for memory, fine‑grained ACLs, and immutable audit trails. Coverage warns explicitly about the need to govern persistent state.

- Operational lock‑in. A runtime tightly coupled to Bedrock and Trainium could make future migrations harder. Portability becomes a design constraint: either accept a degree of lock‑in for faster delivery, or invest in an abstraction layer that preserves portability at higher upfront cost.

- Supply concentration. As major AI workloads consolidate on a small set of cloud + silicon stacks, systemic risks grow: hardware shortages, pricing shocks, or geopolitical events could disrupt entire swathes of AI services. Analysts told reporters to watch the supply chain concentration risk closely.

- Regulatory and compliance friction. Long‑lived agent memory and cross‑system actions complicate data residency, consent, and audit expectations in regulated industries.

- Interoperability headaches. Mixing stateless Azure APIs with stateful Bedrock runtimes may create complexity for teams that want a single, unified dev experience.
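One of the controls named above, an immutable audit trail, is often approximated in practice with a hash chain, where each entry's hash covers the one before it. The sketch below is a generic illustration of that technique using only Python's standard library; it is not how the AWS/OpenAI runtime is known to work, and `append_entry`, `verify`, and the event fields are hypothetical. A hash chain makes edits detectable rather than impossible, and on its own it does not detect truncation of the newest entries.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event: dict, prev_hash: str) -> str:
    body = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode()).hexdigest()


def append_entry(log: list[dict], event: dict) -> None:
    """Append an event whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _digest(event, prev_hash)})


def verify(log: list[dict]) -> bool:
    """Recompute every hash; an edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append_entry(log, {"agent": "claims-bot", "action": "read", "record": "A1"})
append_entry(log, {"agent": "claims-bot", "action": "update", "record": "A1"})
intact = verify(log)

log[0]["event"]["action"] = "delete"  # simulate after-the-fact tampering
tamper_detected = not verify(log)
```

When evaluating a vendor runtime, a useful question is whether its audit log offers an equivalent integrity check that customers can run themselves.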

How vendors and competitors will react

Expect two simultaneous trends in vendor behavior:

- Hyperscalers will double down on opinionated runtimes. Azure, Google Cloud, and AWS will each attempt to own the orchestration layer that enterprises use to run agents. Microsoft’s existing Copilot and Azure agent work emphasize governance-first approaches; AWS’s Bedrock play focuses on operational friction and silicon economics. The market will fragment along runtime and control‑plane lines.

- Third‑party orchestration and portability vendors will surge. Startups and incumbents that provide cloud‑agnostic control planes, session management, and agent middleware could see demand from customers that want portability without sacrificing enterprise controls. Amazon already introduced session management APIs in Bedrock preview last year — a signal that both hyperscalers and tooling vendors are racing to provide robust state management primitives.

Community and enterprise reaction — early signals

On forums and enterprise discussion threads, practitioners are parsing what “stateful” implies for day‑to‑day operations: developers appreciate the promise of reduced orchestration burden, while architects are asking hard questions about portability, encryption, and exit strategies. Community threads flagged by our site index reflect both bullish and cautious takes on the announcement, discussing implications for hybrid and multicloud strategies.

Those conversations mirror the media reporting and analyst commentary: there’s excitement about reduced friction, but also a chorus of warnings about lock‑in and supply concentration. In practice, many organizations will pilot the stateful runtime for specific use cases (customer claims processing, SRE automation, or internal knowledge agents) before committing broader workloads.

Practical guidance for IT leaders: a decision framework

If you’re a CIO, cloud architect, or engineering leader, consider a staged decision framework:

- Audit workloads. Identify which applications genuinely require long‑horizon state (multi‑step approvals, cross‑system processes) versus those that can remain stateless.

- Define portability requirements. For each workload, codify whether portability is critical or whether cloud‑native operational benefits outweigh migration risk.

- Set a security and observability bar. Require encryption of persistent memory, human‑in‑the‑loop approvals for privileged actions, and deterministic replay for critical flows.

- Pilot in a bounded environment. Start with a single high‑value workflow; evaluate failover behavior, auditability, and total cost of ownership.

- Design an exit plan. Ensure you can export agent state and replay logs in case you must migrate away from a hyperscaler runtime.

- Governance first. Implement policy guardrails before broad deployment — agentic missteps are fast and consequential.
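The first two steps of the framework lend themselves to a simple triage script run over a workload inventory. The sketch below is one possible encoding, not a prescribed method: the `Workload` fields, the `recommend` function, and the three outcome labels are illustrative assumptions, and a real assessment would weigh cost, compliance, and team skills as well.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    long_running: bool          # spans hours or days, or must resume after failure
    multi_step: bool            # coordinates several tools or approvals
    portability_critical: bool  # must be movable between clouds


def recommend(w: Workload) -> str:
    """Map a workload to a coarse deployment recommendation."""
    needs_state = w.long_running or w.multi_step
    if not needs_state:
        return "stateless API"
    if w.portability_critical:
        return "stateful, behind a portable abstraction layer"
    return "stateful runtime pilot"


inventory = [
    Workload("document summarizer", False, False, False),
    Workload("claims processing", True, True, False),
    Workload("cross-cloud SRE automation", True, True, True),
]

plan = {w.name: recommend(w) for w in inventory}
```

Even a crude pass like this tends to show that only a minority of workloads need long-horizon state, which keeps the pilot scope small.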

Where this leaves Microsoft, AWS, and the future of multicloud AI

The announcement represents a more nuanced multicloud reality: different clouds, different planes. Microsoft retains its commercial and licensing primacy for stateless APIs, while AWS becomes the home for one major style of stateful orchestration. That division could persist as a pragmatic compromise: enterprises will pick the runtime that best matches their operational needs, rather than a single cloud winning everything.

Longer term, expect the market to bifurcate:

- Model access layer (stateless): centralized, with heavy investment in IP licensing and high‑volume API traffic (Azure’s strength).

- Runtime and orchestration layer (stateful): distributed across hyperscalers, each offering different trade‑offs in silicon, governance, and integration (AWS’s Bedrock play among them).

Final assessment: a strategic inflection, not a single winner

OpenAI’s stateful runtime on AWS is a strategic inflection point in enterprise AI. It marks the shift from a model race to a control‑plane race, where persistence, governance, and operational resilience matter as much as model quality. For enterprises, this brings practical opportunities (faster time to production, safer long‑running automations, and deeper integration with cloud security stacks), but it also demands a more disciplined approach to architecture, vendor economics, and risk management.

The AWS/OpenAI collaboration is not a death blow to Azure or Microsoft’s role in AI; rather, it reveals how the ecosystem is evolving into complementary zones of capability. Organizations that thoughtfully evaluate which workloads deserve a stateful runtime, enforce rigorous governance, and maintain migration options will extract the most value while containing risk.

In short: stateful runtimes will change how enterprises build with AI. The key question for IT leaders is no longer just which model is best — it’s which runtime guarantees continuity, security, and operational resilience for the critical workflows you can’t afford to lose.

Source: Network World, “OpenAI launches stateful AI on AWS, signaling a control plane power shift”