The UK’s reversal on AI copyright is more than a policy tweak; it is a signal that permission, payment, and provenance are becoming central to the next phase of generative AI. After a consultation in which the government says it received more than 11,500 responses and found overwhelming opposition to an opt-out approach, London has effectively stepped back from the idea that AI developers should be able to train on copyrighted works first and sort out compensation later. For companies like Skill Refinery, the Dallas-based platform founded by Matt Cretzman, the decision reads less like a surprise and more like validation of a model built around licensing, attribution, and recurring payment from the start.

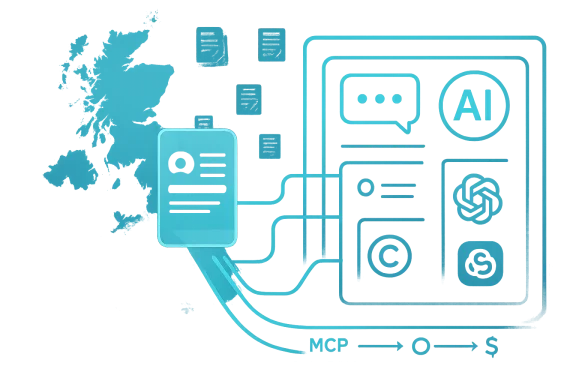

Source: The Chronicle-Journal User

Overview

The copyright debate around AI has been building for years, but the UK’s latest shift gives it sharper edges. In the government’s own progress statement, officials described three goals that have shaped the discussion: control for rightsholders, access for AI developers, and transparency about how copyrighted works are used. The same statement makes clear that the consultation ran from 17 December 2024 to 25 February 2025 and that the government had initially preferred a data-mining exception with rights reservation. (gov.uk)

That earlier preference was always politically fragile. The report now published by the UK government says “by far the greatest number” of respondents opposed the opt-out option, with creators, performers, publishers, and collecting organizations warning that a reservation regime would be burdensome, hard to administer, and unfair to smaller rights holders. The government’s own summary captures the central objection: an opt-out model can look elegant in theory, but in practice it often places the burden of enforcement on the people with the least capacity to police it. (assets.publishing.service.gov.uk)

The policy change also lands in a market that is already moving toward collective licensing and structured AI rights management. Publishers’ Licensing Services says it is now inviting publishers to opt in to an industry-led collective licensing initiative for generative AI, and it has publicly described new licensing products for text and data mining, workplace generative AI, and broader generative-AI uses of text such as training, fine-tuning, and retrieval-augmented generation. In other words, the commercial rails are already being built, whether government policy likes it or not. (pls.org.uk)

Skill Refinery is entering that same conversation from a different angle. Rather than treating expert content as a pool to be mined for model training, it positions expert methodology as a licensable product delivered into AI interfaces. The company says it converts books, courses, and coaching frameworks into structured skill cards, then delivers them through MCP-compatible tools such as Claude, ChatGPT, and Microsoft Copilot. Its pitch is simple but potent: source material should stay governed, expert knowledge should stay compensated, and AI should become the delivery layer rather than the appropriation layer.

The significance of that distinction is easy to miss if you focus only on headlines about AI copyright. Traditional training-data licensing is about what goes into a model. Skill Refinery’s model is about what comes out of a model and who gets paid when that knowledge is used. That puts it closer to a digital rights infrastructure business than a pure software startup, and it helps explain why the UK reversal is being framed by supporters as a wider vindication of licensing-first design.

Background

The UK has been wrestling with copyright and AI since the earliest wave of foundation-model commercialization. The original consultation laid out a spectrum of options, from doing nothing to strengthening copyright so that licensing would be required in all cases. The government’s preferred path at the time was an exception for data mining with a rights reservation mechanism, paired with transparency measures and supporting standards. (gov.uk)

But the proposal immediately ran into a familiar problem: how do you reserve rights at the scale and speed of the open web? The government’s report notes concerns about timing, location, and the granularity of rights reservation. Should an opt-out work only for future crawls? Should it be expressed on a website, in a registry, or both? Can a reservation be made collectively, and what happens to co-authored or derivative works where one rights holder objects and another does not? Those are not minor implementation details. They are the difference between a workable regime and a compliance maze. (assets.publishing.service.gov.uk)

The politics are just as important as the mechanics. Creators and publishers have spent the last two years arguing that AI firms are extracting value from a permissionless ecosystem built by others. AI developers, by contrast, have argued that model progress depends on scale, and scale depends on access to broad corpora of text and media. The UK consultation became a proxy war over the fundamental bargain of digital culture: whether innovation should continue to outrun compensation, or whether compensation should become a first-class design constraint.

That’s where the current market context matters. PLS is not merely lobbying; it is shipping. Its generative-AI licensing initiative and associated products suggest a system in which publishers can opt in to structured licenses rather than opt out of unchecked use. The direction of travel is notable because it shows that rights holders are no longer content to protest from the sidelines. They are building the plumbing themselves. (pls.org.uk)

Skill Refinery sits in this same ecosystem but at a more specialized layer. It is not trying to be a mass content aggregator for model training. It is trying to make expert knowledge portable inside AI tools without surrendering control of the underlying source material. That may sound subtle, but the strategic consequences are large. If a platform can turn a book, methodology, or coaching framework into an AI-delivered skill that remains traceable and monetizable, it is effectively creating a new category between publishing, licensing, and workflow software.

Why the UK reversal matters

The UK’s reversal matters because policy signals shape capital allocation. Investors, publishers, and AI vendors all watch the legal temperature of major markets. When a government that initially favored a broad exception concludes that the consultation overwhelmingly rejects it, the message to the market is unmistakable: the licensing-first position has momentum. (assets.publishing.service.gov.uk)

The message also extends beyond Britain. If one advanced economy leans away from default access and toward remunerated use, it becomes easier for other jurisdictions to justify stricter regimes. That does not mean every country will follow the same blueprint, but it does mean the old assumption that AI training can rely indefinitely on frictionless scraping is losing policy credibility.

The Consultation Shock

The scale of the response is one of the most important parts of the story. The government says the consultation received more than 11,500 responses, and the published report indicates that most respondents opposed the opt-out exception. That is a remarkable volume for a policy question that is often discussed in highly technical language. It suggests the issue has broken out of specialist circles and entered mainstream creator, publisher, and enterprise concern. (gov.uk)

The opposition was not monolithic, which is exactly why the government’s retreat is so telling. Some AI developers opposed the opt-out proposal because they believed easy reservation would make the exception toothless. Rights holders opposed it because they believed it would shift costs onto the weakest participants in the ecosystem. When both sides distrust the same framework for opposite reasons, the framework is usually unstable.

The burden of opt-out design

The UK report is particularly blunt about the burden placed on creators and SMEs. It records concerns that a reservation regime would be especially harmful to small rightsholders and impractical for large bodies of work. That is not just a copyright argument; it is an operational one. A rights model that requires every contributor to become a compliance manager is not a scalable market mechanism. (assets.publishing.service.gov.uk)

The deeper issue is that opt-out systems often collapse under their own administrative weight. If the process is too easy, the exception becomes meaningless. If the process is too hard, the reservation becomes inaccessible. Either way, the market ends up somewhere between legal uncertainty and broken incentives.

A few key observations stand out:

- Opt-out mechanisms may look balanced on paper but often fail in practice.

- Small creators bear the heaviest monitoring burden.

- AI firms want clarity, not a patchwork of uncertain reservations.

- Collective licensing emerges as the most administratively plausible compromise.

- Transparency becomes essential if compensation is to be enforced.

Skill Refinery’s Thesis

Skill Refinery’s core pitch is that expert knowledge should not be treated like undifferentiated training fodder. According to the company, it extracts methodology from books, courses, and coaching frameworks, structures it as skill cards, and delivers that knowledge through MCP to AI tools including Claude, ChatGPT, and Microsoft Copilot. The source files, it says, do not re-enter the AI context window after extraction, which is meant to preserve governance and reduce IP leakage.

That architecture matters because it reframes the AI stack. Rather than asking, “What content can we ingest into the model?” Skill Refinery asks, “How can an AI assistant deliver a licensed expert workflow without consuming the underlying work?” That is a very different business logic, and it is closer to software distribution than training-data harvesting.

Licensing versus training

There is a strategic distinction between licensing for model training and licensing for user-facing delivery. Training licenses monetize access to content before the model is built. Delivery licenses monetize knowledge every time a user invokes it. Skill Refinery appears to be aiming at the second category, which could prove more durable if AI assistants become the interface layer through which professionals actually work.

That said, the model also raises questions about definition and scope. What exactly is a skill card? How much originality remains in the source material after extraction? At what point does a framework become a derivative product rather than a reusable methodology? Those questions will matter as the company scales.

The company’s reported emphasis on recurring monthly revenue for experts is especially important. One-off licensing payments can help rightsholders cover the past. Recurring revenue can make expert IP feel like a living asset with long-term economics. That is attractive to authors, coaches, consultants, and niche professionals who have spent years building methodology but have struggled to package it into software-like economics.

The Role of MCP and AI Interfaces

The use of Model Context Protocol is what makes the Skill Refinery pitch feel modern rather than merely repackaged. MCP has become an important bridge layer in the broader AI ecosystem because it lets assistants connect to external tools and structured knowledge sources without stuffing everything into the model itself. In Skill Refinery’s case, that means the AI assistant can pull a relevant skill card while the original source material remains under governance.

This is important because the UI layer is where AI adoption actually happens. Most users do not care whether a knowledge asset lives in a vector store, a database, or a proprietary index. They care whether the AI gives them an answer that is useful, attributable, and safe to use. MCP-compatible delivery is a practical way to turn expert frameworks into a repeatable experience.
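To make the delivery pattern concrete, here is a minimal sketch of how a skill card could be looked up and handed to an assistant with attribution attached and no source file involved. It is not Skill Refinery’s implementation and it does not use any MCP SDK. `SkillCard`, `SkillCardStore`, `lookup`, the keyword matching, and the sample data are all hypothetical, chosen only to illustrate the idea.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class SkillCard:
    """Hypothetical structured card extracted from an expert's work."""
    card_id: str
    title: str
    expert: str              # attribution travels with the card
    license_id: str          # hypothetical licence reference
    steps: tuple[str, ...]   # the distilled methodology, not the source text
    keywords: frozenset[str] = field(default_factory=frozenset)


class SkillCardStore:
    """In-memory stand-in for a governed card index (not a real MCP server)."""

    def __init__(self, cards):
        self._cards = {c.card_id: c for c in cards}
        self.usage_log = []  # (card_id, user_id) pairs, kept for accounting

    def lookup(self, query: str, user_id: str):
        """Return the best keyword match as a plain payload, or None."""
        words = set(query.lower().split())
        best = max(self._cards.values(),
                   key=lambda c: len(c.keywords & words),
                   default=None)
        if best is None or not (best.keywords & words):
            return None
        self.usage_log.append((best.card_id, user_id))
        # Only card fields are returned; no source file path or raw text.
        return {
            "title": best.title,
            "steps": list(best.steps),
            "attribution": best.expert,
            "license_id": best.license_id,
        }


# Illustrative data only.
store = SkillCardStore([
    SkillCard("c1", "Discovery call framework", "A. Example", "LIC-001",
              ("Open with the client's goal", "Ask about constraints"),
              frozenset({"discovery", "call"})),
])
payload = store.lookup("how do I run a discovery call", user_id="u42")
```

In this sketch the assistant only ever receives the payload dictionary, and each successful lookup leaves a usage record that a compensation system could later draw on.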

Why delivery is a different market

The delivery layer is not the same as the training layer. A company that sells access to expert methodology inside AI tools is serving a different buyer, a different use case, and a different compliance requirement. That distinction opens the door to enterprise knowledge management, professional education, and publisher partnerships.

It also means the company may be able to avoid some of the fraught politics of model training. If a knowledge asset is used at the point of response rather than hidden in pretraining, the value exchange is more visible. That visibility could become a selling point in industries where provenance matters.

A few implications follow:

- MCP delivery makes expertise accessible inside existing workflows.

- Attribution becomes more plausible when knowledge is retrieved, not absorbed.

- Governance is easier when source files stay outside the model context.

- Recurring revenue can align expert incentives with platform growth.

- Enterprise adoption becomes more realistic when compliance is built in.

Market Position and Competitive Landscape

Skill Refinery is entering a market that is already crowded with AI licensing talk but still relatively sparse in execution. Many companies focus on content aggregation, content provenance, or enterprise knowledge retrieval. Fewer are trying to turn expert methodology itself into a monetized AI-delivered product with a recurring compensation model for creators. That makes Skill Refinery unusual even if its components are familiar.

The company’s positioning also matters because the AI licensing market is beginning to bifurcate. One side is about training-data rights and legal indemnity. The other side is about operational knowledge delivery, workflow assistance, and compensation for domain experts. The first category is dominated by publishers, rights agencies, and legal negotiations. The second category may become more relevant for consultants, educators, professional associations, and specialist communities.

Who the platform competes against

Skill Refinery is not competing only with publishing licenses. It is also competing with no-code knowledge products, custom GPTs, private prompt libraries, RAG systems, and enterprise knowledge bases. Each of those alternatives solves part of the problem, but not all of them solve compensation, attribution, and delivery together.

The broader competitive threat is that large AI vendors may absorb some of this functionality into their own ecosystems. If the big platforms decide to make licensing, provenance, and expert skill modules native features, standalone startups could find themselves squeezed between platform owners and incumbents in publishing and professional services.

Still, there is a plausible wedge here. The company’s focus on a published author catalog and expert methodology could give it enough density to prove the business model. If it can show that licensed knowledge packages improve output quality and generate recurring income for experts, it may establish a category before bigger players fully commit.

- Publishing licenses monetize content rights.

- Knowledge platforms monetize workflow outcomes.

- AI assistants monetize user engagement.

- Skill cards sit at the intersection of all three.

- Recurring compensation could become the differentiator that matters.

Enterprise Versus Consumer Impact

For consumers, the appeal is obvious: a better AI assistant that can access expert frameworks without the user needing to know where those frameworks came from. That could make coaching, education, and professional guidance feel more precise, more actionable, and more trustworthy. It also aligns with the growing expectation that AI should explain not only what it recommends, but why.

For enterprises, the economics are more complicated but potentially more powerful. Businesses care about IP risk, auditability, and whether AI output can be defended internally. A licensed skill-delivery layer may reduce the fear that teams are quietly building workflows on content they do not own.

Enterprise governance advantages

Enterprises could view Skill Refinery-style systems as a controlled route into expert knowledge. Instead of buying a generic model and hoping employees do the right thing, they can buy access to curated methodology tied to usage rules and compensation logic. That matters in sectors where content provenance is a board-level issue.

The platform’s reported emphasis on keeping source files out of the context window is especially relevant for enterprise governance. It reduces the risk that proprietary source material becomes casually echoed, blended, or redistributed through the AI layer. That does not eliminate all risk, but it does improve the control story.

The consumer story is more about utility; the enterprise story is more about assurance. That divide could help the company market to both ends of the spectrum without collapsing into a single generic AI knowledge product.

The Economics of Recurring Revenue for Experts

One of the most interesting claims in the company’s pitch is that expert contributors receive recurring monthly revenue as subscribers access their knowledge. If true in practice and not just in theory, this is an important departure from the usual creator economy model, where compensation is often either ad-supported, subscription-gated, or paid as a one-time licensing fee. Recurring revenue gives experts a much stronger incentive to keep their materials current and high quality.

That changes the psychology of participation. Authors, coaches, and specialist educators are more likely to contribute when they can see a continuing income stream rather than a one-off check. It also changes the product economics for the platform, because the company must continuously prove value to both subscribers and contributors.

What this means for experts

The recurring model could become a differentiator if it can be scaled transparently. Experts may be willing to package methodology into AI-delivered modules if they believe the platform will track usage, preserve attribution, and distribute revenue fairly. That kind of trust is hard to build and easy to lose.

The model also invites comparisons to royalties, but it is not identical. Traditional royalties often depend on unit sales or distribution milestones. AI-delivered methodology depends on usage in an interactive environment, which could make measurement both richer and messier. The good news is that usage can be granular. The bad news is that it can also be more difficult to standardize.

- Recurring compensation can deepen creator loyalty.

- Usage-based economics may be better aligned with AI behavior.

- Transparency becomes essential for trust.

- Measurement must be reliable enough for audits.

- Quality control becomes a commercial necessity, not a nice-to-have.
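One way usage-based compensation could work, purely as an illustration, is a pro-rata split of a monthly pool by logged usage events. The company has not published its payout formula, so the pooled model, the function name `split_revenue`, the cent-based amounts, and the sample experts below are all assumptions. The sketch uses largest-remainder rounding so that payouts always sum exactly to the pool, which is the kind of property an audit would check.

```python
from collections import Counter
from fractions import Fraction


def split_revenue(pool_cents: int, usage_events: list[str]) -> dict[str, int]:
    """Split a monthly pool across experts in proportion to usage events.

    Hypothetical model: each event names the expert whose card was used.
    Largest-remainder rounding keeps the total equal to pool_cents.
    """
    counts = Counter(usage_events)
    total = sum(counts.values())
    if total == 0:
        return {}
    # Exact fractional share per expert, then round down to whole cents.
    exact = {e: Fraction(pool_cents * n, total) for e, n in counts.items()}
    payouts = {e: int(share) for e, share in exact.items()}
    leftover = pool_cents - sum(payouts.values())
    # Give the remaining cents to the largest fractional remainders,
    # breaking ties alphabetically so the result is deterministic.
    order = sorted(exact, key=lambda e: (-(exact[e] - payouts[e]), e))
    for expert in order[:leftover]:
        payouts[expert] += 1
    return payouts


# Illustrative data only: a 100.00 pool and six usage events.
payouts = split_revenue(10_000, ["ana"] * 3 + ["ben"] * 2 + ["cy"])
print(payouts)  # {'ana': 5000, 'ben': 3333, 'cy': 1667}
```

Even this toy version shows where standardization gets difficult: what counts as one usage event, and whether all events should weigh the same, are policy choices the code simply takes as given.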

Strengths and Opportunities

The biggest strength in this moment is timing. The UK’s policy reversal, the rise of collective licensing, and growing public skepticism toward unfettered AI scraping all favor platforms that can prove they respect rights from day one. Skill Refinery’s model is aligned with that mood, and alignment matters when trust is the scarce resource.

The second strength is product clarity. The company is not trying to be everything to everyone. It is offering a specific answer to a specific question: how can expert knowledge live inside AI systems without being flattened into training data? That clarity should help with partner conversations, especially in publishing and professional education.

- Policy momentum is shifting toward licensing-first approaches.

- Creator trust is easier to win when compensation is built in.

- Enterprise buyers may prefer governed AI knowledge delivery.

- Recurring revenue improves creator incentives.

- MCP compatibility keeps the platform close to where users already work.

- Attribution and provenance are becoming marketable features.

- Specialized methodology is easier to monetize than generic content.

Risks and Concerns

The most obvious risk is execution. Turning methodology into machine-deliverable skill cards is a hard product problem, and hard product problems tend to hide their complexity until scale exposes them. What looks elegant in a demo can become messy when dozens of experts, platforms, and use cases are involved.

There is also legal and reputational risk. Even if the platform is built around licensing, questions about derivative use, attribution, and contractual scope will follow it. Any company that sits between copyright holders and AI interfaces will be judged not just on the structure of its contracts, but on how faithfully those contracts are interpreted in the real world.

- Scale complexity could strain governance and QA.

- Platform dependence on major AI ecosystems is a structural risk.

- Licensing ambiguity may create disputes over scope or attribution.

- Competitive pressure from larger AI vendors could compress margins.

- User confusion may arise if the product category is not intuitive.

- Creator skepticism could slow supply-side growth.

- Regulatory change may alter the economics again.

What to Watch Next

The next phase will hinge on whether policy and product continue to move in the same direction. The UK is expected to keep refining its copyright and AI framework, and the wider licensing market will likely keep building new products around generative AI. At the same time, the practical adoption of MCP-based delivery and expert skill packaging will reveal whether this is a durable category or an appealing but narrow niche. (gov.uk)

Skill Refinery’s near-term challenge is to prove that expert methodology can be delivered consistently across tools without losing the qualities that made it valuable in the first place. If the platform can do that, it may end up looking less like a startup riding an AI news cycle and more like infrastructure for the post-scraping economy.

Key signals to monitor

- New licensing frameworks in the UK and other major markets.

- Enterprise adoption of governed AI knowledge delivery.

- Publisher partnerships and other expert-supply relationships.

- Evidence of recurring revenue for contributors at scale.

- Integration depth with Claude, ChatGPT, Microsoft Copilot, and other MCP-compatible tools.

- Competitive responses from larger AI and publishing platforms.

The UK’s reversal may not settle the copyright debate, but it does sharpen the direction of travel. AI is moving from a world that treated knowledge as free fuel toward one that increasingly treats it as a negotiated asset. For Matt Cretzman and Skill Refinery, that shift is less a headline than a hypothesis finally gaining policy support — and the next proof point will come not from consultation papers, but from whether users, experts, and enterprises actually choose to pay for AI-delivered knowledge that respects the people who created it.
